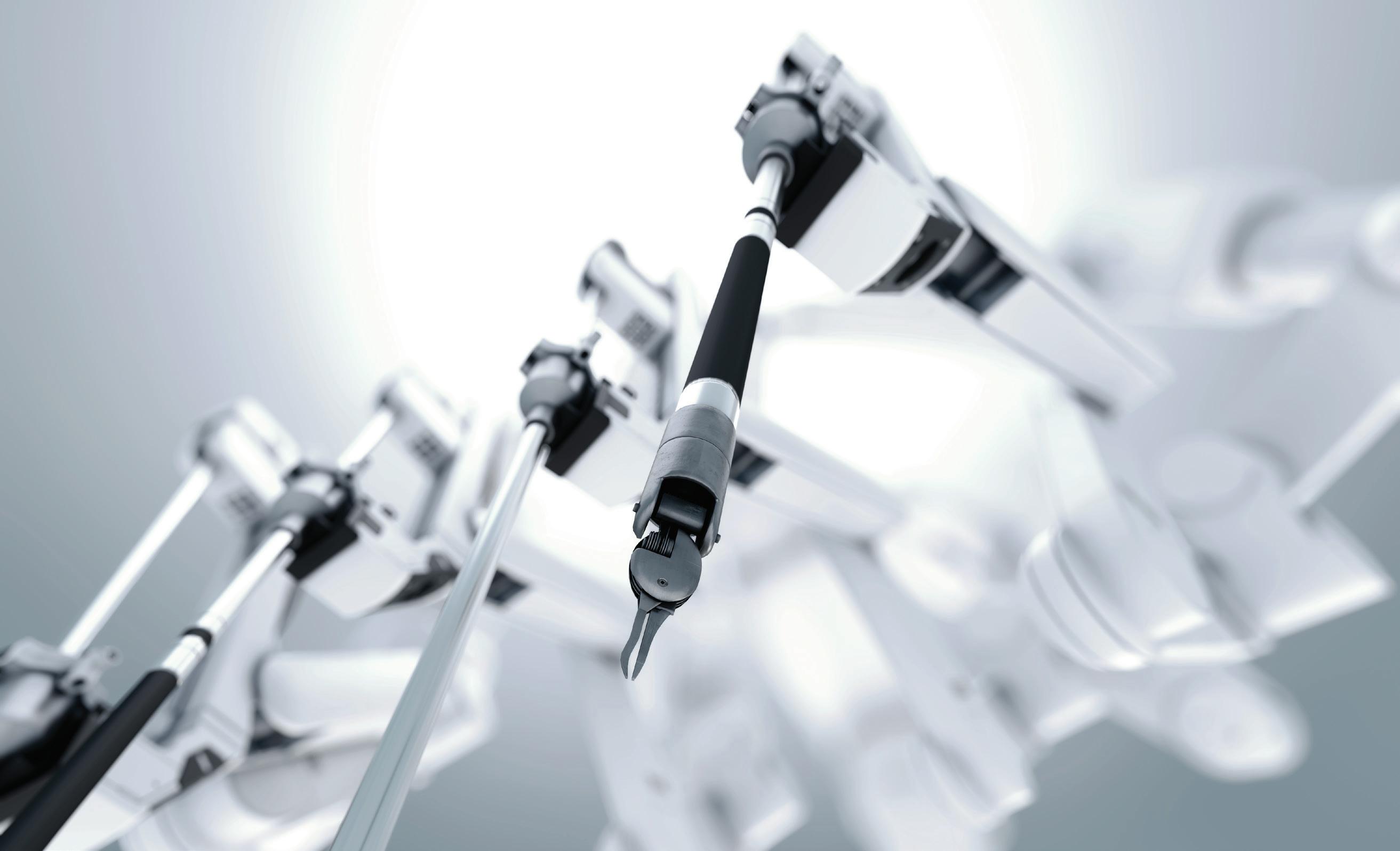

For the past few years, Asensus Surgical Inc. has been working to bring LUNA, its next surgical robot, to market.

The company first announced the system in 2023, has now finalized its design, and plans to pursue U.S. Food and Drug Administration and European CE approval this year. To develop LUNA, Asensus drew on its experience rolling out the Senhance system and the more than 20,000 cases it has handled with that minimally invasive system.

Dustin Vaughan, vice president of robotics research and development at Asensus, shared the company’s top priorities for LUNA and its hopes for the surgical robotics field as a whole in the coming years.

Surgical robots must remain operational for 10+ years

Developing surgical robots is a long and slow process. While roboticists often adopt a “move fast and break things” mindset to iterate on robotics, this won’t work for surgical robots.

“The challenge, as a technologist or as an engineer, is that you want things to move very quickly, and you want to evolve and improve,” Vaughan told The Robot Report. “But in soft-tissue surgical robotics, it’s just slow. You’re talking about a platform that takes hundreds of millions of dollars to get to market, and then it really needs to be a successful and viable platform for 10 years.”

Hospitals or healthcare providers that are investing in robotics don’t just expect a system to last for a long time; they need it to. Otherwise, they’re investing millions of dollars into something that they can’t replace in a few years.

“We have this Senhance surgical platform that’s been in the U.S. clinically since 2017, and we’ve done over 20,000 cases with that platform globally,” Vaughan said. “So, we learned a lot. We learned a lot of the things that break. We learned a lot of the things that work really well. And we learned that the maintenance and servicing aspects of a complex system like this require a lot of effort.”

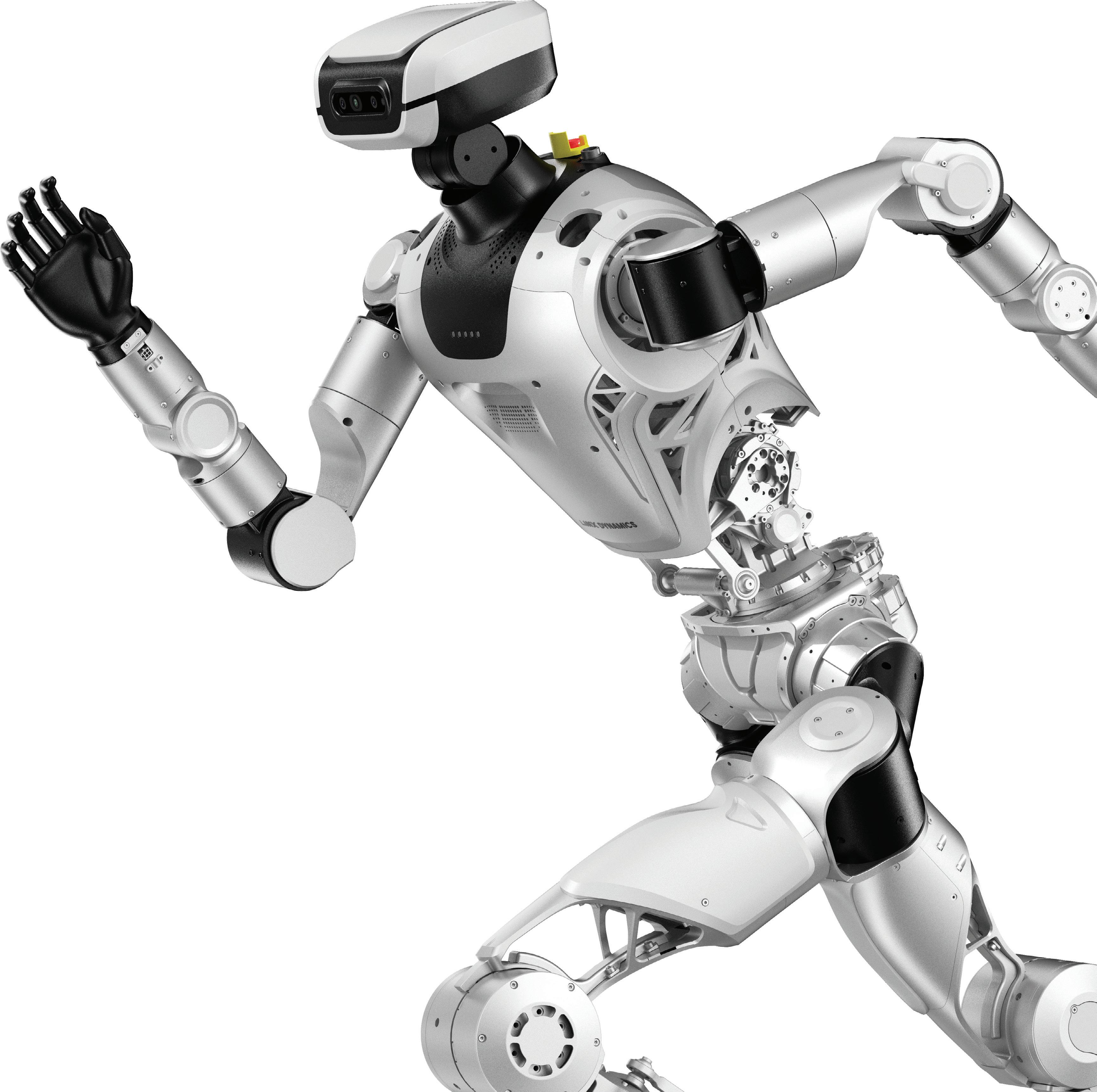

The LUNA surgical robot has a modular design. Asensus Surgical

When designing LUNA, Asensus prioritized making the system modular and easy to service, so that it could eliminate downtime as much as possible.

“The modularity of the system is something that we really focused on to identify all of the areas that we think could, or would, see the need to be replaced, and make them as serviceable as possible,” Vaughan said. “This actually created some real engineering challenges because of the lengths that we went to enable that.”

Durham, N.C.-based Asensus provides service support to its customers. With LUNA, however, it also wanted to give hospitals the option to solve as many problems as possible on their own.

“We just want to really limit downtime, because we certainly don’t want it to influence a case. That’s just No. 1,” Vaughan said. “You’ve got a patient, they’re prepared for care. They may have had very specific prep activities. Their surgeon is ready. They picked this surgeon for whatever reason, and he or she is trained and ready with the robot that they’ve elected to use for that case.”

“So all of these things are already there. The room is reserved. There’s a very high price tag on that moment in time,” he explained. “So, if something were to happen to the robot, they may have to abandon the case, or they’d convert to use traditional laparoscopy, depending on what it is and the surgeon.”

Currently, Asensus guarantees that it can resolve cases within 24 hours, but Vaughan claimed this could be even faster.

“What if we deploy some of these repairable items to hospitals for them to maintain?” he said. “And we actually will. The way that the service is structured is that we’ll relay those cost savings back to the hospital if they’re willing to enable that.”

In recent years, hospitals, especially in rural areas, have been under a tighter monetary squeeze than ever before. With many hospitals losing federal funding, Asensus said the costs of the robotic arm and of the instruments were major considerations with LUNA.

“We wanted to be able to wheel [the robot] in at little or no cost to the hospital and come up with a subscription model for the platform,” Vaughan said. “You really can’t do that if you’re wheeling in a very, very expensive platform.”

“We can’t just provide millions and millions and millions of dollars of these systems at little to no cost out of the gate. It’s a big capital investment,” he noted. “Whereas, if you have a really, really aggressive cost model, that becomes much easier. You can really bring in a lot of the players that have historically not had access to the surgical robotics market because of funding.”

In making its robot more economically viable, Vaughan said Asensus did have to make sacrifices when it comes to capabilities.

“It also forces you to create a product to be as economically sound as possible, and that means, at times, you have to delete features,” he acknowledged. “Sometimes these features require

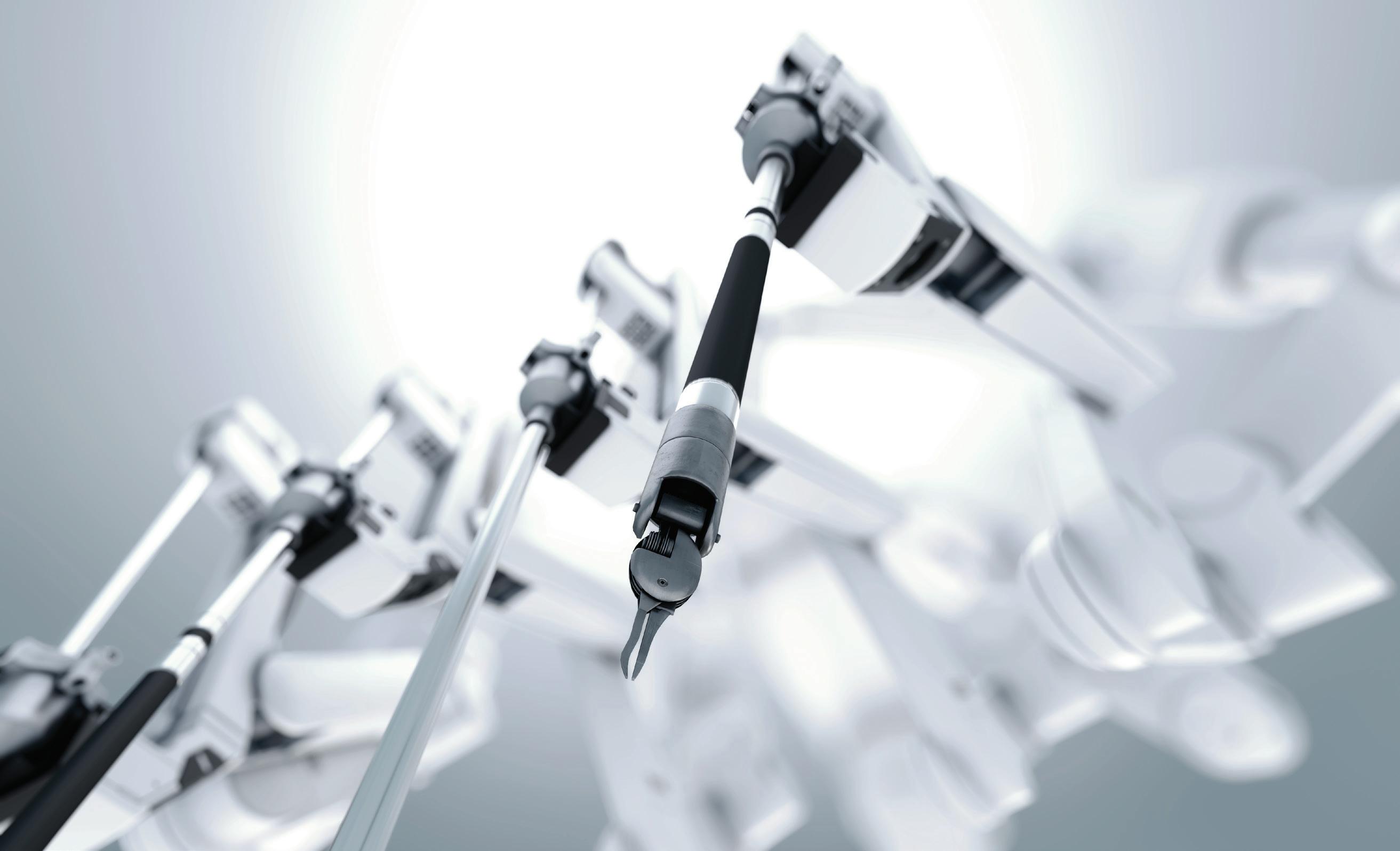

Senhance is designed to be movable and includes interchangeable arms. Asensus Surgical

Autonomous features are gradually coming to robotic surgery.

Asensus Surgical

bespoke hardware or sensors or other elements that really dramatically modify your cost profile.”

The company also benefited from a much more mature market than the one it experienced when developing Senhance. Both quality and cost of parts have improved in the past 20 years, Vaughan said.

What did Asensus learn from surgeons using Senhance?

Asensus’ experience with Senhance played a large role in its development of LUNA.

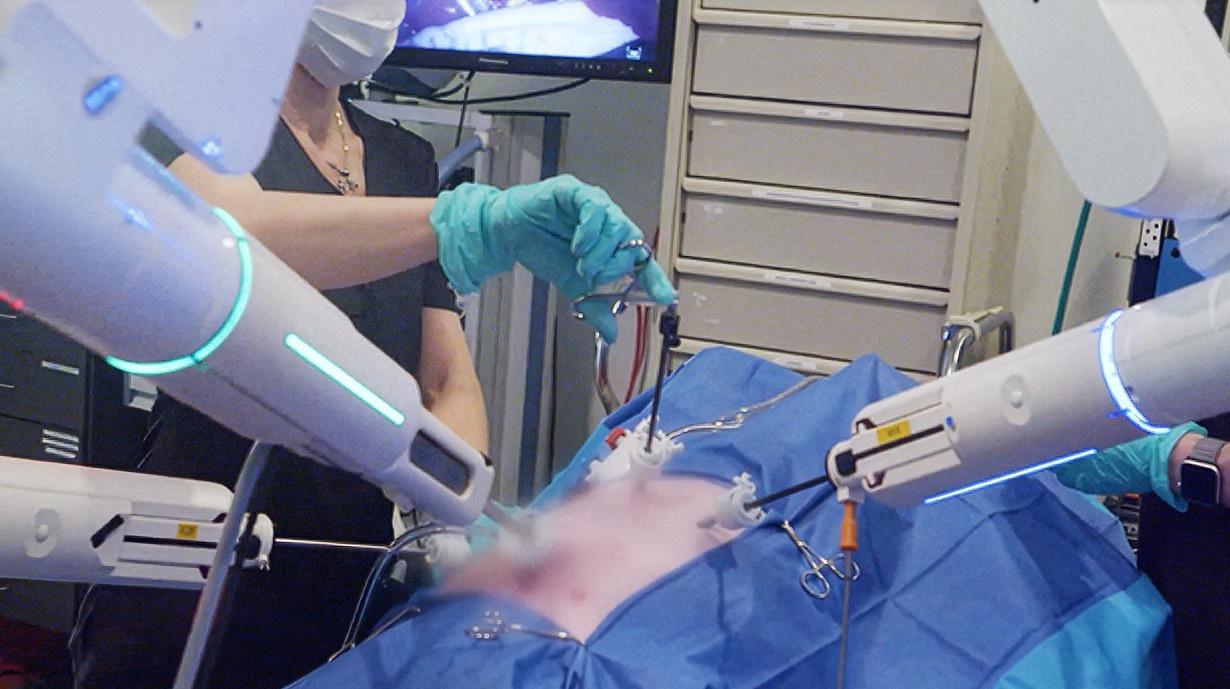

“We’ve been fortunate that we’ve had access to our Senhance user base for the entire duration of LUNA’s development,” Vaughan said. “We took the things that worked really well, and we really had a lot of feedback from that user console, especially, where the surgeon sits. That’s their office for the day. They really like the open concept, where they can see the patient.”

The company learned that surgeons prefer to have access to their patients during surgery. “We have a lot of patient access as part of our boom setup and the overall kinematic structure of the arm. It allows for a lot of direct physical access, but also visually from the console. It’s incredible,” Vaughan said.

This was something the pediatrics community, especially, benefited from, Vaughan said.

“We invested heavily in bringing people in who have never seen the system before, and taking that raw kind of first-date feedback. You never get another first impression, so we really tried to create a lot of those experiences for surgeons that we trust,” Vaughan said. “We’ve been trying to create as diverse of a profile as possible to understand if there are regional differences and things like that.”

With conversations about AI growing every day, many are wondering when, or if, robots will start performing surgeries independently. Vaughan said he believes that, on a long enough timeline, robots will be able to do many more things in surgery. For now, however, Asensus is focusing on what it can roll out soon.

“I’m worried about safely deploying things in the next five years, and what comes in 20 is very, very hard to predict in my mind based on what I’m seeing now,” Vaughan said. “What we are doing is creating these clinical support tools that a surgeon can elect to use or not. It’s similar to how the levels of autonomy in your car have gone up.”

Ten years ago, people would have been very hesitant to jump into an autonomous car, but backup cameras, lane assist, and other features have paved the way for this technology.

“That’s going to slowly start to become more and more prevalent in surgery. We’ll call it a boundary layer first,” Vaughan said. “If you have an experienced surgeon, and they’re letting, for example, a resident perform some cases, they may want to enforce that boundary and say, ‘Don’t go here.’”

“That’s one of the beauties of robots in general, that it can actively prevent that mistake in ways that shouting at your fellow at bedside cannot,” Vaughan continued. “So, that’s what you’re going to see in the next five years. We’re going to deploy things that actively prevent errors.”

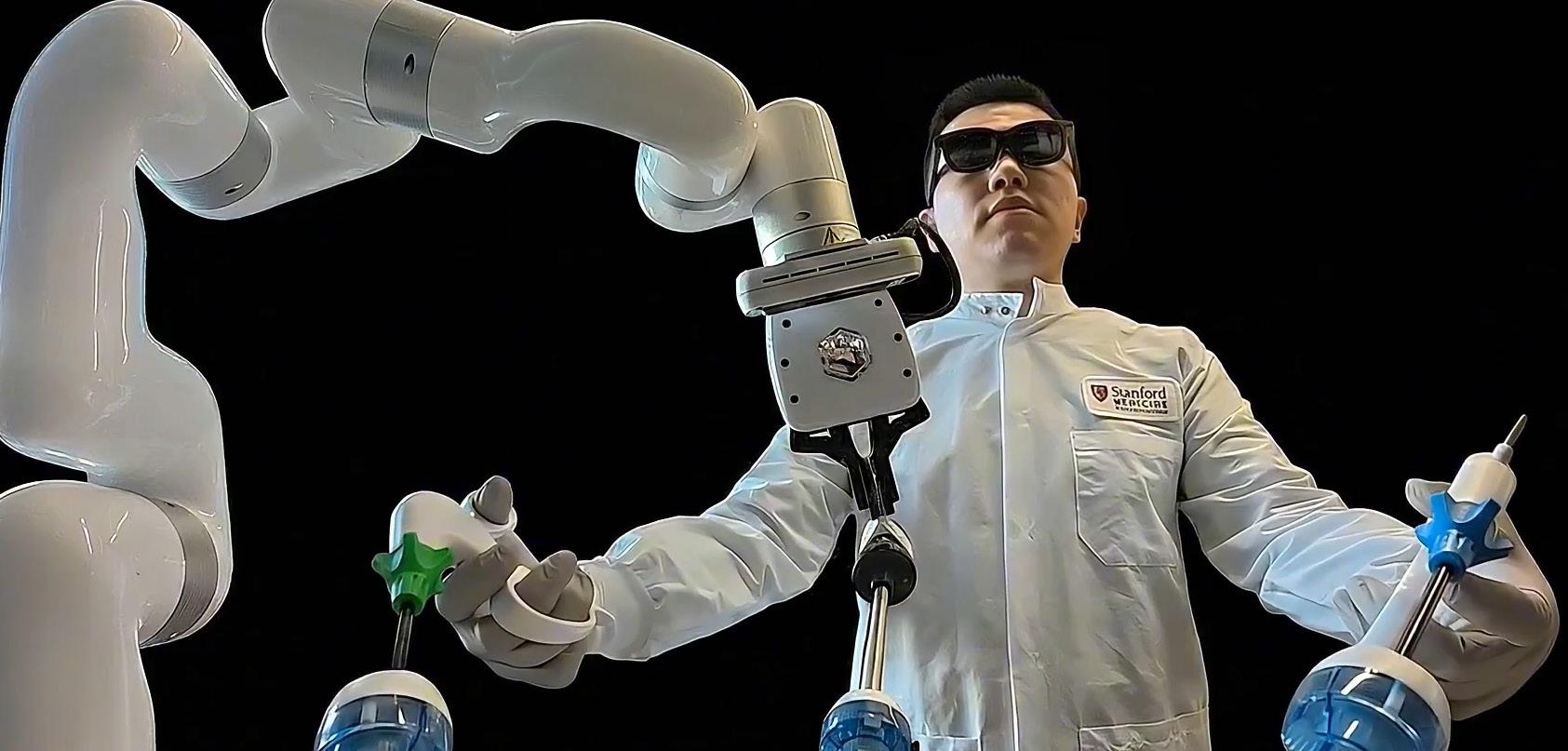

Developers are finding ways to combine artificial intelligence, augmented and virtual reality, and robotics for clinical applications. The Stanford-Princeton AI Coscientist Team recently launched MedOS, which it claimed is “the first AI–XR-cobot system designed to actively assist clinicians inside real clinical environments.”

More than 60% of physicians in the U.S. have reported symptoms of burnout, according to recent studies. The Stanford and Princeton researchers said they designed MedOS to alleviate burnout, not by replacing clinicians, but by reducing cognitive overload, catching errors, and extending precision through intelligent automation and robotic assistance.

“The goal is not to replace doctors. It is to amplify their intelligence, extend their abilities, and reduce the risks posed by fatigue, oversight, or complexity,” stated Dr. Le Cong, co-leader of the interdisciplinary project and an associate professor at Stanford University. “MedOS is not just an assistant. It is the beginning of a new era of AI as a true clinical partner.”

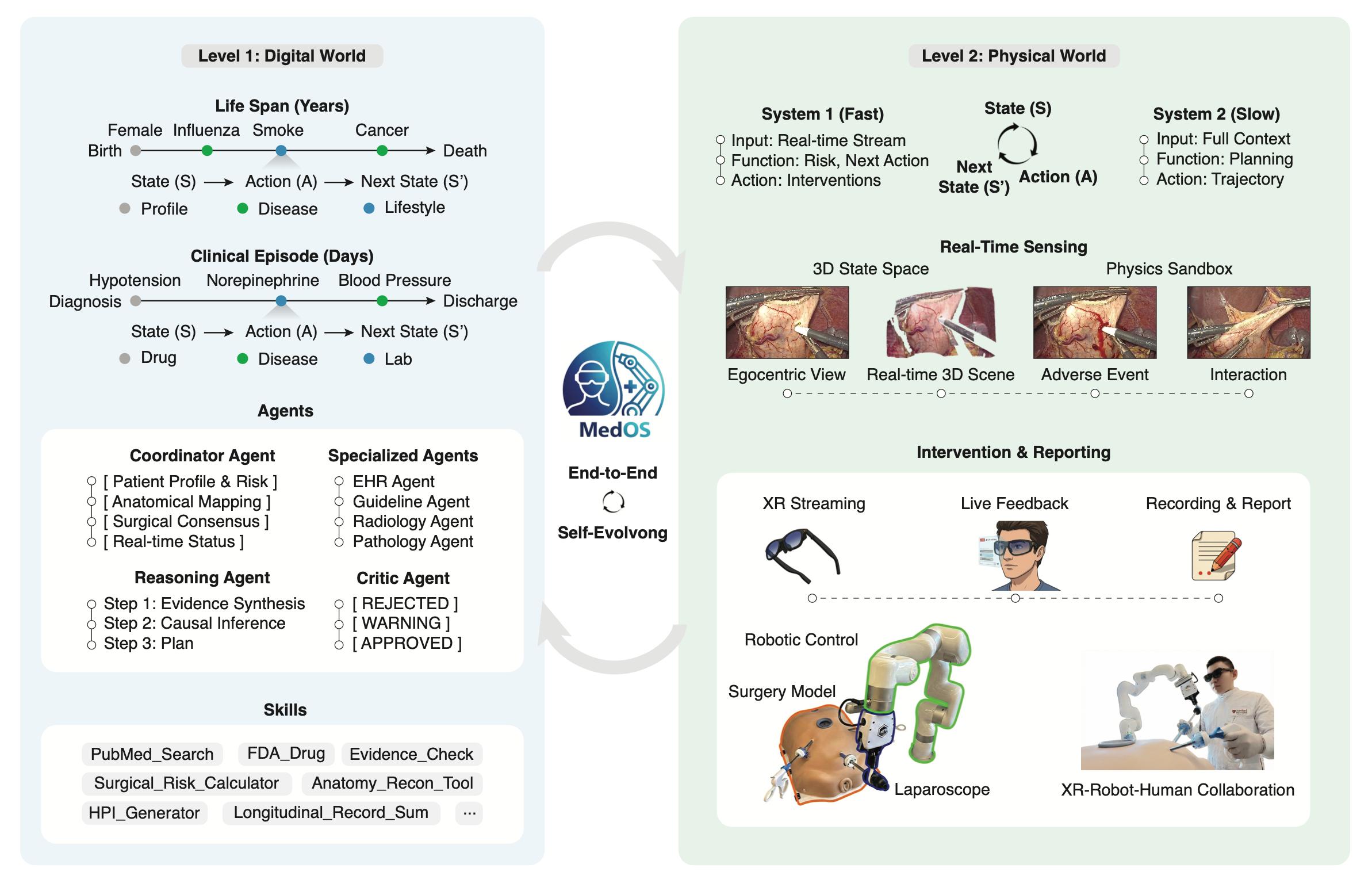

MedOS incorporates feedback loop

“Medicine historically separates abstract clinical reasoning from physical intervention,” said the researchers in a paper. “We bridge this divide with MedOS, a general-purpose embodied world model. Mimicking human cognition via a dual-system architecture, MedOS demonstrates superior reasoning on biomedical benchmarks and autonomously executes complex clinical research.”

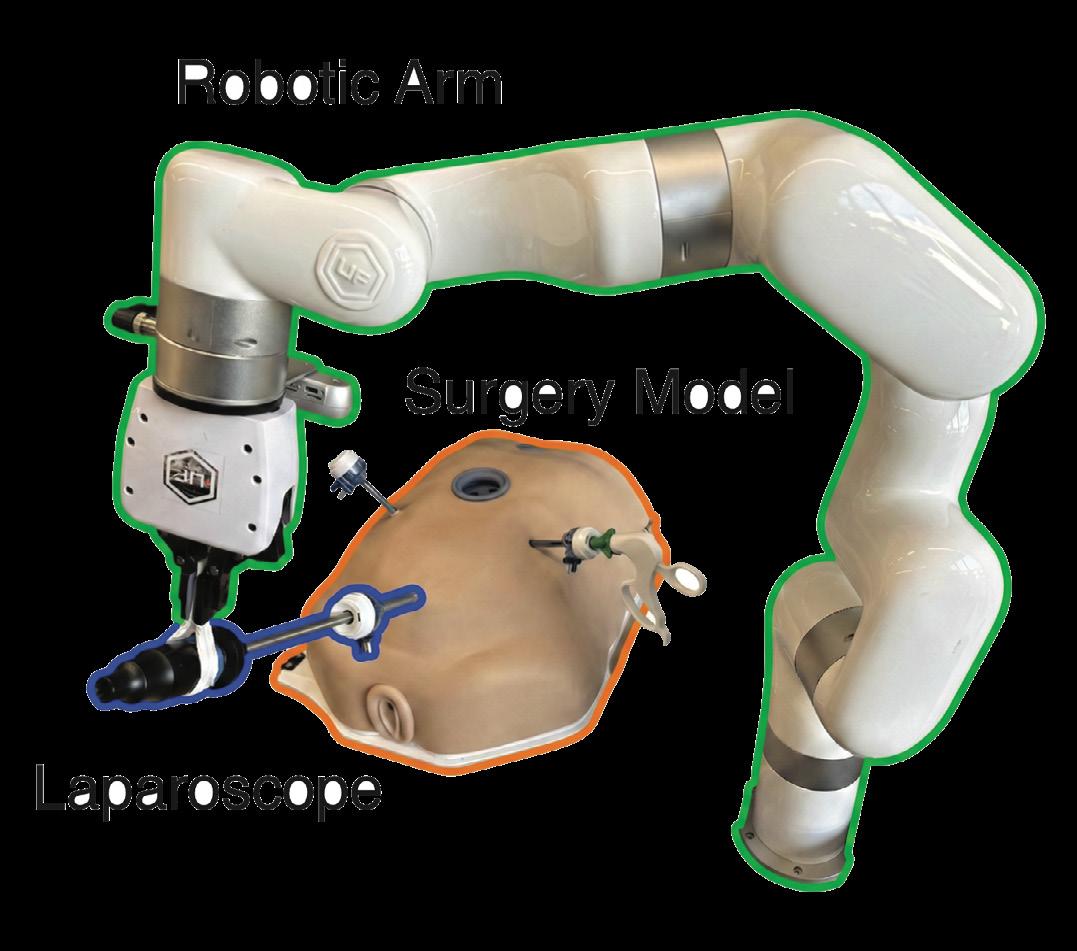

MedOS combines smart glasses, robotic arms, and multi-agent AI to form a real-time co-pilot for doctors and nurses, said the Stanford-Princeton AI Coscientist Team. Its mission is to reduce medical errors, accelerate precision care, and support overburdened clinicians.

The scientists built on years of experience with LabOS to bridge digital diagnostics with physical action in MedOS. They said the system can perceive the world in 3D; reason through medical scenarios; and act in coordination with doctors, nurses, and care teams.

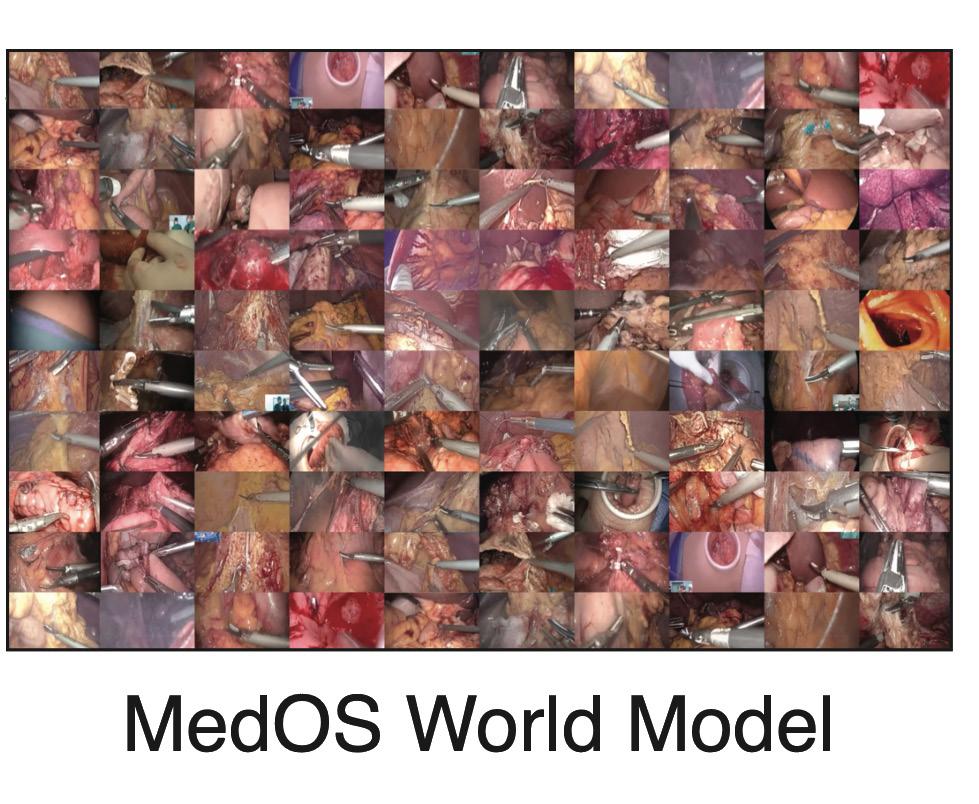

MedOS could help democratize surgical expertise. Stanford-Princeton AI Coscientist Team, via GitHub

MedOS also introduces a “world model for medicine” that incorporates perception, intervention, and simulation in a continuous feedback loop. Using smart glasses and robotic arms, it can understand complex scenes, plan procedures, and execute them in close collaboration with clinicians.

“The data layer enables us to build the world model with the spatial intelligence to allow robotics to work with humans today rather than wait for fully humanoid robots,” Dr. Cong told The Robot Report. “There are very few robots in hospitals now other than [Intuitive Surgical’s] da Vinci. We want to bring robots into every single part of medicine.”

The platform has been tested in surgical simulations, hospital workflows, and live precision diagnostics. The researchers said it has shown early promise in tasks such as laparoscopic assistance, anatomical mapping, and treatment planning.

AI Coscientist Team builds modular system

The Stanford and Princeton researchers said they designed MedOS to be modular and adaptable across clinical settings and specialties. In surgical simulations, it has demonstrated the ability to interpret real-time video from smart glasses, identify anatomical structures, and assist with robotic tool alignment.

The team has developed its own tactile sensors to work with force- and power-limited robot arms, said Cong. It works with off-the-shelf smart glasses and cameras to collect training data, which complements publicly accessible databases.

MedOS integrates perception, planning, and action, functioning as a clinical co-pilot and an active collaborator in high-stakes procedures, said the Stanford-Princeton AI Coscientist Team. Its capabilities include:

• A multi-agent AI architecture that mirrors clinical reasoning logic, synthesizes evidence, and manages procedures in real time

• MedSuperVision, an open-source medical video dataset featuring more

than 85,000 hours of surgical footage across procedures

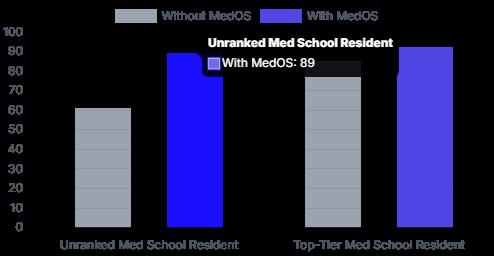

• Demonstrated success in helping nurses and medical students reach physician-level performance and reducing human error in fatigueprone environments

• Case studies, including uncovering novel immunotherapy resistance pathways through large-cohort data integration

“We need to first generate the world model,” said Cong. “By giving intelligence from physicians, clinicians,

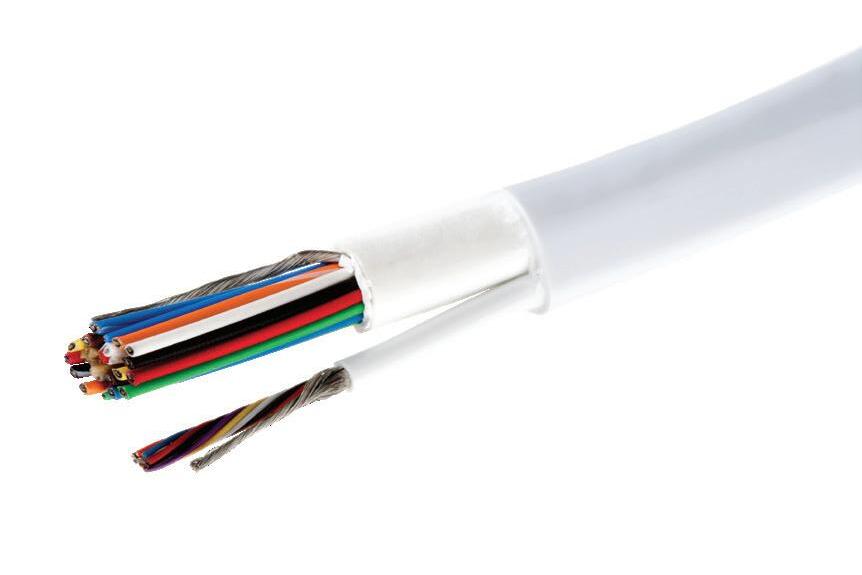

The Integrated Series is a family of compact actuators that deliver high torque with exceptional accuracy and repeatability. These servo actuators feature high precision Harmonic Drive® gearing combined with a brushless servo motor, a brake option (for SHA models), magnetic absolute encoders and an Integrated Servo Drive with CANopen® or EtherCAT® (for LPA or SHA models) communication options. This revolutionary product eliminates the need for an external drive and greatly simplifies cabling, yet delivers high-positional accuracy and torsional stiffness in a compact housing.

• Actuator with Integrated Servo Drive utilizing CANopen® or EtherCAT®

• 24 or 48 VDC nominal supply voltage

• A single cable with only 4 conductors is needed: CANH, CANL, +VDC, 0VDC

• Zero Backlash Harmonic Drive® Gearing

• Panel Mount Connectors or Pigtail

Cables Available with Radial and Axial Options

• Control Modes include: Torque, Velocity, and Position Control, CSP, CSV, CST

The AI-XR-Cobot world model incorporates perception, intervention, and simulation. StanfordPrinceton AI Coscientist Team, via Github

and nurses, our AI brain could be the foundation for deploying fully autonomous humanoids someday.”

In the meantime, the team is working on initial deployments in hospital logistics and laboratories. For logistics, MedOS can help quickly deploy robots to move blood samples and supplies.

Labs are a good place to start because they are not patient-facing, which makes it simpler to ensure that testing and diagnosis are faster and error-free, Cong explained.

Early pilots lead to GTC unveiling

MedOS is launching with support from NVIDIA, AI4Science, Nebius, and VITURE. It has been deployed in early pilots at Stanford, Princeton, and the University of Washington. Clinical collaborators can now request early access.

“We’re just starting to work with clinicians on testing surgical procedures on a mock body,” Cong said. “We want to make sure that for patient-facing applications, we’ve already tested with different levels of physicians and simulations before moving into actual clinical settings. MedOS has already proven to be robust and better than Gemini for spatial tests. It could be very flexible for surgical automation.”

MedOS will be showcased at a Stanford-hosted event in early March, followed by a public unveiling at NVIDIA’s GPU Technology Conference (GTC). Media, clinicians, and research institutions interested in early demonstrations or interviews can contact the MedOS team for coordination.

The Stanford-Princeton AI Coscientist Team is dedicated to jointly building real-time AI systems designed to work alongside human scientists and clinicians. It said LabOS and MedOS are deployed across leading universities and hospitals to accelerate discovery, reduce human error, and improve scientific and clinical outcomes.

“We’re in touch with hospital systems in the Northwest and soon the East Coast,” said Cong. “We’re sending data-collection tools to other partner institutions and for the next version, which will use massive amounts of benchmark data. It’s an ongoing progress, and multiple institutions are interested in MedOS.”