EDUCATION IN ACTION

Dear Friends and Partners,

Welcome to this special issue of Education in Action: AI in Praxis, where we present leading research and practical applications at the intersection of artificial intelligence and education.

Across these pages, you will discover how USF College of Education faculty, students and partners are reimagining education in ways that extend far beyond the classroom. We support learning at every stage, from early childhood through higher education and into community programs and workforce development. What unites it all is a deep commitment to producing scholarship that not only advances knowledge, but also creates translational solutions for real educational challenges.

You’ll read about:

• Smart systems and AI tools that support instruction across contexts and provide just-in-time scaffolds for learning.

• Extended reality and AI-enhanced experiences that transform interactive learning environments into spaces that support the co-construction of knowledge.

• AI ethics and decision-making frameworks developed hand in hand with learners and community partners to ensure that intelligent systems serve all.

These featured projects are not theoretical prototypes locked away in labs. Each one is grounded in field testing, district partnerships or community settings. They are being deployed, iterated, scaled and scrutinized for access, usability and impact.

As you turn the pages, I hope you see three imperatives shaping the future of education:

1. AI is amplifying human teaching and learning, not replacing it.

2. The most powerful AI systems are those designed with context, culture and collaboration in mind.

3. The future of education depends on transdisciplinary bridges, connecting K-12, higher ed, community and workforce organizations.

We are excited to share this work with you — to build new alliances, to spark new projects and to strengthen our collective capacity to transform learning for a rapidly evolving world.

Thank you for joining us in this journey. Your insights, engagement and leadership are what help turn innovation into impact.

Sincerely,

Jenifer Jasinski Schneider, Ph.D.
Interim Dean, College of Education
Professor, Literacy Studies
University of South Florida
jschneid@usf.edu

FROM HORTICULTURE TO HOMELESSNESS:

THE COLLEGE OF EDUCATION COLLABORATORY ON THE USF SARASOTA-MANATEE CAMPUS OFFERS EXTENDED REALITY TRAINING

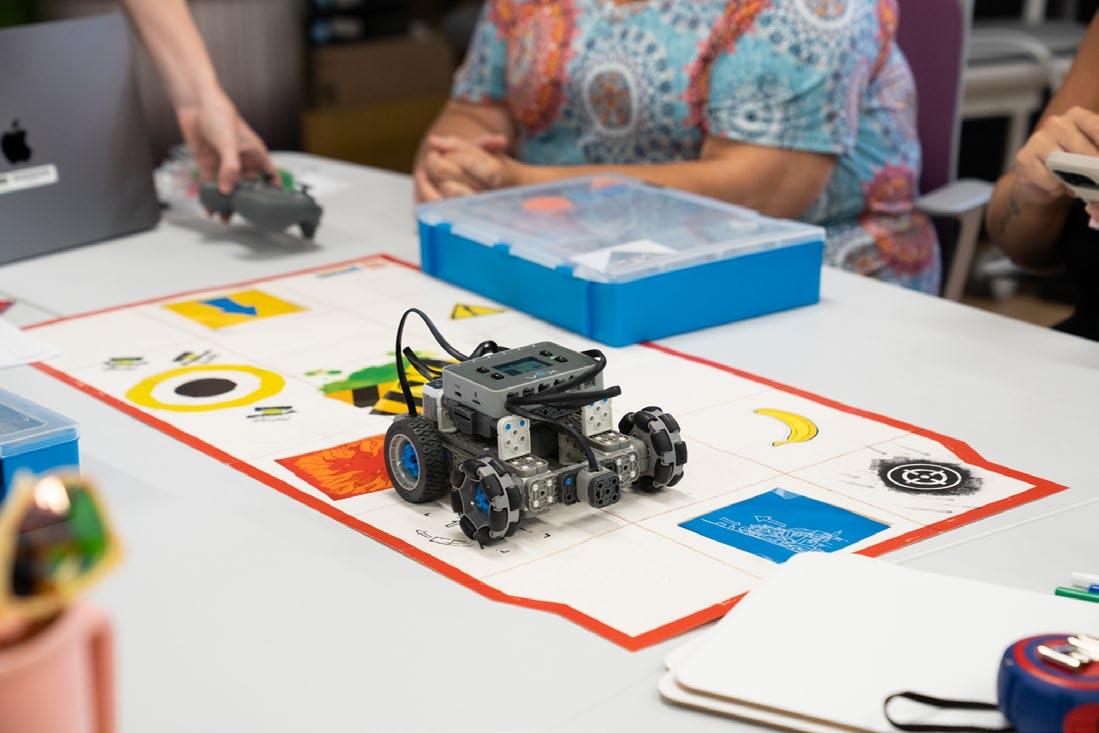

With technological tools ranging from animatronic robots and virtual reality goggles to grow chambers for plants, drones, sewing machines and 3-D printers, the College of Education Collaboratory at the University of South Florida’s Sarasota-Manatee campus is helping professors and students rethink what it means to teach and to learn in a rapidly changing digital world. And it’s extending the way we think about artificial intelligence (AI).

AI is part of Extended Reality (XR) experiences that Csaba Osvath, assistant professor of instruction, curates in his role as Director of the Collaboratory at the Sarasota-Manatee campus. The vision for the Collaboratory began with a grant from U.S. Representative Kathy Castor for a lab on the St. Petersburg campus with matching funds from the Sarasota-Manatee campus and recurring funding from the College of Education. The lab opened in Spring 2025 with rave reviews from community partners, school districts, and USF faculty, staff, and students. To help others understand how these technologies converge, Osvath illustrates the nuanced role AI plays within immersive XR environments.

“Extended reality (XR) encompasses augmented reality (AR), virtual reality (VR), and mixed reality (MR),” Osvath explained in a recent video interview, adding that AI plays a role in each of these domains but one which is not necessarily predictive or generative. As an example, Osvath described a person using VR who enters a world where they must interact physically with the environment and/or with other characters. In this case, AI acts as the behind-the-scenes interface between the user and the virtual world which makes possible the actual physical sensations of turning a virtual doorknob, and opening a door, or conversing with virtual characters.

Such interaction, Osvath said, changes learning from a “sedentary, disembodied” reading-about-something to a more sensory discovery-by-doing experience.

Teaching children to use tools always involves exercising caution. Osvath explained that newer headsets have eliminated earlier problems with motion sickness and headaches. However, as with television and movies, children learning to use VR may become deeply immersed in virtual experiences and narratives. Osvath recounted how some of his personal VR experiences involving historical times of war impacted him, as an adult, emotionally to the point that it took a few days for him to recover. “The psychological presence makes a huge difference,” Osvath said, noting they are curating the VR content students engage with and monitoring their responses to it.

“In medicine they call it [VR] the virtual morphine,” Osvath added. “They take burn victims into an icy-cold world with VR, and the technology has been shown to be better than morphine.”

Osvath tested that power of suggestion during this past hurricane season when the power was out. “I have this game where I landed on an icy planet and saw snow, and I actually felt physically cold.”

ENGAGING IN TRANSDISCIPLINARY SOLUTIONS

Not all tools are VR based. The sophisticated grow chamber, for example, can be programmed to simulate various conditions on earth or even in outer space. Osvath, whose first degrees earned were in horticulture and theology, explained this means students can be introduced to various agricultural conditions without leaving campus. “They can monitor soil, water, and nutrient levels and can take videos and time-lapse photos as the plant grows,” Osvath said.

In the Collaboratory, Osvath works primarily with pre-service teachers earning degrees in education, with professors in the COEDU, and with in-service teachers from area schools. Recently the Collaboratory co-hosted with the Education Foundation of Sarasota County an Education Roundtable Talk for local teachers to learn about project-based learning using immersive technology. Such technology can be useful for helping students understand situations that might not be safe for them to explore in the real world.

“One teacher from Booker Middle School has been bringing a group of students every other Friday to experiment with virtual reality and these virtual tools,” Osvath said. “We expose them to virtual storytelling, how to interact and navigate in virtual worlds, and how to make meaning from the experience.”

When a few of the students wanted to address the problem of homelessness, Osvath found an application that lets users experience virtually what it means to be a homeless person. “This means you can go and experience someone else’s story from the inside,” Osvath explained. “You could read about homelessness or maybe visit a site. But to experience it was different. They just talked about it for hours after that.”

Osvath, whose most recent doctorate is in literacy studies, understands the power of story—whether a fictional fairy tale or the more realistic story of a homeless person—to inspire change. In a 2018 article, “Ready Learner One: Creating an Oasis for Virtual/Online Education,” Osvath recounted how a fairy tale his mother read to him when he was very young motivated him, not to become the hero-prince but to invent a flotation device to save the horse that had drowned so the prince could live. Altering real-life story endings is at the heart of most inventions, Osvath noted in the article, writing, “Personal storytelling through writing often serves as the beginning of the actualization of a tangible future product.”

APPLICATIONS FOR OTHER COLLEGES AND DEPARTMENTS

It’s not just education professors and students stopping by to see the Collaboratory. Osvath says he keeps the door open when he is there and has had professors and students from other programs come into the lab. “I always encourage professors to see the lab as a new site for instruction,” Osvath wrote in a follow-up email, “especially for history and nursing.” Relevant technologies are available in both fields, Osvath explained, and he is creating a playlist of material for future use.

For now, however, Osvath focuses mostly on preparing for summer camp students, developing lesson ideas for teachers to use with younger learners, and working with Lindsay Persohn, assistant professor in the Literacy Studies program, to develop her grant-funded immersive VR application based on Lewis Carroll’s Alice’s Adventures in Wonderland. The new app helps middle-school students develop real-world problem-solving skills. Osvath also piloted a course this past summer that is part of a new Graduate Certificate called AI and Everyday Impact: Applied Practices. The certificate is a cooperative venture among the College of Education, the MUMA College of Business, and the Journalism Department in the College of Arts and Sciences and focuses on the non-technical and societal aspects of artificial intelligence.


WANT TO LEARN MORE?

In his keynote address, “Cosmos from Chaos,” presented to the 2020 Hillsborough Literacy Council’s Student/Tutor Appreciation Assembly, Osvath takes viewers with him into a virtual world where he describes how reading and writing have always involved “struggle, discomfort, and even pain.” Nevertheless, he has come to realize that those who persist through the pain are those who are truly “passionate” readers and writers.

Osvath coauthored a chapter in Teaching Multicultural Children’s Literature in a Diverse Society: From a Historical Perspective to Instructional Practice (Routledge, 2023). The chapter traces the historical development and pedagogies of immersive material and includes listings of interactive, non-virtual books, educational video games, augmented reality books, and numerous books that have been transported into virtual experiences.

To view the keynote visit: youtu.be/XngfeuWNFOk

INSIDE THE GAMERS’ CLUB:

ENGAGING ELEMENTARY STUDENTS IN CRITICAL CONVERSATIONS ABOUT GENERATIVE AI TOOLS THROUGH COLLABORATIVE COMPOSING AND CODING

In an era where artificial intelligence is reshaping nearly every aspect of life—including how young people learn and express themselves—one innovative project is putting the power of AI and gaming directly into the hands of youth. What began as a small, grant-funded initiative quickly evolved into a dynamic after-school experience known as the Gamers Club. Designed to merge youth culture, emerging technology, and educational innovation, the club offers students a space to explore storytelling, design, and problem-solving through the lens of game creation and AI tools.

At the heart of the project is Leah Burger, a doctoral student in Literacy Studies in the USF College of Education, whose deep connections to the community helped launch a unique collaboration with the Tampa Housing Authority. Initially joining the effort as a research assistant, she quickly stepped into a leadership role, shaping the club’s direction and mentoring participants. Together with faculty support and AI-powered platforms, the team created an engaging model for how education can evolve alongside technology, amplifying creativity, agency, and learning for the next generation.

A PROGRAM GROUNDED IN IDENTITY AND EXPERIENCE

The Gamers Club emerged at the intersection of educational expertise, personal passion, and a deep understanding of student engagement. Drawing on her background in English education and her long-standing love of video games, doctoral student Leah Burger envisioned a space where youth culture and academic learning could coexist and thrive. “I’m a writing teacher at heart,” she explained. “My master’s is in English Education, and I’ve been involved with the Tampa Bay Area Writing Project. But I’ve also always loved games, especially video games, and saw how central they were to my students’ lives, even though schools often overlooked them.”

That insight became the foundation for an innovative pilot project. Armed with a modest set of Oculus VR headsets, Burger teamed up with faculty mentors Jenifer Jasinski Schneider and James King to launch the first iteration of the club. At the outset, students engaged with existing VR games, analyzing their structure and design through a critical lens. But the program soon evolved from consumption to creation. Using accessible platforms like CoSpaces, students began designing their own augmented reality (AR) games, applying block coding to bring their stories to life. This shift not only democratized the technology but also empowered students to embed their own experiences, identities, and imaginations into every digital world they built.

GENERATIVE AI AND CRITICAL CONVERSATIONS

In its fourth year, the Gamers Club entered a new phase with the integration of generative AI tools like Canva’s Magic Write and image generator. Initially tested during a summer session, the use of AI was born from the students’ curiosity and quickly led to deep conversations.

“We noticed how a lot of critical conversations occurred naturally,” Burger said. “They were running into problems or

“AI was a great way to frame conversations about identity and representation,” said Schneider, who co-developed the curriculum with Burger. As interim dean of the College of Education, professor of Literacy Studies, and Burger’s dissertation advisor, Schneider played an instrumental role in the project. “When kids start generating ideas with AI, they’re really thinking about how their ideas show up in text or images, and how those texts and images reflect or distort reality,” she said.

Burger emphasized that this evolution of the Gamers Club has been a collaborative effort, but one deeply tied to her own academic journey. “The shift in focus is part of my dissertation,” she said. “I created the curriculum with Dr. Schneider, with support and feedback from the administrators. Every piece of it, especially this latest iteration, is grounded in what we’ve learned over time and what the students have asked for.”

Burger and the broader research team, the real value lies in how AI

By combining immersive technology, student-driven design, and space for critical discussion, the Gamers Club has become more than an after-school program. It’s a model for how education can honor students’ lived experiences while preparing them to think deeply and creatively about the digital world around them.

A SCHOLARLY PARTNERSHIP WITH REAL-WORLD IMPACT

Schneider sees the Gamers Club not only as a model for innovative education but also as an example of how faculty can support doctoral students to lead community-based, equity-driven research. “My role has always been to support Leah’s vision and to help her think deeply about what it means to engage in public scholarship,” she said. “What she’s doing is rooted in theory, but it’s lived, local, and community-responsive.”

The curriculum, now in its latest iteration, reflects what the team has learned from students over time. “This is a design-based experiment,” Burger said. “And every adjustment is based on what the students are showing us they need or are interested in.”

The partnership between USF and the Housing Authority continues to be vital. Schneider underscores the importance of this sustained collaboration. “The trust that Leah built through years of teaching in the community and staying connected as a scholar is what made this possible.”

CENTERING STUDENTS BY HONORING THEIR INTERESTS

What makes the Gamers Club unique is its commitment to honoring students’ lived experiences. By giving them the tools, and the trust, to create, question, and share, the program becomes more than a STEM initiative. It’s a space for identity exploration, cultural affirmation, and social commentary.

“One student created a game about travelling around the world,” Burger recalls. “That’s something that resonated with the student’s experience of travelling to New York City, and she used the platform to tell a story about it.” Others build game narratives rooted in their backgrounds or imagined futures.

“They’re bringing all their knowledge about their home experiences, communities, interests, and values to the games they create. It’s not easy, but they love it.”

A MODEL FOR THE FUTURE

As districts and schools look for ways to integrate technology meaningfully and equitably, the Gamers Club offers a powerful blueprint. It shows what’s possible when education centers student voice, combines immersive technology with critical pedagogy, and grounds innovation in community relationships.

For Schneider, the Gamers Club is a case study in sustainable, humanizing learning. “It’s not just about coding or AI,” she said. “It’s about young people seeing themselves as thinkers, creators, and citizens. And it’s about scholars like Leah doing the work that bridges theory and practice in ways that truly matter.”

As the Gamers Club continues to grow, one thing remains clear: it’s not just preparing students for the future. It’s helping them shape it.

The program also helps students recognize their own power as storytellers and designers. As Burger puts it, “We want them to understand they can build the kinds of systems they want to see. That they don’t have to just accept what already exists.”

WORLD OF WONDER

Using Innovation to Foster Middle School Community Engagement

INNOVATING STUDENT-DRIVEN INQUIRY

In an exciting fusion of classic literature and cutting-edge technology, an innovative virtual reality (VR) project is reimagining how middle school students engage with real-world challenges. Spearheaded by Lindsay Persohn, an assistant professor of literacy studies, the initiative draws inspiration from the parallels of Lewis Carroll’s Alice’s Adventures in Wonderland (1865) and Joseph Campbell’s The Hero’s Journey (1990) to create an immersive educational experience.

At the heart of the project is a prototype VR application that transports students into a fantastical world where curiosity, critical thinking, and creativity are the keys to navigating complex real-world problems—and discovering their own inner heroes.

Persohn’s deep connection to Alice’s Adventures in Wonderland began during her doctoral research, where she explored the story’s cultural legacy through the lens of nearly 6,000 illustrations. That fascination with Alice’s journey continues to shape her work today.

“Ultimately, we envision multi-player, interactive spaces like a library, workshop, and gallery where youth participants bring the challenges they notice in their communities to the ‘World of Wonder,’” said Persohn. “There, they can develop and test innovative solutions they can take back to their real worlds.”

As a key component of the prototype’s research and development, Persohn and her team are inviting local middle school students to participate in Camp at College World of Wonder, held at the College of Education Collaboratory on the USF Sarasota-Manatee Campus. The camp offers students hands-on opportunities to engage with emerging technologies in a supportive, imaginative environment.

During the program, students explore a variety of virtual reality tools, including the prototype application itself. They rotate between a “VR Station” and an “IRL Station,” where they use creative tools to experience the innovation process firsthand. The goal is to help students see how their ideas can take shape and how they might impact the world around them someday.

“Imagine a world where Alice’s Wonderland frames immersive learning experiences,” said Persohn. “Each area in this ‘World of Wonder’ offers opportunities for participants to explore, create, and collaborate to solve real-world problems.”

In a space where imagination meets innovation, it’s no surprise that some of the most extraordinary ideas emerge from the youngest minds. One student, moved by the challenges of red tide in her local area, proposed a thoughtful solution of creating an innovative vending machine that dispenses often forgotten beach necessities in exchange for litter. Her invention showcased not only creative problem solving but the determination that defines this generation’s approach to real-world issues.

A CAMP AT COLLEGE STUDENT STORY

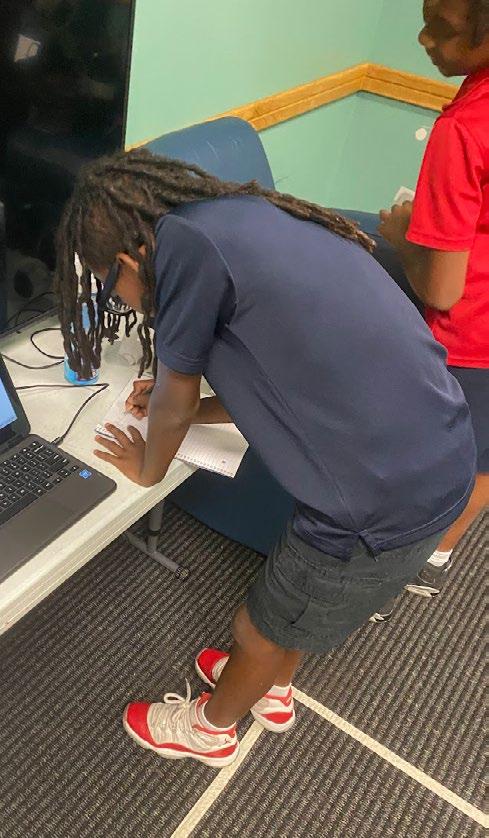

Solving an issue prevalent in the community never seemed daunting for Chrisean. Every other Friday, Chrisean entered the Camp at College World of Wonder program, ready to tackle issues of homelessness to help unhoused people in need.

And on this particular week, the students were working to apply their research to prototype a design that solves their community issue. Chrisean decided to build modular buildings with rooftop gardens so those in need could live and feed themselves fresh fruits and vegetables.

Focused, he began to build his design with magnetic blocks that matched the modular style of his innovation. Ten minutes later, with almost the last block in place, the whole design fell.

So, he calmly rounded up the blocks and went to build it again. Another ten minutes pass, and he’s got the housing units, rooftop garden, and doors. Just as he is almost finished, the structure crashes again.

Although some people might be frustrated by these events, the Camp at College students know that this is part of the design process: creating prototypes is an important way to test innovations, and problems are also opportunities.

Chrisean takes a step back, thinks, and begins to build a bit more slowly, adding more internal structure to his design. And as he builds, so does another idea: a communal living space, with a communal rooftop garden.

Inspired by his more structured approach to the building, he realized it might be too much work for one person to tend a garden, and many hands make light work. In this iteration, he also talks about housing chickens in the rooftop garden space, so tenants would have access to eggs.

This time, the structure holds, and Chrisean smiles, knowing he is one more step closer to innovating a solution that helps his community.

Chrisean’s idea and the challenge he identified exemplify the remarkable creativity emerging from the program’s participants. Each student has brought their own unique perspective, tackling the issues that matter most to them with imagination, insight, and a strong sense of purpose.

One major challenge Persohn, based at the USF Sarasota-Manatee campus, has identified amongst participants in these sessions is the emotional and psychological toll recent hurricanes have taken on children in the Sarasota and greater Tampa Bay area. Many have faced frightening experiences like flooded homes, disrupted routines, and a sense of helplessness.

“It’s such a scary time to be a kid,” Persohn said. “I remember thinking when I was young, ‘Well, I’m just a kid. What can I do?’ We want children to know they do have a voice—and that their ideas and actions can make a real difference in the world.”

Insights gathered during these hands-on sessions play a vital role in shaping the ‘World of Wonder’ virtual reality prototype. Fueled by real student experiences and feedback, the development process is a true collaboration between research and imagination. With USF undergraduate students at the Advanced Visualization Center leading much of the app’s creation, the team is designing interactive tools that empower young minds to tackle some of the world’s most pressing challenges.

As the team looks to the future, they’re already envisioning an exciting feature. They plan to integrate an AI-powered guide to support students navigating their identified challenges, helping them spark new ideas and stay motivated throughout their problem-solving journey.

“Within the ‘World of Wonder’ app, we plan to have the Cheshire Cat act as an AI support help button,” said Persohn. “Integrating AI technologies will support individualized and tailored responses to user questions as they work within the virtual world.”

Backed by the Florida High Tech Corridor Early-Stage Innovation Fund and an Interdisciplinary Research Grant from the USF Sarasota-Manatee Office of Research, ‘World of Wonder’ is paving the way for a new kind of learning where students are not just participants, but pioneers. With each new idea and iteration, the project proves that young minds can imagine and build a brighter future when they have the right tools.

TEACHERSERVER.COM:

A NO-COST, PRIVATE HUB OF AI TOOLS FOR TEACHERS

An idea is a powerful thing, yet so fleeting. We have countless ideas every day. They pop into our minds unexpectedly and vanish just as quickly, each with unrealized potential. Occasionally, an idea sticks and is nurtured into something transformative.

Zafer Unal, a professor of elementary education in USF’s College of Education, is revolutionizing teaching with his groundbreaking idea, TeacherServer.com. In an era where artificial intelligence (AI) is rapidly transforming entire industries, TeacherServer.com stands out as a beacon of innovation in education. This powerful, free, and private resource includes over 1,100 AI-enhanced tools designed specifically for K-12 teachers and higher education faculty to enhance their teaching methods.

“Teachers with AI knowledge will stand out when looking for a job and doing things efficiently,” said Unal. “Rather than starting from scratch, you can start at 80 percent and spend the same amount of time perfecting your work.”

TeacherServer.com is a robust, no-cost hub of AI tools designed to make life easier for teachers, from daily lesson planning to specialized supports for assessment and accommodations. It’s ideal for educators seeking a private, fast, and comprehensive AI teaching companion.

TeacherServer.com empowers educators to harness the potential of AI. By developing advanced technologies specifically for teachers, Unal’s creation is shaping the future of education. As we navigate the complexities of the 21st century, TeacherServer.com exemplifies how a single idea, when nurtured and developed, can lead to transformative change, addressing the evolving needs of teachers and students.

MAJOR CONCERNS

Do you use artificial intelligence?

If so, how do you use it?

If not, why are you not using it?

In a survey of teachers, Zafer Unal and colleagues found that the biggest concern was the privacy and security of student data. Teachers did not want student information to be stored and utilized by corporate algorithms, especially as it could violate the rules of their schools and districts. The risk was just too significant.

“Teachers were telling us that the risk was just too great when it came to the privacy and security of AI tools,” said Unal. “They didn’t want to upload sensitive student data into these AI programs out of fear for who that information will be available to and what it may be used for.”

Costs dissuaded many teachers from using the technology in their classrooms. AI tools can get expensive, with many market-leading AI tools charging around $20 monthly. This price point can quickly steepen once you incorporate specialty tools for generating images, videos, and sound, making them inaccessible for many teachers without free access.

Many teachers found existing tools frustrating and inefficient—generating a single rubric or assignment often required lengthy, 20-question exchanges with an AI prompt over the course of 40 minutes. What they really needed was a tool that could instantly generate content by simply selecting a grade level, subject area, and relevant state standards. Unfortunately, leading products on the market failed to meet this need, leaving teachers stuck in time-consuming and repetitive prompting.

Teachers expressed concerns over insufficient training, especially for using AI in a learning environment. Without proper strategies to efficiently prompt, analyze correctness, and modify results, implementing AI in the classroom can be a daunting undertaking.

A NOVEL IDEA CATCHES FIRE

Unal embarked on a mission to create an AI platform, TeacherServer.com, explicitly tailored to the needs and interests of teachers. His first step involved downloading an open-source AI model onto the USF server, which enabled enhanced privacy and security measures.

Unlike other tools available, and somewhat counterintuitively for an AI product, TeacherServer.com was instructed not to collect data or train itself based on user behavior, such as prompting.

This proactive approach directly addresses teachers’ primary concerns about privacy and security, guaranteeing a safe and protected learning environment.

TeacherServer.com is free for all users. This accessibility revolutionizes the way teachers can explore and integrate AI tools into their teaching practices, removing financial barriers entirely.

With two major concerns addressed, Unal and his team turned their attention to designing an innovative interface that goes beyond the traditional chat box. They actively gathered input from teachers, seeking insights on how they utilize AI in their work and brainstorming ideas for an initial set of tools to include on the platform.

TeacherServer.com launched with 49 tools, featuring a variety of lesson plan and quiz generators. As teachers began logging on and experimenting, requests for new tools poured in. Today, TeacherServer.com includes over 1,100 tools and counting, all meticulously designed with teachers, for teachers. When selecting a tool, TeacherServer.com prompts users to complete a form to initiate the conversation. This feature allows educators to input key information (such as state and national standards, grade level, subject area, point scales, and more) based on the specific requirements of the tool. As a result, it significantly reduces the time spent on back-and-forth prompting.

Once the form is completed, TeacherServer.com generates initial materials. Users can then prompt the AI tool to make necessary adjustments, maintaining control while significantly increasing efficiency.
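For readers curious about why a form can replace a long chat exchange, here is a minimal sketch in Python. It is an illustration only, not TeacherServer.com's actual code: the `ToolForm` fields, the `build_prompt` function, and the placeholder standard code are all hypothetical. The idea it shows is that everything a teacher would otherwise supply over many chat turns is gathered once and turned into a single, fully specified request.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ToolForm:
    """Hypothetical form fields; the real platform's fields may differ."""
    tool: str                      # e.g. "rubric" or "quiz"
    grade_level: str
    subject: str
    standards: List[str] = field(default_factory=list)
    point_scale: Optional[int] = None


def build_prompt(form: ToolForm) -> str:
    """Turn one completed form into a single request for an AI model."""
    lines = [f"Create a {form.tool} for {form.grade_level} {form.subject}."]
    if form.standards:
        lines.append(
            "Align it to these standards: " + "; ".join(form.standards) + "."
        )
    if form.point_scale is not None:
        lines.append(f"Use a {form.point_scale}-point scale.")
    return "\n".join(lines)


# "STANDARD-1" is a placeholder, not a real standard code.
form = ToolForm(
    tool="rubric",
    grade_level="Grade 5",
    subject="science",
    standards=["STANDARD-1"],
    point_scale=4,
)
print(build_prompt(form))
```

Any follow-up adjustment a teacher asks for would then be an ordinary chat message sent after this first request.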

Beyond the classroom, the platform offers tools for parents to simplify homework instructions and create study materials. It also caters to college faculty with tools such as the peer review simulator, which assists in preparing research papers or projects for rigorous review processes.

Unal has been conducting both virtual and in-person workshops to introduce participants to AI and machine learning. These sessions explore how to responsibly integrate the technology into curricula, enhance lesson plans, and improve learning outcomes. By offering this training, Unal is addressing the final key concern teachers have when using AI in the classroom.

In a little over, TeacherServer.com has taken off, now with over 4 million users and counting. The workshops do more than introduce participants to AI and machine learning; they’re also a powerful way to build awareness of the platform. As excitement grows, more teachers across the country are exploring its features and discovering how it can simplify and enhance their classroom planning.

Remarkably, this growth happened without any formal marketing initiatives. TeacherServer.com’s user base expanded purely through word of mouth, a testament to the platform’s significant impact on teachers and their ability to adopt AI technologies.

AI EDUCATION SPREADS ON CAMPUS

In the ever-evolving landscape of education, Stephanie Arthur, an assistant professor of instruction in science education at the USF College of Education, is leading the way in integrating artificial intelligence into teacher training. By introducing her students to advanced AI tools such as TeacherServer.com, Arthur seeks to prepare future educators to engage with these technologies in thoughtful, responsible ways. Her mission is to provide practical familiarity with AI while deepening conceptual understanding, ensuring they are equipped to lead and innovate in educational practice.

“We’re at the very cusp of generative AI blowing up across the country as far as education is concerned,” said Arthur, an advisory board member for TeacherServer.com. “We have to do a better job of making sure our teachers understand it and can use it properly.”

Arthur tackles this challenge head-on by weaving lessons on generative AI into her undergraduate and graduate courses. She underscores the significance of a deeper understanding of AI, delving into the intricacies of large language models (LLMs) and machine learning. In her classes, students investigate the data fed into these models and unravel the process behind generating outputs.

In one assignment, Arthur has her classes explore lesson plans generated by popular AI tools. This exercise helps her students realize that AI isn’t perfect; it makes mistakes.

“It is lots of fun because the students pick apart the AI-generated lesson plan, and they’re just amazed at the number of errors it contains,” said Arthur. “It is empowering for them to realize that they are in control and more knowledgeable than a bot or AI tool.”

Helping future educators grasp the complexities of AI equips them to explore thoughtful, research-informed ways to integrate it in support of teaching and learning. Within this intellectually rich training environment, Arthur introduces a range of AI tools—emphasizing free, accessible platforms—and fosters critical engagement with their use. This structured exploration opens the door to in-depth practice with prompt engineering, enabling students to generate learning resources such as assignments and images. They also learn to detect and evaluate AI hallucinations, instances in which models produce responses despite lacking accurate or factual information.

“It’s a frustrating process because it’s like having a conversation with someone who doesn’t understand you, and you’re constantly saying, ‘No, that’s not what I mean,’” Arthur explained.

“Through the practice of prompt engineering, students discover that it’s not a quick fix but rather a more nuanced and detailed dialogue.”

Arthur emphasizes the importance of a deep, comprehensive understanding of AI, noting that these future educators will not only soon be guiding K–12 students but also introducing fresh perspectives and innovative AI-driven methods to the classrooms where they are apprenticing. Through her holistic approach, Arthur ensures her students are prepared not only to teach the next generation, but also to support and lead their colleagues in navigating this rapidly evolving technological landscape.

With a solid understanding of AI principles and practices, Arthur guides her students in applying their knowledge through hands-on use of TeacherServer. Each student develops an AI-assisted lesson plan, which they implement during their practicum or internship in local K–12 schools. This experience not only reinforces their learning but also allows them to introduce innovative, AI-supported instructional methods to practicing teachers in the field.

“Our work is preparing our future teachers to leverage and harness generative AI,” said Arthur. “They will become the leaders in their schools who guide veteran teachers through implementing AI in the classroom.”

THE IDEA FACTORY

Unal is just getting started. He and his team are tirelessly working on new tools, listening to teachers, and creating solutions to foster strong learning environments. Despite the continuous innovation, Unal’s commitment to providing a secure and free platform remains unwavering.

“I’m thrilled! USF supports creative scholarship, and my role as a professor provides me with the academic freedom to take on more ambitious projects,” said Unal. “This is my moment to take a risk and tackle innovative initiatives like TeacherServer.com.”

TeacherServer.com is just the beginning for Unal. He is developing several new AI tools, including an AI-powered platform for faculty members and college students called FacultyServer. It will build upon many of the “College Faculty” tools offered in TeacherServer.com, providing even more robust resources for higher education.

Beyond the classroom, Unal is working on an AI-powered social platform called December 3 Club. The goal is to develop a social media platform specifically designed for people with disabilities, focusing on accessibility, inclusivity, and meaningful connections.

The project started in a teacher education course in the USF College of Education, where Unal was inspired by student feedback on the pros and cons of social media. A few students with disabilities went beyond the usual concerns regarding social media in education and suggested improvements to help make these platforms more accessible.

Unal couldn’t let an idea as powerful as this fade away. He is now developing features based on these student insights in hopes of launching the platform on December 3, 2025, the International Day of Persons with Disabilities.

“I’m just excited to take on new projects,” said Unal. “The December 3 platform is just such a special idea and what better place to start it than USF?”

Despite all his groundbreaking work, Unal remains dedicated to giving back and shaping a brighter future in education and beyond. He welcomes every new idea with open arms, encouraging his students never to fear innovation. After all, it only takes one idea to change the world.

INNOVATION LANE:

THE COLLEGE OF EDUCATION TECHNOLOGY LABS ON THE USF ST. PETERSBURG CAMPUS

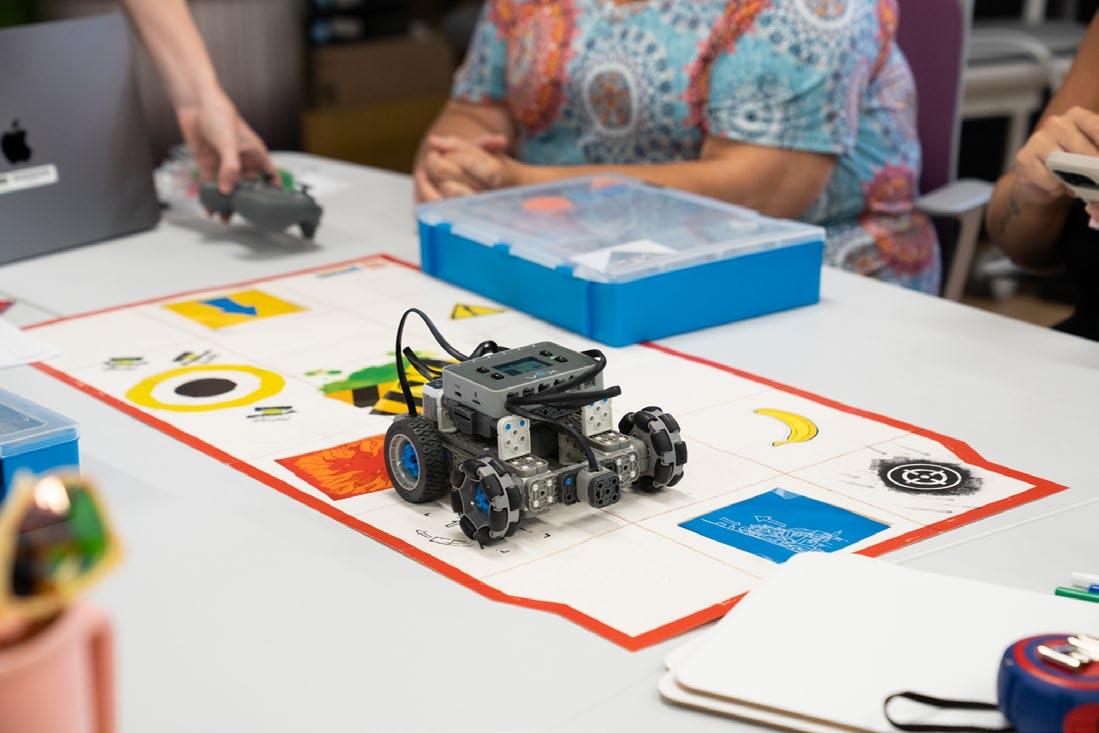

On the USF St. Petersburg campus, Innovation Lane is home to the Emerging Technology Lab and the neighboring STEM Lab. This College of Education corridor is reshaping the way educators-in-training and community members engage with immersive technology.

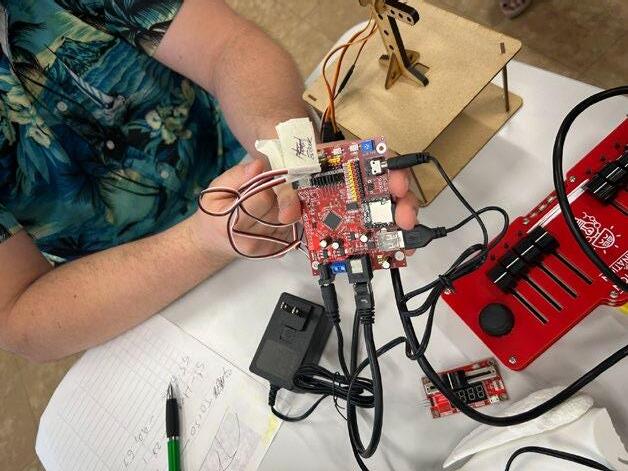

Under the leadership of David Rosengrant, professor of science education, and in collaboration with Richard Rho, Director of Technology for the USF College of Education, participants engage in hands-on experiences with tools for virtual reality, augmented reality, and drones. Activities are designed to prepare future teachers for rapidly evolving learning environments by simulating scenarios they may face in the years ahead—including working with different learners and incorporating interactive tech into instruction.

Backed by federal funding and a National Science Foundation grant, the lab features state-of-the-art equipment such as Oculus headsets and interactive display systems, making high-level tech accessible to students and local organizations. The initiative also supports STEM participation from underrepresented groups. Positioned within Tampa Bay’s growing innovation landscape, the lab functions as both a teaching tool and a community resource, helping to build future-ready educators while fostering local partnerships in technology and education.

ETHICAL AI WITH CHILDREN AT THE CENTER OF DECISION-MAKING

As artificial intelligence continues its swift integration into educational settings, much of the discourse has focused on its use in secondary and higher education - namely, fears of cheating, plagiarism, and the erosion of academic integrity. But Ilene and Michael Berson, professors at the USF College of Education, have spent decades studying the intersections of emerging technology and child development, and they’re raising critical questions about what AI means for the youngest learners among us.

“AI is already here,” Ilene Berson said. “And children are engaging with it in more ways than we often realize - through toys, smart speakers, and even classroom tools.”

THE QUESTION OF AI AND SOCIAL-EMOTIONAL DEVELOPMENT

One of the Bersons’ most pressing concerns is how early exposure to AI can shape a child’s social-emotional development, cognitive growth, and understanding of relationships. In early childhood education, a field that has long prioritized the importance of human-centered interaction, there is growing unease about how AI-enabled technologies might disrupt vital human connections.

“We’re seeing classrooms adopt social robots and emotion-recognition tools that simulate empathy,” Ilene Berson said. “But what happens when a child begins to confuse that simulation for authentic human responsiveness?”

The risk, they argue, isn’t malicious misuse but unintended harm, especially in developmental years when children are still learning to navigate relationships, build trust, and understand abstract concepts like privacy or data collection. These formative experiences can have long-term implications if left unexamined.

ETHICS BEYOND THE ALGORITHM

In their work, the Bersons emphasize that AI itself is not inherently good or bad; what matters is how it’s designed, implemented, and contextualized. They advocate for a child-centered, participatory approach to AI in education, one that questions:

• Whose interests are being served by these tools?

• What values are embedded in their design?

• Are we prioritizing efficiency over creativity and curiosity?

“We see a troubling trend toward using AI to streamline learning into narrow, efficiency-driven pathways,” said Michael Berson. “That can erode opportunities for critical thinking and child-led inquiry, especially in early education, where those qualities are foundational.”

Instead, they encourage the use of AI to expand children’s access to cultural and developmental resources, particularly when done in ways that are playful, creative, and inclusive.

DIGITAL LITERACY AND AI

A key theme in the Bersons’ research is the evolving concept of multimodal literacy - the ability to navigate, interpret, and critically engage with varied forms of media and technology. In the AI era, this includes understanding not just how to use tools, but how they work and why they matter.

“A child might be using AI without even knowing it,” Ilene Berson notes. “But the role of educators is to pull back the curtain, help students and families understand what’s happening behind the scenes, and foster intentional use.”

For educators to do that effectively, however, they need support.

RETHINKING PROFESSIONAL LEARNING

Despite a wave of school-led AI trainings, many educators still feel unprepared to meaningfully integrate AI into their classrooms, especially in age-appropriate, ethical ways.

Professional learning must go beyond the technical “how-tos” and delve into the why and what ifs. The Bersons stress the need for:

• Ongoing training on critical assessment of AI tools.

• Collaborative, participatory models where educators, researchers, and families co-design AI experiences.

• Clearer policies and safeguards around data privacy, especially for tools collecting biometric or emotional data.

“AI tools are often dropped into classrooms without input from teachers, children, or families,” Michael Berson said. “We need to empower educators to shape how these technologies are used, not just react to them.”

COMPARISON OF AI POLICYMAKING AROUND THE WORLD

Finland: Participatory policymaking with educators and parents

EU: Child-rights and privacy-focused approach

China: Rapid national implementation

US: Mixed policies, often reactive rather than proactive

GLOBAL LESSONS, LOCAL ACTION

Drawing from international collaborations, the Bersons point to places like Finland and the EU as models of participatory policymaking and children’s rights-based approaches. In contrast, other regions focus on rapid implementation, often without fully considering the social implications.

“There’s no one-size-fits-all model for ethical AI,” Ilene Berson said. “But fostering open dialogue, rooted in our local values and the needs of our communities, is the first step.”

As the Bersons make clear, the question isn’t whether children can use AI - they already do. The real question is how we, as educators, researchers, and community members, guide them toward thoughtful, empowering, and ethical engagement with these tools.

In a world where digital and physical realities are increasingly blurred, it’s our responsibility to ensure that the next generation grows up not just tech-savvy, but also able to socially and emotionally connect the dots.

MICROLEARNING SOLUTIONS FOR HIGHER EDUCATION:

OPEN ACCESS LEARNING FOR PRACTICING EDUCATORS

As artificial intelligence begins to reshape education, educators are increasingly being called upon to understand and integrate these powerful technologies into their teaching practices. But for many in-service teachers, already juggling heavy workloads, keeping up with technological advancements can be a daunting task.

Sanghoon Park, professor of instructional design and technology at the University of South Florida, is helping bridge that gap. Through an innovative AI literacy training module designed specifically for teachers, Park is offering a practical, flexible, and timely resource to support educators on their journey into the world of AI.

“My research centers on improving teachers’ and learners’ experiences using various learning technologies,” said Park, who has spent years exploring the intersection of technology and instruction. In recent semesters, his focus has shifted toward the educational implications of generative AI—an area that has seen explosive growth with the rise of tools like ChatGPT, DALL·E, and other large language models.

“Over the last couple of years, I started to explore how teachers are dealing with the rise of generative AI in their classrooms and in their daily instructional practices,” Park said. “There’s a lot of excitement, but also a lot of uncertainty.”

Recognizing a growing need for foundational knowledge and accessible professional development, Park developed a training module aimed specifically at in-service teachers, those already working in classrooms. Rather than delivering the training in a traditional, lecture-based format, he turned to microlearning: a strategy that breaks complex topics into brief, easily digestible lessons that can be completed in just minutes at a time.

The result is an engaging, user-friendly online resource that introduces educators to key concepts in AI, including how it differs from traditional computing, the basics of machine learning, and the role generative AI plays in creating content. Importantly, the module doesn’t stop at technical explanations—it goes further, offering practical classroom applications, ethical considerations, and discussion prompts to help teachers reflect on how AI might impact their work and their students’ learning.

“I created the module as a series of short videos with interactive activities and reflection prompts,” Park explained. “Teachers don’t have a lot of time, so I wanted to give them something they could work through at their own pace, when it’s convenient for them.”

The training is designed to be both foundational and forward-looking. Teachers can explore how AI can support lesson planning, feedback generation, content creation, and student engagement. At the same time, they are prompted to think critically about the challenges AI presents, from concerns about plagiarism and bias to issues of equity and student data privacy.

One of the most innovative aspects of the project is its collaborative design. Rather than developing the module entirely on his own, Park involved students from his graduate-level instructional design course at USF in the creation process. “This training module actually emerged from a course project,” Park said. “I had my students create individual learning units on AI literacy as part of their final assignment. Then I compiled their work into this broader resource.”

DESIGNED FOR: IN-SERVICE TEACHERS

FORMAT: SHORT, DIGESTIBLE LESSONS (can be completed in minutes)

The collaboration provided a valuable two-way learning experience. Graduate students gained hands-on experience in designing learning materials for a real-world audience, while the teachers who used the module benefited from fresh, research-based insights delivered in creative and engaging formats.

“It was really important to me that this wasn’t just a theoretical exercise,” Park said. “By creating something that could actually be used in the field, my students were able to see the impact of their work—and the teachers gained access to practical, peer-reviewed content.”

Another important feature of the training module is accessibility. Park made the entire course available online for free, ensuring that any educator, regardless of location, school funding, or professional development budget, can benefit from it. “You can complete the whole module in about an hour, or just choose a few units that interest you,” he said. “It’s designed to be very flexible.”

TOTAL TIME: ~1 HOUR TO COMPLETE ENTIRE COURSE

100% FREE AND ONLINE

Park is already looking to expand the module’s impact. He’s considering offering certificates of completion to recognize teachers’ learning and is exploring partnerships that could bring the module to a broader audience. “Right now, the focus is on helping teachers get started,” he said. “But we’re thinking about how to take this further: how to build out more advanced modules, or how to adapt the content for different grade levels and subject areas.”

He also sees the project as a starting point for deeper engagement between universities and the broader educational community. “I really believe that we, as instructional design faculty, should be creating more open-access learning experiences,” Park said. “We have an opportunity to be part of this moment, this shift that’s happening in education, and to support teachers in a meaningful, practical way.”

As the pace of technological change accelerates, Dr. Park’s work offers a model for how higher education, emerging instructional designers, and practicing educators can come together to address complex challenges. By creating scalable, flexible, and relevant resources, he is not only helping teachers understand AI but also empowering them to shape its future in the classroom.

“I see this as a living project,” he said. “There’s so much potential for growth in this area, and we’re just beginning. If we can empower teachers with the right knowledge and tools, they’ll be ready to guide their students through whatever comes next.”

To join the training or preview the content, email Dr. Park: park2@usf.edu

GAME-BASED LEARNING AND AI-POWERED PLAY: A VISION FOR AI-POWERED LITERACY TOOLS

In a digital world overflowing with instant information, reading comprehension and critical literacy are in crisis, especially among younger generations. Glenn Smith, professor of Instructional Technology in USF’s College of Education, is tackling that crisis head-on with a bold blend of educational research, interactive design, and artificial intelligence.

“Students can read words and sentences, but struggle with deeper comprehension like evaluating sources, comparing documents, and identifying bias,” Smith said. “These are essential skills, especially now that AI can generate content that looks credible but isn’t always accurate.”

Smith’s latest work addresses a fundamental challenge in the field of educational technology: the high cost and complexity of developing meaningful learning games. His solution? A scalable, AI-driven model that empowers teachers (not developers) to create their own educational games in just minutes.

FROM IMAPBOOKS TO AI-POWERED PLAY

Smith’s journey into interactive literacy tools began more than a decade ago with the creation of “Imapbooks”: web-based e-books interspersed with comprehension games that students had to “win” before moving on. Over time, he layered in social elements such as digital book clubs and real-time chat discussions to support collaborative learning.

But something was still missing. “Too often, students engage in online discussions without actually processing what their peers are saying,” Smith said. “I wanted to design something that made discussion meaningful.”

That led to his next innovation: the CREW discussion model. In CREW, students read a passage, chat about it in a linear SMS-style format, and then co-write a collaborative response, forcing them to listen, reflect, and agree on a shared interpretation.

Smith has deployed these tools in classrooms from Tampa to Slovenia, and recently received a Fulbright award to expand his research into Serbian schools. But the heart of his current focus is even more ambitious: helping educators overcome the bottleneck of content creation through an AI-driven platform called Saboteur.

SABOTEUR: AI MEETS GAME-BASED LEARNING

Saboteur flips the script on educational game design. Instead of requiring teams of coders and months of development time, teachers simply upload a short passage of text. The system, powered by AI, generates a multiplayer role-playing game where students debate and evaluate multiple documents, some of which may be AI-generated.

In disciplines like English or civics, students might be tasked with identifying which text was written by a human versus a machine. In science or history, they could compare versions to determine which is more accurate or well-reasoned.

“This is true game-based learning. Students are navigating interactive, decision-based experiences,” said Smith.

The goal isn’t to trick students, but to train them. Smith sees this as a way to sharpen higher-order skills like source evaluation, credibility analysis, and collaborative writing; these are 21st-century competencies that are vital in an AI-saturated world.

GAMIFICATION

• Adds game elements to traditional tasks (e.g., badges, leaderboards)

• Often used in quizzes or assessments (e.g., Kahoot)

• Motivates behavior

GAME-BASED LEARNING

• Involves actual gameplay as the learning experience

• Focused on decision-making, interaction, and storytelling

• Builds critical thinking and collaboration

We’re democratizing game design so educators can shape learning in real time.

A PROSUMER APPROACH TO GAME DESIGN

Underlying all of Smith’s work is a radical idea: teachers should be able to create their own educational games as easily as they create a YouTube video.

Inspired by the content creation boom that fueled platforms like Instagram and TikTok, Smith envisions a “prosumer” model where educators are both producers and consumers of instructional games. In the future, he hopes to add a social-sharing component that would allow teachers to exchange game templates and adapt each other’s designs.

“We’re democratizing game design,” Smith said. “It shouldn’t take months of development or thousands of dollars to build something engaging and pedagogically sound.”

WHAT’S NEXT

With studies underway in Florida and upcoming international research, Smith is optimistic about the potential impact of Saboteur and other AI-driven tools. A prototype is currently live on a private server, with plans to expand access once security and publishing considerations are addressed.

His team includes doctoral students and postdocs collaborating across institutions and countries, reinforcing the interdisciplinary and global nature of this work.

“Ultimately,” Smith said, “this is about making critical literacy engaging and accessible, and giving teachers the tools they need to lead that charge.”

ENHANCING GRADUATE STUDENT LEARNING:

DATA ANALYSIS WITH THE POWER OF AI

Bo Pei, assistant professor of instructional technology in the USF College of Education, is revolutionizing the learning experience for his students. From developing sophisticated chatbots powered by large language models to crafting cutting-edge AI algorithms, Pei is leveraging the power of artificial intelligence to enhance educational outcomes and foster a dynamic learning environment.

The journey began in an online data visualization course for master’s students. Many of his students lacked backgrounds in data analysis, requiring them to sift through extensive learning materials to grasp the data sets and identify effective visualization strategies. Compounding this challenge, most students were juggling full-time jobs and additional classes, leaving little time for thorough reading.

This dilemma inspired Pei to create a learning platform that integrates all the course materials with an AI chatbot to guide students. The platform offers personalized assistance, helping students navigate the course content based on their unique learning needs.

“This platform can reduce the time it takes students to navigate the course materials and look for answers,” Pei explained. “Students can quickly find answers and simultaneously delve deeper into relevant concepts.”

The platform also tracks each student’s interaction history as they move through the course. By recommending targeted follow-up questions and resources based on this data, the platform can help students stay aligned with course objectives while exploring topics that spark their interest.

An intuitive feature of the platform helps students who struggle with large-scale data analysis: they can upload their data sets directly into the system and receive a concise summary. This feature helps demystify complex data, allowing students to focus on interpretation and visualization rather than getting bogged down in raw numbers.

“The platform will generate context to describe the data set,” said Pei. “By generating distributions, means, medians, and more, the platform provides students with text descriptions of their data sets instead of numbers on a sheet.”
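As a rough illustration of what turning numbers into a text description can look like, the sketch below summarizes one column of data in a sentence. It is not Pei's platform, which uses an AI chatbot; the `describe_column` function and the sample scores are hypothetical, and only Python's standard `statistics` module is used.

```python
import statistics


def describe_column(name: str, values: list[float]) -> str:
    """Return a plain-language summary of one numeric column."""
    mean = statistics.mean(values)
    median = statistics.median(values)
    if mean > median:
        relation = "higher than"
    elif mean < median:
        relation = "lower than"
    else:
        relation = "equal to"
    return (
        f"{name}: {len(values)} values ranging from {min(values)} to {max(values)}; "
        f"mean {mean:.1f}, median {median:.1f}. "
        f"The mean is {relation} the median."
    )


# Made-up quiz scores with one unusually high value.
scores = [55, 60, 62, 70, 98]
summary = describe_column("quiz_score", scores)
print(summary)
```

A mean that sits well above the median hints that a few high values are pulling the average up, which is the kind of observation a student can then explore with a visualization.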

Additionally, the platform generates goals and recommendations based on this data set. For example, it may push students to analyze the relationship between two specific columns of data or utilize a well-suited data visualization strategy.

Pei isn’t stopping at this platform; he is actively building AI algorithms to help analyze student learning data. He aims to identify learning gaps early in a course before it would be possible for a professor or instructor to notice them on their own. This identification would allow for more precise intervention strategies to enhance student learning.

“At the early stages of a course, many students do not realize that they are struggling,” said Pei. “We can identify these students early and guide our instructors to provide the necessary information or help to these students.”

In many educational contexts, data often reflects imbalances that can unintentionally lead machine learning algorithms to favor majority trends. Pei and his team have developed another specialized platform enabling users to assess model performance, detect biased labels, and examine fairness across student groups.

The platform incorporates bias detection and mitigation strategies, either rebalancing datasets or generating post-processing insights that help instructors interpret results that may carry unintended bias. By helping educators recognize where bias may occur, the platform enables them to create fairer learning environments.
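Two of the ideas mentioned here, examining fairness across student groups and rebalancing a dataset, can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the method Pei's team uses: the function names and sample records are hypothetical, and random oversampling stands in for whatever rebalancing strategy the platform actually applies.

```python
import random
from collections import Counter, defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, true_label, predicted_label) tuples."""
    hits, totals = defaultdict(int), defaultdict(int)
    for group, truth, pred in records:
        totals[group] += 1
        hits[group] += int(truth == pred)
    return {g: hits[g] / totals[g] for g in totals}


def oversample(rows, label_of, seed=0):
    """Duplicate random rows of smaller classes until every class matches the largest."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for row in rows:
        by_label[label_of(row)].append(row)
    target = max(len(v) for v in by_label.values())
    balanced = []
    for class_rows in by_label.values():
        balanced.extend(class_rows)
        balanced.extend(rng.choices(class_rows, k=target - len(class_rows)))
    return balanced


# Made-up predictions for two student groups, A and B.
records = [
    ("A", "pass", "pass"), ("A", "fail", "fail"),
    ("A", "pass", "pass"), ("A", "pass", "pass"),
    ("B", "pass", "fail"), ("B", "fail", "fail"),
]
acc = accuracy_by_group(records)

# Made-up training rows with three "pass" labels and one "fail" label.
rows = [("s1", "pass"), ("s2", "pass"), ("s3", "pass"), ("s4", "fail")]
balanced = oversample(rows, label_of=lambda r: r[1])
counts = Counter(r[1] for r in balanced)
print(acc, counts)
```

A gap in accuracy between groups is a signal to look more closely, not a verdict. Oversampling is only one simple way to rebalance, and it can overfit when the minority class is very small.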

Pei’s expertise isn’t going unnoticed. He has been actively involved with other colleges across the University of South Florida on their AI and technology initiatives.

He is collaborating with the Center for Innovation, Technology, and Aging (CITA) in the USF College of Engineering, an initiative focused on reimagining care for individuals living with Alzheimer’s disease and related dementias (AD/ADRD). The center also extends its mission to support other vulnerable older adults, including those with disabilities such as Parkinson’s disease and individuals recovering from strokes.

Exploring life-long learning, Pei is contributing to CITA’s intervention and care core, a project bringing experts together across numerous colleges at the University of South Florida.

Learn more at: usf.edu/engineering/cita/index.aspx/

Pei is also actively engaged with the Institute for Artificial Intelligence + X, a university-wide hub for AI research and education that emphasizes interdisciplinary collaboration. The institute brings together experts from various fields to explore innovative applications of AI across a broad spectrum of disciplines.

Learn more at: aix.eng.usf.edu

BEYOND EFFICIENCY: RETHINKING AI ETHICS IN QUALITATIVE RESEARCH

As artificial intelligence continues to weave itself into the fabric of academic life, the conversation in higher education is evolving beyond automation and efficiency. For Jennifer Wolgemuth, a qualitative methodologist and professor at the University of South Florida, the ethical implications of AI stretch far past convenience and deep into questions about relationships, reflexivity, and responsibility.

“AI is not just a faster way to code data or generate text,” Wolgemuth said. “It’s also a new kind of partner in inquiry - one that forces us to reconsider the very nature of interpretation, authorship, and ethical responsibility.”

THE ETHICS OF SPEED

The predominant justification for integrating AI into research, especially qualitative research, is speed. Transcription, coding, and even thematic analysis can now be done in minutes.

But Wolgemuth pushes back on the idea that faster is inherently better.

“If the argument for AI is just that it makes things quicker, we have to ask: Quicker for what?” she said. “Efficiency, when decoupled from ethics, starts to echo a free market drive to produce more, with less reflection.”

We must ask not just what AI helps us produce, but what it teaches us about how we think, relate, and know.

Wolgemuth, whose research spans interdisciplinary education and qualitative methodology, describes herself as a “polyglot” of research methods. Her curiosity about AI didn’t come from a technical impulse, but from a philosophical one: What happens to the ethical foundations of qualitative inquiry when machine learning becomes part of the research process?

Her answer: It depends on how we relate to the tool.

That concern is grounded in what she calls a potential trade-off: the erosion of reflexivity, creativity, and interpretive depth that is central to qualitative research.

VALIDITY, VOICE AND MACHINE BIAS

Wolgemuth is also raising flags about how AI interacts with issues of data ownership, transparency, and bias. For example, what happens when interview transcripts are uploaded into AI tools? Who owns that data, and what new ethical obligations do researchers have to participants when their words are processed by third-party algorithms?

“There’s an intertwining of ethics and validity in qualitative work,” she said. “If we outsource interpretation to AI, we may risk losing a level of interpretive sufficiency - the richness and nuance that makes qualitative research meaningful.”

Moreover, there’s a growing concern about algorithmic bias. When trained on narrow or unbalanced data, these tools can reinforce outdated assumptions, subtly influencing outcomes through the words and images they generate. As Wolgemuth notes, “If you ask AI to generate a picture of a scientist, you’re likely to get a man in a lab coat. That’s not just an oversight; it shapes how knowledge and expertise are visually defined.”

CREATIVE POSSIBILITY OR ETHICAL MIRAGE?

Despite her stance, Wolgemuth isn’t a technoskeptic. She sees value in exploring how AI might spark creative and even “creationary” thinking. One of the most intriguing questions for her is whether AI can be treated as a “dialogic partner” - a kind of critical friend in the research process.

“There are scholars who are asking AI questions like, ‘Tell me about your relationship with me,’ and treating the responses as points of reflection,” she said. “It’s not about believing the machine is sentient - it’s about examining what it reveals about us.”

That line of inquiry edges into what some scholars call “technicity” - the unpredictable, emergent potential that arises not from using a tool but from creatively engaging with it. Wolgemuth is interested in how AI might be used not just for technique, but for insight.

TOWARD ECOLOGICAL ETHICS

More recently, Wolgemuth has begun thinking about what she calls the “methodological footprint” of AI. It’s not just about carbon emissions, though she notes that AI requires significant computing infrastructure, which brings high energy consumption, significant carbon emissions, and increased electronic waste. It’s also about the accumulation of data, the proliferation of research for research’s sake, and what that says about the ethics of academic inquiry.

“If we’re producing more just because we can, then we’re reproducing a model of knowledge that’s extractive,” she said. “We need to ask not only what we gain, but what we lose and who might be harmed.”

A SHIFTING ROLE FOR EXPERTS

Looking ahead, Wolgemuth predicts that qualitative experts may increasingly be called on not to produce knowledge in the traditional sense, but to act as prompt engineers, designing the inputs that guide AI systems to do interpretive work.

“The role of expertise may shift from being about knowing how to analyze data to knowing how to structure a prompt that gets a machine to simulate analysis,” she said.

That shift, she explains, demands a reimagining of what it means to do ethical research in the digital age—and a reminder that not every advance is inherently progressive.

“There’s enormous potential here,” Wolgemuth said. “But if we don’t ground that potential in values—creativity, care, responsibility—then we risk turning our tools into replacements for reflection, rather than catalysts for it.”

AI IN TEACHING & LEARNING

GRADUATE CERTIFICATE

4 FULLY ONLINE COURSES | 12 CREDIT HOURS | 100% ONLINE FORMAT

The Graduate Certificate in Artificial Intelligence in Teaching and Learning gives educators the tools to integrate AI into everyday practice.

Through four online courses, you’ll learn to understand foundational principles, select and design AI tools, and implement them to enhance student learning and streamline administrative tasks.

KEY SKILLS & OUTCOMES

• Understand the ethical use and principles of AI in education

• Identify and evaluate AI tools for teaching and curriculum design

• Design custom AI applications for classroom and admin tasks

• Implement and assess AI-driven solutions to improve student outcomes

WHO SHOULD APPLY

This program is ideal for:

• K–12 Teachers looking to enhance classroom practices

• Instructional Professionals seeking AI-driven learning solutions

• Educational Technologists leading AI integration efforts

How You’ll Benefit:

✓ Gain practical AI integration skills

✓ Enhance teaching and assessment practices

✓ Advance your career as an educational innovator

For more information, visit: bit.ly/edu-ai-certification

CREDITS

University of South Florida College of Education

INTERIM DEAN OF THE COLLEGE OF EDUCATION Jenifer Jasinski Schneider

EXECUTIVE EDITOR Leah Burger

ART & DESIGN Joshua Swenson

WRITERS Tyler Ennis, Anne W. Anderson, Rebecca Darmoc

PHOTOGRAPHY Jordon Myers, Tyler Ennis, Leah Burger, and Molly Fitzsimmons

The University of South Florida (USF) College of Education is a regional, national, and global leader in preparing the next generation of educators, leaders, and innovators. With dynamic undergraduate and graduate programs offered across three campuses, the college equips students for impactful careers as classroom teachers, school counselors, instructional designers, exercise scientists, educational psychologists, higher education professionals, and researchers. Through innovative research, transformative teaching, and strong community partnerships, USF’s College of Education is committed to student success and educational advancement—driving change in schools, institutions, and