To achieve legitimacy and effectiveness, law enforcement demands public trust – a requirement which grows in importance when law enforcement agencies adopt AI systems. Public attitudes towards AI in policing remain cautious, and trust in law enforcement agencies can strongly influence whether the public accepts new technologies. Therefore, it is up to law enforcement agencies to act effectively and, above all, fairly when making decisions about whether, when and how to adopt and implement AI systems.

In particular, they need to exhibit transparency – that is, clear, open communication about which AI systems they are using, for which purposes and according to what rules and guidelines. In addition to improving trust in how law enforcement agencies use AI systems, transparency is essential to safeguarding human rights, scrutiny and system quality, as well as encouraging sustainable adoption. Yet transparency is commonly challenged by organizational cultures, operational confidentiality, vendor restrictions and limited resources. To achieve and ensure transparency, indispensable for maintaining public confidence and institutional legitimacy, law enforcement agencies using AI for public safety should:

Act responsibly and transparently when introducing AI systems, ensuring decisions are fair, unbiased, and protective of human rights.

Invest resources in transparency, with the recognition that doing so can strengthen legitimacy, innovation and public confidence.

Seek stakeholder perspectives through surveys, consultations and ongoing feedback to tailor transparency efforts to community needs.

Build trust over time, while acknowledging that trust is fragile and requires sustained, reliable and consistent behaviour across all interactions.

Start transparency efforts early, communicating before, during and after AI adoption, and offer proactive updates to prevent misinformation.

Communicate positive, truthful narratives about how AI systems support public safety while also openly addressing risks and disclosing safeguards.

Acknowledge and address mistakes through accountability, corrective action and reliance on oversight bodies.

Clarify what cannot be disclosed and why, respecting operational secrecy needs while still sharing purposes, rules and safeguards.

Embed transparency clauses in vendor contracts.

Respond quickly and accurately, prepare clear answers in advance, ensure informed representatives address public concerns and continuously refine transparency practices.

Because transparency is inherently a two‑way process, it requires strong, clear communication from law enforcement agencies about their use of AI systems, combined with effective public engagement that allows communities to contribute and shape how law enforcement uses AI systems.

First, by communicating openly, consistently and thoughtfully, law enforcement can strengthen trust, promote responsible AI innovation, and ensure that communities feel informed, respected and involved. Some practical recommendations for effective, clear communication about the use of AI systems include the following:

Clearly disclose uses of AI systems. Explain how they serve the public interest and provide accessible information about governance, oversight, risk mitigation, procurement and accountability mechanisms.

Tailor communication efforts to audiences. General audiences need simple, plain‑language explanations of purpose, benefits, risks, data use and rights. Expert audiences require more technical details, such as system performance metrics, data sources, safeguards and oversight processes.

Engage in early communication, well before AI deployment, and continue throughout the AI system’s life cycle. Long‑term, proactive communication strategies and consistent information sharing help prevent misinformation and build durable trust.

Use different communication channels, including websites, printed materials, signage, social media, broadcast media, community forums and visible system identification on sites, to reach different audiences and avoid excluding any group.

Use simple, clear language. Avoid overwhelming details, acknowledge concerns and provide layered information to help audiences learn at their own pace.

Communicate in an engaging, human manner, using visual tools, real‑world examples, interactive formats and clear options for follow‑up, to make AI systems more understandable and approachable.

Second, public engagement is an equally important, core pillar of trust. Sharing information outward alone is insufficient; law enforcement agencies must also gather information by listening to, responding to and meaningfully involving communities. Engagement must be sincere, inclusive and grounded in the understanding that agencies earn trust through dialogue. Practical recommendations for fostering effective public engagement include the following:

Ensure the engagement is meaningful, by actively listening to stakeholder feedback, explaining when and why certain suggestions cannot be implemented, remaining open to changing course and adopting a self‑critical stance that welcomes co‑creation with communities.

Involve the entire organization. All law enforcement personnel should have basic training in dialogue, bias awareness and community interaction.

Engage inclusively and broadly, across all levels of government and with all stakeholder groups, including technology providers, civil society organizations, academics, intermediaries, mediators and communities directly affected by AI systems.

Prioritize vulnerable and underrepresented communities. Incorporate their perspectives, maintain continuous dialogue, establish safe feedback mechanisms, and communicate clearly about safeguards against profiling, mass surveillance and systemic bias. Early engagement with distrustful or vulnerable groups is crucial to prevent further alienation and reinforce safeguards against discrimination.

Use diverse formats and modalities for engagement, from informal community meetings and coffee chats to structured forums, expert roundtables, closed‑door sessions and hands‑on demonstrations.

Gather feedback through inclusive channels. Input may be written, oral, digital or in community‑specific formats, and invitations to provide feedback should be widely disseminated across both physical and digital platforms.

Start small and build trust progressively. Small expert groups might create safe spaces for dialogue. Neutral settings can foster candid conversations and constructive negotiation about sensitive topics.

Provide education and real‑world exposure through public information sessions, training workshops, community forums and station visits that demonstrate how AI systems operate in practice and how they support public safety.

Strengthen community‑level structures and platforms to encourage recurring dialogue, accountability and representation.

Ground all engagement in relatability, respect and accessibility. Work with trusted local actors, address concerns empathetically, recognize cultural dynamics, and ensure that engagement practices reduce fears, tensions and exclusion.

Public trust is the bedrock for legitimate, effective law enforcement. Contemporary law enforcement, grounded in the principle of policing by consent and characterized by approaches such as community-oriented policing, relies on public approval and cooperation to prevent and respond to crime.1 Trust drives such cooperation, yet it is remarkably hard to gain and sustain.

To encourage it, transparency is essential, especially for law enforcement agencies seeking to introduce artificial intelligence (AI) to their practices. Although AI systems already support various law enforcement tasks, reflecting agencies’ efforts to enhance their efficiency and strengthen resource allocation,2 the pairing of AI with law enforcement remains uniquely challenging. Issues such as algorithmic bias and expanded surveillance capabilities pose inherent human rights risks and raise concern among the public.3 In such a setting, transparency – meaning the extent to which agencies disclose relevant information about the decision-making processes, procedures, performance and outcomes related to their AI innovation endeavours –4 can be a crucial enabler of appropriate governance, scrutiny and accountability.5, 6

The need for openness is especially critical today, considering evidence from a global survey (with around 34,000 respondents from 28 countries) that 70% of people worry that leaders and institutions intentionally mislead the public and 60% report moderate to high grievances towards institutions.7 Beyond such general distrust, public perceptions of AI are even more concerning. Most people indicate an unwillingness to trust AI systems, particularly in advanced economies, and trust levels appear to be declining over time (e.g., by 17% between 2022 and 2025).8 Yet AI perceptions also tend to depend on the context, and people’s understanding of benefit–risk trade-offs seems limited, resulting in varied, nuanced attitudes towards AI use across different public sector settings. The same underlying technology might be rejected in some cases but accepted in others, depending on the public service for which it is deployed.9,10

1 United Nations Department of Peacekeeping Operations Department of Field Support. (2018). Manual: Community-oriented policing in United Nations peace operations. Ref. 2018.04. Accessible at: https://police.un.org/sites/default/files/manual community oriented poliicing.pdf

2 UNICRI and INTERPOL. (revised February 2024). Toolkit for responsible AI innovation in law enforcement: Introduction to responsible AI innovation. Accessible at: https://ai lawenforcement.org/sites/default/files/2025 03/Intro_Resp_AI_Innovation_Mar25.pdf

3 UNICRI. (2024). Not just another tool: Public perceptions on police use of artificial intelligence. Accessible at: https://unicri.org/Publications/ Public Perceptions AI Law Enforcement

4 Albert Meijer. (2013). Understanding the complex dynamics of transparency. Public Administration Review, 73(3), 429–439. Accessible at: https://dspace.library.uu.nl/bitstream/handle/1874/407242/puar.12032.pdf Stephan G. Grimmelikhuijsen & Erik W. Welch. (2012). Developing and testing a theoretical framework for computer‐mediated transparency of local governments. Public Administration Review, 72(4), 562–571. Accessible at: https://dspace.library.uu.nl/bitstream/1874/252024/1/GrimmelikhuijsenDevelopingandTesting.pdf

5 Tom R. Tyler. (2006). Why people obey the law. Princeton University Press. Accessible at: https://www.researchgate.net/publication/220011500_ Why_do_People_Obey_the_Law

6 Tero Erkkilä. (2020, May 29). Transparency in public administration. In Oxford research encyclopedia of politics. Oxford University Press. Accessible at: https://oxfordre.com/politics/view/10.1093/acrefore/9780190228637.001.0001/acrefore 9780190228637 e 1404.

7 Richard Edelman. (2022). 2022 Edelman Trust Barometer: The cycle of distrust. Edelman. Accessible at: https://www.edelman.com/ trust/2022 trust barometer

8 The study adopted the International Monetary Fund’s (IMF) classification of advanced and emerging economies. The advanced economies surveyed were Australia, Austria, Belgium, Czech Republic, Denmark, Estonia, Finland, France, Germany, Greece, Ireland, Israel, Italy, Japan, Latvia, Lithuania, Netherlands, New Zealand, Norway, Portugal, Republic of Korea, Singapore, Slovakia, Slovenia, Spain, Sweden, Switzerland, United Kingdom. The emerging economies surveyed were Argentina, Brazil, Chile, China, Colombia, Costa Rica, Egypt, Hungary, India, Mexico, Nigeria, Poland, Romania, Saudi Arabia, South Africa, Türkiye, and the United Arab Emirates. Nicole Gillespie, Steve Lockey, Alexandria Macdade, Tabi Ward & Gerard Hassed. (2025). Trust, attitudes and use of artificial intelligence: A global study 2025. The University of Melbourne and KPMG. Accessible at: https://mbs.edu/ /media/PDF/Research/Trust_in_AI_Report.pdf?rev=0ee82285b2b0439bba524dbddc58214a

9 Matilda Dorotic, Emanuela Stagno & Luk Warlop. (2024). AI on the street: Context-dependent responses to artificial intelligence. International Journal of Research in Marketing, 41(1), 113–137. Accessible at: https://doi.org/10.1016/j.ijresmar.2023.08.010

10 Roshni Modhvadia, Tvesha Sippy, Octavia Field Reid & Helen Margetts. (2025). How do people feel about AI? Ada Lovelace Institute and The Alan Turing Institute. Accessible at: https://attitudestoai.uk/

When it comes to law enforcement agencies’ uses of AI, the way they engage with and communicate about the systems can powerfully shape public attitudes. A comprehensive survey of public perceptions of AI uses by law enforcement, conducted by the United Nations Interregional Crime and Justice Research Institute (UNICRI) and the International Criminal Police Organization (INTERPOL), revealed a correlation between trust in authorities and AI acceptance. In addition, acceptance increased if safeguards were in place, and human oversight and strong legal frameworks emerged as essential to public confidence. Yet the survey participants consistently cited a lack of information about how their local law enforcement agencies were using AI.11 This gap may stem, at least in part, from a lack of guidance regarding how to devise transparency requirements. Which information should law enforcement agencies convey to the public to assure them of the trustworthiness of AI systems and the entities that design, deploy or operate them?

Answering this question and bridging the public perception gap represent a governance imperative. Any innovation might be rejected if it is not presented with openness, respect for human rights and responsible practices. For example, controversial uses of AI can undermine its promise to enhance crime prevention and investigation efforts, erode the public’s confidence in law enforcement agencies and ultimately compromise the pursuit of justice. Successful endeavours require public cooperation. Therefore, deploying opaque or harmful AI systems risks fostering misunderstanding and damaging the very relationships required by effective policing. In an attempt to avoid such failures and facilitate success, this report offers comprehensive guidance to help law enforcement decision makers and related actors build and maintain public confidence, through transparency, during their responsible implementation of AI systems to enhance public safety.

This report is the result of joint research conducted by UNICRI, through its Centre for Artificial Intelligence and Robotics, and BI Norwegian Business School (BI), under the project AI4Citizens: Legal, Ethical, and Societal Considerations of Implementing AI Systems for Anonymized Crowd Monitoring to Improve Public Safety. It received financial support from the Norwegian Research Council. It builds on UNICRI’s extensive research into responsible AI innovation, including the Toolkit for Responsible AI Innovation in Law Enforcement (AI Toolkit) and the AI-POL: Advancing Innovation, Governance and Responsible AI in Law Enforcement.12 Launched in 2024 by UNICRI and INTERPOL, with funding from the European Union, the AI Toolkit guides law enforcement agencies worldwide on how to integrate AI systems responsibly into their work. AI-POL is a joint initiative of UNICRI and INTERPOL, funded by the European Union, which seeks to translate the guidance in the AI Toolkit into practical support for participating agencies.

11 UNICRI. (2024).

12 UNICRI and INTERPOL. (Revised February 2024). Toolkit for Responsible AI Innovation in Law Enforcement: README file. Accessible at: https://ai lawenforcement.org/sites/default/files/2025 03/README_File_Mar25.pdf

To inform decision makers in law enforcement and other public safety institutions about effective approaches to fostering public trust, through enhanced transparency surrounding responsible AI innovation, this report contains three focused chapters.

First, it outlines key drivers of public trust in law enforcement, including transparency, which is both a principle and an organizational attitude (> Chapter 1). Second, this report considers two dimensions of transparency that operate in tandem to enable law enforcement agencies to build and maintain public confidence:

Communication: providing clear, accessible information to the public about AI use (> Chapter 2).

Public engagement: establishing meaningful, two-way dialogue with communities, including vulnerable groups (> Chapter 3).

Each chapter includes a conceptual overview of the topics and a set of practical, action-oriented recommendations to support implementation.

The research relied on six main methods:

1. Desk-based research. A comprehensive literature review supported all stages of this research and ensured contextual grounding and analytical relevance.

2. Analysis of a hypothetical use case. UNICRI analysed an AI system designed for crowd monitoring and anomaly detection in public spaces. With a responsible AI innovation perspective and building on technical work performed for the broader project AI4Citizens: Responsible AI for Citizen Safety in Future Smart Cities,13 it undertook a comprehensive analysis of the human rights considerations associated with implementing privacy-preserving AI‑enhanced surveillance. The resulting Use Case Description and Analysis14 outlined critical considerations from the point of view of human rights and responsible AI innovation. The case study informed the research questions for the semi-structured interviews, as well as the final recommendations.

13 Project funded by the Research Council of Norway, in which the Norwegian University of Science and Technology (project owner), BI Norwegian Business School, the University of Agder, the University of Sussex and several partners from public institutions and businesses join forces to contribute to the global dialogue on the societal security challenges and potential solutions when implementing AI from multistakeholder perspectives. Accessible at: https://prosjektbanken.forskningsradet.no/en/project/FORISS/320783

14 UNICRI. (2026). AI4Citizens use case description and analysis. Accessible at: https://unicri.org/sites/default/files/2026 01/USE_Cases AI4Cit.pdf

3. Semi‑structured interviews. UNICRI conducted 48 interviews with 52 participants across five geographic regions: Europe, Americas, Africa, Asia and Oceania. The interviews targeted multidisciplinary experts, mainly with backgrounds in law enforcement, human rights and ethics, communications and public relations, sociology and political science. Unless otherwise indicated, the findings presented in this report draw primarily from these semi‑structured interviews. The expert interviews explored key considerations for implementing AI systems in law enforcement. In particular, they addressed how transparency, accountability, communication and engagement can foster trust in responsible uses of AI systems for public safety, including public trust in AI-based crowd monitoring. The distinct sets of questions developed for each area of expertise prompted the experts’ feedback on the use case scenario, based on their professional experience with AI systems, public safety or communication (> see annex).

4. Qualitative analysis. The analysis of information derived from the semi-structured interviews, performed jointly by UNICRI and BI Norwegian Business School, relied on a qualitative research approach. It leveraged several technical tools, including NVivo software, to help analyse the qualitative data. For privacy and data security, the software was applied only to anonymized interview data, and no external servers were used for storage. The results then were incorporated into the study recommendations.

5. Experimental studies. Members of BI Norwegian Business School conducted a dozen experimental studies as part of the overall research process. They examined in detail the various drivers of public acceptance of AI systems, trade-offs between perceived benefits and costs, the impacts of trust in government and various aspects of transparency. The findings of these experimental studies are being published separately, but their relevant insights are incorporated into the recommendations.

6. Consultations with stakeholders and experts. Finally, peer reviews of the draft recommendations took place between September 2025 and January 2026. Both key stakeholders and experts offered detailed feedback, which has been integrated into the final version of this report.

The report and its recommendations are intended primarily for decision makers in law enforcement agencies and other actors concerned with public safety that have implemented or plan to implement AI systems responsibly and that seek effective approaches on how to be transparent. For the sake of narrative flow, this report refers primarily to law enforcement agencies; however, this does not exclude from its scope other actors concerned with public safety that are not classified as such (such as local governments).

This report may also be relevant to policymakers, civil society organizations, academic researchers, human rights lawyers, ethics experts, technology providers and other stakeholders interested in issues of public trust in AI and law enforcement, transparency and accountability.

This report adopts the following definitions from the Toolkit for Responsible AI Innovation in Law Enforcement:

Law enforcement agencies are primarily the police and other state authorities that exercise police functions, such as investigating crimes, protecting individuals and property, and maintaining public order and safety.15

Artificial intelligence refers to the field of computer science dedicated to studying and developing technological systems that can imitate human abilities such as visual perception, decision making and problem solving.16

AI systems are computer systems that use AI algorithms to achieve specific goals, with a certain degree of autonomy.17

AI innovation refers to the wide range of activities organizations undertake when implementing AI systems in their work. This includes all stages of the AI life cycle, from planning to deployment, use and monitoring, and anything else it may involve.

Explainability is a principle for responsible AI innovation in law enforcement that makes it possible for people to understand an AI system from a technical standpoint, including how the AI system makes decisions and generates outputs (i.e., explainability in a narrow sense) and why a certain result has been generated (i.e., interpretability).18

This report forms part of the broader project AI4Citizens: Responsible AI for Citizen Safety in Future Smart Cities, funded by the Research Council of Norway – a multidisciplinary research effort involving the development and responsible deployment of AI systems for public safety. The project brings together the Norwegian University of Science and Technology (project owner), BI Norwegian Business School, the University of Agder, the University of Sussex and several public and private partners to advance global dialogue about societal security challenges and the implementation of AI from a multistakeholder perspective.19

This collaborative effort conducted research into privacy-preserving crowd monitoring AI systems. A specific scenario was developed to ground the research in a concrete operational context. A version of this scenario was shared with interview participants. In parallel, UNICRI conducted an assessment of the scenario’s ethical and legal dimensions, which was published separately.20 The Use Case Snapshots included in this report build on this scenario to illustrate the provided guidance. The AI system referenced in the snapshots was devised exclusively for scientific and research purposes. All deployment characteristics, operational workflows, and system details are fictional and crafted for the purposes of this report. They do not represent or imply any real‑world deployment or commercialization of the described AI system.

15 UNICRI and INTERPOL. (Revised February 2024). README file.

16 UNICRI and INTERPOL. (Revised February 2024). Introduction.

17 Ibid.

18 UNICRI and INTERPOL. (2024). Principles.

19 More information is available at https://prosjektbanken.forskningsradet.no/en/project/FORISS/320783

20 UNICRI. (2026).

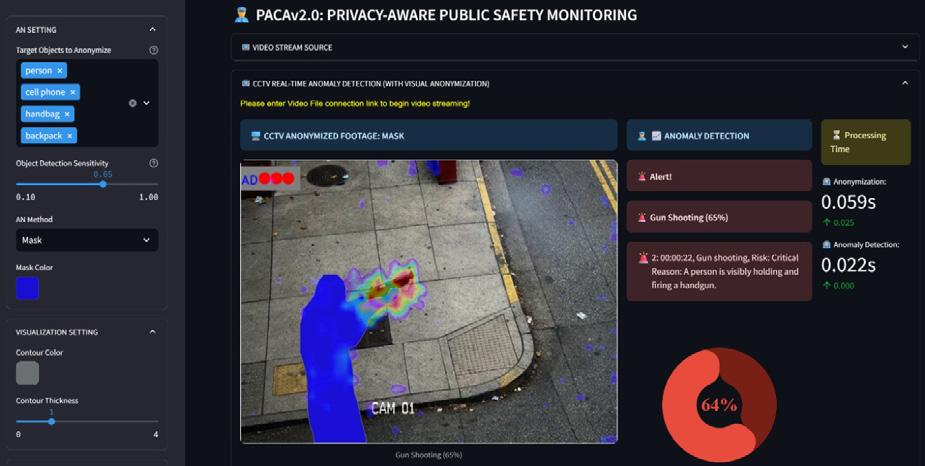

The hypothetical use case scenario analysed under AI4Citizens focuses on an AI system for crowd monitoring and anomaly detection in public spaces, designed with human rights compliance and responsible innovation principles in mind.

Deployment scenario:

A law enforcement agency identifies strategic high-traffic areas where crowd monitoring is essential for public safety. It applies the AI system to pre-existing closed-circuit television (CCTV) cameras which capture live video. To reduce privacy impacts, the AI system immediately anonymizes CCTV footage by masking individual figures (the anonymization algorithm). It subsequently identifies anomalies, defined as events that may indicate threats to public safety (the anomaly detection algorithm), and issues alerts to human operators in law enforcement monitoring centres.21 The algorithms operate as illustrated in the figure below:

AD Report Metadata:

Anonymized Footage

Anomaly Alert Flag and Score

Anomaly Type Categorization

Anomaly Context Description

Anomaly Heatmap Localization

Operators assess the alert and decide whether further action, such as dispatching a patrol, is required.

For instance, in a crowded train station, a sudden act of vandalism occurs. The anomaly detection algorithm detects this anomaly, triggering an alert that notifies the monitoring officer. The operator reviews the anonymized footage and makes an informed decision on whether to dispatch personnel to the scene.
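The alert flow described in this scenario can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the design of the system studied in AI4Citizens: the class names, field names, the 0 to 1 score range and the example values are all hypothetical, and only the six metadata categories and the human review step come from the scenario itself. The sketch shows the one property the scenario insists on, namely that the AI system produces a report and a human operator, not the system, records the decision.

```python
from dataclasses import dataclass
from enum import Enum


class OperatorDecision(Enum):
    """Outcomes a human operator can record (hypothetical labels)."""
    NO_ACTION = "no_action"
    DISPATCH_PATROL = "dispatch_patrol"


@dataclass(frozen=True)
class ADReport:
    """Anomaly detection (AD) report; fields mirror the metadata categories above."""
    anonymized_footage_ref: str  # reference to masked footage only
    alert_flag: bool
    anomaly_score: float         # assumption: normalized to the range [0, 1]
    anomaly_type: str
    context_description: str
    heatmap_ref: str

    def __post_init__(self) -> None:
        if not 0.0 <= self.anomaly_score <= 1.0:
            raise ValueError("anomaly_score is assumed to lie in [0, 1]")


@dataclass(frozen=True)
class ReviewedAlert:
    """An alert together with the human decision taken on it."""
    report: ADReport
    operator_id: str
    decision: OperatorDecision
    rationale: str


def review_alert(report: ADReport, operator_id: str,
                 decision: OperatorDecision, rationale: str) -> ReviewedAlert:
    """Record an operator's decision; the system never decides by itself."""
    if not report.alert_flag:
        raise ValueError("only flagged reports are sent for operator review")
    if not operator_id.strip() or not rationale.strip():
        raise ValueError("an identified operator must record a rationale")
    return ReviewedAlert(report, operator_id, decision, rationale)


# Hypothetical example based on the train station illustration.
report = ADReport(
    anonymized_footage_ref="masked-clip-001",
    alert_flag=True,
    anomaly_score=0.87,
    anomaly_type="vandalism",
    context_description="Sudden property damage in a crowded train station",
    heatmap_ref="heatmap-001",
)
reviewed = review_alert(report, "operator-17",
                        OperatorDecision.DISPATCH_PATROL,
                        "Masked footage shows ongoing property damage")
print(reviewed.decision.value)
```

Requiring an operator identifier and a written rationale for every decision is one possible way to make human oversight auditable; an agency could equally record this information in an existing case management process.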

Benefits and risks:

The implementation of the AI system in this scenario aims to provide several benefits for law enforcement agencies: by recognizing specific motions and actions, the system helps officers detect anomalies in public spaces more systematically, which should reduce human fatigue, strengthen incident monitoring capabilities and improve patrol deployment efficiency. It thus has the potential to enhance public safety by reacting to threats in a timely manner, while maximizing the efficient use of available human resources (> see Use Case snapshot 5).

To address foreseeable risks, such as privacy intrusions, over‑surveillance, opacity in automated decision‑making, reinforced biases or overreliance on automated outputs, the hypothetical scenario incorporates built‑in organizational safeguards, including privacy‑protecting body masking, mandatory human oversight and clear restrictions on automated decision‑making (> see Use Case snapshot 2).

Although the research was grounded in the scenario detailed in > Use Case snapshot 1, the recommendations in this report extend beyond this specific application. They refer to the wider landscape of AI uses in law enforcement, which might include, among others, other forms of image processing, text and speech analysis, risk assessments and predictive analytics, as well as more recent content generation uses that rely on large language models (LLMs). When responsibly developed and deployed, such technologies can enhance operational efficiency and support more informed and timely decision‑making, while reducing physical and psychological strain on human personnel. However, the integration of AI into law enforcement also raises significant concerns. As noted, AI systems may reproduce or amplify biases present in training data, leave decision‑making processes opaque and generate far‑reaching and less visible impacts. In law enforcement contexts, these risks may translate into exacerbated discrimination, intrusive or disproportionate surveillance, privacy and personal data breaches and chilling effects on freedoms of assembly and expression, among other concerns.

Noting both these opportunities and risks, the importance of transparency, accountability and trust is clear, for any and all uses of AI in law enforcement. The recommendations presented in this report aim to support such transparency around responsible AI innovation, regardless of the specific technology or operational context.

Trust determines the legitimacy of law enforcement agencies. Without it, their ability to perform their mission is compromised. Broadly, trust refers to the willingness of one party to be vulnerable to the actions of another party,22 given the expectation that the latter party is likely to act in a reasonable, reliable and appropriate manner.23 Such a broad definition can be insightful, but it also lacks precision and specific application to different contexts or social groups.24 Therefore, this section introduces the unique concept of public trust in law enforcement and the factors that drive it. It also considers what happens to public trust when law enforcement agencies introduce AI systems.

Trust in law enforcement refers to expectations held by individuals, communities and/or the wider public about how agencies will behave, in specific interactions or more generally (i.e., how they treat others or certain segments of the population). People trust law enforcement agencies when they regard them as competent, just, genuinely caring about the people they serve and consistent in doing the right thing. Thus, trust is linked to perceptions of:

Effectiveness. People trust agencies more if they believe the agencies perform their job well – catching criminals, responding quickly when called, solving local problems and so on.25 The expert interviews reinforced this view and also added that perceived ineffectiveness, particularly when police actions do not address root causes of crime, drives distrust.

Fairness. Perceptions of effectiveness alone cannot guarantee trust though, because it also depends on perceptions of how law enforcement agencies interact with people and make decisions (or procedural justice; > see Learn More Box 1), not just the outcomes of those decisions.26 The experts identified several drivers of distrust rooted in people’s perceptions of a lack of fairness, as when the public regards the police as an arm of the state rather than a protector of people and believes personnel are biased towards protecting the interests of privileged groups.

Procedural justice requires meaningful and consistent demonstrations; merely superficial or insincere observance can backfire. As a study of police-community relations in Zimbabwe illustrated, citizens’ trust in and willingness to cooperate with law enforcement agencies related directly to the fairness and consistency with which law enforcement officers exercised discretion in applying rules. On the other hand, those discretionary powers also appeared vulnerable to an organizational culture which framed the police as an extension of state power, as well as to political influence and structural constraints (e.g., resource limitations or weak oversight). These pressures seemingly undermined the consistency and impartiality of officers’ behaviours, thereby eroding public trust in the police as an instrument of procedural justice.27

22 Roger C. Mayer, James H. Davis & F. David Schoorman. (1995). An integrative model of organizational trust. The Academy of Management Review, 20(3), 709–734. Accessible at: https://doi.org/10.2307/258792

23 Diego Gambetta (1988). Can we trust trust? In D. Gambetta (Ed.), Trust: Making and breaking cooperative relations (pp. 213–237). Blackwell. Accessible at: https://www.researchgate.net/publication/255682316_Can_We_Trust_Trust_Diego_Gambetta

24 Mayer, Davis & Schoorman (1995). Ibid.

25 Jonathan Jackson & Jacinta M. Gau. (2015). Carving up concepts? Differentiating between trust and legitimacy in public attitudes towards legal authority. In Interdisciplinary perspectives on trust: Towards theoretical and methodological integration (pp. 49–69). Springer International Publishing. Accessible at: https://dx.doi.org/10.2139/ssrn.2567931 Kristina Murphy, Lorraine Mazerolle & Sarah Bennett. (2014). Promoting trust in police: Findings from a randomised experimental field trial of procedural justice policing. Policing and Society, 24(4), 405–424. Accessible at: https://doi.org/10.1080/10439463.2013.862246. Julia Yesberg, Ian Brunton-Smith & Ben Bradford. (2023). Police visibility, trust in police fairness and collective efficacy: A multilevel Structural Equation Model. European Journal of Criminology, 20(2), 712–737. Accessible at: https://journals.sagepub.com/doi/10.1177/14773708211035306

26 Tyler. (2006).

Psychologists have studied institutional trust from two main angles. Organizational psychologists ask about which conditions need to exist for people to trust an institution at all. Social psychologists ask about what authorities need to do to earn that trust. Together, these perspectives suggest a way to understand public trust in law enforcement, in terms of what people need to believe about an agency and how agencies can demonstrate it.

Trust in an institution draws on three pillars, as described by a framework known as the Ability, Benevolence and Integrity (ABI) model.28 Applied to law enforcement, these pillars can be defined as follows:

1. Ability: The agency has the specialized skills, knowledge and psychological readiness to perform its mandate effectively.

2. Benevolence: The agency’s primary orientation is the welfare of the people it serves; its actions are motivated by a sincere desire to protect the community rather than a desire to assert dominance or meet bureaucratic quotas.

3. Integrity: The agency consistently lives up to its stated values and adheres to a stable, predictable moral and legal compass, regardless of external pressures or internal convenience.

Closely related to benevolence and integrity is the social psychology concept of procedural justice, which recognizes that people consider the fairness of the process to judge authorities, rather than just the outcomes of authorities’ decisions. By considering why people comply with legal authorities, researchers have identified four pillars of procedural justice: voice, neutrality, respect and trustworthiness.29

27 Joshua Foma, Riska Sri Handayani & Husnul Fitri. (2025). Understanding police discretion in Zimbabwe: Institutional drivers and consequences for community relations in Harare Metropolitan Province. Journal of Social Research, 4(12), 2001–2015. Accessible at: https://doi.org/10.55324/josr.v4i12.2885. Ishmael Mugari & Emeka E. Obioha. (2018). Patterns, costs, and implications of police abuse to citizens’ rights in the Republic of Zimbabwe. Social Sciences, 7(7), 116. Accessible at: https://doi.org/10.3390/socsci7070116

28 Mayer, Davis & Schoorman. (1995).

29 Tom R. Tyler. (2003). Procedural justice, legitimacy, and the effective rule of law. Crime and Justice, 30, 283–357. Accessible at: https://www-jstor-org.leidenuniv.idm.oclc.org/stable/1147701?seq=68. Steven Blader & Tom R. Tyler. (2003). A four-component model of procedural justice: Defining the meaning of a “fair” process. Personality and Social Psychology Bulletin, 29(6), 747–758. Accessible at: https://doi.org/10.1177/0146167203029006007

Applied to the law enforcement context, this means that agencies can engage in procedural justice by:

• Giving people a voice and allowing individuals and communities to express their perspectives, so as to take them into account in decision making.30

• Acting neutrally by making decisions consistently across groups, based on facts rather than personal opinions or institutional biases.31

• Treating people with dignity and respect.32

• Showing trustworthiness, genuine care and a commitment to lawful and appropriate uses of authority, in the interest of the community, such as respecting the rule of law and human rights, exercising powers proportionately and refraining from abuses.33

The particular importance of trust for law enforcement results from the power imbalances inherent in policing. To ensure public welfare and safety, law enforcement agencies are allowed to (proportionately) use force or impose serious consequences for freedom of movement and expression, such as detaining people. Ordinary people cannot opt out of this relationship in a society based on the rule of law. Yet modern law enforcement also relies on the principle of “policing by consent”, such that people voluntarily submit to police powers because they believe (trust) these powers are appropriate, lawful and justified, and that agencies will use them fairly to maintain a sense of common public safety.34 Trust is the very foundation of the legitimacy of law enforcement agencies’ authority.35

In practical terms, effective law enforcement requires public cooperation, and trust is a key driver of cooperation. Greater public trust correlates with greater deference in face-to-face encounters, voluntary collaboration with authorities and compliance with the law. Even a single, brief, personal interaction, such as a stop for a random alcohol breath test, can improve trust, as long as officers use fair and respectful procedures. If people have repeated, positive interactions with members of law enforcement agencies over time, it increases their willingness to cooperate and their sense of obligation to comply even further.36

Such outcomes are not guaranteed though. As one expert put it, trust is difficult to build and easy to break. As this point makes clear, public trust in law enforcement agencies is both complex and dynamic. It evolves over time, and new expectations continually emerge. What might have been acceptable decades ago may no longer suffice today. Even within the same time frame, trust is subjective, based

30 Murphy, Mazerolle & Bennett. (2014).

31 Ibid.

32 Jackson & Gau. (2015). Murphy, Mazerolle & Bennett. (2014). Yesberg, Brunton-Smith & Bradford. (2021).

33 Jackson & Gau. (2015). Murphy, Mazerolle & Bennett. (2014). Yesberg, Brunton-Smith & Bradford. (2021).

34 The concept of “policing by consent” derives from the ideas prominently articulated in the Anglo policing tradition by Sir Robert Peel. See: Sarah Tudor. (2023). Police standards and culture: Restoring public trust. Accessible at: https://lordslibrary.parliament.uk/police-standards-and-culture-restoring-public-trust/#heading-1

35 Jackson & Gau. (2015).

36 Murphy, Mazerolle & Bennett. (2014). Yuning Wu & Ivan Y. Sun. (2009). Citizen trust in police: The case of China. Police Quarterly, 12(2), 170–191. Accessible at: https://www.researchgate.net/publication/247748694_Citizen_Trust_in_Police_The_Case_of_China

on public perceptions and expectations. Individual perceptions result from past experiences37 and “gut feelings.”38 Even if a person has not personally experienced police violence, one interviewed expert acknowledged that knowing someone else who has suffered such treatment can reduce individual trust in the police. Immigrants’ negative experiences with the police in their home country continue to influence their perceptions of the police in their country of residence.39 Societal experiences also matter. The wider national context, both current and historical, shapes judgements too. A documented history of human rights violations significantly influences how a community assesses the trustworthiness of law enforcement.

As described in the Introduction, people tend to be cautious and sceptical about AI. Law enforcement agencies that plan to introduce AI systems thus need to consider the public’s general attitudes towards AI. But they should also recognize that when people develop performance expectations, they base them on their beliefs about how effective the AI system will be in performing its assigned tasks.40 Research shows that, thus far, people simply do not believe that AI is effective in contexts that involve moral judgements.41 When those judgements also carry meaningful risks, as is true in law enforcement contexts, the public is likely to reject AI systems42 and prefer human decision makers, whom they see as more trustworthy for tasks that demand judgement and empathy. A 2021 study in the United Kingdom revealed that people trusted police officers’ decisions more than those made by AI algorithms, especially if the decisions had a community-wide impact.43

Thus, to build public trust in their uses of AI systems, law enforcement agencies must realize that multiple, complex factors interact to shape public perceptions of the technology:

The context. Trust in technology varies with the context.44 The same AI system can prompt trust or distrust, depending on its perceived purpose. Facial recognition software might seem fine to unlock a mobile phone, potentially acceptable to monitor traffic and totally unacceptable to surveil people on the street. People tend to be more trusting and accepting of privacy-intrusive technologies deployed by airport security for border control than of uses by local police for general monitoring of public

37 Ibid.

38 Jackson & Gau. (2015).

39 Wu & Sun. (2009).

40 Berkeley J. Dietvorst. (2025). Understanding when laypeople adopt predictive algorithms. Nature Human Behaviour, 9(5), 851–853. Accessible at: https://dx.doi.org/10.2139/ssrn.5280790

41 Yochanan E. Bigman & Kurt Gray. (2018). People are averse to machines making moral decisions. Cognition, 181, 21–34. Accessible at: https://cdr.lib.unc.edu/downloads/4q77g7072

42 UNICRI and INTERPOL. (2024).

43 Human decision making was especially valued in a fictional scenario where a crime hotspot was identified and a police officer needed to decide whether or not to dispatch officers to the hotspot. Interestingly, the same did not apply in a scenario where a single police officer observed suspects and needed to decide whether to conduct a stop and search. See Zoë Hobson, Julia A. Yesberg, Ben Bradford & Jonathan Jackson. (2021). Artificial fairness? Trust in algorithmic police decision‑making. Journal of Experimental Criminology, 19(1), 165–189. Accessible at: https://link.springer.com/article/10.1007/s11292-021-09484-9

44 Mayer, Davis & Schoorman. (1995). Dorotic, Stagno & Warlop. (2024).

spaces.45 A comprehensive survey of public perceptions of AI use by law enforcement, conducted by UNICRI and INTERPOL between November 2022 and June 2023, indicated that people appear more comfortable with retrospective uses, such as analysing past events, than with predictive or real-time decision making.46

The actor that deploys the technology. Europeans indicate greater acceptance of high-risk AI applications (e.g., surveillance) implemented by public rather than corporate entities.47

Group norms and demographics. Different groups interpret the same technologies in conflicting ways. One group might regard cameras as tools to support public safety, but another may see them as tools of oppression that disproportionately target minority populations.

Historical and cultural context. Studies across the United States of America, United Kingdom and Australia showcase the influences of existing, historical factors on acceptance of surveillance technologies (e.g., facial recognition, body-worn cameras [BWCs]) and trust. Overall, United States residents are more accepting of surveillance technology but less trusting of the police than residents of the United Kingdom and Australia.48 They express scepticism about whether BWCs can improve police–citizen relationships, reflecting existing tensions between police and minority communities, and cite law enforcement agencies’ discretionary uses of BWCs as a key obstacle, despite evidence that BWCs can document interactions accurately.49, 50

Institutional trust in law enforcement agencies thus strongly influences support for their adoption of AI systems.51,52 When law enforcement agencies already enjoy trust, they can readily justify their AI implementation efforts and counter the scepticism normally evoked by this technology. The previously cited UNICRI–INTERPOL study identified a correlation between people’s belief that law enforcement agencies respect the law and individual rights and their support for AI adoption. Acceptance also increased when safeguards were in place; the study suggested that transparency, human oversight and strong legal frameworks were essential for establishing public confidence.53 Another study of public attitudes towards police uses of face recognition technology reached a similar conclusion: trust in law enforcement related positively to acceptance of the AI‑driven technology.54

45 Ada Lovelace Institute and The Alan Turing Institute. (2023). How do people feel about AI? A nationally representative survey of public attitudes to artificial intelligence in Britain. Accessible at: https://www.turing.ac.uk/sites/default/files/2023-06/how_do_people_feel_about_ai_-_ada_turing.pdf

46 UNICRI. (2024).

47 Dorotic, Stagno & Warlop. (2024).

48 Kay L. Ritchie, Charlotte Cartledge, Bethany Growns, An Yan, Yuqing Wang, Kun Guo, Robin S. S. Kramer, Gary Edmond, Kristy A. Martire, Mehera San Roque & David White. (2021). Public attitudes towards the use of automatic facial recognition technology in criminal justice systems around the world. PloS One, 16(10), e0258241. Accessible at: https://journals.plos.org/plosone/article?id=10.1371/journal.pone.0258241

49 William H. Sousa, Terance D. Miethe & Mari Sakiyama. (2018). Inconsistencies in public opinion of body-worn cameras on police: Transparency, trust, and improved police–citizen relationships. Policing: A Journal of Policy and Practice, 12(1), 100–108. Accessible at: https://doi.org/10.1093/police/pax015

50 Cynthia Lum, Christopher S. Koper, David B. Wilson, Megan Stoltz, Michael Goodier, Elizabeth Eggins, Angela Higginson & Lorraine Mazerolle. (2020). Body‑worn cameras’ effects on police officers and citizen behavior: A systematic review. Campbell Systematic Reviews, 16(3), e1112. Accessible at: https://doi.org/10.1002/cl2.1043

51 Anna Sagana, Mengying Zhang & Melanie Sauerland. (2026). Public attitudes towards police use of AI-driven face recognition technology. Computers in Human Behavior, 174, 108821. Accessible at: https://www.sciencedirect.com/science/article/pii/S0747563225002687

52 Ritchie et al. (2021).

53 UNICRI. (2024).

54 Sagana, Zhang & Sauerland. (2025).

Integrity, achieved when law enforcement agencies demonstrate their fairness and ability to perform tasks, thus appears to drive public trust in the agencies’ AI implementation.55 Expert interviews echoed these findings: public perceptions of government and law enforcement agencies influence people’s views of the use of AI in policing. These insights combine to establish an important point: trust in law enforcement agencies (or a lack thereof) can spill over into attitudes towards AI adoption by law enforcement agencies, reinforcing the critical importance of cultivating and preserving trust.

When AI contributes to decision making, it could undermine perceptions of procedural justice, because it implies that human actors are less involved in the decision. In this scenario, exposing people to repeated examples of effective, well-justified AI decision making might offer another route to build trust and increase acceptance.56 As noted, trust develops on the basis of real experiences and perceptions of fairness and effectiveness, so positive experiences with AI might be an effective way to foster trust. According to one study, United Kingdom participants exposed to successful algorithmic decision making expressed more support for law enforcement agencies’ uses of the technology.57

Ultimately, law enforcement agencies should work to prompt broad public perceptions of themselves as credible and reliable, rather than focusing just on how to secure trust for a specific AI system. When detailing the specific AI systems they use, though, they also need to implement measures and mechanisms that can convincingly prevent or mitigate the risks to individuals and communities, as exemplified in > Use Case snapshot 2.

To address the potential risks and harms related to the use of AI in law enforcement – such as, but not limited to, privacy intrusions, algorithmic bias, over‑surveillance, and opaque automated decision‑making – the AI4Citizens use case included an analysis of which ethical and human rights considerations need to be accounted for when implementing the AI system. They included both technical and organizational safeguards, such as those detailed below.

The AI system was designed to comply with the applicable legal requirements, such as relevant privacy and data protection laws. The implementing law enforcement agency should ensure safeguards such as the following:

• All footage is anonymized upon capture, to protect the right to privacy and personal data of depicted people. If raw footage must be retained (e.g., due to a court order), strict compliance with applicable laws must govern its storage duration and access.

55 Theo Araujo, Natali Helberger, Sanne Kruikemeier & Claes H. de Vreese. (2020). In AI we trust? Perceptions about automated decision making by artificial intelligence. AI & Society, 35(3), 611–623. Accessible at: https://link.springer.com/article/10.1007/s00146-019-00931-w. Robin G. Li. (2025). Your faces matter: Facial recognition technology (FRT) in AI-enabled public services and provision, doctoral dissertation, Arizona State University. Accessible at: https://ascelibrary.org/doi/10.1061/JLADAH.LADR-1435. Jackson & Gau. (2015).

56 Hobson, Yesberg et al. (2023).

57 Ibid.

• Training data, performance metrics and potential impacts on different demographics are documented and communicated to law enforcement agencies.

• Regular reviews of the system’s performance check for system integrity and involve tests among different demographic contexts to ensure fairness.

• Law enforcement personnel who are the end users of the AI system are well trained in the system’s capabilities and limitations, as well as in ethical and legal considerations, including the recognition that anomalies detected by the AI system are context-specific and not conclusive evidence of criminal behaviour.

• End users critically assess AI outputs, avoid overreliance on automation and work to mitigate the risk of bias. They see the AI system as a support tool and remain in control of and responsible for decision making.

Transparency, as used in this report, refers to the openness of law enforcement agencies regarding their AI innovation efforts – i.e., which AI systems they use or intend to use, for what purposes and with which processes. In this sense, transparency involves a reciprocal interaction between law enforcement and relevant stakeholders, not a unidirectional process.

In the expert interviews, transparency consistently emerged as a key enabler of public trust. Transparency about AI systems is particularly important, given the significant potential impacts of law enforcement agencies’ decisions on individuals, communities and society at large. Yet law enforcement agencies appear to struggle to establish transparency, particularly in their AI implementation efforts. Several studies corroborate this observation, highlighting persistent transparency deficits, limited public oversight, an absence of impact assessments and overreliance on opaque, discreet procurement processes, seemingly to avoid public scrutiny.58

These issues stem from a range of underlying challenges, including an incomplete understanding of what transparency is and why it is essential. To address such questions, this section details the meaning of transparency in law enforcement uses of AI, while also acknowledging the practical constraints on agencies attempting to provide meaningful transparency surrounding their AI innovation.

58 Ben Bradford, Julia A. Yesberg, Jonathan Jackson & Paul Dawson. (2020). Live facial recognition: Trust and legitimacy as predictors of public support for police use of new technology. British Journal of Criminology, 60, 1502–22. Accessible at: https://pmc.ncbi.nlm.nih.gov/articles/PMC7454338/. H. Bloch-Wehba. (2021). Visible policing: Technology, transparency, and democratic control. California Law Review, 109(3), 917–978. Accessible at: https://doi.org/10.31228/osf.io/4pcf3

Transparency relates to the openness of an entity by revealing information about its decision making processes, procedures, performance and outcomes.59 Even if it only sometimes appears enshrined in law, transparency represents an ethical imperative for initiatives with a public impact, such as introducing AI systems. The concept can however be ambiguous, without any uniformly accepted definition across disciplines, jurisdictions or stakeholders. For governance actors, transparency is tied to accountability; for civil society, it implies openness and communication; for technical communities, transparency results from explainable models and methods. Even the expert interviews reflect this ambiguity. Most of the experts defined transparency as communication and openness and emphasized visibility and honest descriptions of uses of AI systems. But others associated the concept with what can also be defined as “explainability”, meaning the ability to understand how an AI system works and why it produces certain outputs. Such technical views of transparency increasingly permeate regulatory debates. As a result, determining the scope of transparency and translating it into operational practice often results in contested and ambiguous discussions.

By illustrating some of the various definitions of transparency, drawing on both expert interviews and empirical studies,60 this section suggests ways to leverage transparency in practice to strengthen public trust in law enforcement agencies’ uses of AI. Starting broadly and then addressing more specific applications, it explores the following senses of transparency:

An institution's openness to external scrutiny; making internal procedures visible and accessible.

Obligation of public administration entities to be open and share information relevant to the public and support individuals' right to know.

Obligation of law enforcement agencies to be open and disclose information, while addressing particular challenges, such as operational secrecy.

Openness of law enforcement agencies in reporting efforts to innovate with AI, including which AI systems they use or intend to use, for what purposes and with which processes.

59 Meijer. (2013). Grimmelikhuijsen & Welch. (2012).


60 Dorotic, Stagno & Warlop. (2024). Dorotic et al. (2025) experimentally test how moral judgements shape the perceived permissibility of high‑risk AI systems and the effectiveness of privacy‑protection solutions. See Matilda Dorotic, Tuan Viet Do & Yochanan E. Bigman. (2025). Impact of moral judgments on permissibility of high-risk AI and effectiveness of privacy-protection solutions. BI Norwegian Business School working paper. Accessible at: https://hdl.handle.net/11250/5333378

Institutional transparency in public administration

As an overriding concept, institutional transparency refers to any institution’s openness to external scrutiny.61 Such openness results from making its internal procedures visible and accessible.62 In public administration, transparency is a cornerstone for good governance and public administration accountability.63 Openness allows individuals and civil society organizations to uncover wrongful or problematic conduct by public authorities, question their decisions and hold them responsible for their actions. According to the OECD Principles of Public Administration, transparency guarantees the right to access public information; it also imposes an obligation on public authorities to make it easy for individuals to obtain that information. Access may be denied only for information that has been classified, based on compelling reasons clearly specified by law. Transparency also requires proactive disclosures of information “which is relevant, complete, accurate and up to date, accessible, understandable, machine readable, in open format and reusable.”64

According to academic literature and the expert interviews, transparency cannot be limited to one-way disclosures by public entities that offer information they are willing to share. Instead, it demands complex, dynamic interactions of information disclosure, interpretations and institutional accountability. During such interactive processes, individuals must be able and willing to understand and act on information, and institutions must remain responsive and prepared to engage.65 Understanding these interactive considerations is essential. Transparency can enhance trust and acceptance, but it might also heighten security vulnerabilities or amplify people’s concerns and uncertainty (> see Chapter 1 – Section 2.3).

Institutional transparency in law enforcement

Law enforcement agencies are public entities, subject to the principle of transparency for public administration in general. In addition, transparency surrounding their activities can raise unique considerations. The dual roles of law enforcement agencies often create tension between transparency and confidentiality. On the one hand, they act as regular public bodies, responsible for maintaining public order through preventive functions such as street monitoring. In this capacity, there is a strong public interest in transparency, especially in how the publicly funded entity exercises its powers. On the other hand, they operate within the judicial sphere and perform criminal investigations. In this role, they must conceal information and/or policing practices whose release could jeopardize ongoing operations.

Legal frameworks generally acknowledge that, given the nature of their work, law enforcement agencies may need to maintain a certain level of secrecy, to avoid revealing any information that could

61 Christopher Hood & David Heald (Eds.). (2006). Transparency: The key to better governance? Liverpool University Press. Accessible at: https://www.davidheald.com/coverage/PA%20Raab%202008.pdf

62 Cornelia Moser. (2001). How open is “open as possible”? Three different approaches to transparency and openness in regulating access to EU documents. IHS Political Science Series, No. 80. Accessible at: https://irihs.ihs.ac.at/id/eprint/1389/1/pw_80.pdf. Meijer. (2013).

63 Defined as all documented information held by the public administration (individuals or legal persons who exercise public authority). See Organisation for Economic Co-operation and Development (OECD). (2023). The Principles of Public Administration, OECD, Paris. Accessible at: https://www.sigmaweb.org/publications/Principles-of-Public-Administration-2023.pdf; Principle 15. Hood & Heald. (Eds.). (2006).

64 OECD. (2023).

65 Meijer. (2013).

compromise public safety.66 Yet such necessary operational secrecy cannot serve as a justification for the absence of two‑way communication to aid their accountability. Transparent engagement still can take place, without revealing sensitive operational details, by focusing on purposes, principles and safeguards rather than information that must remain confidential.

As AI becomes increasingly complex and ubiquitous, the relevance of transparency has grown correspondingly. For AI systems, which operate through software that can run inconspicuously in the background, it is entirely possible – and often the case – for them to be embedded in decision making workflows without the knowledge of users or entities subject to the decisions.67 This inherent invisibility elevates transparency to a core principle in AI ethics, which calls for openness and disclosures of AI systems’ development and use that enable meaningful oversight and accountability.68

The UNICRI–INTERPOL Toolkit for Responsible AI Innovation in Law Enforcement defines transparency as promoting good communication practices across an AI system’s life cycle; sharing clear, accessible and complete information with all stakeholders; and ensuring that individuals who interact with AI systems have sufficient knowledge and understanding of how those systems function so that they can safeguard their autonomy.69 In addition, expanding beyond ethical principles, transparency increasingly appears in binding laws, as > Learn More Box 2 explains.

The European Union’s (EU) AI Act explicitly defines transparency as AI systems’ development and use “in a way that allows appropriate traceability and explainability, while making humans aware that they communicate or interact with an AI system, as well as duly informing deployers of the capabilities and limitations of that AI system and affected persons about their rights.”70 To operationalize this principle, the AI Act establishes transparency obligations for providers and deployers of certain AI systems.71 It requires developers of high-risk AI systems, including many

66 For example, Council of Europe. (2018). Convention 108+: Convention for the protection of individuals with regard to the processing of personal data. Explanatory Report, para. 92. Accessible at: https://rm.coe.int/convention-108-convention-for-the-protection-of-individuals-with-regar/16808b36f1. Explanatory Report to the Council of Europe Framework Convention on Artificial Intelligence and Human Rights, Democracy and the Rule of Law. (2024), para. 104. Accessible at: https://rm.coe.int/1680afae67

67 UNICRI and INTERPOL. (2024). Introduction.

68 United Nations Educational, Scientific and Cultural Organization (UNESCO). (2022). Recommendation on the Ethics of Artificial Intelligence. Accessible at: https://unesdoc.unesco.org/ark:/48223/pf0000381137. Jessica Fjeld, Nele Achten, Hannah Hilligoss, Adam Christopher Nagy & Madhulika Srikumar. (2020). Principled artificial intelligence: Mapping consensus in ethical and rights-based approaches to principles for AI. Berkman Klein Center Research Publication No. 2020-1. Accessible at: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3518482

69 UNICRI and INTERPOL (2024). Principles.

70 Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence and amending Regulations (EC) No 300/2008, (EU) No 167/2013, (EU) No 168/2013, (EU) 2018/858, (EU) 2018/1139 and (EU) 2019/2144 and Directives 2014/90/EU, (EU) 2016/797 and (EU) 2020/1828 (Artificial Intelligence Act) (Text with EEA relevance). (EU AI Act). Recital 27.

71 These obligations are not yet in effect as of the time of this publication.

law enforcement use cases, to design and develop them to provide sufficient operational transparency and enable deployers to interpret the system’s output and use it appropriately. In turn, high-risk AI systems must be accompanied by clear, relevant, comprehensible instructions for use that enable deployers to understand the system’s capabilities, limitations and proper applications.72 A notable feature of the AI Act is its creation of an EU database, managed by the European Commission, that houses information about high-risk AI systems registered by providers and public authorities that deploy them. The database is intended to be publicly accessible, though exceptions apply to AI systems for law enforcement, which instead must be recorded in a secure, non-public section that is accessible only to the Commission and national authorities.73

Beyond the European Union, other jurisdictions have also sought to embed transparency into law. For instance, transparency is central to South Korea’s AI Basic Act, which imposes transparency obligations, requires clear labelling of AI-generated content, and obliges AI operators and developers to comprehensively document measures they have taken to ensure AI safety and reliability.74 Brazil’s proposed AI Bill also identifies transparency as a key principle and imposes corresponding obligations on developers and users, with particular emphasis on AI systems used by public entities. This draft bill also includes individual rights to transparency, such as the right to information when interacting with AI systems.75 Transparency already has been codified in Brazil, in a federal ordinance governing the use of AI in criminal investigations, which addresses appropriate processes for federal security forces to procure AI systems.76

Transparency regarding technical aspects versus explainability and interpretability

Transparency is often intertwined with terms such as “explainability” and “interpretability”,77 but it is important to disentangle them. Both transparency and explainability are instrumental principles for safeguarding human autonomy, or the capacity and right of every individual – law enforcement personnel, victims, suspects, criminals, citizens – to exercise self-governance. Such autonomy requires that those individuals have sufficient knowledge and understanding of the AI systems that affect them.78

Because this report focuses on transparency as a desired attribute of law enforcement agencies, it approaches transparency in AI implementation as part of the broader concept of institutional transparency, so it entails the relational and communicative practices that any institution uses when adopting and deploying AI systems. Such a perspective certainly requires openness about technical

72 EU AI Act, Article 13.

73 EU AI Act, Articles 49 (4) and 71.

74 Sakshi Shivhare & Kwang Bae Park. (April 18, 2025). South Korea’s new AI Framework Act: A balancing act between innovation and regulation. Future of Privacy Forum. Accessible at: https://fpf.org/blog/south koreas new ai framework act a balancing act between innovation and regulation/

75 Projeto de Lei n° 2338, de 2023. https://www25.senado.leg.br/web/atividade/materias/ /materia/157233. Most recent text is available here: https://legis.senado.leg.br/sdleg getter/documento?dm=9915448&ts=1742240908123&disposition=inline

76 Portaria 961/2025. Accessible at: https://www.gov.br/mj/pt br/assuntos/noticias/portaria do mjsp regulamenta uso de tecnologia em investigacoes criminais e inteligencia de seguranca publica/portaria mjsp no 961 de 24 de junho de 2025 portaria mjsp no 961 de 24 de junho de 2025 dou imprensa nacional.pdf

77 Jessica Fjeld, Nele Achten, Hannah Hilligoss, Adam Christopher Nagy & Madhulika Srikumar. (2020). Principled artificial intelligence: Mapping consensus in ethical and rights-based approaches to principles for AI. Berkman Klein Center Research Publication No. 2020-1. Accessible at: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3518482

78 UNICRI and INTERPOL. (2024). Principles.

elements – the types of algorithms used, performance indicators, training data – but the focus is not on such elements of explainability per se. Rather, explainability is an attribute of the AI system itself. For example, AI systems are often described as “black boxes” because their inner workings are so intricate that even expert developers struggle to explain how they produce specific outputs.79

In AI literature, explainability thus refers to the technical capacity to understand how an AI system makes decisions and generates outputs (explainability in a narrow sense) and why certain results emerge (sometimes called interpretability).80 The distinction matters in practice. A perfectly explainable algorithm implemented without any public disclosure or engagement remains opaque in ways that matter for good governance and accountability. Conversely, an agency might be highly transparent about its uses of a particular AI system, the policy framework governing its use and the safeguards in place, while the underlying model, in technical terms, remains a black box.

In the interviews, the centrality of transparency for both AI uses and law enforcement was obvious, particularly for the policy, ethics and human rights experts. Law enforcement activities inherently affect people’s rights, and the introduction of AI systems frequently amplifies such impacts. Transparency can function as a fundamental safeguard of human rights in this context. Provided that adequate accountability and redress mechanisms are in place, when the persons affected by AI-supported decisions are aware that AI is being used and have clear information about it, they can meaningfully question the use of AI systems, assess whether outputs are accurate and challenge them as needed.81 Without such visibility, as one law enforcement expert cautioned, personnel may be tempted to shift accountability for their decisions to the AI system and away from themselves.

Deploying AI systems for surveillance represents more than an incremental upgrade to existing practices; AI fundamentally changes the nature of surveillance. Surveillance shifts from targeted observation to large-scale, often indiscriminate data collection. The information gathered involves not just individuals suspected of wrongdoing but anyone in the broader population.

For law enforcement agencies, surveillance tactics are essential to criminal investigations. Surveillance includes a range of activities, such as monitoring, which consists of short-term, preliminary observation to detect crime, and covert surveillance, which uses more invasive approaches during an investigation.82 Different techniques, whether they rely on AI systems, CCTV cameras

79 UNICRI and INTERPOL. (2024). Introduction.

80 UNICRI and INTERPOL. (2024). Principles.

81 UNESCO. (2022).

82 International Association of Chiefs of Police (IACP). (April 2009). Surveillance. Law Enforcement Policy Center. Accessible at: https://www. theiacp.org/sites/default/files/2020 06/Surveillance%20FULL%20 %2006222020.pdf

or physical stop and search policies, all aim to increase law enforcement effectiveness and make communities feel safer. Yet, their unintended consequences often have the opposite effect.

In particular, uncertainty about the presence or extent of surveillance and fears of being monitored can undermine trust and strain social relationships. Surveillance also increases threats to human rights, particularly privacy, which can induce a “chilling effect” and cause people to refrain from exercising other rights and freedoms. That is, concerned about the risks of surveillance, people might stop exercising their freedom of movement and assembly, freedom of expression and participation in public life and political activities, the right to protest, or the freedom of conscience and religion. Excessive surveillance might even produce counterproductive outcomes, such as exacerbating the public’s fear of criminal activity.

Transparency as a human rights safeguard takes on a particularly critical role during court proceedings. Parties in criminal proceedings need access to information to ensure a fair trial. Access to adequate information about AI system uses is essential for victims and their families to seek appropriate redress, for suspects to verify the chain of evidence and build defences, and for courts to duly assess the evidence presented.83

External scrutiny promises to enhance AI uses by law enforcement agencies. The AI market is highly competitive, and technology providers have strong incentives to release products quickly, often at the expense of thorough safety validation. In practice, then, law enforcement agencies might purchase AI systems that appear to offer impressive technical capabilities but that have not undergone sufficient real-world testing or proactive assessments of their impacts on various stakeholders.84 Transparency, however, supports independent evaluations, such as those by academic institutions and civil society groups. These external safeguards can protect law enforcement agencies from inadvertently adopting ineffective AI systems. When law enforcement agencies involve external stakeholders in their AI innovation plans from an early stage, they gain diverse perspectives and encourage insightful discussions. Such stakeholder engagement ultimately supports the integration of AI systems that are more reliable, compliant and effective.

In addition to these safeguarding roles, transparency can enable trust. For some interviewed experts, transparency constitutes the very starting point for building trust, and trust is not possible without transparency. In general, if decision-making processes are transparent, they may also seem procedurally fair, so people are more willing to accept the resulting decisions.85 If the public participates meaningfully in adoption and implementation decisions, law enforcement agencies can introduce their AI systems more smoothly and sustainably, and in a socially accepted manner. As an interviewed expert noted, by improving public acceptance of AI uses in law enforcement, transparency can have the secondary effect of strengthening the broader AI innovation ecosystem of a country.

83 UNESCO. (2022).

84 Besides being frequently mentioned by expert interviewees, these concerns have been expressed in several white papers. See OECD. (2023). The impact of artificial intelligence on the public sector: Risks and opportunities, ibid.; European Commission. (2020). White paper on artificial intelligence: A European approach to excellence and trust. Publications Office of the European Union. Accessible at: https://ec.europa.eu/info/sites/default/files/commission white paper artificial intelligence feb2020_en.pdf

85 Jenny de Fine Licht. (2014). Transparency actually: How transparency affects public perceptions of political decision-making. European Political Science Review, 6(2), 309–330. Accessible at: http://dx.doi.org/10.1017/S1755773913000131

Yet the expert interviews also repeatedly raised warnings about the potential for a lack of transparency to undermine public trust. When people suspect that technology is being used but do not know how, or whether it is being applied to them, their fears of misuse increase, and so does public distrust (see Learn More Box 3). If law enforcement agencies fail to be transparent, or take too long to become so, the result can be social unrest that threatens to undermine their AI usage plans. Public backlash has prompted several agencies to revise their policies.86

The challenges surrounding transparency about law enforcement uses of AI persist (see Chapter 1 – Section 2.3). But some encouraging developments are also emerging, signalling cultural changes and broader recognition of the need for transparency. Most government bodies now maintain institutional websites, which they can use to disclose information about the technologies they employ. Granted, many agencies share little or no detail, but some exceptions are notable. For example, one police service described by an expert has created a dedicated web page about its AI trials, which offers unusually comprehensive insights into its ongoing technological experiments.

External initiatives can contribute to public understanding too. A promising institutional approach involves algorithmic registers. That is, in some countries, police must disclose information to supervisory bodies about the systems they use, including details about data processing.87

The indirect effects of external oversight mechanisms also appear pertinent. When public procurement rules require law enforcement agencies to publish their calls and contracts, journalists and researchers can investigate what technologies they are purchasing and from whom. Supervisory bodies often have the authority to review law enforcement activities, ask detailed questions and conduct investigations, which creates another layer of accountability and transparency. A recent study in the Netherlands revealed that informing the public that an independent external body was overseeing the uses of predictive algorithms by law enforcement agencies significantly increased trust. By contrast, simply highlighting the existence of legal safeguards, whether mentioned alone or together with oversight, did not meaningfully boost trust.88

Notwithstanding its importance, transparency about AI innovation in law enforcement settings is no guaranteed path to public trust. The average effect of transparency on trust might be positive, but outcomes also depend on the context and the method used to implement transparency.89 Both existing literature and several interviewed experts have emphasized important limitations.

86 Ritchie et al. (2021). Ibid. The Equality and Human Rights Commission. (2025). Accessible at: https://www.equalityhumanrights.com/met polices use facial recognition tech must comply human rights law says regulator. Jeff Larson. (2016). How we analyzed the COMPAS recidivism algorithm. ProPublica. Accessible at: https://www.propublica.org/article/how we analyzed the compas recidivism algorithm

87 The Netherlands maintains a national register for reports by public authorities, including police, about their automated systems and human oversight requirements. The level of detail varies, and the requirements remain somewhat unclear, but even just creating a register can spark cultural change because it requires public organizations to reflect on their use of AI. In addition, civil society groups can use the register to foster dialogue and public debates about responsible AI deployment. The register is accessible at: https://algoritmes.overheid.nl/en

88 E. N. Nieuwenhuizen, V. Trehan & G. Porumbescu. (2025). Does institutional transparency affect citizen trust in predictive policing? Evidence from a survey experiment in The Netherlands. Public Administration, forthcoming. Accessible at: https://doi.org/10.1111/padm.70034

89 Qiushi Wang & Zhen Guan. (2023). Can sunlight disperse mistrust? A meta-analysis of the effect of transparency on citizens’ trust in government. Journal of Public Administration Research and Theory, 33(3), 453–467. Accessible at: https://doi.org/10.1093/jopart/muac040