AFRICA’S AI MOMENT

The race to scale

INSIDE: BUILD VERSUS BUY | WHY ADAPTABILITY BEATS TALENT | WHERE ROBOTICS MEETS THE REAL ECONOMY | THE NEW ARMS RACE | and more

African banks are scaling AI, tackling

AI helps utilities unlock data, predict demand, optimise operations and strengthen water resilience. 16 THE NEW AI ARMS RACE

Chips, data centres and compute power are shaping the future of arti cial intelligence globally.

22 BUILT HERE

Control over data, infrastructure and compute will determine Africa’s long-term AI independence.

23 BUILD VERSUS BUY

Owning data and governance while renting tools de nes modern enterprise AI strategy.

26 CAN AFRICA RUN AI AT SCALE?

43 GETTING AI RIGHT

Skills, structure and strategy are key to unlocking sustainable AI value in organisations.

44 WHERE ROBOTICS MEETS THE REAL ECONOMY

64 AI TURNING HEADS

Four industries show how AI delivers competitive advantage, ef ciency gains and growth opportunities.

70 WHEN ATTACKERS USE AI

Infrastructure, energy and rising compute costs challenge large-scale AI adoption across Africa. 28 AFRICA’S AI ENERGY CHALLENGE

Power constraints and rising demand raise questions about sustainable AI growth across the continent. 34 MAKING IT PAY

AI success depends on cost control, integration and governance to deliver measurable business value.

35 AI’S EXPENSIVE BACKBONE

Demand for GPUs, networks and data centres is driving unprecedented AI deployment costs.

Robotics creates value where systems, economics and environments are ready for adoption.

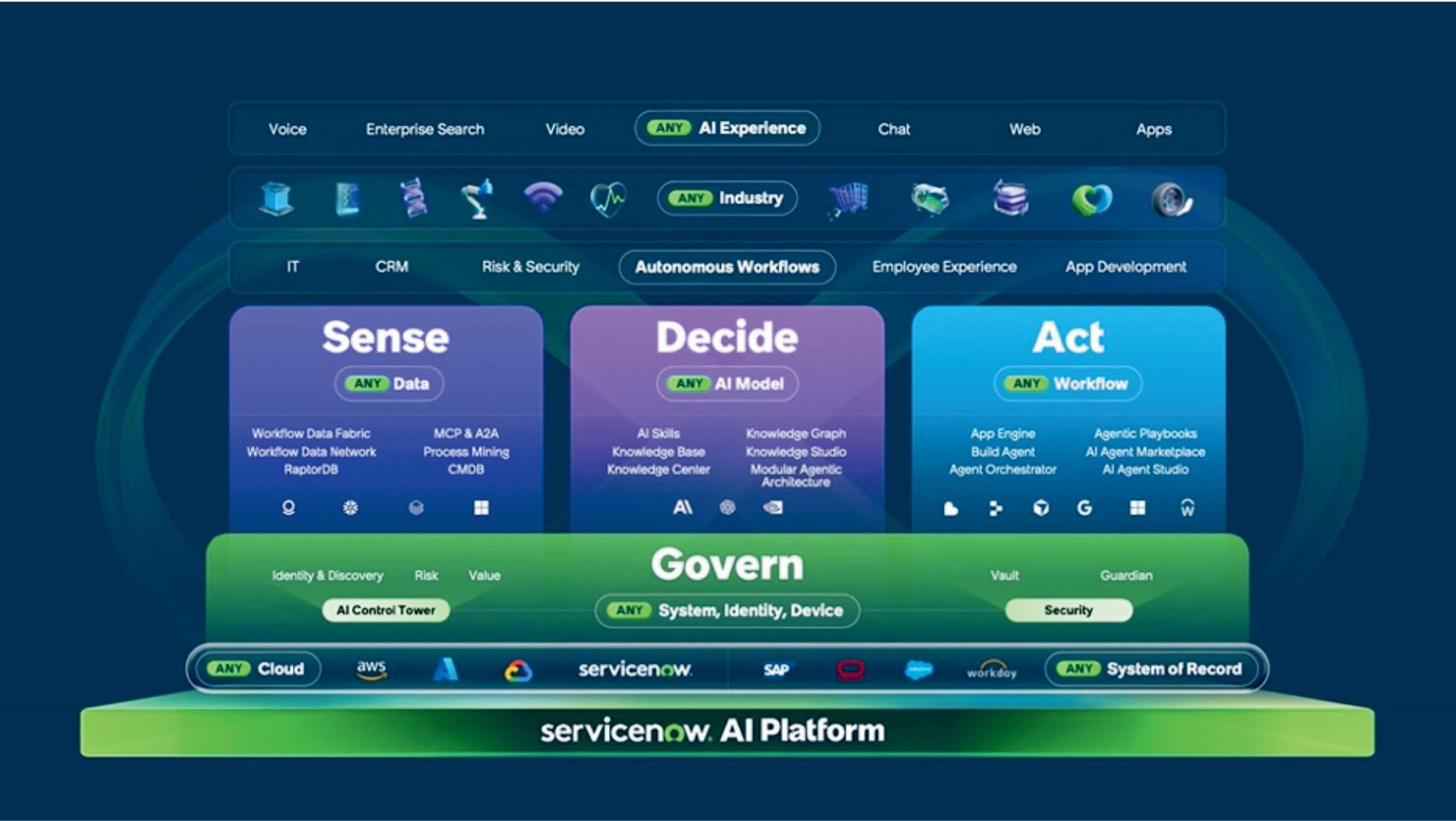

48 AUTOMATING THE BEATING HEART

AI-driven work ows enhance employee experience, improving ef ciency across core business operations.

50 WOULD YOU LIKE TO SPEAK?

AI tools support customer service teams, improving ef ciency and overall customer engagement.

52 FROM DATA TO DECISIONS

Turning data into measurable outcomes remains uneven, impacting revenue, cost and risk.

56 SCALING ENTERPRISE AI IN AFRICA

Organisations are moving from adoption to execution, focusing on practical frameworks to scale AI successfully.

60 AI AT WORK

AI success depends on data quality, systems integration, skills, regulation and risk tolerance.

Cybercriminals exploit AI tools, accelerating phishing, deepfakes and increasingly sophisticated attacks.

71 THE BACKBONE BEHIND BOTS

Chips, connectivity and energy systems power AI growth and South Africa’s evolving digital future.

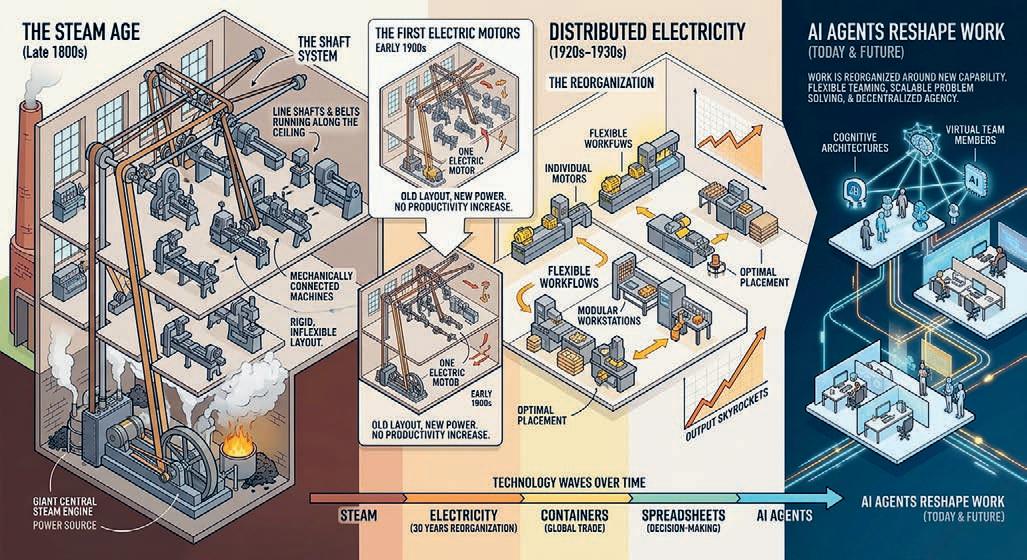

76 WHY ADAPTABILITY BEATS TALENT

Adaptability, collaboration and continuous learning are essential as AI reshapes work and future skills.

Picasso Headline,

A proud division of Arena Holdings (Pty) Ltd, Hill on Empire, 16 Empire Road (cnr Hillside Road), Parktown, Johannesburg, 2193

PO Box 12500, Mill Street, Cape Town, 8010

www.businessmediamags.co.za

EDITORIAL

Editor: Brendon Petersen

Content Manager: Raina Julies rainaj@picasso.co.za

Contributors: Tiana Cline, Trevor Crighton, Clifford de Wit, Lynn Grala, Trevor Kana, Itumeleng Mogaki, Busani Moyo, Semone Peacock, Anthony Sharpe, Rodney Weidemann

Copy Editor: Brenda Bryden

Content Co-ordinator: Natasha Maneveldt

DESIGN

Head of Design: Jayne Macé-Ferguson

Senior Designer: Mfundo Archie Ndzo

Project Designer: Annie Fraser

DIGITAL

Online Editor: Stacey Visser vissers@businessmediamags.co.za

SALES

Project Manager: Tarin-Lee Watts wattst@arena.africa | +27 87 379 7119 +27 79 504 7729

PRODUCTION

Production Editor: Shamiela Brenner

Advertising Co-ordinator: Shamiela Brenner

Subscriptions and Distribution: Fatima Dramat fatimad@picasso.co.za

Printer: CTP Printers, Cape Town

MANAGEMENT

Management Accountant: Deidre Musha

Business Manager: Lodewyk van der Walt

General Manager, Magazines: Jocelyne Bayer

COPYRIGHT: Picasso Headline. … the publisher. The publisher is not responsible for unsolicited material. AI is published by Picasso Headline. The opinions expressed are not necessarily those of Picasso Headline. All advertisements/advertorials have been paid for and therefore do not carry any endorsement by the publisher.

DEBUNKING THE MYTHS AROUND AI

Every technology moment arrives with a certain amount of theatre. Artificial intelligence (AI) has had more than most. For the past few years, the conversation has been full of bold forecasts about transformation and disruption. Spend enough time around the technology sector, and it can start to feel as if AI has already remade the world. It hasn’t. At least not yet.

This issue aims to step away from the spectacle and spend some time on the practical side of the story. What actually happens when organisations try to use AI in their day-to-day operations? What does it look like once the demos end and the work begins?

The answers are rarely glamorous.

Deploying AI in the real economy quickly becomes a conversation about infrastructure, power availability and the cost of compute. Questions about where data sits, how systems are governed and who inside the organisation is responsible for them begin to matter more than the model itself. Many companies discover that building a prototype is relatively straightforward. Running it reliably at scale is something else entirely.

Across this issue, we look at the ecosystem that sits behind modern AI. The global race for chips and specialised hardware. The sectors where automation is beginning to produce measurable returns. The operational realities that determine whether ambitious projects survive contact with everyday business environments.

There’s also a question that sits close to home.

Where Africa ultimately sits in the emerging intelligence economy will depend on decisions being made now about infrastructure, skills and localisation. The story of AI here will be shaped less by grand predictions and far more by the practical work of building the systems that make it possible.

Happy reading.

Brendon Petersen, Editor

AI IN ACTION

Insights from KPMG’S 2025 GLOBAL CEO OUTLOOK show that African financial institutions are deploying AI at scale, tackling legacy systems, workforce readiness and cyber-risk in 2026

African financial services are entering 2026 at a turning point. CEOs across banking, capital markets and insurance are no longer experimenting with technology – AI, cybersecurity and regulatory resilience are shaping real strategies for growth and transformation.

Despite geopolitical uncertainty and economic volatility, leaders are showing a clear appetite to modernise operations, integrate advanced technologies and strengthen risk management. According to KPMG’s 2025 Global CEO Outlook, these shifts are already influencing investment priorities and operational focus across the continent’s financial institutions.

Here are the key insights from the report:

• AI is no longer a pilot project, but a strategic lever.

• Legacy systems, workforce-readiness and cyber-risk remain major barriers.

• Investment is increasingly focused on measurable transformation rather than experimentation.

INSURANCE: TECHNOLOGY AND SUSTAINABILITY DRIVE CONFIDENCE

Insurance CEOs are entering 2026 with growing optimism. Globally, 82 per cent of insurance CEOs are confident in their company’s growth, up from 74 per cent in 2024, reflecting stronger earnings across health, life and specialty lines, including cyber and business interruption coverage.

AI adoption is accelerating across underwriting, onboarding, claims processing and cyberdefence. Globally, 67 per cent of CEOs expect returns from AI investments within 1–3 years, compared to 21 per cent last year, while two-thirds plan to allocate 10–20 per cent of budgets to AI initiatives. These figures underline that insurers are treating AI as a core operational tool rather than a speculative experiment.

Workforce transformation remains a critical challenge. Seventy-seven per cent of global insurance CEOs cite AI workforce-readiness and upskilling as a top constraint, while 83 per cent report that AI is reshaping training and development, and 79 per cent say it is changing skills required for entry-level roles. For insurers, the ability to equip staff with the right skills is increasingly central to capturing AI’s potential.

77% agree that a top constraint on growth is AI workforce-readiness and upskilling.

Sustainability and environmental, social and governance (ESG) compliance are also shaping strategy. Over half (55 per cent) of global insurance CEOs identify ESG reporting and compliance as their primary ESG priority. In Africa, where regulations often follow European trends, insurers must navigate both global expectations and local frameworks, making ESG a non-negotiable part of strategic planning.

Cybersecurity is another top concern. Eighty-three per cent of insurance CEOs name cybercrime as the biggest barrier to growth, with digital risk resilience emerging as the leading area for risk mitigation investment.

83% of CEOs say the biggest barrier to organisational growth is cybercrime and cyberinsecurity.

Mark Danckwerts, head of insurance, KPMG One Africa, said: “Insurance leaders across Africa are navigating a complex operating environment, but they are doing so from a position of growing confidence. AI presents enormous opportunity to improve efficiency, risk assessment and customer engagement. However, sustainable success will depend on responsible adoption, workforce-readiness and strong cyber-resilience. Insurers that balance innovation with trust will be best placed to outperform.”

Inorganic growth is also on the rise, with Africa’s insurance sector showing some of the highest levels of high-impact mergers and acquisitions globally – a trend that highlights insurers’ willingness to combine scale with technology-driven efficiency.

BANKING AND CAPITAL MARKETS: AI AS A STRATEGIC IMPERATIVE

For African banks, AI is no longer a theoretical discussion; it is the backbone of strategic reinvention.

“Technology, in particular AI, presents a huge opportunity, but also a challenge in terms of where to prioritise, how to achieve a measurable return on investment (ROI), and how to ensure responsible and safe adoption to maintain trust,” said Pierre Fourie, KPMG One Africa head of financial services.

“Banks need to modernise legacy IT, cope with rising financial crime risk, made more difficult by sophisticated scams using AI, address new competitive threats from fintechs and nimble cloud-native banks, and comply with complex and changing regulations.”

AI functions both as an enabler and a risk amplifier. It can enhance customer engagement and deepen understanding of client needs, yet banks must avoid depersonalising interactions and maintain the human touch. At the same time, AI strengthens detection of bad actors, while increasing the complexity of the cyberthreat landscape.

Investment in AI is growing rapidly:

• 70 per cent of banking CEOs plan to spend 10–20 per cent of their budgets on AI in the next 12 months.

• 69 per cent expect ROI from AI within 1–3 years, up from 13 per cent last year.

• 78 per cent cite workforce-readiness or upskilling as a potential risk if not addressed.

The top five factors threatening banking prosperity highlight the operational challenges:

• 86 per cent – cybercrime and cyberinsecurity.

• 78 per cent – AI workforce-readiness.

• 77 per cent – integration of AI into business processes.

• 75 per cent – competition for AI talent.

• 75 per cent – cost of technology infrastructure.

Fourie added: “For African banks, AI is not a theoretical discussion; it is a strategic imperative. The ability to integrate AI into core processes, manage cyber-risk and build the right talent base will determine competitive advantage. At the same time, banks must modernise legacy systems and manage infrastructure costs, all while protecting trust in an increasingly digital ecosystem.”

Strategic M&A (mergers and acquisitions) continues to be a growth lever. With 25 per cent of banking CEOs citing “strategic differentiation” as the primary driver of AI adoption, investment is increasingly linked to long-term competitive positioning rather than efficiency, reinforcing that AI strategy is now inseparable from business strategy.

A PAN-AFRICAN MOMENT FOR TRANSFORMATION

Across insurance and banking, a clear picture emerges: AI is moving from experimentation to industrial-scale deployment. Confidence is backed by disciplined transformation – measured investment in AI, prioritisation of cybersecurity, attention to ESG compliance and strategic M&A.

56% say ethical challenges are the biggest obstacle to AI implementation.

For African financial institutions, the opportunity lies in balancing innovation with resilience, and growth with governance. Those that succeed will integrate AI into core operations, modernise infrastructure, equip the workforce and manage risk – demonstrating that AI is no longer a buzzword, but a critical tool for shaping the future of finance on the continent.

Mark Danckwerts

Pierre Fourie

FINANCIAL SERVICE INSIGHTS FROM KPMG’S 2025 GLOBAL CEO OUTLOOK AND CAPITAL MARKETS: CEO OUTLOOK

SMARTER WATER

Insights and analysis from XYLEM SOUTH AFRICA’S WATER TECHNOLOGY TRENDS 2025 REPORT reveal how AI is helping water utilities unlock data, predict demand, optimise operations and boost resilience

Water utilities are under growing pressure. Ageing infrastructure, climate variability and rising demand mean that managers need smarter, more adaptive ways to operate. While digital monitoring and analytics have already improved efficiency, artificial intelligence (AI) is now taking water management to the next level. By identifying patterns in large datasets, AI enables predictive insights and supports better decision-making.

Chetan Mistry, strategy and marketing manager at Xylem South Africa, WSS, explains: “Water distribution and treatment sites produce far more data than they use. But that data gets neglected because of capacity. It would take an enormous amount of time to organise and study the data for patterns and insights. Digital and AI systems are solving those problems. Digital systems record and share accurate and reliable data, which AI systems use to rapidly produce planning information, automation and other improvements.”

Adoption is on the rise. Around 15 per cent of large water utilities worldwide currently use AI, a figure expected to reach 30 per cent by 2026 and 75 per cent by 2035, according to Xylem South Africa’s Water Technology Trends 2025 Report. This growth reflects the sector’s recognition that AI can turn data into actionable insights.

UNLOCKING THE POTENTIAL OF UTILITY DATA

Experts see huge potential in AI-enabled water management. Digital systems are already delivering measurable results. For example, Yorkshire Water Services in the United Kingdom, using Xylem Vue digital services, reduced visible leaks by 57 per cent while cutting annual distribution main repairs by 30 per cent.

Similar capabilities are expanding into industrial water and wastewater operations. Predictive monitoring and process optimisation help improve compliance, reliability and resource efficiency, showing the hidden capacity at every water management site.

HOW AI IS APPLIED IN WATER SYSTEMS

Water utilities are using AI and smart data in several ways:

1. Real-time process adjustment

Water treatment systems must maintain consistency even as flows change constantly. AI allows operators to define scenarios that automatically adjust operations, such as reagent dosing and treatment line control, using data from water management applications and business intelligence systems.

2. Predictive demand and optimisation

Predictive maintenance systems use AI-driven models and performance data, often integrated with digital twins, to anticipate equipment needs. AI also helps water managers forecast demand and optimise energy consumption by adjusting operations according to predicted peaks.

3. Advanced metering infrastructure

Smart meters have improved distribution efficiency, but advanced metering infrastructure (AMI) takes this further. AMI performs remote readings and integrates information into AI systems, providing near real-time monitoring and feedback for operations.

4. Decision support systems

Decision support systems use AI to analyse large datasets from hydrological and meteorological stations, expert knowledge and local inputs. These tools support planning and management at real-time, medium- and long-term levels, modelling different scenarios such as water body behaviour and consumption patterns.

OVERCOMING DEPLOYMENT CHALLENGES

Despite clear benefits, deploying AI in water management is not always straightforward. Success depends on data quality, integration with existing infrastructure and organisational readiness. Deployment can become complex, which is why leading water technology companies develop and maintain extensive software platforms designed specifically for utility challenges.

“Companies like Xylem invest substantially in developing water management platforms that are secure, simple to deploy and ensure the data remain with the utility,” says Mistry. “They create interactive and customisable dashboards and reports, which authorised staff and contractors can access on-site through smart devices and computers.”

The real advantage lies not just in new features, but in making existing data useful.

“Data that does nothing only takes up space. But data made useful through cloud-based management software opens additional dimensions for planning and predictive actions such as maintenance.”

Chetan Mistry

AI’S MISSING LINK: FOCUS

While most organisations are investing in AI, only a few are experiencing immediate transformational impact. The challenge isn’t the technology itself – it’s knowing the right processes and focus areas, writes TERTIUS ZITZKE, Group CEO of 4Sight Holdings

For more than a century, the Pareto Principle, commonly known as the 80/20 rule, has held true across industries: roughly 80 per cent of outcomes are driven by 20 per cent of activities. In the era of artificial intelligence, this principle is more relevant than ever. The golden rule of growth will always be 20 per cent effort to keep and maintain a customer and 80 per cent effort to gain a new one.

AI does not create value by automating everything. It creates value by amplifying the critical few decisions, actions and processes that matter most. This insight sits at the heart of how forward-looking organisations are now approaching AI-driven business automation.
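To make the "critical few" concrete, here is a minimal Python sketch of a Pareto check: it finds the smallest share of items (customers, in this case) that accounts for a target share of the total. The function name `vital_few_share` and the revenue figures are hypothetical and purely illustrative; they do not come from 4Sight or any real dataset.

```python
from typing import Sequence


def vital_few_share(values: Sequence[float], target: float = 0.8) -> float:
    """Return the smallest fraction of items whose combined value
    reaches `target` (e.g. 0.8 for 80 per cent) of the total."""
    if not values:
        raise ValueError("values must not be empty")
    total = sum(values)
    if total <= 0:
        raise ValueError("total must be positive")
    running = 0.0
    # Walk through the items from largest to smallest contribution.
    for count, value in enumerate(sorted(values, reverse=True), start=1):
        running += value
        if running >= target * total:
            return count / len(values)
    return 1.0


# Hypothetical annual revenue per customer (illustrative numbers only).
revenue = [520, 300, 50, 40, 30, 25, 15, 10, 5, 5]
share = vital_few_share(revenue)
print(f"{share:.0%} of customers generate at least 80% of revenue")
```

In this made-up example, two of the ten customers cross the 80 per cent mark. Real data rarely splits so neatly, but the same check gives a quick first view of where concentration sits before any AI is applied.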

AI HAS A FOCUS PROBLEM, THE 80/20 RULE EXPLAINS WHY

Artificial intelligence is no longer a novelty in business. It is also no longer scarce. What is scarce is clarity.

Most organisations today are experimenting with AI across HR, sales, marketing, operations, finance and innovation, yet many leaders quietly admit that the results feel incremental rather than transformational. The issue is not the capability of AI. The issue is how leaders decide where intelligence should be applied. This is where an old idea becomes newly relevant.

FROM “AI EVERYWHERE” TO “AI WHERE IT MATTERS”

Many early AI initiatives failed because they focused on breadth instead of leverage:

• Automating low-impact tasks.

• Deploying tools without strategic alignment.

• Chasing innovation without measurable business outcomes.

The next phase of AI adoption is different. Leading organisations are using AI to identify the vital 20 per cent inside each business function, and then systematically applying intelligence to those areas first.

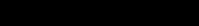

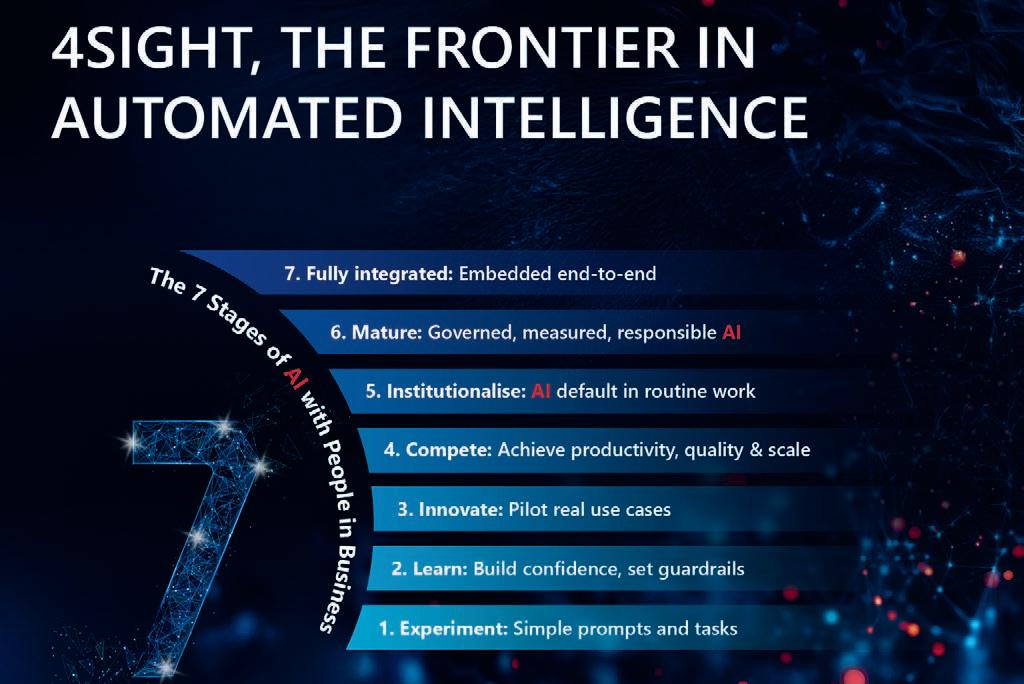

At 4Sight, this approach is structured through the Seven Stages of AI for Business, a maturity model that moves organisations from basic automation to autonomous, self-reinforcing intelligence.

APPLYING THE 80/20 PRINCIPLE ACROSS CORE BUSINESS FUNCTIONS

IN PARTNERSHIP WITH 4SIGHT HOLDINGS

Let’s look at applying the 80/20 principle to the five pillars of business transformation: people, growth, operations, finance and innovation.

1. Human resources: from administration to talent advantage

In people/HR, the highest value is not created by administration. It is created by hiring quality talent, retaining top performers and developing leadership. AI enables faster, fairer talent screening, predictive insights into attrition risk and data-driven workforce planning. The result is an HR function that moves beyond process management to measurable talent return on investment.

| 4Sight stage | HR focus (20 per cent) | Automation/intelligence impact |
|---|---|---|
| 1–2 | Payroll, leave, compliance | Removes low-value admin work |
| 3 | Talent screening, CV matching | Improves recruiter throughput |
| 4 | Performance insights | Better promotion and reward decisions |
| 5 | Attrition prediction | Retains top 2 per cent performers |
| 6 | Autonomous workforce planning | Dynamic role and skills allocation |
| 7 | Org design innovation | Continuous talent model evolution |

Outcome: HR shifts from a cost centre to a talent return on investment engine.

2. Growth – sales: focusing on the revenue that actually matters

In most organisations, a small portion of customers generate most of the revenue, and a minority of opportunities deliver the majority of margin. AI allows sales teams to prioritise high-probability opportunities, predict churn before it happens and guide sales actions in real-time. This shifts sales from activity volume to revenue precision.

| 4Sight stage | Sales focus (20 per cent) | Automation/intelligence impact |
|---|---|---|
| 1–2 | CRM updates, reporting | Frees selling time |
| 3 | Lead scoring | Focus on high-conversion |
| 4 | Next-best-action guidance | Improves win rates |
| 5 | Deal and churn prediction | Protects revenue concentration |
| 6 | Autonomous pipeline management | Self-optimising sales execution |
| 7 | New revenue model creation | AI-enabled offerings and pricing |

Outcome: sales effort concentrates where the best margin exists.

Tertius Zitzke

Marketing: precision over spend

Marketing has long been governed by the 80/20 rule: only a few channels drive most conversions, and a small number of messages create real engagement. With AI, organisations can identify which audiences convert before campaigns launch, allocate spend dynamically to high-performing channels and continuously optimise content and messaging. The outcome is higher impact with lower waste.

| 4Sight stage | Marketing focus (20 per cent) | Automation/intelligence impact |
|---|---|---|
| 1–2 | Campaign execution | Faster, cheaper delivery |
| 3 | Content optimisation | Higher engagement rates |
| 4 | Attribution intelligence | Budget shifts to winning channels |
| 5 | Predictive segmentation | Anticipates demand |
| 6 | Autonomous spend allocation | Continuous ROI optimisation |
| 7 | Market creation | AI-generated products and narratives |

Outcome: less spend, higher conversion density.

3. Operations: eliminating bottlenecks, not just costs

Operational inefficiency rarely comes from everywhere. It comes from a small number of bottlenecks, exceptions and failure points. AI makes it possible to predict disruptions before they occur, automatically rebalance workloads and create self-healing operational processes. Operations evolve from cost efficiency to resilience and scalability.

| 4Sight stage | Operations focus (20 per cent) | Automation/intelligence impact |
|---|---|---|
| 1–2 | RPA for repetitive steps | Cost and error reduction |
| 3 | Process visibility | Root-cause clarity |
| 4 | Decision support | Faster throughput |
| 5 | Failure prediction | Prevents disruption |
| 6 | Autonomous orchestration | Self-healing operations |
| 7 | Operational innovation | New operating models |

Outcome: operations move from efficiency to resilience and scale.

4. Finance: from retrospective control to predictive insight – hindsight to 4Sight

Traditional finance looks backwards. High-performing finance functions look forward. AI enables predictive cash-flow forecasting, early detection of financial risk and scenario-based decision modelling. Finance becomes a strategic partner to the business, not just a reporting function.

| 4Sight stage | Finance focus (20 per cent) | Automation/intelligence impact |
|---|---|---|
| 1–2 | Transaction processing | Faster close cycles |
| 3 | Variance analysis | Early anomaly detection |
| 4 | Scenario modelling | Better executive decisions |
| 5 | Forecasting and risk prediction | Capital protection |
| 6 | Autonomous controls | Continuous compliance |
| 7 | Strategic finance innovation | AI-driven business models |

Outcome: finance becomes predictive and strategic, not retrospective.

5. Innovation: making breakthrough repeatable

Innovation is often treated as accidental. In reality, most future value comes from a small number of insights and experiments. AI helps organisations detect emerging patterns and trends, prioritise the right ideas early and scale successful innovation faster. This turns innovation from chance into a system.

| 4Sight stage | Innovation focus (20 per cent) | Automation/intelligence impact |
|---|---|---|
| 1–2 | Idea intake automation | Faster experimentation |
| 3 | Pattern detection | Better idea quality |
| 4 | Portfolio decisioning | Smarter bets |
| 5 | Trend prediction | Early-mover advantage |
| 6 | Autonomous experimentation | Rapid scaling |
| 7 | Continuous reinvention | Innovation as a system |

THE 4SIGHT SEVEN STAGES OF AI: A PRACTICAL MATURITY PATH

The real power of AI is unlocked progressively:

1. Task automation – removing manual effort.

2. Process automation – streamlining workflows.

3. Assisted intelligence – supporting human decisions.

4. Augmented decision-making – improving judgement quality.

5. Predictive intelligence – anticipating outcomes.

6. Autonomous intelligence – self-managing systems.

7. Innovative intelligence – continuous reinvention.

Each stage builds on the previous one, and each stage increases the organisation’s ability to apply AI to the 20 per cent that drives 80 per cent of results.

A NEW LEADERSHIP IMPERATIVE

THE FUTURE OF BUSINESS AUTOMATION IS NOT ABOUT REPLACING PEOPLE. IT IS ABOUT AMPLIFYING HUMAN IMPACT WHERE IT COUNTS.

AI is no longer an IT conversation; it is a leadership discipline. The organisations that will outperform in the next decade are not those with the most AI tools, but those with the clearest focus on where value is truly created, which decisions matter most, and how intelligence should be applied responsibly. The future of business automation is not about replacing people. It is about amplifying human impact where it counts.

4Sight enables organisations to design, deploy and scale AI-driven business automation through structured maturity models, data intelligence and responsible AI adoption.

AT THE FRONTIER OF HUMAN-CENTRED AI TRANSFORMATION

4SIGHT HOLDINGS enables human-centred AI transformation, uniting data, automation and enterprise systems to deliver intelligent, scalable solutions that enhance performance, decision-making and sustainable business growth.

AI transformation is most powerful when it elevates people, not replaces them. This principle underpins 4Sight’s approach to intelligent enterprise solutions, where data, automation and systems are seamlessly integrated. The result is scalable, outcome-driven transformation that enhances decision-making, performance and long-term business resilience.

4Sight lists on the JSE Main Board.

At 4Sight, AI is not a workforce replacement strategy; it is a people investment strategy. The group’s philosophy is grounded in the belief that sustainable AI transformation is achieved by elevating people, not removing them. By automating repetitive, low-value tasks and embedding AI as an intelligent assistant within everyday workflows, 4Sight enables employees to focus on innovation and decision-making. This people-first approach ensures that AI adoption drives productivity and resilience while strengthening skills, accountability and human expertise across the organisation.

DIFFERENTIATING THROUGH STRATEGY

4Sight is unique in its ability to operate across not only information technology (IT) environments, but also across mission-critical operational technology (OT), the digital layer that runs, monitors and optimises physical industrial operations in real-time. Crucially, it connects these environments back into the business environment (BE), where strategy, governance, people, financial performance and decision-making reside, ensuring that insights generated on the operational edge translate directly into measurable business outcomes.

The business is structured across business clusters that cover:

• Operational technologies – mission-critical industrial systems.

• Information technologies – core technologies like finance, operations and HR systems.

• Business Environment – data and AI, automated intelligence and solutions for knowledge workers.

• Channel partners – scale distribution channel and ecosystem of partners, including independent software vendors, Sage and Microsoft partners.

Traditionally, these domains operate as silos, resulting in fragmented decision-making and limited visibility. 4Sight’s breadth of experience across all these technology pillars enables it to drive a connected strategy, unifying data, automation and operations across business functions. This convergence underpins many of the group’s most impactful solutions, particularly in asset-intensive and data-rich environments.

One transformation framework, applied across industries.

INDUSTRY-AGNOSTIC, OUTCOME-DRIVEN

While 4Sight operates across a wide range of sectors, its approach is industry-agnostic but outcome-driven. The same transformation principles apply whether the challenge is optimising production schedules, modernising finance functions, enhancing customer experience or improving workforce productivity.

Key focus areas include:

• Intelligent automation of core business processes.

• AI-enabled finance, HR and operational platforms.

• Data-driven decision-making across executive and operational levels.

• Secure, hybrid-cloud architectures that support scale and compliance.

• AI governance frameworks aligned with organisational risk profiles.

DELIVERING AUTOMATED INTELLIGENCE SOLUTIONS

4Sight’s focus on automated intelligence is delivered through a structured set of solution areas spanning the full enterprise, recognising that meaningful AI impact only occurs when technology, data and people work together.

Across the Business Environment, 4Sight enables organisations to modernise how work gets done through modern digital enterprise, intelligent automation, data and AI enablement and software and application development, ensuring AI is embedded into day-to-day decision-making rather than operating in isolation.

Within Information Technologies, the group applies automated intelligence to core business systems, such as enterprise resource planning (ERP), corporate resource planning, human capital and customer relationship management (CRM), helping organisations move from transactional processing to insight-driven operations.

In Operational Technologies, automated intelligence is applied to industrial environments through optimisation, automation and simulation, supporting safer, more efficient and more predictable operations.

This is complemented by 4Sight’s Channel Partner (CP) ecosystem, which allows these AI-enabled solutions to be scaled across industries and geographies through leading global vendors and independent software providers. Together, these clusters form an integrated automated intelligence capability – one that connects operational reality with enterprise data and human expertise to drive sustained, measurable business outcomes.

DATA AND INSIGHTS: FROM NO SIGHT TO FRONTIER

Many organisations operate with limited visibility, siloed data, manual processes and reactive decision-making. Others have invested in systems and dashboards but struggle to convert information into insight or insight into action.

4Sight frames this progression as a journey:

• No sight: zero digital visibility, fragmented systems and manual execution.

• Hindsight: decisions based on historical data and reporting.

• Insight: near real-time visibility and predictive analytics.

• Foresight: continuous, forward-looking decision-making.

• 4AI: automated intelligence, where AI-driven systems not only recommend actions, but also implement decisions and execute within governed parameters.

• 4frontier: the next-generation, AI-transformed organisation – driven by a culture that embraces AI to unlock productivity and innovation through intelligent agents, automation and data insights.

This evolution is not theoretical. It is grounded in decades of operational experience across industries, such as mining, manufacturing, energy, finance, telecommunications and the public sector.

4SIGHT’S GO-TO-MARKET: SCALE, REACH AND CAPABILITY

4Sight operates at scale, with:

• Over 4 500 customers globally.

• Presence across 70-plus countries.

• 440 permanent employees.

• A partner ecosystem of more than 1 000 registered partners.

• Relationships with leading global technology vendors and independent software vendors.

DIGITAL AI TRANSFORMATION AS ORGANISATIONAL DNA

True transformation occurs when AI, automation and data are embedded into the operational DNA of the business – spanning strategy, governance, people, processes and technology. This requires more than software. It requires deep domain expertise, change management and a disciplined focus on value creation.

4Sight’s DNA-based transformation model focuses on:

• Foundational controls and governance to ensure trust, compliance and resilience.

• Data enablement across IT and OT environments.

• Process automation to remove repetitive, manual work.

• Predictive and prescriptive intelligence to support decision-making.

• Human-centric adoption, ensuring people understand, trust and use AI responsibly.

Through a structured, experience-led approach, 4Sight helps businesses:

• Move beyond isolated AI pilots to embedded intelligence across the enterprise.

• Maintain control, transparency and accountability as automation scales.

• Translate innovation into measurable operational and strategic value.

The result is not just AI adoption, but a transformation in how they operate, decide and compete – becoming frontier organisations where people lead, and intelligence is seamlessly automated across the business.

DELIVERING AGAINST THE 4SIGHT DNA

Across its 14 specialist divisions, 4Sight delivers in direct alignment with the core pillars of its DNA:

• People are enabled through robust HR and human capital solutions that support workforce management, skills development and organisational resilience.

• Sales and marketing is empowered through CRM platforms and a strong CP ecosystem that drives engagement, growth and long-term value creation.

• Operations are transformed through OT focused on asset automation, optimisation and simulation, enabling safer, more efficient and data-driven environments.

• Finance is strengthened through enterprise-grade ERP solutions that enhance visibility, control and governance.

Innovation runs across every division, with AI enablement embedded throughout the group to augment decision-making, accelerate outcomes and ensure technology serves people, performance and sustainable growth.

4SIGHT’S AI MATURITY MODEL

4Sight builds frontier firms – next-generation, AI-transformed organisations, driven by a culture that embraces AI to unlock productivity and innovation through intelligent agents, automation and data insights. 4Sight has developed the Seven Stages of AI for People in Business, recognising that the adoption of AI in organisations is a journey and an evolution, from individual use, through team and process enablement, to enterprise-wide orchestration. Importantly, it is a journey that remains human-led, governed and aligned with real business outcomes.

This framework reflects how organisations actually adopt AI in practice – often unevenly, sometimes cautiously, but always under pressure to deliver measurable value while managing risk.

WHY A STAGED APPROACH TO AI MATTERS

The Seven Stages model provides a common language for leadership teams to answer critical questions:

• Where are we today, really?

• What does “progress” look like in practical terms?

• How do we balance governance and innovation?

• How do people remain central as AI capability increases?

Crucially, organisations do not need to start at Stage 1, nor do they need to progress at the same speed across all functions. Finance may be at a more advanced stage than HR; operations may move faster than compliance. The value of the model lies in its flexibility and clarity.

4Sight channel partners across 70-plus countries.

Digital AI transformation embedded into the DNA of the enterprise.

Stage 1: experiment: simple prompts and tasks

At the first stage, organisations begin experimenting with AI at an individual level. AI is used to support employees with simple, low-risk tasks, such as drafting content, summarising information, translating text or generating basic insights. The focus is on building awareness and curiosity while demonstrating immediate productivity gains. Employees start to use AI as a simple copilot or personal assistant, experiencing productivity gains in existing tasks, but without changing core processes or decision-making structures.

Stage 2: learn: build confidence, set guardrails

In the second stage, AI adoption expands beyond individuals into teams and departments. AI begins acting as a digital colleague, supporting routine knowledge work while operating within defined guardrails.

Organisations focus on establishing acceptable-use policies, data controls and governance frameworks. Employees gain confidence in using AI responsibly, and leadership ensures that AI use aligns with organisational standards, compliance requirements and ethical considerations.

Stage 3: innovate: pilot real-use cases

At this stage, organisations move from experimentation to intentional innovation, initiating pilots across a variety of business processes and functional areas. The emphasis shifts to testing tangible use cases that improve quality, reduce errors and enhance decision-making. AI begins integrating with business systems, supporting users at the point of work while maintaining human oversight.

Stage 4: compete: achieve productivity, quality and scale

Stage four marks a turning point where AI begins executing supervised tasks. Automation is introduced to handle repetitive, rules-based activities, such as data processing, transaction handling and operational workflows.

Organisations invest in solutions that provide material cost savings or introduce innovation or business process transformation. AI operates under clear accountability, with controls, human approval mechanisms and audit trails in place. This enables organisations to scale productivity, improve consistency and reduce operational bottlenecks, creating a measurable competitive advantage.

Stage 5: institutionalise: AI default in routine work

In the fifth stage, AI becomes embedded as the default way of working for routine processes. Unattended agents operate independently within defined service levels, handing control back to humans only when exceptions or anomalies arise.

AI is no longer an add-on; it is institutionalised into day-to-day operations. Organisations see significant gains in efficiency, reliability and speed, while governance and performance monitoring ensure continued control and accountability.
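The handover described in stages four and five, where routine items are processed automatically and exceptions go back to a person, can be pictured with a minimal Python sketch. It is an illustration only and not 4Sight's implementation: the `Transaction` and `UnattendedAgent` names, the amount limit and the confidence threshold are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Transaction:
    reference: str
    amount: float
    confidence: float  # hypothetical model confidence between 0 and 1


@dataclass
class UnattendedAgent:
    # Hypothetical guardrails; real limits would come from governance policy.
    max_amount: float = 10_000.0
    min_confidence: float = 0.90
    audit_trail: list = field(default_factory=list)

    def handle(self, tx: Transaction) -> str:
        """Process routine items and escalate exceptions to a human."""
        if tx.amount > self.max_amount:
            outcome, reason = "escalated", "amount above limit"
        elif tx.confidence < self.min_confidence:
            outcome, reason = "escalated", "low confidence"
        else:
            outcome, reason = "processed", "within guardrails"
        # Every decision is recorded so that it can be audited later.
        self.audit_trail.append({
            "reference": tx.reference,
            "outcome": outcome,
            "reason": reason,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })
        return outcome


agent = UnattendedAgent()
results = [
    agent.handle(Transaction("INV-001", 450.0, 0.98)),
    agent.handle(Transaction("INV-002", 25_000.0, 0.99)),
    agent.handle(Transaction("INV-003", 300.0, 0.60)),
]
print(results)
```

In this sketch the first item is handled automatically while the other two are handed to a person, and all three leave an entry in the audit trail.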

Stage 6: mature: governed, measured, responsible AI

At maturity, AI co-ordinates end-to-end processes across functions, supported by strong governance and measurement frameworks. AI decisions are transparent, auditable and aligned with regulatory and ethical standards.

Organisations actively measure AI impact on performance, risk and outcomes. Human-in-the-loop oversight remains central, ensuring AI augments expertise and supports better decisions rather than replacing accountability. Governance reaches a stage of pervasive maturity.

Stage 7: fully integrated: embedded end-to-end

In the final stage, AI, people and systems operate as a single, integrated enterprise intelligence layer. AI orchestrates workflows across departments, technologies and environments – from information systems to operational and industrial platforms. This is the frontier firm: human-led but AI-operated. Intelligence scales across the organisation as seamlessly as cloud infrastructure, enabling resilience, adaptability and continuous transformation in a rapidly changing world.
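For readers who want to record where each business function currently sits, the seven stages can be written down as a simple ordered type. The Python sketch below is illustrative only: the stage names come from the model, while the `assessment` values and the `next_stage` helper are hypothetical.

```python
from enum import IntEnum


class Stage(IntEnum):
    EXPERIMENT = 1
    LEARN = 2
    INNOVATE = 3
    COMPETE = 4
    INSTITUTIONALISE = 5
    MATURE = 6
    FULLY_INTEGRATED = 7


# Hypothetical self-assessment: each function sits at its own stage.
assessment = {
    "finance": Stage.COMPETE,
    "operations": Stage.INNOVATE,
    "hr": Stage.LEARN,
    "compliance": Stage.EXPERIMENT,
}


def next_stage(stage: Stage) -> Stage:
    """Return the following stage, or the same stage if it is the last one."""
    return Stage(min(stage + 1, Stage.FULLY_INTEGRATED))


# List functions from least to most advanced with their next milestone.
for function, stage in sorted(assessment.items(), key=lambda item: item[1]):
    print(f"{function}: stage {stage.value} ({stage.name.lower()}), "
          f"next: {next_stage(stage).name.lower()}")
```

Because functions need not move in step, keeping one entry per function rather than a single company-wide score matches how the model is meant to be used.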

GOVERNANCE, TRUST AND THE HUMAN ROLE

Across all seven stages, one principle remains constant: AI must serve and augment people, not replace them. Governance, ethics and accountability are not add-ons; they are foundational. As AI capability increases, so too must transparency, oversight and organisational discipline.

The Seven Stages model ensures that progress is intentional, not accidental.

Seven Stages of AI for People in Business: a structured, governed journey from foundational digitalisation to automated intelligence.

THE NEW AI ARMS RACE: CHIPS AND THE BATTLE FOR COMPUTE

Artificial intelligence may look like a software revolution, but the real competition is unfolding in chips, data centres and computing power. The companies and countries controlling AI infrastructure may ultimately shape the technology’s future, writes BRENDON PETERSEN

Artificial intelligence (AI) is often framed as a software revolution.

However, beneath the algorithms, chatbots and automation lies something far more physical. The real AI race is increasingly about hardware: specialised chips, massive data centres and the energy required to run them. In other words, the future of AI may depend less on who writes the best models and more on who controls the infrastructure that powers them.

At the centre of this shift is a simple reality. AI runs on hardware, and the scale of that hardware is growing rapidly.

THE COST AND DEMANDS OF TRAINING AND INFERENCE

Two workloads dominate the AI landscape: training and inference. Training involves building and refining models using enormous datasets and compute clusters. Inference happens once that training is complete. It is the process of running trained models repeatedly with new inputs.

Training large frontier models has become extraordinarily expensive. Systems such as Google’s Gemini, OpenAI’s ChatGPT and Anthropic’s Claude require vast computing clusters running thousands of specialised

hands of only a handful of global technology companies.

Training is expensive and episodic, while inference happens continuously. Every AI query, recommendation or automated decision triggers another inference workload. That demand is placing unprecedented pressure on the global

“Training frontier models is becoming increasingly sophisticated and expensive,” says Clifford de Wit, chief innovation officer at Accelera Digital Group.

“For most companies, the opportunity lies not in building these giant models from scratch, but in building specialised models or solutions

Hyperscale cloud providers, such as Microsoft, Google and Amazon, are purchasing enormous quantities of graphics processing units (GPUs), central processing units (CPUs) and storage to support AI services.

HARDWARE AND INFRASTRUCTURE NEEDS DRIVE COMPETITIVENESS

The scale of AI infrastructure continues to grow rapidly.

At the Mobile World Congress this year, Huawei unveiled its Atlas 950 SuperPoD system, capable of linking up to 8 192 processors into a single computing cluster designed for AI training and inference workloads.

Huawei executives say infrastructure like this will underpin the AI economy. “Cloud computing is becoming the public power grid for the AI era,” said Tim Tao, president of Huawei Cloud Solution Sales.

Systems like these show how the AI race is increasingly defined by the ability to deploy massive computing clusters rather than simply writing better algorithms.

Clifford de Wit

Tim Tao

The race for AI hardware is also becoming geopolitical. The United States and its allies have introduced export restrictions on advanced semiconductor technologies in an effort to limit China’s access to the most powerful AI chips. In response, Chinese technology companies have accelerated efforts to develop their own computing infrastructure and semiconductor capabilities. As AI becomes a strategic technology, access to chips and compute capacity is increasingly being treated as a matter of national competitiveness.

Specialised chips such as GPUs have become the workhorses of AI computing because they can perform the parallel mathematical operations required for machine learning.

Demand for these chips has surged as AI workloads expand.

The semiconductor supply chain has already faced disruption in recent years. The pandemic exposed the fragility of chip manufacturing and logistics networks, and AI demand is adding new pressure.

Industry executives say hyperscalers are purchasing large portions of the world’s supply of advanced processors and memory. Prices for GPUs and high-performance RAM have risen accordingly.

Yet this does not necessarily mean organisations cannot run AI workloads.

“There is still capacity available,” says de Wit. “The large hyperscalers and regional cloud providers continue to expand infrastructure. The challenge comes when organisations try to procure their own hardware.”

The rise of small language models illustrates this shift. Models such as Gemini Nano, Microsoft’s Phi-3 Mini and Meta’s Llama variants are compact enough to run directly on mobile devices.

In practice, the economics of AI favour shared infrastructure. Instead of building their own clusters, most businesses consume AI capabilities through cloud platforms.

At the same time, the industry is exploring ways to reduce reliance on massive models.

Smaller and more specialised AI models are gaining traction because they require far less compute while still delivering strong performance.

Many models are now optimised for different levels of complexity. Some are designed for rapid responses, while others handle deeper reasoning tasks that require more computing power.

South Africa has its own example. Lelapa AI’s InkubaLM model was designed for African languages and is significantly smaller than traditional large language models while outperforming them in languages such as isiZulu

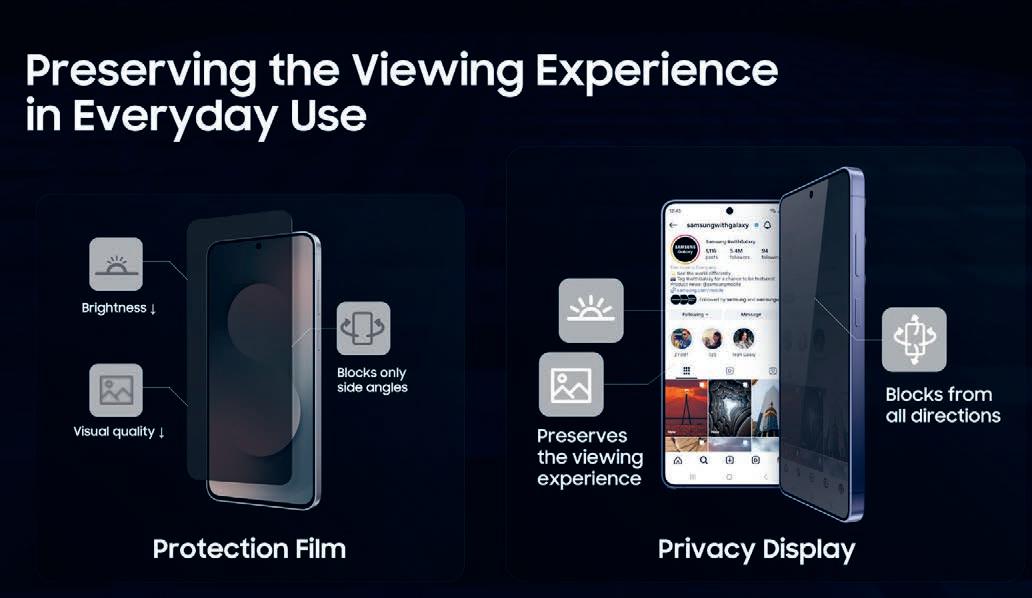

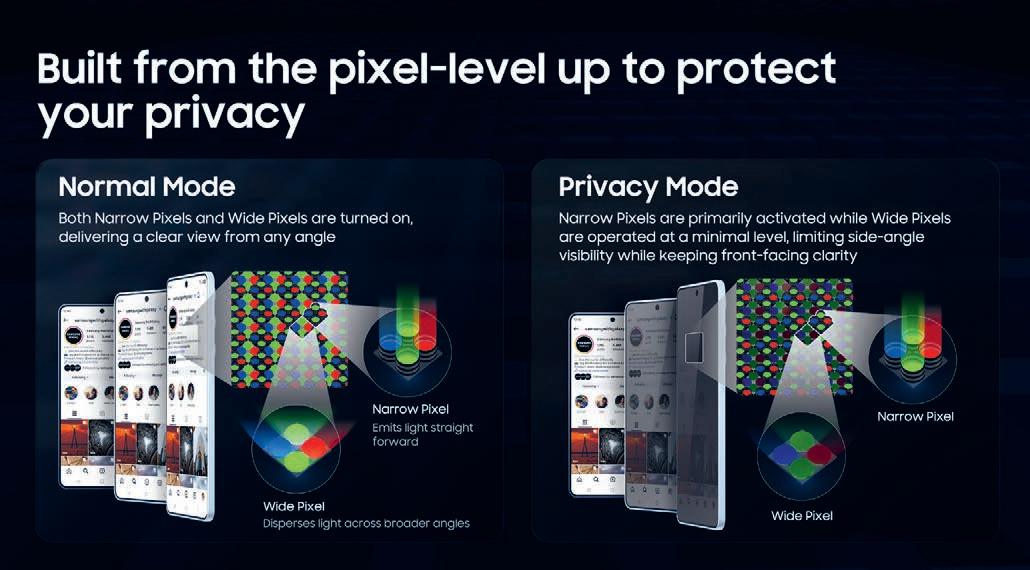

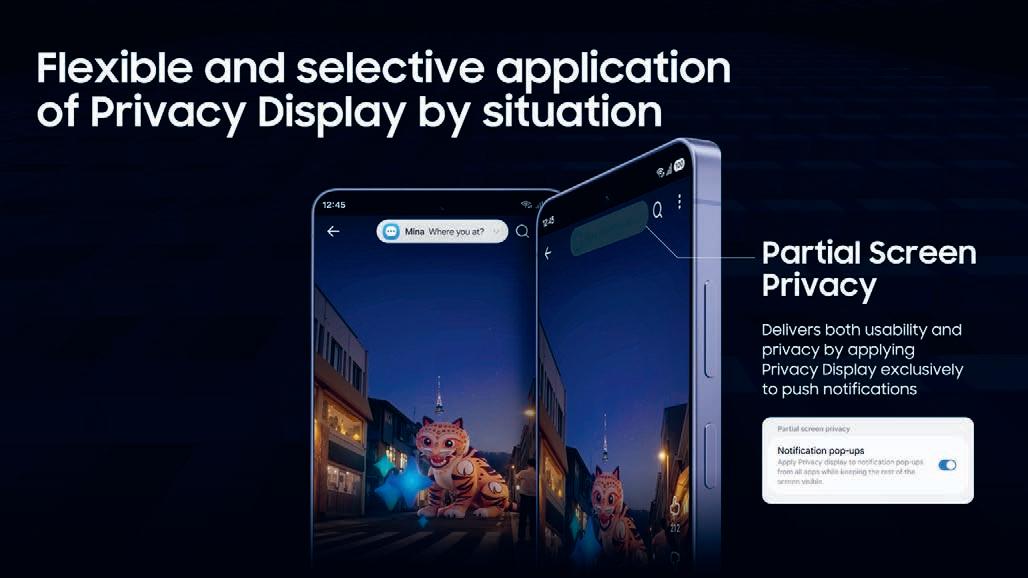

This shift is enabling AI to move directly onto consumer devices rather than relying entirely on cloud infrastructure. Samsung is betting heavily on on-device AI as the next phase of the industry. The company says it aims to reach 800 million Galaxy devices globally, reflecting how rapidly AI capabilities are spreading across smartphones and

Yet supply chains still shape what

“Global component availability can change, and manufacturers have to be able to adapt to those supply realities,” says Justin Hume, vice president of Mobile eXperience at Samsung South Africa.

Hume says decisions around which chipsets power Galaxy devices can shift depending on global component availability.

Behind the scenes, those dynamics extend beyond chips themselves. The production of advanced semiconductors depends on complex global supply chains and specialised minerals used in modern chip manufacturing.

Control over these resources is becoming increasingly entangled with geopolitics.

INCREASED ENERGY CONSUMPTION

Meanwhile, the energy required to run AI infrastructure is emerging as a strategic issue.

Large data centres consume enormous amounts of electricity and require sophisticated cooling systems.

AI training can involve thousands of accelerators running continuously for days or weeks, while inference workloads add ongoing energy demand as services scale globally.
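A rough sense of scale comes from multiplying the number of accelerators by their power draw, the length of the run and the data centre's overhead. The Python sketch below shows only that arithmetic. Every input is a placeholder rather than a measurement of any real system, and the power usage effectiveness (PUE) factor, the ratio of total facility energy to IT equipment energy, is an assumption added here rather than a figure from the article.

```python
def training_energy_kwh(accelerators: int, power_kw: float,
                        hours: float, pue: float = 1.0) -> float:
    """Energy = devices x power per device x run time x facility overhead."""
    return accelerators * power_kw * hours * pue


# Placeholder inputs for illustration only:
# 1 000 accelerators at 0.5 kW each, running for 14 days, with a PUE of 1.5.
energy = training_energy_kwh(accelerators=1_000, power_kw=0.5,
                             hours=24 * 14, pue=1.5)
print(f"{energy:,.0f} kWh")
```

The same formula applies to inference, except that the run time never ends, which is why continuous workloads weigh on energy planning.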

Research into AI infrastructure highlights the environmental implications of these systems. Training large models requires significant electricity, while inference workloads add ongoing energy demand as services expand.

For the Global South, this raises important questions.

Much of the world’s AI infrastructure is concentrated in North America, Europe and parts of Asia, where hyperscale data centres and semiconductor industries are already established.

Demand for AI services is growing rapidly across Africa, but infrastructure limitations remain a challenge. Power grid reliability, data centre capacity and connectivity will shape how quickly AI adoption accelerates.

De Wit believes demand will continue to rise. “AI is moving from experimentation to real business value,” he says.

If current projections hold, the future of AI will depend not only on better algorithms, but also on the ability to scale the physical systems behind them.

Chips, compute and infrastructure are becoming the strategic assets of the AI era.

Justin Hume

AI WITHOUT LIMITS

How ALTRON ARROW is enabling the next wave of intelligent innovation

Artificial intelligence is no longer a future ambition; it is a present-day competitive advantage. Across South Africa and the broader African continent, organisations are increasingly recognising AI as a catalyst for economic growth, operational efficiency and digital transformation. Yet, despite this momentum, one critical challenge continues to slow adoption: access to the right infrastructure.

AI is fundamentally a compute problem. The ability to train, fine-tune and deploy AI models depends heavily on high-performance computing environments, particularly graphics processing unit (GPU)-accelerated infrastructure. Without the right foundation, even the most promising AI strategies fail to scale beyond proof-of-concept.

This is where Altron Arrow plays a pivotal role.

As a leading distributor of enterprise technology solutions, Altron Arrow enables organisations across Africa to unlock the full potential of AI by providing access to world-class infrastructure, deep technical expertise and strategic partnerships.

At the heart of this capability lies a strong collaboration with ASUS, delivering cutting-edge GPU solutions and next-generation AI platforms tailored for both enterprise-scale deployments and emerging AI innovators.

THE INFRASTRUCTURE GAP IN AFRICA’S AI JOURNEY

Africa’s AI opportunity is immense. In critical industries, such as financial services and telecommunications, mining, logistics and public sector transformation, the potential use cases are vast. However, the reality is that many organisations face significant barriers when it comes to infrastructure-readiness.

Traditional IT environments are not designed to handle the demands of modern AI workloads, and in many cases, organisations are forced to rely on cloud-based AI services. While cloud offers flexibility, it also introduces challenges around cost, data sovereignty, latency and long-term scalability, especially in regions where connectivity and regulatory considerations are critical.

Altron Arrow addresses this challenge by bringing AI infrastructure to organisations across the African continent, enabling on-premise, hybrid and edge AI environments that are both scalable and cost-effective.

BUILDING THE AI BACKBONE WITH ASUS GPU INFRASTRUCTURE

At the core of Altron Arrow’s AI offering is its GPU business, powered by ASUS.

ASUS has emerged as a global leader in high-performance computing infrastructure, delivering solutions that span enterprise-grade GPU servers to compact AI development platforms.

Through this partnership, Altron Arrow provides organisations with access to advanced GPU-accelerated systems designed to support a wide range of AI workloads, from model training and inference to data processing and simulation.

These solutions are not just about raw performance; they are about enabling a full AI life cycle. ASUS GPU infrastructure provides the scalability and reliability required to move from experimentation or development to production.

For enterprises, this means the ability to build private AI environments that offer greater control over data, improved security and predictable cost structures. For industries, such as finance, healthcare and government, where data sensitivity is paramount, this capability is critical.

Moreover, GPU infrastructure enables organisations to reduce reliance on external compute resources, accelerating time-to-value and ensuring AI initiatives can scale sustainably.

INTRODUCING THE ASUS ASCENT GX10: DEMOCRATISING AI DEVELOPMENT

While enterprise infrastructure is essential, the AI journey does not begin at scale. It begins with experimentation, prototyping and innovation.

Recognising this, Altron Arrow, in collaboration with ASUS, is bringing to market the ASUS Ascent GX10, a groundbreaking desktop AI supercomputer designed to empower developers, researchers and organisations at the start of their AI journey.

The ASUS Ascent GX10 represents a significant shift in how AI capabilities are accessed. Traditionally, developing AI models required access to large, expensive data centre infrastructure. The GX10 changes this paradigm by delivering petaflop-scale AI performance in a compact, desktop form factor.

Powered by the NVIDIA GB10 Grace Blackwell Superchip, the GX10 integrates a high-performance central processing unit (CPU) and GPU into a unified architecture, enabling seamless data processing and model execution. With up to 128GB of unified memory and the ability to handle models with hundreds of billions of parameters, it provides developers with the tools needed to build and test advanced AI applications locally. This is particularly significant for the African market.

By enabling local AI development, the GX10 reduces dependence on cloud infrastructure, lowers costs and addresses data sovereignty concerns. Developers can prototype, fine-tune and validate models on their desks before scaling to larger environments, whether on-premise or in the cloud.
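Whether a given model fits in 128GB depends largely on how many bits are used to store each parameter. The Python sketch below is a back-of-envelope check only: the 200-billion-parameter model is a hypothetical example, and the estimate covers model weights alone, ignoring the working memory needed during inference and by the operating system.

```python
UNIFIED_MEMORY_GB = 128  # capacity quoted for the GX10


def weight_memory_gb(parameters: float, bits_per_parameter: int) -> float:
    """Approximate memory needed to hold the model weights, in gigabytes."""
    return parameters * bits_per_parameter / 8 / 1e9


# Hypothetical 200-billion-parameter model at three common precisions.
PARAMETERS = 200e9
for bits in (16, 8, 4):
    needed = weight_memory_gb(PARAMETERS, bits)
    verdict = "fits" if needed <= UNIFIED_MEMORY_GB else "does not fit"
    print(f"{bits}-bit weights: {needed:.0f} GB, {verdict} in {UNIFIED_MEMORY_GB} GB")
```

Under these assumptions, only the most compressed version of such a model would fit on a single unit, which is the kind of trade-off developers weigh before scaling to larger environments.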

In addition, the GX10 supports a full AI software stack, including popular frameworks such as PyTorch and TensorFlow, making it accessible to both experienced data scientists and emerging AI talent.

FROM EDGE TO ENTERPRISE: A SCALABLE AI ECOSYSTEM

One of the key strengths of Altron Arrow’s AI offering is its ability to support organisations across every stage of the AI maturity curve.

For start-ups and developers, solutions like the GX10 provide an accessible entry point into AI development. These platforms enable rapid experimentation and innovation without the need for significant upfront investment.

As organisations mature, they can scale into more powerful GPU-accelerated servers and clusters, leveraging ASUS infrastructure to support production workloads, large-scale model training and real-time inference.

The GX10 itself is designed with scalability in mind. It allows clustering of two units for more compute power to handle larger models and more complex workloads, providing a pathway from desktop experimentation to distributed AI environments.

This seamless progression, from edge to enterprise, is critical in ensuring AI initiatives do not stall after initial success. Instead, organisations can evolve their infrastructure in line with their growing needs, supported by Altron Arrow’s expertise and partner ecosystem.

ENABLING INDUSTRY TRANSFORMATION ACROSS AFRICA

The impact of AI infrastructure extends far beyond technology; it drives real-world transformation across industries.

In the financial sector, AI-powered analytics enable better risk assessment, fraud detection and personalised customer experiences. In mining and manufacturing, computer vision and predictive maintenance improve safety and operational efficiency. In logistics and ports, AI enables real-time optimisation of supply chains, reducing costs and improving throughput.

For public sector organisations, AI has the potential to enhance service delivery, improve decision-making and drive inclusive growth.

However, none of these outcomes are possible without the right infrastructure.

By providing access to GPU-accelerated computing and AI development platforms, Altron Arrow is enabling organisations across Africa to move from concept to impact, turning AI from a strategic ambition into a practical reality.

BEYOND TECHNOLOGY: A PARTNER FOR THE AI JOURNEY

What sets Altron Arrow Enterprise Computing Solutions (ECS) apart is not just its technology portfolio, but its approach to partnership.

AI adoption is not a one-size-fits-all journey. Each organisation has unique requirements, challenges and objectives. Altron Arrow works closely with customers to understand their specific use cases, design tailored infrastructure solutions and provide ongoing support throughout the AI life cycle.

This includes:

• Assessing AI-readiness and infrastructure requirements.

• Designing and deploying GPU-accelerated environments.

• Enabling hybrid and edge AI architectures.

• Supporting developers with tools and platforms like the GX10.

• Scaling solutions from pilot to production.

By combining technical expertise with a deep understanding of the African market, Altron Arrow ensures organisations are not only equipped with the right technology, but also positioned for long-term success.

SHAPING THE FUTURE OF AI IN AFRICA

As AI continues to evolve, the importance of infrastructure will only increase. The organisations that succeed will be those that can access, deploy and scale compute resources effectively.

Through its partnership with ASUS and the focus on GPU-accelerated infrastructure, Altron Arrow is playing a critical role in shaping Africa’s AI future.

From enabling developers with powerful desktop AI systems like the GX10 to delivering enterprise-grade GPU infrastructure, Altron Arrow ECS is bridging the gap between ambition and execution.

In doing so, it not only supports individual organisations, but also contributes to the broader development of an AI-driven economy across South Africa and the continent.

The future of AI in Africa is not just about algorithms; it is about access.

With Altron Arrow, that access is becoming a reality.

START YOUR AI JOURNEY WITH ALTRON ARROW

AI innovation begins with the right technology partner.

At Altron Arrow, we work with customers across industries to enable AI infrastructure designed for modern workloads and intelligent edge environments.

Explore our AI solutions:

ASUS AI GPUs

https://arrow.altron.com/asus-ai-gpus

ASUS GX10

https://arrow.altron.com/asus-gx-10

Speak to Altron Arrow to discuss how AI can power your next generation of innovation.

BUILT HERE

As Africa adopts AI built and hosted abroad, experts warn that control over data, infrastructure and compute will determine the continent’s digital independence.

BY TIANA CLINE

Africa is largely consuming AI that was built elsewhere, runs on infrastructure it does not control and is governed by priorities that are not its own. For most organisations on the continent, that is not a deliberate choice. It is simply what happened when cloud adoption moved faster than the conversation about where workloads should actually sit.

“Sovereignty is not just about data location. It is about operational authority across the AI life cycle,” says Asif Valley, national technology officer at Microsoft. In other words, it covers how data is handled, where models run and how security is enforced. Valley describes it as a continuum, with global cloud suited to frontier-scale training and rapid innovation, and local or sovereign environments suited to regulated data, latency-sensitive workloads and anything requiring accountability within jurisdiction.
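The continuum Valley describes can be read as a simple placement rule. The sketch below is one hedged interpretation, not Microsoft's method: the `Workload` fields, the `place` function and the workload names are all hypothetical, and a real decision would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of an AI workload (illustrative fields only)."""
    name: str
    regulated_data: bool = False
    latency_sensitive: bool = False
    needs_local_accountability: bool = False


def place(workload: Workload) -> str:
    """Return 'sovereign' if any local constraint applies, otherwise 'global'."""
    if (
        workload.regulated_data
        or workload.latency_sensitive
        or workload.needs_local_accountability
    ):
        return "sovereign"
    return "global"


# Hypothetical examples: real-time fraud scoring versus large-scale training.
fraud_scoring = Workload("fraud-scoring", regulated_data=True, latency_sensitive=True)
frontier_training = Workload("frontier-training")

for w in (fraud_scoring, frontier_training):
    print(w.name, "->", place(w))
```

Keeping the rule in one small function is a nod to portability: when a requirement changes, the placement changes without rewriting the workload itself.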

“Every time data moves across borders, it incurs cost,” explains Valley. Egress charges can accumulate quickly, so for organisations running AI at scale, these costs shift the economics of where workloads belong. For real-time use cases, such as fraud detection or customer engagement, routing data to a distant cloud and back also introduces latency that directly affects outcomes. This is one of the reasons that in sectors like financial services and healthcare, AI pilots routinely stall in production. “It is because the compliance process was never built for offshore infrastructure,” says Valley. “Moving into a sovereign environment resolves that. It allows organisations to put these things into production much faster.”
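To make the egress and latency argument concrete, here is a rough back-of-the-envelope sketch. Every figure in it (data volume, per-gigabyte rate, network and inference times) is an assumption chosen only to show the arithmetic, not a quoted price or a measured latency.

```python
def monthly_egress_cost(gb_per_day: float, rate_per_gb: float, days: int = 30) -> float:
    """Cost of moving data out of a cloud region over one month."""
    return gb_per_day * rate_per_gb * days


def round_trip_latency_ms(one_way_ms: float, inference_ms: float) -> float:
    """Time a real-time request waits: network there and back, plus inference."""
    return 2 * one_way_ms + inference_ms


# Hypothetical inputs, for illustration only.
offshore_cost = monthly_egress_cost(gb_per_day=500, rate_per_gb=0.08)
offshore_latency = round_trip_latency_ms(one_way_ms=90, inference_ms=40)
local_latency = round_trip_latency_ms(one_way_ms=5, inference_ms=40)

print(f"Monthly egress cost (offshore): {offshore_cost:.2f}")
print(f"Round trip offshore: {offshore_latency:.0f} ms, local: {local_latency:.0f} ms")
```

The point is not the specific totals but that both costs scale with volume and distance, which is why they change where a workload belongs once AI runs at scale.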

PORTABILITY, PERFORMANCE AND POLICY

There’s also the fact that without local adaptation to African languages, datasets and context, the intelligence organisations deploy is less accurate and less relevant to the people it serves. “The tweaking and fine-tuning is definitely what you need to be doing locally,” says Valley. His advice here is portability: workloads should be architected to shift between global and sovereign environments as requirements change. Where data lives shapes what AI can do with it, which is why data residency should be at the centre of both performance and compliance conversations. Still, most African organisations have not yet fully understood what they hand over when workloads go offshore. “This is not just ignorance,” says Professor Stella Bvuma, director of the School of Consumer Intelligence and Information Systems at the University of Johannesburg. “It is a symptom of systemic challenges.”

Cost-driven cloud adoption, limited regulatory maturity and a persistent digital divide have made dependency on foreign cloud infrastructure the default. Although the Protection of Personal Information Act and the 2024 National Data and Cloud Policy are shifting the conversation in both finance and healthcare, Professor Bvuma believes awareness remains uneven across the broader business landscape. Small and medium businesses in particular lag behind, she says, facing both cost barriers and uneven regulatory enforcement.

According to Valley, building local AI capacity comes down to four fundamentals: power, AI-ready data centres, connectivity and skills. Africa holds a very small share of AI-grade compute globally, and that compute is being absorbed by large vendors at scale.

The continent does train smaller models, particularly for local languages, says Bruce Bassett, Wits AI chair in science, but the regional compute capacity at the scale needed to train and host advanced models remains limited.

“Unfortunately, I don’t see that commitment,” says Bassett, who puts the minimum funding requirement at R5-billion, from government, industry, academia or a combination of all three.

AI is now embedded in credit approvals, hiring, insurance pricing, logistics and public services. “You cannot meaningfully govern intelligence that runs on infrastructure you do not control,” adds Kutlwano Ngwarati, head of AI and intelligent automation at Exxaro Resources. “When the models, compute and data pipelines sit outside your jurisdiction, accountability becomes aspirational rather than enforceable.”

Asif Valley

Professor Stella Bvuma

BUILD VERSUS BUY: WHEN OFF-THE-SHELF AI ISN’T ENOUGH

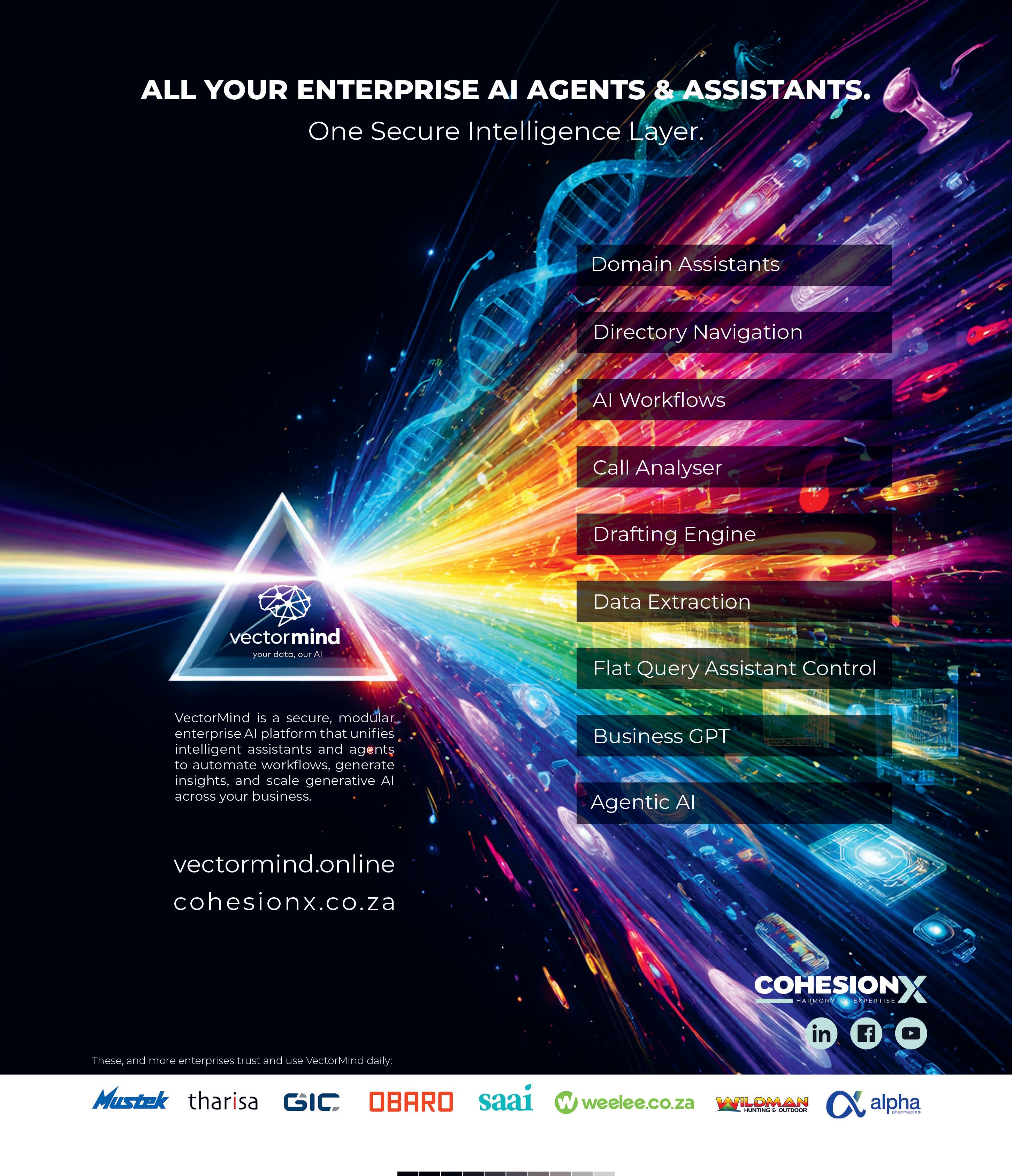

In the agentic AI era, the old “build versus buy” debate is outdated. South African corporates must strategically own proprietary data, governance and skills while renting commoditised AI components, writes BUSANI MOYO

In the rapidly evolving AI landscape, organisations grapple with a deceptively simple choice: build custom AI systems or buy off-the-shelf solutions. As Andre Strauss, CEO of CohesionX, argues, this binary framing is outdated. AI is no longer monolithic, but a modular stack of components – models, tools, data pipelines, guardrails and orchestration engines. The real question is: which layers to own, rent or commoditise? For South African corporates facing talent shortages and Protection of Personal Information Act (POPIA) compliance, the answer often lies in strategic ownership. Insights from Strauss and Agile Bridge’s Deon Koegelenberg illuminate when custom builds make sense, their pitfalls and the cost trade-offs.

PRIMARY FACTORS DRIVING CUSTOM AI BUILDS

Organisations opt for in-house development when off-the-shelf tools fall short in terms of differentiation. Strauss emphasises the need to own proprietary layers, such as data structuring, governance and evaluation logic, in systems like retrieval-augmented generation (RAG) assistants.

“Very little of a RAG system is inherently differentiating,” he notes; the IP resides in unique data boundaries and workflows. Koegelenberg echoes this, pinpointing four triggers:

• Competitive edges from proprietary datasets and domain knowledge unavailable to generic models.

• When the AI is the product, like a bespoke fraud-detection engine.

• Needs for tight integration with legacy systems, superior performance and customisation.

• “Service road versus main road” thinking, where proprietary tech creates a defensible moat.

In agentic AI, where systems reason, act via application programming interfaces – a set of rules and protocols that allow different software applications to communicate, exchange data or

significant hurdles. Strauss warns it’s “not a project, but a permanent capability”, requiring model evolution, security hardening and audit trails amid South Africa’s talent scarcity, infrastructure limits and budget pressures. When it comes to the challenges, Koegelenberg highlights acute talent gaps – machine learning engineers, data engineers and domain experts are rare – and a prolonged time-to-market, with 90 per cent of effort on data quality, infrastructure, integration and monitoring, not model-building. Risks amplify: technical lock-in, model obsolescence, compliance breaches under POPIA and operational overload. AI failures often stem not from models, but from poor adoption and governance, says Strauss. He adds that building monolithic systems invites “engineering ego”, diverting focus from core strengths.

DETERMINING COST-EFFECTIVENESS

Cost decisions hinge on life-cycle economics, not upfront spend. Strauss advocates “rent first”: commoditise embedding models, vector databases and foundation models through vendors to slash risk and burden. He emphasises the importance of building selectively when vendor pricing becomes “structurally irrational at scale” or when logic forms a moat, such as proprietary scoring agents.
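One hedged way to picture life-cycle economics is a break-even calculation: how many months of vendor fees it takes to match the cost of building and running the same component in-house. The function names and every figure below are hypothetical, and the sketch ignores factors such as talent risk and price changes that Strauss and Koegelenberg raise.

```python
import math
from typing import Optional


def rent_cost(thousand_requests_per_month: float, price_per_1k: float, months: int) -> float:
    """Cumulative vendor spend under simple usage-based pricing."""
    return thousand_requests_per_month * price_per_1k * months


def build_cost(upfront: float, monthly_run: float, months: int) -> float:
    """Cumulative in-house spend: one-off build plus ongoing run cost."""
    return upfront + monthly_run * months


def break_even_months(
    thousand_requests_per_month: float,
    price_per_1k: float,
    upfront: float,
    monthly_run: float,
) -> Optional[int]:
    """First whole month at which building costs no more than renting, or None."""
    monthly_saving = thousand_requests_per_month * price_per_1k - monthly_run
    if monthly_saving <= 0:
        return None  # renting stays cheaper at this volume
    return math.ceil(upfront / monthly_saving)


# Hypothetical scenario: the same component at high and at low volume.
high_volume = break_even_months(1000, 2.0, upfront=12000, monthly_run=1500)
low_volume = break_even_months(100, 2.0, upfront=12000, monthly_run=1500)
print("Break-even at high volume (months):", high_volume)
print("Break-even at low volume (months):", low_volume)
```

At low volume the function returns `None`, which matches the “rent first” advice: building only starts to pay once usage is large enough for vendor fees to outrun in-house running costs.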

Based on these insights, organisations should focus on these questions when determining whether to rent or build:

• Internal skills: is there enough talent to sustain full ownership over the long term?

• Total cost of ownership (TCO): factor in the 90 per cent of effort that goes into data, set-up and monitoring, not just the model.

• Scalability: does custom beat vendors at scale?

• Strategic fit: unique IP edge?

Koegelenberg stresses the need to evaluate whether off-the-shelf suffices in the long term. The 2026 rule, according to Strauss, is to rent commodities, own differentiation and orchestrate the rest. Winning firms build component libraries, control data/governance and integrate fast, avoiding unnecessary builds. In the agentic era, success favours modular portfolios over ego-driven monoliths. South African leaders who master layer ownership will thrive, turning AI constraints into agile advantages.

www.linkedin.com/in/andrestrauss www.linkedin.com/in/deon-koegelenberg-37335a4

Andre Strauss

Deon Koegelenberg

MEET ALEX

Your next executive hire isn’t human

Executive capacity without executive headcount. By THE AI SHOP

There’s a problem every growing company experiences. You need a chief financial officer (CFO) who can tell you which customers are bleeding the business. A chief operating officer (COO) who sees the bottleneck before it shuts down the production line. A head of legal who can flag that regulatory change before it costs you. A chief risk officer who isn’t just ticking boxes. However, your leadership team is already stretched thin, or those roles simply don’t exist on your organogram.

You’re not alone. Most South African companies operate without a full C-suite. Not because they don’t need one, but because they can’t afford one. The result? Strategic blind spots. Decisions made on gut feel. Board packs assembled in a panic at midnight. Critical questions that never get asked because there’s nobody whose job it is to ask them.

ENTER ALEX

Alex isn’t a chatbot. It’s an entire leadership team – one that works around the clock, knows your business intimately and costs a fraction of a single executive salary.

Alex can manifest as any member of the C-suite – CFO, COO, chief of staff, chief human resources officer (CHRO), head of legal, chief risk officer, chief technical officer, chief marketing officer (CMO) – or as a board-level advisor helping directors navigate complex documentation and governance responsibilities. Each role brings the analytical depth and strategic judgement you’d expect from a seasoned executive in that function.

What makes Alex fundamentally different from generic AI tools is what’s happening underneath. Alex connects to the knowledge sources you choose to give it: board packs and governance documents for a director, enterprise resource planning (ERP) data and operational systems for an executive, or both for a CEO who straddles the two worlds. From these, Alex builds a continuously updated context graph that maps the people, roles, decisions, relationships and history that matter to your role. Alex doesn’t just answer questions. Alex understands your company – at the level relevant to you.
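For readers unfamiliar with the term, a context graph is broadly a set of typed nodes joined by named relationships. The sketch below is a generic illustration of that idea only; it makes no claim about how Alex is built, and the class, node names and relations are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class ContextGraph:
    """Generic typed graph: an illustrative structure, not Alex's implementation."""
    # node id -> kind, for example "person", "role" or "decision"
    nodes: dict = field(default_factory=dict)
    # source node id -> list of (relation, target node id)
    edges: dict = field(default_factory=lambda: defaultdict(list))

    def add_node(self, node_id: str, kind: str) -> None:
        self.nodes[node_id] = kind

    def relate(self, source: str, relation: str, target: str) -> None:
        """Record a named relationship between two existing nodes."""
        if source not in self.nodes or target not in self.nodes:
            raise KeyError("both nodes must be added before relating them")
        self.edges[source].append((relation, target))

    def related(self, source: str, relation: str) -> list:
        """Return the targets linked from source by the given relation."""
        return [t for r, t in self.edges.get(source, []) if r == relation]


# Hypothetical company context.
graph = ContextGraph()
graph.add_node("thandi", "person")
graph.add_node("cfo", "role")
graph.add_node("approve-2025-budget", "decision")
graph.relate("thandi", "holds", "cfo")
graph.relate("thandi", "made", "approve-2025-budget")

print(graph.related("thandi", "holds"))
print(graph.related("thandi", "made"))
```

Storing relationships explicitly is what lets a question such as “who made this decision, and in what role?” be answered by following edges rather than searching documents.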

And your data stays yours. Your information is completely isolated – no cross-contamination, no shared environments. Alex never trains on your data. Everything is hosted in a secure cloud environment, fully compliant with the Protection of Personal Information Act. The intelligence Alex builds about your business serves your business alone.

Powered by recent research from MIT, Alex’s analytical capabilities, particularly as CFO, are now on a par with a listed-company analyst, delivering investment-grade financial analysis while openly flagging information gaps. This isn’t AI that pretends to know everything. It’s AI that thinks like an executive.

NOT JUST ANOTHER CHATBOT