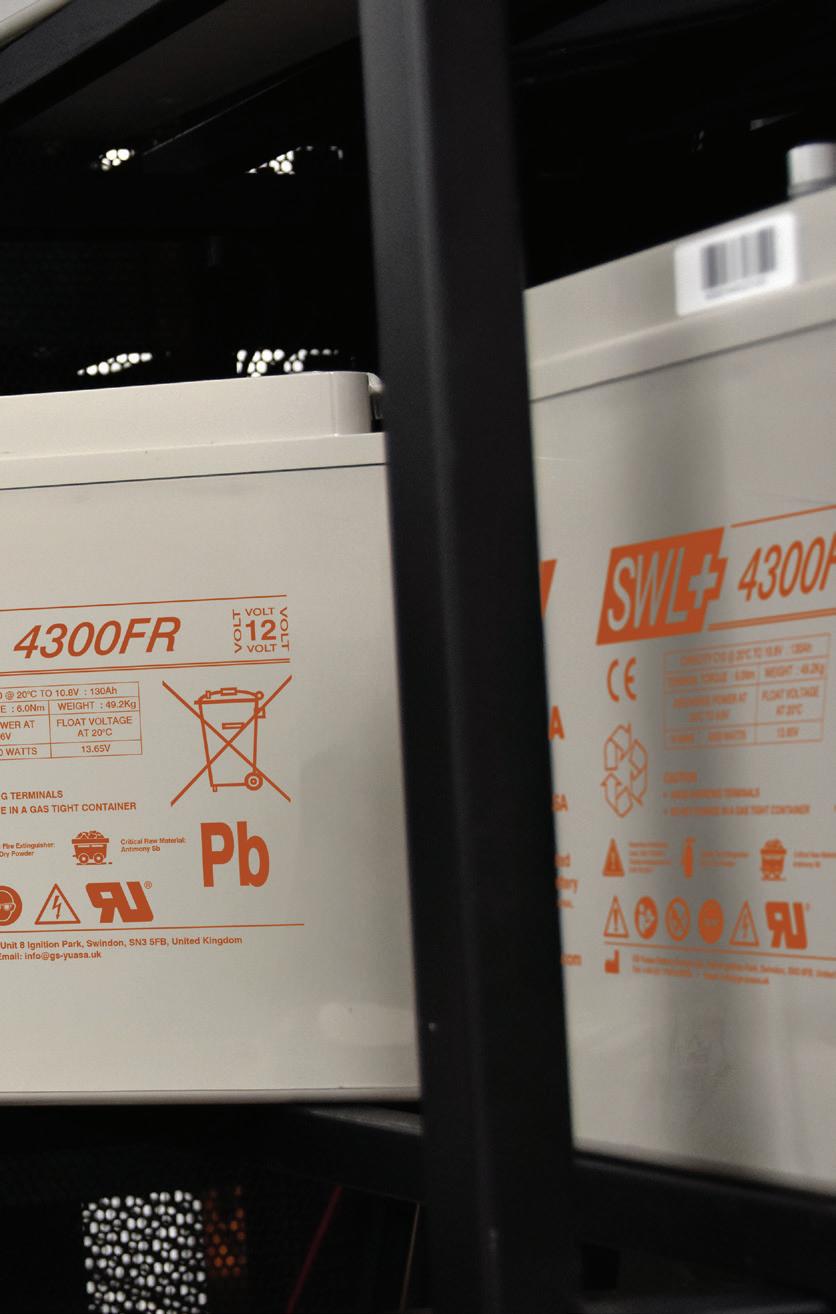

For over 40 years, Europe’s cities and infrastructure have trusted Yuasa for critical standby power. Now, the new SWL+ series builds on that legacy with more power, longer life, and zero compromise.

15-year design life (12 years at 25°C) with enhanced high-rate performance

Exclusive GS Yuasa HT Element X Alloy

Hybrid pure Lead construction

Sustainably manufactured & up to 98% recyclable

Editor’s Comment

How to lose friends and alienate people.

Cooling

ChemTreat’s Pete Elliott argues liquid cooling only works when chemistry, materials and commissioning are treated as mission-critical.


Day Two is the real stress test for AI infrastructure, says Matt Salter of Onnec.

Green IT & Sustainability

EnergiRaven’s Simon Kerr wants UK policy to treat data centre waste heat as strategic infrastructure – and plan for reuse early.

Networking & Connectivity

Livewire Digital’s Tristan Wood warns that ‘diverse’ networks can still share the same failure points – unless routes are designed and proven.

Power

Electrical manufacturing capacity could decide who scales first, believes Matt Coffel of Mission Critical Group.

On The Record

Data centres must be written into NESO’s clean flexibility approach, argues Venessa Moffat in the Data Centre Alliance’s latest column.

If you ever needed a case study in how not to talk about the data centre industry’s energy footprint, Sam Altman served one up on a platter. His recent attempt to frame AI’s power consumption by reminding everyone that “humans use a lot of energy too” didn’t land as clever reframing — it landed as dismissive, and it drew widespread condemnation online.

And that reaction matters, because this is the exact moment the UK data centre industry can’t afford to sound glib. When the public hears ‘AI boom’, they don’t think about throughput and innovation curves. They think about whether their bills go up, whether water becomes scarce, whether green space disappears, and whether their job still exists in five years. That’s the mismatch. AI might be a leap forward for corporate productivity, but plenty of people are still asking what it means for their lives.

The industry doesn’t need to agree with every fear to take it seriously. But in the worst examples, it’s been too quick to lean on promises – ‘a brighter future’, ‘national competitiveness’, ‘economic growth’ – without doing the unglamorous work of proving impacts and benefits in plain English. When that happens, you don’t get ‘nuanced debate’. You get organised resistance.

We’re already seeing it. Edinburgh councillors recently refused a proposed 213MW ‘green’ data centre – a clear signal that big numbers plus green language won’t be enough without credibility and local confidence. The UK Government, meanwhile, conceded a legal error over approving a hyperscale scheme in Buckinghamshire without a proper environmental impact assessment – exactly the kind of story that convinces communities the rules are being bent for compute.

And then there’s the grid. Ofgem is consulting on reforms to demand connections because the system is under strain and needs prioritisation. When communities hear ‘there isn’t enough electricity’ in the same breath as ‘another hyperscale campus’ they don’t instinctively reach for nuance – they reach for objections.

And who can blame them? So here’s the takeaway: the industry can’t afford ‘trust us’ messaging. If it wants permission to grow, it needs to publish the receipts – transparent water metrics (direct and indirect), cooling choices explained like you’re talking to a neighbour, credible pathways to non-potable supply where possible, grid impacts stated honestly, and a clear local give-back that isn’t just a brochure promise.

Because if you don’t tell that story first – carefully, humbly, and with numbers – someone else will. And they won’t be trying to help you get it built.

Jordan O’Brien, Managing Editor

Precision-engineered cooling for data centres and IT rooms, delivered with global expertise and local support.

Chris Cutler of Riello UPS explains how silicon carbide (SiC) semiconductors are driving next-generation modular UPS design, helping data centres balance reliability and sustainability.

The data centre landscape continues to undergo unprecedented transformation, with the seemingly unstoppable growth of AI and hyperscale computing leading to rising demand and rack densities. High energy costs are compounding these pressures, along with the rapidly fluctuating and unpredictable load profiles associated with AI applications. These factors pose huge challenges to traditional UPS architectures as manufacturers need to balance the primary purpose of an uninterruptible power supply – namely to guarantee resilience and reliability – with the sector’s desire for ever more sustainable solutions.

Historically, UPS systems have been manufactured using Insulated Gate Bipolar Transistors (IGBTs), a well-established, proven, and cost-effective technology. Incremental improvements, such as the evolution from two-level to three-level architectures and new filter materials, have enabled manufacturers to improve efficiency further in recent years. But where is the next leap forward? Our extensive R&D pinpointed the potential of using silicon carbide (SiC) semiconductors instead of IGBTs. SiC isn’t a new concept; it is already well proven in the electric vehicle sector, for example. But specifically for UPS manufacturing, SiC offers several advantages over silicon-based IGBTs:

• Higher Efficiency & Reduced Switching Losses: SiC components exhibit lower electrical resistance and switch faster, reducing both conduction and switching losses, which helps to maximise the overall efficiency of the UPS.

• Increased Power Density: the technology enables increased power density, making it possible to design more compact and lightweight UPS systems without compromising on overall power capacity.

• Increased Thermal Stability: SiC can operate at higher temperatures than IGBTs, translating to a broader operational range and reduced cooling demands. IGBTs require larger heat sinks as they dissipate more energy.

• Enhanced Frequency Response: SiC’s faster switching capabilities result in a more responsive UPS, crucial for handling the rapidly fluctuating load conditions typically found in modern data centres, particularly those running AI applications.

• Durability: the robustness of SiC and its ability to withstand high surge currents or voltage spikes reduces overall wear and tear, leading to extended component and UPS lifecycles, as well as reducing maintenance needs.

Bear in mind that SiC components require anywhere from four to six times as much energy to manufacture, which means higher upfront production costs and CO2 emissions. But these factors are more than offset by the energy savings across the UPS’s lifecycle.

Riello UPS embraced this untapped potential of SiC with our Multi Power2 range. The evolution of our modular UPS offering, the series comes in three versions: MP2 (300, 500 and 600 kW versions), Scalable M2S (1,000, 1,250 and 1,600 kW versions), and our new model M2X, which protects up to 120 kW and is designed with smaller data centres in mind.

All options are based on high-density power modules. Thanks to the use of silicon carbide components, these modules deliver best-in-class efficiency of 98.1% in online double-conversion mode. This significantly reduces a data centre’s energy consumption and running costs.
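The step from an efficiency figure to running costs is simple arithmetic: at a given load, a UPS draws load ÷ efficiency from the supply, and the difference is lost as heat. The Python sketch below is a minimal illustration under stated assumptions, not Riello UPS’s own calculation method. The 1 MW constant load, the 95% comparison efficiency, the £0.20/kWh tariff and the function names are all hypothetical, and the extra cooling energy needed to remove the lost heat is ignored.

```python
def ups_loss_kw(load_kw: float, efficiency: float) -> float:
    """Power lost inside the UPS: input power (load / efficiency) minus the load."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return load_kw / efficiency - load_kw


def annual_saving(load_kw: float, eff_old: float, eff_new: float,
                  tariff_per_kwh: float, hours: float = 8760.0) -> tuple[float, float]:
    """Energy (kWh) and cost saved per year by a more efficient UPS at constant load."""
    saved_kw = ups_loss_kw(load_kw, eff_old) - ups_loss_kw(load_kw, eff_new)
    saved_kwh = saved_kw * hours
    return saved_kwh, saved_kwh * tariff_per_kwh


# Hypothetical inputs: 1 MW constant load, 95% legacy UPS vs 98.1% SiC modules,
# £0.20/kWh tariff. Cooling energy for the extra heat is not included.
kwh, cost = annual_saving(1000.0, 0.95, 0.981, 0.20)
print(f"{kwh:,.0f} kWh/year saved, about £{cost:,.0f}/year")
```

Real UPS efficiency varies with load level and operating mode, so a fuller model would use the manufacturer’s efficiency curve across the expected load profile rather than a single figure.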

The positive characteristics of SiC also shine through in the UPS’s ability to handle rapidly fluctuating AI loads and its overall robustness.

For example, with a typical UPS you’ll need to swap out the capacitors every five to seven years of service, potentially two or three times over a 15-year lifespan. But thanks to the durability of SiC, it is realistic to go through the entire lifespan without replacing the capacitors at all, significantly cutting both maintenance and disposal costs.

The Multi Power2 range also offers data centres all the other benefits associated with modular UPS, for example, the ‘pay as you grow’ scalability to add extra power modules or cabinets when load requirements change.

The series is designed to avoid any single point of failure – the overall component and connection cable count is low, reducing the risk of faults, while all power modules are mechanically and electronically segregated. All modules and major components are also hot-swappable, meaning engineers can replace a module – or add one to increase power – in less than five minutes.

Riello UPS is at ExCeL London on 4-5 March for Data Centre World 2026, with the team available on stand D90 to showcase the Multi Power2 and its wider range of data centre solutions. Register for a free visitor pass at www.techshowlondon.co.uk/RielloUPSLtd

A modular UPS made with SiC components, such as Multi Power2, will deliver data centres sizeable cost, efficiency, and carbon emissions savings compared to both legacy monolithic UPS and even the latest IGBT-based modular solutions.

Here are a couple of real-life comparisons of the savings in action. Scenario 1 replaces a monolithic 1 MW N+1 UPS (made up of 3 x 12-pulse 600 kVA, 0.9 pf UPS units) with a 1,250 kW Multi Power2 Scalable M2S:

• Total annual energy cost savings = £95,759

• Overall total annual cost savings = £117,544

• Total annual CO2 savings = 148.7 tonnes

• Total 15-year cost savings = £2,353,655

• Total 15-year CO2 savings = 1,702.8 tonnes

Scenario 2 summarises the savings from replacing a UPS comprising 3 x 400 kVA modular units with a 1,250 kW M2S running at 1 MW load:

• Total annual energy cost savings = £51,099

• Overall total annual cost savings = £53,839

• Total annual CO2 savings = 79.4 tonnes

• Total 15-year cost savings = £1,078,068

• Total 15-year CO2 savings = 908.7 tonnes

And don’t forget: if you take into account other benefits, such as lifelong components and not having to swap out capacitors two or three times during a typical 15-year lifespan, data centre operators could save an additional £80,000 to £120,000 in maintenance costs alone.

Although SiC-based UPSs may have a higher initial cost than traditional IGBT-based units, their lower cooling requirements, reduced energy consumption, and longer operational life ultimately deliver a superior total cost of ownership (TCO).
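The TCO argument can be made concrete with a simple cash-flow comparison: upfront cost plus each year’s running costs, optionally discounted to present value. The sketch below is generic, and every figure in it is hypothetical, chosen only to show the mechanics; it is not derived from the scenarios above or from any manufacturer’s pricing.

```python
from dataclasses import dataclass, field


@dataclass
class UpsOption:
    name: str
    capex: float                # upfront purchase and installation cost (£)
    annual_energy: float        # yearly cost of UPS losses and related cooling (£)
    annual_maintenance: float   # yearly routine maintenance cost (£)
    one_off: dict[int, float] = field(default_factory=dict)  # year -> one-off cost, e.g. capacitor swaps


def tco(option: UpsOption, years: int = 15, discount_rate: float = 0.0) -> float:
    """Capex plus yearly costs over the service life, discounted to present value."""
    total = option.capex
    for year in range(1, years + 1):
        yearly = option.annual_energy + option.annual_maintenance + option.one_off.get(year, 0.0)
        total += yearly / (1.0 + discount_rate) ** year
    return total


# Hypothetical figures for illustration only.
igbt = UpsOption("IGBT modular", capex=400_000, annual_energy=150_000,
                 annual_maintenance=10_000, one_off={6: 40_000, 12: 40_000})
sic = UpsOption("SiC modular", capex=480_000, annual_energy=100_000,
                annual_maintenance=8_000)

for option in (igbt, sic):
    print(f"{option.name}: £{tco(option, discount_rate=0.05):,.0f} (15 years, 5% discount rate)")
```

A higher discount rate reduces the weight of later-year savings, so a higher upfront cost needs a correspondingly larger yearly saving to come out ahead.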

And while it is by no means a silver bullet, silicon carbide will likely become the go-to choice for UPS manufacturers seeking to meet modern data centres’ ongoing demand for higher-efficiency power infrastructure.

Pete Elliott, Senior Technical Staff Consultant at ChemTreat, argues that as rack densities soar, fluid chemistry, materials compatibility, and commissioning discipline will decide whether high-density cooling delivers reliability – or failures from day one.

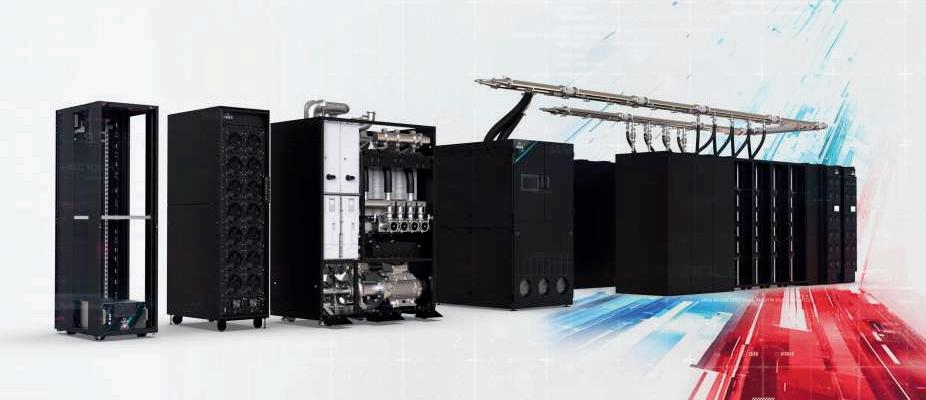

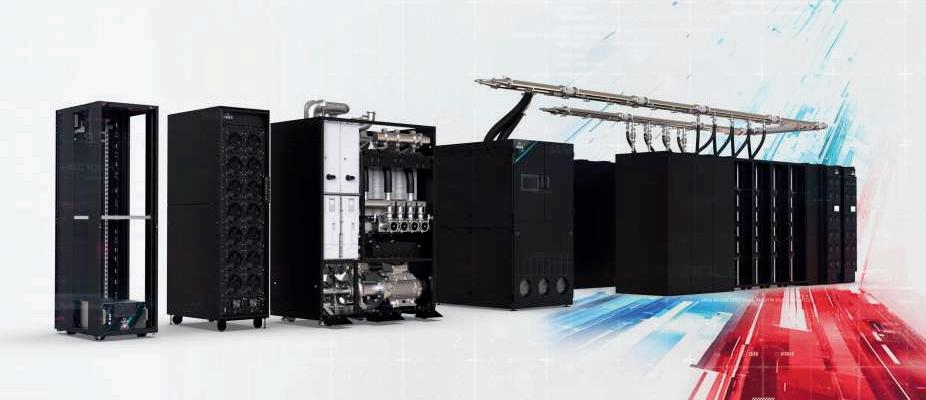

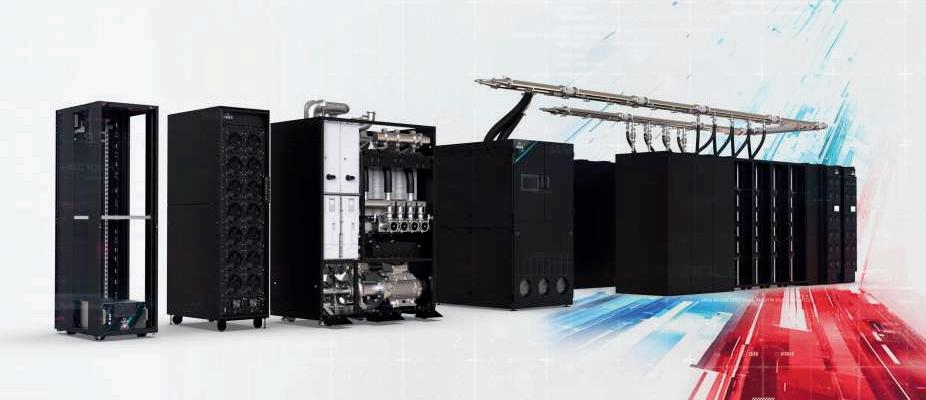

The rapid rise of AI-driven workloads has pushed data centre cooling design into unfamiliar territory. Rack densities that once defined the upper limit of facility planning are now baseline assumptions, and traditional air-based cooling and heat-rejection approaches are struggling to keep pace. Direct-to-chip liquid cooling, immersion systems, and hybrid architectures are becoming core elements of modern mechanical design.

Yet the shift to liquid cooling introduces new complexities that go well beyond thermal performance. Mechanical design choices, fluid chemistry, and materials compatibility now play a decisive role in long-term reliability, commissioning success, and sustainability outcomes. For engineers tasked with designing or retrofitting high-density environments, understanding these interactions – and their impact on ever-tightening project timelines – has become essential.

Designing for heat transfer is only the starting point

The appeal of liquid cooling is straightforward: liquids transfer heat far more efficiently than air, allowing direct-to-chip systems to remove heat at the source and stabilise temperatures under extreme loads. In general terms, water provides far higher thermal conductivity than air, which can reduce reliance on large air-handling systems and enable higher rack densities within a smaller footprint.
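A rough calculation shows why liquids carry heat so much more effectively. The heat a coolant stream removes is Q = ṁ·cp·ΔT, so the volume flow needed depends on the fluid’s density and specific heat (its volumetric heat capacity), not only on its thermal conductivity. The sketch uses approximate textbook property values near room temperature; the 100 kW rack and 10 K temperature rise are hypothetical.

```python
# Approximate fluid properties near room temperature (textbook values).
WATER_CP = 4186.0   # J/(kg·K)
WATER_RHO = 998.0   # kg/m³
AIR_CP = 1005.0     # J/(kg·K)
AIR_RHO = 1.2       # kg/m³


def volumetric_flow_m3s(heat_w: float, cp: float, rho: float, delta_t_k: float) -> float:
    """Volume flow (m³/s) needed to carry heat_w watts at a given temperature rise.

    From Q = m_dot * cp * dT: m_dot = Q / (cp * dT), and V_dot = m_dot / rho.
    """
    return heat_w / (cp * delta_t_k) / rho


rack_w = 100_000.0  # hypothetical 100 kW rack
dt = 10.0           # hypothetical 10 K supply/return temperature difference
water = volumetric_flow_m3s(rack_w, WATER_CP, WATER_RHO, dt)
air = volumetric_flow_m3s(rack_w, AIR_CP, AIR_RHO, dt)
print(f"water: {water * 1000:.2f} L/s, air: {air:.2f} m³/s, "
      f"air needs about {air / water:,.0f}x the volume flow")
```

Under these assumptions the rack needs a few litres of water per second but several cubic metres of air per second, which is the practical reason liquid loops suit very dense racks.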

However, thermal performance alone does not guarantee operational success. As power density increases, systems become less tolerant of variation. Minor changes in flow distribution, water chemistry, or material condition can have disproportionate effects on performance. Many of the issues that arise in liquid-cooled environments are mechanical or chemical in nature rather than purely thermal, which means early engineering decisions can significantly influence system reliability.

This is where disciplined design assumptions, consistent water quality from the start, and pre-operational system preparation matter most.

Mechanical design decisions that influence long-term performance

High-efficiency thermal management solutions rely on narrow channels, precision manifolds, and tight tolerances. These features improve heat transfer but increase sensitivity to fouling, corrosion, and flow imbalance, making materials selection for system components a key step in the design process.
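Flow imbalance across a manifold is one of the easier sensitivities to check numerically once branch flows are metered. The sketch below compares measured branch flows with design values; the branch names, flows and the ±10% tolerance are hypothetical, and real acceptance limits would come from the cooling-equipment vendor and the commissioning specification.

```python
def flow_imbalance(design_lpm: dict[str, float], measured_lpm: dict[str, float],
                   tolerance: float = 0.10) -> dict[str, float]:
    """Return branches whose measured flow deviates from design by more than `tolerance`.

    Deviation is a fraction of design flow (0.10 means +/-10%). A branch with no
    reading is treated as zero flow, so it is always reported.
    """
    flagged = {}
    for branch, design in design_lpm.items():
        measured = measured_lpm.get(branch, 0.0)
        deviation = (measured - design) / design
        if abs(deviation) > tolerance:
            flagged[branch] = round(deviation, 3)
    return flagged


# Hypothetical design and measured flows (litres per minute) for three cold-plate branches.
design = {"rack-01": 40.0, "rack-02": 40.0, "rack-03": 40.0}
measured = {"rack-01": 41.5, "rack-02": 33.0, "rack-03": 45.5}
print(flow_imbalance(design, measured))
```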

Mixed-metal systems introduce galvanic corrosion potential that can be managed through considered design and water chemistry control. Copper, aluminium, stainless steel, and various alloys can coexist successfully, but their interactions should be anticipated from the outset. Electrically insulated junctions (dielectrics) can help mitigate galvanic effects. Treatment strategies may also be required to manage galvanically induced pitting, particularly where copper interfaces with less noble materials of construction such as aluminium or low-carbon steel.
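A first-pass materials review can screen every pair of metals that will be wetted in the same loop. The sketch below follows the anodic-index approach found in engineering references; the index values and the 0.25 V limit are illustrative placeholders, not design data, and real screening should use a galvanic series appropriate to the actual fluid and alloys. A flagged pair is a prompt for dielectric separation or chemistry control, not an automatic rejection.

```python
from itertools import combinations

# Illustrative anodic-index values (volts). Placeholders only: real values depend
# on alloy, surface condition and fluid, so use a published galvanic series.
ANODIC_INDEX_V = {
    "stainless_steel_passive": 0.15,
    "copper": 0.35,
    "carbon_steel": 0.85,
    "aluminium": 0.90,
}


def risky_pairs(metals: list[str], limit_v: float = 0.25) -> list[tuple[str, str, float, str]]:
    """Return (metal_a, metal_b, index difference, likely anode) for pairs above limit_v."""
    pairs = []
    for a, b in combinations(sorted(set(metals)), 2):
        diff = abs(ANODIC_INDEX_V[a] - ANODIC_INDEX_V[b])
        if diff > limit_v:
            # The metal with the higher anodic index is the more active one and corrodes.
            anode = a if ANODIC_INDEX_V[a] > ANODIC_INDEX_V[b] else b
            pairs.append((a, b, round(diff, 2), anode))
    return pairs


for a, b, diff, anode in risky_pairs(["copper", "aluminium", "stainless_steel_passive"]):
    print(f"{a} / {b}: {diff} V difference, {anode} at risk")
```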

Flow velocity presents another design trade-off. Excessive velocity can accelerate erosion and material wear, while insufficient velocity increases the risk of deposition and biofilm formation. Engineers should balance these forces while accounting for variable loads, particularly in hybrid environments where air- and liquid-cooled racks operate simultaneously.
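The velocity trade-off can be checked directly from flow rate and pipe bore. The 0.6 to 2.0 m/s window in the sketch is a hypothetical placeholder; appropriate limits depend on the materials, fluid and equipment involved, and the example branches are invented.

```python
import math


def pipe_velocity_ms(flow_lps: float, inner_diameter_mm: float) -> float:
    """Mean velocity (m/s) of a volumetric flow (L/s) in a full round pipe."""
    area_m2 = math.pi * (inner_diameter_mm / 1000.0) ** 2 / 4.0
    return (flow_lps / 1000.0) / area_m2


def velocity_status(velocity_ms: float, v_min: float = 0.6, v_max: float = 2.0) -> str:
    """Classify a velocity against a hypothetical design window."""
    if velocity_ms < v_min:
        return "low: deposition and biofilm risk"
    if velocity_ms > v_max:
        return "high: erosion and wear risk"
    return "within window"


# Hypothetical branches: (flow in L/s, inner diameter in mm).
for flow, bore in [(2.4, 50.0), (0.5, 80.0), (2.4, 25.0)]:
    v = pipe_velocity_ms(flow, bore)
    print(f"{flow} L/s in {bore:.0f} mm bore: {v:.2f} m/s, {velocity_status(v)}")
```

Running the same check across the expected range of loads is useful in hybrid halls, where flow in a branch may drop well below design at low utilisation.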

In liquid-cooled systems, water quality is not a background consideration; it directly influences system performance and longevity.

Parameters such as pH, alkalinity, conductivity, hardness, and dissolved oxygen affect corrosion rates and material stability. Suspended solids and microbial growth can obstruct cold plates and reduce effective heat transfer long before alarms are triggered. Unlike traditional cooling towers, where some variability can be tolerated, direct-to-chip systems typically demand tighter control and more consistent monitoring.
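Routine sampling lends itself to a simple automated check against agreed control ranges. In the sketch below the parameter names and limits are hypothetical placeholders; real ranges depend on the metallurgy of the loop and should come from the equipment manufacturer and the water-treatment specialist.

```python
# Hypothetical control ranges (low, high) for a closed direct-to-chip loop.
LIMITS: dict[str, tuple[float, float]] = {
    "ph": (7.5, 9.5),
    "conductivity_us_cm": (0.0, 2000.0),
    "total_hardness_mg_l": (0.0, 50.0),
    "dissolved_oxygen_mg_l": (0.0, 0.5),
}


def check_sample(sample: dict[str, float],
                 limits: dict[str, tuple[float, float]] = LIMITS) -> dict[str, str]:
    """Return a message for every parameter that is missing or outside its range."""
    issues = {}
    for param, (low, high) in limits.items():
        if param not in sample:
            issues[param] = "missing reading"
        elif not low <= sample[param] <= high:
            issues[param] = f"{sample[param]} outside {low} to {high}"
    return issues


sample = {"ph": 9.8, "conductivity_us_cm": 1200.0, "dissolved_oxygen_mg_l": 0.2}
for param, message in check_sample(sample).items():
    print(param, message)
```

Trending each parameter over time, rather than only checking individual samples, helps catch gradual drift before it reaches an alarm limit.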

Effective mechanical design may involve incorporating filtration (often at tighter thresholds than conventional cooling systems), sampling points, and online monitoring into the system layout from the earliest design phases. Treating fluid chemistry as an operational afterthought increases the likelihood of post-commissioning failures that are difficult and costly to correct.

Many liquid cooling issues surface not during steady-state operation but at start-up and early commissioning. Construction debris, residual oils, and incomplete system cleaning can compromise performance from day one.

Effective pre-operational planning typically benefits from early technical consultation. Reviewing system materials, operating conditions, and anticipated thermal loads upfront supports the selection of an appropriate water-management approach and feed strategy, helping stabilise water chemistry during the commissioning period, when the system is particularly vulnerable.

Pre-operational cleaning and passivation also play an important role. Without them, even well-designed systems may experience accelerated corrosion or fouling that shortens component life. To prevent adverse conditions early on, it is important to create a detailed plan for a thorough system flush, followed by cleaning and passivation. This means flush volumes and the duration of each flush need to be agreed upfront. Disposal of flushing fluid and cleaning solution also needs to be discussed before any of these pre-commissioning operations begin. Commissioning should also validate monitoring strategies, confirm flow balance, and establish baseline performance metrics.
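Agreeing flush volumes and durations upfront is largely arithmetic once the loop volume and available pump flow are known, and the same numbers size the disposal requirement. The sketch below is hypothetical throughout: the field names, the idea of expressing each flush as a number of system-volume turnovers, and the example figures are assumptions for illustration, not a ChemTreat procedure.

```python
from dataclasses import dataclass


@dataclass
class FlushPlan:
    system_volume_m3: float       # total water volume of the loop
    flush_flow_m3h: float         # flow rate available for flushing
    turnovers_per_flush: float    # system volumes displaced in each flush
    flushes: int                  # number of flushes before cleaning
    cleaning_fills: int = 1       # loop fills of cleaning/passivation solution

    def flush_water_m3(self) -> float:
        """Total water used across all flushes."""
        return self.system_volume_m3 * self.turnovers_per_flush * self.flushes

    def hours_per_flush(self) -> float:
        """Time to displace the agreed volume at the available flow rate."""
        return self.system_volume_m3 * self.turnovers_per_flush / self.flush_flow_m3h

    def disposal_m3(self) -> float:
        """Flush water plus spent cleaning solution that must be disposed of."""
        return self.flush_water_m3() + self.system_volume_m3 * self.cleaning_fills


plan = FlushPlan(system_volume_m3=12.0, flush_flow_m3h=30.0, turnovers_per_flush=3.0, flushes=2)
print(f"{plan.flush_water_m3():.0f} m³ of flush water, "
      f"{plan.hours_per_flush():.1f} h per flush, "
      f"{plan.disposal_m3():.0f} m³ to dispose of")
```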

Skipping these steps introduces uncertainty and limits an operator’s ability to respond proactively as workloads evolve.

Few data centres transition entirely to liquid cooling in a single phase. Hybrid architectures that combine air and liquid cooling can offer a practical path forward.

Designing these environments involves careful integration between air systems, liquid loops, heat exchangers, and control platforms. Engineers should consider how thermal loads will shift as AI workloads expand, to ensure the infrastructure can adapt without major redesign.
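One way to see how loads shift is to model what share of each rack’s heat goes to the liquid loop and what still lands on the air system, since direct-to-chip cold plates typically capture most, but not all, of a server’s heat. The 80% capture ratio, the rack densities and the phasing below are hypothetical assumptions for illustration.

```python
def hybrid_heat_split(racks_kw: list[float], liquid_cooled: list[bool],
                      capture_ratio: float = 0.8) -> tuple[float, float]:
    """Return (heat to liquid loop, heat to air) in kW for a mixed hall.

    Liquid-cooled racks send `capture_ratio` of their heat to the liquid loop and
    the remainder to air; air-cooled racks send all their heat to air.
    """
    if len(racks_kw) != len(liquid_cooled):
        raise ValueError("racks_kw and liquid_cooled must be the same length")
    to_liquid = to_air = 0.0
    for kw, is_liquid in zip(racks_kw, liquid_cooled):
        if is_liquid:
            to_liquid += kw * capture_ratio
            to_air += kw * (1.0 - capture_ratio)
        else:
            to_air += kw
    return to_liquid, to_air


# Hypothetical 20-rack hall: air-cooled racks at 15 kW, upgraded AI racks at 80 kW.
for upgraded in (0, 5, 10, 20):
    racks = [80.0] * upgraded + [15.0] * (20 - upgraded)
    flags = [True] * upgraded + [False] * (20 - upgraded)
    liquid, air = hybrid_heat_split(racks, flags)
    print(f"{upgraded:>2} AI racks: liquid {liquid:6.0f} kW, air {air:6.0f} kW")
```

With these particular assumptions the air-side load barely falls as racks convert, because each dense rack still rejects part of its heat to air; that residual is easy to overlook when sizing air systems for a hybrid hall.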

Hybrid deployments also allow operators to test and refine water-management strategies before scaling further. Early implementation provides real-world data that can inform future decisions around chemistry control, filtration, and maintenance practices.

Sustainability in high-power data centres is often discussed in terms of energy efficiency, but water use is becoming an equally important part of the conversation.
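The Green Grid’s water usage effectiveness (WUE) metric expresses annual site water use in litres per kWh of IT energy, which makes water figures comparable across sites of different sizes. The sketch applies that definition; the 5 MW average IT load and both annual water volumes are hypothetical, chosen only to contrast an evaporative design with a closed loop that needs little make-up water.

```python
def wue_l_per_kwh(site_water_litres: float, it_energy_kwh: float) -> float:
    """Water usage effectiveness: annual site water (L) / annual IT energy (kWh)."""
    if it_energy_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return site_water_litres / it_energy_kwh


it_energy_kwh = 5_000 * 8760  # hypothetical 5 MW average IT load for one year

# Hypothetical annual site water use for two cooling approaches.
scenarios = {
    "evaporative cooling": 80_000_000,   # litres per year
    "closed-loop cooling": 2_000_000,    # litres per year (make-up water)
}
for name, litres in scenarios.items():
    print(f"{name}: WUE = {wue_l_per_kwh(litres, it_energy_kwh):.2f} L/kWh")
```

Reporting potable and non-potable volumes separately alongside WUE gives a clearer picture of where reuse is actually reducing demand.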

When it comes to water efficiency, closed-loop cooling circuits can offer clear advantages when properly designed and maintained. By minimising evaporation and discharge, these systems reduce overall water demand while improving thermal stability. Integrating reuse strategies such as air-handler condensate recovery or reclaimed water, where feasible, can further reduce environmental impact. Rainwater recovery has also been shown to improve water usage effectiveness in some deployments, even if it is used principally for on-site

The most effective sustainability outcomes are achieved when water-management goals are embedded into mechanical design rather than added later, as retrofits are typically more costly in the long run. Systems designed for stability, cleanliness, and long service life tend

One of the most common challenges in high-density cooling projects is misalignment between mechanical design assumptions and operational realities. Early collaboration between mechanical engineers, materials specialists, and water-treatment experts can

Incorporating these disciplines into the design phase helps identify and address potential failure modes before construction begins. This approach can lead to more stable performance, fewer retrofits, and lower total cost of ownership.

The next generation of data centres will be defined not only by how much compute power they deliver, but by how reliably and efficiently they operate under extreme thermal conditions. Liquid cooling can enable that future – but only if supported by thoughtful mechanical design and disciplined water management.

Engineers who treat fluid chemistry, materials selection, and commissioning as core design considerations will be better positioned to deliver facilities that scale with confidence and withstand the demands of AI-driven workloads.

The build is the easy part

Matt Salter, Data Centre Director at Onnec, outlines how sudden demand surges, thermal events, and component lead times force operators to prove resilience in real time, not on paper.

Constructing AI-ready infrastructure is only the first milestone in the journey to providing AI compute. The real test begins once the facility is operational, servers are installed and workloads go live.

Day One focuses on planning and construction: blueprints, power distribution, cooling systems, connectivity and redundancy. These are all measurable elements that make a facility ‘AI-capable’ on paper.

Day Two, however, introduces complexity and unpredictability. Thermal spikes, workload surges, equipment failures and supply chain delays quickly expose the gap between design assumptions and operational reality.

Day Two is when resilience moves from theory to practice. AI workloads are inherently volatile, and stress conditions often emerge only once systems are live. How well a data centre adapts, responds and maintains performance under pressure separates designs that succeed from those that falter.

AI workloads and infrastructure stress

AI workloads behave very differently from traditional enterprise or cloud computing. Dense GPU clusters generate concentrated heat and draw power in sudden surges, sometimes changing markedly within seconds. Industry commentary has increasingly highlighted how these dynamics can strain transformers and upstream electrical infrastructure, creating fluctuations that older data centres were never designed to handle.
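Power telemetry makes those surges measurable. The sketch below flags any interval where power changed faster than a set ramp rate; the one-second sample series and the 100 kW/s limit are hypothetical, and a real limit would come from the capabilities of the UPS, generators and upstream supply.

```python
def ramp_events(samples_kw: list[float], interval_s: float,
                limit_kw_per_s: float) -> list[tuple[int, float]]:
    """Return (sample index, ramp in kW/s) wherever power changed faster than the limit."""
    events = []
    for i in range(1, len(samples_kw)):
        ramp = (samples_kw[i] - samples_kw[i - 1]) / interval_s
        if abs(ramp) > limit_kw_per_s:
            events.append((i, ramp))
    return events


# Hypothetical 1-second power readings (kW) from a GPU cluster during a training job.
samples = [400.0, 410.0, 900.0, 905.0, 450.0, 455.0]
for index, ramp in ramp_events(samples, interval_s=1.0, limit_kw_per_s=100.0):
    print(f"sample {index}: {ramp:+.0f} kW/s")
```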

Networking interconnects can also become saturated by unpredictable east-west traffic, while even small inefficiencies in cabling, containment or floor layout are amplified under load – creating hotspots and airflow bottlenecks that compromise performance.

Operating under these conditions is a far greater challenge than building the facility. Thermal events can arise abruptly, and misaligned cooling, power distribution or interconnect capacity can quickly lead to performance degradation or downtime.

Older facilities, designed for lower-density racks and slower-growing workloads, are particularly vulnerable. Even where redundancy exists, the intensity and volatility of AI workloads demand rapid, continuous response, leaving traditional monitoring and manual intervention insufficient.

Legacy infrastructure compounds these risks: many centres can’t support modern interconnect technologies such as InfiniBand, and industry incident analyses frequently link outages to preventable issues in cabling and cooling practices.

In AI-scale environments, engineering decisions on airflow, rack density and cabling quality directly influence whether a facility can maintain performance under sustained, high-intensity workloads.

Supply chains, maintenance and skilled operations

Infrastructure stress is only part of the picture. Supply chain constraints further complicate operations. Critical components such as GPUs, optical modules and cabling often have long lead times, and replacement can take weeks rather than days.

Even minor interruptions can escalate into significant operational issues if spare capacity, inventory management and contingency planning are not in place. According to the Data Centre Cost Index, 80% of operators report delays in manufacturing or delivery of essential equipment.
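Lead times can be folded into spares planning with a standard inventory calculation: if failures arrive independently at a roughly constant rate, the number failing during one replenishment lead time follows a Poisson distribution, and spares can be sized to cover it at a chosen service level. The fleet size, 2% annual failure rate, six-week lead time and 99% target below are hypothetical.

```python
import math


def spares_needed(fleet_size: int, annual_failure_rate: float,
                  lead_time_days: float, service_level: float = 0.99) -> int:
    """Smallest spare count k with P(failures during one lead time <= k) >= service_level.

    Assumes independent failures at a constant rate, so the count of failures
    during the lead time is Poisson-distributed with mean `lam`.
    """
    if not 0.0 < service_level < 1.0:
        raise ValueError("service_level must be between 0 and 1")
    lam = fleet_size * annual_failure_rate * lead_time_days / 365.0
    if lam > 500:
        raise ValueError("mean too large for this direct summation; use an approximation")
    k = 0
    term = math.exp(-lam)      # P(N = 0)
    cdf = term
    while cdf < service_level:
        k += 1
        term *= lam / k        # P(N = k) derived from P(N = k - 1)
        cdf += term
    return k


# Hypothetical: 1,000 optical modules, 2% annual failure rate, six-week lead time.
print(spares_needed(1000, 0.02, 42))
```

Shorter lead times reduce the spares needed for the same service level, which is one way to quantify the value of local stock or supplier agreements.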

Shortages extend beyond GPUs; advanced fibre, switches and cabling are all in high demand, with multiple operators competing for the same scarce stock. Without timely access to the right components, even carefully designed facilities can struggle to maintain performance and execute planned upgrades.

Skills and process only go so far if the design limits operational options. Data centres must be engineered to be resilient and modular from the outset, because early design decisions often determine how effectively teams can deploy, monitor and maintain systems under real-world pressures.

Decisions made during design and construction have lasting operational consequences. Structured cabling, modular mechanical systems, spare power and cooling capacity, and flexible interconnect architectures all reduce the need for costly retrofits. Forward-looking design supports change without unnecessary disruption.

Starting early is vital, particularly when factoring in external constraints on designs that impact resilience. Labour shortages, regulatory changes, ESG compliance requirements and regional supply chain bottlenecks can all influence performance if not considered early.

In AI data centres, infrastructure and operations are inseparable: monitoring depth, operational runbooks and proactive planning are as important as the hardware itself. Facilities that embed these principles are better equipped to manage volatility, reduce downtime and maintain reliable performance even under extreme conditions.

Building an AI-ready data centre is an achievement; operating one reliably under high-density, dynamic workloads is the true test. Day Two challenges assumptions about power, cooling, networking and staffing, revealing whether a facility can sustain AI workloads continuously.

Success is not measured by capacity on paper but by the ability to maintain uptime, handle surges and adapt in real time.

Where on-site coverage is limited, some operators use third-party on-site support (‘smart hands’) under tightly defined runbooks to execute urgent maintenance and fault isolation. The goal is speed and consistency: shorten time-to-diagnosis, reduce time-to-repair and keep changes controlled when conditions are already stressed.

As AI workloads expand across industries, Day Two operations will determine which facilities can scale, perform and remain resilient. The data centres of the future will integrate infrastructure, monitoring and operational strategy seamlessly, with proactive response embedded into everyday practice.

In the era of accelerated compute, the real test begins once the build is complete; it is on Day Two that long-term reliability is earned.

UPS manufacturer CENTIEL delivers 10.5 Megawatt power protection capability.

Managed IT service provider Redcentric has completed a multi-million-pound electrical infrastructure upgrade as part of a wider data centre refurbishment project at its facility located at Heathrow’s Corporate Park in London. The project, which included a UPS replacement, was part-funded through the Industrial Energy Transformation Fund (IETF), now closed to applications, which supports the deployment of technologies that enable businesses with high energy use to transition to a low-carbon future.

As part of Redcentric’s high-profile project, leading UPS manufacturer CENTIEL has delivered equipment to protect an existing 7 Megawatts of critical load through its multi-award-winning, highly efficient, true modular uninterruptible power supply (UPS), StratusPower™. The deployment of this modular UPS technology enables Redcentric to scale to 10.5 Megawatts without the need for any further infrastructure change.

The facility, which is popular with FTSE 100 companies, has seen UPS efficiency improve from below 90% to above 97% since the upgrade. This represents the potential to cut more than 8,000 tonnes of CO₂ emissions over the next 15 years, supporting ESG compliance for both Redcentric and its household-name clients.

Paul Hone, Data Centre Facilities Director, Redcentric confirms: “Our London West colocation datacentre is a strategically located facility that offers cost effective ISO-certified racks, cages, private suites, and complete data halls, as well as significant on-site office space. The datacentre is powered by 100% renewable energy, sourced solely from solar, wind and hydro.

“In 2023 we embarked on a full upgrade across the facility, which included the electrical infrastructure and the live replacement of legacy UPS before they reached end of life. This part of the project has now been completed with zero downtime or disruption.

“At Redcentric, we pride ourselves on the high level of uptime across all of our datacentres. The continuous reinvestment in new equipment to gain efficiencies means we can continue to offer our valued clients a 100% uptime guarantee at our London West facility.

“In addition, for 2026, we are also planning a further deployment of 12 Megawatts of power protection for two refurbished data halls being configured to support the AI workloads of the future.”

Aaron Oddy, sales manager at Centiel, confirms: “A critical component of the project was the strategic removal of 22 Megawatts of inefficient, legacy UPS systems. By replacing outdated technology with the latest innovation, we have dramatically improved efficiency, delivering immediate and substantial cost savings.

“StratusPower offers an exceptional 97.6% efficiency, dramatically increasing power utilisation and reducing the data centre’s overall carbon footprint, a key driver for Redcentric.

“The legacy equipment was replaced by Centiel’s StratusPower UPS system, featuring 14 x 500 kW modular UPS systems. This significantly reduced the physical footprint while delivering greater resilience, as a direct result of StratusPower’s award-winning, unique architecture.

“StratusPower provides an unprecedented level of resilience and availability guaranteeing near-zero system downtime, ensuring the data centre can offer its clients the highest standard of power protection. Standardising with this design across the site enables UPS modules to be seamlessly redeployed between systems, ensuring maximum asset utilisation and operational agility.”

Durata, a leader in modular data centre solutions and critical power infrastructure, completed the installation to the highest standard.

Paul Hone continues: “Environmental considerations were a key driver for us. StratusPower is a truly modular solution, ensuring efficient running and maintenance of systems. Reducing the requirement for major mid-life service component replacements further adds to its green credentials.

“With no commissioning issues, zero reliability challenges or problems with the product, we are already talking to the Centiel team about how they can potentially support us with power protection at our other sites.”

• For further information about Centiel’s range of UPS systems please see: www.centiel.com

• For further information about Durata please see: www.durata-global.com

• For further information about Redcentric Data Centres please see: www.redcentricplc.com

Fabrizio Landini, Global Data Centre Segment Leader at Hitachi Group, explains why the AI boom will stall unless data centre operators finally close the gap between IT and OT.

More than a third of organisations (37%) still report little or no collaboration between IT and OT teams (Cyolo/Ponemon Institute). This divide made sense in an earlier era. IT focused on storage, networking and compute, whilst OT managed physical infrastructure like power distribution, environmental controls and cooling systems. The two departments rarely needed to align beyond simple capacity planning.

Now, AI has altered that dynamic. Modern AI platforms are compute-heavy, generating huge thermal loads that require flexible power allocation and real-time optimisation of cooling systems. When an LLM (large language model) is trained, every element of the data centre – from cooling output to network bandwidth to power draw – must respond in a coordinated way. That level of coordination is difficult to achieve if IT and OT systems remain siloed and unable to communicate.

Let’s take a look at why IT/OT convergence remains such a challenge for data centre operators, and why AI growth depends on more than technical integration between the two teams.

Data centres are no longer just rows of servers in climate-controlled rooms. They’re complex, dynamic ecosystems where digital workloads and physical infrastructure need to operate in close alignment. Yet historically, information technology (IT) and operational technology (OT) have been managed in isolation, with separate teams, tools and priorities.

IT/OT convergence addresses this challenge by creating a centralised data and control plane that spans both domains. It allows operators to view the facility as a single, integrated system, rather than as a set of disconnected components. The result can include faster response times, better resource utilisation, and a stronger foundation for AI-assisted operations.


The benefits of IT/OT convergence are well understood. Despite this, true convergence remains elusive for many operators. While there are technical hurdles to overcome, the more persistent challenge is often cultural. IT and OT teams can have different priorities and risk tolerances. IT teams may move quickly, be receptive to change, and prioritise flexibility. OT teams, on the other hand, typically prioritise stability and reliability, and may resist change that introduces operational risk. Both approaches have clear strengths, but finding common ground can be difficult.

Successful convergence requires building bridges between these cultures. This can include creating cross-functional teams, establishing shared metrics, and developing a common language that both IT and OT professionals can use. It also means investing in training so that IT professionals understand physical systems, and OT professionals understand digital networks.

On the technical side, IT and OT systems often speak different languages. IT networks run on standard protocols like Ethernet and TCP/IP. OT systems may use proprietary industrial protocols designed for specific equipment. Unifying the two requires middleware, protocol translation and careful integration work. Legacy OT systems can also be difficult to integrate with modern tooling. Building management systems (BMS), for example, can be a stumbling block for data centre operators, particularly where older deployments have limited connectivity or rely on proprietary protocols.
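To make the protocol-translation step concrete, here is a minimal Python sketch, assuming a legacy OT device that exposes readings as raw 16-bit registers (as Modbus devices commonly do) with fixed scale factors. The register addresses, metric names, scales and the `Reading` record are all hypothetical; a real integration would take them from the vendor's register map and would normally read values through middleware rather than a hand-built dictionary.

```python
from dataclasses import dataclass

# Hypothetical register map for a legacy CRAH controller: address -> (metric, unit, scale).
# Real devices publish their own maps; these addresses and scales are illustrative only.
REGISTER_MAP = {
    100: ("supply_air_temp", "degC", 0.1),
    101: ("outdoor_air_temp", "degC", 0.1),
    102: ("fan_speed", "percent", 1.0),
}

@dataclass(frozen=True)
class Reading:
    """Common record shared by IT and OT data sources (assumed schema)."""
    asset_id: str
    metric: str
    value: float
    unit: str

def to_signed16(raw: int) -> int:
    """Interpret a 16-bit register value as a two's-complement signed integer."""
    return raw - 0x10000 if raw & 0x8000 else raw

def translate_registers(asset_id: str, registers: dict[int, int]) -> list[Reading]:
    """Convert raw register values into engineering units in the common schema."""
    readings = []
    for address, raw in sorted(registers.items()):
        if address not in REGISTER_MAP:
            continue  # ignore registers we have no mapping for
        metric, unit, scale = REGISTER_MAP[address]
        readings.append(Reading(asset_id, metric, to_signed16(raw) * scale, unit))
    return readings

# 0xFFF6 is -10 as a signed 16-bit value, i.e. -1.0 degC after scaling.
readings = translate_registers("crah-01", {100: 185, 101: 0xFFF6, 102: 72})
for r in readings:
    print(r)
```

Decoding every source into one shared record type is what lets later steps treat OT readings the same way as IT telemetry.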

It’s understandable if data centre operators feel overwhelmed by these challenges, and uncertain about where to begin. A phased approach is typically more realistic than attempting full convergence at once.

1. Ensure real-time data flow

Before you can converge operations, you need unified visibility. This means connecting OT systems to a common data platform, standardising data formats, and enabling real-time data flow. Begin with non-critical systems to build confidence and support a test-and-learn approach, before moving on to mission-critical infrastructure.

2. Create centralised dashboards

Once data is flowing, develop visualisation tools that give both IT and OT teams a shared view of the data centre. This helps reduce information silos and makes interdependencies more visible.
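As an illustration of what a shared view adds, the sketch below joins two hypothetical per-rack feeds, IT power draw and OT inlet temperature, and flags racks where a heavy load coincides with a warm inlet, something neither team would see from its own data alone. The rack IDs, field names and both thresholds are assumptions chosen for the example.

```python
def shared_view(power_kw, inlet_c, inlet_limit_c=27.0, high_load_kw=50.0):
    """Join IT (power) and OT (inlet temperature) data per rack for one dashboard."""
    rows = []
    for rack in sorted(power_kw.keys() | inlet_c.keys()):
        kw = power_kw.get(rack)
        temp = inlet_c.get(rack)
        rows.append({
            "rack": rack,
            "power_kw": kw,
            "inlet_c": temp,
            # A gap in either feed is itself worth surfacing on a shared dashboard.
            "missing_data": kw is None or temp is None,
            # Flag racks where a heavy IT load coincides with a warm inlet.
            "attention": kw is not None and temp is not None
                         and kw >= high_load_kw and temp > inlet_limit_c,
        })
    return rows

view = shared_view({"A01": 42.0, "A02": 18.5, "A03": 61.0, "A04": 30.0},
                   {"A01": 24.1, "A02": 22.0, "A03": 28.7})
for row in view:
    print(row)
```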

3. Automate responses

With unified visibility in place, operators can begin automating responses that span IT and OT domains. For example, when a high-power AI workload starts, cooling output can be adjusted automatically, with relevant notifications sent to power management systems.
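A minimal sketch of such a cross-domain rule, under stated assumptions: the 40 kW trigger, the 1 °C setpoint step, the 18 °C floor and the notification text are all hypothetical, and lowering the supply-air setpoint stands in for whatever "adjusting cooling output" means at a given site. In production this logic would sit behind the BMS or DCIM platform's own interlocks rather than act directly on plant.

```python
from dataclasses import dataclass, field

@dataclass
class CoolingState:
    """Hypothetical view of one cooling zone's controllable state."""
    supply_setpoint_c: float = 24.0
    notifications: list = field(default_factory=list)

def on_workload_start(state: CoolingState, workload_kw: float,
                      high_power_kw: float = 40.0, step_c: float = 1.0,
                      min_setpoint_c: float = 18.0) -> CoolingState:
    """Cross-domain rule: an IT event (workload start) triggers OT actions."""
    if workload_kw < high_power_kw:
        return state  # ordinary workloads: leave cooling alone
    # Increase cooling output by lowering the supply setpoint, never below a safe floor.
    state.supply_setpoint_c = max(min_setpoint_c, state.supply_setpoint_c - step_c)
    # Tell power management to expect the additional draw.
    state.notifications.append(f"power-mgmt: expect +{workload_kw:.0f} kW")
    return state

zone = CoolingState()
on_workload_start(zone, 80.0)   # high-power training job
on_workload_start(zone, 10.0)   # small job, ignored
print(zone.supply_setpoint_c, zone.notifications)
```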

4. Enable predictive monitoring and maintenance

One of AI’s most useful capabilities is anticipating faults before they result in service-impacting failures, helping teams prioritise corrective actions earlier. The quality of these predictions depends on high-quality historical data, robust analytics and appropriate machine learning models – but where the foundations are in place, the operational benefits can be meaningful.
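The predictive idea can be shown with a deliberately simple stand-in for the machine learning models mentioned above: fit a straight-line trend to recent readings and extrapolate to an alarm threshold. This Python sketch assumes hourly pump vibration readings in mm/s and a 4.5 mm/s alarm limit, both invented for illustration; real predictive maintenance would use richer models, more history and validation against actual failures.

```python
def hours_until_threshold(samples, threshold):
    """Least-squares straight-line fit to (hour, value) samples, extrapolated to threshold.

    Returns hours after the latest sample until the fitted line reaches threshold,
    or None if the trend is flat or falling (no crossing predicted).
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    sxx = sum((t - mean_t) ** 2 for t, _ in samples)
    if sxx == 0:
        raise ValueError("samples need distinct timestamps")
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_v - slope * mean_t
    crossing_t = (threshold - intercept) / slope
    latest_t = max(t for t, _ in samples)
    return max(0.0, crossing_t - latest_t)  # 0.0 if the line is already past the limit

# Hypothetical pump vibration readings (hour, mm/s) and an assumed 4.5 mm/s alarm limit.
vibration = [(0, 2.0), (24, 2.2), (48, 2.4), (72, 2.6)]
print(hours_until_threshold(vibration, 4.5))
```

With the sample data the trend reaches 4.5 mm/s roughly 228 hours after the last reading: the kind of lead time that lets a team schedule a corrective action rather than react to a failure.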

As AI-driven workloads increase over the next decade, IT/OT convergence may shift from a competitive differentiator to a prerequisite for resilience and continuity. Operators best positioned to succeed are likely to be those that close the gaps between digital workloads and physical infrastructure. Without effective system integration, data remains fragmented – limiting visibility, reducing the accuracy of analytics, and constraining the operational value that AI tools can deliver.

Importantly, this doesn’t need to be tackled all at once. Many organisations make progress incrementally, focusing first on visibility and data quality, then on automation and optimisation as confidence and capability grow.

Somewhere right now, a data centre operator is staring at a proposal for an AI deployment that would transform their business. On a spreadsheet, the AI deployment is a masterstroke; on the 15-year-old facility floor, it’s a thermal impossibility. The servers are available. The customer is ready. The power is there. But the cooling infrastructure stands between ambition and execution - a gap measured in years of construction and tens of millions in capital.

This scenario is playing out across the industry with increasing urgency. Approximately 80% of existing data centres worldwide rely on air-cooled infrastructure, designed for an era when 10-15kW per rack was considered high density. Today’s AI workloads are rewriting those assumptions entirely, with next-generation systems demanding 50kW, 70kW, even upwards of 100kW per rack. The thermal challenge isn’t incremental - it’s exponential.

The conventional answer has been straightforward: build new facilities purpose-designed for liquid cooling or undertake comprehensive retrofits of existing ones. But comprehensive retrofits can require 18-24 months and significant capital expenditure. For operators watching competitors capture AI workloads today, waiting until 2028 isn’t a strategy, it’s a concession.

The question facing most data centre operators isn’t whether liquid cooling is necessary. It’s whether there’s a practical path to get there without abandoning the infrastructure investments they’ve already made.

Air cooling has served data centres reliably for decades, and for good reason. It’s proven, well-understood, and cost-effective at moderate densities. Traditional computer room air handlers work efficiently for racks drawing 10-15kW, removing heat through carefully managed airflows between hot and cold aisles.

But physics imposes hard limits. Air has relatively low thermal conductivity and heat capacity compared to liquid. As rack densities climb, moving enough air to extract heat becomes increasingly impractical. Fan energy consumption skyrockets. Airflow velocities create noise and turbulence. Hot spots develop faster than air circulation can address them.
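The physics can be made concrete with the heat balance Q = ρ × V̇ × c_p × ΔT, rearranged for the volumetric flow V̇ needed to carry a given heat load. This Python sketch uses textbook approximations for air and water properties and assumes a 12 K air temperature rise across the rack and a 10 K rise in a liquid loop; both ΔT values are illustrative rather than design figures.

```python
AIR_DENSITY = 1.2        # kg/m^3, approx. at ~20 degC and sea level
AIR_CP = 1005.0          # J/(kg*K)
WATER_DENSITY = 997.0    # kg/m^3
WATER_CP = 4186.0        # J/(kg*K), approximate

def volumetric_flow_m3s(heat_w, density, cp, delta_t_k):
    """Flow needed to carry heat_w at a temperature rise of delta_t_k (Q = rho * V * cp * dT)."""
    return heat_w / (density * cp * delta_t_k)

for rack_kw in (10, 50, 100):
    air = volumetric_flow_m3s(rack_kw * 1000, AIR_DENSITY, AIR_CP, 12.0)
    water = volumetric_flow_m3s(rack_kw * 1000, WATER_DENSITY, WATER_CP, 10.0)
    print(f"{rack_kw:>3} kW: air {air:.2f} m3/s, water {water * 1000:.2f} L/s")
```

At 100 kW the sketch needs roughly 6.9 m³/s of air against about 2.4 L/s of water. Required airflow grows in proportion to rack power, and because fan power rises roughly with the cube of airflow for a given fan and system (the fan affinity laws), fan energy climbs far faster than the heat load.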

Next-generation AI systems have pushed past the threshold where air cooling alone can cope. Configurations like NVIDIA’s GB200 generate heat loads that simply cannot be dissipated through convection quickly enough to maintain safe operating temperatures. The heat must be captured closer to its source - at the chip itself - and transported away via a medium far more efficient than air.

The answer is liquid. The question is how to deploy it.

For many operators, the phrase “liquid cooling” conjures images of extensive facility water systems - chillers, cooling towers, complex piping networks, and months of construction. This perception creates hesitation, particularly for facilities that were never designed to accommodate such infrastructure.

But a different approach exists: liquid-to-air heat rejection. These self-contained systems enable direct-to-chip liquid cooling without any dependency on facility water infrastructure. The concept is elegantly simple. Cold plates mounted directly on processors allow coolant to capture heat at the source with far greater efficiency than air. The heated coolant then circulates in a closed loop to an adjacent heat rejection unit, where fans expel the heat into the surrounding room - the same room already served by existing air handling systems.

The facility’s existing CRAH units then manage this rejected heat as they always have.


No new chilled water lines. No cooling towers. No extensive mechanical room buildouts. The liquid cooling loop remains entirely self-contained, operating independently while leveraging the air-cooled infrastructure already in place.

It’s a hybrid approach that bridges two worlds.
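One practical consequence of the hybrid approach: the heat still has to leave the room, so the spare capacity of the existing CRAH units sets an upper bound on how much liquid-to-air cooling a hall can host. The Python sketch below estimates that bound, assuming an N+1 design (the largest unit held in reserve), six hypothetical 150 kW CRAH units, a 450 kW existing room load and 70 kW racks. It ignores airflow distribution and the heat rejection units' own fan power, both of which a real assessment would need to include.

```python
def crah_headroom_kw(crah_capacities_kw, room_load_kw, spare_units=1):
    """Cooling capacity left after reserving the largest units for redundancy (N+spare_units)."""
    ranked = sorted(crah_capacities_kw, reverse=True)
    usable_kw = sum(ranked[spare_units:])  # the biggest units are held in reserve
    return usable_kw - room_load_kw

def liquid_to_air_racks(headroom_kw, rack_kw):
    """Racks whose heat, rejected into the room, the spare CRAH capacity can absorb."""
    return max(0, int(headroom_kw // rack_kw))

headroom = crah_headroom_kw([150] * 6, room_load_kw=450)
print(headroom, liquid_to_air_racks(headroom, rack_kw=70))
```

With these figures, 750 kW of usable capacity minus 450 kW of existing load leaves 300 kW, enough for four 70 kW racks.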

Let’s consider what a full liquid cooling retrofit actually demands. A 40MW facility - modest by hyperscale standards - carries construction costs between $280 million and $480 million according to recent market transactions. And those baseline figures are climbing. Supply chain constraints have stretched lead times for transformers and generators from months to years, with expediting fees adding 5-10% to project costs. Specialized labor (e.g. certified electricians, HVAC technicians, systems integrators) commands premium rates and is in limited supply. In many markets, simply securing adequate utility power adds tens of millions before construction even begins.
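The figures above reduce to a simple per-megawatt comparison. This Python sketch only restates the article's numbers; applying the 5-10% expediting fee to the low and high ends of the cost range respectively is a simplifying assumption.

```python
FACILITY_MW = 40
COST_RANGE_USD = (280e6, 480e6)   # construction cost range quoted above
EXPEDITE_RANGE = (0.05, 0.10)     # expediting fees as a share of project cost

per_mw = [cost / FACILITY_MW for cost in COST_RANGE_USD]
# Simplification: low fee share on the low cost, high fee share on the high cost.
fees = [cost * share for cost, share in zip(COST_RANGE_USD, EXPEDITE_RANGE)]

print("cost per MW: ${:.0f}M-${:.0f}M".format(*(x / 1e6 for x in per_mw)))
print("expediting fees: ${:.0f}M-${:.0f}M".format(*(x / 1e6 for x in fees)))
```

That works out to roughly $7M-$12M per MW, with expediting fees alone adding an estimated $14M-$48M across the range.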

Then there’s time. Comprehensive retrofits span 18-24 months under ideal conditions. For operators watching AI workloads flow to competitors today, that timeline represents lost revenue measured in hundreds of millions.

Liquid-to-air systems rewrite this equation entirely. Self-contained units deploy within existing data hall footprints, connecting directly to racks without facility-wide infrastructure changes. Deployment compresses from years to weeks. Capital shifts from massive upfront commitment to incremental investment that scales with actual demand.

For colocation providers especially, this flexibility transforms the risk profile: deploy cooling for specific customers today, expand as the market develops.

The competitive landscape for AI infrastructure is unforgiving. Workloads that cannot be deployed today will find homes elsewhere with operators who have found a way to get it done quickly and effectively. The data centres capturing this demand aren’t necessarily those with the newest facilities or deepest pockets. They’re the ones that solved the cooling problem fastest.

The business case for hybrid cooling isn’t primarily about efficiency - it’s about speed. The ability to deploy AI-ready infrastructure in weeks rather than years creates competitive advantages that compound over time. Early movers capture customer relationships, operational expertise, and market positioning that late adopters struggle to match.

Hybrid liquid-to-air cooling isn’t a compromise or a stopgap. It’s a strategic choice that recognizes a fundamental truth: the perfect infrastructure delivered in three years loses to capable infrastructure delivered in three months.

That data centre operator staring at the impossible AI proposal? With hybrid cooling, they’re not waiting for a massive retrofit or watching the opportunity pass. They’re deploying next quarter - and capturing the future while others are still planning for it.

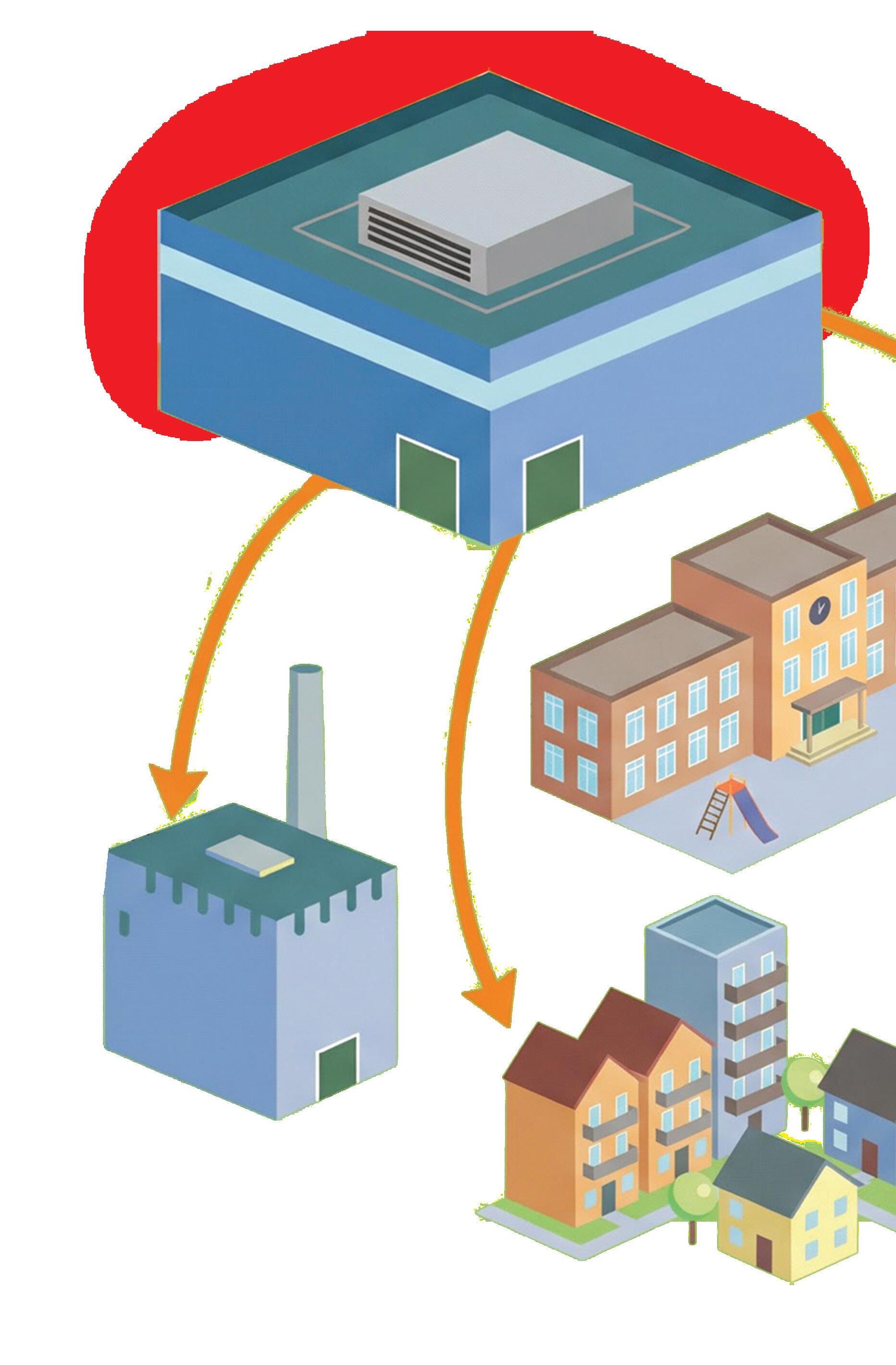

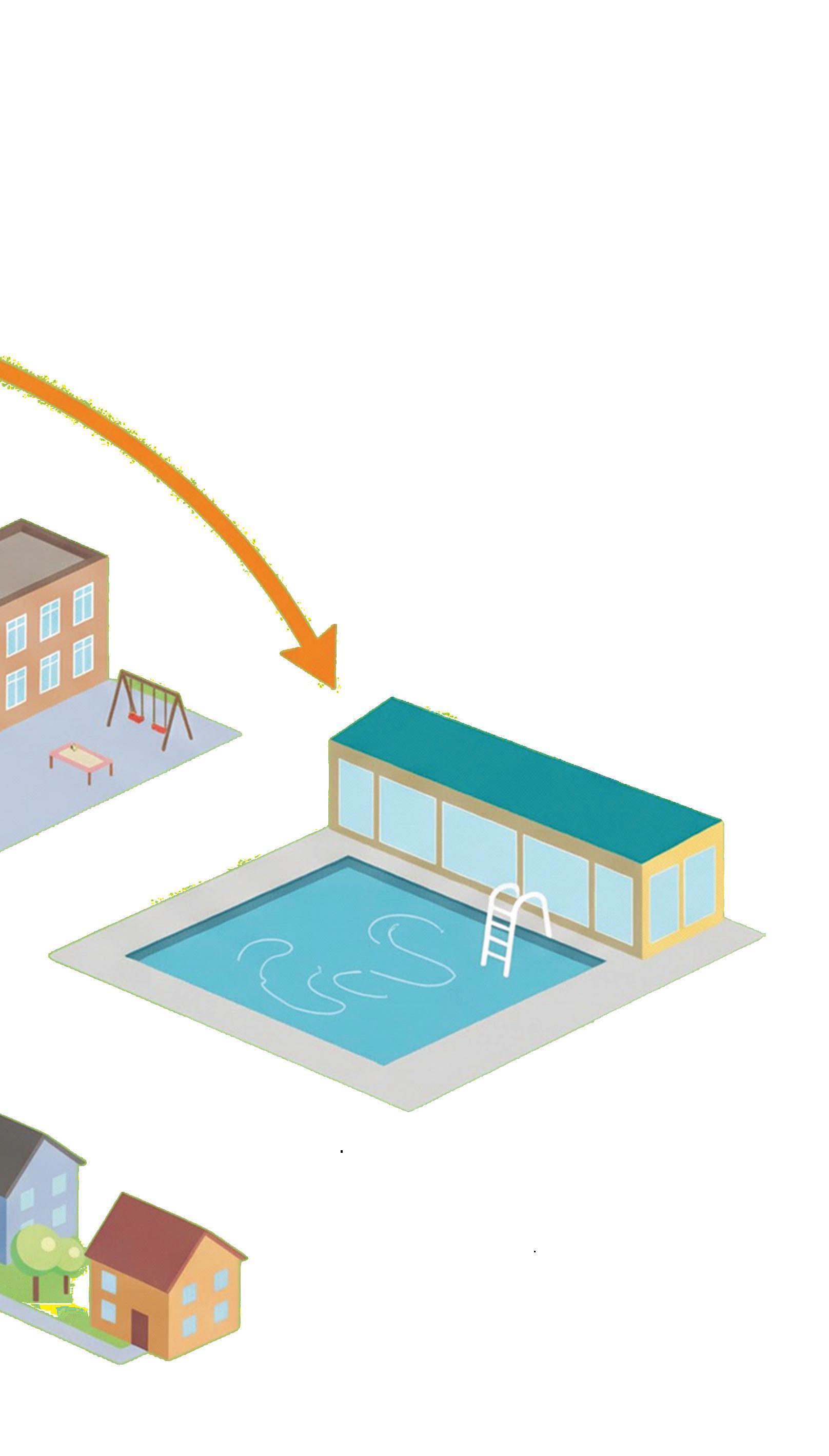

Data centre waste heat is already abundant and predictable. That’s why Simon Kerr, Head of Heat Networks at EnergiRaven, believes the UK needs joined-up regulation, heat zoning, and early planning engagement to capture it at scale.

As artificial intelligence, hyperscale computing and cloud services fuel an unprecedented expansion in the number of data centres, there is an accompanying increase in the amount of waste heat produced by digital infrastructure. Harnessing this heat could help the UK strengthen energy security and support decarbonisation, provided the right frameworks and infrastructure are put in place.

Each facility produces a continuous, predictable flow of heat which, with vision and planning, could contribute to urban energy systems, reduce reliance on gas for space heating, and support grid stability at a time of rising demand.

Today, much of this heat is treated as a by-product, expelled into the environment and lost. But with the right infrastructure, a larger share of it could be used to supply homes and public buildings, and to support local heat networks.

With careful policy and national planning, each unit of low-carbon electricity could deliver more value – for example, once in a data centre for computation and again through useful heat in nearby buildings.
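A rough sense of scale for this "use it twice" argument can come from an annual energy balance. This Python sketch assumes that nearly all electricity drawn by IT equipment ends up as heat, that a fraction of it is captured, and that a heat pump raises it to network temperature, delivering COP/(COP−1) units of heat per unit captured because the heat pump's own electricity input also becomes heat. The 10 MW IT load, 60% capture fraction, COP of 3.5 and 12 MWh annual heat demand per home are all assumptions, and network losses are ignored.

```python
HOURS_PER_YEAR = 8760

def annual_heat_reuse(it_load_mw, capture_fraction, heat_pump_cop, home_demand_mwh):
    """Estimate heat delivered to a network per year and the homes it could supply."""
    # Nearly all IT electricity becomes heat; only part of it is captured.
    captured_mwh = it_load_mw * HOURS_PER_YEAR * capture_fraction
    # Heat pump energy balance: heat_out = heat_in + work and COP = heat_out / work,
    # so heat_out = heat_in * COP / (COP - 1).
    delivered_mwh = captured_mwh * heat_pump_cop / (heat_pump_cop - 1)
    homes = int(delivered_mwh // home_demand_mwh)
    return captured_mwh, delivered_mwh, homes

captured, delivered, homes = annual_heat_reuse(10, 0.6, 3.5, 12)
print(f"captured {captured:,.0f} MWh, delivered {delivered:,.0f} MWh, ~{homes:,} homes")
```

Under these assumptions roughly 52,560 MWh is captured and about 73,600 MWh delivered each year, enough for around 6,100 homes; the point is the order of magnitude, not the specific figure.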

Learning from Scandinavia and charting our own path

Looking to our Northern European neighbours, Denmark and Sweden are demonstrating that heat reuse can work. Data centre heat flows into city-wide heat networks, reducing heating costs, gas consumption and exposure to volatile fossil fuel imports.

These outcomes are helped by alignment between policy, finance, governance and planning, creating an environment where connection is expected, investment is bankable and energy systems are coordinated.

However, it would be naive to assume the UK can simply copy Scandinavia. The UK is different: local authority powers are fragmented, heat zoning is inconsistent, and there is no obligation to consider heat recovery. The UK must develop its own blueprint, one that reflects our geography, regulatory landscape and existing infrastructure, and decide which approach will deliver the most practical results for UK citizens.

With ambition, joined-up planning and predictable funding, the challenge of cooling data centres could also become part of a broader energy opportunity.

How can we make this happen?

To get to a networked UK – where waste heat from data centre clusters in Slough, West London, Manchester and Edinburgh is fed into local heat networks to support homes and businesses – planning reform should treat data centres as strategic national energy assets. Every new facility should assess heat recovery potential, and early engagement with heat network developers should become routine.

Integrating a new energy source at national scale is a daunting task, but it is something we have done many times before.

There are a number of measures we can take to realise this vision. We can task a central body with providing guidance to local authorities to help them build expertise; mandate that operators engage at the earliest stages of planning to enable cost-effective integration; and ensure “lessons learned” are collected and shared widely among all stakeholders.

We don’t need to look far to find examples of communities making heat recovery and usage work for them. Shetland Heat Energy and Power (SHEAP) is one example: by recovering heat from a local waste-to-energy plant, residents have benefited from reduced exposure to energy price shocks in recent years. The UK can overcome these challenges, but only with clarity and ambition. Early alignment of policy, planning and investment can turn heat recovery from a theoretical possibility into a deliverable, repeatable model.

Regulation should bring waste heat into mainstream energy policy, requiring large producers to report on and evaluate options to act on their waste heat potential. This should be supported by clear guidance from Ofgem, DESNZ and local authorities. Predictable frameworks for connection, supported by heat zoning, would reduce uncertainty for operators and help communities plan around available supply. Meanwhile, establishing long-term capital frameworks and heat-purchase agreements would provide the commercial certainty required to accelerate adoption. This would encourage operators to treat heat as a managed output, valuable where there is a viable offtake route and a clear investment case.

To tie this all together, our mindset as a nation must shift towards seeing heat itself as a utility. This is already underway, with Ofgem set to start regulating heat networks from January 2026.


For data centre operators, heat reuse can create an additional revenue line in the right locations and, importantly, support decarbonisation objectives. Recovered heat can reduce cooling loads, improve ESG reporting, and strengthen investor confidence where delivery is measurable and contractual.

Early collaboration with regional heat networks can also improve project economics by aligning technical design, connection requirements and commercial terms from the outset. The operators best placed to benefit will be those that plan for heat export early, particularly in areas with dense heat demand and credible network development.

Operators who engage now may be better prepared as regulation evolves. Heat supply could become a stronger factor in planning decisions over time; planning early reduces risk and helps avoid costly retrofits.

The stakes are high. Reusing data centre heat can reduce household heating costs, enable urban heat zoning strategies, and cut national gas demand – while supporting a rapidly expanding digital economy. As AI and cloud computing drive energy demand, aligning digital infrastructure with energy planning is a pragmatic opportunity that can be captured where the technical and commercial conditions are right.

The UK has the chance to turn a by-product into a useful local resource. By combining long-term vision with practical action, we can support a future where digital growth and decarbonisation can progress in parallel. The heat is already there – the question is whether we have the foresight to use it.


The ILC 2250 BI from Phoenix Contact is a robust, modular, and secure building automation controller built on the Niagara framework, purpose-designed for mission-critical data centre environments. With a compact starting footprint of just 80 mm, it supports expansion of up to 63 I/O modules per controller, enabling scalable integration of complex cooling infrastructures. Its industrial-grade design includes built-in redundancy and native support for Rapid Spanning Tree Protocol (RSTP), ensuring high availability and resilient network communication. These features make the ILC 2250 BI exceptionally well-suited for reliable, efficient, and future-proof control of data centre cooling systems.

A centralised cooling control system can serve as the single control point for an entire cooling subsystem. For example, consider a typical data centre cooling setup: it may include chillers or CRAH (Computer Room Air Handler) units, pumps, cooling towers, temperature/humidity sensors in server racks, and backup cooling units.

Critical cooling processes like chilled water supply temperature control, fan speed modulation, and compressor staging are orchestrated by a control system. Because it also interfaces with power systems, it is important that the system responds to power events – e.g. in case of a utility outage, it could adjust cooling setpoints to prevent thermal runaway while the data centre is on backup power. This holistic control improves system stability and ensures that all components work in harmony.

It’s true that data centre operators are under pressure to reduce energy usage. Even as new GPUs move to direct-to-chip liquid-cooled designs, cooling often remains the second-largest energy consumer after the IT load, so even modest efficiency gains have a significant impact. For example, if a rack temperature starts climbing beyond its threshold, controls can automatically ramp up CRAH fan speeds or start an extra cooling unit, and simultaneously send alerts.
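The escalation described above can be sketched as a single supervisory step. This Python example is a hypothetical illustration, not the ILC 2250's or Niagara's actual control logic: the 27 °C threshold, the 10-point fan step and the five-unit plant are assumptions, and real controllers add PID loops, hysteresis and minimum run times that are omitted here.

```python
from dataclasses import dataclass, field

@dataclass
class CoolingPlant:
    """Hypothetical plant state: one fan-speed command shared by the running CRAH units."""
    fan_speed_pct: float = 60.0
    units_running: int = 4
    units_installed: int = 5
    alerts: list = field(default_factory=list)

def control_step(plant: CoolingPlant, rack_temp_c: float,
                 threshold_c: float = 27.0, fan_step_pct: float = 10.0) -> CoolingPlant:
    """Escalate cooling when a rack runs hot: fans first, then an extra unit, alerting each time."""
    if rack_temp_c <= threshold_c:
        return plant  # within limits: no action
    plant.alerts.append(f"rack inlet {rack_temp_c:.1f} C above {threshold_c:.1f} C")
    if plant.fan_speed_pct < 100.0:
        # First response: ramp CRAH fans, capped at 100%.
        plant.fan_speed_pct = min(100.0, plant.fan_speed_pct + fan_step_pct)
    elif plant.units_running < plant.units_installed:
        # Fans already at maximum: bring a standby unit online.
        plant.units_running += 1
    else:
        plant.alerts.append("no cooling reserve left: escalate to operators")
    return plant

plant = CoolingPlant(fan_speed_pct=95.0)
for temp in (26.5, 27.8, 28.4):
    control_step(plant, temp)
print(plant.fan_speed_pct, plant.units_running, len(plant.alerts))
```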

Hundreds of environmental sensors provide a detailed picture of conditions across the data floor. This high-resolution monitoring, combined with Niagara’s data logging, means Phoenix Contact’s ILC 2250 BI can support analytics for hotspots or airflow issues. Data centre facility managers can use the Niagara UI on the ILC to visualise temperature maps and identify inefficiencies - for instance, recognising that a particular aisle is consistently overcooled or undercooled. In short, the ILC acts as the environmental nerve centre, constantly balancing cooling output to maintain optimal conditions with minimal waste.
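A minimal version of the over/under-cooling analysis might look like the Python sketch below. It assumes each cold aisle reports a list of inlet temperatures and uses 18-27 °C as the target band, which matches the ASHRAE recommended inlet range but should be treated as a configurable assumption; aisle names and readings are invented.

```python
from statistics import mean

def classify_aisles(readings_by_aisle, low_c=18.0, high_c=27.0):
    """Label each cold aisle by comparing its inlet temperatures with a target band."""
    labels = {}
    for aisle, temps in readings_by_aisle.items():
        avg = mean(temps)
        if avg < low_c:
            labels[aisle] = "overcooled"   # consistently too cold: wasted cooling energy
        elif avg > high_c:
            labels[aisle] = "undercooled"  # consistently too warm: risk to equipment
        elif max(temps) > high_c:
            labels[aisle] = "hotspot"      # average is fine, but one location runs hot
        else:
            labels[aisle] = "ok"
    return labels

labels = classify_aisles({
    "CA-1": [16.5, 17.0, 16.8],
    "CA-2": [22.0, 23.5, 22.8],
    "CA-3": [27.5, 28.1, 27.9],
    "CA-4": [21.0, 22.0, 28.5],
})
print(labels)
```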

In a Data Centre, maintaining environmental conditions is essential for equipment reliability. The ILC 2250’s design also helps in this respect, reducing the risk of cooling-related failures. Many data centres have N+1 or N+N redundancy (extra CRAH units or chillers). It is imperative to monitor the health of each unit (via status signals) and automatically activate reserve units if a primary fails or goes into alarm.
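The reserve-activation logic can be sketched as choosing which units should run from their status signals. This Python example assumes an N+1 hall that needs four of five hypothetical CRAH units, with each unit's health reduced to a single boolean derived from its status and alarm signals; real sequencing would also handle restart delays and rotation of duty units.

```python
def select_running_units(units, required):
    """Choose which cooling units run, promoting reserves past failed or alarmed units.

    units: list of (name, healthy) pairs in duty-priority order.
    Returns (running_names, shortfall), where shortfall > 0 means capacity is lost.
    """
    healthy = [name for name, ok in units if ok]
    running = healthy[:required]
    return running, required - len(running)

hall = [("CRAH-1", True), ("CRAH-2", False), ("CRAH-3", True),
        ("CRAH-4", True), ("CRAH-5", True)]
running, shortfall = select_running_units(hall, required=4)
print(running, shortfall)
```

Here the alarmed CRAH-2 is skipped and the reserve CRAH-5 is promoted; a second failure would leave a shortfall of one unit, which is the point at which the emergency procedures described below take over.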

For Data Centre operators, a failed cooling unit means initiating emergency response procedures: they may need to reduce IT loads, deploy backup cooling, or even migrate workloads to avoid catastrophic overheating. Despite these efforts, the downtime resulting from a cooling failure can be extremely costly. Industry cases have seen single cooling failures lead to seven-figure losses due to equipment damage and downtime penalties. Every minute offline also chips away at uptime commitments – potentially breaching SLAs if availability falls below agreed levels. This can incur financial penalties and require customer compensation.

VISIT PHOENIX CONTACT AT DATA CENTRE WORLD, LONDON ON STAND F180 where you will be able to discover more about our products and solutions and how you can work together with us to operate your data centres efficiently and responsibly.

In the end, the importance of the ILC 2250 in Data Centres is reflected in its impact: it provides the intelligence to keep servers cool with minimum energy, maximum uptime, and full transparency.

With data centres growing ever larger and more complex, tools like the ILC 2250 BI will be key to ensuring that critical cooling systems are up to the task. Data centre cooling companies have been adopting ILC controllers as part of their offerings and have seen the benefit of combining PLC-like reliability with the openness of Tridium’s Niagara framework.

Is your ‘diverse’ network actually one fibre cut away from failure?

Tristan Wood, Managing Director at Livewire Digital, argues that resilience is being undermined by hidden shared dependencies – and that true network diversity must be designed, verified, and exercised, not assumed.

As data centres become critical infrastructure for digital economies, the most significant connectivity risks they face are no longer driven by capacity constraints. They stem from hidden dependencies embedded in network design. Resilience is not defined by how much bandwidth a facility can deliver, but by how effectively connectivity failures are contained when they occur. Genuine network diversity has therefore become a key feature of resilient data centre design, rather than a secondary enhancement.

Connectivity strategies are still too often assessed on speed, latency, and headline cost. Bandwidth scale remains central to commercial positioning, and resilience is frequently assumed to improve alongside capacity. In practice, most connectivity failures in data centre environments are not caused by insufficient bandwidth. They arise from shared infrastructure that turns supposedly independent paths into correlated points of failure. When outages occur, the surprise is rarely that something broke, but that multiple routes described as diverse failed at the same time.

For operators and developers of critical digital infrastructure, the objective is no longer simply to deliver capacity. It is to limit the impact of failures that are, in complex networks, unavoidable.

Many facilities meet formal redundancy requirements without achieving genuine independence. Multiple circuits, multiple providers, and favourable service level agreements can create confidence while concealing common dependencies. Two carriers may enter a site through different meet-me points yet share the same external duct for much of their route. Providers that appear diverse on paper may still converge at the same metropolitan point of presence or rely on the same wholesale backhaul. Even routes designed to be physically separate can terminate at power-dependent aggregation facilities that receive limited design and operational scrutiny.
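A route audit can start as simply as listing, for each supposedly diverse path, every asset it depends on and checking for overlaps. In this Python sketch the carriers, ducts, points of presence and backhaul names are hypothetical; in practice the hard part is obtaining accurate route records, not the set arithmetic.

```python
from itertools import combinations

# Hypothetical route inventory: each "diverse" path listed as the physical and
# logical assets it depends on (entry points, ducts, PoPs, backhaul).
routes = {
    "carrier_a": {"entry_north", "duct_high_st", "pop_metro_1", "backhaul_x"},
    "carrier_b": {"entry_south", "duct_high_st", "pop_metro_2", "backhaul_x"},
    "carrier_c": {"entry_east", "duct_river_rd", "pop_metro_3", "backhaul_y"},
}

def shared_dependencies(routes):
    """Return every pair of routes that shares at least one asset, with the shared assets."""
    findings = {}
    for (name_a, assets_a), (name_b, assets_b) in combinations(sorted(routes.items()), 2):
        common = assets_a & assets_b
        if common:
            findings[(name_a, name_b)] = sorted(common)
    return findings

for pair, assets in shared_dependencies(routes).items():
    print(pair, "share", assets)
```

Carriers A and B enter the site from different directions, yet the audit shows they share both a duct and a backhaul provider.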

When a regional fibre cut, power incident, or maintenance error occurs, these dependencies are exposed immediately. Outages then cascade not because redundancy was absent, but because it was assumed rather than verified. For data centre operators, this distinction is critical. Redundancy addresses individual component failure. Resilience addresses systemic failure across interconnected infrastructure.

Physical diversity is not an abstract principle. It is a discipline rooted in route awareness. Operators and developers need a clear understanding of how fibre actually reaches a site, where routes intersect, and which assets are genuinely independent. This extends beyond high-level topology diagrams to include ducting, road crossings, building entry points, and campus-level distribution.

The last mile remains one of the most common sources of connectivity failure, where construction activity, accidental damage, and environmental factors converge. Multiple entry routes from different directions, built on genuinely separate infrastructure, significantly reduce this risk. These decisions are far easier to implement during site selection, when meaningful diversity can be designed in, than during later retrofit projects where vulnerabilities are costly and complex to address.

Carrier diversity only delivers value when it provides real operational separation. Selecting multiple providers offers limited protection if they rely on the same upstream plant or terminate in the same facilities. Separation of points of presence should be treated as a design requirement rather than a commercial preference. Within the campus, connectivity should be treated as core infrastructure, not as a bolt-on service. Route separation, equipment placement, and meet-me room design all influence whether diversity survives beyond the perimeter fence or collapses into a single effective failure domain.


Operational models ultimately determine whether diversity translates into resilience. Designs that rely on idle backup links are straightforward to specify but carry risk. Secondary paths are often under-tested, poorly monitored, or quietly degraded over time. When they are finally activated, it is usually during an incident when tolerance for failure is at its lowest.

By contrast, distributing traffic across multiple active paths can surface weaknesses earlier. Continuous use allows degradation to be identified before it becomes an outage and supports more controlled responses when conditions deteriorate. This approach requires stronger monitoring, clearer accountability, and greater operational discipline, but it reduces recovery times and avoids surprises during incidents.
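As an illustration of how continuous use surfaces degradation, the Python sketch below splits traffic across active paths in inverse proportion to measured latency and removes any path that breaches loss or latency limits. The path names, measurements, 1% loss limit and 20 ms latency limit are assumptions, and inverse-latency weighting is just one simple policy among many.

```python
def path_weights(paths, max_loss_pct=1.0, max_latency_ms=20.0):
    """Weight active paths by inverse latency; drop any path breaching loss or latency limits.

    paths: {name: (loss_pct, latency_ms)} from continuous measurement.
    Returns (weights summing to 1 across healthy paths, sorted list of degraded paths).
    """
    scores, degraded = {}, []
    for name, (loss_pct, latency_ms) in paths.items():
        if loss_pct > max_loss_pct or latency_ms > max_latency_ms:
            degraded.append(name)
            scores[name] = 0.0
        else:
            scores[name] = 1.0 / max(latency_ms, 0.1)  # guard against zero latency
    total = sum(scores.values())
    if total == 0:
        # Every path is degraded: assign no traffic automatically and escalate instead.
        return {name: 0.0 for name in paths}, sorted(degraded)
    return {name: s / total for name, s in scores.items()}, sorted(degraded)

weights, degraded = path_weights({
    "path_a": (0.0, 5.0),
    "path_b": (0.1, 10.0),
    "path_c": (3.0, 8.0),   # losing packets: flagged long before it fails outright
})
print(weights, degraded)
```

Path A receives two-thirds of the traffic and path B one-third, while the lossy path C is flagged for investigation during normal operation rather than discovered during an incident.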

No single operational model is universally correct. What matters is clarity about trade-offs and rigour in execution. Regular failover testing is essential, and it must extend beyond idealised scenarios. Facilities should test partial failures that reflect real-world conditions, including upstream provider incidents and application behaviour, not just device-level events. The aim is to understand how services behave under stress and whether the intended diversity preserves continuity for customers.
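Partial-failure testing can be rehearsed on paper before it is exercised live. The Python sketch below takes a hypothetical route inventory and enumerates every combination of up to two failed assets, reporting the minimal combinations that leave no working route. All three carriers look diverse, yet because two of them share a duct and a backhaul, eight two-asset failures take the site offline.

```python
from itertools import combinations

# Hypothetical inventory: three "diverse" carriers and the assets each depends on.
routes = {
    "carrier_a": {"entry_north", "duct_high_st", "pop_metro_1", "backhaul_x"},
    "carrier_b": {"entry_south", "duct_high_st", "pop_metro_2", "backhaul_x"},
    "carrier_c": {"entry_east", "duct_river_rd", "pop_metro_3", "backhaul_y"},
}

def fatal_failures(routes, max_simultaneous=2):
    """Minimal sets of up to max_simultaneous failed assets that leave no working route."""
    assets = sorted(set().union(*routes.values()))
    fatal = []
    for k in range(1, max_simultaneous + 1):
        for failed in combinations(assets, k):
            failed_set = set(failed)
            # Skip combinations that contain a smaller fatal set already found.
            if any(set(f) <= failed_set for f in fatal):
                continue
            # A route is down if any asset it depends on has failed.
            if all(deps & failed_set for deps in routes.values()):
                fatal.append(failed)
    return fatal

for combo in fatal_failures(routes):
    print("outage if these fail together:", combo)
```

With three fully independent routes the same check would report no fatal pairs at all, which is the property a diverse design is meant to deliver.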

The industry does not lack evidence of how networks fail. Regional fibre cuts frequently affect multiple carriers at once due to shared civil routes. Incidents at a single point of presence can isolate entire metropolitan areas. Maintenance and configuration errors continue to account for a significant proportion of outages, even in well-designed environments.

What distinguishes resilient data centres is not the absence of these events, but their containment. When connectivity is distributed across genuinely independent routes, facilities, and operators, failures remain localised. Recovery becomes a matter of rerouting traffic rather than waiting for physical repairs. Diversity also creates operational choice. Teams with multiple independent paths retain options under pressure. Teams without them are constrained, regardless of preparation or expertise.

One of the biggest barriers to meaningful network diversity is cultural. Projects are often driven by timelines, cost controls, and standardised templates that treat diversity as a secondary consideration rather than a core design constraint. This approach underestimates the true cost of failure. Outages do not only disrupt customer operations. They damage availability metrics, erode confidence, increase contractual exposure, and invite regulatory scrutiny.

For boards, investors, and developers, treating network diversity as a baseline requirement is often less costly than absorbing the impact of repeated major incidents. Designing for failure is not pessimistic. It is pragmatic in a landscape where complex systems fail in ways that design documents rarely anticipate.

Traditional resilience metrics are reaching their limits. Availability targets and recovery time objectives remain useful, but they do not capture the difference between a contained fault and a systemic outage. A more meaningful measure is service continuity under stress. This includes how much functionality is retained when components fail, how quickly traffic can be rerouted, and how many genuinely independent failure domains exist between a data centre and the wider network.
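One way to make "independent failure domains" precise is the largest set of routes that share no asset with one another. The Python sketch below finds it by brute force, which is practical for the handful of circuits a site typically has; the circuit and asset names are hypothetical.

```python
from itertools import combinations

def independent_domains(routes):
    """Size of the largest group of routes in which no two routes share an asset.

    Brute force over subsets: exponential, but fine for a handful of circuits.
    """
    names = sorted(routes)
    for size in range(len(names), 0, -1):
        for group in combinations(names, size):
            if all(not (routes[a] & routes[b]) for a, b in combinations(group, 2)):
                return size
    return 0

circuits = {
    "circuit_1": {"entry_north", "duct_high_st", "pop_1"},
    "circuit_2": {"entry_south", "duct_high_st", "pop_2"},
    "circuit_3": {"entry_east", "duct_river_rd", "pop_3"},
    "circuit_4": {"entry_west", "duct_park_ln", "pop_3"},
}
print(independent_domains(circuits))
```

Here four circuits provide only two independent failure domains, because two circuits share a duct and the other two share a point of presence.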

As reliance on digital infrastructure accelerates, these questions are becoming central to how facilities are designed, assessed, and trusted.

The resilience of data centres is no longer defined by the absence of failure, but by the ability to absorb disruption while continuing to deliver dependable services. Achieving this requires deliberate, verifiable network diversity across routes, carriers, facilities, and operations, rather than a narrow focus on raw bandwidth. Hybrid network diversity, designed from the outset and exercised through day-to-day operations, can be an effective yet underused approach to meeting continuity expectations.

Tony Fischels, Vice President of PowerOne, a division of Airsys, sits down with DCR to detail what AI-driven power density is doing to data centre cooling – and why the industry may need to rethink how it measures efficiency.

Can you tell me more about the PowerOne division within AirSys – and how your solutions are built to handle the ever-increasing power densities in AI data centre environments?

Yeah, of course. So PowerOne is a newer division within Airsys. It was really created to tackle some of the challenges we’re seeing today in AI data centre deployments. Some of those challenges are around infrastructure densification, stranded power, quick deployment etc.

So PowerOne is made up of two single-phase liquid cooling solutions. One is direct-to-chip, which is more or less the standard product you’d see at most data centre deployments today. And we have a very revolutionary new technology called LiquidRack.

LiquidRack is a spray cooling technology where the coolant sprays directly on the chip rather than going through a cold plate. We’ve really created a completely new category in the industry which we’re calling server-level cooling or server level liquid cooling unit.

What are some of the advantages of LiquidRack, and how do you see this solution fitting into current or future data centres?

For LiquidRack, it has advantages at both the server and chip level, and in terms of infrastructure too. So at the chip level, with the spray cooling technology, we have the ability to reject more heat at the chip compared to, let’s say, a standard direct-to-chip solution, where we’re going through a cold plate.

At the infrastructure level, it really simplifies the cooling system overall. We bring those heat exchangers and pumps that you typically see in a CDU to the rack level, and we also operate as a compressor-less system. So we really have a dry cooler connected to LiquidRack and nothing in between.

We see this as a really good fit for legacy-type data centres where there isn’t a lot of room to move from air cooling to liquid cooling, as well as for some of these larger data centres that are just looking to reduce the amount of cooling infrastructure they’re using.

For a long time, Power Usage Effectiveness has been the main benchmark used by data centres – but AirSys has been promoting ‘Power Compute Effectiveness’ as a new metric. Can you explain how this differs from PUE?

Our Power Compute Effectiveness does work hand in hand with PUE. It’s not that we’re trying to shove PUE aside. PUE has been used for a long time in the industry, and it’s essentially a metric for cooling efficiency for the most part.

PCE is more focused on compute. Really, what it is, is the ratio of power allocated for compute compared to that of the facility. Overall, it's a metric that falls more in line with how data centres are designed and built today, and it also shows how much revenue is being generated from the provision of power.

So, given the way we see data centres being built, we feel PCE is the closer fit.
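The interview describes PCE only in words, as the power allocated to compute relative to the power of the whole facility. The sketch below sets that beside the conventional PUE definition (total facility power divided by IT equipment power). It assumes PCE is computed exactly as that ratio; AirSys's formal definition, and where it draws the boundary of "compute", may differ. The class name and all power figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class FacilityPower:
    """Hypothetical snapshot of a site's power draw, all in kW."""
    total_facility_kw: float  # everything behind the utility meter
    it_equipment_kw: float    # servers, storage and network gear
    compute_kw: float         # assumption: the share of IT power allocated to compute

    def pue(self) -> float:
        # Conventional PUE: total facility power / IT equipment power (1.0 is the ideal)
        return self.total_facility_kw / self.it_equipment_kw

    def pce(self) -> float:
        # PCE as described in the interview: compute power as a share of facility power
        return self.compute_kw / self.total_facility_kw


if __name__ == "__main__":
    site = FacilityPower(total_facility_kw=10_000, it_equipment_kw=8_000, compute_kw=7_000)
    print(f"PUE {site.pue():.2f}, PCE {site.pce():.2f}")
```

In this made-up example PUE is 1.25 and PCE is 0.70. PUE says how much overhead sits on top of the IT load, while PCE says what fraction of the facility's power actually reaches compute, which is the quantity a revenue-focused operator is selling.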

With 2026 coming up, we've been getting a lot of predictions. Naturally, AI isn't going anywhere, so I'm wondering if you can share what you think are some of the challenges customers should consider when preparing for the future of AI builds? And how exactly can AirSys support them?


I'll talk in terms of cooling systems here. One of the bigger challenges is designing a data centre today knowing that your cooling system will have to adapt to the increasing workload densities of the future.

At AirSys, we believe we have the technologies to create what we call a thermal evolution path: essentially a way to swap one technology for another within the system you design today, going from direct-to-chip to server-level liquid cooling.

And then, potentially, moving on to two-phase solutions using the same system. We have a partner company called Aegis, our partner arm that has created two-phase solutions, so we can tap into that as well.

We’re fighting over GPUs and memory – but power manufacturing may decide who scales first

Matt Coffel, Chief Commercial and Innovation Officer at Mission Critical Group, argues that while data centres contend with tight silicon supply and rising costs, a quieter constraint is electrical manufacturing capacity and skilled trades – and that may ultimately determine how fast new AI capacity comes online.

Whether it’s GPU supply constraints, allocation battles or which hyperscaler will secure the next generation of chips, the spotlight rarely moves away from compute. But if you walk into any factory that builds electrical gear for data centre power systems, another constraint becomes clear – and it’s not silicon or rare earth metals.

Electrical manufacturing capacity and the availability of skilled trades are becoming significant factors in the rate at which data infrastructure can scale. The industry has spent decades optimising compute performance, but now it’ll need to optimise everything around it. Estimates vary, but power demand from data centres is expected to rise sharply by 2035 – for example, from about 33GW to 176GW – which means we’re entering a phase where the ability to build, test and deliver power systems efficiently will help determine who brings capacity online fastest.

AI-dense workloads are rewriting power requirements

AI-intensive data centres have different needs from traditional data centres, making electrical infrastructure critical. With rising power densities, loads are running harder and for longer durations, reinforcing redundancy expectations. Switchgear, relay panels, power distribution units and modular power and cooling systems must all support 24/7/365 continuity for workloads that need reliable and resilient power.

This shift goes beyond scale. AI adoption is expanding so rapidly that operators are asking for equipment and turnkey builds that typically take 18–24 months to be delivered in roughly half that time. That expectation is at odds with a manufacturing landscape that wasn't designed for this kind of acceleration.

From hyperscale to colocation to enterprise, telecoms and utilities, demand for electrical gear is rising across nearly every customer segment. At the same time, manufacturers are running into several simultaneous pressures, including:

• Lead times are lengthening for components ranging from switchgear to relays and more.

• Workforce shortages are constraining how quickly assembly and testing lines can scale.

• Engineering overload from custom builds slows down production – and those delays can cascade into downstream projects.

AI loads also raise the stakes because the GPUs used in data centres require stable power quality. The cost of failure isn’t just downtime – it’s efficiency loss, accuracy issues and delayed model completion.

Electrical systems aren’t assembled like consumer electronics: precision industrial equipment requires specialised technicians, careful quality control and assurance, and field or field-simulated testing. Speed and reliability matter, but you can’t rush safety.

To move more quickly yet safely, the industry has an opportunity to embrace modularisation, prefabricated power systems, digital twins and in-factory testing, as well as standardised assemblies. However, these can only go so far when upstream components, skilled labour and testing capacity continue to bottleneck the downstream supply chain.

To address electrical manufacturing bottlenecks, operators can rethink how they plan and build power systems. There are a few ways to reduce risk and lead times:

• Bring manufacturers into the design phase early. Many of today’s fastest projects are those where engineering teams collaborate from day one. This reduces waste and prevents late-stage surprises.

• Reduce over-customisation. Every deviation from a standard design adds engineering hours, manufacturing spec changes, QA effort and testing complexities. Standardisation is one of the key levers to speed deployment.

• Plan around power system lead times. Many projects treat electrical gear as a downstream dependency, but it’s one of the first things you should factor into timelines.

• Use modularised, prefabricated solutions. This approach reduces onsite labour constraints and delivery risk, while also enabling operators to get what they need more quickly, with the option to scale in the future – without an entirely new design.

• Design for future power density. GPU generation changes are outpacing electrical redesign cycles. Flexibility at the outset can be the difference between being able to grow or starting over from scratch. Organisations moving fastest on AI deployments are treating power as a strategic planning input, not an afterthought.
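Treating power as a planning input, rather than a downstream dependency, can be as simple as working backwards from the target energisation date. The sketch below subtracts each item's lead time, plus a fixed allowance for installation and testing, to find the latest safe order date, then flags anything already past that date. The equipment list, lead times and eight-week allowance are hypothetical placeholders, not figures from the article; real values depend on vendor, region and specification.

```python
from datetime import date, timedelta

# Hypothetical lead times in weeks; replace with quoted figures from suppliers.
LEAD_TIMES_WEEKS = {
    "medium-voltage switchgear": 60,
    "UPS modules": 30,
    "relay panels": 26,
    "power distribution units": 20,
}


def latest_order_dates(energisation: date, lead_times_weeks: dict[str, int],
                       install_and_test_weeks: int = 8) -> dict[str, date]:
    """Work backwards from energisation to the last safe order date for each item."""
    return {
        item: energisation - timedelta(weeks=weeks + install_and_test_weeks)
        for item, weeks in lead_times_weeks.items()
    }


def items_already_late(today: date, order_by: dict[str, date]) -> list[str]:
    """Return items whose latest safe order date has already passed."""
    return sorted(item for item, deadline in order_by.items() if deadline < today)


if __name__ == "__main__":
    order_by = latest_order_dates(date(2027, 1, 4), LEAD_TIMES_WEEKS)
    for item, deadline in sorted(order_by.items(), key=lambda kv: kv[1]):
        print(f"{deadline.isoformat()}  {item}")
    print("Already late:", items_already_late(date(2026, 1, 5), order_by))
```

With these placeholder numbers, a January 2027 energisation means the switchgear had to be ordered by mid-September 2025, so it is already late by January 2026 while the other items still have slack. That is the kind of surprise the article suggests surfacing at the design stage.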


In many cases, the constraint on AI growth is shifting from algorithms to infrastructure.

The limiting factor for AI expansion may not be who can build the biggest data centres or the highest volume of them – but who can get reliable, resilient electrical infrastructure into the field quickly, safely and at scale. Compute innovation will continue to accelerate, but the limits of the grid and power manufacturing capacity will influence who can keep up. We should acknowledge that accelerating electrical infrastructure is just as crucial as chip production. If we get this right, AI’s next chapter can unfold at a pace that meets current expectations. If not, we’ll have the GPUs and not enough power systems to turn them on.

As data centres continue to expand in scale, density and complexity, the demand for resilient and reliable power infrastructure has never been greater. With growing reliance on cloud services, digital platforms and critical online systems, even the shortest power interruption can result in operational disruption, data risk and financial loss.

While servers, cooling systems and network architecture often dominate discussions around data centre performance, one essential component consistently underpins resilience behind the scenes: industrial battery technology. From uninterruptible power supply (UPS) systems to reserve energy storage, batteries remain the first line of defence when grid power fails.

For decades, Yuasa has supported mission-critical environments where reliability is non-negotiable. Its experience across data centres and wider critical infrastructure highlights a simple reality: dependable battery performance is fundamental to maintaining uptime.

When power disturbances occur, batteries provide immediate, seamless energy to critical systems. Unlike generators, which require start-up time, batteries respond instantly, maintaining stable power to servers, cooling equipment and network infrastructure.

This rapid response protects sensitive electronics, prevents data loss and ensures operations continue uninterrupted while backup generation comes online. In high-availability environments, this performance is essential.

As data centre loads increase and infrastructure becomes more power-dense, battery systems must deliver higher performance with absolute consistency. Reliability is not defined solely by capacity or headline specifications; it is determined by how predictably batteries perform under real-world conditions, often over many years of continuous operation.

Many data centres rely on high-rate battery systems capable of delivering large amounts of power over short durations. These batteries support UPS installations designed to bridge the gap between grid failure and generator activation.

High-rate batteries are engineered specifically for this role, optimised for short-duration, high-power discharge. Key characteristics typically include high power density, low internal resistance for efficient output, stable voltage under heavy load and compact, maintenance-free designs that help optimise space within battery rooms.
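The bridging role explains why power, rather than stored energy, dominates high-rate battery selection. The sketch below estimates the DC power and energy a battery must supply to carry a UPS load until generators take over. The inverter efficiency, design margin, load and bridge time are illustrative assumptions, not manufacturer or standards figures. Real sizing for lead-acid also uses the manufacturer's constant-power discharge tables, because usable capacity at a five-minute rate is far below the nominal rating.

```python
def bridge_requirement(load_kw: float, bridge_minutes: float,
                       inverter_efficiency: float = 0.95,
                       design_margin: float = 1.25) -> tuple[float, float]:
    """Return (battery_kw, energy_kwh) needed to carry load_kw for bridge_minutes.

    inverter_efficiency and design_margin are illustrative defaults only.
    """
    if not 0 < inverter_efficiency <= 1:
        raise ValueError("inverter_efficiency must be in (0, 1]")
    if bridge_minutes <= 0:
        raise ValueError("bridge_minutes must be positive")
    # DC power drawn from the battery, including conversion losses and margin
    battery_kw = load_kw / inverter_efficiency * design_margin
    # Energy over the bridge period, converting minutes to hours
    energy_kwh = battery_kw * bridge_minutes / 60.0
    return battery_kw, energy_kwh


if __name__ == "__main__":
    kw, kwh = bridge_requirement(load_kw=1_000, bridge_minutes=5)
    print(f"Battery must deliver ~{kw:.0f} kW for a total of ~{kwh:.1f} kWh")
```

For a hypothetical 1 MW load and a five-minute bridge, the battery must deliver over 1.3 MW but only around 110 kWh in total. That mismatch is why low internal resistance and stable voltage under heavy load matter more here than raw capacity.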

In environments where space, efficiency and reliability are all critical, these features support both operational resilience and infrastructure planning. As UPS systems evolve to support increasingly demanding loads, battery performance becomes a defining factor in overall system reliability.

Battery reliability begins at the design and manufacturing stage. Materials, internal construction, plate technology and quality control processes all directly influence how a battery performs throughout its service life.

Industrial battery systems used in data centres are engineered to operate under challenging conditions, including continuous float charging, elevated ambient temperatures, high discharge currents and long service-life expectations. Consistent performance under these conditions is essential for mission-critical installations, where backup systems must perform exactly as intended when called upon.

By focusing on stable chemistry, robust internal construction and controlled manufacturing processes, industrial battery designs aim to deliver predictable behaviour over time. For operators and engineers, this predictability simplifies system design, supports effective maintenance strategies and reduces the risk of unexpected failures.

Resilience is not only defined by how a battery performs during a power outage; it is also shaped by how it performs over years of operation.

Battery systems represent long-term infrastructure investments. Their ability to maintain consistent performance, age predictably and integrate with maintenance programmes is essential for effective operational planning. Key lifecycle considerations typically include design life, operating temperature, monitoring strategy and end-of-life replacement planning.

High-quality industrial batteries are designed to offer stable performance across their service life, allowing operators to forecast replacement schedules accurately and avoid unplanned failures. This predictability reduces risk, supports compliance with uptime requirements and helps maintain consistent resilience across data centre estates.
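Operating temperature is one of the main inputs when forecasting replacement dates. The sketch below derates a rated service life for sustained operation above a reference temperature and then plans replacement ahead of the expected end of life. It assumes the commonly quoted rule of thumb that lead-acid life roughly halves for every 8–10°C of sustained operation above 25°C; the 10°C step, the 80% planning fraction and the 12-year rated life are hypothetical and should be replaced with the manufacturer's own derating data.

```python
def expected_life_years(rated_life_years: float, avg_temp_c: float,
                        ref_temp_c: float = 25.0, halving_step_c: float = 10.0) -> float:
    """Derate rated life for sustained operation above ref_temp_c.

    Assumes life halves for every halving_step_c degrees above the reference
    (a rule of thumb, not a manufacturer figure). No uplift is applied below it.
    """
    excess_c = max(0.0, avg_temp_c - ref_temp_c)
    return rated_life_years / (2 ** (excess_c / halving_step_c))


def replacement_year(install_year: int, rated_life_years: float, avg_temp_c: float,
                     planning_fraction: float = 0.8) -> int:
    """Plan replacement before expected end of life (planning_fraction is hypothetical)."""
    life = expected_life_years(rated_life_years, avg_temp_c)
    # Truncate so the plan errs on the early side
    return install_year + int(life * planning_fraction)


if __name__ == "__main__":
    for temp_c in (20, 25, 30, 35):
        print(temp_c, "°C ->", round(expected_life_years(12, temp_c), 1), "years,",
              "replace by", replacement_year(2025, 12, temp_c))
```

Under these assumptions a battery rated for 12 years at 25°C would be planned for replacement in 2034, but a battery room averaging 35°C halves the expected life and pulls the plan forward to 2029. Temperature control in the battery room therefore feeds directly into replacement budgeting.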

While valve-regulated lead-acid (VRLA) batteries remain widely used in UPS systems, lithium-ion technology is playing an increasingly important role in modern data centre infrastructure.

Lithium-ion batteries offer several advantages for certain applications, including higher energy density, reduced footprint and weight, faster charging capabilities, longer cycle life and lower maintenance requirements. These characteristics make them attractive for facilities where space efficiency, scalability and long-term operational performance are priorities.

As data centres continue to evolve, lithium-ion technology provides an alternative energy storage option that can complement, or in some cases replace, traditional battery systems depending on operational requirements. Selecting the right technology remains highly application-specific, with reliability and lifecycle performance central to decision-making.

The same battery technologies used in data centres also support a wide range of other critical infrastructure sectors, including telecommunications networks, healthcare facilities, transport systems, utilities and emergency services.

In each of these environments, uninterrupted power is essential for safety, service continuity and operational stability. Experience gained across these sectors informs battery design, performance expectations and reliability standards within data centre applications, reinforcing the importance of proven technologies in high-risk environments.

Battery performance is strongly influenced by environmental conditions such as temperature, ventilation and charging regimes. Data centre battery rooms must be carefully designed to maintain optimal operating conditions, helping maximise service life and performance consistency.

Modern battery systems are increasingly supported by monitoring technologies that track voltage stability, temperature variation, internal resistance and charge-discharge behaviour. This data allows operators to identify potential issues early, implement predictive maintenance strategies and reduce