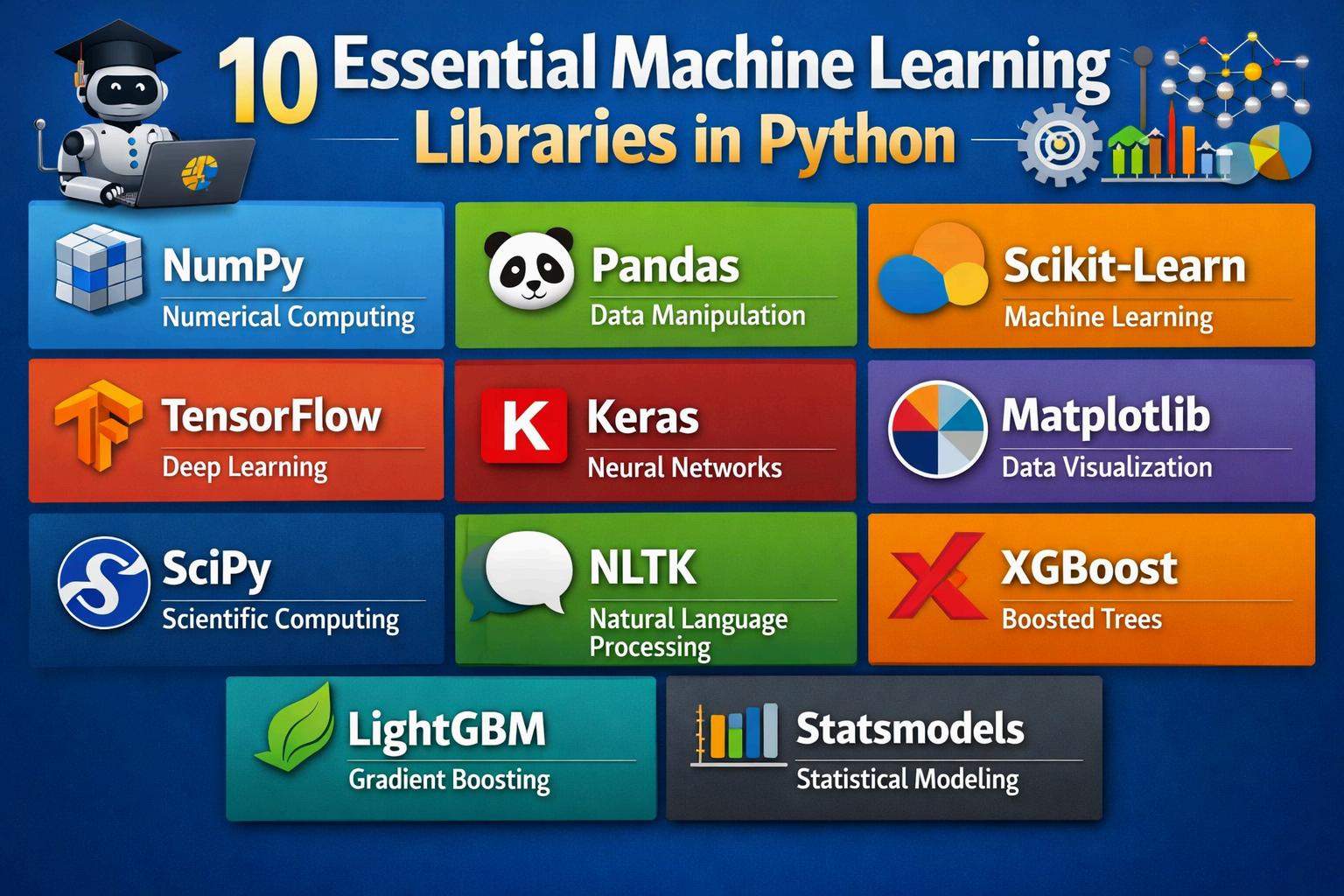

10 Essential Machine Learning Libraries in Python

Python has remained the dominant language for Machine Learning in 2026, largely due to its mature ecosystem of libraries that handle everything from basic data manipulation to complex generative AI.

Here are the 10 essential libraries you need to master for modern ML workflows.

1. NumPy: The Foundation

NumPy is the bedrock of scientific computing in Python. It provides high-performance multidimensional array objects and tools for working with them.

Key Use: Vectorized operations, linear algebra, and handling the raw numerical data that feeds into ML models.

Why it’s essential: Almost every other library on this list either builds directly on NumPy (Pandas, Scikit-Learn) or interoperates closely with its arrays (PyTorch, TensorFlow).
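As a minimal sketch of what "vectorized operations" and "linear algebra" look like in practice, the snippet below standardizes a small feature matrix and computes predictions with a matrix-vector product. The data and the weight vector `w` are made up for illustration.

```python
import numpy as np

# Hypothetical feature matrix: 4 samples, 3 features
X = np.array([
    [1.0, 2.0, 3.0],
    [4.0, 5.0, 6.0],
    [7.0, 8.0, 9.0],
    [10.0, 11.0, 12.0],
])

# Vectorized standardization: broadcasting applies the per-column
# mean and standard deviation to every row, with no Python loop
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

# Linear algebra: predictions from a hypothetical weight vector
w = np.array([0.5, -1.0, 2.0])
y_hat = X @ w

print(y_hat)
```

The same two lines of arithmetic work unchanged whether `X` has four rows or four million, which is the main reason to prefer array expressions over explicit loops.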

2. Pandas & Polars: Data Wrangling

While Pandas remains the industry standard for data manipulation, Polars has become essential in 2026 for high-performance, multi-threaded data processing.

Key Use: Cleaning messy data, handling missing values, and exploratory data analysis (EDA).

Pro Tip: Use Pandas for smaller datasets and Polars when you need to process millions of rows without running out of memory.
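Here is a small sketch of the cleaning workflow described above, using Pandas only (Polars offers comparable operations with a different API). The column names and values are invented for the example, and the choice of median, mean, and a placeholder string for imputation is just one reasonable option.

```python
import numpy as np
import pandas as pd

# Hypothetical messy dataset with one missing value per column
df = pd.DataFrame({
    "age": [25, np.nan, 41, 33],
    "city": ["Paris", "Lyon", None, "Paris"],
    "income": [52000.0, 48000.0, np.nan, 61000.0],
})

# EDA step: count missing values in each column
missing_per_column = df.isna().sum()

# Cleaning step: fill numeric gaps with a statistic, text gaps with a label
cleaned = df.assign(
    age=df["age"].fillna(df["age"].median()),
    income=df["income"].fillna(df["income"].mean()),
    city=df["city"].fillna("unknown"),
)

# Quick aggregate to sanity-check the result
summary = cleaned.groupby("city")["income"].mean()
print(missing_per_column)
print(summary)
```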

3. Scikit-Learn: Classical Machine Learning

If you aren't doing Deep Learning, you're likely using Scikit-Learn. It is the go-to library for "classical" algorithms.

Core Algorithms: Linear Regression, Random Forests, Support Vector Machines (SVM), and K-Means Clustering.

Best Feature: Its unified API makes it incredibly easy to swap one algorithm for another to compare performance.
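The sketch below illustrates the unified API: three different classifiers are trained and scored with exactly the same `fit` and `score` calls. The synthetic dataset and the hyperparameters are assumptions chosen for brevity, and logistic regression stands in for the linear model because the toy task is classification.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC

# Synthetic binary classification data (illustrative only)
X, y = make_classification(n_samples=300, n_features=10, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Every estimator exposes the same fit / predict / score interface
models = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=0),
    "svm": SVC(),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    scores[name] = model.score(X_test, y_test)  # mean accuracy on the test split

for name, accuracy in scores.items():
    print(f"{name}: {accuracy:.3f}")
```

Swapping in another algorithm only means adding one more entry to the `models` dictionary; the training loop does not change.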

4. PyTorch: The Deep Learning Leader

In 2026, PyTorch has emerged as the preferred framework for researchers and GenAI developers alike due to its "pythonic" nature and dynamic computation graphs.

Key Use: Building Neural Networks, Computer Vision (CV), and Natural Language Processing (NLP).

Highlight: It is the primary backbone for training Large Language Models (LLMs).
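To give a feel for the "pythonic" style, here is a minimal training loop for a tiny neural network on toy data. The architecture, learning rate, and target function are arbitrary choices for illustration, not a recommended setup. The graph is rebuilt on every forward pass, which is what "dynamic computation graph" refers to.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Toy regression data following y = 3x + 1 (illustrative only)
x = torch.linspace(-1, 1, 64).unsqueeze(1)
y = 3 * x + 1

# A small fully connected network defined as ordinary Python objects
model = nn.Sequential(nn.Linear(1, 16), nn.ReLU(), nn.Linear(16, 1))
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
loss_fn = nn.MSELoss()

initial_loss = loss_fn(model(x), y).item()

for _ in range(200):
    optimizer.zero_grad()        # clear gradients from the previous step
    loss = loss_fn(model(x), y)  # forward pass builds the graph on the fly
    loss.backward()              # backpropagate through that graph
    optimizer.step()             # update the weights

final_loss = loss_fn(model(x), y).item()
print(f"loss before: {initial_loss:.4f}, after: {final_loss:.4f}")
```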

5. TensorFlow / Keras: Enterprise Production

Developed by Google, TensorFlow is a robust ecosystem for deploying models at scale. Keras now serves as the high-level API that makes TensorFlow much more user-friendly.

Key Use: Production-grade deep learning and mobile/edge deployment via TensorFlow Lite.

Why it stays essential: Its integration with Google Cloud and specialized hardware (TPUs) makes it a beast for enterprise-scale training.

6. XGBoost / LightGBM: The Competition Kings

For structured/tabular data, Gradient Boosting Machines (GBMs) often outperform deep learning.

Key Use: Winning Kaggle competitions and high-accuracy business forecasting.

Performance: These libraries are highly optimized for speed and can handle missing data and categorical variables natively.

7. Hugging Face Transformers

No ML list in 2026 is complete without Hugging Face. It has become the "App Store" for pre-trained AI models.

Key Use: Accessing state-of-the-art models like BERT, GPT, and Llama with just a few lines of code.

Impact: It has democratized NLP, allowing developers to use world-class models without needing a supercomputer to train them.

8. Matplotlib & Seaborn: Visualization

Data is useless if you can't explain it.

Matplotlib: The low-level workhorse for basic plots.

Seaborn: Built on top of Matplotlib, it makes beautiful, complex statistical visualizations (like heatmaps and violin plots) effortless.
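The two libraries are typically used together, as in this rough sketch: Matplotlib creates the figure and axes, and Seaborn draws a correlation heatmap onto them. The random data and column names are placeholders, and the `Agg` backend is selected only so the snippet runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, assumed for headless runs
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
import seaborn as sns

# Placeholder data: three independent columns plus one correlated with "a"
rng = np.random.default_rng(0)
df = pd.DataFrame(rng.normal(size=(100, 3)), columns=["a", "b", "c"])
df["d"] = df["a"] * 2 + rng.normal(scale=0.1, size=100)

corr = df.corr()

# Matplotlib owns the figure; Seaborn draws the statistical plot on its axes
fig, ax = plt.subplots(figsize=(4, 3))
sns.heatmap(corr, annot=True, cmap="viridis", ax=ax)
ax.set_title("Feature correlations")
fig.tight_layout()
```

In a notebook the figure renders inline; in a script, `fig.savefig(...)` with a path of your choice writes it to disk.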

9. LangChain / LangGraph: Agentic AI

As we move toward "Agentic" workflows, LangChain and its stateful evolution, LangGraph, are essential for building applications that use LLMs as reasoning engines.

Key Use: Building RAG (Retrieval-Augmented Generation) systems and autonomous AI agents.

Context: It allows you to "chain" together models, vector databases, and external APIs.

10. FastAPI: Deployment

Once your model is trained, you need a way for the world to use it. FastAPI is the modern standard for turning ML models into production-ready web APIs.

Key Use: Serving model predictions over the web.

Why it wins: It is incredibly fast, supports asynchronous code, and automatically generates documentation (Swagger) for your API.

Comparison Table: Which Library to Use When?

| Task | Recommended Library |
| --- | --- |
| Numerical Math | NumPy |
| Data Cleaning | Pandas / Polars |
| Classical ML | Scikit-Learn |
| Deep Learning | PyTorch / TensorFlow |
| Tabular Data / Tabular ML | XGBoost / LightGBM |
| NLP & Pre-trained Models | Hugging Face Transformers |
| Visualizing Data | Seaborn |
| Building AI Agents | LangChain |