UNRAVELING THE DIGITAL TAPESTRY:

A CHRONICLE OF INTERNET REGULATION, DATA GOVERNANCE, AND THE DAWN OF AI LAWS

From the inception of the internet to the rise of AI, technology has not only revolutionized our daily lives but also raised significant questions about data and privacy regulation.

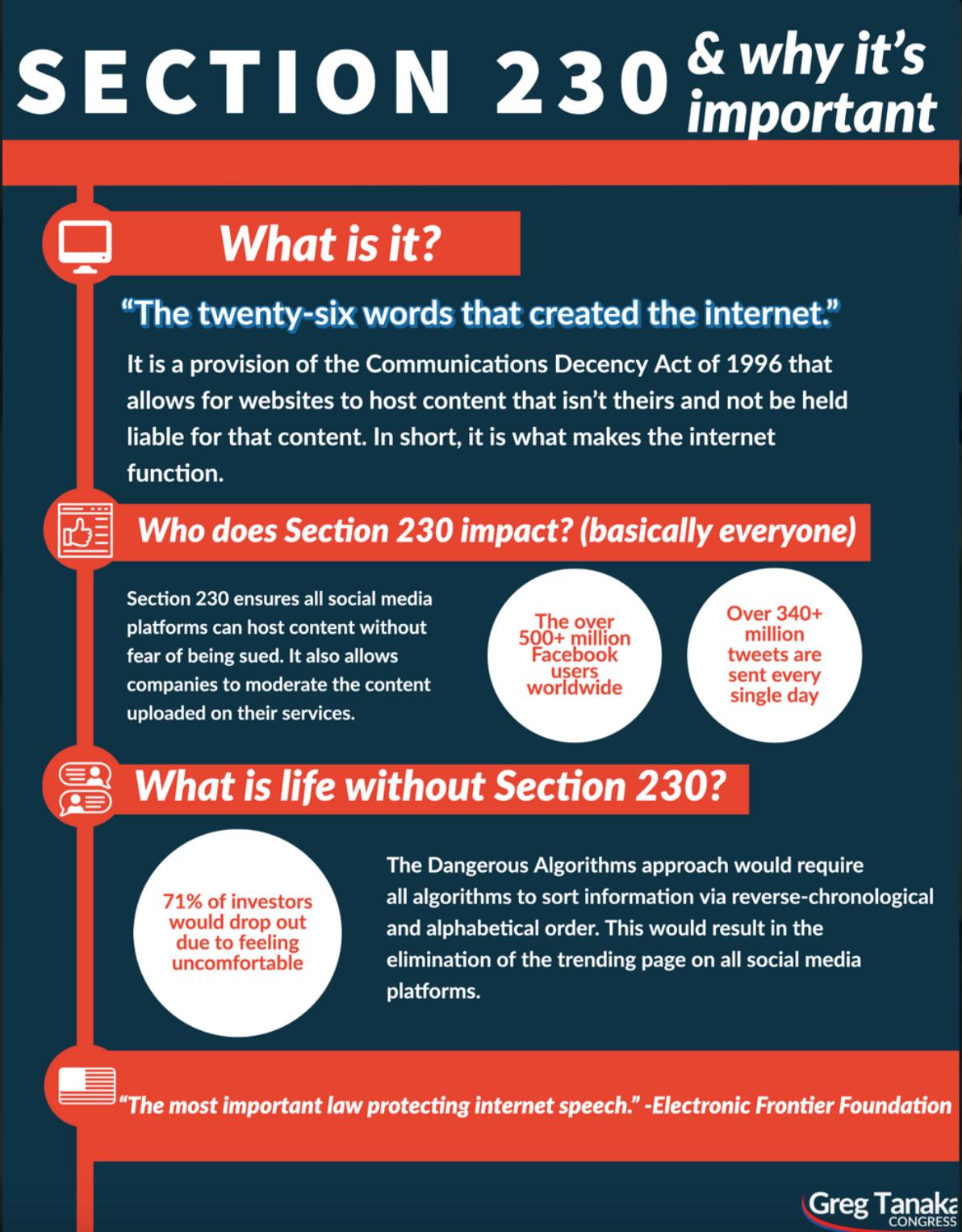

In the United States, Section 230 of the 1996 Communications Decency Act provides immunity to online platforms from liability for user-generated content while allowing them to moderate such content in good faith. However, this law, created more than 25 years ago, still governs today's internet and has been outpaced by the rapid advancements of the 21st century. That calls for a critical reassessment, especially of how the law has affected society, businesses, and government alike. Updating it is imperative, especially with the rise of AI.

In our zine, we explore the evolution of internet regulation and delve into current data and privacy regulations in the EU, examining the impact of the GDPR. We also ponder the implications of the AI Act while providing solutions and insights from industry and academic experts, illuminating the lessons the US can glean from these regulatory frameworks.

Issue 15 : 1996 Communications Decency Act

Internet Law 1996!

Passed when Internet use was just starting to expand in both breadth of services and range of consumers in the United States.

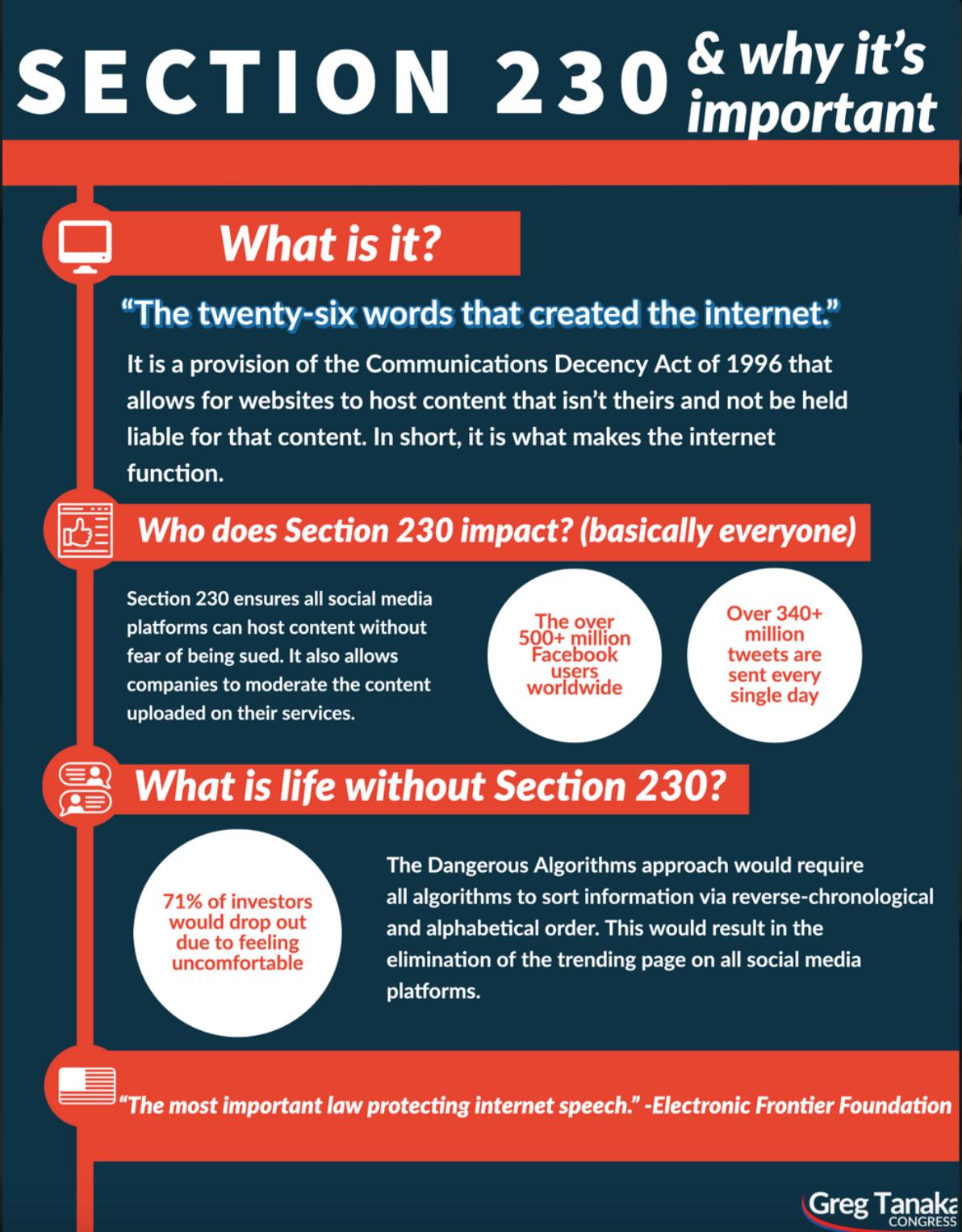

SECTION 230: THE 90'S LAW STILL GOVERNING THE INTERNET

Section 230 of the Communications Decency Act (CDA), enacted in 1996 as part of the broader Telecommunications Act, is a foundational piece of internet law in the United States. It generally provides immunity for online computer services with respect to third-party content generated by their users. Section 230 has frequently been referred to as a key law that allowed the Internet to develop.

SECTION 230 (c) (1)

Provides immunity from liability for providers and users of an "interactive computer service" who publish information provided by third-party users.

SECTION 230 (c) (2)

Provides "Good Samaritan" protection from civil liability for operators of interactive computer services in the good faith removal or moderation of third-party material they deem "obscene, lewd, lascivious, filthy, excessively violent, harassing, or otherwise objectionable, whether or not such material is constitutionally protected."

THE PROBLEM

The technology of the 1990s looked nothing like today’s connected world, and the internet hosted just a fraction of the billions of people who now use it every day.

GDPR LAW 2016!

THE GDPR REPLACED THE DATA PROTECTION DIRECTIVE OF 1995, WHICH HAD BECOME OUTDATED IN THE DIGITAL AGE AND WAS IMPLEMENTED INCONSISTENTLY.

The General Data Protection Regulation (GDPR) is a comprehensive data protection law that the European Union (EU) adopted in 2016 and that has applied since May 2018. It aims to protect the privacy rights of individuals within the EU and the European Economic Area (EEA) by regulating how organizations collect, process, and store personal data.

GDPR KEY PROVISIONS

Data Subject Rights: Gives individuals rights over their personal data, such as access, rectification, erasure, data portability, and objection to processing.

Consent Requirements: Where processing relies on consent, requires that consent to be freely given, specific, informed, and unambiguous, expressed through a clear affirmative action.

Data Protection by Design/Default: Requires data protection measures to be built into products/services from inception and as the default setting.

Issue 153 : 2016 GDPR LAW

GDPR VS

VOICE OF THE PEOPLE

By Dylan

“There’s a whole subfield dedicated explicitly to privacy-preserving AI and tons of different technical approaches to these issues, but that isn’t my specialty. Some Google Scholar searches on privacy-preserving AI might be useful to get a sense of what’s going on in the field right now!”

GOOGLE'S GDPR WOES: HEFTY FINES AND LIMITED ENFORCEMENT

Google, a prominent tech giant, was an early target of hefty fines from European privacy regulators. In 2019, the French data protection authority, CNIL, fined Google €50 million under the GDPR for insufficient disclosure of its data collection methods. In 2020, CNIL imposed additional fines of €60 million on Google LLC and €40 million on Google Ireland for non-compliance with French cookie consent laws.

Despite numerous complaints and investigations, enforcement against major tech firms has been limited.

Consumer groups filed GDPR complaints against Google in 2018, prompted by concerns about its extensive location-tracking practices. BEUC's report exposed Google's deceptive practices, leading to calls for more effective GDPR enforcement.

ISSUE 01 • PAGE 1 HCI ETHICS WEEKLY NEWSLETTER

GOOGLE TO PAY RECORD €50 MILLION GDPR FINES AS CNIL DRAWS LINES IN THE SAND

GDPR VS

VOICE OF PROFESSIONALS

“Data that is created by users becomes companies’ property. This power imbalance is not fair (forced consent).

Common industry approaches put the responsibility on the users, who have limited resources (not accessible & sustainable).”

META HAS BEEN FINED €1.2 BILLION (ABOUT $1.3 BILLION) BY THE IRISH DPC OVER EU-US DATA TRANSFERS, AND €265 MILLION OVER A DATA LEAK AFFECTING MORE THAN 533 MILLION FACEBOOK USERS FROM 106 COUNTRIES

Meta platform users are forced to consent to Facebook’s terms and conditions.

Facebook & IG contain vast troves of personal data visible only to you or friends. Meta has not confirmed whether this content could also train its AI models in the future.

Meta: while we don't sell your information, businesses and organizations can collect your activity from their apps and websites and send it to us.

Researcher response:

Only 3-10% of users want personalized ads, but 99.9% consent. So-called “Pay or Okay” systems are the antithesis of free consent and fundamentally affect the “free will” of users.

Meta response:

“Pay or Okay”: the move to a paid subscription in exchange for an ad-free experience for EU users.

“Generative AI Data Subject Rights” form: allows users to delete certain bits of their personal data used for AI training (this only applies to third-party data, not content directly posted on Meta platforms).

WHEN YOU USE OUR PRODUCTS, WE COLLECT SOME INFORMATION ABOUT YOU EVEN IF YOU DON'T HAVE AN ACCOUNT.

GDPR VS

VOICE OF THE PEOPLE

By Anonymous

“One of the ways that people take action is by joining online groups and meeting other people who have gone through similar things. How can we design online systems that give each other that space to mitigate harm?”

AMAZON FACES MULTIPLE GDPR VIOLATIONS: A CLOSER LOOK AT RECENT CHARGES AND LEGAL CHALLENGES

In 2021, Amazon incurred a €746 million fine for breaching the EU's General Data Protection Regulation (GDPR) regarding the processing of personal data.

Amazon has also faced several other actions under European data protection rules. CNIL sanctioned it for breaching consent guidelines on cookie usage on its French website, 'noyb' lodged a complaint with German regulators over email security, and the European Society for Data Protection sued Amazon for relying on the invalidated EU-US Privacy Shield agreement.

Although the €746 million penalty is one of the largest GDPR fines, it is uncertain how often penalties of this size will stand, given that fines against other companies have historically been reduced. Amazon is currently contesting the charges in court, aiming to overturn the record fine.

AMAZON INCURRED A €746 MILLION FINE FOR BREACHING GDPR

GDPR VS

VOICE OF THE PEOPLE

By Anonymous

“Data say a lot about us and it seems like there's a significant amount of our data out there. Especially with the emergence of AI systems, there's this black box surrounding their usage and how our data will be utilized. If we value autonomy over our data usage, it's crucial to ensure its protection.”

By Dylan Baker

“I don’t like feeling like my data is being used to create things that might be harmful to the world, like some AI systems.”

APPLE'S PRIVACY REPUTATION UNDER SCRUTINY: RECENT GDPR INVESTIGATIONS SPARK CONCERNS

Despite its reputation for prioritizing user privacy, Apple has faced numerous investigations since 2018. The Irish DPC launched an inquiry in 2018 into Apple's handling of personal data for targeted advertising, questioning the transparency of its privacy policy.

In 2019, Apple faced further scrutiny over GDPR compliance related to a customer access request. Recently, privacy groups like Noyb and France Digitale have filed complaints against Apple, alleging unauthorized data storage and sharing for ad tracking without user consent.

These actions highlight escalating tensions over Apple's privacy updates despite previous praise for limiting ad-tracking.

According to a study by the Pew Research Center, over 67% of Americans are unsure how their data is being used. Below, we asked experts from industry and academia why data should be safeguarded.

GDPR VIOLATIONS

WHAT EXPERTS SAID ABOUT THE FUTURE OF AI

SHAOMEI WU, FOUNDER, AIMPOWER

I feel it would be helpful if a notification were sent out to inform people that their data is being used to help train a model. We always choose to prioritize intersectionality and always try to recruit people who stutter from marginalized groups. We pair people with different backgrounds and encourage them to work together on broad solutions and to learn how to think collectively.

There are other solutions: enforce a kind of collective consent (data governance, data sharing). There is no legal framework for collective community ownership. Current data collection culture assumes distinct parties to be the data collector, data owner, and data subject. This is not ideal, which is why there should be a sociotechnical infrastructure to support this kind of work.

ANONYMOUS

One of the ways that people take action is by joining online groups and meeting other people who have gone through similar things. How can we design online systems that give each other that space to mitigate harm? Participatory approaches are not for the users to understand the harm of the product; they are for understanding how users work and engage with the product and for assessing what kind of harm can come from it.

DYLAN BAKER, DAIR INSTITUTE

I think independent auditing and oversight could, in an ideal case, mean that a diverse group of researchers across varied disciplines and backgrounds could all do thorough research into social media companies, with guarantees that the data they are getting is complete and representative, and protections from retaliation from those companies. These kinds of auditing or oversight bodies would need the ability to substantively penalize tech companies for violations; and they’d probably need some kinds of data infrastructure to access internal data and actually perform audits.

I know these exist but no specific examples come to mind immediately. Towards this, I’d do some digging into examples of data cooperatives and see how those have played out. I’d also maybe look into work that a lot of groups are doing with the intersection of data/tech and indigenous knowledge production, like some of the work Indigenous AI does? I really like the work that the Open and Collaborative Science in Development Network does, but that’s a little more on the side of knowledge production and less around data practices. Still, I’d look into the way those groups write and talk about data and see if you find paths that look promising towards answering your question.

AI law for the future

INTRODUCTION TO THE EU AI ACT: REGULATING ARTIFICIAL INTELLIGENCE SYSTEMS IN THE EUROPEAN UNION

The EU AI Act originated as a legislative proposal put forth by the European Commission to regulate artificial intelligence (AI) systems across the European Union (EU). It aims to establish a comprehensive framework for the development, deployment, and use of AI technologies while ensuring that they adhere to certain ethical and legal standards.

• The EU AI Act proposes a comprehensive regulatory framework for artificial intelligence technologies within the European Union.

• Risk-Based Approach: It introduces a risk-based approach to regulating AI systems, categorizing them into different risk levels based on their potential impact on safety, fundamental rights, and societal values.

• Mandatory Requirements: The Act sets out mandatory requirements for high-risk AI systems, including provisions related to data quality, transparency, accountability, and human oversight.

• Conformity Assessments and Certification: It establishes procedures for conformity assessments and certification schemes to ensure compliance with regulatory requirements.

Issue 243 : 2024 EU AI Act

OUR LEARNINGS

Experts from industry and academia have noted a power imbalance in the tech industry that affects society, safety, and privacy. The US lacks a comprehensive federal statute governing data privacy, but frameworks like the GDPR and the upcoming AI Act can guide the development of one. The GDPR has improved data protection through transparency, accountability, individual control over personal data, and penalties for non-compliance. US AI laws should build on the same principles and add risk mitigation. By applying these learnings, policymakers can create responsible and ethical AI regulatory frameworks.

As AI continues to grow across all industries, it is imperative for the U.S. to consider the following principles:

Data Protection and Transparency: Similar to the GDPR's focus, AI laws in the US should prioritize data protection and transparency, ensuring that individuals have visibility and control over how their data is used in AI systems.

Accountability and Compliance: AI laws should impose accountability measures on organizations developing and deploying AI systems, including requirements for compliance assessments and mechanisms for addressing non-compliance.

Penalties for Violations: Like the GDPR, AI laws should include penalties for violations to incentivize organizations to prioritize ethical and responsible AI practices.

Individual Rights and Privacy: AI laws should uphold individuals' rights to privacy and autonomy, providing mechanisms for individuals to access and control their data used in AI systems.

Risk Assessment and Mitigation: Adopting a risk-based approach, AI laws should require organizations to assess the risks associated with AI systems and implement measures to mitigate those risks, particularly those related to privacy, bias, and discrimination.