The industry is consolidating its approach away from deploying technology solutions as tools, towards redesigning how work, security, and decision-making come together in increasingly complex environments. With AI at the centre of this shift, the focus is moving beyond the initial phase of productivity gains towards execution by an AI-driven workforce.

From copilots to agents, from dashboards to decisions, enterprises are beginning to explore what it means for AI to not just assist, but actively participate in workflows. This raises new questions around accountability, governance, and the structure of work itself, particularly as organisations begin to manage both human and machine-led processes. We are fast-tracking towards an era where AI agents are likely to be colleagues, bringing associated challenges into the mix.

While this transition unfolds, data security and identity remain serious concerns that organisations must address right from the design and implementation stages. Governance assumes even greater significance when organisations operate with a mixed workforce of humans and AI agents working and collaborating together. The nature of these collaborations will vary across industries and organisations.

As identity becomes the primary attack surface and data environments grow more distributed, enterprises are being pushed to adopt models that prioritise continuous verification, unified visibility, and real-time control. The convergence of AI, data platforms, and security frameworks is no longer optional; it is becoming central to how organisations operate.

What is becoming increasingly evident is that technology alone will not define success. The organisations that move ahead will be those that align architecture with operating models, integrate intelligence into core workflows, and rethink how value is created and measured.

The enterprise is being restructured around intelligence, autonomy, and the ability to turn data into decisions at scale. This transition is also exposing new pressures such as CIO accountability tied to AI outcomes, highlighting that success will depend as much on alignment and execution as on technology itself.

R. Narayan
Editor in Chief, CXO DX

RAMAN NARAYAN
Co-Founder & Editor in Chief
narayan@leapmediallc.com
Mob: +971-55-7802403

SAUMYADEEP HALDER
Co-Founder & MD
saumyadeep@leapmediallc.com
Mob: +971-54-4458401

MALLIKA REGO
Co-Founder & Director Client Solutions
mallika@leapmediallc.com
Mob: +971-50-2489676

Ali Raza
Designer

Nihal Shetty
Webmaster

Through its CONNECT platform and AI-infused digital twin capabilities, AVEVA is enabling the utilities industry to transition from fragmented operations to unified, intelligence-driven systems.

Sabir Saleem, CEO at MBUZZ, highlights how the company is expanding its focus across AI, datacentre, networking, security, and intelligent computing to align capabilities with real business requirements.

Ahmad Shakora, Group Vice President at Cloudera, explains how Cloudera is enabling organisations to bring AI to their data while maintaining governance, flexibility, and hybrid control.

Tommaso Stefano Tini, Head of Digital Twin Market Growth and Consulting at Omnix International, writes that digital twins are being seen as foundational capabilities that support better governance and performance across complex built environments.

Tolga Özdil, Regional Commercial Director, Middle East, Turkey & Africa (META) at ASUS, outlines how the company is aligning its portfolio around AI-ready devices, hybrid work demands, and secure, high-performance computing.

The clock is ticking for security operations centers in the Middle East, with relevance and resilience hanging in the balance, writes Ahmad Alshaer, Security Leader, Middle East & Africa, DXC Technology.

Siddhesh Nagaonkar, CISO at Direct Honest Safe International Exchange FZE, highlights how evolving threats and AI-driven attacks are reshaping how organisations approach identity security.

Mortada Ayad, VP – META at Delinea, examines why enterprises are managing AI agents with far less discipline than human employees, and the risks this creates.

Sameer Joshi, Digitalization Director, NADEC, explores how the rise of AI agents is redefining the nature of work and what it means for organisations preparing to manage a hybrid workforce of humans and machines.

Raif Abou Diab, General Manager – South Gulf & Sub-Saharan Africa at Nutanix, examines why cloud operations are struggling to keep pace and what organisations in the MEA region must rethink to regain control.

Pure Storage has announced its new name: Everpure. This change reflects the company’s greater impact from reshaping storage to defining the future of data management. The company also announced it has entered into a definitive agreement to acquire 1touch, an innovator in data intelligence and orchestration that provides a comprehensive, unified view of an enterprise’s information. With 1touch, Everpure furthers its commitment to data management innovation, making data secure, accessible, intelligent, and ready to perform.

“Everpure reflects the company we have become as we help enterprises unleash the full power of their data. It captures the power of our Enterprise Data Cloud architecture and adaptability of Evergreen, reinforcing what has always set us apart as we redefine important markets. With 1touch, we are taking the next step in helping organizations not only gain control of their most valuable asset, data, but also understand, enhance, and contextualize that data for actionable intelligence,” said Charles Giancarlo, CEO of Everpure.

As AI becomes central to business operations, the modern enterprise has reached an inflection point. AI has exposed the weaknesses of current infrastructure, where siloed data, manual processes, and inflexible architectures cannot support the scale, speed, and intelligence demands of enterprise AI.

Powered by the Everpure Platform (formerly the Pure Storage Platform), Everpure’s Enterprise Data Cloud (EDC) architecture transforms storage into a unified, virtualized cloud of data, governed by an intelligent control plane. It manages datasets globally, through policy, eliminating the friction of manual configurations, which brings unprecedented simplicity, agility, and efficiency to data management.

Charles Giancarlo, CEO, Everpure

The acquisition of 1touch will extend Everpure’s data management capabilities by adding data discovery and semantic context to the Everpure Platform. By integrating storage with 1touch’s ability to discover, classify, contextualize, and enrich data across all datasets and any environment, from SaaS to the edge, Everpure will ensure enterprise data is inherently AI-ready at the source.

Forty-three percent of respondents reported increased physical security budgets in 2025

Genetec, a global leader in enterprise physical security software, released its Saudi Arabia findings from the 2026 State of Physical Security Report. Based on insights from more than 150 physical security professionals in Saudi Arabia, the findings show a market that is investing confidently in modern, connected security infrastructure, with strong momentum in cloud adoption and operational modernization.

The report reveals that Saudi Arabia has the highest proportion of cloud-based physical security systems in the EMEA region, with 13 percent of respondents stating they use cloud security systems, compared to the EMEA average of seven percent. This reflects a growing preference for flexible deployment models that support scalability, resilience and simplified system management.

Saudi Arabia also recorded the highest level of operating expenditure growth among EMEA markets surveyed. Forty-three percent of respondents reported increased physical security budgets in 2025, nearly double the EMEA average of 24 percent. Among those reporting an increase, 92 percent said budgets grew by more than 10 percent, with nearly two-thirds (64 percent) reporting growth of 11 to 25 percent, highlighting sustained investment in security as a strategic business function.

Unlike many markets across EMEA, outdated infrastructure is not seen as a major barrier in Saudi Arabia. Only 12 percent of respondents cited legacy security infrastructure as a top challenge, compared with 44 percent across EMEA overall, reflecting the Kingdom’s continued investment in new infrastructure, smart cities and large-scale development projects.

“Saudi organizations are moving quickly from traditional security deployments toward connected, flexible platforms that support broader operational requirements,” said Firas Jadalla, Regional Director for the Middle East and Africa at Genetec Inc. “The Saudi findings reinforce the Kingdom’s position as a fast-moving and future-focused security market, where organizations are prioritizing sustained investment that aligns with the Kingdom’s Vision 2030 digital transformation goals.”

Firas Jadalla, Regional Director, MEA, Genetec

The solution will detect AI risk, protect AI systems, and undo AI mistakes precisely, using deep data and AI risk intelligence.

Veeam Software announced Agent Commander, a unified solution to help organizations safely detect AI risk, protect AI systems, and undo AI mistakes, empowering them to proactively address AI-driven risks and securely scale AI agents everywhere. The first integration from Veeam’s successful acquisition of Securiti AI, Agent Commander combines the capabilities of both to give organizations visibility, control, and protection over their entire data and AI estate, with the ability to undo AI mistakes with precision and ease. Agent Commander will be available in a future release of the Securiti Data Command Center, bringing together the industry’s leading Data Resilience and Data Security capabilities.

“AI happens at machine speed, which means organizations must understand what data is being used, by what agent, and how, in real time. If an error occurs, organizations not only need to understand what data was impacted, but they also need the ability to undo any damage rapidly,” said Anand Eswaran, CEO of Veeam. “With Agent Commander, organizations know what data is powering AI, and it gives them the power to detect, protect, and, when necessary, undo AI actions with speed and precision. It represents the future of what’s expected from data security and data resilience, and it’s only possible with Veeam’s unified platform.”

The most critical gap in AI infrastructure today is trust. An agent is only as trustworthy as the data it can see, access, and act on. Yet enterprise controls remain fragmented with separate systems for protection, security, governance, and recovery, and none built to provide unified visibility, granular control, or precision response at the speed and scale AI now demands.

Agent Commander brings Veeam’s trusted data resilience together with Securiti AI’s Data Command Center. This unified platform gives organizations total visibility into their AI environment, detects hidden risks and Shadow AI, and provides comprehensive controls to protect data as it moves through AI systems.

New AgenticOps capabilities in networking, security, and observability reimagine how to automate, scale, and simplify IT operations in the AI era.

Cisco has announced new AgenticOps innovations for the AI era. First launched last year, AgenticOps is an agent-first IT operating model for autonomous action with built-in oversight. New capabilities unveiled today across networking, security, and observability further transform how IT teams operate at scale.

“For teams responsible for operating and securing distributed networks and infrastructure, AgenticOps represents a profound and fundamental shift away from complexity,” said Jeetu Patel, President and Chief Product Officer, Cisco. “This is the true power of Cisco as a platform. By delivering agentic capabilities aligned to critical IT operations priorities, we’re combining Cisco’s unique cross-domain visibility, purpose-built models, and governance together to supercharge teams.”

Cisco is extending agentic-driven operations across networking, security, and observability, delivering AgenticOps to support IT operations in cloud, on-premises, air-gapped industrial, enterprise, data center, and service provider environments.

New tools, skills, and platform enhancements across networking, security, and observability focus on operating networks at AI scale through intelligent, agentic execution. Capabilities include autonomous troubleshooting with end-to-end investigations, continuous optimization through context-aware recommendations, and trusted validation of network changes against live topology, configuration, and telemetry. Experience metrics consolidate network signals into a single actionable view, while agentic workflow creation enables deterministic automation within Cisco AI Assistant.

In data centers, AgenticOps enables early detection and intelligent event correlation to deliver prescriptive performance insights, while service providers benefit from Crosswork AI capabilities that identify and resolve complex multi-vendor issues more efficiently. Within Cisco Security Cloud Control, firewall operations are enhanced through proactive policy recommendations, improved efficiency in detecting issues such as elephant flows, and continuous compliance monitoring for PCI-DSS. At the same time, AI Agent Monitoring in Splunk Observability Cloud provides visibility into the performance, cost, quality, and behavior of LLM and agentic applications.

BPS aims to enable MSPs to scale faster, simplify onboarding, and deliver Nutanix solutions through flexible, consumption-based models

Nutanix has announced a strategic new go-to-market partnership with BPS, appointing the company as its first aggregator for Managed Service Provider (MSP) business across the Middle East and Egypt. The collaboration marks a significant milestone in Nutanix’s partner-first strategy and underscores its commitment to delivering customer-centric outcomes through a strong regional ecosystem.

Through this partnership, BPS aims to enable MSPs to scale faster, simplify onboarding, and deliver Nutanix solutions through flexible, consumption-based models. For customers, the collaboration translates into easier access to trusted local MSPs, consistent service quality, and solutions designed around evolving business needs.

Nutanix has launched a purpose-built MSP program designed to support both managed service providers and their customers over the long term. Through its partnership with BPS, Nutanix is positioned to deliver these benefits to MSPs more rapidly, enabling them to respond more effectively to customer needs. The agreement also supports Nutanix’s expansion of its MSP footprint across the Middle East and Africa, strengthening an ecosystem that already includes more than 400 MSPs globally.

Shaista Ahmed, Director – Channel & Ecosystem, Middle East & Africa at Nutanix said, “By leveraging BPS’s extensive reach and expertise, we are able to access and nurture the MSP market, creating new opportunities for growth. This collaboration allows us to deliver tailored solutions, accelerate adoption of our portfolio, and provide dedicated support to these partners. Together, Nutanix and BPS are not just expanding our footprint—we are enabling a more focused, impactful, and sustainable approach to serving this critical market segment.”

“Being appointed as Nutanix’s first MSP aggregator across the Middle East and Egypt is a significant milestone for BPS. This partnership allows us to bring Nutanix’s industry-leading cloud platform closer to regional service providers, enabling faster onboarding, simplified consumption models, and stronger go-to-market execution,” concludes Negib Abouhabib, General Manager, BPS.

Dell will supply state-of-the-art client devices, workstations and infrastructure systems, supporting Ankabut’s mission

Ankabut, the UAE’s leading education cloud and network service provider, and Dell Technologies have signed a memorandum of understanding (MoU) to advance technological innovation within the country’s education sector.

The agreement, signed by Walid Yehia, Managing Director – South Gulf, Dell Technologies, and Tarek Jundi, CEO, Ankabut, underscores a shared commitment to redefine the learning experience in the UAE through cutting-edge technology and strategic collaboration.

Operating out of its state-of-the-art data centre at Khalifa University, Ankabut provides a wide array of services ranging from networking and virtualization to cloud, application services, security and managed support services.

Through this collaboration, Ankabut will leverage Dell’s GPU-as-a-service capabilities to empower academic institutions with accelerated computing for data-intensive research and advanced learning applications. Dell will also deliver high-performance computing systems and the latest client devices and workstations. These solutions will enable Ankabut to empower educational institutions across the UAE with secure, scalable and efficient technological infrastructures.

With this MoU, Ankabut aims to expand its capabilities as a Cloud Solution Provider, enabling more institutions to access high-performance computing and GPU resources that expand research frontiers, enhance teaching outcomes and foster innovation across the UAE’s education ecosystem.

Walid Yehia, Managing Director – South Gulf, Dell Technologies, said, “The UAE continues to set benchmarks for innovation in education through its commitment to technology-driven learning. Our collaboration with Ankabut aligns perfectly with this national vision, combining Dell’s advanced infrastructure solutions with Ankabut’s leadership in education networks to create a future-ready digital ecosystem for students, researchers and educators across the country.”

Tarek Jundi, CEO, Ankabut, said, “This collaboration marks a pivotal step in advancing digital learning in the UAE. By integrating Dell Technologies’ advanced computing solutions with Ankabut’s cloud and connectivity expertise, we are empowering institutions to reimagine how education is delivered and experienced. Together, we’re enabling a smarter, more connected academic community that supports the UAE’s ambitions for a knowledge-based economy.”

Uptime Institute, the Global Digital Infrastructure Authority, announced that Khazna Data Centers, a global leader in hyperscale digital infrastructure, has achieved the Uptime Institute Tier III Certification of Design Documents (TCDD) award for its newest 100 MW AI-optimized data center, QAJ01, set to be the first certified AI data center with liquid cooling in the Middle East and North Africa region.

This state-of-the-art development features 20 data halls, each delivering 5 MW of IT capacity, purpose-built to meet the demands of next-generation artificial intelligence (AI) workloads. The certification underscores Khazna’s commitment to designing world-class, resilient, and efficient data center infrastructure in alignment with the industry’s most rigorous global standards.

Located in Ajman, United Arab Emirates, the new facility has been designed with advanced liquid-cooling systems to support

the high rack densities and thermal loads required by large-scale AI training and inference applications, while optimizing energy efficiency and maintaining operational resilience.

“Achieving Tier III certification for our Ajman facility reflects Khazna’s deep commitment to engineering excellence and operational resilience as we scale to meet the AI era. QAJ1 sets a new regional benchmark, combining high-density readiness, advanced liquid cooling, and globally certified design to support the next generation of compute. It is a strategic milestone in our mission to deliver future-ready infrastructure,” said Abdulmajeed Harmoodi, Chief Technology Officer, Khazna Data Centers.

“This Tier Certification marks an important advancement for the regional digital infrastructure ecosystem,” said Mustapha Louni, CBO, Uptime Institute. “Khazna’s AI-optimized facility integrates liquid cooling and high-density configurations while maintaining Tier III level resilience. It demonstrates how data centers can evolve to meet the accelerating compute needs of AI without compromising reliability or efficiency.”

Uptime Institute’s Tier Certification of Design Documents (TCDD) is the first step in the Institute’s globally recognized Tier Certification process, validating that a facility’s design plans meet the requirements of its Tier Standard for Topology.

DXC proves AI at real enterprise scale through its own global deployment of Amazon Quick, supporting 115,000 employees across 70 countries.

DXC Technology, a leading enterprise technology and innovation partner, has announced the completion of DXC’s enterprise-wide deployment of Amazon Quick, the agentic AI-powered digital workspace, across its global workforce of 115,000 employees operating in 70 countries and the launch of the DXC Amazon Quick Practice, a new business unit focused on helping customers worldwide operationalize AI at scale across multivendor enterprise ecosystems.

Drawing on the same experience, operating models, and governance frameworks used inside DXC, the company helps customers move AI from pilot programs into full-scale production with greater speed and confidence. As part of the rollout, DXC introduced an AI Advisor Agent that provides employees with a single access point for AI-related knowledge, tools, prototypes, and feedback and is now used by more than 40,000 engineers. The rollout also includes role-based AI advisors, such as a Supply Chain Advisor that delivers fast, trusted operational guidance by connecting employees directly to validated knowledge, enabling teams to move faster with confidence.

“Deploying Amazon Quick across DXC’s global workforce gave us the opportunity to pressure-test at true enterprise scale. That experience now directly informs how we help our customers move beyond pilots and activate AI across their enterprises,” commented Russell Jukes, Chief Digital Information Officer, DXC.

Russell Jukes, Chief Digital Information Officer, DXC

DXC is launching the DXC Amazon Quick Practice to help enterprises deploy AI with greater speed, confidence, and control. Powered by more than 10,000 Amazon-certified professionals with over 1,000 trained and certified across Amazon AI specializations and DXC’s enterprise AI delivery programs, the practice combines proven deployment methodologies, Amazon-native frameworks, and governance models validated within DXC’s own operations.

Cross-functional teams of AI architects, automation designers, and adoption leads partner with customers to identify high-impact use cases and rapidly deploy secure, pre-built AI capabilities spanning AI-powered research, advanced business intelligence, and agent-ready automation.

New index-based pricing model aims to offer governments worldwide equitable access to world class technology, software independence

WSO2, a leader in enterprise digital infrastructure technology, announced a new ‘Fair Pricing for Governments’ initiative, aimed at supporting public-sector organizations’ worldwide access to high-quality, ethical digital services at prices aligned with local economic realities.

The initiative is based on a simple principle: government technology fees should be proportional to the average citizen’s income to ensure fairness. By introducing a standardized, transparent pricing model for government customers, WSO2 seeks to eliminate arbitrage, reduce opportunities for corruption, and ensure that all eligible public-sector entities receive the best possible price.

“Our goal is to facilitate software independence and help governments worldwide access high-quality, ethical digital services,” said Dr. Sanjiva Weerawarana, Founder, CEO and Chief Product Officer at WSO2. “We believe that fair pricing must reflect the economic context in which governments operate. By aligning fees with national income levels and applying a consistent, transparent framework globally, we are taking a concrete step toward enabling more equitable digital modernization.”

The initiative builds on WSO2’s long-standing commitment to open-source principles, ethical technology practices, and inclusive modernization. By standardizing government pricing globally and anchoring it to internationally recognized economic indicators, WSO2 aims to remove financial barriers to adoption, strengthen digital sovereignty, and enable public-sector organizations to securely and sustainably transform critical digital services.

At the core of the initiative is an index-based pricing methodology that aligns WSO2’s government subscription fees with World Bank Country Income Classifications, using W3C government membership fees as a baseline for calculation. Under this model, public sector entities can choose to receive standardized, non-negotiable discounts that reflect national income levels. For example, high-income countries will receive a 20% discount; upper-middle-income countries will be eligible for a 35% discount; lower-middle-income countries will receive a 50% discount; and low-income countries will be entitled to a 62% discount.

Dr. Sanjiva Weerawarana, Founder, CEO & Chief Product Officer, WSO2
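For readers who want to see what the tiered discounts mean in practice, the short Python sketch below applies the four percentages quoted in the announcement to a list price. It is illustrative only: the function name `discounted_fee`, the income-group labels used as keys, and the idea of applying the discount to a single list price are assumptions made for this example. The announcement does not describe how the W3C baseline enters the calculation, so that step is left out.

```python
# Discount percentages quoted in the announcement, keyed by World Bank
# country income classification. The key spellings are an assumption.
DISCOUNT_PERCENT = {
    "high": 20,
    "upper-middle": 35,
    "lower-middle": 50,
    "low": 62,
}


def discounted_fee(list_price: float, income_group: str) -> float:
    """Return the fee after the income-based discount.

    Assumption: the discount applies to a single list price. The
    announcement does not spell out the full calculation.
    """
    try:
        percent = DISCOUNT_PERCENT[income_group]
    except KeyError:
        raise ValueError(f"unknown income group: {income_group!r}") from None
    # Integer percentages keep the arithmetic exact for round prices.
    return list_price * (100 - percent) / 100


if __name__ == "__main__":
    # Hypothetical list price of 100,000 in any currency.
    for group in DISCOUNT_PERCENT:
        print(f"{group}: {discounted_fee(100_000, group):,.0f}")
```

Keeping the percentages in a single lookup table mirrors the "standardized, non-negotiable" framing: the discount depends only on the income classification, not on the individual customer.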

The company introduces its four-pillar approach to securing the AI transformation of enterprises

Check Point Software Technologies introduced its four-pillar strategy designed to help organizations securely navigate the AI era, alongside three strategic acquisitions that reinforce its platform and demonstrate execution of this vision.

At the core of this strategy are four pillars that reflect how organizations operate today:

Hybrid Mesh Network Security protects distributed enterprises across hybrid cloud, data centers, branch networks, and internet environments through a unified, AI-powered architecture.

Workspace Security focuses on securing the modern digital workspace — including endpoints, browsers, email, SaaS applications, and collaboration platforms — where users increasingly interact with AI technologies.

Exposure Management provides comprehensive visibility into organizational attack surfaces, enabling risk prioritization based on business context rather than isolated alerts.

AI Security protects the full lifecycle of AI adoption, including employee usage, enterprise AI applications, and autonomous AI agents.

These capabilities are delivered through Check Point’s open platform approach, often described as an “open garden” model, designed to integrate seamlessly with existing security ecosystems while providing prevention-first protection across multi-vendor environments.

To reinforce this strategy, Check Point announced the acquisitions of Cyata, Cyclops, and Rotate.

Cyata has developed an AI agent identity management platform that enables organizations to discover active AI agents, map permissions, monitor behavior, and enforce automated security policies. Cyclops provides a Cyber Asset Attack Surface Management platform that consolidates data across environments to deliver comprehensive asset visibility and risk prioritization. Rotate, an all-in-one platform purpose-built for managed service providers, enhances centralized protection across distributed workforces and SaaS environments.

Roi Karo Chief Strategy Officer, Check Point

Roi Karo, Chief Strategy Officer at Check Point, said, “As AI reshapes how organizations operate and how threats evolve, security must be fundamentally rethought. Our four-pillar strategy provides a clear framework to secure networks, workspaces, exposure risks, and AI-driven environments as a unified platform. The acquisitions we are announcing today demonstrate how we are executing on this vision and helping customers securely navigate the AI transformation.”

ElOuazzani will lead Censys’s expansion and position it as the region’s trusted internet intelligence partner, along with Rajaee Al-Dalgamouni and Ahmed Ehlayel

Censys has appointed Meriam ElOuazzani as its first dedicated Vice President for the Middle East, Turkey, and Africa (META) region. In her new role, Meriam will lead the company’s end-to-end regional growth strategy, including revenue expansion, partnerships and ecosystem building, as well as establishing the organization’s position as the default external attack surface intelligence layer for organizations across the region.

With over two decades of extensive experience in cybersecurity and enterprise technology, Meriam ElOuazzani has consistently built and scaled markets across the region, assembling the teams, channel ecosystems, and marketing blueprints. Her career trajectory reflects her strong regional leadership through her roles as Senior Regional Director at SentinelOne and, before that, multiple leadership roles at VMware across MENA. She has also previously led the Regional Product Sales for Mobility across the Middle East at Cisco Systems. At Censys, Meriam will focus on expanding strategic partnerships across government and enterprises, including channels, MSSP, and hyperscaler alliances, to scale efficiently across diverse markets.

Meriam ElOuazzani, VP META, Censys said, “Over the past two decades in this region, I’ve witnessed firsthand how the right intelligence transforms the security operations entirely. Censys’s internet intelligence platform equips security teams with authoritative, real-time insight into exposure and adversary activity, replacing assumptions with actionable confidence. My mission is to establish Censys as a trusted partner across META, enabling the shift from reactive defense to proactive intelligence.”

Censys helps security teams identify exposures, monitor changes, and detect threats before they are exploited by continuously mapping internet-facing assets, services, and critical infrastructure. In the Middle East, Censys has already partnered with Rilian Technologies to bring its internet intelligence and ICS/OT capabilities to sovereign nations and critical infrastructure.

Censys has also appointed Rajaee Al-Dalgamouni as Regional Sales Director, META and Ahmed Ehlayel as Manager, Solutions Engineering, META to strengthen the regional team with Meriam.

Customers will benefit from capabilities that streamline secure software development and strengthen application protection at scale

AmiViz, a leading cybersecurity and AI-focused value-added distributor, announced a strategic partnership with Veracode, a global leader in application risk management, to distribute Veracode’s platform and help organisations secure modern software at scale. The partnership will enable Veracode to expand its presence across the Middle East, East Africa, and Libya. The alliance between trusted partners will leverage their complementary expertise to ensure customers receive the highest standards of software security.

AmiViz has selected Veracode as a partner for its status as a pioneer in holistic application risk management, with nearly two decades of proprietary data, expertise, and innovation. Veracode empowers development and security teams to collaborate seamlessly, enabling them to build, secure, and maintain software from code to cloud. Leveraging Veracode’s cutting-edge technology and AI-powered remediation platform, organizations gain precise, actionable insights into exploitable risks, achieve real-time vulnerability remediation, and proactively reduce security debt at scale. This partnership underscores AmiViz’s commitment to integrating advanced security solutions that align with modern software development and operational needs.

“Application security has become a board-level priority as organizations embrace AI-driven development,” said Ilyas Mohammed, COO of AmiViz. “By partnering with Veracode, we are equipping our partners and customers with a proven platform that embeds security directly into development workflows, enabling faster innovation with reduced risk.”

The partner programme provides solutions and services to get partners up and running straight away, with minimal impact to their existing business.

“Our partnership with AmiViz empowers security leaders across the Middle East and Africa to find, fix, and govern application risk at scale using Veracode’s integrated software security solutions,” said Michael Steinmetz, Senior Vice President of EMEA & APAC at Veracode. “Together, we’ll deliver transformative technology innovations, an enhanced customer experience, and deep, technical expertise to help organisations strengthen their security posture.”

98% of UAE CIOs say their professional reputation or career trajectory will be shaped by their success with AI, the highest globally

Sid Bhatia Area VP General Manager META, Dataiku

New global research from Dataiku, conducted by Harris Poll, The 7 Career-Making AI Decisions for CIOs in 2026, reveals that Artificial Intelligence is no longer just a business priority for CIOs; it is rapidly becoming their personal accountability test. Nowhere is the pressure more intense than in the UAE, where CIOs increasingly believe their careers, credibility, and organisational standing will be defined by how successfully they govern and deliver value from AI over the next 18 months.

Nearly all UAE CIOs (98%) say their professional reputation will be shaped by their success with AI, while 85% believe their role could be at risk if their organisation fails to deliver measurable business gains from AI in the next one to two years. This pressure is reinforced at the top, with 92% expecting CEO compensation to be directly linked to AI outcomes, signalling that accountability is cascading down from the boardroom.

This heightened scrutiny comes as UAE organisations surge ahead with AI adoption. Today, 65% of CIOs say AI agents are embedded in business-critical workflows, while reporting fewer day-to-day challenges with AI explainability than their global peers. Only 22% say they are frequently, or almost always, asked to justify AI outcomes they cannot fully explain (the lowest figure globally), suggesting a strong level of internal trust in AI-driven decision-making today.

However, the findings indicate that this confidence may mask growing exposure. The UAE ranks highest globally for concern that insufficient AI explainability could trigger a crisis that erodes customer trust or brand credibility, with nearly two-thirds (63%) saying this outcome is very likely or certain. At the same time, three-quarters of UAE CIOs say their organisation would face high financial distress if the “AI bubble” were to burst, underscoring just how mission-critical AI has become to enterprise success in the country.

The pressure on CIOs is further compounded by the rapid decentralised adoption of AI across the workforce. More than three-quarters (78%) say employees are creating AI agents and applications faster than IT teams can govern them, while only one in five report having complete oversight of all AI agents in use across the organisation. This dynamic leaves CIOs personally accountable for systems they may not fully control, increasing the importance of traceability, governance, and visibility.

Encouragingly, the research suggests UAE organisations are beginning to respond. Two-thirds (67%) of CIOs say their organisations always require human sign-off before AI systems take action in business-critical workflows, and the UAE ranks first globally for having formal, documented human-in-the-loop procedures. Meanwhile, 65% believe it is at least very likely, if not certain, that governments will introduce AI explainability requirements this year, reinforcing the belief that the next phase of AI advancement in the country will be defined less by experimentation and more by defensibility.

“CIOs are moving from experimentation into accountability faster than most organisations expected,” said Florian Douetteau, Co-founder and CEO of Dataiku. “The pressure is real, and the timeline is tight, but there is a path to success. It favours CIOs who act decisively now, building AI systems they can explain, govern, and stand behind before accountability is imposed rather than chosen.”

Despite the rising pressure, UAE CIOs remain cautiously optimistic. They are the most confident globally that their current AI strategies will remain valid over the next year, suggesting that while the stakes are high, many believe they are moving in the right direction, provided they can maintain control as AI adoption accelerates.

“For CIOs in the UAE, the conversation is shifting from ‘how fast can we deploy AI?’ to ‘how confidently can we stand behind it?’” said Sid Bhatia, Area Vice President & General Manager – Middle East, Turkey & Africa at Dataiku. “If 2024 was the year enterprises proved they could build with AI, and 2025 was the year they proved they could deploy it, then 2026 is the year they must prove they can govern, defend, and measure it, and do so at scale, under scrutiny, and with consequences attached. CIOs who focus on accountability and transparency now will be far better positioned to meet board expectations, regulatory scrutiny, and the realities of enterprise-wide AI adoption.”

MENA region becomes Zoho’s second fastest-growing market.

Zoho Corporation marked its 30th anniversary by announcing two major milestones: the company, which comprises the Zoho, ManageEngine, Qntrl, and TrainerCentral brands, now supports more than one million paying customers and over 150 million users worldwide.

In 2025, Zoho Corporation recorded a 32% year-on-year increase in customers and a 20% rise in revenue. Zoho also revealed that the Middle East and North Africa is the company’s second fastest-growing market globally, with a 41% CAGR over the past five years. The UAE is among its top markets, recording a CAGR of over 77% in customer growth and 45% revenue growth since 2021.

"Being bootstrapped, private, and built entirely in-house makes Zoho an outlier among competitors," said Sridhar Vembu, Co-founder and Chief Scientist, Zoho Corporation. "But vendors don't need our help, businesses do, which is why delivering customer value has, for 30 years, been Zoho Corporation's North Star. Before any innovation, strategy, or guiding principle becomes a product, pivot, or policy, it must first affirm the question, 'Will this help businesses?' We are incredibly grateful that companies around the world have responded so positively to our customer-first approach over the past three decades, and will continue to meet the evolving needs of businesses with powerful, scalable, and affordable solutions."

Since setting up its first regional office in Dubai, Zoho has strengthened its commitment through significant investments in local infrastructure, support, and product localisation as part of its ‘transnational localism’ strategy.

“Zoho’s expansion in the MENA region represents a significant milestone in our global journey. We are proud that our operations here, from our growing teams to strategic partnerships and local investments, have become a key driver of Zoho’s 30 years of success,” said Hyther Nizam, CEO of Zoho, Middle East and Africa (MEA). “Our commitment to the region goes beyond providing technology, it’s about empowering businesses, supporting digital transformation, and fostering innovation. The trust customers place in Zoho has been the foundation on which we continued to deliver scalable, secure, and locally relevant solutions that help organisations of all sizes thrive in an increasingly digital world.”

The company has committed AED 100 million to expand its presence in the UAE, including the setup of two data centres in Abu Dhabi and Dubai this year. Complementing this infrastructure, Zoho has invested nearly AED 80 million in strategic partnership initiatives in UAE to drive digital transformation across the Emirates, collaborating closely with key government entities such as the Department of Economic Development (DED), International Free Zone Authority (IFZA), Dubai Culture, Shams Free Zone, Umm Al Quwain Chamber of Commerce and Industry and Ras Al Khaimah Economic Zone (RAKEZ).

Hyther Nizam CEO, MEA, Zoho

Since expanding in the MENA region, Zoho has enabled over 10,000 businesses to adopt cloud technology through strategic partnerships with local governments and public and private sector entities across many countries, including the UAE, KSA, Egypt, Bahrain, Qatar, Jordan, Morocco and Lebanon. These partnerships have been forged to support governments’ digitalisation agendas and incentivise businesses to adopt cloud technologies.

Through its CONNECT platform and AI-infused digital twin capabilities, AVEVA is enabling the utilities industry to transition from fragmented operations to unified, intelligence-driven systems.

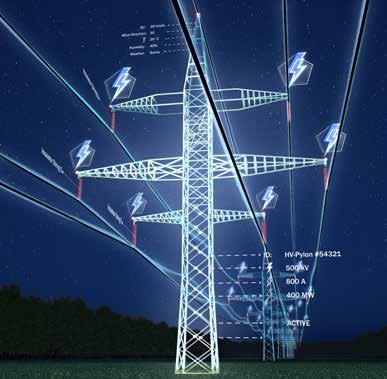

There is a fundamental shift in progress within the utilities industry. As energy systems become more distributed, data-intensive, and sustainability-driven, the traditional approaches to managing infrastructure are increasingly falling short. A new operating model is emerging that is defined by real-time intelligence, continuous optimisation, and the ability to turn data into decisions at scale.

As Nayef Bou Chaaya, Vice President, Middle East, Africa, and Turkey at AVEVA, notes, “There is a growing urgency around sustainability targets, particularly in emissions tracking and reporting.”

As this transition unfolds, AVEVA is positioning industrial intelligence as a foundational layer for utilities navigating the resulting complexity. Through its CONNECT platform and AI-infused digital twin capabilities, the company is bringing together data, analytics, and operational workflows to help utilities move from fragmented, reactive environments towards more unified, decision-driven systems. As the Middle East advances its sustainability agenda, supported by initiatives such as the UAE’s Net Zero 2050 strategy, this shift towards more connected and adaptive operations is becoming increasingly relevant.

Across generation, transmission, and distribution networks, utilities already capture vast volumes of operational data, but much of it resides in separate systems. This can significantly limit organisations’ ability to translate data into timely and meaningful action. In complex environments, operators may have visibility into events as they occur, but they may not always have the context required to determine the next course of action or assess how urgent it is.

Digital tools have significantly improved visibility across operations, with dashboards, alerts, and monitoring systems now widely deployed. However, there is an increasing focus on moving beyond visibility towards making sense of data at scale.

AVEVA’s approach focuses on connecting data across IT, OT, and engineering environments into a unified operational layer. This reflects a broader emphasis on enabling more consistent, system-level understanding of operations, rather than isolated views tied to individual systems or functions.

Within this context, scaling digital capabilities beyond individual use cases appears to be an important factor in unlocking value. The shift from fragmented visibility towards more connected intelligence is increasingly shaping how utilities are evolving their operational models.

At the core of this shift is the growing adoption of digital twins. “Digital twins are enabling utility organizations to transform sustainability tracking from periodic reporting to real-time, data-driven exercise,” says Nayef. “AVEVA's digital twin solutions combine data, models, and analytics to enable predictive maintenance, optimizing generation, transmission and distribution for utility companies.”

Digital twins are evolving from static visualisation tools into continuously updated representations of physical assets and systems. By integrating operational data with engineering models and analytics, they provide ongoing visibility into performance and system behaviour.

In the case of utilities, this capability appears to be particularly relevant for sustainability and operational efficiency. The ability to monitor performance continuously allows organisations to identify inefficiencies, optimise asset usage, and align operations more closely with environmental targets.

“This helps in increasing the use of renewables and reducing carbon footprint of existing facilities while strengthening regulatory compliance,” Nayef adds.

This suggests a broader shift in how sustainability is approached, which is moving from periodic reporting towards ongoing, operationally embedded tracking and optimisation.

While digital twins provide analytical depth, their effectiveness is closely tied to the availability of unified, high-quality data.

Utilities have traditionally operated across multiple domains, including IT, OT, and engineering systems. These domains often function independently, resulting in fragmented data environments that can limit visibility and coordination.

“Power producers and utilities face the challenge of integrating renewables into generation portfolios and increasingly complex grids, and stringent sustainability mandates,” Nayef explains. “Our industrial intelligence platform, CONNECT aggregates real-time data and offers great visualization and analysis capabilities, enabling utilities to optimize asset performance, reduce operating costs, and ensure reliability.”

CONNECT is designed to integrate data across these domains into a unified edge-to-cloud architecture. This enables more consistent access to near real-time information across sites, systems, and teams.

“Built for the cloud, CONNECT streamlines the flow of near real-time data from diverse sources into a unified edge-to-cloud environment, giving teams flexible, secure access to high-quality data across sites, solutions, and trusted partners.”

This type of unified data foundation reflects a growing focus on enabling more coordinated and informed decision-making across the enterprise.

Utility environments are increasingly described as interconnected systems that span generation, transmission, distribution, markets, and end users.

AVEVA’s broader perspective highlights the importance of connecting these elements into a coherent and adaptive system through technologies such as IoT, interoperable data platforms, digital twins, and analytics.

When these layers are connected, it becomes possible to create a shared view of operations across the organisation. This can support more consistent decision-making, improve coordination between functions, and enable faster responses to changing conditions.

The ability to connect operational data across the value chain also appears to play a role in improving efficiency and reliability. By enabling information to flow more freely between systems and stakeholders, utilities may be better positioned to manage complexity and respond to operational challenges.

Resilience continues to be a key focus area for utilities, particularly as operational environments become more dynamic.

Factors such as fluctuating demand, evolving infrastructure requirements, and changing risk landscapes are contributing to increased complexity. In this context, the ability to respond quickly and effectively to disruptions is becoming increasingly important. Integrated platforms and digital twins support this by combining real-time and historical data, enabling utilities to forecast demand, optimise resource allocation, and respond to operational changes more effectively.

Emerging approaches that combine edge-based decision-making with centralised analytics and modelling suggest a model where both local responsiveness and system-wide optimisation can be achieved. This reflects how operational intelligence is being applied across different layers of the energy system.

Often, digital initiatives within utilities are implemented as targeted projects, addressing specific use cases or operational challenges. While these initiatives can deliver value, scaling them across the organisation can present challenges.

Fragmentation can arise when multiple tools and systems are deployed independently, limiting the ability to achieve system-level benefits.

The move towards platform-based approaches reflects an effort to address this challenge by providing a more integrated foundation for digital capabilities. This may involve aligning technology, processes, and governance structures to support scalability and consistency across the enterprise.

As utilities continue to pursue net-zero targets, sustainability is increasingly being approached as an operational priority.

Digital twins and unified data platforms enable continuous monitoring of emissions, energy usage, and system performance. This supports a shift from periodic reporting towards ongoing optimisation.

By integrating sustainability metrics into operational workflows, utilities may be able to align environmental objectives more closely with performance and efficiency goals.

The transformation underway in the utilities sector reflects a broader shift in how operations are structured and managed.

From fragmented systems to more unified platforms, and from reactive responses to predictive insights, the utilities industry is heading towards more intelligent and adaptive operating models. AVEVA’s approach of combining digital twins, unified data platforms, and AI-driven analytics aligns with this shift, providing a framework for how utilities can connect data, systems, and decision-making.

As energy systems continue to evolve, the ability to translate data into actionable insight is emerging as a key capability. Operational intelligence is now viewed not as a useful or nice-to-have additional layer, but as a core component of how next-generation utilities manage performance, resilience, and sustainability in parallel.

Siddhesh Nagaonkar, CISO at Direct Honest Safe International Exchange FZE, highlights how evolving threats and AI-driven attacks are reshaping how organisations approach identity security.

Do you agree that identity has become the primary attack surface in today’s enterprise environments? What is driving this shift?

Absolutely. In the modern perimeterless enterprise, identity is the new firewall. The shift is driven by the rapid adoption of cloud-native architectures, remote work and the proliferation of SaaS applications. When users, devices and workloads are everywhere, traditional network boundaries vanish. Attackers have realized that it is far easier to log in using compromised credentials than to break in through hardened network defenses.

How are attacks such as MFA fatigue, credential phishing, and AI-driven deepfakes changing the nature of identity-based threats?

These attacks exploit the human element, the weakest link in the security chain. MFA fatigue turns a security control into a nuisance, tricking users into approving unauthorized access. AI-driven deepfakes and sophisticated phishing have reached a level of realism where traditional “spot the red flag” training is no longer enough. We are moving from a world of technical hacking to psychological manipulation where the scale and speed of attacks are now powered by AI.

Are organizations in the region adequately prepared to detect and respond to these evolving identity threats?

Preparation is a spectrum. While many organizations in the UAE and the broader region have implemented basic IAM and MFA, there is still a significant gap in Identity Threat Detection and Response (ITDR). Many are prepared for the known (standard logins) but struggle with the unknown, such as lateral movement after a credential compromise or session hijacking. We need to move beyond static access controls to continuous, behavior-based monitoring.

How significant is the challenge of identity sprawl across multicloud, SaaS, and partner ecosystems?

Identity sprawl is a silent killer of security posture. Managing thousands of identities across Azure, AWS and dozens of SaaS platforms creates “dark identities”: unused or over-privileged accounts that are goldmines for attackers. Without a centralized identity plane, visibility is fragmented, making it nearly impossible to enforce a consistent security policy or perform effective audits.

What are the most common gaps you see in how organizations manage identity and access today?

The most prevalent gaps are over-privileged accounts (lack of Least Privilege) and the neglect of non-human identities (service accounts, APIs and bots). Additionally, many organizations fail to integrate identity into their broader SOC operations, treating IAM as an administrative task rather than a core security function.

Siddhesh Nagaonkar CISO, Direct Honest Safe International Exchange FZE

What does an effective identity-first security architecture look like in practice?

It is built on the foundation of Zero Trust, meaning that security is enforced through continuous validation and controlled access. In practice, this involves a centralized identity provider (IdP) serving as a single source of truth for all identities, adaptive multi-factor authentication (MFA) that is triggered based on risk factors such as location, device health, and user behavior, and just-in-time (JIT) access that grants elevated privileges only when required and for a limited duration. This is further reinforced by continuous verification, where no session is assumed to be secure solely based on the initial login, ensuring ongoing validation throughout the user’s interaction.
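
For readers who want to see the idea in concrete terms, the adaptive MFA and just-in-time access described above could be sketched roughly as follows. This is an illustrative outline only, not a description of any vendor's product: the `LoginContext` fields, the weights, the thresholds, and the 30-minute default are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class LoginContext:
    """Hypothetical risk signals gathered for one access request."""
    known_location: bool     # request comes from a location seen before for this user
    device_healthy: bool     # device passed posture checks
    behavior_anomaly: float  # 0.0 (typical) to 1.0 (highly unusual)


def risk_score(ctx: LoginContext) -> float:
    """Combine the signals into a 0..1 score using illustrative weights."""
    score = 0.0
    if not ctx.known_location:
        score += 0.3
    if not ctx.device_healthy:
        score += 0.3
    score += 0.4 * ctx.behavior_anomaly
    return round(score, 2)


def access_decision(ctx: LoginContext) -> str:
    """Map the score to an action; the thresholds are assumptions."""
    score = risk_score(ctx)
    if score >= 0.7:
        return "deny"
    if score >= 0.3:
        return "step_up_mfa"  # ask for an additional factor
    return "allow"


@dataclass
class JitGrant:
    """An elevated role that expires on its own."""
    role: str
    expires_at: datetime

    def is_active(self, now: datetime) -> bool:
        return now < self.expires_at


def grant_jit(role: str, now: datetime, minutes: int = 30) -> JitGrant:
    """Grant a role for a limited window instead of standing privilege."""
    return JitGrant(role=role, expires_at=now + timedelta(minutes=minutes))


if __name__ == "__main__":
    print(access_decision(LoginContext(True, True, 0.0)))
    print(access_decision(LoginContext(False, True, 0.1)))
    now = datetime(2026, 1, 1, 9, 0, tzinfo=timezone.utc)
    grant = grant_jit("db-admin", now)
    print(grant.is_active(now + timedelta(minutes=45)))
```

Calling `access_decision` on every sensitive request, rather than only at login, is one simple way to approximate the continuous verification mentioned above.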

How should organizations integrate identity with broader security frameworks such as endpoint, network, and cloud security?

Identity should be the connective tissue. For example, an endpoint security alert (e.g., malware detected) should automatically trigger a reauthentication requirement or a total lockout of that user’s identity across the network and cloud apps. This “XDR plus Identity” approach ensures that a compromise in one silo is instantly mitigated across the entire ecosystem.

How can AI be leveraged to strengthen identity security, and where does it introduce new risks?

AI is our greatest ally in behavioral analytics, detecting an “impossible travel” scenario or a login at 3 AM that deviates from a user's normal pattern. However, it introduces the risk of AI-powered social engineering and the ability for attackers to automate credential stuffing at an unprecedented scale. We are in an AI arms race where our defensive models must be faster and smarter than the offensive ones.
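
The “impossible travel” check mentioned above can be illustrated with a simple rule: if two consecutive logins for the same user imply a travel speed no aircraft could achieve, the second login is suspicious. The sketch below is a minimal, rule-based version under stated assumptions; the `LoginEvent` type, the 900 km/h speed ceiling, and the 50 km tolerance for geolocation noise are hypothetical choices, and production behavioral analytics would typically layer learned models on top of rules like this.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timezone

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_SPEED_KMH = 900.0  # assumption: roughly airliner cruising speed
MIN_DISTANCE_KM = 50.0           # assumption: ignore small geolocation jitter


@dataclass
class LoginEvent:
    when: datetime
    lat: float
    lon: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def is_impossible_travel(prev: LoginEvent, curr: LoginEvent) -> bool:
    """Flag the later login if the implied speed exceeds the plausible maximum."""
    distance = haversine_km(prev.lat, prev.lon, curr.lat, curr.lon)
    if distance < MIN_DISTANCE_KM:
        return False
    hours = (curr.when - prev.when).total_seconds() / 3600.0
    if hours <= 0:
        return True  # far-apart logins at the same instant
    return distance / hours > MAX_PLAUSIBLE_SPEED_KMH


if __name__ == "__main__":
    dubai = LoginEvent(datetime(2026, 1, 1, 9, 0, tzinfo=timezone.utc), 25.2048, 55.2708)
    london = LoginEvent(datetime(2026, 1, 1, 10, 0, tzinfo=timezone.utc), 51.5074, -0.1278)
    print(is_impossible_travel(dubai, london))
```

A flagged event would not necessarily block the user outright; it could instead feed a risk score or trigger a reauthentication prompt.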

As enterprises shift towards integrated, outcome-driven technology environments, MBUZZ is repositioning itself in the market. Sabir Saleem, CEO at MBUZZ, highlights how the company is expanding its focus across AI, datacentre, networking, security, and intelligent computing to align capabilities with real business requirements.

How is MBUZZ redefining its place in the market as a provider of complete, business-aligned technology solutions?

MBUZZ’s evolution has been both deliberate and market-led. Having built a strong foundation in telecommunications over more than a decade, the company has progressively expanded its capabilities in line with how technology demand has matured across the region. Today, that means going well beyond product availability into areas such as HPC, AI infrastructure, client compute, enterprise storage, cybersecurity, and smart city solutions. This transition did not happen by accident; it has been driven by a clear strategic direction, a committed team, and a deep understanding of where the market is moving.

What we have consistently observed is that businesses are no longer looking for isolated technologies. They are looking for complete, end-to-end solutions that can support performance, scalability, resilience, and long-term business value. MBUZZ has responded to this shift by strengthening its solution capabilities, aligning closely with market trends, and building dedicated business units around high-growth, high-impact technology domains. In parallel, we have formed strategic partnerships with leading global brands that complement this vision and enable us to deliver more relevant, workload-focused solutions into the market.

As a result, MBUZZ today operates more as a business-aligned technology solutions provider. Our solutions are designed to serve a broad spectrum of users, from public sector and enterprise environments to private businesses, professionals, and even end consumers. Our role is to bring together the right mix of technologies, expertise, and ecosystem partnerships to help customers build infrastructure that is not only technically sound, but also aligned with the realities of the modern digital enterprise. That is where we believe our value increasingly lies: in helping the market move forward with greater clarity, integration, and confidence.

As enterprises increasingly invest in integrated, outcome-driven technology environments, how is MBUZZ positioning itself as a solutions provider across the domains of AI, datacentre, networking, security, and advanced computing?

As enterprises increasingly invest in integrated, business-aligned technology environments, MBUZZ is positioning itself with a clear objective: to bridge the gap between business needs and the technologies required to address them effectively. Our role is not simply to offer access to products, but to create a structured pathway through which customers can identify, deploy, and scale the right solutions across AI, datacentre, networking, security, and advanced computing. That is enabled by a combination of strong regional channel reach, responsive customer support, certified integration capabilities, strategic global partnerships, and an ecosystem designed to make even geographically complex deployments more seamless and practical.

Sabir Saleem CEO, MBUZZ

A key part of this approach is MBUZZ Labs, which reflects how seriously we take end-to-end solution building. Through MBUZZ Labs, we provide a high-value environment for advanced integration, certified customization, solution validation, and functional alignment, ensuring that technologies are not only selected correctly, but engineered to perform in the way the business actually requires. From infrastructure tuning and workload-specific configuration to interoperability planning and solution-level optimization, MBUZZ Labs strengthens our ability to approach customers with greater technical depth and a more complete execution model. It is an important extension of our strategic approach, allowing us to move beyond supply and into true solution enablement.

This is ultimately what defines MBUZZ’s position in the market. We have built a portfolio and partner ecosystem that are closely aligned with the priorities of the region, and we support that with the operational structure, technical capability, and market understanding needed to turn those technologies into meaningful outcomes.

How is the partnership with NVIDIA advancing its strategic AI agenda in the region, and what impact is this creating for partners and customers investing in AI-led growth?

MBUZZ’s partnership with NVIDIA is central to how we are advancing our AI agenda in the region. NVIDIA today offers a full-stack AI ecosystem spanning accelerated computing, AI software, DGX platforms, AI factories, high-performance networking, professional visualization, RTX workstations, virtual GPU and virtual workstation technologies, cloud AI, and enterprise AI deployment frameworks. That breadth is important because customers are entering AI from very different points — from gaming and creator platforms, graphics and laptops, and professional workstations, to AI-ready data centers, hybrid AI workspaces, cloud-connected infrastructure, and large-scale enterprise AI environments.

What makes the partnership especially relevant for MBUZZ is that it allows us to support the AI journey across multiple layers. For production AI, NVIDIA AI Enterprise provides an end-to-end software platform with frameworks, NIM microservices, orchestration, and infrastructure management for scalable deployment. For organizations building AI factories and model development environments, the NVIDIA DGX platform delivers full-stack infrastructure optimized for enterprise AI workflows. At the operational layer, NVIDIA Run:ai adds GPU orchestration and resource optimization, helping enterprises improve utilization, manage AI workloads dynamically, and scale across hybrid environments more efficiently. At the user and workspace layer, NVIDIA RTX Virtual Workstation, vGPU software, AI Virtual Workstation, and RTX PRO workstations extend GPU acceleration into distributed environments for AI development, simulation, rendering, visualization, and hybrid VDI use cases.

For MBUZZ, this creates real impact for both partners and customers. It means we can engage across the full NVIDIA ecosystem — from software and tools, networking, embedded and edge systems, data center infrastructure, cloud-aligned AI, graphics and GPUs, laptops, gaming and creative platforms, and professional workstations — with solutions that are technically aligned and deployment-ready. In practical terms, it strengthens our ability to help customers move from AI interest to AI execution with architectures that are more scalable, better managed, and far more relevant to real business adoption across the region.

Having built a strong focus across AI, HPC, and enterprise storage, how is MBUZZ now approaching cybersecurity as an important extension of what it offers to the market?

MBUZZ views cybersecurity as a natural and necessary extension of its broader technology vision. As digital environments become more complex, security must sit within the larger infrastructure strategy rather than outside it. Through our partnership with Acronis, we deliver an all-in-one cybersecurity and data protection platform that brings backup, disaster recovery, endpoint protection, anti-malware, ransomware protection, Microsoft 365 protection, email security, compliance, patch management, and secure remote workload access together under a single, unified console for simplified management and enhanced protection.

Through Cloudmon, we provide a unified observability platform that enables proactive monitoring of IT infrastructure across cloud and on-premises environments. Its capabilities include digital experience monitoring (DEM) for end-user performance, network monitoring with topology and path tracing, network traffic analysis (NTA), availability monitoring of applications and endpoints, server and virtualization monitoring, desktop monitoring, syslog and log analytics, and network configuration management (NCM). Cloudmon also offers centralized dashboards, intelligent alerting, auto-remediation, and deep visibility into application, network, and user experience metrics to improve service levels while reducing operational costs. For us, this is not just about adding another business unit — it is about strengthening our ability to deliver complete, resilient, and business-aligned solution environments for the region.

MBUZZ today operates across multiple technology domains and vendor ecosystems. How is the company bringing cybersecurity solutions together to create more cohesive, workload-focused solutions for partners and end customers?

MBUZZ is bringing cybersecurity solutions together by designing them around the customer’s actual workload rather than around isolated tools. Through Acronis, for example, we can unify backup, disaster recovery, cybersecurity, and endpoint management within a single operational framework, which is especially relevant for service providers, businesses, and distributed work environments.

This enables us to build more cohesive solutions tailored to specific use cases. For Microsoft 365 environments, this includes protecting Exchange Online, OneDrive, SharePoint, and Teams with centralized backup and recovery. Similarly, for Google Workspace environments, it extends to securing Gmail, Google Drive, Google Calendar, and Contacts, ensuring comprehensive data protection and seamless recovery across platforms.

For remote and distributed operations, Acronis Cyber Protect Connect adds secure remote access and workload management across Windows, macOS, and Linux. For resilience-led environments, hybrid and cloud disaster recovery can also be integrated into the same protection model.

What this means in practice is fewer security silos, better operational visibility, and a more workload-focused cybersecurity architecture. Instead of treating backup, endpoint protection, remote access, patching, and recovery as separate conversations, MBUZZ approaches them as part of one business continuity and cyber resilience strategy. That is how we are creating more practical, scalable, and business-aligned cybersecurity solutions for partners and end customers across the region.

As the regional technology landscape becomes more complex and opportunity-rich, how is MBUZZ defining its key role in helping customers navigate this shift through more complete and future-ready solutions?

MBUZZ is defining its leadership role by staying close to how technology is actually being adopted in the market. As customer environments become more complex, the need is no longer for isolated products, but for solution paths that are better aligned to workload, scale, integration, and long-term business value. Our approach is built around understanding those shifts early, structuring dedicated business focus around them, and translating them into practical offerings across AI, HPC, cybersecurity, enterprise storage, networking, client compute, and smart infrastructure.

What makes this relevant in real market terms is the way MBUZZ operates. We combine strong vendor alignment, regional channel reach, technical integration capability, and solution-focused execution to help customers move from requirement to deployment with greater clarity. Through strategic partnerships, certified integration support, and MBUZZ Labs, we are able to approach opportunities not just from a product standpoint, but from an architecture and functionality standpoint — validating how technologies fit together, how they perform in real use cases, and how they can be adapted to the needs of enterprises, public sector organizations, professionals, and consumers.

Our leadership role is really being shaped by this ability to connect market demand with workable technology outcomes. From AI-ready infrastructure and hybrid workspaces to cyber-resilient environments and high-performance enterprise platforms, MBUZZ’s strategy is to bring together the right technologies, the right expertise, and the right ecosystem support in a way that is scalable, future-ready, and grounded in real business needs across the region.

"From infrastructure tuning, workload-specific configuration, interoperability planning, to solution-level optimization, MBUZZ Labs strengthens our ability to approach customers with greater technical depth and a more complete execution model."

Sameer Joshi, Digitalization Director, NADEC, explores how the rise of AI agents is redefining the nature of work and what it means for organisations preparing to manage a hybrid workforce of humans and machines.

For the past two years, companies have been asking one question: How can AI help our employees work faster? But a far more disruptive question is now emerging. What happens when AI stops assisting work — and starts doing the work itself? Because the next phase of enterprise AI is not just about tools. It’s about AI agents becoming part of the workforce.

The Shift Most Leaders Are Missing

Most organizations still think about AI in one of three ways:

• a productivity tool
• a chatbot interface
• a digital assistant

And in many cases, that’s exactly how AI is being deployed today. Copilots that summarize documents. Assistants that draft emails. Systems that help analyze data. But something more powerful is quietly emerging.

AI agents.

Systems that can plan tasks, interact with enterprise systems, execute workflows, and deliver outcomes with minimal human intervention. This is not just an improvement in software. It represents a fundamental shift in how work gets done inside organizations.

From Copilots to Agents

To understand the difference, it helps to compare two models.

The Copilot Model

AI assists a human performing a task. The human remains the primary executor.

Examples include:

• writing assistance
• meeting summaries
• code suggestions
• data insights

In this model, AI augments human work.

The Agent Model

AI performs tasks on behalf of the human.

Examples already emerging include:

• AI agents resolving customer service tickets
• AI agents generating and updating reports
• AI agents monitoring supply chain risks
• AI agents coordinating workflows across systems

In this model, AI is no longer just augmenting work. It is executing work.

The Real Implication

Once AI begins executing tasks autonomously, the organizational question changes.

The challenge is no longer: “Where can we use AI?” The real question becomes: “Where will AI become part of the workforce?”

This shift has enormous implications. For the first time, organizations may have to manage two types of workers simultaneously:

• human employees
• AI agents

And the structures most companies rely on today were never designed for this reality.

The Questions Leaders Are Not Asking Yet

If AI agents become operational workers, entirely new questions emerge. Who assigns work to AI agents? Who supervises them? How do we measure their performance? Who is accountable when an AI agent makes a decision? And perhaps most importantly: How do humans and AI work together inside the same operating model?

These are not just technology questions. They are leadership and organizational design questions.

The Next Transformation

Every major technology shift changes how work is organized. The industrial revolution changed physical labor. The internet transformed communication and knowledge work. AI may be the first technology shift that changes who the workers actually are. Not just humans. But humans working alongside intelligent systems.

Closing Insight

The organizations that succeed in the AI era will not simply deploy better models. They will redesign how work is structured across humans and machines. In other words, they will learn how to manage an AI workforce.

As enterprise and consumer expectations shift towards mobility, performance, and AI-driven productivity, ASUS is evolving its commercial laptop strategy in the region. Tolga Özdil, Regional Commercial Director, Middle East, Turkey & Africa (META) at ASUS, outlines how the company is aligning its portfolio around AI-ready devices, hybrid work demands, and secure, high-performance computing.

How is ASUS positioning its laptop portfolio in the Middle East amid evolving enterprise and consumer demands?

We are seeing a shift towards flexible work models and AI-driven productivity. With this in mind, ASUS is aligning its commercial portfolio to cater to professionals and businesses who require performance and power in one compact package. Here in the Middle East, where high-mobility roles are becoming the norm, we continue to support businesses with devices that are durable, long-lasting and secure, and that include collaborative tools suited to the demanding workloads of distributed teams and modern workplaces. We are also positioning our portfolio as AI-ready PCs to make them future-ready as workers rely on AI tools for increased productivity.

The ExpertBook Ultra series targets premium business users. How do you see the demand for this series among business professionals in the region picking up since launch?

We designed the ExpertBook Ultra to meet the needs of professionals who are looking for a device that is powerful enough to handle complex workflows but also portable enough to be brought anywhere. Because more businesses are now adopting hybrid work, demand for this category of notebooks has increased. There is an increased interest in the device from senior executives, consultants and the like, and this response has been very encouraging to see. Moreover, we also see the device as a great addition to any modern workplace.

Overall, how has ASUS’s commercial laptop business grown in the Middle East over the past year?

Our commercial business growth is driven by enterprises investing in devices to support AI workloads locally while keeping sensitive data secure. We are also supporting the public sector in rolling out its digital transformation initiatives, which require secure and scalable devices to support smart services and paperless operations. Another factor in our growth is the increasing refresh cycle for organizations upgrading to devices that support AI-driven platforms, enhanced security and modern collaboration tools. Our energy-efficient and responsibly designed devices have been a top choice for organizations looking to reduce their environmental impact.

The ExpertBook lineup emphasizes on-device AI capabilities with dedicated NPUs. How do you see enterprises in the region leveraging on-device AI to improve productivity while addressing concerns around data privacy and performance?

AI tools were initially cloud-based, which requires constant connectivity. Because they run online, there are concerns about security and privacy. As a result, on-device AI is becoming a preferred choice for enterprises and a practical advantage. Dedicated NPUs on laptops allow local AI processing, which reduces dependency on connection speed and bandwidth. AI tasks like real-time transcription and automated workflows are more responsive, and data is processed locally, so there is less risk of data getting exposed, which is beneficial in industries like healthcare and BFSI.

Apart from AI, discuss very briefly some of the other performance and design enhancements that ASUS has introduced in its business laptops in the past year or so.

Beyond on-device AI support, we have designed our ExpertBook line to be the ultimate business device. We use magnesium-aluminum alloys that make the devices lightweight and durable. Plus, our devices meet military-grade standards, so they’re guaranteed to withstand daily wear and tear. Even in their compact form, our devices have an advanced cooling architecture that delivers consistent performance plus long battery life for all-day usage. Lastly, we’ve fitted our notebooks with a fingerprint reader, BIOS protection and firmware-level security that can detect malicious threats and automatically restore the firmware to the last trusted version.

ASUS Expert Connect, held last December, brought together end-users across sectors such as healthcare, BFSI, education, and enterprise. How important are such experiential events in moving conversations beyond device specifications to real business outcomes?

Events like ASUS Expert Connect allow us to engage with our customers directly, learn firsthand how our products are helping them, and gather essential feedback that we can address in future products. They also give us an opportunity to talk with our partners and understand what challenges they are facing and how these can be solved, leading to more informed decisions and stronger relationships. We run these events with the aim of giving our customers and partners a better understanding of the solutions that we offer and the results that they can expect.

How is ASUS tailoring its commercial device strategy to support national AI ambitions and industry-specific use cases in the region?

ASUS fully supports digital transformation initiatives, which is why we tailor our commercial offerings to fit the needs of the region’s long-term goals. Many countries in this region have national AI agendas, where they are embedding AI in the public sector, healthcare, education and more. We collaborate with our ecosystem partners to deliver AI-ready hardware and software solutions that are ready for their use cases instead of just for general productivity. It’s all about making sure that the technology we provide is future-ready and reliable and delivers real value.

"AI tasks like real-time transcription and automated workflows are more responsive, and data is processed locally, so there is less risk of data getting exposed, which is beneficial in industries like healthcare and BFSI."

Ahmad Shakora, Group Vice President at Cloudera, explains how Cloudera is enabling organisations to bring AI to their data while maintaining governance, flexibility, and hybrid control.

How would you describe the current state of AI adoption in the region?

AI adoption in the region is growing, and it’s growing at a faster pace than any other region in the world. Cloudera is right in the heart of it.

AI, at the end of the day, is powered by data. Where we are uniquely positioned is in our ability to run data anywhere and bring AI to your data, whether it’s on-premises, in the cloud, any cloud, or private cloud.

The acceleration of AI adoption is significant, and we’re in a unique place where it’s really taking off. We are positioned very well in the region to help customers adopt AI faster.

Is this growth concentrated in the UAE and Saudi Arabia, or are you seeing it across the region?