AI

“Our responsibility is to equip our children for a time unlike ours, with conditions different from ours, and with new skills and capabilities that ensure the continued momentum of development and progress in our nation for decades to come.”

His Highness Sheikh Mohammed bin Rashid Al Maktoum

Artificial Intelligence has disrupted the education sector more than any innovation that preceded it. It is also one of the most polarising topics ever seen in schools.

On one hand, we’re told that AI is inevitable and essential. On the other, the education community has faced spikes in academic dishonesty and rising concerns around cognitive offloading and the potential degradation of learning skills if students outsource their thinking to AI.

This paradox is where schools around the world currently find themselves, and whilst we cannot claim to have all of the answers, we are proud to have seen JESS Dubai emerge as a regional beacon for AI implementation.

Whilst our AI strategy is agile and evolving, we wanted to take a moment to capture and share some of the work we have done in this area, answering key questions you might have in the process. This past November we sent a form to all JESS parents giving you the opportunity to ask any questions that you might have about AI at JESS or AI in education more generally. In this document we have curated these questions and our responses.

We hope that you find this informative, illuminating, and inspiring as you gain greater insight into the journey we are on with AI. If you have further questions, comments, or concerns, our doors are always open, and we encourage open lines of dialogue.

Steve Bambury, Head of AI & Digital Innovation

Dubai

JESS

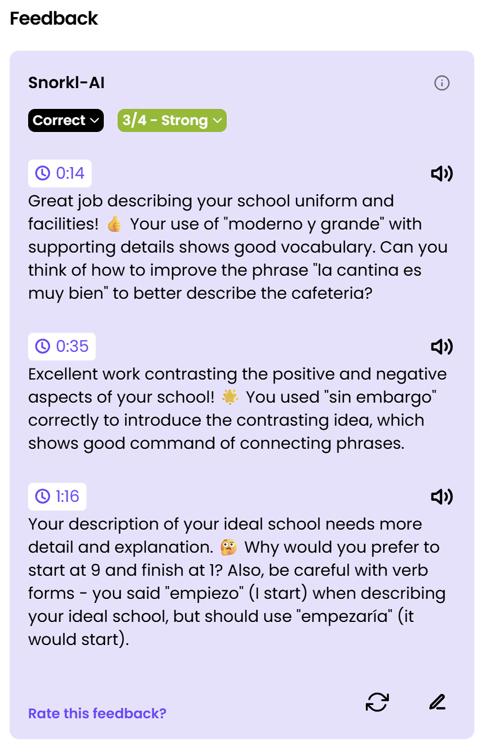

Does JESS have an AI policy for students and staff?

Yes, the school has a very detailed policy that was published in 2024 and updated in 2025. The policy was initially drafted with insight from international experts in the AI education field and then went through reviews with JESS Middle Leaders before being reviewed and reiterated by the JESS AI Steering Committee (AISC). This group is chaired by the Head of AI & Digital Innovation and includes JESS Director Mr O’Brien alongside the Director of IT, Head of Sixth Form and representatives from the Senior Leadership Teams in each school.

After a substantial review by the AISC, the policy was published in October 2024. It sets very clear guidelines for the professional use of AI by both teaching and administrative staff as well as all use of AI by students. The full policy can be found on the JESS Parent Portal within the documents & files section.

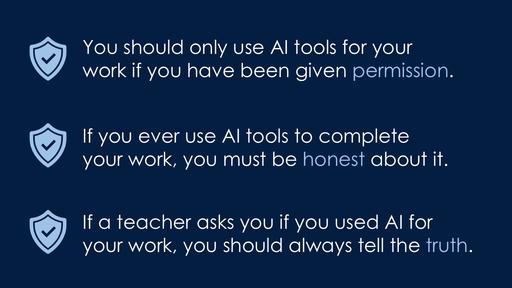

Recognising that a 4,000-word policy was not going to be particularly accessible for our students, we distilled it into our Golden Rules for Using AI Tools and aligned it to the JESS Core Values. Two versions were created: one for the primary phase and one for secondary, the latter of which can be found on the next page. The Golden Rules were launched among students from Year 4 to Year 13 in January 2025 through a series of assemblies and workshops. The images below are an example of the slides and information shared.

Our Golden Rules for Using AI Tools

As JESS students we are intelligent, respectful digital citizens and recognise that AI tools offer great power but with that power comes great responsibility.

RESPECT

EXCELLENCE

CARE

INTEGRITY

CURIOSITY

We do not use AI tools to cut corners in our learning that prevent us from being independent learners.

We always check AI content carefully and cross-reference with reliable sources.

We only use AI tools for positive things and never use them to cause any upset or harm.

We use AI responsibly and never share personal information with any AI tools.

We only use AI tools that are age-appropriate and have been endorsed for use at JESS.

We always speak with a trusted adult if we are uncertain about which AI tools we can use or if we come into contact with something produced by AI which is inappropriate.

We act with honesty and never submit work generated by AI tools, because we understand that this is a form of cheating.

If we ever use AI for our work, we acknowledge it.

We always use AI tools with an open mind and a desire to explore new ways to learn.

How does JESS help students develop crucial AI literacy skills?

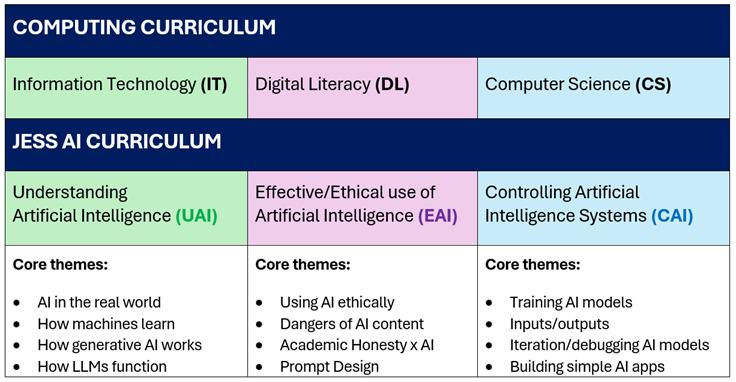

In December 2024, the JESS Digital Leadership Team, which is chaired by the Head of AI & Digital Innovation and includes the three Heads of Computing/Computer Science, began to develop a bespoke AI literacy curriculum. They devised a curriculum designed to mirror the three strands of the existing British Computing curriculum (digital literacy, information technology, and computer science).

The first draft of the AI literacy curriculum was shared with the AI Steering Committee in January 2025, yet the logistics of delivering the curriculum remained a challenge. The majority of curriculum objectives are delivered during Computing/Computer Science lessons. Beyond Key Stage 3, objectives are delivered through Mindbeat lessons, assemblies, Super Sixth Form sessions and within pastoral time.

Simultaneously, the team began weaving in elements from the draft AI Literacy Framework from the European Commission/OECD, which was published in May 2025. This framework had a lot in common with the JESS curriculum and included additional concepts that resonated with the team. As such, the published form of the JESS AI Literacy Curriculum incorporates three additional dimensions:

Knowledge (what knowledge about AI is developed?)

Skills (which skills are harnessed during lessons?)

Values (which of the JESS Core Values are employed?)

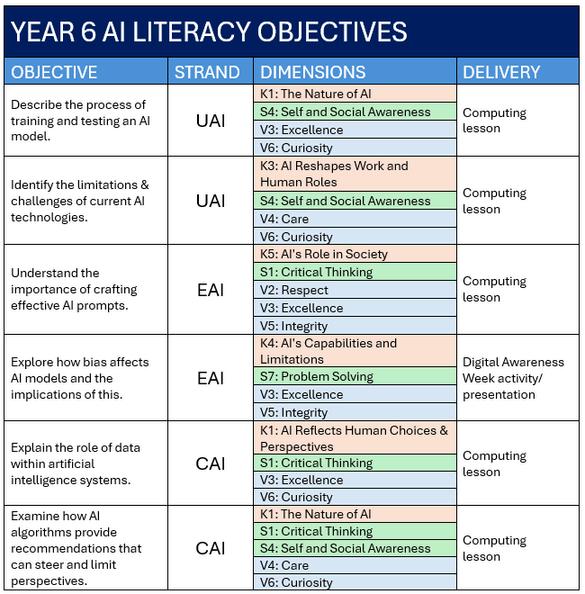

This version of the curriculum was launched among Year 4 to Year 10 students in August 2025 and is ongoing. Below is an example from the curriculum, showcasing the Year 6 objectives and the relevant strands/dimensions.

How do JESS students develop their understanding of machine learning?

JESS students develop their understanding of machine learning through units of study in the Understanding Artificial Intelligence (UAI) strand of our curriculum. In the primary phase, they explore the difference between artificial intelligence and machine learning, focus on why data is vital, and engage in hands-on activities such as creating chatbots in Scratch.

In Secondary, students go on to examine training and testing of AI models and explore the limitations of AI. By blending theory with practical projects, we can foster both critical thinking and problem-solving skills. Ethical considerations remain central, with students debating responsible AI use and privacy concerns in conjunction with sessions focused on machine learning.

How does JESS encourage students to use AI creatively?

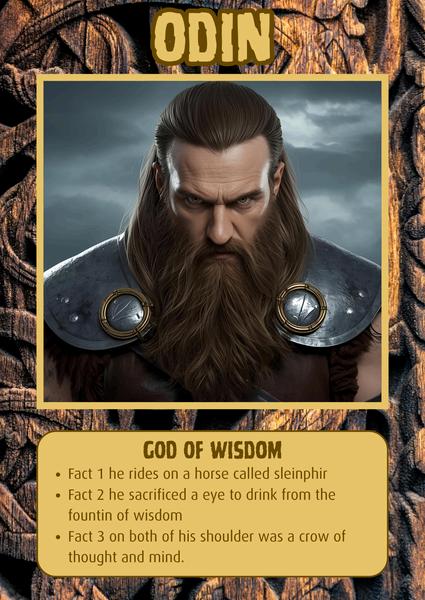

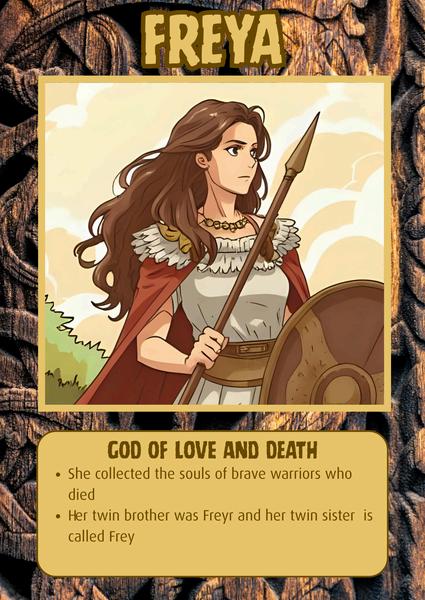

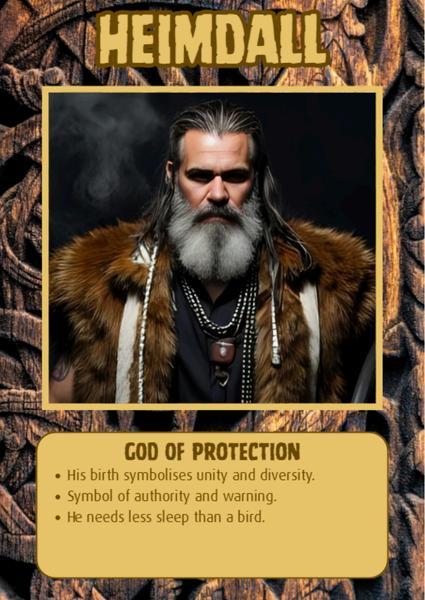

JESS staff have begun exploring more creative ways to harness AI in their classrooms. A recent project with Year 4 at Arabian Ranches saw students prompting Canva AI to generate images of Norse gods and then animating them using another AI tool.

This project was preceded by the launch of the AI Golden Rules with the new cohort of students in Year 4 to ensure that students recognised the responsibility that comes with access to AI tools. Throughout the project, all use of AI was moderated and limited to specific sessions.

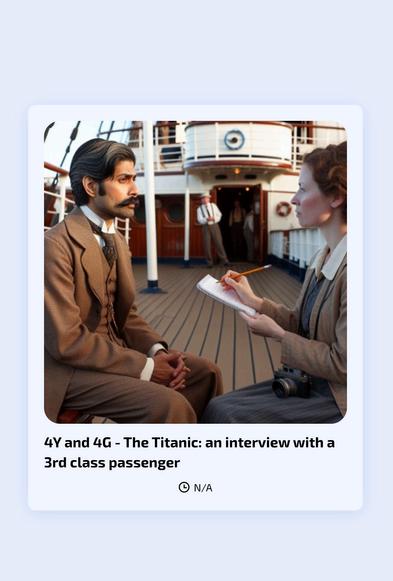

Meanwhile, in Year 4 at JESS Jumeirah, staff explored the potential of using AI to bring history to life in a dynamic way by building AI chatbots to role-play as passengers on the Titanic. Students were then able to interact with these characters and immerse themselves in history in a unique and engaging way. This chatbot platform (Mizou) was specifically designed for use in classrooms and provides real-time feedback and conversation logs for staff.

In Secondary, language teachers have enjoyed success using the Snorkl platform to provide real-time feedback for written and oral work. This is great for students, who get instant guidance and can retry tasks to perfect them. Year 12 took part in a lesson in which they had to answer spontaneously using only spoken Spanish. They then used AI feedback to refine their approach and repeat the exercise until they scored 4/4. The Snorkl platform has been used to similar effect for English and Maths across both primary and secondary.

This term, Year 6 Jumeirah students used a custom-built agent made to craft prompts for manga characters as a part of a project on Japan. They then used their prompts to generate characters in Canva AI.

Also this term, students in Year 9 will develop their understanding of effective prompt design during literacy sessions before using Canva AI tools to build their very own interactive revision webs

How do students know whether they are allowed to use AI to complete their work?

The curriculum at JESS is balanced and has not become dominated by AI use. There are many areas of the curriculum where technology plays a significantly limited role. AI access below Year 9 is limited and closely monitored as most AI tools are designated as 13+ due to data protection laws.

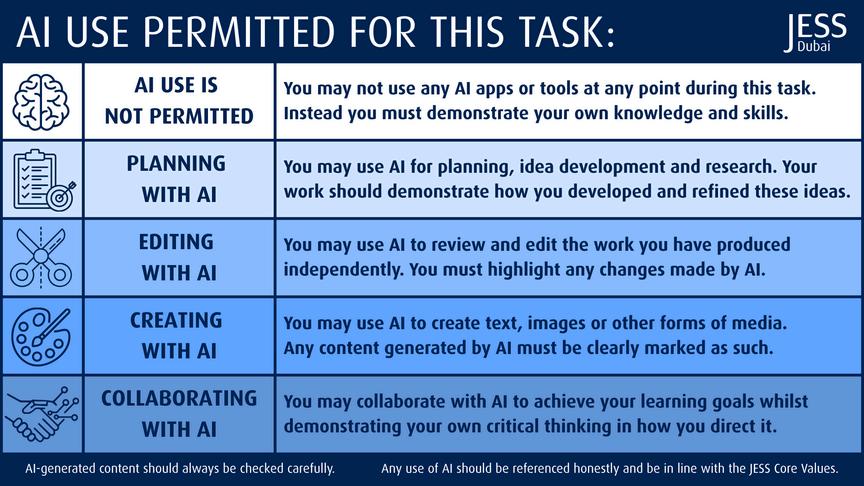

To help set clear expectations and ensure accountability, the JESS AI Working Party developed the JESS AI Use Scale in October 2025. This outlines five tiers of specific AI use both to ensure that JESS Secondary students understand what is acceptable for a given activity and to actively encourage our teachers to explore opportunities to leverage AI in creative ways when appropriate.

The AI Use Scale is displayed in every classroom and used in conjunction with a set of digital stickers, which are added to PowerPoint slides, OneNote pages, and other digital learning assets to clearly highlight the level of AI permitted for a given lesson or task.

How are students taught to use AI ethically and effectively?

The heart of our AI curriculum is the EAI strand, which focuses on the effective and ethical use of AI. This strand runs from Year 4 all the way to Year 13 and includes objectives looking at bias, inaccuracies, academic integrity, and much more. To elevate the curriculum even further, we are leveraging industry experts to deliver some of the objectives and to showcase working examples. In November 2025, a Year 12 objective focused on AI and data protection was delivered by data security consultants 9ine Consulting. In early 2026, Microsoft’s AI Skills Director is set to deliver sessions on bias and AI’s impact on the workplace with Year 10 and 11 students.

What is the school’s stance on AI misuse and academic integrity?

The impact of AI on academic integrity has been at the forefront of our minds since the inception of the AI policy, and it is something we take very seriously. In Sixth Form, we utilise the Turnitin platform as a part of the process to assess for plagiarism, yet we also acknowledge that AI detection tools are prone to error and can generate both false negatives and false positives. As such, it is only ever a part of an academic integrity solution, and our greatest strength remains our staff themselves. JESS staff are masters of their craft and develop a clear understanding of each student and their abilities, which allows them to identify work that does not match the standard output from a given student.

Whether flagged by Turnitin or the staff themselves, the process we employ leads to discussion with the student to both ascertain authorship and have them explain the content they have produced. This interview process (commonly referred to as “viva voce”) is currently standard practice in Sixth Form and is increasingly being employed at GCSE level as well.

Head of JESS Sixth Form, Kosta Lekanides, covering academic integrity principles during a parent workshop on AI (June 2025)

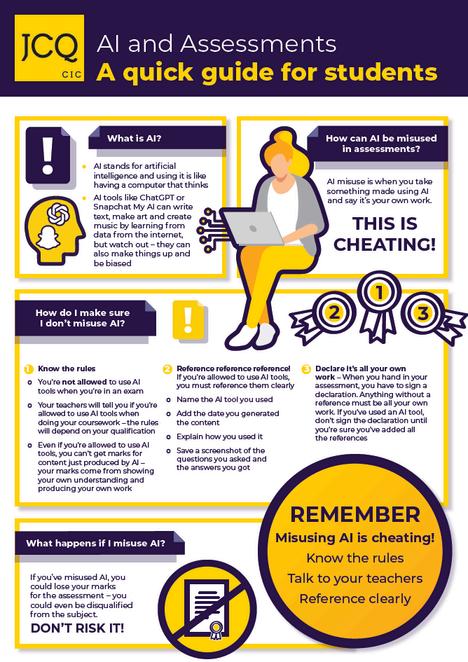

Students also have clear guidelines on AI use shared with them from external bodies such as the JCQ and IB, which are regularly reinforced through pastoral sessions. Lower down the school, where coursework does not form a part of the curriculum, AI literacy sessions cover the importance of academic integrity and ensure that students recognise the inherent risks in using AI for tasks intended to be completed independently.

We acknowledge the concerns around AI misuse and the impact on academic integrity, and recognise that there is no “silver bullet” solution to this issue. We believe that educating our students about the longer-term implications is crucial and will continue to refine our approach to ensure that all JESS learners are empowered to make educated, ethical choices in how they harness AI.

What is JESS doing to develop student focus and resilience in the age of AI?

The protection of learning skill development has been another paramount concern for us since day one and is a regular theme at AI Steering Committee meetings. Whilst practical subjects help balance the curriculum, some assessments have been shifted to paper-based formats as staff strive to strike a balance between digital and analogue activities. We acknowledge the propensity for AI to degrade cognitive capacity, especially if a student becomes over-reliant on it. The AI literacy curriculum has played a significant role in ensuring that students also recognise this issue and the potential long-term impact it can have on their ability to succeed in exams, interviews, and other scenarios where AI is unavailable.

Our focus has always been on developing the whole child, in line with our JESS Core Values, to produce students who can make a difference in the world. Whilst

How does JESS use AI for inclusion?

Our Oasis team have always been outstanding at integrating digital tools for accessibility, and they have been very proactive about researching AI and harnessing it where appropriate. This includes the use of AI tools to promote independence and self-management as well as more subject-specific tools to help identify and close gaps in reading, writing, and maths, such as the Microsoft Learning Accelerator tools. Naturally, any use of AI by our neurodiverse learners is carefully vetted and regulated by the Oasis team to ensure that tools are making a positive impact on their learning experiences.

Members of the Oasis team are currently collaborating with the school’s AI Working Party on a research project exploring the use of AI to support neurodiverse learners, which will be published in early 2026. Our Head of AI & Digital Innovation will also be sharing best practice in this field at the MENA Inclusion & Wellbeing Conference in February 2026.

Can JESS students use AI as a personal tutor?

We are aware that some of our older students have begun to leverage Microsoft Copilot in a tutor-style capacity. Whilst we encourage their initiative, it is important they remember that AI should complement, not replace, critical thinking and teacher guidance. They should approach outputs with a questioning mindset, cross-reference with trusted sources, and use AI as a springboard for deeper learning rather than a shortcut.

In early 2026, new functionality is coming to Microsoft Copilot, which will allow students to create flashcards, revision quizzes, and more directly within their Microsoft 365 accounts. These tools will be something we explore fully and share with relevant students when appropriate.

Responsible, informed use remains essential, and we must stress that it is essential that they do not use their JESS email address to sign up for any third-

Do JESS teachers use AI professionally?

Yes, our staff use the same core tools (Microsoft Copilot and Canva AI) in a professional capacity, ensuring that all use of AI falls in line with the Acceptable Use Policy. Staff engage with frequent professional development sessions on AI, which have included department-based AI training with every team across both JESS sites (delivered by the Head of AI & Digital Innovation between May and July 2025).

Deployments of strategic elements such as the Acceptable Use Policy and AI Use Scale are launched through whole-school sessions to ensure consistency of key messaging. Feedback data is captured regularly to ensure that staff needs relating to AI are heard and addressed accordingly.

Additionally, international AI education experts, including Dan Fitzpatrick and Dr Sabba Quidwai, have facilitated AI training at JESS in the last two years. These sessions are supplemented with a range of online training opportunities for staff via providers such as Microsoft, The National College, and Dubai Future Foundation.

Director of IT, Alan Richards, leading a whole-school training session as a part of the AI Acceptable Use Policy launch event (October 2024)

How does JESS ensure that staff and student data is kept safe from AI tools?

Data protection is something we take very seriously at JESS and is a cornerstone of our overall digital strategy. Our Director of IT works tirelessly to ensure that our systems are robust and state-of-the-art, and we are one of the few schools in the region with a full-time Data Protection Officer on our staff. Additionally, we partner with 9ine Consulting from the UK to ensure data protection protocols are updated and cybersecurity threats are mitigated.

The two main AI tools that are broadly employed at JESS are Microsoft Copilot and Canva. Both platforms offer enterprise data protection to education clients, which affords multiple benefits:

Our data is always secure

Our data is always private

Our data policies are upheld

We have protection against security threats

Our data isn’t used to train AI models

Any other AI tools that staff want to use must go through strict data protection checks before any deployment. In the 2024-2025 academic year, we vetted over 230 different AI platforms, assessing them for quality, purpose, and relevance as well as checking conformity with data protection and child protection protocols. Out of this vast number, only 4 were moved forward to initial pilot phases.

A site-wide block on other major AI platforms like ChatGPT, Grok, and Midjourney further helps us to ensure that student and staff AI use remains in line with both the AI and data protection policies.

What can we do as parents to support the school?

Parent partnerships have always been an integral part of the JESS community, and we encourage all parents to work with us to ensure that our students harness AI ethically and effectively. Talk to your children about AI: how they use it and how you yourself use it. Ask them what they like about AI, what concerns they have about it and what risks they recognise when it comes to AI.

Discuss the impact AI use can have on academic integrity and the ramifications of this. We want students to feel heard and understood but also to make sure that they understand AI cannot be used as a substitute for hard work and human thought. It may also be beneficial to monitor their use of AI tools at home, especially for high-stakes tasks such as coursework.

Finally, it is important to be digital role models. Setting an example in the way you use AI yourself, both professionally and personally, is an excellent way to

Guided by our values