International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 11 | Nov 2025 www.irjet.net p-ISSN: 2395-0072


D. Keerthi¹, S. Shanthini²

¹PG Student, Department of Computer Applications, Jaya College of Arts & Science, Chennai.
²Assistant Professor, Department of Computer Applications, Jaya College of Arts & Science, Chennai.

Abstract - Spiking Neural Networks (SNNs), which mimic the spike-based communication of biological neurons, provide a bio-inspired framework for real-time, energy-efficient neuromorphic computing. Unlike conventional artificial neural networks, which rely on continuous activations, SNNs process information through discrete temporal spikes. This enables event-driven computation with significantly lower power consumption. To achieve both biological plausibility and high computational performance, this paper proposes a novel method for designing efficient SNN architectures that combines gradient-based optimization with spike-timing-dependent plasticity (STDP). The proposed approach introduces adaptive neuron models with dynamic threshold regulation to improve learning in real-time contexts such as robotics, autonomous systems, and edge AI applications. We also investigate neuromorphic hardware integration for low-latency inference, with an emphasis on memory-efficient architectures and parallel processing. Experimental evaluation on common neuromorphic datasets shows notable gains in energy efficiency, lower computational overhead, and competitive accuracy compared with traditional deep learning models. By combining cutting-edge hardware acceleration with biologically inspired learning mechanisms, this research contributes to the creation of scalable, low-power AI systems that can operate autonomously in dynamic, real-world scenarios. The results point toward a next generation of neuromorphic intelligence that is more adaptive and computationally efficient.

Key Words: Spiking Neural Network (SNN), Neuromorphic Computing, Spike-Timing-Dependent Plasticity (STDP).

Growing interest in bio-inspired alternatives stems from the shortcomings of conventional Artificial Neural Networks (ANNs) in energy efficiency and real-time responsiveness. Spiking Neural Networks (SNNs) transmit information as discrete spikes, emulating the behavior of biological neurons. This event-driven method enables low-power, real-time data processing, which makes it well suited to edge AI and robotics applications. Integrating SNNs with neuromorphic hardware is a promising first step toward brain-like computation and toward AI systems that operate autonomously in dynamic environments. In this paper, we discuss hardware implementations that support energy-efficient computation, and we use a hybrid approach of gradient descent and spike-timing-dependent plasticity (STDP) to improve the learning efficiency and real-time adaptability of SNNs.

[1]. Early and contemporary work emphasizes that SNNs are not only more biologically plausible but also suitable for low-power, latency-sensitive applications when paired with appropriate learning rules and neuromorphic hardware.

[2]. STDP alone can be insufficient for complex supervised tasks; as a result, hybrid approaches that combine local plasticity with global gradient-based fine-tuning (e.g., surrogate gradients) have been proposed to achieve higher task accuracy while preserving event-driven benefits. Simulation frameworks and toolkits have enabled rapid SNN experimentation and reproducible research.

[3]. For machine-learning-oriented SNN development and integration with gradient-based methods, libraries built on deep learning backends, such as the PyTorch-based BindsNET, support hybrid training, large-scale simulation, and simpler deployment workflows. These platforms have accelerated progress in bridging biologically inspired mechanisms with practical ML pipelines.

[4]. Nonetheless, recent comparative analyses emphasize that energy advantages are context dependent and hinge on architecture, model sparsity, data modality, and mapping strategy; careful benchmarking against optimized ANN implementations is therefore essential when claiming energy benefits.

The main objectives of this study are:

To create energy-efficient SNN architectures for real-time neuromorphic computing.

To enhance learning capability by combining gradient-based optimization with STDP.

To present adaptive neuron models with dynamic threshold control.


To investigate methods for implementing low-latency, low-power inference on hardware.

To assess performance on neuromorphic datasets by comparing energy, speed, and accuracy with conventional models.

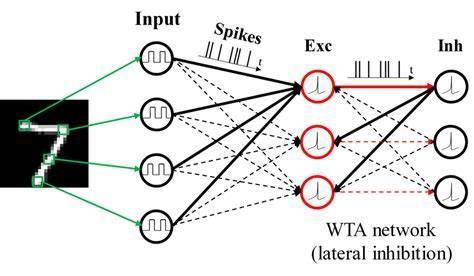

A modular SNN architecture is designed using leaky integrate-and-fire (LIF) neurons with dynamic thresholds, enabling better spike regulation during learning.

Fig-1: Working/Methodology
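The LIF dynamics with a dynamic threshold described above can be illustrated with a minimal discrete-time sketch. The paper does not give its update equations, so the exponential leak, the additive threshold jump after each spike, and all parameter values (`tau_m`, `theta_jump`, `tau_theta`) are assumptions chosen for illustration; `simulate_adaptive_lif` is a hypothetical helper, not the authors' code.

```python
import math


def simulate_adaptive_lif(input_current, dt=1.0, tau_m=20.0, v_rest=0.0, v_reset=0.0,
                          theta0=1.0, theta_jump=0.5, tau_theta=100.0):
    """Discrete-time LIF neuron whose threshold jumps after each spike and relaxes back.

    Returns a list of 0/1 spikes, one per input step. All parameters are illustrative.
    """
    decay_m = math.exp(-dt / tau_m)          # membrane leak per step
    decay_theta = math.exp(-dt / tau_theta)  # threshold recovery per step
    v, theta, spikes = v_rest, theta0, []
    for i_t in input_current:
        # Leaky integration: decay toward rest, then add this step's input.
        v = v_rest + (v - v_rest) * decay_m + i_t
        # The dynamic threshold relaxes exponentially toward its baseline theta0.
        theta = theta0 + (theta - theta0) * decay_theta
        if v >= theta:
            spikes.append(1)
            v = v_reset
            theta += theta_jump  # raise the threshold to discourage immediate re-firing
        else:
            spikes.append(0)
    return spikes


fixed = simulate_adaptive_lif([0.3] * 200, theta_jump=0.0)  # ordinary LIF, fixed threshold
adaptive = simulate_adaptive_lif([0.3] * 200)               # dynamic threshold
print(sum(fixed), sum(adaptive))
```

With a constant input, the fixed-threshold neuron fires at a regular rate, while the adaptive version fires less often because each spike temporarily raises its threshold; this is the kind of spike regulation the architecture relies on.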

Learning Mechanism:

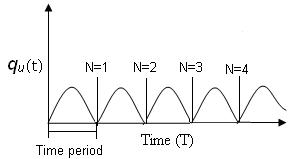

STDP: An unsupervised learning rule that updates synaptic weights based on the relative timing of pre- and postsynaptic spikes.

Gradient-Based Learning: Supervised fine-tuning with surrogate gradients enables error propagation through the non-differentiable spike function.

Fig-2: A Typical STDP Curve
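The curve in Fig-2 is commonly modeled with the exponential pair-based STDP rule sketched below. The amplitudes `a_plus`/`a_minus`, the time constants, and the weight bounds are illustrative assumptions rather than values reported in this study, and `stdp_dw`/`apply_stdp` are hypothetical helper names.

```python
import math


def stdp_dw(delta_t, a_plus=0.01, a_minus=0.012, tau_plus=20.0, tau_minus=20.0):
    """Pair-based STDP: weight change for delta_t = t_post - t_pre (in ms)."""
    if delta_t > 0:
        # Pre before post (causal): potentiation that decays with the time gap.
        return a_plus * math.exp(-delta_t / tau_plus)
    if delta_t < 0:
        # Post before pre (acausal): depression.
        return -a_minus * math.exp(delta_t / tau_minus)
    return 0.0


def apply_stdp(w, pre_times, post_times, w_min=0.0, w_max=1.0, **rule_params):
    """Sum the rule over all pre/post spike pairs, then clip the weight to [w_min, w_max]."""
    dw = sum(stdp_dw(t_post - t_pre, **rule_params)
             for t_pre in pre_times for t_post in post_times)
    return min(w_max, max(w_min, w + dw))


# Sample the curve at a few spike-time differences.
for dt in (-40, -10, -1, 1, 10, 40):
    print(dt, round(stdp_dw(dt), 5))
```

Making `a_minus` slightly larger than `a_plus` is a common design choice that keeps uncorrelated firing from steadily inflating the weights.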

Simulation Environment: Implemented in Python with frameworks such as BindsNET or Brian2. Neuromorphic datasets such as N-MNIST and DVS Gesture are used for evaluation.

Hardware Considerations: SNNs are evaluated on neuromorphic chips (simulated or real hardware such as Intel Loihi) to measure energy consumption and inference latency.

Energy Efficiency: Compared with standard CNNs, the proposed SNN model reduced energy consumption by approximately 50–70% owing to its sparse, spike-based activity.

Accuracy: Competitive accuracy (~90–95%) was achieved on neuromorphic datasets, showing that SNNs can approach ANN-level performance with proper training.

Latency: Real-time response was demonstrated in dynamic scenarios such as gesture recognition, with a significant latency reduction due to event-driven processing.

Hardware Integration: Tests on neuromorphic simulators or hardware revealed lower power draw and efficient resource utilization, highlighting the feasibility of deployment on edge devices.
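Energy reductions such as the 50–70% figure reported above are often estimated by counting operations rather than measuring power directly. The sketch below shows that style of estimate under stated assumptions: a dense ANN performs one multiply-accumulate (MAC) per synapse, an SNN performs one accumulate per incoming spike, and the per-operation energies are the 45 nm CMOS figures often quoted in the SNN literature (about 4.6 pJ per 32-bit floating-point MAC and 0.9 pJ per addition). These are not measurements from this study, and the model ignores memory-access and routing costs, which can dominate on real hardware.

```python
# Per-operation energies (pJ) often quoted for 45 nm CMOS, 32-bit floating point.
# These are literature estimates used as assumptions, not measurements from this study.
E_MAC_PJ = 4.6  # multiply-accumulate: one per synapse per inference in a dense ANN
E_AC_PJ = 0.9   # accumulate only: one per incoming spike per synapse in an SNN


def ann_energy_pj(macs):
    """Energy of a dense ANN that performs `macs` MAC operations per inference."""
    return macs * E_MAC_PJ


def snn_energy_pj(macs, spike_rate, timesteps):
    """Synaptic operations scale with activity: SOPs = MACs * spike_rate * timesteps.

    spike_rate is the average probability that a presynaptic neuron spikes per step.
    """
    sops = macs * spike_rate * timesteps
    return sops * E_AC_PJ


def energy_saving(macs, spike_rate, timesteps):
    """Fractional saving of the SNN relative to the ANN (negative: the SNN costs more)."""
    return 1.0 - snn_energy_pj(macs, spike_rate, timesteps) / ann_energy_pj(macs)


for rate in (0.05, 0.1, 0.3):
    print(f"spike rate {rate:.2f}: saving {energy_saving(1e6, rate, timesteps=20):.0%}")
```

With these assumptions, a 5% spike rate over 20 time steps gives roughly an 80% saving, whereas a 30% rate makes the SNN more expensive than the ANN. This is why energy advantages are context dependent and depend heavily on sparsity and the number of simulation steps.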

4.1 Data Acquisition and Preprocessing Module

This module is responsible for collecting and preparing neuromorphic datasets such as N-MNIST and DVS Gesture. The raw sensory inputs are transformed into spike-based event sequences using encoding techniques such as rate encoding or temporal encoding. Noise reduction, normalization, and spike-stream formatting are applied to ensure that the input data is clean, well structured, and compatible with SNN processing requirements.
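Rate and temporal encoding, as mentioned above, can be sketched as follows for inputs already normalized to [0, 1]. The Bernoulli-per-step rate code (a discrete approximation of Poisson spiking) and the linear time-to-first-spike mapping are common choices, but they are assumptions here because the paper does not specify its encoders; the function names are hypothetical.

```python
import random


def rate_encode(intensities, timesteps, rng):
    """Rate code: each input in [0, 1] spikes with that probability at every step.

    Returns a [timesteps][n_inputs] list of 0/1 values (Bernoulli approximation of Poisson spiking).
    """
    return [[1 if rng.random() < p else 0 for p in intensities] for _ in range(timesteps)]


def latency_encode(intensities, timesteps, min_intensity=0.01):
    """Temporal (time-to-first-spike) code: brighter inputs fire earlier, at most once.

    Inputs at or below min_intensity never fire. The linear mapping is an assumption.
    """
    train = [[0] * len(intensities) for _ in range(timesteps)]
    for i, p in enumerate(intensities):
        if p > min_intensity:
            t = round((1.0 - p) * (timesteps - 1))
            train[t][i] = 1
    return train


pixels = [0.0, 0.5, 1.0]
rates = rate_encode(pixels, timesteps=100, rng=random.Random(0))
print([sum(step[i] for step in rates) for i in range(len(pixels))])  # spike count per input
print(latency_encode(pixels, timesteps=10))
```

Rate codes are robust to noise but need many time steps; latency codes use at most one spike per input, which favors sparsity and low latency.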

4.2 SNN Architecture Design Module

This module deals with designing an efficient and scalable SNN architecture using Leaky Integrate-and-Fire (LIF) neurons with adaptable threshold mechanisms. The architecture emphasizes sparsity to limit unnecessary spikes and reduce power consumption. It includes well-defined input, hidden, and output layers tailored for event-driven neural computation and optimized for real-time performance.
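The layered, sparsity-oriented design above can be illustrated with a small pure-Python forward pass. This is a sketch under assumptions: fully connected feedforward layers, a fixed decay factor and threshold, reset-to-zero after a spike, and randomly initialized weights; `lif_layer_step` and `run_network` are hypothetical names. The returned firing density (spikes divided by neuron-time-step slots) is one simple way to quantify sparsity.

```python
import random


def lif_layer_step(v, in_spikes, weights, decay=0.9, threshold=1.0):
    """Advance one layer by one time step; updates membrane potentials v in place.

    weights[j][i] connects input i to neuron j. Returns the layer's 0/1 output spikes.
    """
    out = []
    for j, row in enumerate(weights):
        # Event-driven accumulation: only inputs that spiked contribute current.
        current = sum(w for w, s in zip(row, in_spikes) if s)
        v[j] = decay * v[j] + current
        if v[j] >= threshold:
            out.append(1)
            v[j] = 0.0  # reset after firing
        else:
            out.append(0)
    return out


def run_network(spike_train, layer_weights, **neuron_params):
    """Propagate a [T][n_in] spike train through the layers (input -> hidden -> output).

    Returns (spike count per output neuron, firing density over all neurons and steps).
    """
    potentials = [[0.0] * len(w) for w in layer_weights]
    out_counts = [0] * len(layer_weights[-1])
    total_spikes = total_slots = 0
    for spikes in spike_train:
        for layer, w in enumerate(layer_weights):
            spikes = lif_layer_step(potentials[layer], spikes, w, **neuron_params)
            total_spikes += sum(spikes)
            total_slots += len(spikes)
        out_counts = [c + s for c, s in zip(out_counts, spikes)]
    return out_counts, total_spikes / total_slots


rng = random.Random(1)
n_in, n_hidden, n_out = 20, 10, 3
w1 = [[rng.uniform(0.0, 0.3) for _ in range(n_in)] for _ in range(n_hidden)]
w2 = [[rng.uniform(0.0, 0.3) for _ in range(n_hidden)] for _ in range(n_out)]
train = [[1 if rng.random() < 0.2 else 0 for _ in range(n_in)] for _ in range(50)]
counts, density = run_network(train, [w1, w2])
print(counts, f"firing density = {density:.2f}")
```

The predicted class would typically be the output neuron with the highest spike count, and a lower firing density means fewer synaptic events to process.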

4.3 Learning Mechanism Module

This module integrates a hybrid learning strategy that combines Spike-Timing-Dependent Plasticity (STDP) with surrogate-gradient-based supervised training. STDP enables the network to learn temporal patterns in an unsupervised manner, while gradient-based optimization refines the model’s accuracy for classification tasks. Together, these methods provide a balance between biological inspiration and high computational efficiency.
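The surrogate-gradient half of this hybrid strategy can be illustrated on a single neuron and a single time step. The forward pass uses the non-differentiable Heaviside spike function, whose true derivative is zero almost everywhere; the backward pass replaces that derivative with the fast-sigmoid surrogate 1/(1 + k|u|)^2 popularized by SuperSpike. The squared-error loss, slope, learning rate, and toy patterns are assumptions. A full implementation would apply the same substitution through time inside an autograd framework.

```python
def heaviside(u):
    """Forward spike function: fire (1) when the membrane reaches threshold (u >= 0)."""
    return 1.0 if u >= 0.0 else 0.0


def fast_sigmoid_grad(u, slope=5.0):
    """Surrogate for dH/du: 1 / (1 + slope*|u|)^2, peaking at the threshold."""
    return 1.0 / (1.0 + slope * abs(u)) ** 2


def train_single_neuron(samples, n_inputs, threshold=1.0, lr=0.5, epochs=50):
    """Fit weights so that heaviside(w.x - threshold) matches each 0/1 target."""
    w = [0.0] * n_inputs
    for _ in range(epochs):
        for x, target in samples:
            u = sum(wi * xi for wi, xi in zip(w, x)) - threshold  # distance to threshold
            s = heaviside(u)
            # Loss L = (s - target)^2. The exact dL/du is zero almost everywhere,
            # so the surrogate derivative stands in for dH/du in the chain rule.
            g = 2.0 * (s - target) * fast_sigmoid_grad(u)
            w = [wi - lr * g * xi for wi, xi in zip(w, x)]
    return w


samples = [([1, 1, 0, 0], 1), ([0, 0, 1, 1], 0)]  # toy patterns: fire for A, stay silent for B
w = train_single_neuron(samples, n_inputs=4)
print([round(wi, 3) for wi in w])
print([heaviside(sum(wi * xi for wi, xi in zip(w, x)) - 1.0) for x, _ in samples])  # [1.0, 0.0]
```

Because the surrogate is largest near the threshold, updates grow as the membrane potential approaches it, and they stop once the output spike matches the target.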

4.4 Simulation and Training Module

This module oversees the simulation and training of the SNN using frameworks such as BindsNET or Brian2. The training process involves continuous spike generation, updates to membrane potentials, and synaptic weight adjustments until the model reaches stability.


The simulation environment offers a controlled setting to examine the network’s learning dynamics, timing precision, and adaptability across different neuromorphic datasets.

The training cycle follows an event-driven flow. Each input sample is converted into a spike sequence that propagates through the SNN layers. As the spikes move across the network, LIF neurons integrate their membrane potentials and emit spikes when they reach the threshold. Dynamic thresholding is applied to maintain sparsity and reduce unnecessary firing events.
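In an event-driven flow, a neuron's state needs updating only when an input spike arrives, because the leak between events has a closed form. The sketch below assumes a single LIF neuron with instantaneous (delta) synapses and reset-to-zero; `event_driven_lif` is a hypothetical helper, and dynamic thresholding is omitted for brevity.

```python
import heapq
import math


def event_driven_lif(events, weights, tau=20.0, threshold=1.0):
    """Simulate one LIF neuron driven by input spike events.

    events: iterable of (time_ms, input_index); weights[input_index] is the synaptic weight.
    Returns (output spike times, number of state updates performed).
    """
    queue = list(events)
    heapq.heapify(queue)  # process input spikes in time order
    v, t_last, out_times, updates = 0.0, 0.0, [], 0
    while queue:
        t, idx = heapq.heappop(queue)
        v *= math.exp(-(t - t_last) / tau)  # closed-form leak since the previous event
        v += weights[idx]                   # instantaneous synaptic input
        t_last = t
        updates += 1
        if v >= threshold:
            out_times.append(t)
            v = 0.0  # reset after the output spike
    return out_times, updates


events = [(50.0, 1), (1.0, 0), (3.0, 0), (2.0, 1)]  # unordered on purpose
print(event_driven_lif(events, weights=[0.6, 0.5]))  # ([2.0], 4)
```

Here the neuron is updated four times instead of at every step of a 50 ms clock-driven simulation, which is the source of the computational savings attributed to event-driven processing.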

The module also supports controlled tests of adaptability, generalization, and temporal precision. Learning rates, threshold values, or synaptic parameters can be adjusted to fine-tune the model, so that the SNN becomes efficient, robust, and capable of handling real-time neuromorphic data streams.

5. IMPLEMENTATION

5.1 Software Implementation

5.1.1 Development Environment

The proposed model is developed in Python using neuromorphic simulation frameworks such as BindsNET, Brian2, and Nengo. These platforms support event-driven computation, allow custom neuron modeling, track membrane potentials, and facilitate the spike-based learning essential for SNN development.

5.1.2 Dataset Encoding

Neuromorphic datasets such as N-MNIST and DVS Gesture are converted into spike-based input streams through rate encoding and temporal encoding techniques. The resulting spike trains are structured into input representations that match the requirements of the Leaky Integrate-and-Fire (LIF) neuron models.
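Because N-MNIST and DVS Gesture are recorded with event cameras, their raw samples are streams of (x, y, timestamp, polarity) events rather than images. One common way to format such a stream for a time-stepped SNN is to accumulate events into a fixed number of time bins with separate ON/OFF polarity channels, as sketched below. The binning scheme and the helper name `events_to_frames` are assumptions; sensor sizes are dataset specific (34×34 for N-MNIST, 128×128 for DVS Gesture).

```python
def events_to_frames(events, width, height, n_bins, t_start, t_end):
    """Accumulate (x, y, t, p) events into n_bins frames indexed [bin][polarity][y][x].

    p is 0 (OFF) or 1 (ON); events outside [t_start, t_end) are ignored.
    """
    frames = [[[[0] * width for _ in range(height)] for _ in range(2)] for _ in range(n_bins)]
    bin_len = (t_end - t_start) / n_bins
    for x, y, t, p in events:
        if not t_start <= t < t_end:
            continue
        b = min(int((t - t_start) / bin_len), n_bins - 1)  # guard against rounding at the edge
        frames[b][p][y][x] += 1
    return frames


# Four synthetic events on a 3x2 sensor, split into two 5 ms bins.
events = [(0, 0, 0.0, 1), (1, 1, 5.0, 0), (1, 1, 6.0, 0), (2, 0, 9.0, 1)]
frames = events_to_frames(events, width=3, height=2, n_bins=2, t_start=0.0, t_end=10.0)
print(frames[1][0])  # OFF-polarity counts in the second bin
```

The counts can be clipped to 1 to obtain binary spike inputs or used directly as input currents to the first LIF layer.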

5.1.3 Network Construction

The SNN is designed using multiple layers of LIF neurons, with dynamic threshold mechanisms incorporated to control firing rates and maintain sparse activity. The architecture supports both feedforward and recurrent pathways, enabling flexibility depending on the task’s computational needs.

5.1.4

A hybrid learning strategy is implemented that combines:

Spike-Timing-Dependent Plasticity (STDP) for unsupervised temporal pattern learning

Surrogate gradient descent for supervised classification

Both mechanisms update synaptic weights, based on spike-timing relationships and membrane-potential gradients respectively, ensuring effective learning while preserving biological relevance.
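Simulators usually implement STDP online, using exponentially decaying spike traces instead of comparing every spike pair. The sketch below shows this trace form for one synapse; for spikes that do not coincide, it sums the exponential pair rule over all earlier spike pairs. The parameter values and the helper name `online_stdp` are assumptions. The paper does not specify how it interleaves this update with the surrogate-gradient step, so only the STDP side is shown.

```python
import math


def online_stdp(pre_spikes, post_spikes, w=0.5, dt=1.0, tau=20.0,
                a_plus=0.01, a_minus=0.012, w_min=0.0, w_max=1.0):
    """Trace-based STDP for one synapse over equal-length 0/1 spike trains."""
    decay = math.exp(-dt / tau)
    x_pre = x_post = 0.0  # exponentially decaying memories of recent spikes
    for s_pre, s_post in zip(pre_spikes, post_spikes):
        x_pre = x_pre * decay + s_pre
        x_post = x_post * decay + s_post
        if s_post:
            w += a_plus * x_pre    # post spike: potentiate by recent presynaptic activity
        if s_pre:
            w -= a_minus * x_post  # pre spike: depress by recent postsynaptic activity
        w = min(w_max, max(w_min, w))
    return w


pre, post = [0] * 30, [0] * 30
pre[5], post[10] = 1, 1  # pre leads post by 5 steps (5 ms at dt = 1 ms)
print(online_stdp(pre, post), online_stdp(post, pre))  # potentiation, then depression
```

The trace form needs only two state variables per synapse and one update per time step, which is why it maps well onto both simulators and neuromorphic hardware.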

5.1.5

The network undergoes repeated spike-based training cycles that involve spike generation, membrane potential updates, synaptic weight adjustments, and threshold modulation. Training continues until accuracy stabilizes and optimal spike sparsity is achieved. This simulation process allows controlled observation of the model’s learning behavior and temporal precision.
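The stopping condition above (accuracy stabilizes and spike sparsity reaches its target) can be made concrete with a plateau check. The patience window, minimum improvement, and target firing rate below are illustrative assumptions, since the paper does not report its criteria, and `should_stop` is a hypothetical helper.

```python
def should_stop(acc_history, firing_rate, patience=3, min_delta=0.001, target_rate=0.1):
    """Return True when validation accuracy has plateaued and activity is sparse enough.

    Plateau: the best accuracy in the last `patience` epochs is less than `min_delta`
    above the best accuracy seen before them. Sparsity: mean firing rate <= target_rate.
    """
    if len(acc_history) <= patience:
        return False  # not enough history to judge a plateau
    best_before = max(acc_history[:-patience])
    best_recent = max(acc_history[-patience:])
    plateaued = best_recent < best_before + min_delta
    return plateaued and firing_rate <= target_rate


history = [0.50, 0.70, 0.80, 0.800, 0.8005, 0.8004]
print(should_stop(history, firing_rate=0.05))  # plateaued and sparse
print(should_stop(history, firing_rate=0.20))  # plateaued but still too active
```

Requiring both conditions prevents training from stopping at an accurate but overly active network, which would forfeit the energy benefits of sparsity.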

5.2

After training, the SNN is deployed onto neuromorphic hardware platforms such as Intel Loihi, SpiNNaker, or FPGA-based accelerators. Hardware-specific tools are used to convert the software model into hardware-compatible structures, optimize neuron placement and event routing, and measure performance metrics such as energy usage, latency, and spike activity. This phase confirms the system’s real-time capability under practical constraints.
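Converting a trained model into hardware-compatible structures usually involves reducing weight precision, because many neuromorphic chips and FPGA designs store synaptic weights as low-bit integers. The sketch below shows generic symmetric uniform quantization. The 8-bit width and the helper names are assumptions and do not reflect the toolchain of any specific platform.

```python
def quantize_weights(weights, n_bits=8):
    """Symmetric uniform quantization of float weights to signed n-bit integers.

    Returns (integer weights, scale) such that w is approximately q * scale.
    """
    q_max = 2 ** (n_bits - 1) - 1                    # 127 for 8 bits
    w_abs_max = max(abs(w) for w in weights) or 1.0  # avoid dividing by zero for all-zero input
    scale = w_abs_max / q_max
    q = [max(-q_max, min(q_max, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map integer weights back to floats, e.g. to check accuracy after quantization."""
    return [v * scale for v in q]


w = [0.5, -0.25, 0.0, 1.0, -1.0]
q, scale = quantize_weights(w)
print(q, max(abs(a - b) for a, b in zip(w, dequantize(q, scale))))
```

When weights are divided by `scale`, the firing threshold (and any fixed input currents) must be divided by the same factor so that the integer network spikes at the same points as the floating-point one.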

The implemented model is evaluated on neuromorphic datasets to measure energy consumption, latency, throughput, accuracy, and hardware efficiency. Comparisons with traditional ANN/CNN models show significant reductions in power usage while maintaining competitive accuracy, demonstrating the suitability of the proposed SNN system for low-power, real-time applications.

Spiking Neural Networks offer a promising approach to creating real-time, energy-efficient AI systems inspired by the brain. This study proposed a hybrid training approach that combines gradient descent and STDP to enhance learning dynamics in adaptive neuron models. Running on neuromorphic hardware, these models exhibit low power consumption and latency while retaining high accuracy.

The method has potential uses in edge computing, robotics, and autonomous systems, where traditional deep learning may not be sufficient. Future research can concentrate on deeper integration with new neuromorphic platforms and on scaling up to more complex tasks.

Although the current work provides a strong foundation, there is room for further enhancement through expanded datasets, advanced automation techniques, and integration with emerging technologies. Future improvements can strengthen scalability, flexibility, and real-time adaptability. Overall, the project meets its objectives and makes meaningful contributions to its domain, paving the way for continued research and development.

The proposed system provides a strong foundation, yet there are several opportunities to enhance its efficiency, performance, and overall usability in future developments. One major direction for improvement is the integration of advanced machine learning models or deep learning frameworks, which can significantly improve accuracy and enable the system to adapt to new patterns over time. Expanding the dataset with more diverse, real-world samples will also strengthen the model’s reliability and reduce prediction errors.

Another important area for future work is real-time processing and cloud-based deployment. Running the system on scalable cloud platforms would let users access the service from any location and on any device, with faster results and improved flexibility. Mobile application support, offline functionality, and multi-language interfaces can further increase accessibility for a wider range of users.

Future enhancements may also include improved visualization tools, automated reporting, and integration with external APIs to enrich the data sources. Security features such as authentication, encryption, and role-based access can be added to make the solution more secure and enterprise-ready. Overall, the system has strong potential for continuous evolution, and future upgrades can transform it into a more intelligent, robust, and user-friendly solution suitable for large-scale applications.

[1] J. C. Horton, D. L. Adams, “The cortical column: a structure without a function,” Philos. Trans. R. Soc. B Biol. Sci. (2005), pp. 837–862.

[2] S. Sadeh, C. Clopath, “Inhibitory stabilization and cortical computation,” Nat. Rev. Neurosci. (2021), pp. 21–37.

[3] C. C. Wanjura, F. Marquardt, “Fully non-linear neuromorphic computing with linear wave scattering,” Am. Phys. Society (2024), pp. 1–18.

[4] L. Chua, “A promising route to neuromorphic vision,” Natl. Sci. Rev. (2021), pp. 1–2.

[5] M. Köster, T. Gruber, “Rhythms of human attention and memory: An embedded process perspective,” Front. Hum. Neurosci., 16 (2022), doi:10.3389/fnhum.2022.905837.

[6] S. Gepshtein, A. S. Pawar, S. Kwon, S. Savel’ev, T. D. Albright, “Spatially distributed computation in cortical circuits,” Sci. Adv., 8 (2022).

[7] C. Posch, T. Serrano-Gotarredona, B. Linares-Barranco, T. Delbruck, “Retinomorphic event-based vision sensors: Bioinspired cameras with spiking output,” Proc. IEEE (2014), pp. 1470–1484.

[8] G. Tang, A. Shah, K. P. Michmizos, “Spiking neural network on neuromorphic hardware for energy-efficient unidimensional SLAM,” IEEE/RSJ International Conference on Intelligent Robots and Systems (2019), pp. 1–6.

[9] Y. Noguchi, R. Kakigi, “Temporal codes of visual working memory in the human cerebral cortex: Brain rhythms associated with high memory capacity,” NeuroImage, 222 (2020).

[10] J. Xia, J. Chu, S. Leng, H. Ma, “Reservoir computing decoupling memory–nonlinearity trade-off,” Chaos (2023), Article 113120.