International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

Md. Mayn Uddin

Dept. of Electrical and Electronic Engineering, Jatiya Kabi Kazi Nazrul Islam University, Trishal, Mymensingh-2224, Bangladesh

Abstract - Outliers and inappropriate distance metrics remain major obstacles to improving the accuracy of K-Means clustering. Outliers distort centroid estimation, while conventional distance measures often fail to capture true similarity among data points. This paper proposes a unified modification to the K-Means algorithm that combines systematic outlier detection and elimination before centroid calculation with a novel adaptive distance metric for cluster assignment. By reducing the influence of anomalous data and refining similarity measurement, the modified algorithm achieves faster convergence and significantly higher clustering accuracy. An exploratory evaluation on nine benchmark multivariate datasets demonstrates up to 81% improvement in clustering performance compared to traditional K-Means. The study emphasizes the significance of robust preprocessing and metric design in unsupervised learning, and its practical implementation is illustrated using Python.

Key Words: K-Means Clustering, Outliers, Outlier Removal, Adaptive Distance Function.

1. INTRODUCTION

K-means is an unsupervised clustering algorithm designed to divide unlabeled data into a chosen number ("K") of groups. In other words, K-means finds observations that share important features and classifies them into clusters. A good clustering solution finds clusters such that the observations within each cluster are more similar to one another than to observations in other clusters. In K-means, each cluster is represented by its centroid (also called its "center of gravity"), and every data point is assigned to exactly one cluster. Centroids are points that represent the center (mean) of a cluster and do not necessarily have to be members of the dataset. The algorithm runs an iterative process until each data point is closer to the centroid of its own cluster than to the centroid of any other cluster, reducing the distance between points and their centroids at each step. For example, setting "k" to 2 groups the records into two clusters, and setting "k" to 4 groups the records into four clusters. K-means starts with randomly selected data points as the proposed centroids of the groups, iteratively recalculates the centroids, and converges to the final clustering of the data points.

Specifically, the procedure is as follows:

1. The algorithm randomly selects a centroid for every cluster. For example, if "k" is set to 3, the algorithm randomly picks 3 centroids.

2. K-means maps every data point in the dataset to the nearest centroid. That is, a data point is considered to belong to a cluster if it is closer to that cluster's centroid than to any other centroid.

3. For every cluster, the algorithm recalculates the centroid by taking the mean of all points in the cluster, which reduces the total within-cluster variance relative to the previous step. When a centroid changes, the algorithm reassigns points to the closest centroid.

4. The algorithm repeats the centroid calculation and point assignment until the total distance between the data points and their corresponding centroids is minimised, the maximum number of iterations is reached, or the centroid values no longer change.
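The four steps above can be sketched in plain Python as follows. This is a minimal illustration of standard K-means, not the modified algorithm proposed in this paper; the function name `kmeans` and its parameters (`max_iter`, `seed`) are hypothetical names chosen for the example.

```python
import math
import random


def kmeans(points, k, max_iter=100, seed=0):
    """Plain K-means on a list of equal-length tuples (illustrative sketch)."""
    rng = random.Random(seed)
    # Step 1: pick k distinct data points as the initial centroids.
    centroids = rng.sample(points, k)
    labels = None
    for _ in range(max_iter):
        # Step 2: assign every point to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda j: math.dist(p, centroids[j]))
            for p in points
        ]
        # Step 4 (stopping rule): stop once the assignments no longer change.
        if new_labels == labels:
            break
        labels = new_labels
        # Step 3: move each centroid to the mean of its assigned points.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # an empty cluster keeps its previous centroid
                centroids[j] = tuple(
                    sum(col) / len(members) for col in zip(*members)
                )
    return centroids, labels
```

Calling `kmeans(points, 2)` on a list of 2-D tuples returns the final centroids and one cluster label per point. The result depends on the random initialisation, which is why several restarts are commonly used in practice.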

Most data sets contain outliers. Their prevalence is even more noticeable in large datasets, since these are often collected by automated systems, which means that nobody is likely to be checking manually for irregularities. Modern sensing systems typically favour easy data collection over accuracy. Verification is usually the most expensive part, and people assume that outliers will be handled later. In general, outliers are data patterns that deviate from the standard or expected behaviour of the rest of the data [1]. Given this definition, outliers are neither bad nor good; they are simply outliers. They may correspond to fraud (finance), illegal hunting or deforestation (natural sciences), or a change in society's behaviour (social sciences), among other activities. These cases have something in common: they are all interesting. The interestingness or real-life relevance of outliers is a key feature of anomaly detection [1].

Figure 1: A collection of points in a two-dimensional space. There are two main clusters and some outliers.

The following are some of the common causes of outliers in a given data set:

Measurement Error - this is often caused when the measurement instrument used turns out to be faulty.

Data Entry Error - Human errors, such as mistakes made during data collection, recording, or entry, can cause outliers in data.

Experimental Error - These errors are caused during data extraction, experiment planning, or while executing an experiment.

Data Processing Error - These are caused when manipulation or extraction of the data set is performed.

Sampling Error - This happens when data is extracted or mixed from wrong or different sources.

Intentional Outlier - These are dummy outliers created to test detection methods.

Natural Outlier - When an outlier is not artificial, i.e. not caused by a mistake, it is a natural outlier. In the process of producing, collecting, processing and analyzing data, outliers can come from many sources and hide in many dimensions.

1.3 Effect of outliers on a data set

Outliers have a large effect on the results of data analysis and on various statistical measures. Some of the most common effects are as follows:

If the outliers are not randomly distributed, they can decrease normality.

They increase the error variance and reduce the power of statistical tests.

They can cause bias and/or influence estimates.

They can also violate the basic assumptions of regression as well as other statistical models.

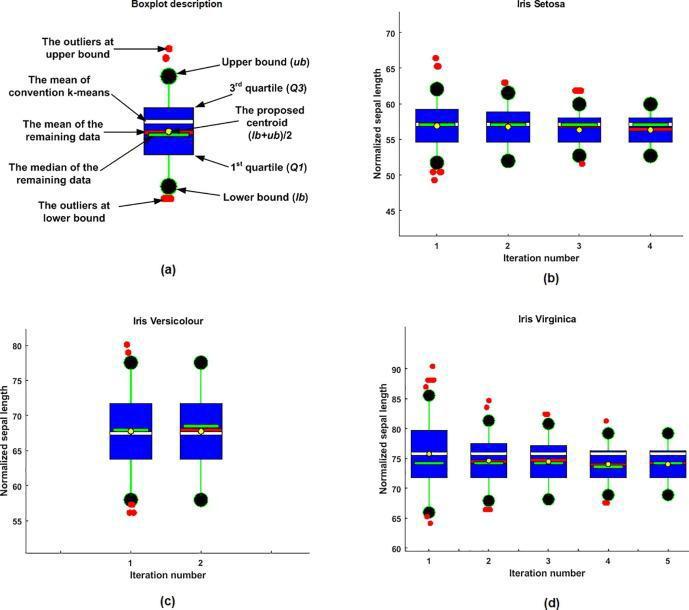

In terms of the outlier removal mechanism, Fig. 2 shows an example of the centroid calculation at each iteration of the outlier removal process. Fig. 2(a) illustrates an overview of the boxplot parameters. In this case, the data of the Sepal length attribute of the Iris dataset is checked in every cluster during the outlier removal process. In all three clusters, the remaining data at the final iteration is completely outlier-free, as shown in Fig. 2(b), (c) and (d). Note that at the first iteration, several outliers were detected in the Sepal length attribute of each cluster and set aside. Moreover, the centroid calculated by the proposed procedure differs from the centroid of the standard K-means at the final iteration of outlier removal. The proposed centroid tends to be close to the mean of the outlier-free data. The proposed centroid is located at the middle of the blue box, where the data is concentrated. In this cluster, the data median is shifted towards the lower boundary, as shown in Fig. 2(d).

Figure 2: K-means algorithm to detect and remove outliers from the dataset.
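The boxplot-based removal described above can be illustrated with a short Python sketch. It is only an approximation under stated assumptions: the conventional 1.5 × IQR whisker rule is assumed (the text does not give the exact boxplot parameters), and the helper names `iqr_filter` and `robust_centroid` are hypothetical.

```python
import statistics


def iqr_filter(values, whisker=1.5):
    """Repeatedly drop values outside the boxplot whiskers until none remain.

    Assumption: whiskers sit at Q1 - 1.5*IQR and Q3 + 1.5*IQR.
    """
    kept = list(values)
    while len(kept) >= 4:
        q1, _, q3 = statistics.quantiles(kept, n=4, method="inclusive")
        spread = q3 - q1
        low, high = q1 - whisker * spread, q3 + whisker * spread
        inliers = [v for v in kept if low <= v <= high]
        if len(inliers) == len(kept):
            break  # this iteration found no outliers
        kept = inliers
    return kept


def robust_centroid(cluster_points):
    """Per-attribute mean computed only from the values inside the whiskers."""
    return tuple(
        statistics.fmean(iqr_filter(column)) for column in zip(*cluster_points)
    )
```

Because each attribute is filtered independently, a point that is extreme in one attribute still contributes its other attributes to the centroid, and every point remains available for cluster assignment.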

Numerous studies have improved the clustering accuracy of the K-means algorithm using different methods of outlier removal. In terms of outlier detection based on distance metrics, several studies have identified outliers using the distance between a data point and its nearest centroid (Sarvani et al., 2019; Barai (Deb) and Dey, 2017). In these methods, a data point with a notably larger distance to its nearest centroid is identified as an outlier. Data points that are both sparse and far from their centroids are also considered outliers, as presented in He et al. (2020). In a very different approach, local search methods (Gupta et al., 2017; Friggstad et al., 2019) are used to help K-means detect outliers. The local search tentatively discards some data points from the cluster in order to minimise the objective function.
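The distance-to-nearest-centroid idea can be sketched as below. The cutoff rule (mean plus a multiple of the standard deviation of the distances) is an assumption made for illustration; the cited studies may use different thresholds. The function name `flag_by_centroid_distance` and the parameter `n_std` are hypothetical.

```python
import math
import statistics


def flag_by_centroid_distance(points, centroids, n_std=2.0):
    """Flag points that lie unusually far from their nearest centroid."""
    # Distance from each point to its closest centroid.
    dists = [min(math.dist(p, c) for c in centroids) for p in points]
    # Assumed rule: a point is an outlier if its distance exceeds
    # mean + n_std * (population standard deviation) of all distances.
    cutoff = statistics.fmean(dists) + n_std * statistics.pstdev(dists)
    return [d > cutoff for d in dists]
```

A global cutoff like this is simple but treats all clusters alike; a per-cluster cutoff would be a natural variation when clusters differ in spread.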

If removing those data points reduces the objective function, they are considered outliers and gathered in a separate cluster. In terms of preprocessing methods, k-means++ is used as an additional filtering step in Im et al. (2020) to discard data points as outliers before applying the standard K-means. Despite the encouraging clustering results from these methods, clustering was performed only on the remaining, outlier-free data. The outlier data is completely removed and not classified into any known cluster as originally collected.

In other fields, outlier detection is used as an advantage to separate an object from its background, as in image processing (Tu et al., 2020; Tu et al., 2019). However, few studies have tried to mitigate the effects of outliers on the mean calculation while still classifying all data into known clusters as originally collected. In Olukanmi et al. (2017), k-means# is proposed to eliminate the effects of outliers on the cluster centroids. The detected outliers are excluded only from the mean calculation and are included afterwards in the clustering process. In this way, the effect of the outliers on the centroid calculation is mitigated and the clustering accuracy improves. Although the proposed method outperformed the standard K-means, a data point with outlying attributes was discarded entirely from the centroid calculation. In this case, the algorithm cannot detect the presence of an outlier in each attribute independently.

Improvement of clustering accuracy from the standpoint of the distance metric is demonstrated in various studies. A probabilistic distance for ICA mixture models (PDI) is proposed in Safont et al. (2018). The distance measures the dissimilarity between the probability densities of the data, specifically with respect to the parameters of each ICAMM model. The source separation of ICAMM is improved by using PDI, especially after adjusting a threshold value. The method performs well at detecting faults and variation in electroencephalography (EEG) signals. In Meng et al. (2018), several orders of derivative information are measured between the compared vectors and added to the distance metric. The added derivative information is useful for capturing the differences between the compared functional data. However, this method is computationally complex because several derivative orders of the functional data must be calculated.

In terms of hybrid distance metrics, which are commonly used for improving clustering accuracy, a recent distance metric named "direction-aware" is developed in Gu et al. (2017) to boost the clustering accuracy of K-means. The proposed distance combines the standard Euclidean distance, which handles spatial similarity, with the cosine metric, which captures shape similarity. Compared to the original metrics, the hybridization of both metrics into one has improved the clustering purity. In addition, a weighted sum of the Euclidean and Pearson distances is presented in Immink and Weber (2015), using a weight for the summation of the Euclidean and Pearson coefficients.

The hybrid distance improved the similarity between the compared signals when significant noise is added. The clustering accuracy may be improved further provided that outliers are removed before computing the cluster's centroid, as discussed in this section. Combining the advantages of commonly used distance metrics such as Euclidean, cosine and correlation into a new single similarity metric can process the data from different perspectives. The potential of hybrid distance approaches for improving clustering accuracy is high, especially after eliminating the effect of outliers on the centroids of data clusters [3].
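A hybrid distance of the kind discussed above can be sketched as a weighted sum of the Euclidean and cosine distances. This is a generic illustration, not the direction-aware metric of Gu et al. nor the adaptive metric proposed in this paper; the weight `alpha` and the function names are hypothetical.

```python
import math


def cosine_distance(a, b):
    """1 - cosine similarity; defined as 0.0 when either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.hypot(*a) * math.hypot(*b)
    if norm == 0:
        return 0.0  # assumption: treat a zero vector as having no direction
    return 1.0 - dot / norm


def hybrid_distance(a, b, alpha=0.5):
    """Weighted sum: alpha * Euclidean (spatial) + (1 - alpha) * cosine (shape)."""
    return alpha * math.dist(a, b) + (1.0 - alpha) * cosine_distance(a, b)
```

With `alpha=1.0` the function reduces to the Euclidean distance and with `alpha=0.0` to the cosine distance. In practice the two terms have different scales, so the features are usually standardised before the terms are combined.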

Every machine learning engineer wants their algorithms to make accurate predictions. Such learning algorithms are usually classified as supervised or unsupervised. K-means clustering is an unsupervised method that needs no labeled response for the given input data, and it is a widely used approach for clustering. Generally, practitioners begin by learning about the structure of the dataset. K-means clusters data points into distinct, non-overlapping groups. It works well when the clusters have a spherical shape. However, it suffers when the geometric shapes of the clusters depart from spherical shapes. Moreover, it does not learn the number of clusters from the data and requires it to be specified in advance.

Anomalies or outliers are described as observations that deviate sufficiently from the remaining observations to suggest that they were caused by a different process (Hawkins, 1980). These anomalies can result from processes such as measurement errors, or from specific events such as stack events and climatic events (Chandola et al., 2009). One of the key areas of research within the data processing community is the identification of outliers.

Many applications have benefited from outlier identification, including fraud detection, transportation networks, and military surveillance. For example, field yield data has shown several times how outliers, even in small numbers, affect the quality of the entire dataset (Griffin et al., 2008; Taylor et al., 2007). Interestingly, depending on the scope, one may want to remove outliers from the dataset (because they affect quality and relevance), or one may be particularly interested in finding and identifying the outliers that correspond to a particular event (for example, a sharp rise in temperature may indicate a fire outbreak) [4].

Cluster analysis is a basic task of data processing and machine learning. Its purpose is to divide a set of data points into different groups so that similar points are assigned to the same cluster. Cluster analysis has been studied for a very long time and has been widely investigated thanks to its wide range of applications, from customer segmentation [5] to information retrieval [6], and from recommender systems [7] to resource allocation [8]. K-means is one of the leading clustering techniques; it finds K prototypes and represents each data point by its closest centroid. Developed for graph partitioning, spectral clustering minimizes the weights of the cut edges to obtain individual subgraphs of nearly uniform size. The Gaussian mixture model estimates K normal distributions, with means and variances, to fit the data.

Although much effort has been put into cluster analysis, most current methods assume that each data point must be assigned a cluster label. That is, they assume there are no anomalous data points in the clustering process. Unfortunately, this is not always the case, especially for unsupervised tasks. Potential anomalies or outliers inevitably degrade clustering performance. For example, a few outliers can easily destroy a cluster structure derived from K-means and produce a strange distribution in a Gaussian mixture model. Several robust clustering techniques have been proposed to recover clean data and to handle outliers and noisy data. Distance function learning aims to find a robust distance function that resists outliers [9]. The L1 norm is used to mitigate the negative effects of outliers on the cluster structure [10]. In addition, some methods aim to find more effective representations under certain constraints. The low-rank representation assumes that the original or clean data lies on a low-dimensional manifold [11].

Sparse subspace clustering uses sparse coefficients for representation learning to exploit the self-expressiveness property. More recently, consensus clustering first creates basic partitions and then derives the required partition from a robust partition representation. Note that these methods assign a cluster label to all data points instead of explicitly removing the anomalous points. Several unsupervised outlier detection methods have been proposed to address the negative effects of outliers during the clustering process. Scores are usually calculated per data point, measuring the degree of outlierness and returning the top K outlier candidates. The local outlier factor is one of the common density-based methods; it identifies outliers by comparing the local densities of data points and their neighbors [12].

Similarly, local distance-based outlier detection uses an object's relative position to its neighbors to determine how far the object deviates from them [13]. Angle-based outlier detection focuses on the variance of the angles between the difference vectors from one point to the other points. For an outlier, the angles formed with pairs of randomly selected points vary little [13], [14]. Other typical methods include ensemble-based iForest [15], eigenvector-based OPCA [16], and cluster-based TONMF [17].

Outlier detection and cluster analysis are typically performed as two separate tasks, although outlier detection methods are often treated as pre-processing for cluster analysis. In fact, the two are tightly coupled. Cluster structures are easily destroyed by a few outliers [18]. Outliers, in turn, are defined by the concept of clusters: a point is detected as an outlier because it does not belong to any cluster [11].

However, integrated frameworks that handle cluster analysis and outlier detection together make up only a small part of the available work. DBSCAN is one of the pioneering studies of density-based cluster analysis, with outliers produced as additional output [19]. Here, all data points are categorized according to density into three categories: core points, border points connected to core points, and noise points. Strictly speaking, DBSCAN does not belong to joint cluster analysis and outlier detection, since it first identifies and removes outliers and then performs clustering. As far as we know, K-means-- [20] is the first work in this direction.

It aims to detect outliers and partition the remaining points into K clusters. Instances far from their nearest centroid are considered outliers during the clustering process. Since this problem is in essence a discrete optimization problem, it is natural that Lagrangian Relaxation (LP) [21] formulates clustering with outliers as an integer programming problem with several constraints, which requires the cluster creation costs as an input parameter. Although these pioneering works offer new directions for joint clustering and outlier detection, the spherical structure assumption of K-means and the original feature space restrict its capacity for complex data analysis, and the setting of input parameters and the time complexity of LP make it infeasible for large-scale data.
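The idea of treating the points farthest from their nearest centroid as outliers during clustering can be sketched as follows. This is a simplified reading of the K-means-- scheme rather than a faithful reproduction of the published algorithm; the function name, the explicit `init_centroids` argument and the `n_outliers` parameter are assumptions made for the example.

```python
import math


def kmeans_minus_minus(points, init_centroids, n_outliers, max_iter=100):
    """Joint clustering and outlier detection (illustrative sketch)."""
    centroids = [tuple(c) for c in init_centroids]
    k = len(centroids)
    nearest, outliers = [], set()
    for _ in range(max_iter):
        # Assign each point to its nearest centroid and record the distance.
        nearest = [
            min(range(k), key=lambda j: math.dist(p, centroids[j]))
            for p in points
        ]
        dists = [math.dist(p, centroids[j]) for p, j in zip(points, nearest)]
        # The n_outliers points farthest from their centroid are set aside.
        ranked = sorted(range(len(points)), key=dists.__getitem__, reverse=True)
        outliers = set(ranked[:n_outliers])
        # Recompute each centroid from its non-outlier members only.
        new_centroids = []
        for j in range(k):
            members = [
                p for i, p in enumerate(points)
                if nearest[i] == j and i not in outliers
            ]
            if members:
                new_centroids.append(
                    tuple(sum(col) / len(members) for col in zip(*members))
                )
            else:
                new_centroids.append(centroids[j])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break
        centroids = new_centroids
    return centroids, nearest, sorted(outliers)
```

The number of outliers must be supplied in advance here, and the result depends on the initial centroids; both points are practical limitations of this kind of scheme.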

In this paper, we address the joint cluster analysis and outlier detection problem and propose the Clustering with Outlier Removal (COR) algorithm. Since outliers depend on the concept of clusters, we transform the original feature space into the partition space by running a clustering algorithm (e.g. K-means) with different parameters to obtain a set of diverse basic partitions. In this way, the continuous data are mapped into a binary space through one-hot encoding of the basic partitions. In the partition space, an objective function based on Holoentropy [20] is designed to increase the compactness of each cluster after some outliers are removed.
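The mapping from basic partitions to a binary space can be illustrated with a small sketch. It shows only the one-hot encoding step, under the assumption that each basic partition is given as a list of cluster labels (one per data point); the function name `one_hot_partitions` is hypothetical and the Holoentropy objective is not implemented here.

```python
def one_hot_partitions(partitions):
    """Concatenate the one-hot encodings of several basic partitions.

    partitions: list of label lists, each with one label per data point.
    Returns one binary row per data point.
    """
    n_points = len(partitions[0])
    rows = [[] for _ in range(n_points)]
    for labels in partitions:
        cluster_ids = sorted(set(labels))  # one binary column per cluster
        for i, lab in enumerate(labels):
            rows[i].extend(1 if lab == c else 0 for c in cluster_ids)
    return rows


# Two basic partitions of three points: one with 2 clusters, one with 3.
binary = one_hot_partitions([[0, 0, 1], [0, 1, 2]])
print(binary)  # [[1, 0, 1, 0, 0], [1, 0, 0, 1, 0], [0, 1, 0, 0, 1]]
```

Each row contains exactly one 1 per basic partition, so points that are repeatedly grouped together share many columns in this binary representation.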

With further analysis, we transform part of the objective function into a K-means optimization. To offer a complete and neat solution, an auxiliary binary matrix derived from the basic partitions is introduced. COR is then performed on the concatenated matrix, which completely and efficiently solves the challenging problem through a unified K-means with theoretical support.

To evaluate the performance of COR, we conduct extensive experiments on numerous data sets in various domains. Compared with K-means and various outlier detection methods, COR outperforms its competitors in terms of cluster validity and outlier detection as measured by four metrics. Moreover, we demonstrate the high efficiency of COR, which suggests it is suitable for large-scale and high-dimensional data analysis. Some key factors in COR are further analysed for practical use. Finally, an application to flight trajectories is provided to illustrate the effectiveness of COR in a real-world scenario. We summarise our main contributions as follows.

i. As far as we know, we are the first to perform clustering with outlier removal in the partition space, achieving consensus clustering and outlier detection at the same time.

ii. Based on Holoentropy, we design the objective function from the perspective of outlier detection, which is partially solved by K-means clustering. By introducing an auxiliary binary matrix, we completely transform the non-K-means clustering problem into a K-means optimization with theoretical support.

iii. Extensive experimental results show that the proposed COR is far more effective and efficient than its state-of-the-art competitors in terms of cluster validity and outlier detection.

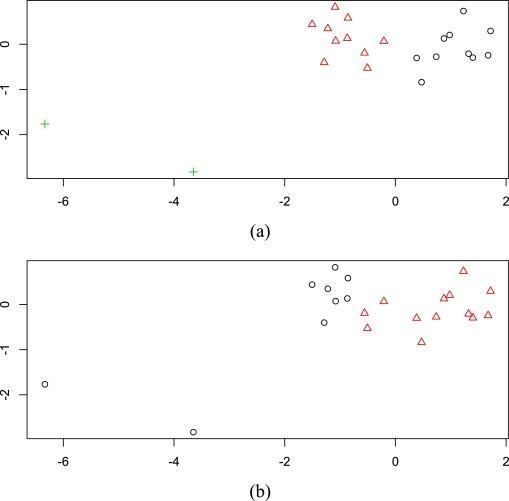

The goal of data clustering is to identify homogeneous groups or clusters in a set of objects. In other words, data clustering aims to divide a collection of objects into groups or clusters such that objects within the same cluster are more similar to one another than to objects from other clusters. As an unsupervised learning process, data clustering is often used as a preliminary step for data analytics. For instance, data clustering is employed to identify the patterns hidden in gene expression data, to produce good-quality clusters or summaries for big data in order to handle the associated storage and analytical issues, and to select representative insurance policies from a large portfolio in order to build metamodels. Many clustering algorithms have been developed within the past sixty years. Among these, the K-means algorithm is one of the oldest and most commonly used. Despite being widely employed, the K-means algorithm has several drawbacks. One drawback is that it is sensitive to noisy data and outliers. For example, the K-means algorithm is not able to correctly recover the two clusters shown in Fig. 3(a) because of the outliers. As can be seen from Fig. 3(b), three points were incorrectly grouped [6].

Figure 3: An illustration showing that the k-means algorithm is sensitive to outliers.

(a) A data set with two clusters and two outliers. The two clusters are plotted by triangles and circles, respectively. The two outliers are denoted by plus signs. (b) Two clusters found by the k-means algorithm. The two found clusters are plotted by triangles and circles, respectively.

The K-means algorithm is an iterative algorithm that attempts to divide a dataset into K predefined, non-overlapping subgroups (clusters), where each data point belongs to only one group. It tries to make the data points within a cluster as similar as possible while keeping the clusters as distinct (far apart) as possible. It assigns data points to clusters so that the sum of the squared distances between the data points and the cluster's centroid (the arithmetic mean of all data points belonging to that cluster) is minimized. The less variation within a cluster, the more homogeneous (similar) the data points within the same cluster. The K-means algorithm works as follows:

i. Specify the number of clusters K.

ii. Initialize the centroids by first shuffling the dataset and then randomly selecting K data points as the centroids, without replacement.

iii. Keep iterating until the centroids stop changing, i.e. until the assignment of data points to clusters no longer changes.

iv. Calculate the sum of the squared distances between the data points and every centroid.

v. Assign each data point to the closest cluster (centroid).

vi. Calculate the centroid of each cluster by taking the average of all data points that belong to that cluster.

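The approach K-means follows to solve the problem is called expectation-maximization. The E-step assigns the data points to the nearest cluster. The M-step calculates the centroid of each cluster. Below is a breakdown of how to solve it mathematically.

The objective function is:

$$J = \sum_{i=1}^{N} \sum_{k=1}^{K} w_{ik} \, \lVert x_i - \mu_k \rVert^2$$

where $w_{ik} = 1$ for data point $x_i$ if it belongs to cluster $k$; otherwise, $w_{ik} = 0$. Also, $\mu_k$ is the centroid of $x_i$'s cluster. It is a minimization problem in two parts. We first minimize $J$ w.r.t. $w_{ik}$ and treat $\mu_k$ as fixed. Then we minimize $J$ w.r.t. $\mu_k$ and treat $w_{ik}$ as fixed. Technically speaking, we differentiate $J$ w.r.t. $w_{ik}$ first and update the cluster assignments (E-step). Then we differentiate $J$ w.r.t. $\mu_k$ and recompute the centroids after the cluster assignments from the previous step (M-step). Therefore, the E-step is:

$$w_{ik} = \begin{cases} 1 & \text{if } k = \arg\min_{j} \lVert x_i - \mu_j \rVert^2 \\ 0 & \text{otherwise} \end{cases}$$

In other words, assign the data point $x_i$ to the closest cluster, judged by its squared distance from the cluster's centroid [22]. The M-step is:

$$\frac{\partial J}{\partial \mu_k} = -2 \sum_{i=1}^{N} w_{ik} \, (x_i - \mu_k) = 0 \quad \Rightarrow \quad \mu_k = \frac{\sum_{i=1}^{N} w_{ik} \, x_i}{\sum_{i=1}^{N} w_{ik}}$$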
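The alternating E-step and M-step described above can be sketched in plain Python. The sketch uses cluster labels in place of the indicator variables w_ik (label k for point i corresponds to w_ik = 1); the function names `e_step`, `m_step` and `objective` are hypothetical.

```python
import math


def e_step(points, centroids):
    """E-step: assign each point to the centroid with the smallest distance."""
    return [
        min(range(len(centroids)), key=lambda k: math.dist(x, centroids[k]))
        for x in points
    ]


def m_step(points, labels, centroids):
    """M-step: each centroid becomes the mean of the points assigned to it."""
    updated = []
    for k, old in enumerate(centroids):
        members = [x for x, lab in zip(points, labels) if lab == k]
        if members:
            updated.append(tuple(sum(col) / len(members) for col in zip(*members)))
        else:
            updated.append(old)  # an empty cluster keeps its centroid
    return updated


def objective(points, labels, centroids):
    """J: sum of squared distances between points and their assigned centroid."""
    return sum(math.dist(x, centroids[lab]) ** 2 for x, lab in zip(points, labels))
```

Neither step can increase J: the E-step picks the closest centroid for each point, and the mean minimises the sum of squared distances within a cluster. Evaluating `objective` before and after an `m_step` call on a small data set shows this directly.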

5.1 Few things to notice here:

1. Clustering algorithms, including K-means, use distance-based measurements to determine the similarity between data points. Because features usually have different units of measurement, such as age and income, it is recommended to standardize the data so that each feature has a mean of 0 and a standard deviation of 1.

2. Given the iterative nature of K-means and the random initialization of the centroids at the start of the algorithm, different initializations can result in different clusters. This is because the algorithm may get stuck in a local optimum and fail to converge to the global optimum. Therefore, it is advisable to run the algorithm with different centroid initializations and select the run with the smallest sum of squared distances.

3. Cluster assignments that no longer change are equivalent to no change in the within-cluster variation.
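The standardization recommended in the first note can be sketched as follows. The function name `standardize` is hypothetical, and the population standard deviation is assumed; a constant feature is left at zero rather than divided by zero.

```python
import statistics


def standardize(rows):
    """Rescale every feature (column) to mean 0 and standard deviation 1."""
    columns = list(zip(*rows))
    means = [statistics.fmean(col) for col in columns]
    # A constant column has zero spread; divide by 1.0 to avoid a zero division.
    stds = [statistics.pstdev(col) or 1.0 for col in columns]
    return [
        tuple((v - m) / s for v, m, s in zip(row, means, stds))
        for row in rows
    ]


# Age (years) and income differ by orders of magnitude before scaling.
scaled = standardize([(20, 20000), (30, 40000), (40, 60000)])
```

After scaling, both features contribute comparably to a Euclidean distance, whereas on the raw values the income column would dominate.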

5.2 Steps for solving the problem:

a. The data objects are assumed to lie in a vector space.

b. K-means is an algorithm for clustering N data points into K disjoint subsets S, each containing data points, so as to minimize the sum-of-squares criterion.

c. Simply put, K-means clustering is an algorithm for grouping objects into k groups.

d. K is a positive integer.

This paper proposes a modified K-means algorithm to improve data clustering; both online clustering and large-scale data clustering are outside the scope of this work. The K-means approach relies on spherical clusters in which the data points concentrate around the cluster's centroid. K-means splits a set of data points X = {x1, x2, x3, ⋯, xN} into a known number k of clusters. K-means randomly selects a set of k centroids C = {c1, c2, c3, ⋯, ck}, where k ≤ N. Thereafter, each data point xi is assigned to the closest cluster Cj based on the smallest Euclidean distance [24].

The Euclidean distance formula is used to find the distance between two points in a plane. The formula states that the distance between two points $(x_1, y_1)$ and $(x_2, y_2)$ is $d = \sqrt{(x_2 - x_1)^2 + (y_2 - y_1)^2}$.
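As a small illustration, the Euclidean distance and the nearest-centroid assignment it supports can be written in Python as below. The sketch generalises the two-dimensional formula to any number of coordinates; the function names are hypothetical.

```python
import math


def euclidean(a, b):
    """Square root of the summed squared coordinate differences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def nearest_centroid(point, centroids):
    """Index of the centroid with the smallest Euclidean distance to point."""
    return min(range(len(centroids)), key=lambda j: euclidean(point, centroids[j]))


print(euclidean((0, 0), (3, 4)))  # 5.0
```

For two-dimensional points this reduces to the formula given above; the standard library function `math.dist` computes the same quantity.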

5.3 Demonstration of the standard algorithm

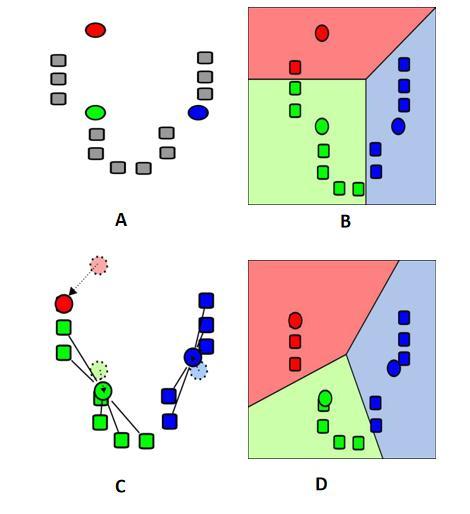

Figure 4: Standard k-means clustering process

A. k initial "means" (in this case k=3) are randomly generated within the data domain (shown in colour).

B. k clusters are created by associating every observation with the closest mean. The partitions here represent the Voronoi diagram generated by the means.

C. The centroid of each of the k clusters becomes the new mean.

D. Steps B and C are repeated until convergence has been reached [7].

5.4 The advantages of the algorithm are:

• Generally high efficiency with simple implementation.

• High-quality clustering.

• Possibility of parallelization.

• The existence of numerous modifications.

5.5 The disadvantages of the algorithm are:

• The number of clusters is a parameter of the calculation.

• Sensitivity to initial conditions - the initialization of the cluster centres significantly influences the clustering results.

• Sensitivity to outliers and noise - outliers that lie far from the centres of their clusters are still taken into account when calculating those centres.

• The chance of converging to a local optimum - an iterative approach does not guarantee reaching an optimal solution [8].

Conclusion

In this paper we presented outlier detection methods in both K-Means and Hierarchical Clustering. Removing outliers is a very important task, and we proposed algorithms for doing so. We evaluated the proposed algorithm on benchmark datasets, and the results indicate that it is more efficient than the standard algorithm: after removing the outliers, the clustering accuracy increased. The approach still needs to be tested on more complex datasets, and future work requires an approach applicable to varying datasets.

[1] V. Chandola, A. Banerjee and V. Kumar, "Anomaly Detection: A Survey," 2009.

[2] W. T. Williams, "Principles of Clustering," Annual Review of Ecology and Systematics, vol. 2, pp. 303-326, 1971.

[3] J. Parienti and O. Kuss, "Cluster-crossover design: A method for limiting clusters level effect in community-intervention studies," Contemporary Clinical Trials, vol. 28, no. 3, pp. 316-323, May 2007.

[4] Nawaf H. M. M. Shrifan, Muhammad F. Akbar and Noor Ashidi Mat Isa, "An Adaptive Outlier Removal Aided K-Means Clustering Algorithm," Computer and Information Sciences, available online 13 July 2021.

[5] C. Tsai, Y. Hu and Y. Lu, "Customer Segmentation Issues and Strategies for An Automobile Dealership With Two Clustering Techniques," Expert Systems, vol. 32, no. 1, pp. 65–76, 2015.

[6] R. Campos, G. Dias, A. M. Jorge and A. Jatowt, "Survey of Temporal Information Retrieval and Related Applications," ACM Computing Surveys, vol. 47, no. 2, p. 15, 2015.

[7] A. Shepitsen, J. Gemmell, B. Mobasher and R. Burke, "Personalised Recommendation in Social Tagging Systems Using Hierarchical Clustering," in Proceedings of ACM Conference on Recommender Systems, 2008.

[8] R. Grandl, G. Ananthanarayanan, S. Kandula, S. Rao and A. Akella, "Multi-Resource Packing for Cluster Schedulers," ACM SIGCOMM Computer Communication Review, vol. 44, no. 4, pp. 455–466, 2015.

[9] J. V. Davis, B. Kulis, P. Jain, S. Sra and I. S. Dhillon, "Information Theoretic Metric Learning," in Proceedings of International Conference on Machine Learning.

[10] C. Ding, D. Zhou, X. He and H. Zha, "R1-pca: Rotational Invariant L1-norm Principal Component Analysis for Robust Subspace Factorization," in Proceedings of International Conference on Machine Learning, 2006.

[11] G. Liu, Z. Lin and Y. Yu, "Robust Subspace Segmentation by Low-Rank Representation," in Proceedings of International Conference on Machine Learning, 2010.

[12] M. M. Breunig, H.-P. Kriegel, R. T. Ng and J. Sander, "Lof: Identifying Density-Based Local Outliers," in ACM Sigmod Record, vol. 29, no. 2, 2000, pp. 93–104.

[13] K. Zhang, M. Hutter and H. Jin, "A New Local Distance-Based Outlier Detection Approach for Scattered Real-World Data," in Proceedings of Pacific-Asia Conference on Knowledge Discovery and Data Mining, 2009.

[14] H. Kriegel and A. Zimek, "Angle-Based Outlier Detection in High Dimensional Data," in Proceedings of ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2008.

[15] N. Pham and R. Pagh, "A Near-Linear Time Approximation Algorithm for Angle-Based Outlier Detection in High-Dimensional Data," in Proceedings of ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 2012.

[16] F. T. Liu, K. M. Ting and Z.-H. Zhou, "Isolation Forest," in Proceedings of IEEE International Conference on Data Mining, 2008.

[17] Y. Lee, Y. Yeh and Y. Wang, "Anomaly Detection Via Online Oversampling Principal Component Analysis," IEEE Transactions on Knowledge and Data Engineering, vol. 25, no. 7, pp. 1460–1470, 2013.

[18] R. Kannan, H. Woo, C. C. Aggarwal and H. Park, "Outlier Detection for Text Data," in Proceedings of SIAM International Conference on Data Mining, 2017.

[19] A. Georgogiannis, "Lof: Identifying Density-Based Local Outlier," in Proceedings of Advances in Neural Information Processing Systems, 2016.

[20] M. Ester, H.-P. Kriegel, J. Sander, X. Xu et al., "A Density-Based Algorithm for Discovering Clusters in Large Spatial Databases With Noise," in Proceedings of ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, 1996.

[21] S. Chawla and A. Gionis, "K-Means-: A Unified Approach to Clustering and Outlier Detection," in Proceedings of SIAM International Conference on Data Mining, 2013.