International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN:2395-0072


Kindipsingh Mallhi1, Aditi Chhajed2, Ojas Binakye3, Priyansh Katariya4 and Prof. Pramila M. Chawan5

1,2,3,4 B.Tech Student, Dept. of Computer Engineering and IT, VJTI College, Mumbai, Maharashtra, India.
5 Associate Professor, Dept. of Computer Engineering and IT, VJTI College, Mumbai, Maharashtra, India.

Abstract - Image inpainting and outpainting are crucial problems in computer vision, with applications ranging from the restoration of historical artworks to modern photo editing, privacy preservation, and medical imaging. Traditional approaches such as diffusion and patch-based methods often fail to preserve semantic consistency for large missing regions. Recent advances in deep learning, especially Convolutional Neural Networks (CNN), Generative Adversarial Networks (GAN), and Partial Convolutional Networks (PConv), have substantially advanced this domain. This paper surveys existing approaches, discusses their merits and limitations, and positions our proposed application of PConv to image inpainting and outpainting as a robust, semantically consistent solution.

Key Words: Image Inpainting, Image Outpainting, Deep Learning, CNN, GAN, Partial Convolution, Image Restoration

1.1 Brief overview of image inpainting and outpainting

Image inpainting refers to the process of reconstructing lost or deteriorated parts of an image so that the restored regions are visually plausible and semantically coherent. Outpainting extends an image beyond its original boundaries while maintaining contextual consistency. Both problems are of immense significance in fields like digital heritage restoration, entertainment, e-commerce, and medical imaging.
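Outpainting can be posed as inpainting on an enlarged canvas: the original image is placed in the centre of a larger grid and the new border is marked as missing, so a mask-aware model treats it as one more hole. The sketch below is a minimal illustration under that assumption (a grayscale image as a list of rows, a hypothetical helper `outpaint_inputs`, and a mask that is 1 for known pixels and 0 for pixels to synthesise); it is not taken from a specific published method.

```python
def outpaint_inputs(image, pad):
    """Place `image` (a list of rows) on a canvas enlarged by `pad` pixels per side.

    Returns (canvas, mask): canvas holds the original pixels and zeros in the
    border; mask is 1 for known pixels and 0 for the border to be synthesised.
    """
    h, w = len(image), len(image[0])
    canvas = [[0.0] * (w + 2 * pad) for _ in range(h + 2 * pad)]
    mask = [[0] * (w + 2 * pad) for _ in range(h + 2 * pad)]
    for r in range(h):
        for c in range(w):
            canvas[r + pad][c + pad] = image[r][c]
            mask[r + pad][c + pad] = 1
    return canvas, mask


canvas, mask = outpaint_inputs([[0.2, 0.4], [0.6, 0.8]], pad=1)
for row in mask:
    print(row)
# [0, 0, 0, 0]
# [0, 1, 1, 0]
# [0, 1, 1, 0]
# [0, 0, 0, 0]
```

The same canvas and mask can then be fed to an inpainting network unchanged, which is why a single mask-aware model can in principle serve both tasks.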

Early traditional methods relied on pixel diffusion and patch-based texture synthesis. While these approaches were widely used, they were computationally expensive and lacked semantic awareness, often producing unrealistic or repetitive patterns. The advent of deep learning introduced powerful alternatives such as Convolutional Neural Networks (CNNs), Generative Adversarial Networks (GANs), and Partial Convolutional Networks (PConv), which leverage large-scale datasets and semantic feature learning to produce realistic reconstructions.

This survey consolidates the progress across these methods, evaluates their strengths and limitations, and establishes the research gap for our project focused on PConv for inpainting and outpainting.

Image inpainting and outpainting deal with the reconstruction of missing or corrupted regions and the extension of image boundaries while ensuring that the generated content is visually plausible and semantically consistent. Traditional techniques, such as diffusion-based interpolation and patch-based texture synthesis, struggle with large missing regions and lack contextual awareness.
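To make the limitation of diffusion-based interpolation concrete, the sketch below implements a basic harmonic (Laplace) fill, one simple member of this family: every missing pixel is repeatedly replaced by the average of its four neighbours while known pixels stay fixed. It is an illustrative assumption rather than a specific published algorithm, and the name `diffuse_fill` is hypothetical. Because the result is a smooth average of the hole boundary, it works for thin scratches but cannot recreate texture or structure inside large holes.

```python
def diffuse_fill(image, mask, iterations=200):
    """Harmonic inpainting: iteratively average the 4-neighbours of each hole pixel.

    image: list of rows of floats; mask: same shape, 1 = known, 0 = missing.
    Known pixels are never modified.
    """
    h, w = len(image), len(image[0])
    out = [row[:] for row in image]
    for _ in range(iterations):
        nxt = [row[:] for row in out]
        for r in range(h):
            for c in range(w):
                if mask[r][c]:
                    continue  # known pixel: keep the original value
                nbrs = [out[rr][cc]
                        for rr, cc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                        if 0 <= rr < h and 0 <= cc < w]
                nxt[r][c] = sum(nbrs) / len(nbrs)
        out = nxt
    return out


# A horizontal ramp with the middle column missing (initialised to 0).
ramp = [[0.0, 0.25, 0.0, 0.75, 1.0] for _ in range(3)]
hole = [[0 if c == 2 else 1 for c in range(5)] for _ in range(3)]
filled = diffuse_fill(ramp, hole)
print([round(filled[r][2], 3) for r in range(3)])
# [0.5, 0.5, 0.5]
```

On this smooth ramp the fill is exact; on a textured region the same averaging would produce a blurred patch, which is the weakness the deep learning methods surveyed here try to overcome.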

Although deep learning has significantly improved results, several challenges remain unresolved. Maintaining semantic consistency across diverse scenes, handling irregularly shaped holes, and preserving fine details without blurriness are still difficult. Moreover, models must generalize across different image types, from natural landscapes to medical scans, while balancing reconstruction quality with computational efficiency. These challenges highlight the need for advanced frameworks such as PConv that can dynamically adapt to missing regions and improve both accuracy and stability.

The motivation for this study arises from the growing demand for reliable image completion across multiple domains. In cultural heritage, it is vital for restoring damaged artworks, wall paintings, and manuscripts. In healthcare, medical imaging often requires reconstructing incomplete scans for accurate diagnosis. In everyday applications such as photo editing, privacy preservation, and digital content creation, users expect seamless object removal, background completion, and boundary extension.

Similarly, industries like entertainment, e-commerce, and gaming rely on visually appealing and contextually accurate image modifications. While CNN and GAN-based models have shown remarkable progress, they still face limitations in stability, handling irregular regions, and computational cost. This necessitates exploration of more efficient and robust alternatives such as PConv.


The applications of inpainting and outpainting are widespread and impactful:

Cultural Heritage: Digital restoration of deteriorated wall paintings and manuscripts.

Medical Imaging: Reconstruction of incomplete MRI and CT scans for better diagnosis.

Digital Photography and Media: Object removal, background filling, and image expansion.

Privacy Preservation: Removal of sensitive content without visible artifacts.

E-commerce and Entertainment: Enhancement of product images and generation of immersive environments for gaming and virtual reality.

Image inpainting and outpainting are crucial techniques in computer vision for reconstructing or extrapolating missing regions of an image. Traditional exemplar-based and diffusion approaches struggled with structural consistency and semantic accuracy. With the advent of deep learning, new architectures such as CNNs, GANs, and attention-based models have significantly advanced the field.

2.1.1 Liu et al. (2018) [1]: Proposed the Partial Convolution (PConv) framework, where invalid pixels are masked during convolution. Tested on ImageNet, Places2, and CelebA-HQ datasets, it achieved better performance on irregular holes compared to standard CNNs. However, it struggled with very large missing regions.

2.1.2 Patel et al. (2020) [2]: Developed a modified U-Net with PConv, improving restoration accuracy and reducing reconstruction loss. Evaluated on CelebA-HQ, it demonstrated superior results for face inpainting tasks. Limitation: performance degraded when handling complex background textures.

2.1.3 Yan et al. (2021) [4]: Designed PCNet (PConv with Attention), which combined partial convolution and attention mechanisms. The model showed improved accuracy and faster convergence on the Places2 and Paris StreetView datasets. Limitation: it struggled with fine facial features.

2.1.4 Chen et al. (2021) [6]: Applied PConv with a Sliding Window Strategy to restore Dunhuang murals. This approach yielded faster restoration with good accuracy. However, certain restored regions remained blurred with visible traces.
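The reviewed work's window parameters are not given here, so the following is only a generic sketch of how a large mural could be split into overlapping tiles for a fixed-size PConv model. The tile size of 256 and stride of 192 are assumptions, and `sliding_windows` is a hypothetical helper; the last window on each axis is shifted flush with the image edge so that no pixels are skipped.

```python
def window_starts(length, tile, stride):
    """Start offsets along one axis; the last window ends exactly at `length`."""
    if length <= tile:
        return [0]
    starts = list(range(0, length - tile + 1, stride))
    if starts[-1] != length - tile:
        starts.append(length - tile)
    return starts


def sliding_windows(height, width, tile=256, stride=192):
    """(top, left, bottom, right) boxes of overlapping tiles covering the image."""
    return [(top, left, min(top + tile, height), min(left + tile, width))
            for top in window_starts(height, tile, stride)
            for left in window_starts(width, tile, stride)]


boxes = sliding_windows(600, 400)
print(len(boxes), boxes[0], boxes[-1])
# 6 (0, 0, 256, 256) (344, 144, 600, 400)
```

Each tile would be inpainted independently and the overlapping predictions blended (for example averaged) when the tiles are stitched back together; seams in that blending step are one plausible source of the visible traces noted above.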

2.2.1 Kang et al. (2022) [5]: Proposed Weighted Convolutions (WConv) with normalized masks to handle invalid pixels. Demonstrated efficiency on ImageNet and CelebA, addressing the instability that invalid pixels cause in PConv. However, higher loss values were observed in comparison to state-of-the-art models.

2.2.2 Yu et al. (2020) [7]: Introduced Gated Convolutions (GConv) for free-form inpainting. The model dynamically learned masks and improved boundary transitions, tested on the Places2 and CelebA-HQ datasets. The drawback was poor performance with very large irregular masks.
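The gating idea can be illustrated in a few lines. In a gated convolution, two convolutions are applied to the same input (here the image and mask channels): one produces features, the other produces a gate that a sigmoid squashes into a soft, per-pixel mask, and the output is their element-wise product. The sketch below is a pure-Python illustration with hand-picked weights, not the authors' implementation; the LeakyReLU feature activation, the kernel values and the gate bias are assumptions, whereas in a real network both kernels are learned.

```python
import math


def conv2d(channels, kernels, bias=0.0):
    """'Valid' multi-channel 2-D cross-correlation: sum over ch of kernels[ch] * channels[ch]."""
    kh, kw = len(kernels[0]), len(kernels[0][0])
    h, w = len(channels[0]), len(channels[0][0])
    return [[bias + sum(kernels[ch][i][j] * channels[ch][r + i][c + j]
                        for ch in range(len(channels))
                        for i in range(kh) for j in range(kw))
             for c in range(w - kw + 1)]
            for r in range(h - kh + 1)]


def gated_conv(channels, w_feat, w_gate, b_gate=0.0, slope=0.2):
    """Output = LeakyReLU(feature conv) * sigmoid(gating conv), element-wise."""
    feat = conv2d(channels, w_feat)
    gate = conv2d(channels, w_gate, b_gate)
    return [[(f if f > 0 else slope * f) / (1.0 + math.exp(-g))
             for f, g in zip(f_row, g_row)]
            for f_row, g_row in zip(feat, gate)]


image = [[1.0] * 3 for _ in range(3)]
mask = [[1, 1, 1], [1, 0, 1], [1, 1, 1]]    # centre pixel is a hole
ones, zeros = [[1.0] * 3] * 3, [[0.0] * 3] * 3
w_feat = [[[1 / 9] * 3] * 3, zeros]         # mean of the image channel
w_gate = [zeros, ones]                      # counts valid mask pixels
print(gated_conv([image, mask], w_feat, w_gate, b_gate=-4.0))
# about [[0.982]], i.e. roughly 1.0 * sigmoid(4)
```

With eight valid pixels the gate is mostly open; if the whole window were masked, the gate value would drop to sigmoid(-4), about 0.018, suppressing the response. Unlike the hard 0/1 mask update of PConv, this soft gate can take any value in between.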

2.3.1 Yu et al. (2019) [3]: Introduced a Contextual Attention + GAN framework. The model exploited distant features through attention layers to fill missing regions. This approach produced visually realistic results on the Places and CelebA datasets, but required high computational resources for training.
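The matching step behind contextual attention can be sketched on flattened patch vectors: a patch inside the hole is compared with every background patch by cosine similarity, the scores are turned into weights with a scaled softmax, and the hole patch is rebuilt as the weighted sum of background patches. This is a simplified, assumed illustration (no convolutional implementation, no patch extraction, no attention propagation); the scale factor of 10 and the function names are illustrative choices rather than the paper's code.

```python
import math


def cosine(a, b, eps=1e-8):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)) + eps)


def contextual_attention(query, background, scale=10.0):
    """Rebuild one hole patch as a softmax-weighted sum of background patches."""
    scores = [scale * cosine(query, patch) for patch in background]
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]  # subtract max for numerical stability
    total = sum(exps)
    weights = [e / total for e in exps]
    filled = [sum(w * patch[d] for w, patch in zip(weights, background))
              for d in range(len(query))]
    return filled, weights


# Coarse estimate of a hole patch and three candidate patches from the known region.
query = [1.0, 0.0, 0.0]
background = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.9, 0.1, 0.0]]
filled, weights = contextual_attention(query, background)
print([round(w, 3) for w in weights])
```

Almost all of the weight goes to the identical and the similar patch, so the reconstruction borrows content from matching regions anywhere in the image. Comparing every hole patch with every background patch is also what makes this family of models expensive to train.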

2.3.2 Chang et al. (2021) [8]: Extended inpainting to videos using a 3D Gated CNN with a Temporal GAN loss. Applied to Free-form Video Inpainting datasets, it generated temporally consistent results. However, the approach failed with highly complex masks and thick occlusions.

This survey highlights the progression from mask-based CNN approaches to GAN and attention-driven models, addressing structural realism and contextual coherence. While CNN and GAN-based methods provide strong performance, challenges remain in handling large missing regions, complex semantic structures, and computational cost.

Table - 1: SummaryofLiteratureonImageInpaintingand OutpaintingusingDL

Author( s) & Year Metho d / Model Dataset(s )Used Metrics Merits Demerits

Liu et al. (2018) Partial Convol ution (PConv ) ImageNet, Places2, CelebAHQ L1, PSNR, SSIM, Style Loss,TV Loss

Handles irregular holes, reduces artifacts Quality deteriorate s for large missing regions

Patel et al. (2020)

International Research Journal of Engineering and Technology (IRJET) e-ISSN:2395-0056

Volume: 12 Issue: 10 | Oct 2025 www.irjet.net p-ISSN:2395-0072

Modifie d U-Net with PConv

CelebAHQ

Yu et al. (2019) Context ual Attenti on + GAN

Yanetal. (2021) PCNet (PConv + Attenti on)

Kang et al. (2022) Weight ed Convol ution (WCon v)

Chen et al. (2021) PConv + Sliding Windo w

Yu et al. (2020) Gated Convol utions (GConv )

Places, CelebA

L1,MSE, PSNR, SSIM Improve d accuracy and reduced loss vs. classical PConv Less effectiveon large missing structures

PSNR, Percept ualLoss Learns longrange depende ncies, realistic results

Places2, Paris Street View

PSNR, SSIM, Percept ual & Style Loss

Effective for large missing regions, faster converge nce

ImageNet, CelebA L1, L2, PSNR, SSIM Solves instabilit y due to invalid pixels

Dunhuan g Mural Dataset

Training is computatio nally expensive

often fail to preserve semantic consistency and structural details,especiallywhenthemaskgeometryiscomplex.

We propose a system based solely on Partial Convolutional Networks (PConv) for inpainting, which conditions each convolution operation on valid (unmasked) pixels and dynamically updates the mask throughout the network. This approach is designed to robustly reconstruct missing regions while minimizing artifacts and improving adaptation to diverse mask shapes.

Struggles with complex objects like faces

Higher loss values compared toothers

Random Mask, Accurac y Faster processin g,promisi ng accuracy

Some regions blurred withvisible traces

Places2, CelebAHQ L1, L2, GAN Loss Softmask learning, seamless boundary transitio ns Struggles with large masks on faces

Chang et al. (2021) 3DGated CNN + Tempor al GAN Loss Free-form Video Inpaintin g(FVI) FDI, MSE, LPI Highquality video inpaintin g, efficient

A. Problem Statement

Fails on thick/comp lexmasks

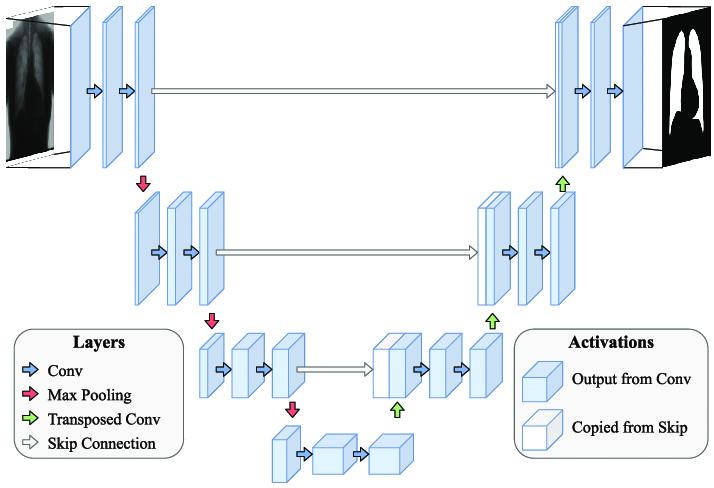

The proposed architecture uses a U-Net-like encoder–decoder framework with skip connections, optimized for processingmaskedimages.

● Encoder Module: Each encoder block consists of: Partial Convolutional Layer (PConv2D), which takesboththeimageandmaskasinput.

● ReLUactivation: Dynamic mask update after each partial convolution, expanding the set of valid pixels.The encoder extractshierarchical features, learning bothlocal textures andglobal contextual information.

● Latent Feature Representation: The bottleneck layer integrates semantic features from valid image areas, enabling the network to learn contextualcuesforfillinglargeholes.

● Decoder Module: Each decoder block includes: 1. Upsampling (e.g., bilinear interpolation). Skip connections from corresponding encoder layers (forbothimageandmask).

2. Partial Convolutional Layer and ReLU activation. The decoder reconstructs missing regions, aligning textures and structure with the surroundingcontext.

● Skip Connections: As in U-Net, skip connections allow low-level features to bypass the bottleneck, preserving edge and color details and improving visualsharpness.

TheoveralldesignoftheproposedsystemisbasedonaUNet-like encoder–decoder architecture with skip connections, tailored for processing masked images. The high-levelstructureofthemodelisillustratedinFig.1.

The objective of the proposed system is to address the shortcomings of standard image inpainting techniques when dealing with irregular or large missing regions. Classical methods and standard deep learning models
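A decoder block in a U-Net-like design of this kind has to resize both the features and the mask before merging them with the skip connection. The sketch below shows that bookkeeping on plain nested lists, under stated assumptions: nearest-neighbour upsampling is used instead of the bilinear option mentioned in the text, because it keeps the mask strictly binary, and the concatenation order (upsampled channels first, skip channels second) and helper names are hypothetical. The concatenated features and mask would then be passed to the block's partial convolution.

```python
def upsample_nearest(grid, factor=2):
    """Nearest-neighbour upsampling of one 2-D map given as a list of rows."""
    return [[v for v in row for _ in range(factor)]
            for row in grid for _ in range(factor)]


def decoder_block_inputs(deep_feats, deep_mask, skip_feats, skip_mask):
    """Upsample the deeper features and mask, then concatenate the encoder skip
    features and mask along the channel axis (each argument is a list of channels)."""
    feats = [upsample_nearest(ch) for ch in deep_feats] + skip_feats
    masks = [upsample_nearest(ch) for ch in deep_mask] + skip_mask
    return feats, masks


deep = [[[1, 2], [3, 4]]]                # one 2x2 channel from the bottleneck
deep_m = [[[1, 0], [1, 1]]]
skip = [[[0] * 4 for _ in range(4)]]     # one 4x4 channel from the encoder
skip_m = [[[1] * 4 for _ in range(4)]]
feats, masks = decoder_block_inputs(deep, deep_m, skip, skip_m)
print(feats[0])
# [[1, 1, 2, 2], [1, 1, 2, 2], [3, 3, 4, 4], [3, 3, 4, 4]]
print(len(feats), masks[0][1])
# 2 [1, 1, 0, 0]
```

Carrying the mask alongside the features through every skip connection is what lets the decoder's partial convolutions know which encoder features came from real pixels.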

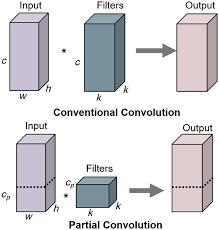

The central innovation is the mask-aware partial convolution:

1. Each convolution operates only on valid pixels within the receptive field, and its response is rescaled by the ratio of the window size to the number of valid pixels, so that windows that overlap a hole are not biased toward smaller values.

2. After each convolution, the mask is updated: if any valid pixels exist in the receptive field, the output pixel becomes valid; otherwise, it remains masked.

3. This dynamic mask update lets the valid region grow in deeper layers of the network, progressively shrinking the masked area and enabling semantic information to propagate into the hole.

4. The core principle of Partial Convolution (PConv) is therefore that each convolution operation is conditioned only on valid pixels, with the mask being dynamically updated at every layer.

5. Fig. 2 illustrates how partial convolution differs from standard convolution and how the mask propagates through the network.
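The mechanism in steps 1 to 3 can be written out for a single channel. The sketch below follows the partial convolution rule described by Liu et al. (2018): the kernel sees only valid pixels, the response is rescaled by (window size / number of valid pixels), and the output pixel becomes valid if at least one input pixel was valid. It is a plain-Python illustration under simplifying assumptions (one channel, stride 1, pixels outside the image treated as masked, a hand-picked averaging kernel); the function name `partial_conv2d` is our own, and a practical model would be built with a deep learning framework.

```python
def partial_conv2d(x, m, kernel, bias=0.0):
    """Single-channel partial convolution with stride 1 and 'same' output size.

    x: 2-D map (list of rows); m: binary mask of the same shape (1 = valid);
    kernel: k x k weights with k odd. Pixels outside the image count as masked.
    Returns (output, updated_mask).
    """
    h, w, k = len(x), len(x[0]), len(kernel)
    pad = k // 2
    out = [[0.0] * w for _ in range(h)]
    new_m = [[0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            acc, n_valid = 0.0, 0
            for i in range(k):
                for j in range(k):
                    rr, cc = r + i - pad, c + j - pad
                    if 0 <= rr < h and 0 <= cc < w and m[rr][cc]:
                        acc += kernel[i][j] * x[rr][cc]  # only valid pixels contribute
                        n_valid += 1
            if n_valid:
                # Rescale by sum(1)/sum(M) so partly masked windows keep their magnitude.
                out[r][c] = acc * (k * k) / n_valid + bias
                new_m[r][c] = 1  # mask update: any valid input makes the output valid
            # otherwise the output stays 0 and the pixel stays masked
    return out, new_m


img = [[1.0] * 5 for _ in range(5)]               # constant test image
mask = [[0 if 1 <= r <= 3 and 1 <= c <= 3 else 1  # 3x3 hole in the centre
         for c in range(5)] for r in range(5)]
avg = [[1 / 9] * 3 for _ in range(3)]
for layer in (1, 2):
    img, mask = partial_conv2d(img, mask, avg)
    print(layer, sum(map(sum, mask)))
# 1 24
# 2 25
```

The printed counts show the mask shrinking layer by layer: after one layer only the centre pixel is still masked, and after two layers the hole is closed. Thanks to the rescaling, the constant image is reproduced as 1.0 even at pixels whose window was mostly masked.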

The system is trained with a composite loss function tailored for inpainting:

● Reconstruction Loss (L1): Measures pixel-wise similarity between the output and the ground-truth image, guiding recovery of fine details.

● Total Variation Loss: Reduces high-frequency artifacts and encourages smoothness.

The final loss is a weighted sum of these terms that balances local accuracy and global realism. In a basic implementation, the pixel-wise L1 loss alone is sufficient.
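A minimal version of this composite objective is sketched below, assuming single-channel images as nested lists and a mask with 1 for valid and 0 for hole pixels. The L1 term is split into a valid-pixel part and a hole part so that hole pixels can be weighted more heavily, and the total variation term is a simple anisotropic version computed over the whole prediction. The weights (1.0, 6.0, 0.1) and the per-region averaging are illustrative assumptions, not tuned values from this paper; perceptual and style terms are omitted.

```python
def l1_masked(pred, target, mask, region):
    """Mean absolute error over pixels where mask == region (1 = valid, 0 = hole)."""
    diffs = [abs(p - t)
             for p_row, t_row, m_row in zip(pred, target, mask)
             for p, t, m in zip(p_row, t_row, m_row) if m == region]
    return sum(diffs) / len(diffs) if diffs else 0.0


def tv_loss(img):
    """Anisotropic total variation: mean absolute difference between neighbours."""
    h, w = len(img), len(img[0])
    dx = [abs(img[r][c + 1] - img[r][c]) for r in range(h) for c in range(w - 1)]
    dy = [abs(img[r + 1][c] - img[r][c]) for r in range(h - 1) for c in range(w)]
    return (sum(dx) + sum(dy)) / (len(dx) + len(dy))


def composite_loss(pred, target, mask, w_valid=1.0, w_hole=6.0, w_tv=0.1):
    """Weighted sum of valid-region L1, hole-region L1 and total variation."""
    return (w_valid * l1_masked(pred, target, mask, 1)
            + w_hole * l1_masked(pred, target, mask, 0)
            + w_tv * tv_loss(pred))


pred = [[0.0, 0.5], [0.0, 0.0]]
target = [[0.0, 0.0], [0.0, 0.0]]
mask = [[1, 0], [1, 1]]  # top-right pixel was a hole
print(round(composite_loss(pred, target, mask), 4))
# 3.025
```

Setting `w_tv=0.0` and equal L1 weights reduces this to the plain pixel-wise L1 loss mentioned as the basic option.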

● Dataset: Training images are corrupted with randomly generated irregular masks, simulating diverse missing regions.

● Input: Each training sample consists of a masked image and its binary mask.

● Training Loop: 1. Inputs are normalized and resized. 2. The network receives the image and mask and outputs the inpainted image. 3. The loss is computed over the masked regions, and the model is updated using the Adam optimizer.

● Augmentation: Standard image augmentation (flipping, rotation, cropping) can be used for robustness.

● Batching: Mini-batch training leverages GPU acceleration.
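Irregular training masks of the kind described here are often produced by drawing random brush strokes. The generator below is one simple way to do this and is an assumption for illustration only: the random-walk strokes, square brush, step size and stroke counts are arbitrary choices, not the procedure used in this paper or in the surveyed works. A fixed `seed` makes masks reproducible, and the hypothetical helper `apply_mask` builds the masked network input.

```python
import random


def random_stroke_mask(h, w, strokes=4, steps=20, radius=2, seed=None):
    """Binary mask (1 = valid, 0 = hole) built from random-walk brush strokes."""
    rng = random.Random(seed)
    mask = [[1] * w for _ in range(h)]
    for _ in range(strokes):
        r, c = rng.randrange(h), rng.randrange(w)
        for _ in range(steps):
            # Stamp a square brush of half-width `radius` centred on (r, c).
            for rr in range(max(0, r - radius), min(h, r + radius + 1)):
                for cc in range(max(0, c - radius), min(w, c + radius + 1)):
                    mask[rr][cc] = 0
            # Move the brush by a random step, clamped to the image bounds.
            r = min(h - 1, max(0, r + rng.randint(-3, 3)))
            c = min(w - 1, max(0, c + rng.randint(-3, 3)))
    return mask


def apply_mask(image, mask):
    """Zero the hole pixels to form the masked input image."""
    return [[p * m for p, m in zip(p_row, m_row)] for p_row, m_row in zip(image, mask)]


mask = random_stroke_mask(64, 64, seed=0)
holes = sum(row.count(0) for row in mask)
print(f"hole ratio: {holes / (64 * 64):.2%}")
```

Generating a fresh mask per sample (rather than reusing a fixed set) exposes the network to many hole shapes, which is the point of training with irregular masks.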


F. Evaluation Metrics

Performance is assessed using:

● Peak Signal-to-Noise Ratio (PSNR): Quantifies pixel-level reconstruction fidelity.

● Structural Similarity Index (SSIM): Evaluates perceptual similarity in luminance, contrast, and structure.

● Qualitative Visual Inspection: Human assessment of realism, artifact presence, and semantic coherence.
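Both quantitative metrics are short to state in code. PSNR is 10·log10(MAX² / MSE) in decibels; SSIM combines mean, variance and covariance terms with the usual constants C1 = (0.01·MAX)² and C2 = (0.03·MAX)². The sketch below computes SSIM over the whole image as a single window, which is a simplification: standard implementations average SSIM over local (typically Gaussian-weighted) windows, so values will differ from library implementations. Images are assumed to be single-channel nested lists scaled to [0, 1].

```python
import math


def psnr(pred, target, max_val=1.0):
    """Peak signal-to-noise ratio in dB between two same-sized images."""
    sq = [(p - t) ** 2 for p_row, t_row in zip(pred, target) for p, t in zip(p_row, t_row)]
    mse = sum(sq) / len(sq)
    return float("inf") if mse == 0 else 10 * math.log10(max_val ** 2 / mse)


def ssim_global(x, y, max_val=1.0):
    """Single-window SSIM over the whole image (no sliding Gaussian window)."""
    a = [v for row in x for v in row]
    b = [v for row in y for v in row]
    n = len(a)
    mu_a, mu_b = sum(a) / n, sum(b) / n
    var_a = sum((v - mu_a) ** 2 for v in a) / (n - 1)
    var_b = sum((v - mu_b) ** 2 for v in b) / (n - 1)
    cov = sum((u - mu_a) * (v - mu_b) for u, v in zip(a, b)) / (n - 1)
    c1, c2 = (0.01 * max_val) ** 2, (0.03 * max_val) ** 2
    return ((2 * mu_a * mu_b + c1) * (2 * cov + c2)) / (
        (mu_a ** 2 + mu_b ** 2 + c1) * (var_a + var_b + c2))


target = [[0.0, 0.5], [1.0, 0.5]]
pred = [[0.1, 0.5], [1.0, 0.5]]
print(round(psnr(pred, target), 2))  # MSE = 0.01 / 4 = 0.0025
# 26.02
print(round(ssim_global(pred, target), 4))
```

In practice both metrics are usually reported over the whole image and sometimes over the hole region only; stating which convention is used makes results comparable across papers.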

G. System Block Diagram

Proposed Pipeline

1. Input Module: Accepts a masked image and its binary mask.

2. Mask-Guided Encoder (PConv Layers): Extracts features conditioned on valid pixels, dynamically updating the mask.

3. Latent Feature Representation: Encodes global context and semantics.

4. Decoder with Skip Connections: Reconstructs missing regions using encoder features and mask information.

5. Loss Computation Unit: Computes training loss (reconstruction and optionally perceptual/style/TV).

6. Output Module: Produces the completed image.
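At inference time the six stages reduce to a short function. The sketch below assumes that the trained encoder, latent representation and decoder (stages 2 to 4) are wrapped in a single callable `model(masked, mask)`, which is hypothetical here; stage 5 (loss computation) is used only during training and is therefore skipped. The output stage keeps every known pixel from the input and uses the network prediction only inside the holes, a common composition step in mask-based inpainting.

```python
def compose_output(masked_input, prediction, mask):
    """Known pixels come from the input; hole pixels come from the prediction."""
    return [[m * x + (1 - m) * y for x, y, m in zip(x_row, y_row, m_row)]
            for x_row, y_row, m_row in zip(masked_input, prediction, mask)]


def run_pipeline(image, mask, model):
    """Inference-time pipeline; `model` stands in for the PConv U-Net (stages 2-4)."""
    masked = [[p * m for p, m in zip(p_row, m_row)]   # 1. input module
              for p_row, m_row in zip(image, mask)]
    prediction = model(masked, mask)                   # 2-4. encoder, latent, decoder
    return compose_output(masked, prediction, mask)    # 6. output module


def dummy_model(masked, mask):
    """Stand-in model that predicts 0.7 everywhere (for illustration only)."""
    return [[0.7] * len(row) for row in masked]


print(run_pipeline([[0.2, 0.9]], [[1, 0]], dummy_model))
# [[0.2, 0.7]]
```

Composing the output this way guarantees that known pixels are returned unchanged, so any reconstruction error is confined to the filled region.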

4. CONCLUSION

Image inpainting and outpainting have progressed from traditional diffusion and patch-based methods to deep learning architectures that achieve semantically consistent and visually realistic reconstructions. Convolutional Neural Networks (CNNs), Generative Adversarial Networks (GANs), and attention-based models have each improved structural coherence, contextual awareness, and perceptual quality. Among these, Partial Convolutional Networks (PConv) stand out for their ability to handle irregular masks by conditioning each convolution on valid pixels and updating the mask layer by layer. Despite these advances, challenges such as filling very large missing regions, preserving fine details, reducing computational complexity, and generalizing across diverse image domains remain unresolved. Outpainting further amplifies these challenges, demanding models that can extrapolate beyond the original boundaries while maintaining semantic realism.

To address these limitations, our proposed approach uses a PConv-enabled U-Net framework that integrates mask-aware feature extraction, skip connections, and tailored loss functions. This design aims to produce sharper, contextually aligned reconstructions with fewer artifacts, suitable for real-world applications including cultural heritage restoration, medical imaging, and digital media editing. Looking ahead, research may explore hybrid architectures that combine PConv with transformer-based models, multimodal training with text–image priors, and domain-specific fine-tuning for specialized use cases. Such advances would bring inpainting and outpainting closer to high perceptual fidelity at low computational cost, improving their scalability for large-scale deployment.

[1] T. Yu, C. Lin, S. Zhang, S. You, X. Ding, J. Wu, and J. Zhang, “End-to-End Partial Convolutions Neural Networks for Dunhuang Grottoes Wall-painting Restoration,” Dunhuang Academy; Jinan University; Tianjin Medical University; CSIRO; Tianjin University.

[2] J. Susan and P. Subashini, “Deep Learning Inpainting Model on Digital and Medical Images: A Review,” Avinashilingam Institute for Home Science and Higher Education for Women, India.

[3] S. Navasardyan and M. Ohanyan, “Image Inpainting with Onion Convolutions,” Picsart Inc., Yerevan, Armenia.

[4] A. Dash, G. Wang, and T. Han, “Attentive Partial Convolution for RGBD Image Inpainting,” New Jersey Institute of Technology, Newark, USA.

[5] S. S. Singh, A. N. Singh, B. R. Yadav, and K. Jayamalini, “Deep Learning Approach to Inpainting and Outpainting System,” Shree L.R. Tiwari College of Engineering, Maharashtra, India.

[6] C. Jo, W. Im, and S. E. Yoon, “In-N-Out: Towards Good Initialization for Inpainting and Outpainting,” Korea Advanced Institute of Science and Technology (KAIST), Daejeon, Korea.

[7] C. Li, D. Xu, and H. Zhang, “Multi-stage Image Inpainting using Improved Partial Convolutions.”

[8] G. Liu, F. A. Reda, A. Tao, K. J. Shih, T. C. Wang, and B. Catanzaro, “Image Inpainting for Irregular Holes using Partial Convolutions,” NVIDIA Corporation.


Kindipsingh Mallhi, B.Tech Student, Dept. of Computer Engineering and IT, VJTI College, Mumbai, Maharashtra, India

Aditi Chhajed, B.Tech Student, Dept. of Computer Engineering and IT, VJTI College, Mumbai, Maharashtra, India

Ojas Binayke, B.Tech Student, Dept. of Computer Engineering and IT, VJTI College, Mumbai, Maharashtra, India

Priyansh Katariya, B.Tech Student, Dept. of Computer Engineering and IT, VJTI College, Mumbai, Maharashtra, India

Prof. Pramila M. Chawan is working as an Associate Professor in the Computer Engineering Department of VJTI, Mumbai. She has done her B.E. (Computer Engineering) and M.E. (Computer Engineering) from VJTI College of Engineering, Mumbai University. She has 30 years of teaching experience and has guided 85+ M.Tech. projects and 130+ B.Tech. projects. She has published 148 papers in International Journals and 20 papers in National/International Conferences/Symposiums. She has worked as an Organizing Committee member for 25 International Conferences and 5 AICTE/MHRD sponsored Workshops/STTPs/FDPs. She has participated in 17 National/International Conferences. She has worked as Consulting Editor on JEECER, JETR, JETMS, Technology Today, JAM&AER Engg. Today, The Tech. World; Editor - Journals of ADR; Reviewer - IJEF, Inderscience. She has worked as NBA Coordinator of the Computer Engineering Department of VJTI for 5 years. She had written a proposal under TEQIP-I in June 2004 for 'Creating Central Computing Facility at VJTI'. Rs. Eight Crore were sanctioned by the World Bank under TEQIP-I on this proposal.

The Central Computing Facility was set up at VJTI through this fund, which has played a key role in improving the teaching-learning process at VJTI. She was awarded the Innovative & Dedicated Educationalist Award (Specialization: Computer Engineering & I.T.) by SIESRP in 2020. AD Scientific Index Ranking (World Scientist and University Ranking 2022): 2nd Rank, Best Scientist, VJTI Computer Science domain; 1138th Rank, Best Scientist, Computer Science, India.