International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072


Spoorti swami1, Shreya channi2, Anandraddi.N3

1Student at S.G.Balekundri institute of technology, Belgavi, Karnataka, India

2Student at S.G.Balekundri institute of technology, Belgavi, Karnataka, India

3Assistant Professor, S.G.Balekundri institute of technology, Belgavi, Karnataka, India

Abstract - The Tech Cane is an intelligent assistive device intended to improve the mobility, safety, and self-sufficiency of visually impaired people. The system incorporates several sensing and communication technologies: GPS for real-time position tracking, GSM for emergency alerts, a water-level sensor, a small camera with object identification and audio announcements, and ultrasonic obstacle detection. An onboard speaker provides immediate auditory feedback on obstacles, nearby objects, and critical situations. The device also has an alarm system that helps locate the cane when it is misplaced and alerts people in the vicinity when an emergency arises. The ESP32 microcontroller, which powers the Tech Cane, allows for wireless connectivity, low power consumption, and effective sensor integration.

Key Words: Smart cane, navigation, GPS, GSM, Visual impairment.

According to worldwide health data, millions of people have partial or total vision impairment, which makes independent movement a daily struggle. Traditional aids such as the white cane or guide dogs are frequently needed to help navigate public areas, avoid obstructions, and recognize environmental risks. Although somewhat successful, these aids have drawbacks. For example, the traditional cane cannot recognize objects, detect barriers above waist height, or offer information about the user's location or surroundings. Because of this, visually impaired people may experience safety hazards, diminished self-esteem, and restricted freedom. In recent years, rapid developments in wireless communication, smart sensors, microcontrollers, and artificial intelligence have opened up new avenues for improving assistive devices. These technologies can greatly improve user safety and environmental awareness when built into mobility aids. Inspired by this need, the Tech Cane project seeks to develop a smart alternative to the conventional white cane that provides comprehensive assistance for people with visual impairments. The Tech Cane is a small, light, and reasonably priced device with several sensor and connectivity modules. A water detection module warns the user of slick or wet routes, and ultrasonic sensors are used to identify obstructions at different distances. A camera module with real-time object identification lets the device recognize people, cars, or other significant objects, and an integrated speaker delivers immediate audio feedback. Furthermore, the integrated GPS module enables continuous position monitoring, and in emergency scenarios the GSM module sends caregivers emergency messages with location information. An alarm system is also provided to help find the cane if it is lost and to alert people in the vicinity in an emergency.

Over the past ten years, assistive technologies for people with visual impairments have changed dramatically, particularly with the development of embedded systems and sophisticated sensing devices. Despite being widely used for decades, traditional mobility aids like the white cane and guide dogs provide little assistance in identifying environmental risks, moving objects, or steep obstructions. To increase navigation safety, this gap has prompted researchers to investigate electronic travel aids (ETAs) that integrate sensors, microcontrollers, and feedback systems. Early ETA research was mostly concerned with ultrasonic obstacle detection systems. These investigations showed that ultrasonic sensors were appropriate for real-time feedback, as they could consistently identify objects at various distances. However, these methods usually lacked contextual awareness of the surroundings and offered only basic distance measurements. Subsequent developments included vibration feedback and infrared sensors, which gave users a more intuitive, though still limited, method of detecting obstructions. Researchers started creating more complex assistive devices that integrated many sensors when low-cost development boards such as Arduino, Raspberry Pi, and ESP series boards became available. Numerous prototypes supported outdoor navigation and position tracking by combining GPS modules with ultrasonic sensors. These systems improved route monitoring, although they often needed complicated hardware configurations or high power. As computer vision capabilities developed, researchers investigated camera-based identification systems for environmental awareness and object detection. Studies employing OpenCV and lightweight machine learning models indicated that real-time object detection could greatly improve user safety. However, these systems frequently encountered issues with processing speed, power consumption, and cost, all crucial aspects for practical use. More recent research highlights the significance of integrating sensing technologies instead of depending solely on one module. According to research on multimodal smart canes, a more comprehensive assistance system for visually impaired people may be created by combining ultrasonic detection, water sensors, GPS, GSM connectivity, and auditory feedback. Emergency alert systems have also been recognized as crucial for providing caregivers with real-time notifications in emergency scenarios. Even with these developments, many current solutions are still too costly, heavy, or technologically complicated for daily use. This gap highlights the need for a practical, lightweight, and affordable device that makes use of contemporary microcontrollers like the ESP32, which is known for its wireless communication support and low power consumption. To improve the freedom and safety of visually impaired people, current research suggests creating small, multipurpose smart canes that integrate real-time detection, communication, and object identification.

3. Problem Statement

For people with vision impairments, independent navigation is still quite difficult, especially in busy, unfamiliar, or outdoor settings. Despite being commonly used, the conventional white cane provides only limited tactile feedback and cannot detect obstacles that are too far away, above ground level, or beyond the user's physical reach.

Additionally, it does not notify the user of environmental risks like water-filled places, uneven ground, or abrupt drop-offs, nor does it offer information about moving objects like cars or people. These limitations can lead to accidents, a lack of confidence in one's ability to move about, and a reliance on outside help. By adding sensors and digital feedback systems, current electronic travel aids have tried to improve on conventional mobility tools. However, many of these systems have limited practical utility since they concentrate mainly on one or two functions, like simple obstacle recognition or route tracking. More sophisticated systems that use GPS tracking or camera modules frequently suffer from expensive and heavy hardware, complicated operation, high power consumption, and poor dependability in real-time situations. Consequently, there is still no complete, user-friendly, and affordable mobility solution for visually impaired people. A smart assistive device that combines several sensing technologies, such as environmental sensing, ultrasonic obstacle detection, and vision-based object recognition, into a single, small system is clearly needed. To assist the user in both routine navigation and emergency scenarios, features including real-time audio feedback, position tracking, GSM-based emergency warnings, and alarm notifications must also be integrated. The lack of an integrated, reasonably priced, and user-friendly smart cane that can provide real-time environmental awareness, obstacle detection, object recognition, and emergency communication is the main issue that this project seeks to solve. Without such a solution, visually impaired people still have to deal with the safety hazards and restricted independence described above.

The development of the Tech Cane relies on an integrated set of hardware components and software tools that collectively enhance its functionality and performance. At the core of the device is the ESP32 microcontroller, chosen for its dual-core processing capability, built-in Wi-Fi and Bluetooth features, and efficient power consumption, which together enable seamless control of all modules. Ultrasonic sensors are incorporated to measure the distance of nearby obstacles, offering reliable detection even in low-visibility environments. A water-level sensor is included to identify wet or hazardous surfaces, allowing the system to warn users before they encounter slippery paths. The ESP32-CAM module provides visual input for basic object detection, which is processed and translated into meaningful feedback through the audio output system consisting of a speaker or buzzer. To support outdoor navigation and safety, the cane integrates a GPS module for real-time position tracking and a GSM module capable of transmitting emergency alerts, ensuring that caregivers receive immediate updates during critical situations. A rechargeable Li-ion or Li-Po battery supplies continuous power to the system, while push buttons and switches allow the user to activate emergency functions or switch between different modes of operation. All hardware components are securely mounted on a durable yet lightweight cane structure to ensure practicality, comfort, and long-term usability. On the software side, the Tech Cane is programmed using Arduino IDE or ESP-IDF, which facilitate microcontroller configuration, sensor interfacing, and communication handling. Embedded C/C++ is used to implement the core algorithms responsible for sensor data processing, audio output control, and emergency communication.
Python plays a key role in the development of the vision-based components, particularly during the training, testing, and optimization of lightweight machine learning models used for object detection. Python libraries such as TensorFlow, Keras, and OpenCV are utilized for dataset creation, image preprocessing, and model evaluation before deployment onto the ESP32-CAM. Additional libraries dedicated to GPS, GSM, ultrasonic sensors, and audio modules ensure reliable communication between the hardware components. Circuit simulation and design tools like Proteus, Tinkercad, or Fritzing are employed during the prototyping stage to verify connections and create accurate circuit layouts, reducing errors during hardware assembly. Together, these hardware and software elements form an efficient, user-friendly, and smart assistive device tailored to support the mobility and safety of visually impaired individuals.

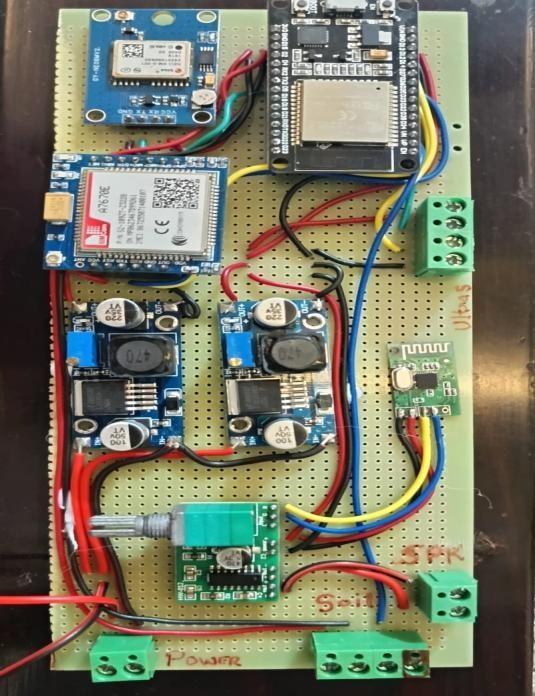

5. Assembling Prototype

The prototype of the Tech Cane was assembled through a systematic and carefully planned integration of all hardware components to ensure reliability, usability, and safety. The assembly process began with the preparation of the cane structure, which was selected to be lightweight, durable, and ergonomically suitable for daily use by visually impaired individuals. Internal space within the cane was allocated to accommodate electronic modules while maintaining proper balance and user comfort. The ESP32 microcontroller was securely mounted at the centre of the assembly to act as the main control unit. Proper insulation and casing were provided to protect the microcontroller from mechanical stress and environmental factors. Ultrasonic sensors were positioned at the front of the cane at an optimized height and angle to enable accurate detection of obstacles at varying distances. The water detection sensor was installed near the bottom tip of the cane to ensure early detection of wet or slippery surfaces. The camera module was fixed in a forward-facing orientation to capture real-time visual data required for object detection and environmental awareness. Special attention was given to its alignment to minimize blind spots and ensure effective image capture. The audio output module, consisting of a speaker or buzzer, was placed near the handle area to ensure that alerts and voice announcements were clearly audible to the user without causing discomfort. Push buttons for system control and emergency activation were integrated into the handle region, allowing easy access during operation. The GPS and GSM modules were carefully mounted within the cane body, with antennas positioned to maximize signal reception while minimizing interference. A rechargeable Li-ion or Li-Po battery was installed to supply power to all system components, along with appropriate voltage regulation circuits to ensure stable and safe operation.
All components were interconnected using insulated wiring and secure connectors, ensuring reliable electrical connections and proper power distribution. Once assembly was complete, the entire system was enclosed within protective casing to safeguard the electronics while preserving the cane's portability and aesthetic appearance. The prototype then underwent preliminary testing to verify hardware integrity, sensor responsiveness, power stability, and communication functionality. This structured assembly process ensured that the Tech Cane prototype met the functional requirements and was suitable for further software integration and performance evaluation.

The Tech Cane operates by continuously acquiring data from multiple sensors to provide real-time environmental awareness and safety assistance to visually impaired users. Each sensor plays a specific role in detecting obstacles, hazards, and surroundings, with all sensor outputs processed by the ESP32 microcontroller.

Ultrasonic Sensor Operation: Ultrasonic sensors function by emitting high-frequency sound waves and measuring the time taken for the reflected waves to return after striking an obstacle. The ESP32 calculates the distance to the object based on this time delay. When an obstacle is detected within a predefined safety range, the system immediately triggers an audio alert through the speaker or buzzer, allowing the user to adjust their movement accordingly. This process occurs continuously to ensure real-time obstacle detection during navigation.

Water Detection Sensor Operation: The water detection sensor operates on the principle of conductivity. When the sensor comes into contact with water or moisture, a change in electrical resistance is detected. This signal is processed by the ESP32 to identify potentially slippery or flooded surfaces. Upon detection, the system generates an audio warning to alert the user and prevent accidents caused by wet pathways.

Camera Module Operation: The camera module captures real-time images of the surrounding environment. These images are processed using lightweight machine-learning models to identify common objects such as people, vehicles, or obstacles. Once an object is recognized, the system converts the information into meaningful audio announcements. This visual sensing capability enhances environmental awareness beyond basic obstacle detection.

GPS Sensor Operation: The GPS module continuously receives signals from satellites to determine the user's geographical location. The obtained latitude and longitude data are used for real-time tracking and are transmitted during emergency situations. This ensures accurate location information is available to caregivers or emergency responders.

GSM Module Operation: The GSM module operates by utilizing cellular networks to send text messages or alerts. When an emergency button is pressed or a critical condition is detected, the ESP32 commands the GSM module to transmit an alert message containing the user's location and status to pre-registered contacts.

Through the coordinated operation of these sensors, the Tech Cane provides a comprehensive, real-time assistive solution that enhances mobility, safety, and independence for visually impaired individuals.
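The ultrasonic and water-detection steps described in this section can be illustrated with a short, hardware-independent sketch. It is not the prototype's firmware: the function names, the 100 cm safety range, the ADC threshold of 1500, and the assumption that the water sensor's reading rises when wet are all hypothetical values chosen for illustration. The distance follows the usual time-of-flight relation d = v·t / 2, where t is the round-trip echo time and v is the speed of sound.

```python
# Illustrative sketch only; names and thresholds are assumptions, not the paper's values.
SPEED_OF_SOUND_CM_PER_US = 0.0343  # about 343 m/s in air near 20 °C
ADC_MAX = 4095                     # full scale of an assumed 12-bit ADC reading


def echo_to_distance_cm(echo_us: float) -> float:
    """Convert a round-trip echo time (microseconds) to a one-way distance (cm)."""
    if echo_us < 0:
        raise ValueError("echo time cannot be negative")
    # The pulse travels to the obstacle and back, so halve the path length.
    return echo_us * SPEED_OF_SOUND_CM_PER_US / 2.0


def obstacle_in_range(echo_us: float, safety_range_cm: float = 100.0) -> bool:
    """Return True when the obstacle lies within the (assumed) safety range."""
    return echo_to_distance_cm(echo_us) <= safety_range_cm


def water_detected(adc_reading: int, threshold: int = 1500) -> bool:
    """Return True when the reading crosses the (assumed) wet threshold.

    Assumes the reading rises as water bridges the sensor traces; a sensor
    wired the other way round would need the comparison inverted.
    """
    if not 0 <= adc_reading <= ADC_MAX:
        raise ValueError("ADC reading out of range")
    return adc_reading >= threshold
```

Under these assumptions, a 2000 µs echo corresponds to roughly 34.3 cm, which would fall inside a 100 cm safety range and trigger the audio alert. In practice the thresholds would need to be calibrated on the actual hardware.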

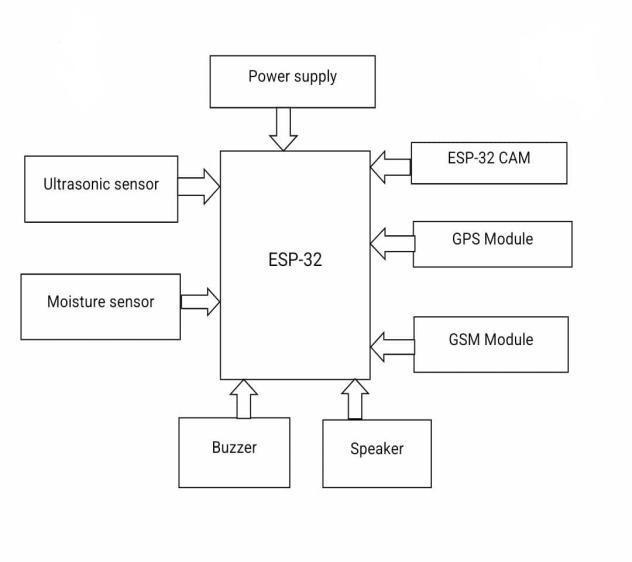

The block diagram of the Tech Cane illustrates the functional relationship between the sensing units, processing unit, output modules, and communication systems. At the centre of the system is the ESP32 microcontroller, which acts as the main control and processing unit. All sensors and modules are interfaced with the ESP32, enabling coordinated operation and real-time decision making. The input sensing block consists of ultrasonic sensors, a water detection sensor, and a camera module. The ultrasonic sensors continuously measure the distance to nearby obstacles and transmit this data to the ESP32. The water detection sensor monitors the presence of water or moisture on the path and sends corresponding signals to the controller. The camera module captures visual data, which is used for object detection and environmental awareness. The processing block is handled by the ESP32 microcontroller. It receives input data from all sensors, processes the information using programmed logic and trained machine-learning models, and determines appropriate responses. The ESP32 also manages wireless communication and power control functions. The output block includes the audio output module, such as a speaker or buzzer. Based on the processed sensor data, the ESP32 generates audio alerts and voice announcements to inform the user about obstacles, objects, water hazards, or emergency situations. The communication block comprises the GPS and GSM modules. The GPS module provides real-time location information, while the GSM module enables the transmission of emergency alerts along with location details to pre-registered contacts. These modules enhance safety by ensuring external assistance can be accessed when required. The power supply block consists of a rechargeable Li-ion or Li-Po battery that supplies power to all system components through appropriate voltage regulation. Push buttons are integrated as part of the user interface block, allowing the user to control system functions and trigger emergency alerts.
Overall, the block diagram represents a structured and integrated system where sensor data is collected, processed by the ESP32, and conveyed to the user through audio feedback and emergency communication, ensuring effective mobility assistance for visually impaired individuals.
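The flow from the input block through the processing block to the output and communication blocks could be sketched as a single decision step. The sketch below is a hypothetical illustration rather than the authors' programmed logic: the `SensorSnapshot` type, the action strings, the 100 cm safety range, and the ordering of alerts (emergency first) are all assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class SensorSnapshot:
    """One reading from each input block (hypothetical structure)."""
    distance_cm: Optional[float]     # None when no ultrasonic echo was received
    water_detected: bool             # output of the water detection sensor
    detected_object: Optional[str]   # label from the camera model, if any
    emergency_pressed: bool          # state of the emergency push button


def decide_actions(s: SensorSnapshot, safety_range_cm: float = 100.0) -> List[str]:
    """Map one sensor snapshot to audio and communication actions.

    Actions prefixed "gsm:" go to the communication block; actions prefixed
    "audio:" go to the speaker or buzzer. The priority order is an assumption.
    """
    actions: List[str] = []
    if s.emergency_pressed:
        actions.append("gsm:send_emergency_alert")
        actions.append("audio:emergency alert sent")
    if s.distance_cm is not None and s.distance_cm <= safety_range_cm:
        actions.append(f"audio:obstacle ahead at {s.distance_cm:.0f} centimetres")
    if s.water_detected:
        actions.append("audio:water on the path")
    if s.detected_object:
        actions.append(f"audio:{s.detected_object} detected")
    return actions
```

Keeping the decision step as a pure function of a snapshot is one possible design choice: it lets the alert logic be tested on a desktop machine without any sensors attached, while the firmware loop only has to fill in the snapshot and carry out the returned actions.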

In addition to core obstacle detection and navigation assistance, the Tech Cane incorporates several supplementary features to enhance usability, safety, and user independence. One of the key additional features is the emergency alert mechanism, which allows the user to instantly notify caregivers or emergency contacts through a GSM-based messaging system, including real-time location data obtained from the GPS module. This feature is particularly useful in unfamiliar environments or during medical emergencies. The Tech Cane also includes a cane-finding alarm system, which can be activated to produce an audible sound when the cane is misplaced, helping users quickly locate it. A manual control interface using push buttons enables users to easily switch modes, activate emergency alerts, or control system functions without complexity. To improve user interaction, the device supports voice-based audio feedback, ensuring clear communication of obstacle warnings, object identification, and environmental hazards. The system is designed to operate with low power consumption, allowing extended usage time on a single charge. Additionally, the compact and lightweight design of the cane ensures portability and comfort during prolonged use. These additional features collectively improve the practicality, reliability, and user-friendliness of the Tech Cane, making it a comprehensive assistive solution for visually impaired individuals.
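The emergency alert mechanism combines a GPS position with a GSM text message. The following sketch shows one way this could work, assuming the GPS module emits standard NMEA GGA sentences and the GSM module accepts the standard text-mode SMS AT commands (`AT+CMGF`, `AT+CMGS`); the paper does not name the specific modules, so both are assumptions, as are the function names and the message wording.

```python
from typing import List, Optional, Tuple


def nmea_to_decimal(value: str, hemisphere: str) -> float:
    """Convert NMEA ddmm.mmmm (or dddmm.mmmm) to signed decimal degrees."""
    raw = float(value)
    degrees = int(raw // 100)        # leading digits are whole degrees
    minutes = raw - degrees * 100    # remainder is minutes
    decimal = degrees + minutes / 60.0
    return -decimal if hemisphere in ("S", "W") else decimal


def parse_gga(sentence: str) -> Optional[Tuple[float, float]]:
    """Return (latitude, longitude) from a GGA sentence, or None without a fix."""
    fields = sentence.split("*")[0].split(",")   # drop the checksum, split fields
    if len(fields) < 7 or not fields[0].endswith("GGA"):
        return None
    if fields[6] in ("", "0") or not fields[2] or not fields[4]:
        return None                              # fix quality 0 means no valid fix
    return (nmea_to_decimal(fields[2], fields[3]),
            nmea_to_decimal(fields[4], fields[5]))


def build_alert_sms(lat: float, lon: float) -> str:
    """Compose the alert text (wording is illustrative)."""
    return f"EMERGENCY: Tech Cane user needs help. Location: {lat:.5f}, {lon:.5f}"


def sms_at_commands(number: str, text: str) -> List[str]:
    """Text-mode SMS command sequence; the body is terminated by Ctrl+Z (0x1A).

    Real firmware would wait for the module's '>' prompt after AT+CMGS
    before sending the message body.
    """
    return ["AT+CMGF=1", f'AT+CMGS="{number}"', text + "\x1a"]
```

A possible usage, with a placeholder phone number: parse the latest GGA sentence, and if it yields a fix, pass the coordinates to `build_alert_sms` and send the resulting commands from `sms_at_commands("+10000000000", message)` to the GSM module over its serial link. When `parse_gga` returns `None`, the firmware would need a fallback such as sending the last known position.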


The Tech Cane represents a significant advancement over traditional mobility aids by integrating multiple sensing, communication, and intelligent processing technologies into a single assistive device. Unlike conventional canes that rely solely on tactile feedback, the proposed system provides real-time auditory guidance, object identification, environmental hazard detection, and emergency communication. The use of the ESP32 microcontroller enables efficient multitasking, wireless connectivity, and low power consumption, making the device both compact and reliable. The incorporation of camera-based object detection further enhances situational awareness, offering users more contextual information about their surroundings. Additionally, GPS and GSM integration improves user safety by enabling real-time tracking and emergency alert functionality. Despite these advancements, there is considerable scope for further improvement and expansion. In the future, advanced machine learning and deep learning models can be incorporated to improve object recognition accuracy and support face recognition, text reading, and traffic signal detection. Integration with smartphone applications could allow caregivers to monitor user movement, receive alerts, and customize system settings remotely. Voice command functionality may be added to enable hands-free interaction with the device.

Further enhancements may include the use of haptic feedback through vibration motors in combination with audio alerts, providing multi-sensory guidance. The system can also be upgraded with obstacle mapping and path planning features using advanced sensors such as LiDAR. Improvements in battery technology and power management could extend operational time and reduce charging frequency. With continued research and development, the Tech Cane has the potential to evolve into a fully intelligent mobility assistant that significantly improves independence, safety, and quality of life for visually impaired individuals.

The performance of the Tech Cane may vary under different environmental conditions such as low lighting, rain, or crowded surroundings. Ultrasonic sensor readings and camera-based object detection are limited by sensor alignment, lighting conditions, and the processing capability of the ESP32 microcontroller. GPS accuracy may decrease in indoor environments, and GSM communication depends on cellular network availability. Continuous operation of multiple sensors and communication modules impacts battery life, requiring regular charging. Additionally, the system primarily relies on audio feedback, which may be less effective in noisy environments, and the prototype requires careful handling and periodic maintenance for reliable operation.

The Tech Cane presents an effective and intelligent assistive solution aimed at improving the mobility, safety, and independence of visually impaired individuals. By integrating ultrasonic obstacle detection, water sensing, camera-based object recognition, GPS tracking, and GSM-based emergency communication into a single device, the system overcomes many limitations of traditional mobility aids. The use of the ESP32 microcontroller enables efficient sensor integration, real-time processing, and low power consumption, making the device compact and practical for everyday use. The prototype demonstrates the potential of combining embedded systems and smart sensing technologies to enhance environmental awareness and user confidence during navigation. Although certain limitations exist, the Tech Cane provides a strong foundation for future enhancements and real-world deployment. With further improvements in accuracy, usability, and power management, the proposed system can significantly contribute to assistive technology and promote greater independence for visually impaired individuals.



2025, IRJET | Impact Factor value: 8.315 | ISO 9001:2008