International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

Avula Swarna Rupa1, Pantham Pallavi2, Marri Bhavya Sri3, Ambati Tharun Reddy4, Mrs. Swathi Sugur5

1, 2, 3, 4 Department of CSE(Data Science), AVN Institute of Engineering and Technology

Under the guidance of 5 Asst. Professor, Department of CSE(Data Science), AVN Institute of Engineering and Technology

Abstract—Human–computer interaction has been mainly dependent on physical input devices like keyboards and mice. However, these devices may impose limitations on usability in certain environments and applications. With the development of computer vision techniques, touchless gesture-based interaction has become a natural and highly intuitive alternative. Gesture control systems are designed to understand human hand movements and then convert them into interacting commands for digital systems.

This article describes a camera-based gesture control method that lets a user interact with a computer through a fixed set of hand gestures. By detecting hand gestures in real time, the system carries out interaction tasks including navigation, control operations, and system-level commands. Hand gestures as an input mode promote accessibility and offer a more instinctive way of interacting, which is especially advantageous when physical touch is not preferred or not feasible.

Gesture-based interaction systems hold promise for assistive technologies, smart environments, presentation control, and interactive systems. The paper therefore highlights gesture recognition as a key enabler of contemporary human–computer interaction and points out its utility in developing natural, efficient, and contactless user interfaces.

Index Terms: Gesture Recognition, Hand Tracking, Human–Computer Interaction, Touchless Interaction, Vision-Based Interaction

Human–computer interaction is a core element of how users relate to digital systems. However, the commonly used methods of interaction, such as keyboards and mice, have not changed significantly over the years and can be unnatural or inefficient, especially in situations where touchless operation is required. As the need for more intuitive and contact-free interfaces grows, gesture-based interaction has become a leading alternative that lets users communicate with computers naturally through hand movements.

Gesture control systems are designed to interpret visually captured human hand gestures and turn them into system commands that machines can understand. In contrast to traditional input devices, gesture-based interfaces let people carry out their work without any physical contact, which improves usability and accessibility. These systems are particularly beneficial in situations such as presentations, assistive technologies, smart environments, and interactive applications, where interaction must be simple and the user needs complete freedom of movement.

Progress in computer vision and in real-time hand tracking has made gesture recognition systems steadily more accurate and reliable. Thanks to these developments, a system can precisely locate the hands, identify finger shapes, and even recognize the user's gesture patterns, so users can convey their intent efficiently and the system can react to it. By identifying predefined gestures and associating them with control actions, gesture-based systems deliver an interaction experience that is more natural and appealing to users than traditional interfaces.

The growing interest in touchless interaction explains why efficient and flexible gesture control systems are needed. Gesture-based methods that allow natural and intuitive interaction with digital devices are the ones that take human–computer interaction to the next level. At the same time, these methods facilitate the development of more intelligent and easily accessible computing environments.

Gesture-based human–computer interaction (HCI) is an area that keeps evolving to meet users' needs for more natural and intuitive input. Traditional interaction methods are limited to physical devices, whereas vision-based methods let users control devices by having their body movements observed, without physically touching the devices. MacKenzie [2] has thoroughly analyzed HCI principles and brought out the main features of interactive systems, including usability, intuitiveness, and efficiency. These same features are at the core of gesture-based interaction systems.

Early progress in vision-based interaction was strongly influenced by depth- and motion-sensing technologies. Zhang [3] investigated how depth-sensing tools like the Microsoft Kinect have revolutionized human motion tracking for gesture-driven applications. In line with this trend, Shotton et al. [4] developed state-of-the-art human pose recognition algorithms that operate in real time, accurately detecting body and hand movements and enabling the creation of vision-based interactive systems.

Hand tracking and gesture recognition have been studied extensively using traditional computer vision methods. Mittal et al. [5] proposed a strategy for detecting hands by integrating various visual cues. They identified the difficulties that arise because hands vary in shape and because working conditions such as lighting and background can vary. Poppe [6] conducted an in-depth review of the literature on vision-based human action recognition, classifying the different methods and singling out issues such as real-time performance and limited robustness. These issues remain a challenge for gesture control systems.

Current breakthroughs aim at perception pipelines that are both highly precise and capable of real-time operation. Lugaresi et al. [1] introduced a perception pipeline framework that makes it possible to detect hand landmarks and thus perform accurate gesture recognition using an ordinary camera. The utility of such perception pipelines is evident: they achieve a high level of accuracy while greatly reducing computational cost. Such pipelines are therefore well suited to human–computer interaction applications that require real-time performance.

Usability and user experience largely determine the success or failure of gesture-based systems. Greenberg and Buxton [7] argued that usability evaluation methods for interactive systems must be appropriate. They pointed out that system design should consider not only functionality but also the user's comfort and ease of learning. The main goal of these considerations is to ensure that gesture-based interfaces are a convenient and friendly tool for users.

Gesture recognition should be complemented by multimodal feedback, which improves interaction clarity. There is an emerging trend of introducing additional interaction modalities such as speech and audio feedback. For example, Taylor et al. [10] developed a speech synthesis system that can provide auditory feedback, while Young et al. [8] established speech processing foundations that underpin voice-based interaction. In addition, Jain et al. [9] described biometric characteristics, which relate to understanding gestures and patterns as behavioral identifiers.

In general, the literature shows substantial developments in vision-based gesture recognition, HCI, and multimodal feedback systems. However, many of these works focus on single components, for example hand detection or pose estimation, and do not provide the big picture. This indicates that the field still needs holistic systems that cover not only gesture recognition but also usability issues and feedback mechanisms, so as to fully enable effective and natural touch-free interaction.

The proposed system is a camera-based gesture recognition system built on computer vision that allows users to perform various tasks on a computer using mid-air hand gestures, without physically touching the computer, mouse, or keyboard. The concept is to capture natural hand movements through the camera and translate them into meaningful commands that are executed on the system, which makes the user less dependent on conventional input devices such as the keyboard and mouse. The aim is to support interaction through hand gestures and thereby improve accessibility, usability, and the overall user experience in any kind of computing environment.

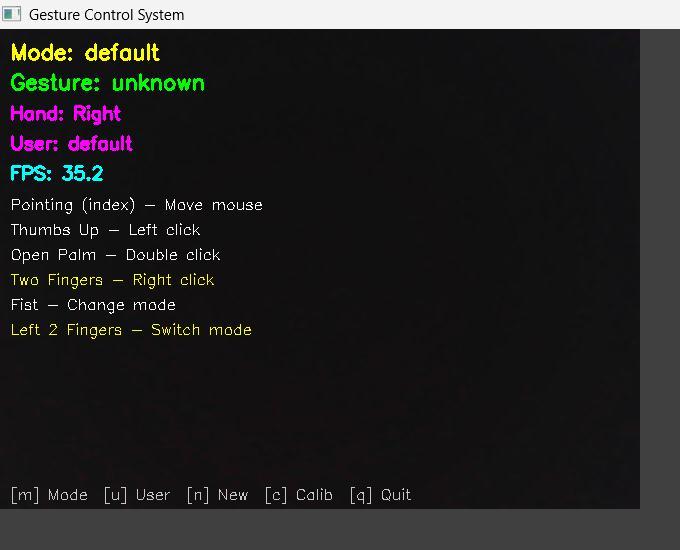

The system architecture includes a user interface and control layer and a processing and decision-making layer, which work together to provide uninterrupted and dependable functionality. The user interface and control layer continuously captures the live video feed and shows the results of image processing to the user as recognized gestures and hand landmarks. This visual feedback helps the user perform gestures correctly and adapt quickly to the system, which leads to a more pleasant user experience.

The system control layer works behind the scenes as the brain of the system. It is in charge of real-time hand landmark detection, gesture classification, mode management, and execution of system-level actions.

By processing the extracted hand landmarks, the system recognizes a set of predefined gesture patterns and carries out the corresponding control operation, such as moving the cursor, clicking, scrolling, controlling presentation flow, launching applications, and adjusting system settings. To avoid unintended gesture activation, the system control layer also manages different modes of operation depending on the context of the actions to be carried out.
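The cursor-movement operation mentioned above can be illustrated with a minimal sketch. It assumes the fingertip position arrives as normalized coordinates in [0, 1], as hand landmark detectors commonly provide. The `CursorMapper` class, the border margin, and the exponential smoothing are illustrative assumptions rather than details taken from the described system.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class CursorMapper:
    """Maps a normalized fingertip position to smoothed screen coordinates."""

    screen_w: int
    screen_h: int
    alpha: float = 0.5   # smoothing factor in (0, 1]; assumed value
    margin: float = 0.1  # ignored border of the camera frame; assumed value
    _x: Optional[float] = None
    _y: Optional[float] = None

    def update(self, nx: float, ny: float) -> Tuple[int, int]:
        # Stretch the central region of the frame over the whole screen so
        # that screen edges can be reached without leaving the camera view.
        span = 1.0 - 2.0 * self.margin
        tx = min(max((nx - self.margin) / span, 0.0), 1.0) * (self.screen_w - 1)
        ty = min(max((ny - self.margin) / span, 0.0), 1.0) * (self.screen_h - 1)
        if self._x is None or self._y is None:
            self._x, self._y = tx, ty
        else:
            # Exponential smoothing damps frame-to-frame landmark jitter.
            self._x += self.alpha * (tx - self._x)
            self._y += self.alpha * (ty - self._y)
        return round(self._x), round(self._y)
```

A lower `alpha` gives a steadier but slower cursor, so the value would need tuning against the camera frame rate.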

To further improve the experience across a diverse set of users, the system control layer supports both multi-user profiles and custom gesture calibration. By maintaining user-specific gesture setups and preferences, it lets different users interact with the system according to their own requirements. This functionality makes the system more reliable because it can, to some extent, handle variation in hand size, gesture style, and user behavior.
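One way to picture per-user calibration is a small profile store. The sketch below is hypothetical: the `UserProfile` fields, the single pinch threshold, and the JSON file layout are assumptions made for illustration, not the format used by the described system.

```python
import json
from dataclasses import asdict, dataclass
from statistics import mean
from typing import Dict, Iterable, Sequence


@dataclass
class UserProfile:
    name: str
    pinch_threshold: float  # thumb-index gap divided by hand size


def calibrate_pinch(name: str, pinched: Sequence[float],
                    opened: Sequence[float]) -> UserProfile:
    """Place the threshold midway between a user's pinched and open samples."""
    lo, hi = mean(pinched), mean(opened)
    if lo >= hi:
        raise ValueError("pinched samples must be smaller than open samples")
    return UserProfile(name, (lo + hi) / 2.0)


def save_profiles(profiles: Iterable[UserProfile], path: str) -> None:
    with open(path, "w", encoding="utf-8") as fh:
        json.dump([asdict(p) for p in profiles], fh, indent=2)


def load_profiles(path: str) -> Dict[str, UserProfile]:
    with open(path, encoding="utf-8") as fh:
        return {d["name"]: UserProfile(**d) for d in json.load(fh)}
```

Because the samples are ratios relative to hand size, the same calibration routine can serve users with different hand dimensions.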

Moreover, the system provides voice feedback, controlled by the system control layer, which guides the user by announcing recognized hand gestures, confirming mode changes, and reporting completed actions. This feedback improves the quality, smoothness, and clarity of the interaction and keeps the user aware of the system's responses at all times during operation. The system control layer has been optimized to perform its tasks swiftly and efficiently even under resource limitations, which keeps the interaction flowing without noticeable lag.

The system described here integrates several features in a single framework: real-time hand gesture recognition, a structured mode-based user interface, control and decision-making logic directed by the system control layer, per-user personalization, and feedback methods. It provides users with a self-contained and well-organized way of interacting through natural and hygienic means without compromising control or personalization, offering an efficient, scalable, and practical method of interacting with machines by gesture.

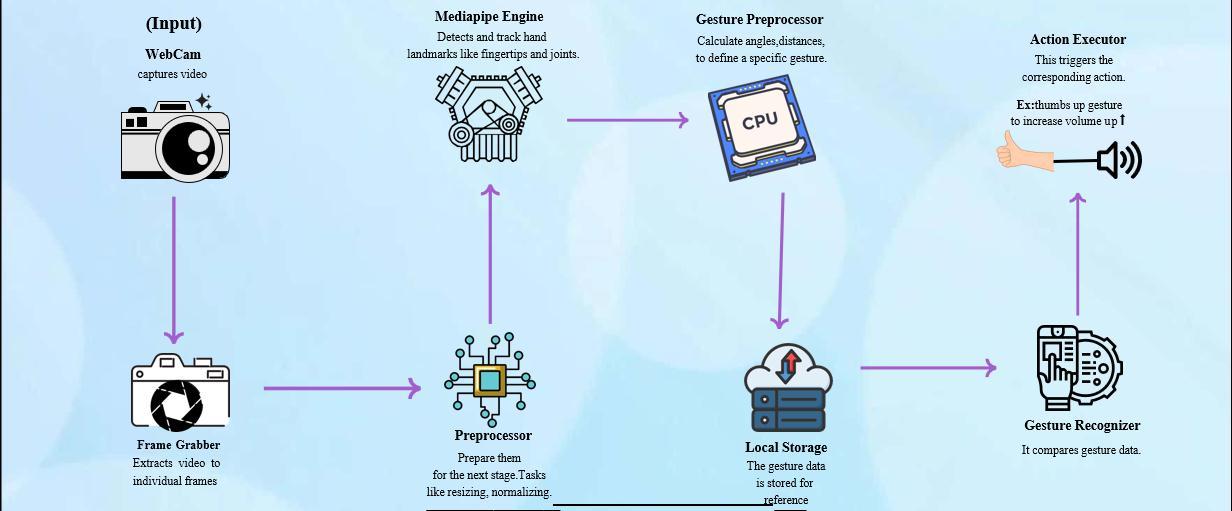

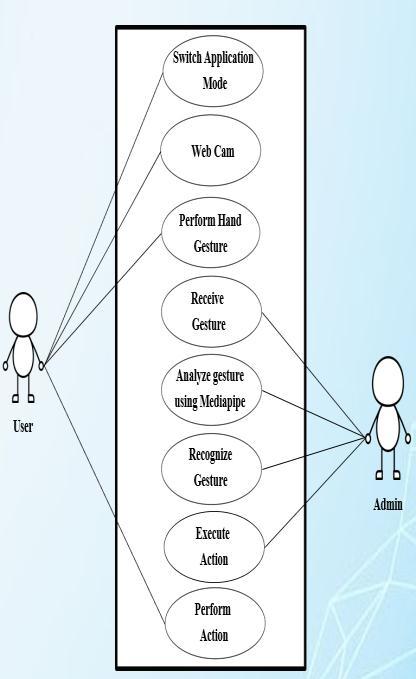

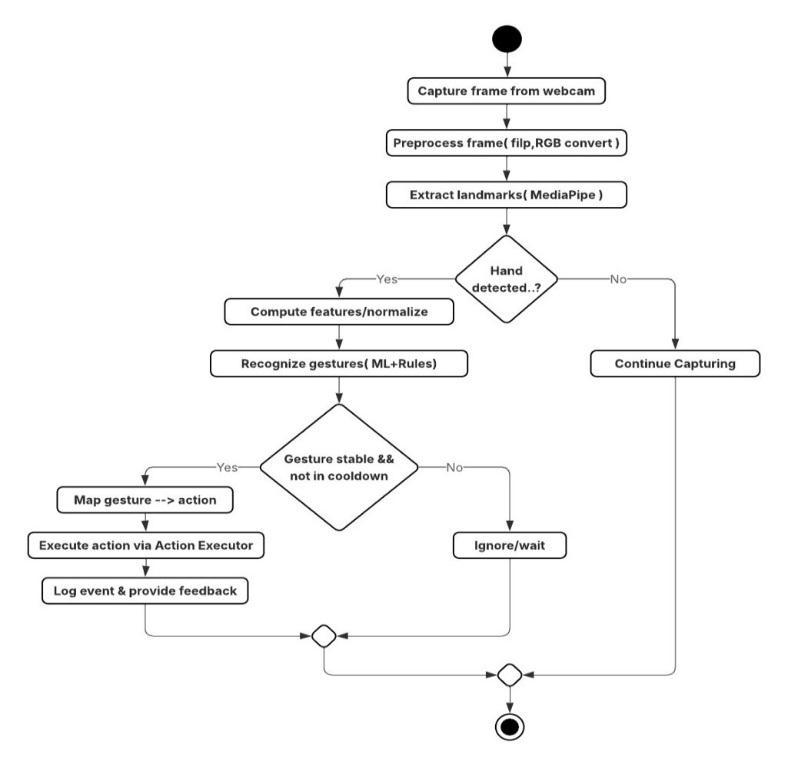

The proposed architecture incorporates vision-based gesture detection, backend processing, and action execution in a neat and efficient way. It is represented by three complementary views: a functional block diagram (Figure 1), a use-case perspective (Figure 2), and a detailed processing workflow (Figure 3).

Altogether, these figures give a full picture of how the user's hand signs are captured, examined, and converted into corresponding actions.

The workflow of the system is outlined in Figure 1. It starts with a webcam providing a live video feed that is continuously monitored. Frames are extracted from the feed by the frame grabber unit and passed on to the pre-processing module, where resizing, normalization, and color space conversion, among other steps, take place. From there, the preprocessed frames are fed into MediaPipe, which provides a hand model with numerous landmarks, including finger joints and other key points of the hand. The detected points are the key inputs to the gesture analysis module.
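The three pre-processing steps named above (color space conversion, resizing, normalization) can be sketched in plain Python on a tiny frame. This is purely illustrative. A real pipeline would normally use an image library, and the BGR input order, nearest-neighbour resizing, and [0, 1] scaling are assumptions, not confirmed details of the described system.

```python
from typing import List, Tuple

Pixel = Tuple[float, float, float]
Frame = List[List[Pixel]]


def bgr_to_rgb(frame: Frame) -> Frame:
    """Swap channel order; each pixel is assumed to be a (b, g, r) tuple."""
    return [[(r, g, b) for (b, g, r) in row] for row in frame]


def resize_nearest(frame: Frame, out_w: int, out_h: int) -> Frame:
    """Nearest-neighbour resize: each output pixel copies one source pixel."""
    in_h, in_w = len(frame), len(frame[0])
    return [
        [frame[y * in_h // out_h][x * in_w // out_w] for x in range(out_w)]
        for y in range(out_h)
    ]


def normalize(frame: Frame) -> Frame:
    """Scale 8-bit channel values into the range [0, 1]."""
    return [[tuple(c / 255.0 for c in px) for px in row] for row in frame]


def preprocess(frame: Frame, out_w: int, out_h: int) -> Frame:
    # Order used here: convert colour, shrink, then normalize.
    return normalize(resize_nearest(bgr_to_rgb(frame), out_w, out_h))
```

Resizing before normalization keeps the per-pixel arithmetic on the smaller image, which matters when frames must be processed in real time.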

The gesture pre-processing unit on the backend uses these landmarks to derive geometric characteristics of the hand, such as the distances and angles between various landmarks, which indicate the performed gesture. These features can be kept on the user's device for reference as well as for personalization. The gesture recognizer compares the extracted features with predefined or user-calibrated gesture patterns to identify the most suitable gesture class. The action executor receives a signal from the recognition module and triggers the matched device action, which can be mouse cursor movement, clicking, scrolling, volume control, application navigation, and so on. The modular design makes the system fast, extensible, and capable of supporting real-time interaction.
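The distance and angle features, and the comparison against stored gesture patterns, might look like the following sketch. It assumes 21 two-dimensional landmarks indexed as in MediaPipe's hand model (0 = wrist, 4/8/12/16/20 = fingertips). The particular feature set, the nearest-template rule, and the `max_distance` cutoff are illustrative assumptions rather than the recognizer actually used.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple

Point = Tuple[float, float]

# Landmark indices in the 21-point hand model.
WRIST, INDEX_MCP, INDEX_PIP, MIDDLE_MCP = 0, 5, 6, 9
FINGERTIPS = (4, 8, 12, 16, 20)  # thumb, index, middle, ring, pinky


def distance(p: Point, q: Point) -> float:
    return math.hypot(p[0] - q[0], p[1] - q[1])


def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at b, formed by the segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0.0:
        return 0.0
    cos = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))


def extract_features(lm: Sequence[Point]) -> List[float]:
    """Scale-invariant distances plus one joint angle from 21 landmarks."""
    scale = distance(lm[WRIST], lm[MIDDLE_MCP]) or 1e-6  # hand-size reference
    feats = [distance(lm[t], lm[WRIST]) / scale for t in FINGERTIPS]
    feats.append(distance(lm[4], lm[8]) / scale)  # thumb-index pinch gap
    feats.append(joint_angle(lm[INDEX_MCP], lm[INDEX_PIP], lm[8]) / 180.0)
    return feats


def classify(feats: Sequence[float], templates: Dict[str, Sequence[float]],
             max_distance: float = 0.5) -> Optional[str]:
    """Return the nearest template's name, or None if nothing is close enough."""
    best_name, best_d = None, float("inf")
    for name, ref in templates.items():
        d = math.sqrt(sum((f - r) ** 2 for f, r in zip(feats, ref)))
        if d < best_d:
            best_name, best_d = name, d
    return best_name if best_d <= max_distance else None
```

Dividing every distance by a hand-size reference is what lets the same templates work at different distances from the camera; user-calibrated patterns would simply be additional entries in `templates`.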

Figure 2 depicts the system from a use-case perspective, which emphasizes the connection between the user, the system, and control administration. The user interacts with the system by performing hand gestures in front of a webcam; the system receives these gestures, and the gesture recognition pipeline analyzes them. The system supports application mode switching, allowing operation in different contexts such as desktop interaction, presentation control, scrolling, and system control. The administrative control monitors gesture logic and configuration, thus ensuring that the system behaves correctly.
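Mode switching of this kind can be sketched as a per-mode lookup table. The four modes follow the contexts listed above. The gesture names, action names, and the single mode-cycling gesture are hypothetical and chosen only to make the example concrete.

```python
from enum import Enum
from typing import Dict, Optional


class Mode(Enum):
    DESKTOP = "desktop"
    PRESENTATION = "presentation"
    SCROLL = "scroll"
    SYSTEM = "system"


# Hypothetical gesture-to-action bindings; one table per mode.
BINDINGS: Dict[Mode, Dict[str, str]] = {
    Mode.DESKTOP: {"point": "move_cursor", "pinch": "left_click"},
    Mode.PRESENTATION: {"swipe_left": "next_slide", "swipe_right": "previous_slide"},
    Mode.SCROLL: {"two_up": "scroll_up", "two_down": "scroll_down"},
    Mode.SYSTEM: {"thumb_up": "volume_up", "thumb_down": "volume_down"},
}
SWITCH_GESTURE = "fist_hold"  # assumed gesture that cycles to the next mode


class ModeController:
    def __init__(self, mode: Mode = Mode.DESKTOP) -> None:
        self.mode = mode

    def handle(self, gesture: str) -> Optional[str]:
        """Return the action bound to a gesture in the current mode, if any."""
        if gesture == SWITCH_GESTURE:
            modes = list(Mode)
            self.mode = modes[(modes.index(self.mode) + 1) % len(modes)]
            return "mode:" + self.mode.value
        # A gesture that is not bound in the active mode is ignored.
        return BINDINGS[self.mode].get(gesture)
```

Keeping the bindings separate per mode means a gesture that is meaningful in one context cannot trigger an action in another, which is one way to limit unintended activation.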

The detailed workflow in Figure 3 represents the system's functional progression. The system first records a sequence of frames, then preprocesses the captured images and extracts the hand landmarks. At this point, the system decides whether hand detection has succeeded; if not, frame capturing continues without interruption. When a hand is detected, the system calculates normalized representations, and gesture recognition then takes place by combining rule-based decisions with pre-trained models. After recognition, the candidate gesture is subjected to stability and cooldown verification before being translated into an action. Once a gesture is confirmed as valid, the action is executed, accompanied by event logging and user notification. Unstable gestures are disregarded to prevent unintentional actions.
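The stability and cooldown verification step can be sketched as a small gate that sits between the recognizer and the action executor. The frame count, the cooldown length, and the `GestureGate` name are assumed values and names for illustration; the source does not state the actual thresholds.

```python
from typing import Optional


class GestureGate:
    """Passes a gesture only after a stable run and outside the cooldown."""

    def __init__(self, stable_frames: int = 5, cooldown_s: float = 0.8) -> None:
        self.stable_frames = stable_frames
        self.cooldown_s = cooldown_s
        self._candidate: Optional[str] = None
        self._count = 0
        self._last_fired = float("-inf")

    def update(self, gesture: Optional[str], now: float) -> Optional[str]:
        """Feed one per-frame prediction; `now` is a timestamp in seconds."""
        if gesture is None:  # no hand or no recognized gesture in this frame
            self._candidate, self._count = None, 0
            return None
        if gesture == self._candidate:
            self._count += 1
        else:
            self._candidate, self._count = gesture, 1
        if self._count < self.stable_frames:
            return None  # not yet stable: flickering predictions are ignored
        if now - self._last_fired < self.cooldown_s:
            return None  # still cooling down after the previous action
        self._last_fired = now
        self._count = 0  # require a fresh stable run before firing again
        return gesture
```

In a capture loop, `update` would be called once per frame with a monotonic timestamp, and only a non-`None` return value would be forwarded for execution, logging, and user notification.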

The proposed architecture binds together user-side interaction, backend processing, gesture recognition, and action execution in one solid and trustworthy framework. Its multi-layered, modular composition increases reliability, decreases the likelihood of inadvertent triggers, and gives the user natural, intuitive, fast, and convenient gesture-based interaction suited to modern human–computer interaction (HCI).

This work presented a vision-based Gesture Control System aimed at enabling natural and touchless human–computer interaction. By interpreting real-time hand gestures captured through a camera, the system allows users to perform various computer operations without relying on conventional input devices such as a keyboard or mouse. The proposed system demonstrates how gesture recognition can be effectively used to improve interaction flexibility, accessibility, and user experience. Through structured gesture recognition, mode-based operation, and reliable action execution, the system provides an efficient solution for intuitive computer control. The overall design highlights the potential of gesture-based interfaces as a practical and user-friendly approach in modern human–computer interaction environments.

As future work, the system will be extended by developing a dedicated web-based platform to make the gesture control software easily accessible to a wider set of users. The future enhancement aims to provide the system as a software-style installation, allowing users to install the application on their systems in a simple and user-friendly manner. During installation, the application will be configured to automatically register itself within the system environment, including adding the required execution path to an autorun batch file. This approach will enable the gesture control system to start automatically when the system boots, ensuring seamless availability without requiring manual execution. By integrating a web-based distribution mechanism and automated startup configuration, the future version of the system will improve usability, accessibility, and ease of adoption while maintaining the core gesture-based interaction capabilities.
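The planned autorun registration could be prototyped along the following lines. This is only a sketch under stated assumptions: it targets Windows, the function names and the batch file name are hypothetical, and the caller is expected to pass the directory in which autorun scripts live (for example the per-user Startup folder, which runs scripts at login rather than at boot).

```python
from pathlib import Path


def build_autorun_script(executable: str) -> str:
    """Batch file text that launches the given program without blocking."""
    # `start ""` takes an empty window title followed by the program path.
    return '@echo off\r\nstart "" "{}"\r\n'.format(executable)


def install_autorun(executable: str, startup_dir: str,
                    name: str = "gesture_control_autorun.bat") -> Path:
    """Write the autorun batch file into startup_dir and return its path."""
    target_dir = Path(startup_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    script = target_dir / name
    # Bytes are written directly so the CRLF line endings are preserved.
    script.write_bytes(build_autorun_script(executable).encode("utf-8"))
    return script
```

Taking the startup directory as a parameter keeps the installer testable and leaves the choice of per-user or system-wide startup to the installation step.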

[1] C. Lugaresi, J. Tang, H. Nash, C. McClanahan, E. Uboweja, M. Hays, et al., “MediaPipe: A framework for building perception pipelines,” arXiv preprint arXiv:1906.08172, 2019.

[2] I. S. MacKenzie, Human–Computer Interaction: An Empirical Research Perspective. Morgan Kaufmann Publishers, 2013.

[3] Z. Zhang, “Microsoft Kinect sensor and its effect,” IEEE Multimedia, vol. 19, no. 2, pp. 4–10, 2012.

[4] J. Shotton, A. Fitzgibbon, M. Cook, T. Sharp, M. Finocchio, R. Moore, et al., “Real-time human pose recognition in parts from single depth images,” Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2011.

[5] A. Mittal, A. Zisserman, and P. H. S. Torr, “Hand detection using multiple proposals,” Proceedings of the British Machine Vision Conference (BMVC), 2011.

[6] R. Poppe, “A survey on vision-based human action recognition,” Image and Vision Computing, vol. 28, no. 6, pp. 976–990, 2010.

[7] S. Greenberg and B. Buxton, “Usability evaluation considered harmful (some of the time),” Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, 2008.

[8] S. Young, M. Evermann, T. Hain, D. Kershaw, G. Moore, J. Odell, et al., The HTK Book. Cambridge University Engineering Department, 2006.

[9] A. K. Jain, A. Ross, and S. Prabhakar, “An introduction to biometric recognition,” IEEE Transactions on Circuits and Systems for Video Technology, vol. 14, no. 1, pp. 4–20, 2004.

[10] P. Taylor, A. Black, and R. Caley, “The Festival speech synthesis system,” Proceedings of the ESCA Workshop on Speech Synthesis, 1998.