International Research Journal of Engineering and Technology (IRJET) e-ISSN: 2395-0056

Volume: 12 Issue: 12 | Dec 2025 www.irjet.net p-ISSN: 2395-0072

Kilambi Pavanadithya1, Malliboyini Venkat Kalyan2, Yatakalla Sarika3, Gondra Jahnavi4, and Mr. Jagadeeshwar Reddy V5

1, 2, 3, 4 Department of CSE (Data Science), AVN Institute of Engineering and Technology, under the guidance of 5 Asst. Professor, Department of CSE (Data Science), AVN Institute of Engineering and Technology

Abstract - With the rapid rise in spoken interactions happening in digital spaces, organizations, and research settings, there's a growing demand for reliable and automated conversation analytics. The goal of conversation analytics is to turn unstructured speech data into meaningful, structured insights, which helps us gain a better understanding of dialogues involving multiple speakers and the content being discussed. Thanks to recent advances in speech and language technologies, we're now able to analyze conversational audio more accurately and with better context than ever before.

This paper focuses on how conversation analytics can transform raw speech into valuable analytical information using techniques like automatic speech recognition, speaker diarization, and natural language processing. These methods help identify who’s speaking, transcribe what they’re saying, and pull out linguistic and contextual patterns from conversations. The insights we get from analyzing this conversational data enhance our understanding of communication dynamics and support better decision-making in various fields. Overall, this study underscores the increasing academic and practical importance of automated conversation analysis, especially when it comes to managing large volumes of speech data effectively.

Index Terms - Automatic Speech Recognition, Conversation Analytics, Natural Language Processing, Speaker Diarization, Speech Processing

One of the easiest ways we connect with each other is through talking. Conversations are central in settings like meetings, customer service, education, healthcare, and media. With more people talking on digital platforms, large volumes of conversational audio are generated every day. But going through all that audio by hand is tough and takes a lot of time, especially with long discussions featuring different speakers. Because of this, automated conversation analytics has become a key area of research.

Conversation analytics is all about pulling valuable insights from spoken interactions using techniques like speech processing and natural language processing. Unlike traditional audio analysis, which mainly focuses on the signals, conversation analytics digs into the flow of ideas, who’s talking, and the sequence of what’s being said. Some of the main parts of conversation analytics include automatic speech recognition, figuring out who's speaking, and linguistic analysis. By blending these elements together, we can create a structured model of conversational data.

Lately, breakthroughs in deep learning have really boosted how well speech recognition and speaker identification systems work. Now, we can understand real-life conversations even when people talk over each other, when there's background noise, or when speakers have different accents. Plus, advances in natural language processing have allowed us to dive deeper into the spoken words, for example, spotting sentiment, figuring out intentions, and pinpointing context. These upgrades have made automated conversation analysis much more reliable and scalable, which is why it's gaining popularity in both research and practical use.

But conversation analytics is more than just turning speech into text. It gives us insights into communication styles, personality traits of speakers, and overall interaction patterns. These insights are really helpful for figuring out how effective conversations are, understanding group discussions, and helping with decision-making. As the need for smart speech analysis tools increases, conversation analytics is becoming an essential field that transforms raw audio into meaningful insights.

Over the past two decades, there's been a lot of exciting progress in the field of speech and language processing, paving the way for the conversation analytics systems we have today. Early contributors like Jurafsky and Martin[1] really set the groundwork, establishing a solid theoretical base for things like speech recognition and natural language processing, as well as discourse analysis and spoken dialogue systems. They identified some key principles to grasp conversational speech, including aspects like acoustic modeling, language modeling, and how conversations are structured. These principles are all critical for automatically analyzing spoken interactions.

A big focus in conversation analysis has been on Automatic Speech Recognition (ASR). The arrival of deep neural networks for acoustic modeling has truly transformed the field, leading to impressive improvements in ASR performance. Hinton et al.[7] showed that deep learning models could significantly outperform older techniques, resulting in better accuracy and reliability. Building on this, Radford et al.[2] created a large-scale weakly supervised speech recognition model that can work well with different accents, noisy settings, and multiple languages. This has made ASR much more effective in real-life conversations, allowing for accurate transcriptions of long and complicated audio discussions.

Speaker diarization, which aims to figure out who spoke when in a conversation, is another core component. Early studies by Anguera et al.[8] looked at traditional approaches and pinpointed issues like overlapping voices and variability in speech. Lately, deep learning advancements have taken diarization to the next level. For instance, Bredin et al.[4] introduced a neural framework that acts as a versatile toolkit for speaker diarization, enhancing our ability to segment and identify speakers in group conversations. These improvements have really bolstered the reliability of diarization in real-world audio data.

Natural Language Processing (NLP) plays a crucial role in pulling out meaningful insights from transcribed speech. Liu[9] laid down foundational techniques for sentiment analysis and opinion mining, which remain vital for interpreting how speakers feel and the emotional undertones in text. Modern NLP frameworks, like the efficient toolkit developed by Honnibal et al.[3], have streamlined large-scale language analysis, offering solid processes for tasks like tokenization, syntactic parsing, and text classification. These resources make it easier to analyze conversation transcripts on a larger scale.

On the emotional side of things, there's growing attention on analyzing affective and conversational context. Poria et al.[5] have delved into affective computing, stressing the importance of studying emotions and contextual signals in human communication. They pointed out that grasping sentiment and emotional trends can provide deeper insights into how conversations unfold. Furthermore, Stolcke and Shriberg[10] looked into statistical methods for spoken dialogue systems, focusing on how to model the structure of conversations, who speaks when, and how interactions flow. These aspects are crucial for understanding conversations more deeply.

Audio preprocessing and feature extraction are the starting points for any speech-based system. McFee et al.[6] came up with a detailed Python framework for analyzing audio signals, giving the essential tools needed to manage and process raw audio before diving into more complex speech and language analysis. These preprocessing techniques lay the groundwork for reliable follow-up tasks like diarization and speech recognition.

Although there’s been a lot of progress in areas like speech recognition, speaker diarization, and text analytics, many researchers have been looking at these components in isolation. Work is still ongoing to bring these techniques together into a unified conversation analytics framework. This highlights the need for systems that can merge audio processing, speech recognition, speaker identification, and linguistic analysis to truly understand conversational data.

The proposed system introduces a Conversation Analytics System that can effectively automate the processing and understanding of spoken conversations by means of speech and language processing techniques. The web-based application allows users to authenticate themselves and thereby be able to analyze conversational audio by either uploading recorded files or performing live voice recording. Thus, the system intends to transform unstructured audio data into analytical insights that better facilitate understanding of the conversational content.

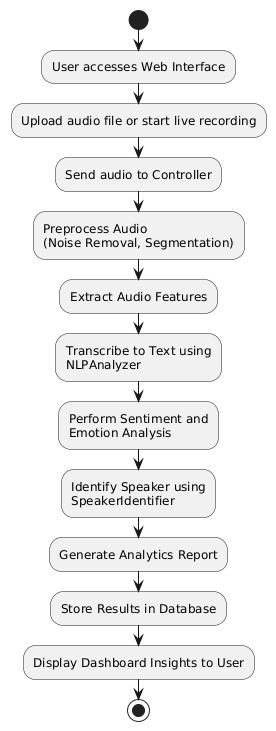

In the activity flow diagram, it is shown that the system initiates from user interaction via a web interface. Users go through the process of registration or logging in to the system in a secure way and thus get access to the main dashboard. From the dashboard, users decide on the input mode: uploading a file or using live recording. The audio selected is sent to the controller module that handles the entire processing pipeline.

The system first conducts audio preprocessing to ready the raw audio for the next stages of the analysis. Noise reduction and segmentation are some of the steps taken during preprocessing to make sure that the processing remains reliable. After preprocessing, relevant features of the audio are extracted and then sent to the speech analysis pipeline along with the processed audio. To convert vocal utterances into textual form, automatic speech recognition is implemented, making it possible for the linguistic analysis to continue.
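The segmentation step can be illustrated with a minimal sketch. It assumes a simple energy-threshold approach on fixed-length frames, which is only one of many possible techniques and is not stated in this paper as the method used; noise reduction is not shown. The function names `frame_rms` and `segment_by_energy`, the 50 ms frame length, and the threshold value are all hypothetical choices made for illustration.

```python
import math
from typing import List, Tuple


def frame_rms(samples: List[float], frame_len: int) -> List[float]:
    """Root-mean-square energy of consecutive, non-overlapping frames."""
    energies = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        energies.append(math.sqrt(sum(x * x for x in frame) / frame_len))
    return energies


def segment_by_energy(energies: List[float], threshold: float) -> List[Tuple[int, int]]:
    """Return (first_frame, end_frame_exclusive) spans whose energy exceeds the threshold."""
    segments = []
    start = None
    for i, energy in enumerate(energies):
        if energy > threshold and start is None:
            start = i                      # an active region begins
        elif energy <= threshold and start is not None:
            segments.append((start, i))    # the active region ends
            start = None
    if start is not None:                  # signal ended while still active
        segments.append((start, len(energies)))
    return segments


# Synthetic test signal (assumed 8 kHz): silence, a 440 Hz tone, silence.
rate = 8000
tone = [0.5 * math.sin(2 * math.pi * 440 * n / rate) for n in range(rate // 2)]
signal = [0.0] * (rate // 4) + tone + [0.0] * (rate // 4)

energies = frame_rms(signal, frame_len=400)            # 50 ms frames
segments = segment_by_energy(energies, threshold=0.05)
print(segments)
```

In a real deployment the threshold would need tuning against the noise floor of the recordings, and an audio library such as the one cited in [6] would typically replace the hand-written framing.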

For multi-speaker conversations, the system has speaker identification and diarization capabilities that enable it to detect and separate individual speakers when the conversation has taken place. This means that it will be perfectly clear who said what. Along the same lines, the text transcript is subjected to natural language processing wherein its sentiment and other emotional components can be analyzed, thereby understanding the contextual tone of the conversation.
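One way to make "who said what" concrete is to combine the timestamps produced by speech recognition with the speaker turns produced by diarization. The sketch below assumes both stages emit start and end times in seconds and assigns each transcript segment to the speaker turn it overlaps most. The `Turn` and `Segment` types, the `assign_speakers` function, and the sample data are hypothetical and are not taken from the proposed system.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Turn:
    """A diarization result: one speaker active over a time span (seconds)."""
    speaker: str
    start: float
    end: float


@dataclass
class Segment:
    """A speech recognition result: text with its time span (seconds)."""
    text: str
    start: float
    end: float


def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length of the intersection of two time intervals, or 0.0 if disjoint."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def assign_speakers(segments: List[Segment], turns: List[Turn]) -> List[Tuple[str, str]]:
    """Label each transcript segment with the speaker whose turn overlaps it most."""
    labelled = []
    for seg in segments:
        best_speaker, best_overlap = "unknown", 0.0
        for turn in turns:
            shared = overlap(seg.start, seg.end, turn.start, turn.end)
            if shared > best_overlap:
                best_speaker, best_overlap = turn.speaker, shared
        labelled.append((best_speaker, seg.text))
    return labelled


turns = [Turn("S1", 0.0, 4.0), Turn("S2", 4.0, 9.0)]
segments = [
    Segment("Hello, thanks for joining.", 0.2, 3.5),
    Segment("Happy to be here.", 3.8, 6.0),
    Segment("(inaudible)", 10.0, 11.0),   # falls outside every turn
]
labelled = assign_speakers(segments, turns)
for speaker, text in labelled:
    print(f"{speaker}: {text}")
```

Maximum overlap is a deliberately simple rule; segments that straddle a speaker change would need to be split at word level for a more faithful attribution.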

The different components involved in the processing are housed in one place, a voice analysis engine, as shown in the figure of the system architecture. The analytical results such as speaker-wise transcription, sentiment markers, and conversational summaries are collated into one analytics report. The conversation history is archived, and future access is facilitated through the storage of the original results in a database.
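A rough sketch of how such a report might be collated is given below. It groups utterances by speaker and attaches a sentiment marker to each one. The tiny word lists stand in for a real sentiment model and are purely illustrative, as are the function names `sentiment_marker` and `build_report` and the report layout; conversational summaries are not covered. JSON is assumed here as one possible export format.

```python
import json
from collections import defaultdict
from typing import Dict, List, Tuple

# Toy lexicon, assumed for illustration only; a trained model would replace it.
POSITIVE = {"great", "good", "thanks", "happy"}
NEGATIVE = {"bad", "problem", "late", "unhappy"}


def sentiment_marker(text: str) -> str:
    """Classify text as positive, negative, or neutral by counting lexicon hits."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def build_report(utterances: List[Tuple[str, str]]) -> Dict:
    """Collate (speaker, text) pairs into a speaker-wise report with sentiment markers."""
    by_speaker = defaultdict(list)
    for speaker, text in utterances:
        by_speaker[speaker].append({"text": text, "sentiment": sentiment_marker(text)})
    return {"speakers": dict(by_speaker), "utterance_count": len(utterances)}


utterances = [
    ("S1", "Thanks, the demo went great."),
    ("S2", "The delivery was late again."),
    ("S1", "We will look into it."),
]
report = build_report(utterances)
report_json = json.dumps(report, indent=2)   # serialized form for export or storage
print(report_json)
```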

In the end, the insights obtained from the analysis are brought to the user by means of an interactive dashboard that makes it possible to view and interpret the conversation analysis in a clear way. Moreover, the system offers a feature that lets users export or download the generated report. The sequence diagram indicates the interaction among the web interface, authentication service, controller, processing modules, and analytics component that is performed in an orderly, secure, and efficient manner.

The system leverages audio processing, automatic speech recognition, speaker identification, and text analysis, which are all combined in a modular and scalable framework for an integrated and fully automated conversation analytics solution. The system acts as a good link between unprocessed conversational audio and well-structured analytical insights.
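The modular arrangement can be sketched as a controller that runs interchangeable stages in order, each reading from and adding to a shared context. Everything below is a hypothetical illustration: the stage functions are stubs that return placeholder values rather than real preprocessing, recognition, diarization, or NLP results, and the names `run_pipeline`, `preprocess`, `transcribe`, `diarize`, and `analyze_text` are assumptions.

```python
from typing import Callable, Dict, List

# A stage takes the current context and returns an extended copy of it.
Stage = Callable[[Dict], Dict]


def preprocess(ctx: Dict) -> Dict:
    # Stub: a real stage would denoise and segment the audio.
    return {**ctx, "clean_audio": f"cleaned({ctx['audio']})"}


def transcribe(ctx: Dict) -> Dict:
    # Stub: a real stage would call a speech recognition model.
    return {**ctx, "transcript": "placeholder transcript"}


def diarize(ctx: Dict) -> Dict:
    # Stub: a real stage would call a diarization model.
    return {**ctx, "turns": [("S1", 0.0, 1.0)]}


def analyze_text(ctx: Dict) -> Dict:
    # Stub: a real stage would run sentiment and other NLP analysis.
    return {**ctx, "sentiment": "neutral"}


def run_pipeline(audio_ref: str, stages: List[Stage]) -> Dict:
    """Run each stage in order and record which stages completed."""
    context: Dict = {"audio": audio_ref, "completed": []}
    for stage in stages:
        context = stage(context)
        context["completed"].append(stage.__name__)
    return context


result = run_pipeline("meeting.wav", [preprocess, transcribe, diarize, analyze_text])
print(result["completed"])
```

Because each stage only depends on the context keys it reads, a stage can be swapped for a different implementation without touching the controller.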

The Conversation Analytics System will be written mainly in Python. The Flask web framework will be utilized for the system backend, which will be responsible for user interaction and processing workflows management. The front-end interface will be a product of HTML, CSS, and JavaScript that will be capable of supporting audio uploading, live recording, and providing visualization of the analytics results.

The audio preprocessing and signal handling tasks will be done through the use of Python-based audio processing libraries. Neural-based diarization methods will be used for speaker diarization, and deep learning-based speech recognition models will be used for automatic speech recognition to convert speech into text. Natural Language Processing methods will be employed to analyze the transcribed text to get the conversational insights. A lightweight database system will serve as a place to keep user information, conversation data, and analysis results.
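As an illustration of the storage layer, the sketch below uses SQLite through Python's standard `sqlite3` module. The paper only specifies a lightweight database, so SQLite is an assumption, as are the table name `conversations`, its columns, and the helper functions `init_db`, `save_report`, and `load_history`. An in-memory database is used so the example leaves no file behind.

```python
import json
import sqlite3
from typing import Dict, List, Tuple


def init_db(conn: sqlite3.Connection) -> None:
    """Create the (hypothetical) conversations table if it does not exist."""
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS conversations (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            username TEXT NOT NULL,
            audio_name TEXT NOT NULL,
            report_json TEXT NOT NULL,
            created_at TEXT DEFAULT CURRENT_TIMESTAMP
        )
        """
    )


def save_report(conn: sqlite3.Connection, username: str, audio_name: str, report: Dict) -> int:
    """Store one analysis report as JSON and return the new row id."""
    cur = conn.execute(
        "INSERT INTO conversations (username, audio_name, report_json) VALUES (?, ?, ?)",
        (username, audio_name, json.dumps(report)),
    )
    conn.commit()
    return cur.lastrowid


def load_history(conn: sqlite3.Connection, username: str) -> List[Tuple[str, Dict]]:
    """Return (audio_name, report) pairs for one user, oldest first."""
    rows = conn.execute(
        "SELECT audio_name, report_json FROM conversations WHERE username = ? ORDER BY id",
        (username,),
    ).fetchall()
    return [(name, json.loads(blob)) for name, blob in rows]


conn = sqlite3.connect(":memory:")
init_db(conn)
row_id = save_report(conn, "demo_user", "meeting.wav", {"utterance_count": 3})
history = load_history(conn, "demo_user")
print(history)
```

Parameterized queries (the `?` placeholders) are used so that user-supplied values such as the username are never interpolated into the SQL text.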

V FIGURES

This paper has introduced a Conversation Analytics System that centers on the automated analysis of spoken conversations. The aim is to extract meaningful and structured insights from audio data. The proposed system is essentially a conversation analytics tool that deals with unstructured speech, i.e., it applies speech recognition, speaker identification, and language analysis techniques to human speech. By converting conversational data into easily understandable analytical results, the system facilitates better comprehension of communication patterns and conversational dynamics. The paper conveys the significance of automated conversational analytics within speech and language processing research and lays down a theoretical framework for the analysis of real-world conversational data.

The next stage of work on the introduced system will be to continue its development and convert it into a fully-fledged web-based application by means of Flask and other supportive Python technologies. Future upgrades will therefore be specifically directed towards tightening the integration of the system's components and enhancing the analytical functionality of the speech and language processing modules. The system's effectiveness and user-friendliness will be improved by including more conversational features and polished analysis methods.

[1] D. Jurafsky and J. H. Martin, Speech and Language Processing, 3rd ed. Pearson Education, 2023.

[2] A. Radford, J. W. Kim, T. Xu, G. Brockman, C. McLeavey, and I. Sutskever, “Robust speech recognition via large-scale weak supervision,” Proceedings of the International Conference on Machine Learning (ICML), 2023.

[3] M. Honnibal, I. Montani, S. Van Landeghem, and A. Boyd, “spaCy: Industrial-strength natural language processing in Python,” Zenodo, 2020.

[4] H. Bredin, R. Yin, J. M. Coria, G. Gelly, P. Korshunov, M. Lavechin, et al., “pyannote.audio: Neural building blocks for speaker diarization,” Proceedings of the IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), 2020.

[5] S. Poria, E. Cambria, R. Bajpai, and A. Hussain, “A review of affective computing: From unimodal analysis to multimodal fusion,” Information Fusion, vol. 37, pp. 98–125, 2017.

[6] B. McFee, C. Raffel, D. Liang, D. P. Ellis, M. McVicar, E. Battenberg, and O. Nieto, “librosa: Audio and music signal analysis in Python,” Proceedings of the 14th Python in Science Conference, 2015.

[7] G. Hinton, L. Deng, D. Yu, G. E. Dahl, A. Mohamed, N. Jaitly, et al., “Deep neural networks for acoustic modeling in speech recognition,” IEEE Signal Processing Magazine, vol. 29, no. 6, pp. 82–97, 2012.

[8] X. Anguera, S. Bozonnet, N. Evans, C. Fredouille, G. Friedland, and O. Vinyals, “Speaker diarization: A review of recent research,” IEEE Transactions on Audio, Speech, and Language Processing, vol. 20, no. 2, pp. 356–370, 2012.

[9] B. Liu, Sentiment Analysis and Opinion Mining, Synthesis Lectures on Human Language Technologies. Morgan & Claypool Publishers, 2012.

[10] A. Stolcke and E. Shriberg, “Statistical approaches to spoken dialogue systems,” in Handbook of Speech Processing. Springer, 2006.