ISSUE 3 APRIL 2026

"Uh-oh"

Leveraging FPGAs for deploying AI in small satellites

The Cyber Resilience Act and what it means for FPGAs

Streamlining the FPGA-SoC developer experience

FPGA strategies for Sparse vs Dense PCB layouts

Accelerating system-level verification for adaptive SoCs


Publisher: Adam Taylor

Editor: Matt Hilbert

Designer/Cover art: Susie Hinchliffe

Marketer: Louise Paul

Printer: Printerbello

CONTRIBUTORS:

Adam Taylor, Adiuvo Engineering

Alexander Wirthmueller, MPSI Technologies

Dr James Murphy, Setanta Space Systems

Jim Lewis, SynthWorks Design Inc

Martin Kellerman, Microchip & Nikola Velinov, Green Hills Software

Peter Trott, Microchip

Dr Pierre Maillard, AMD

Tomas Chester, Chester Electronic Design

Published by Adiuvo Events. ©️ Adiuvo Events. All rights reserved.

No part of this publication may be reproduced in whole or in part in any medium without the express permission of the publisher.

For editorial enquiries email contribute@fpgahorizons.com

For advertising enquiries email advertise@fpgahorizons.com

There is something happening here in the FPGA world

Through the FPGA Horizons conferences and this journal, we are building something very special: a growing community for FPGA engineers, researchers, and enthusiasts. It is a place where ideas, experiences, and practical knowledge can be shared openly, and where people who care deeply about this technology can connect and learn from one another. The response so far has been incredibly encouraging, and it is clear there is a real appetite for a space dedicated to thoughtful discussion and deep technical exploration of FPGA technology.

Welcome to the third issue of FPGA Horizons Journal

This issue accompanies the second FPGA Horizons Conference and marks an exciting milestone as the event is being held in the United States for the first time. The conference itself promises to be excellent, with a strong program of educational sessions and hands-on tutorials designed to provide practical insights and learning opportunities. Attendees will also have the chance to see a preview of emerging technologies that will shape the future of FPGA and adaptive computing.

Of course, FPGA Horizons is not only about the conference. As always, the journal is packed with insightful technical articles that explore many different aspects of FPGA design and development.

In this issue we begin by looking at approaches to accelerating system-level verification for AMD Versal adaptive SoC designs. Our cover story explores how FPGAs are enabling AI deployment in small satellites, demonstrating the increasing role of adaptive computing in space applications. We also examine the EU Cyber Resilience Act and what it means for engineers developing FPGA-based systems in an increasingly regulated environment.

For engineers working closer to the hardware, we include a practical deep dive into FPGA PCB layout strategies, examining sparse versus dense breakout techniques. Elsewhere in the issue we look at developing radiation-tolerant AI platforms using AMD Versal devices, explore improved verification approaches using OSVVM for VHDL designs, and discuss how functional safety can be implemented across both hardware and software systems. We also examine ways to streamline the FPGA-SoC developer experience and improve productivity across the development workflow.

In just a few issues, FPGA Horizons Journal has quickly established itself as a publication that experts across the industry are eager to contribute to. In an age where AI-generated content is becoming increasingly common, there is something refreshing about hearing directly from engineers who practise this craft every day. Their insights, experience, and passion for FPGA technology are what make this journal special.

I hope you enjoy this issue and the ideas shared throughout its pages.

Adam Taylor Publisher


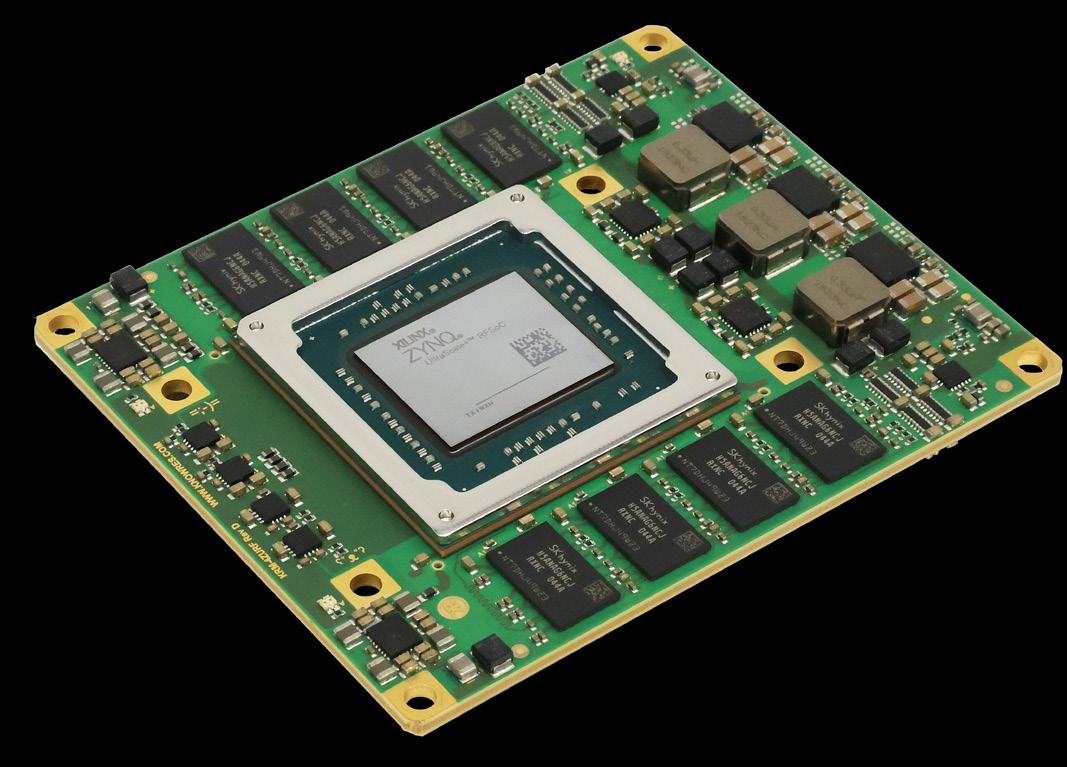

Adam Taylor (Adiuvo Engineering / Publisher) takes A progressive approach to accelerating system-level verification for AMD Versal™ adaptive SoC designs


COVER STORY

Dr James Murphy (Setanta Space Systems) discusses Leveraging FPGAs for deploying AI in small satellites

Peter Trott (Microchip) sheds light on The Cyber Resilience Act and what it means for FPGAs

Tomas Chester (Chester Electronic Design) presents FPGA strategies for PCB layouts: Sparse vs Dense via breakouts

Dr. Pierre Maillard (AMD) explains Developing a radiation-tolerant AI platform for space using AMD Versal adaptive SoCs

Jim Lewis (SynthWorks) introduces OSVVM: A better way to verify your VHDL designs

Martin Kellerman (Microchip Technology Germany GmbH) & Nikola Velinov (Green Hills Software) show the process of Implementing functional safety in hardware and software

Alexander Wirthmueller (MPSI Technologies) talks about Streamlining the FPGA-SoC developer experience

Disclaimer

The content published in the FPGA Horizons Journal is contributed by independent authors and researchers. While we strive to ensure accuracy and maintain a standard of quality, the views and opinions expressed in individual articles are those of the respective contributors and do not necessarily reflect the views of the FPGA Horizons Journal editorial team or its affiliates.

We are committed to using neutral and inclusive language wherever possible. However, given the diversity of voices and topics, variations in tone and expression may occur. The FPGA Horizons Journal does not accept responsibility for any errors, omissions, or differing viewpoints presented in the submitted content.

Readers are encouraged to critically engage with the material and consult additional sources where appropriate.

Industry roundup

Is it time to speak Livt?

A new programming language aims to address the steep learning curve of FPGA development. Positioned as a universal language for FPGA and ASIC design, Livt brings software-style abstractions, package management and CI-friendly tooling to design flows still dominated by VHDL and Verilog. Instead of forcing developers to think in signals, processes and resets from the first line of code, Livt lets you describe behavior while the compiler generates deterministic, synthesizable VHDL that fits into existing toolchains.

The goal isn’t to replace HDLs but to extend them. Livt-generated code can sit alongside handwritten VHDL or Verilog when deeper control is needed, while most of a design can be expressed at a higher level with better readability and maintainability. Its integration with HxS, a domain-specific language for defining hardware-software interfaces, also changes how processors and FPGA logic interact by turning register maps and control points into explicit APIs.

Livt offers a programming model that feels familiar to embedded software engineers while still producing efficient hardware. Whether it becomes a new common language for FPGA design remains to be seen. Are you, for example, tempted to speak Livt?

Welcome to oHFM, the open, vendor-independent FPGA standard

Behind the big headlines at CES 2026 about AI and robotics, sustainable tech and Lego Smart Bricks, another announcement was also made: the launch of the Open Harmonized FPGA Module (oHFM), the world’s first open standard designed for FPGA and SoC-FPGA modules. Version 1.0 of the standard was published at CES by the Standardization Group for Embedded Technologies (SGeT) in an effort to prevent vendor lock-in. The standard introduces a common carrier board concept with standardized pinouts and interfaces to enable FPGA modules to be swapped when needed.

To cater for the different physical requirements of FPGAs, it offers two variants that follow the same system thinking. oHFM.s (Solderable) is optimized for compact, cost-efficient series production, and oHFM.c (Connector-based) is designed for high-speed performance, scalability, and easy upgrades.

The Atari ST is back … well, kind of

Forty-one years after it first launched, the Atari ST home computer is back. Famous for being the first to include MIDI musical instrument ports, the gaming, music and desktop publishing machine is returning as the MiniST. The Tang Nano FPGA-powered recreation uses a MiSTeryNano core to emulate the original Motorola 68000-based system and is housed in a 3D-printed, 60% scale Atari-like case. That’s the good news.

The bad news? Only five units are being produced at a cost of around $400 each. Don’t worry, however. The MiniST is built using open-source materials and the enthusiast behind it, Dennis Shaw, has made it clear that anyone with a 3D printer and the skill, patience and time can spend what will probably be hours and hours (and more hours) of effort to build their own.

NG-ULTRA SoC FPGA heads for space

Europe’s latest space-grade SoC FPGA has passed a major milestone. NanoXplore and STMicroelectronics have qualified the NG-ULTRA under the ESCC 9030 standard, making it the first plastic-packaged microcircuit certified to the new European specification. Designed for low- and medium-earth orbit missions, the radiation-hardened device is now launch-ready for programmes such as Galileo, Copernicus and the IRIS² constellation.

ESCC 9030 is a recent European space component standard that defines how plastic-encapsulated and flip-chip monolithic microcircuits can be qualified for space use. As demand for onboard digital processing grows, so does the need for more compute at the edge of orbit. By supporting scalable, non-ceramic packaging, the standard shifts space hardware toward the economics of satellite programmes rather than deep space missions. NG-ULTRA is built for that transition, enabling more data processing in orbit while keeping energy use and system cost under control.

Thanks, Intel – it was good while it lasted

With open-source cutbacks in the works, Intel has continued to archive projects it no longer supports. Among the latest round are the ipmctl code for managing Intel’s Optane DC Persistent Memory; the Mu2SV project, a translator for Murphi code to SystemVerilog; and the intel/fpga-partial-reconfig project.

After supporting the FPGA Partial Reconfiguration Design Flow with various tutorials, reference designs and Linux drivers for over ten years, the announcement is not really a surprise. In 2025, Intel sold a controlling 51% stake in Altera to private equity group Silver Lake as part of its plan to divest non-core assets. While Intel retains a 49% interest, Altera is now independent again and free to focus on expanding its FPGA portfolio.

A progressive approach to accelerating system-level verification for AMD Versal™ adaptive SoC designs

AMD Versal™ adaptive SoCs provide developers with a heterogeneous computing architecture that combines programmable logic (PL), AI Engines, and high-performance processing systems within a single device. These compute elements are interconnected through a programmable network on chip (NoC) and are used together to implement complex, high-performance systems.

While this architectural flexibility enables significant gains in performance and efficiency, it also introduces additional verification complexity. A Versal device design spans multiple domains, including AI Engine graphs and HLS- or RTL-based PL designs, along with software running on the processing system. Each domain is developed using different tools and abstractions, often by different teams.

Verifying that these components function correctly as an integrated system is one of the most demanding aspects of Versal device development. System-level verification must ensure algorithmic correctness, correct integration between subsystems, and correct behavior once deployed to hardware. Historically, this level of verification has relied on hardware emulation, which, while accurate, introduces complexity and performance limitations that restrict its usefulness earlier in the design cycle.

The AMD Vitis™ Unified Software Platform introduces an alternative range of simulation flows that overcome these limitations. Functional simulation, XSIM-based subsystem simulation, and hardware-in-the-loop verification enable a progressive approach to verification that begins earlier, executes faster, and reduces overall project risk. These flows complement one another and can be applied effectively to Versal adaptive SoC system development.

Hardware emulation as the traditional baseline

Prior to the availability of newer system simulation flows, the primary verification approach for Versal device designs was hardware emulation within Vitis software. This flow combines several independent simulators to model the major subsystems of a Versal device.

In a typical hardware emulation run, the processing system is simulated using QEMU, the PL is simulated using XSIM with an HDL testbench, and the AI Engine array is simulated using the SystemC-based AI Engine (AIE) simulator. Together, these simulators provide functional accuracy across the full system and support execution of software applications interacting with the PL and AI Engines.

While this approach produces valid and informative results, it is complex. Each simulator operates at a much lower speed than real silicon and often at different time resolutions. As a result, significant effort is required to configure and maintain synchronization between simulators.

To preserve correct system behavior, clocks, events, memory transactions, and interrupts must be continuously coordinated across QEMU, XSIM, and the AIE simulator. This cross-simulator synchronization introduces communication overhead and frequent context switching, which significantly reduces overall simulation throughput. Additional factors such as debug visibility and transaction-level modelling further contribute to slow execution.

As Versal adaptive SoC designs scale in complexity, with deeper pipelines, wider datapaths, and more sophisticated interconnects, the limitations of hardware emulation become increasingly pronounced. While hardware emulation remains valuable for certain use cases, it is not always well suited for rapid iteration or early-stage system validation.

A progressive system simulation strategy

Rather than relying exclusively on hardware emulation, Versal device development benefits from a progressive system simulation strategy that applies different verification techniques at different stages of the design process.

This strategy consists of three complementary flows:

1. Functional simulation focuses on algorithmic correctness at a high level

2. XSIM-based subsystem simulation validates integration between AI Engines and the PL

3. Hardware-in-the-loop verification executes the design on a real Versal device under software control

Each flow addresses specific verification goals and avoids the overhead associated with full hardware emulation when it is not required. Together, they provide a structured path from development to hardware validation.

Functional simulation for Versal adaptive SoC systems

Functional simulation focuses on verifying what a design does rather than how it behaves at the cycle level. For Versal devices, this typically involves validating AI Engine graphs and HLS-generated kernels before they are integrated into a larger system.

Vitis Functional Simulation enables developers to simulate AI Engine and HLS designs using Python, MATLAB, or C++ (early access). This allows verification to be performed in the same environments commonly used for algorithm development, reducing friction between software modelling and hardware implementation.

Because functional simulation operates at a higher level of abstraction, simulation performance is significantly higher than hardware emulation. This makes it practical to process large datasets, explore architectural parameters, and evaluate numerical performance metrics early in the design flow.

To enable seamless interaction between software frameworks and Versal device designs, functional simulation uses a unified array type that supports fixed-point and floating-point data formats commonly used by AI Engines and HLS kernels. This allows data to be exchanged without manual conversion or loss of fidelity.

Functional simulation is particularly effective for early validation, where rapid iteration and algorithmic insight are more important than cycle accuracy.
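As a rough illustration of the kind of numerical check that functional simulation makes practical, the sketch below compares a floating-point golden model with a fixed-point version of the same filter and reports the signal-to-quantization-noise ratio. It uses plain NumPy rather than the Vitis Functional Simulation API; the Q1.15 format, the moving-average filter, and all function names are assumptions chosen for the example.

```python
import numpy as np

def quantize_fixed(x, total_bits=16, frac_bits=15):
    """Round to a signed fixed-point grid with saturation; returns floats."""
    scale = 2.0 ** frac_bits
    lo = -(2 ** (total_bits - 1))
    hi = 2 ** (total_bits - 1) - 1
    codes = np.clip(np.round(np.asarray(x, dtype=np.float64) * scale), lo, hi)
    return codes / scale

def sqnr_db(reference, approx):
    """Signal-to-quantization-noise ratio in dB."""
    reference = np.asarray(reference, dtype=np.float64)
    noise = reference - np.asarray(approx, dtype=np.float64)
    return 10.0 * np.log10(np.sum(reference ** 2) / np.sum(noise ** 2))

def fir(x, taps):
    """Causal FIR filter producing one output per input sample."""
    return np.convolve(x, taps, mode="full")[: len(x)]

# Hypothetical stimulus: a sine wave with a little noise, kept inside [-1, 1).
rng = np.random.default_rng(0)
n = np.arange(1024)
x = 0.5 * np.sin(2 * np.pi * 0.01 * n) + 0.05 * rng.standard_normal(n.size)
taps = np.ones(8) / 8.0  # 8-tap moving average

golden = fir(x, taps)  # floating-point reference
# Fixed-point model: quantize inputs and coefficients, then the output.
fixed = quantize_fixed(fir(quantize_fixed(x), quantize_fixed(taps)))

print(f"SQNR: {sqnr_db(golden, fixed):.1f} dB")
```

Sweeping `frac_bits` in a loop is one simple way to explore how word length trades against numerical accuracy before any hardware-level simulation is run.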

Transitioning from algorithms to subsystems

Once functional correctness has been established, the next challenge is to verify that AI Engine designs integrate correctly with the PL and system-level infrastructure. At this stage, functional simulation alone is no longer sufficient, as it does not capture data movement, interface behavior, or subsystem interactions.

This transition marks the point where subsystem-level simulation becomes essential.


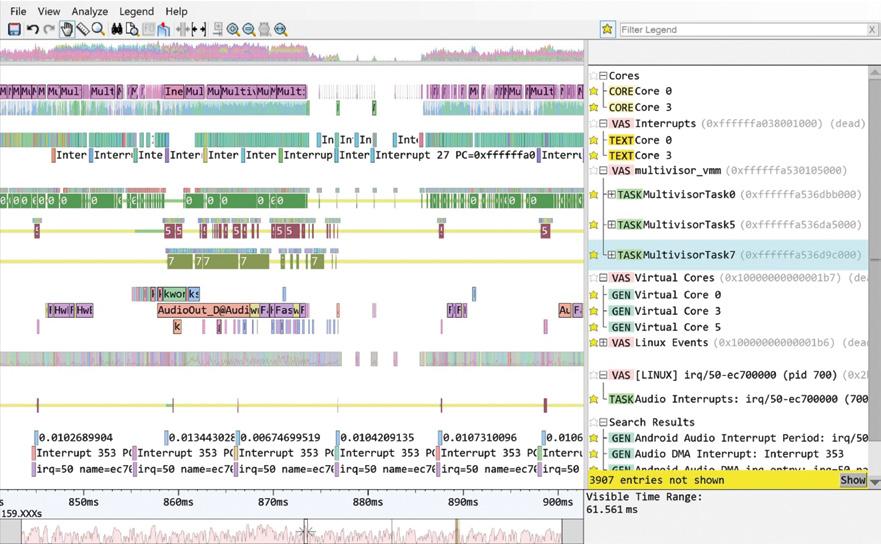

XSIM-based subsystem simulation

XSIM-based simulation enables Vitis subsystems to be simulated directly within the AMD Vivado™ Design Suite environment. In this flow, the design defined in Vitis containing a combination of AI Engine, HLS, and PL kernels is packaged as a Vitis subsystem and imported into a Vivado Design Suite project, where it is integrated with surrounding PL.

The Vitis subsystem is exercised using the AI Engine testbench, which replaces the processing system. The testbench in this case should be written in SystemVerilog, which eliminates the need for QEMU and significantly reduces simulation overhead compared to hardware emulation. This approach allows PL designers to verify not only the AI Engine functionality but also data movement, interface correctness, and integration behavior with custom RTL models while retaining visibility into both RTL and AI Engine activity.

Because this flow avoids full processor simulation and associated overhead, it executes significantly faster than hardware emulation while still providing meaningful system context. It represents an important intermediate step between functional simulation and hardware execution. Crucially, XSIM-based verification is cycle-accurate (AI Engine simulation is cycle-approximate) and enables developers to verify not only performance and interfacing but also throughput and latency.
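To make the throughput and latency point concrete, here is a minimal sketch of the post-processing that could be applied to cycle timestamps exported from a simulation run. It assumes the testbench logs the clock cycle at which each sample enters and leaves the subsystem; the log format, function name, and example numbers are hypothetical and not part of any AMD tool.

```python
def latency_and_throughput(in_cycles, out_cycles, clock_hz):
    """Summarize per-sample latency and steady-state output throughput.

    in_cycles[i] and out_cycles[i] are the clock cycles at which sample i
    was accepted at the input and produced at the output.
    """
    if len(in_cycles) != len(out_cycles) or len(out_cycles) < 2:
        raise ValueError("need matching logs with at least two samples")
    latencies = [o - i for i, o in zip(in_cycles, out_cycles)]
    span_cycles = out_cycles[-1] - out_cycles[0]
    samples_per_cycle = (len(out_cycles) - 1) / span_cycles
    return {
        "min_latency_cycles": min(latencies),
        "max_latency_cycles": max(latencies),
        "throughput_samples_per_s": samples_per_cycle * clock_hz,
    }

# Hypothetical log: one sample accepted every 4 cycles, 20-cycle pipeline.
stats = latency_and_throughput(
    in_cycles=[0, 4, 8, 12],
    out_cycles=[20, 24, 28, 32],
    clock_hz=100e6,
)
print(stats)
```

A gap between minimum and maximum latency in such a summary is often the first hint of back-pressure or arbitration effects worth inspecting in the waveform.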

Analysis and visibility with Vitis tools

During XSIM-based simulation, AI Engine execution can be analyzed using Vitis Analyzer. This provides insight into graph structure, tile utilization, and execution behavior, complementing the RTL-level visibility provided by Vivado tools.

By correlating subsystem behavior observed in XSIM with AI Engine execution data, developers can identify performance bottlenecks and integration issues before committing to hardware implementation.

At this stage, teams can be confident that the algorithm is correct and that the AI Engine and PL subsystems function together as intended.

Hardware-in-the-loop verification

Hardware-in-the-loop verification represents the final stage of the progressive simulation strategy. In this flow, the design is deployed onto a real Versal device but remains controlled by a host-based software environment.

Using Vitis hardware-in-the-loop capabilities, a Vitis subsystem is packaged with a lightweight server that runs on the target Versal device. A host system communicates with this server over Ethernet, sending test vectors and receiving results for analysis. Data from the host can be passed using either MATLAB or Python, both commonly used for algorithm development.
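The host-to-target exchange described above can be pictured with a small, self-contained sketch. This is not the Vitis hardware-in-the-loop API or its wire protocol: the length-prefixed float32 framing, the function names, and the local stub that stands in for the on-device server are all assumptions made so the example runs on a single machine.

```python
import socket
import struct
import threading

def _recv_exact(sock, n):
    """Read exactly n bytes or raise if the peer closes first."""
    buf = bytearray()
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("peer closed the connection early")
        buf.extend(chunk)
    return bytes(buf)

def send_frame(sock, samples):
    """Hypothetical frame: uint32 count, then that many float32 values."""
    payload = struct.pack(f"!{len(samples)}f", *samples)
    sock.sendall(struct.pack("!I", len(samples)) + payload)

def recv_frame(sock):
    (count,) = struct.unpack("!I", _recv_exact(sock, 4))
    return list(struct.unpack(f"!{count}f", _recv_exact(sock, 4 * count)))

def run_vectors(host, port, vectors):
    """Send each test vector to the target and collect the results."""
    results = []
    with socket.create_connection((host, port), timeout=5.0) as sock:
        for vec in vectors:
            send_frame(sock, vec)
            results.append(recv_frame(sock))
    return results

def start_stub_target(kernel):
    """Local stand-in for the on-device server; returns its TCP port."""
    listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    listener.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    listener.listen(1)
    port = listener.getsockname()[1]

    def serve():
        conn, _ = listener.accept()
        with conn, listener:
            try:
                while True:
                    send_frame(conn, kernel(recv_frame(conn)))
            except ConnectionError:
                pass  # host finished and closed the connection

    threading.Thread(target=serve, daemon=True).start()
    return port

# The "kernel" here simply doubles each sample.
port = start_stub_target(lambda xs: [2.0 * x for x in xs])
print(run_vectors("127.0.0.1", port, [[1.0, 2.0], [0.5]]))
```

In a real setup the stub would be replaced by the address of the board, and the returned results would be compared against the same golden model used during functional simulation.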

Unlike hardware emulation, computation is performed on real silicon. This eliminates the need for cross-simulator synchronization and allows performance, timing, and numerical behavior to be measured accurately. At the same time, the software-driven nature of the flow preserves repeatability and observability.

Hardware-in-the-loop testing enables developers to validate subsystem behavior and performance before integrating the design into a complete system, reducing risk at a critical stage of development.

Reducing risk through progressive verification

By structuring verification around functional simulation, XSIM-based subsystem simulation, and hardware-in-the-loop execution, Versal device developers can avoid the cost and complexity of hardware emulation when it is not required.

Each stage addresses a specific class of risk and builds confidence incrementally. Functional simulation validates algorithms. Subsystem simulation validates integration. Hardware-in-the-loop validates real-world execution.

This approach enables faster iteration, earlier insights, and more predictable system-level outcomes.

Wrap-up

As Versal adaptive SoC designs continue to grow in complexity, verification strategies must evolve beyond reliance on hardware emulation alone. While hardware emulation remains valuable, its complexity and performance limitations make it less suitable for early-stage and iterative verification.

The Vitis Unified Software Platform provides a set of complementary simulation and verification flows that address these challenges. Functional simulation, XSIM-based subsystem simulation, and hardware-in-the-loop verification together form a scalable and efficient system-level verification strategy for Versal designs.

By applying these flows progressively, you can reduce risk, improve confidence, and accelerate the path from algorithm development to hardware deployment.

Resources

If you would like more information on the techniques mentioned in this article, the following resources will help.

UG1701 VFS Documentation: Vitis Functional Simulation Overview, Embedded Design Development Using Vitis User Guide (UG1701), AMD Technical Information Portal (https://docs.amd.com/r/en-US/ug1701-vitisaccelerated-embedded/Vitis-Functional-Simulation-Overview)

Vitis-Tutorials VFS Examples: Vitis-Tutorials/Vitis_System_Design/Feature_Tutorials/01-Vitis_Functional_Simulation at 2025.2, Xilinx/Vitis-Tutorials (https://github.com/Xilinx/Vitis-Tutorials/tree/2025.2/Vitis_System_Design/Feature_Tutorials/01-Vitis_Functional_Simulation)

Vitis Functional Simulation Early Access Lounge (for a new C++ Flow; AMD is aiming for this to be in production in 2026.1): Vitis Functional Simulation Early Access Secure Site (https://account.amd.com/en/member/vitisfunctional-simulation.html)

Hardware in the Loop Early Access Lounge: Vitis Hardware-in-the-Loop Early Access Secure Site (https://account.amd.com/en/member/vitishardware-in-the-loop.html)

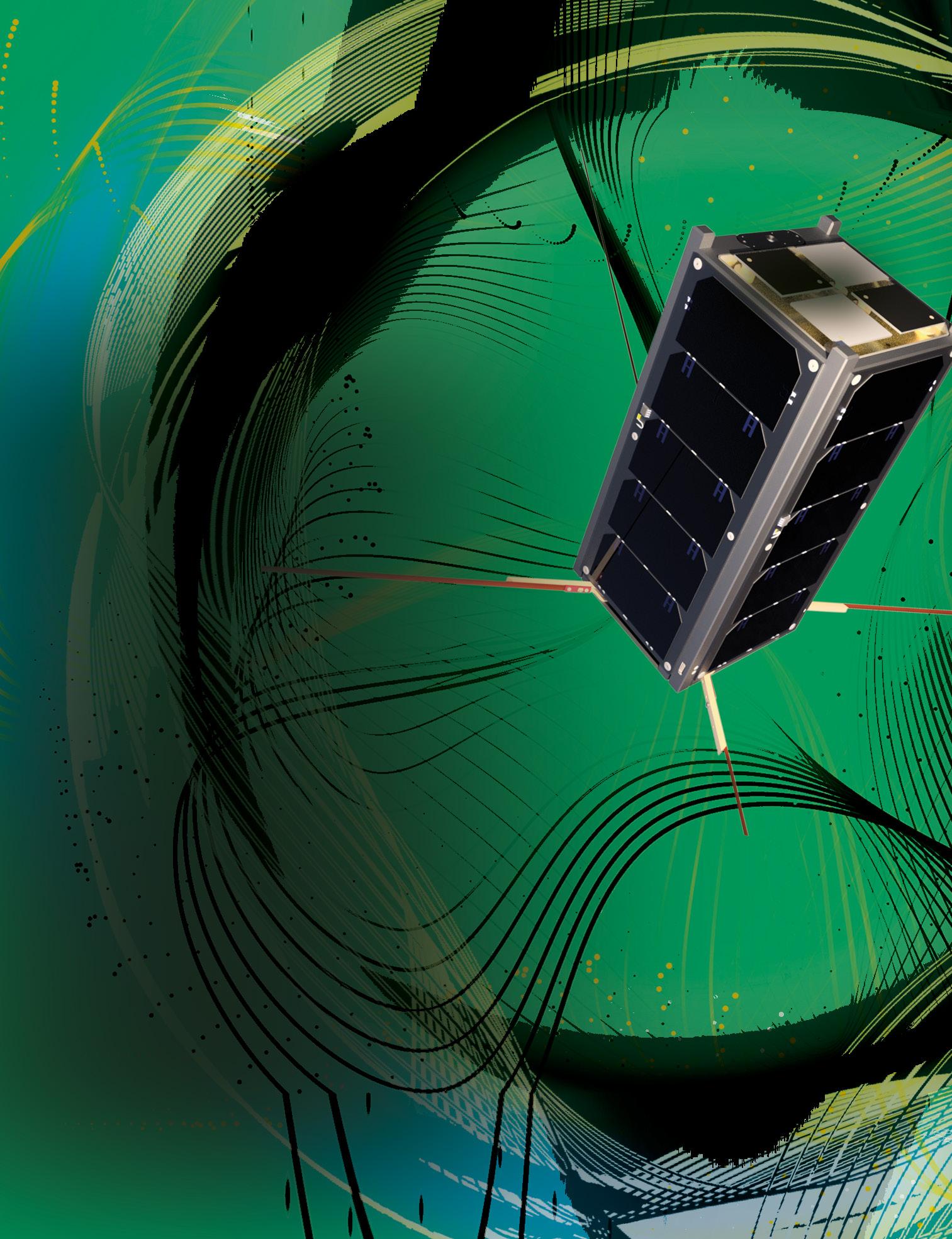

Leveraging FPGAs for deploying AI in small satellites

Dr James Murphy, Founder & CTO, Setanta Space Systems

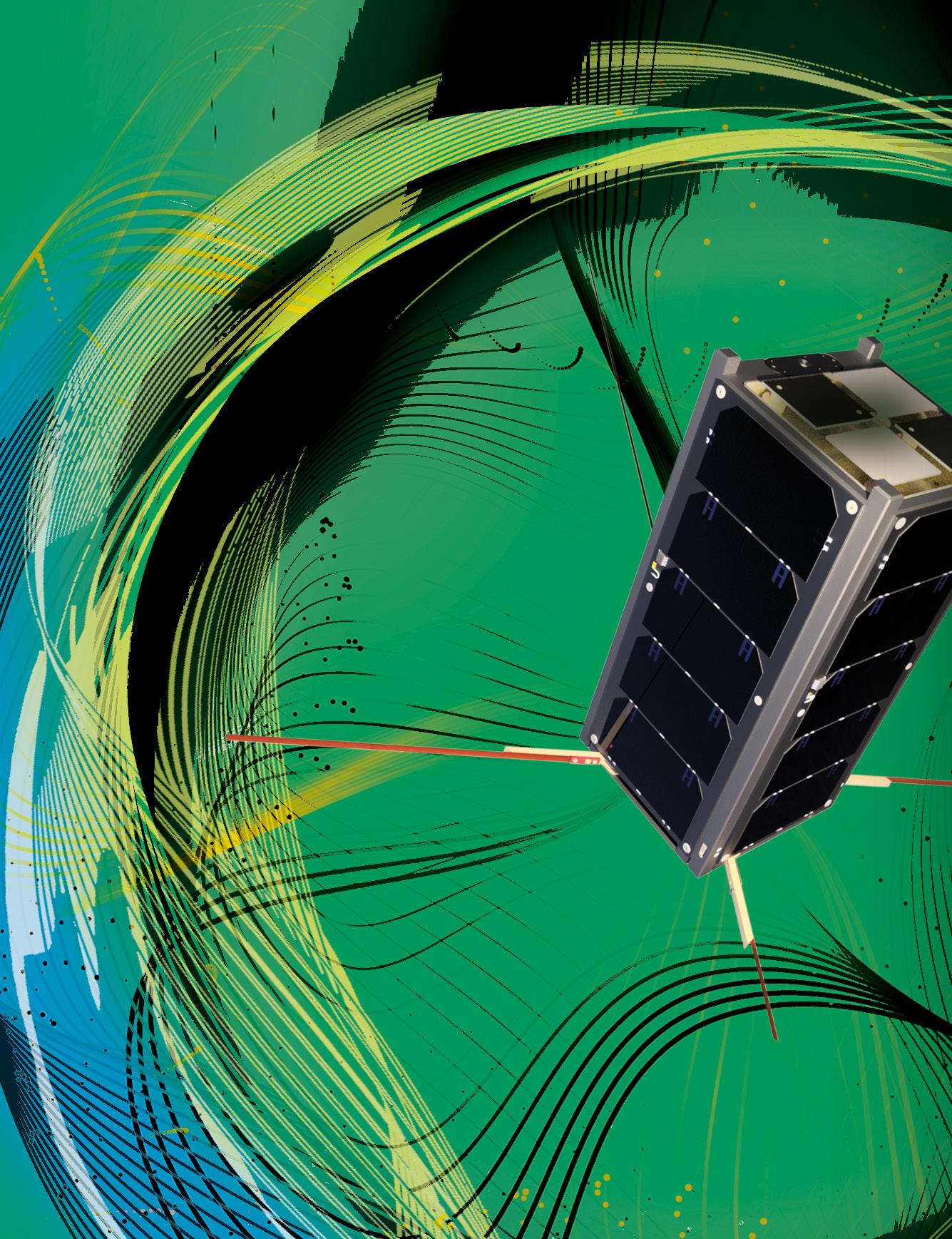

Space is nothing new to FPGAs. They’ve become crucial for high-speed communications, payload management, sensor data analysis, and critical control systems in satellites, rovers, and probes. As of February 2026, there were ~14,400 active satellites in space¹, and that’s predicted to grow to 100,000 by 2030², driven mostly by the deployment of large small-satellite constellations such as Starlink and OneWeb.

This in turn has prompted the next big challenge for FPGAs: using AI to detect and manage anomalies like hardware malfunctions, software errors, and environmental impacts in small satellites to enable, improve and enhance real-time decision-making.

This automated approach is crucial because while satellites generate substantial amounts of data, only a fraction makes it to ground due to downlink bandwidth restrictions. They’re also limited when it comes to autonomy. If an error occurs on a satellite, it will either recover from it using pre-established procedures, or go into a safe state while waiting for an on-ground operator to analyze it. The trade-off here is the delay involved in the downlink cycle and the limited information that can be provided due to the download budget. This can potentially lead to the loss of the satellite and the premature end of the mission.

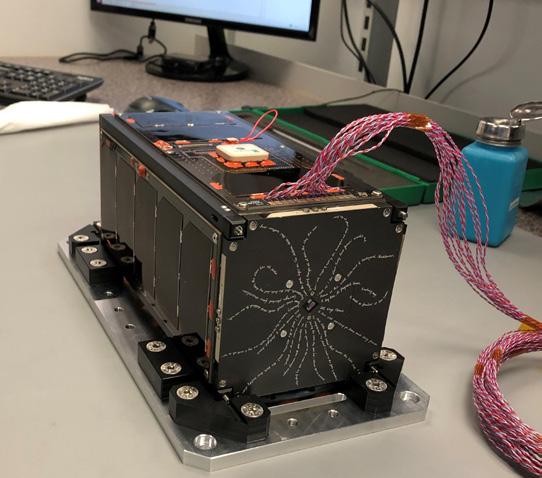

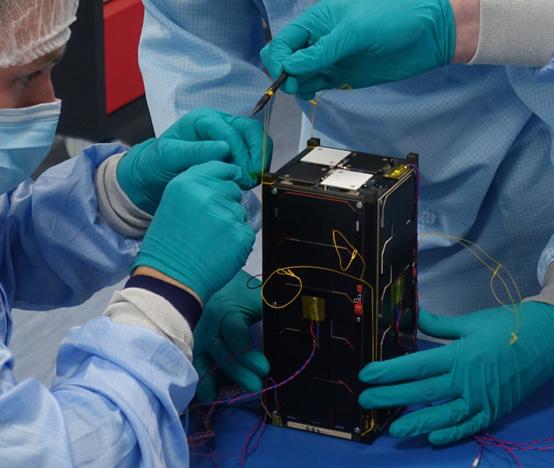

To understand this further, an ESA-funded project was undertaken in partnership with University College Dublin (UCD), which built Educational Irish Research Satellite-1 (EIRSAT-1), Ireland’s first domestically produced satellite. This was based around a CubeSat, one of the smallest satellites you’re going to see in space. These follow the CubeSat architecture where 1U is 10cm x 10cm x 10cm. A 2U version was used in the EIRSAT-1 mission, but was still very small.

The satellite launched on a SpaceX Falcon 9 in December 2023 and de-orbited in September 2025 after fulfilling its mission parameters. Our work didn’t fly on EIRSAT-1, but we treated the project as a pseudo mission where we deployed AI models on-ground, but on hardware that could have flown on the mission, and using a live Flight Dataset from the mission downloaded in real time. That distinction allowed us to explore the constraints of real flight systems without the cost and risk of an actual launch.

Those constraints are severe because even the 2U CubeSats are small, they’re low power with only solar panels for power generation, and the vacuum environment adds thermal management problems. So there are a lot of issues that you encounter when you try to put electronics in space. On top of that, the worst thing about space is its radiation environment. That’s one of the reasons we looked at FPGAs for this project because you can make FPGAs resilient in those environments, whereas you can’t with other edge devices like GPUs.

Understanding the data

Every satellite, no matter how small, produces telemetry in the form of streams of data describing temperatures, voltages, currents, reaction wheel speeds, payload states, and countless other parameters.

EIRSAT-1 was no exception. It carried three payloads:

the Gamma-ray Module (GMOD), designed to detect and analyze gamma-ray bursts; the ENBIO Module (EMOD), which tested advanced thermal management coatings on the exterior of the spacecraft; and the Wave-Based Control (WBC) experiment, a UCD-developed software platform that used Earth’s magnetic field to orient the CubeSat.

Beyond the payloads, the satellite also had a full suite of telemetry, command, and data-handling systems which enabled communication with ground stations and transmission of scientific data back to Earth. Ground analysis of the data the satellite system provided was conducted for both nominal spacecraft operations and the onboard payloads.

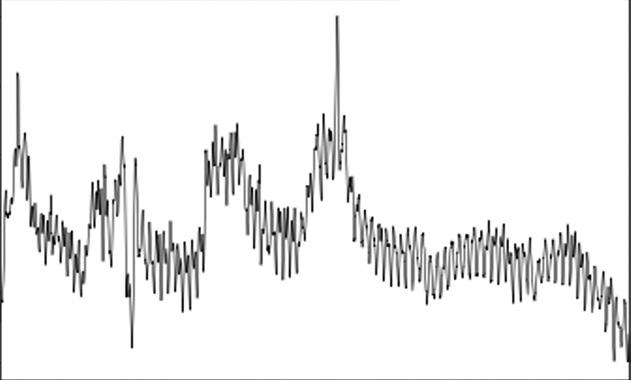

This raw data was down-sampled from the satellite as hexadecimal bytes and a data dictionary broke the data stream into individual rows and subsequent features. Each row of features was then stored in a CSV file with a Row ID and a time stamp, resulting in a time-series dataset. This was the live Flight Dataset we could work with on the ground, and we could view it as a concatenation of a series of channels translated into a 2D image (Figure 2).
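The decoding step can be sketched in a few lines of Python. The data dictionary below is entirely hypothetical (the field names, widths, and byte order are invented for illustration and are not the EIRSAT-1 definitions), but it shows the general pattern of slicing a hexadecimal byte stream into fixed-size rows and writing them out with a Row ID and time stamp.

```python
import csv
import io
import struct

# Hypothetical data dictionary: (feature name, struct format code).
DATA_DICTIONARY = [
    ("battery_voltage_mv", "H"),  # unsigned 16-bit
    ("battery_current_ma", "h"),  # signed 16-bit
    ("obc_temperature_c", "b"),   # signed 8-bit
    ("mode", "B"),                # unsigned 8-bit
]
FEATURES = [name for name, _ in DATA_DICTIONARY]
ROW_FORMAT = ">" + "".join(code for _, code in DATA_DICTIONARY)  # big-endian
ROW_SIZE = struct.calcsize(ROW_FORMAT)

def decode_rows(hex_stream):
    """Yield one dict of features per complete row in the hex stream."""
    raw = bytes.fromhex(hex_stream)
    usable = len(raw) - len(raw) % ROW_SIZE  # ignore a trailing partial row
    for offset in range(0, usable, ROW_SIZE):
        values = struct.unpack_from(ROW_FORMAT, raw, offset)
        yield dict(zip(FEATURES, values))

def to_csv(hex_stream, start_time_s=0, period_s=1):
    """Return CSV text with a row ID and time stamp ahead of the features."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=["row_id", "timestamp_s"] + FEATURES)
    writer.writeheader()
    for row_id, row in enumerate(decode_rows(hex_stream)):
        stamp = start_time_s + row_id * period_s
        writer.writerow({"row_id": row_id, "timestamp_s": stamp, **row})
    return out.getvalue()

print(to_csv("1F40FF9C19011F3EFF9A1A01"))
```

Stacking the decoded feature columns over time gives the kind of 2D array that can be viewed as an image of channels against time.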

The data in each dataset is divided into four categories: Housekeeping, Telemetry-Enhanced Diagnostics (TED), Attitude Control (ADCS), and Power.

In our case, we were looking primarily at the Housekeeping telemetry, which provides core health-and-status data, and our experiment was to develop an AI model for anomaly detection in that category.

Building an unsupervised anomaly detection model

We took an unsupervised approach and trained the AI model using the EIRSAT-1 dataset as the data source. This actually consisted of three subdatasets because every satellite sent to space has to go through a qualification campaign to make sure that it works. So alongside the live Flight Dataset, we also had the Flight Test Dataset and the Thermal Vacuum Test Dataset. The satellite suffered some failures during qualification, which is quite common given the advanced technologies involved, and we had some labeled data we could use to quantify how well our model was performing.

We initially trained the AI model on the labeled artificial anomalies from the Flight Test dataset, before validating the trained model on the unseen Thermal Vacuum Test dataset in order to classify anomalies. We could then use the metrics and insights gained to deploy a model and threshold to the live Flight Dataset.
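The train, validate, then threshold workflow can be illustrated with a deliberately simple stand-in. The sketch below does not reproduce our TensorFlow model: it swaps in a per-feature z-score detector, uses synthetic data in place of the test-campaign datasets, and picks an arbitrary 99.5th-percentile threshold, purely to show how labeled validation data lets you quantify an otherwise unsupervised detector.

```python
import numpy as np

class ZScoreDetector:
    """Stand-in anomaly scorer: mean squared z-score across features."""

    def fit(self, X):
        self.mean_ = X.mean(axis=0)
        self.std_ = X.std(axis=0) + 1e-9  # avoid division by zero
        return self

    def score(self, X):
        z = (X - self.mean_) / self.std_
        return np.mean(z ** 2, axis=1)

def precision_recall(flags, labels):
    """Precision and recall of boolean flags against boolean labels."""
    true_pos = np.sum(flags & labels)
    precision = true_pos / max(np.sum(flags), 1)
    recall = true_pos / max(np.sum(labels), 1)
    return float(precision), float(recall)

rng = np.random.default_rng(42)

# Synthetic stand-ins for the training and validation telemetry.
train = rng.normal(0.0, 1.0, size=(2000, 6))
valid = rng.normal(0.0, 1.0, size=(500, 6))
labels = np.zeros(500, dtype=bool)
labels[200:220] = True
valid[labels] += 6.0  # injected artificial anomalies

detector = ZScoreDetector().fit(train)
threshold = np.percentile(detector.score(train), 99.5)  # assumed setting

flags = detector.score(valid) > threshold
precision, recall = precision_recall(flags, labels)
print(f"threshold={threshold:.2f} precision={precision:.2f} recall={recall:.2f}")
```

Once the threshold has been tuned against labeled validation data in this way, the same detector and threshold can be applied unchanged to unlabeled live data.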


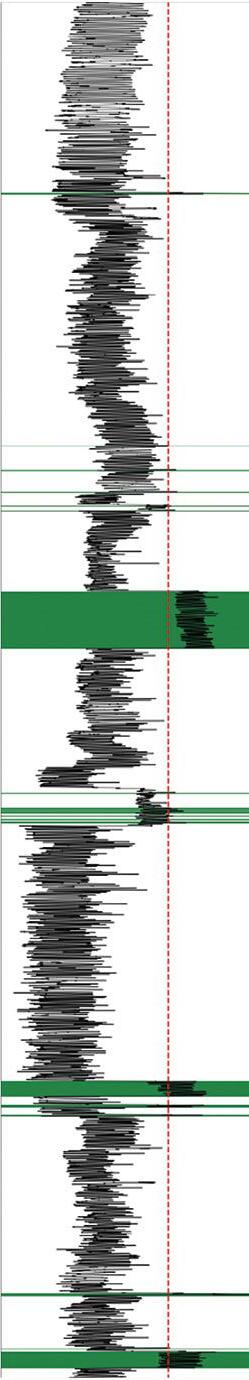

At this stage, we had no idea if something was going to happen or not. If it did, we didn’t know if it was an anomaly or not since we were looking at unsupervised learning, so any highlighted regions were called Out of Ordinary Operations (OOO), which we referred to as “Uh-ohs”. Interestingly, we successfully detected all of the anomalies plus one event that hadn’t previously been picked up, which was an extremely good result (Figure 3).

We contacted the team and worked out that the extra event was payloads powering on and off. So while it was an OOO rather than an anomaly, its detection helped to validate our model.
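Turning per-sample anomaly scores into highlighted regions is a small bookkeeping step, sketched below. The function name, the `min_length` filter, and the example scores are illustrative assumptions rather than a description of our tooling.

```python
def ooo_regions(scores, threshold, min_length=1):
    """Return (start, end) index pairs, end exclusive, where score > threshold.

    Runs shorter than min_length samples are discarded.
    """
    regions = []
    start = None
    for i, s in enumerate(scores):
        if s > threshold and start is None:
            start = i  # a new region opens
        elif s <= threshold and start is not None:
            if i - start >= min_length:
                regions.append((start, i))
            start = None  # the region closes
    if start is not None and len(scores) - start >= min_length:
        regions.append((start, len(scores)))  # region runs to the end
    return regions

scores = [0, 0, 5, 6, 0, 7, 0, 0, 8, 9, 9]
print(ooo_regions(scores, threshold=1))
print(ooo_regions(scores, threshold=1, min_length=2))
```

Requiring a minimum region length is one simple way to suppress single-sample spikes so that only sustained departures from normal behavior are passed to an operator for review.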

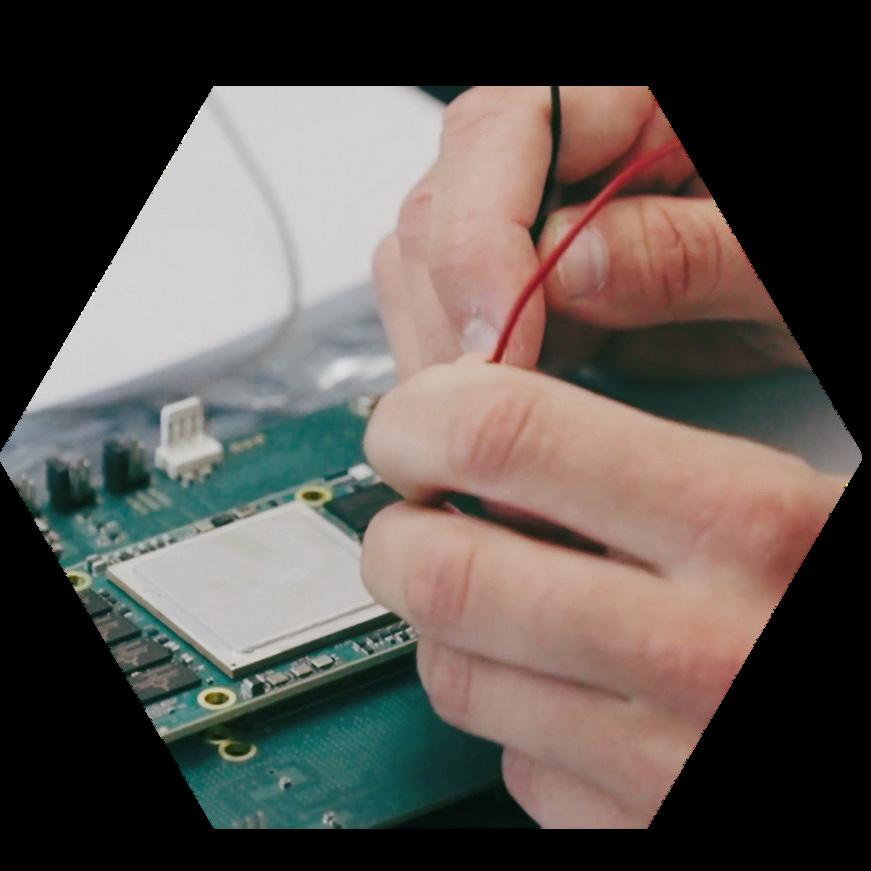

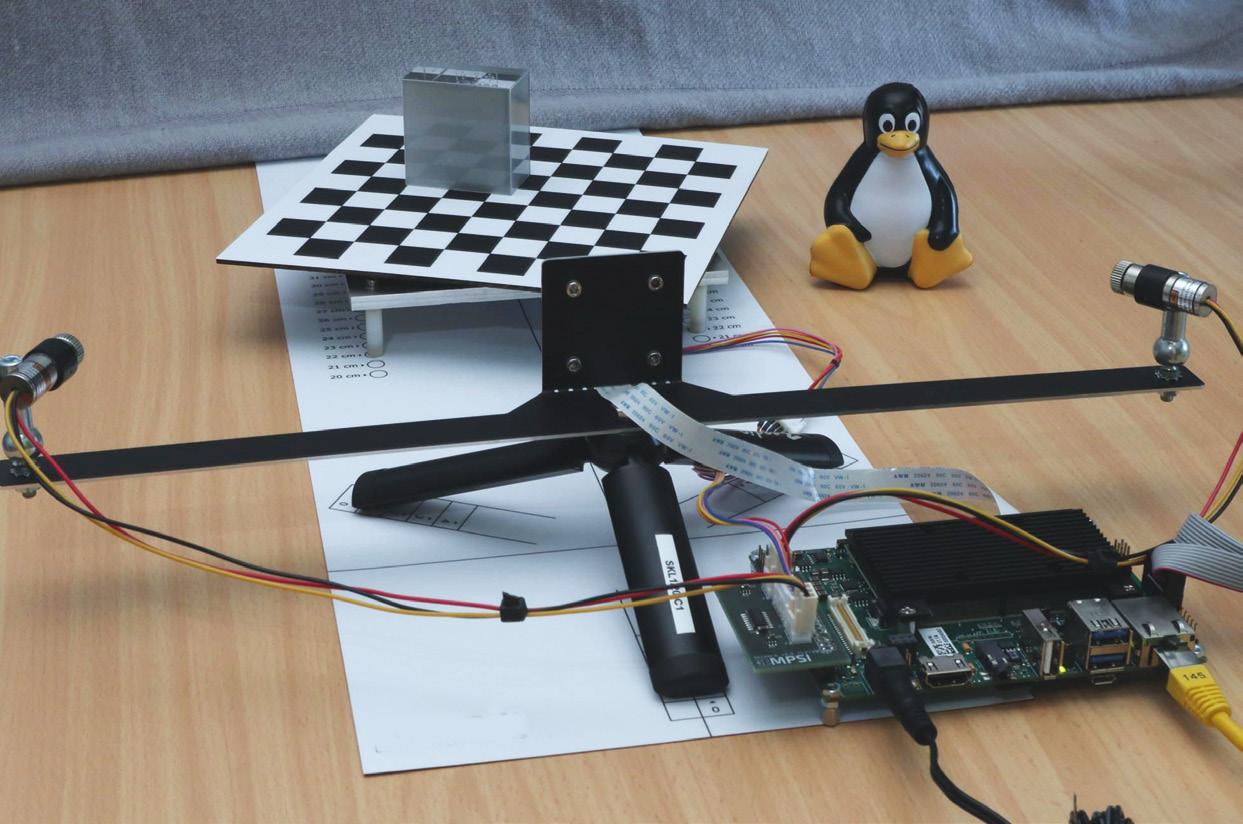

Deploying the model on FPGA hardware

We began our FPGA development on an AMD KCU105 Evaluation Kit and ended up moving to an AMD Zynq UltraScale+ MPSoC ZCU106 platform, which offered a more convenient and flexible pipeline for our deployment workflow.

The process required converting the original TensorFlow models into TFLite format, and then transforming those TFLite models into C header files suitable for use with TFLite for Microcontrollers. Along the way, issues within the TFLite repository required a complete refactoring of the build system. The resulting flow consists of two main stages: the first builds the TFLite libraries for the target microcontroller, and the second uses a separate makefile to compile the application itself against those precompiled libraries.
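The last conversion step, from a TFLite flatbuffer to a C header, amounts to dumping the bytes as an array (the same job `xxd -i` is often used for). A minimal sketch is shown below; the array name and layout are assumptions, the alignment attribute normally used for model arrays is omitted for brevity, and the eight bytes passed in are a placeholder rather than a real model. In TensorFlow, the flatbuffer bytes would typically come from `tf.lite.TFLiteConverter.from_keras_model(model).convert()`.

```python
def bytes_to_c_header(data, array_name="g_model", per_line=12):
    """Render a bytes object as a C header containing a const byte array."""
    guard = f"{array_name.upper()}_H"
    body = []
    for i in range(0, len(data), per_line):
        chunk = data[i:i + per_line]
        body.append("  " + ", ".join(f"0x{b:02x}" for b in chunk) + ",")
    return "\n".join([
        f"#ifndef {guard}",
        f"#define {guard}",
        "",
        f"const unsigned char {array_name}[] = {{",
        *body,
        "};",
        f"const unsigned int {array_name}_len = {len(data)};",
        "",
        f"#endif  // {guard}",
        "",
    ])

# Placeholder bytes only; bytes 4..7 spell the TFLite file identifier "TFL3".
placeholder = bytes([0x1C, 0x00, 0x00, 0x00, 0x54, 0x46, 0x4C, 0x33])
header = bytes_to_c_header(placeholder)
print(header)
```

The generated header can then be compiled into the application in the second build stage, alongside the precompiled TFLite for Microcontrollers libraries.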

Despite the engineering hurdles, the performance results were encouraging. We benchmarked the FPGA implementation against a number of edge devices operating within a comparable 5-watt power envelope. That included the NVIDIA Jetson Orin Nano SoM, the Jetson Orin NX which was just above the envelope, the Intel Movidius Myriad X Vision Processing Unit (VPU), and the Raspberry Pi 4. All platforms achieved the required inference rate of 10–100 Hz and, although the FPGA landed toward the lower end of the performance range, it still comfortably met the demands of onboard telemetry workloads.
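For readers who want to run a similar comparison, an inference-rate measurement can be as simple as the sketch below. The `dummy_infer` function is a placeholder for a real model call, and the run counts and the 10 Hz lower bound used in the check are assumptions taken from the requirement quoted above, not our benchmarking harness.

```python
import time

def inference_rate_hz(infer, sample, runs=200, warmup=20):
    """Time repeated calls to infer(sample) and return calls per second."""
    for _ in range(warmup):  # let caches and lazy initialization settle
        infer(sample)
    start = time.perf_counter()
    for _ in range(runs):
        infer(sample)
    elapsed = time.perf_counter() - start
    return runs / elapsed

def meets_requirement(rate_hz, required_hz=10.0):
    """True when the measured rate reaches the required inference rate."""
    return rate_hz >= required_hz

def dummy_infer(sample):
    """Placeholder workload standing in for a model invocation."""
    return sum(v * v for v in sample)

rate = inference_rate_hz(dummy_infer, [0.1] * 64)
print(f"{rate:.0f} Hz, meets 10 Hz requirement: {meets_requirement(rate)}")
```

On an embedded target the same structure applies, with the timer replaced by whatever cycle counter or hardware timer the platform provides.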

The really interesting thing here is that you’re not losing that much performance by going from the smaller edge devices to an FPGA, but you are gaining the resilience that FPGAs offer for space missions. That trade-off of a slightly lower performance for dramatically higher resilience is what makes FPGAs so attractive for spaceborne AI.

Autonomy in high-radiation environments

One of the driving factors is that if you’re deploying in low Earth orbit (LEO), most of the edge devices will last three to five years. The NVIDIA Orin NX has been radiation-tested and the results say that it will fail within three years in LEO. The Myriad X has also been radiation-tested and it’s a little bit more resilient and will be around for five to ten years in LEO. And while Raspberry Pis are used in space, they’re susceptible to Single Event Upsets (SEUs) where memory becomes corrupted or the processor freezes due to radiation exposure, and are confined to auxiliary operations which can be briefly interrupted rather than critical operations.

All of that is in LEO and once you start pushing the envelope and leave LEO, you see substantially more radiation, and all of the edge devices will fail, except the FPGAs. That’s an issue because the push in the space sector now is autonomy in high radiation environments as well as low radiation environments.

A good example is NASA’s Perseverance Rover which, together with its Ingenuity Mars Helicopter, landed on Mars in February 2021. Ingenuity, which was actually a drone not a full-size helicopter, was only meant to be a technology demonstration that would make five flights in 30 days. It ended up making 72 flights over three years. It was the first aircraft to achieve powered, controlled flight on another planet and in many ways it also redefined Mars exploration. It demonstrated that aerial rather than ground navigation could survey vast areas that would take wheeled vehicles months or even years to cover.

Both the Perseverance Rover and Ingenuity used radiation-hardened AMD FPGAs for tasks like image detection, matching, and rectification; range and velocity measurements; operational control and system management; and flight control and power conservation.

That’s why it’s really interesting to push for this autonomy, and when we’re looking at deep space missions, it becomes even more necessary. So I think it’s very promising for FPGAs in the space sector and always has been.

Our work with EIRSAT-1 telemetry demonstrated that unsupervised anomaly detection can be deployed on FPGA hardware without sacrificing mission constraints. It also showed that AI can detect both genuine anomalies and operational events like payload power cycles, offering valuable insights into spacecraft behavior.

Most importantly, it showed that the combination of AI and FPGAs is not just feasible, it’s promising. As missions push beyond LEO and into harsher environments, that combination may become essential.

We’ve made our research dataset publicly available at https://zenodo.org/records/11551556, so if you want to base your own models on satellite telemetry, please go ahead. It’s an open-source system, and if anyone wants to try and match us or beat us, our results are also available. Please do so because we want to make this a much better system for everybody.

1. CelesTrak Active Satellites tracker, https://celestrak.org/NORAD/elements/table.php?GROUP=active&FORMAT=tle, accessed February 2026

2. ‘Space Debris: Is it a Crisis’, The European Space Agency, https://www.esa.int/ESA_Multimedia/Videos/2025/04/Space_Debris_Is_it_a_Crisis, accessed February 2026

Cover and article artwork includes rendering of EIRSAT-1, © ESA; EIRSAT-1 team

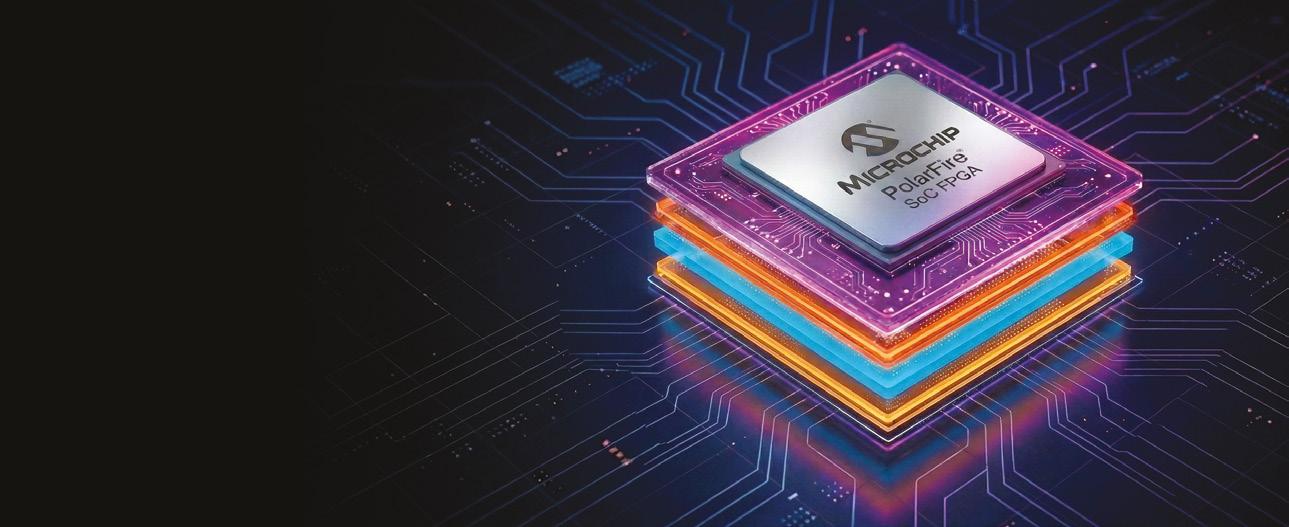

PolarFire® FPGA Solution Stacks

Build Faster With Less Risk

From Prototype to Deployment

Our solution stacks combine secure, low-power FPGA and SoC platforms with evaluation kits, development tools, IP cores and reference designs so you can simplify the design process, enhance security and move to market faster with less risk.

Solution Stacks for Edge Applications

Ethernet sensor bridge: Low-power sensor-bridging platform for edge-to-cloud AI using NVIDIA Jetson AGX Orin and IGX Orin developer platforms.

Smart robotics: Boost efficiency with secure solutions that can save up to 50% energy and seamlessly integrate AI/ML.

Medical imaging: Deliver cutting edge ultrasound, MRI and CT with enhanced image quality, security and diagnostic accuracy.

Smart embedded vision: Deliver 4K resolutions with low-power 12 Gbps SerDes and a variety of sensor, display and transport interfaces like SDI, CoaXPress, HDMI, MIPI, SLVS-EC™ and more.

Motor control: Flexible, low-power motor control with deterministic, synchronous performance for multi-axis and high-RPM control.

Edge communications: Portfolio includes DSP, SerDes, networking, MPUs and analog blocks to address power, size, cost and security needs.

The Cyber Resilience Act and what it means for FPGAs

The industry today is highly focused on the Cyber Resilience Act (CRA). But what exactly does it mean for FPGAs and FPGA design implementation? Why is it important to all designers, and what does everyone need to do to be compliant?

From 11 December 2027, all Products with Digital Elements (PwDEs), a category that includes both hardware and software, placed on the European Union (EU) market must comply with the CRA if they are directly or indirectly connected to a network. A digital product means both the final product and the components used to build it. “Placed on the market” applies to every product sold, not just to the first version of a product that you place or sell. So, if you sell a product on 10 December 2027, it does not need to be compliant under the regulations in place on that date. A day later, the CRA will be enforceable and the product must comply.

The Act applies to all PwDEs and has been defined to help protect critical infrastructure within the EU. It does not apply to military or national security products, or to medical, civil aviation or automotive products, which already have their own regulations and standards covering the same level of protection. It does, however, apply if a product is intended for a dual-use application.
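As a rough illustration, the scoping rules described above can be written as a first-pass screening function. This is only a sketch of the article's summary, not of the Act itself: the function name `cra_applies`, the sector labels and the boolean inputs are hypothetical, and a real scoping decision needs the legal text.

```python
from datetime import date

# Date from which the article says the CRA becomes enforceable.
CRA_ENFORCEMENT_DATE = date(2027, 12, 11)

# Sectors the article lists as already covered by their own regulations.
# The label strings are hypothetical, chosen only for this sketch.
EXEMPT_SECTORS = {
    "military",
    "national_security",
    "medical",
    "civil_aviation",
    "automotive",
}


def cra_applies(placed_on_market: date, networked: bool,
                sector: str, dual_use: bool = False) -> bool:
    """First-pass screen only; not a substitute for reading the Act."""
    if placed_on_market < CRA_ENFORCEMENT_DATE:
        return False  # sold before the enforcement date
    if not networked:
        return False  # no direct or indirect network connection
    if sector in EXEMPT_SECTORS and not dual_use:
        return False  # covered by sector-specific regulation
    return True
```

For example, `cra_applies(date(2027, 12, 10), True, "industrial")` is false, while the same product placed on the market one day later returns true.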

To be compliant with the Act, a product must meet the set of essential cybersecurity requirements laid out in Annex I of the CRA, which can be found online. Those requirements are split into two parts: first, the requirements that the product itself must conform to, and second, the vulnerability handling requirements that need to be carried out on the device. The Act also addresses the continuous support that must be applied to the product once it is in the market and the penalties that could be imposed if the essential requirements are not met.

In practical terms, the implication is that security can no longer be treated as a checklist item at the end of the design and development process. In its place, the Act mandates a secure-by-design methodology. Companies must show that risks have been identified, attack surfaces are limited, interfaces have been assessed and protected, and that FPGAs can be supported on an ongoing and continuous basis by, for example, updating the logic in the field. This requires a structured approach and an understanding of how components like FPGAs meet these obligations.

That means including a secure development lifecycle, clear documentation before and during development, and early threat analysis to demonstrate compliance.

This then informs the design-focused considerations that follow and the rigorous assessment that needs to be carried out to allow products to be placed on the market.

The implications for design

Fundamentally, the design process to develop a product needs to change from what the industry has been using up to the present day. It is no longer a process that takes a set of marketing requirements and from that, a block diagram is created to support the desired set of features.

From the outset, a Threat and Risk Assessment (TARA) must be created during this initial development phase to address the Essential Requirements. Exposed interfaces and features of the design must be examined to determine how they are protected and what threats could be encountered by the device.

Product classes

This applies to the product being developed and also the components that could potentially be used within the product. To support this, the Act defines classes of products at the component level and for horizontal markets from a default class to Class I, Class II, and Critical Class. It is envisaged that most products will fall into the default class where the product developer will do a self-assessment against the Essential Requirements before it is made available on the market.

For Class I products, a similar self-assessment is required but technical standards are being developed to support this process. Class II products have a more stringent standard to adhere to and must be assessed by an external third party. Critical Class is for critical products and carries the burden of requiring a higher level of assurance to an EU approved scheme. In Annex III of the Act a set of important products are defined and assigned to either Class I or Class II. Item 15 of the Class I set specifies: “Application specific integrated circuits (ASIC) and field-programmable gate arrays (FPGA) with security-related functionalities.”

Since the majority of FPGAs available today contain some level of security, they will fall into this class of product. A European standard, prEN 50767, is being established to support the development and analysis of the security features of these products, which the end user’s application can then leverage to address the risks within their own products.

It should be noted that classification is for the product being placed on the market. Since an FPGA is placed on the market as an individual product it must comply with its own regulations. A product needs to conform only to the class defined. Thus, a default product could contain multiple components each with different classes, which could be higher, but the use of higher-class components in the product does not increase the class of the product they are used within.
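The class-to-assessment mapping described above, and the point that component classes do not raise the class of the product, can be captured in a small lookup. This is a sketch under stated assumptions: the enum `CraClass`, the function `assessment_route` and the route descriptions are paraphrased from this article for illustration and are not wording from the Act.

```python
from enum import Enum


class CraClass(Enum):
    DEFAULT = "default"
    CLASS_I = "Class I"
    CLASS_II = "Class II"
    CRITICAL = "Critical"


# Assessment routes paraphrased from the article, not quoted from the Act.
ASSESSMENT_ROUTE = {
    CraClass.DEFAULT: "self-assessment against the Essential Requirements",
    CraClass.CLASS_I: "self-assessment supported by technical standards",
    CraClass.CLASS_II: "assessment by an external third party",
    CraClass.CRITICAL: "higher assurance under an EU-approved scheme",
}


def assessment_route(product_class, component_classes=()):
    """Return the route for the product as it is placed on the market.

    component_classes is accepted only to make the point explicit: the
    classes of the parts inside do not change the route for the product.
    """
    return ASSESSMENT_ROUTE[product_class]
```

A default-class product built around a Class I FPGA therefore still resolves to the default route: `assessment_route(CraClass.DEFAULT, [CraClass.CLASS_I])`.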

How is this applied to FPGAs?

Not all FPGAs are the same. There are two different technologies, SRAM and Flash, and they provide a different fundamental base. On top of this, there is no standard set of cryptographic features. The prEN 50767 standard does not specify what these features are, only that they need to support the Essential Requirements.

How this is done is down to the technology and the implemented elements. The TARA for each family will describe how the requirements are addressed within the device, but it will be down to the user to determine if the methods described within the device’s documentation address the Essential Requirements that need to be met within their product.

Microchip FPGAs

Microchip FPGAs and SoCs provide a rich set of cryptographic features within the Igloo2 FPGA, SmartFusion2 FPGA, PolarFire FPGA and PolarFire SoC families. Firstly, as Flash-based technologies, the devices are secure by design since the configuration cannot be read out of the non-volatile families.

The programming file is encrypted by default, but the user can add their own keys which are stored securely in a hashed format. Each device has a unique ID determined through a Physically Unclonable Function (PUF) which can also be used to generate private keys that can be used in secure communications. The PolarFire families also have the addition of a highly versatile cryptographic coprocessor that can be utilized in a user’s application.

Finally, although not required or described within the FPGA standard, Microchip FPGAs and SoCs include several anti-tamper features that can be used to secure interfaces, protect against physical attacks and return a device to its default state if required. This makes the families compliant with some of the requirements defined for Class II devices. A simplified security model of PolarFire can be seen in figure 1.

What is the Cyber Resilience Act trying to achieve?

The properties of a product that the Essential Requirements are addressing are:

a) Security by design

b) No known exploitable vulnerabilities

c) Secure by default configuration

d) Security updates

e) Access control

f) Confidentiality protection

g) Data minimization

h) Availability of essential and basic functions

i) Minimizing negative impact

j) Limiting the attack surface

k) Mitigation of incidents

l) Recording and monitoring

m) Deletion of data and settings

When it comes to the vulnerability handling, the Essential Requirements relate to:

a) Identifying the components and their vulnerabilities

b) Addressing vulnerabilities

c) Regular testing

d) Publishing addressed vulnerabilities

e) Having a coordinated vulnerability disclosure policy

f) Secure distribution of updates

g) Dissemination of updates

To achieve this, the product supplier needs to provide both technical and user documentation to describe the product. The technical documentation should include the process used to create the product, its cybersecurity risks, the product’s support period, the tests that were performed and a list of all its components.

Technical documentation must be made available to regulators on request and does not need to be released publicly. The user documentation should include the manufacturer’s details, the unique identifier for the product, its intended purpose, the support period, user guidance on its use and documentation of its components.
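One way to keep track of the two documentation sets is a simple completeness check. The sketch below assumes hypothetical field names derived from the items listed above; it is an illustrative checklist, not a format defined by the CRA.

```python
# Item names are hypothetical labels for the contents listed in the article.
TECHNICAL_DOC_ITEMS = (
    "development_process",
    "cybersecurity_risks",
    "support_period",
    "tests_performed",
    "component_list",
)

USER_DOC_ITEMS = (
    "manufacturer_details",
    "unique_identifier",
    "intended_purpose",
    "support_period",
    "usage_guidance",
    "component_documentation",
)


def missing_items(provided: dict, required: tuple) -> list:
    """Return the required items that are absent or empty in `provided`."""
    return [item for item in required if not provided.get(item)]
```

Calling `missing_items(draft, TECHNICAL_DOC_ITEMS)` on a partly completed draft returns the items still to be written, in the order listed.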

What is your next step?

So, in essence, what should you, as a product manufacturer, do? First, you need to put in place a process that defines a secure development lifecycle and establish a cybersecurity culture within your company. For your product, you need to identify which product category and class it falls within and, if necessary, identify any harmonized standards that are applicable.

Everything needs to be documented through the product’s full lifecycle, a minimum of five years, and these documents must be kept for at least ten years. Your product must be designed to be secure as defined by the Essential Requirements and the installation of your product must be done securely. Once on the market this security must be maintained continuously through a Vulnerability Management process with provision of updates to the product to maintain a state of continuous compliance.

It must be supported for at least the expected lifecycle and any vulnerabilities discovered during this time must be disclosed and addressed by monitoring and managing any cyber incidents. In the event of a vulnerability being exposed and exploited, both the regulators and customers must be informed. By using FPGAs within your product, you will have the assurance of their integrity as a Class I product and have the documentation available to support your own vulnerability analysis.

The CRA introduces many product requirements and changes to the development and lifecycle management. Some of these requirements will be new to many developers and thus have an impact on the new skills and ongoing support required to maintain a product. This will undoubtedly affect the design cycle time, so working with your vendors to leverage the capabilities and documentation they provide will be essential to success.

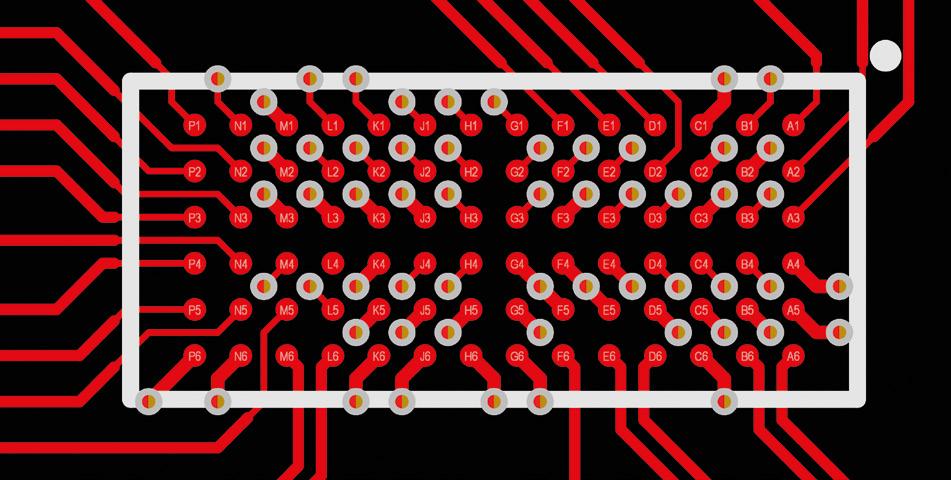

FPGA strategies for PCB layouts: Sparse vs Dense via breakouts

Tomas Chester, Hardware Designer and Founder, Chester Electronic Design

As semiconductor processes advance, FPGA packages have moved from spacious 1.0mm BGA pitches to denser pitches of 0.5mm and below, changing how engineers approach BGA breakout. Tighter pitches reduce routing options, constrain drill sizes, and redefine which via technologies are viable and when High-Density Interconnect (HDI) becomes the only option. Understanding how pitch influences manufacturing choices is now essential for reliable PCB design.

The root cause of these denser BGA grids lies in semiconductor scaling. Photolithography, one of the core microfabrication techniques used in semiconductors, enables the creation of nanometer-scale features of integrated circuits on silicon wafers. As fabrication processes and equipment have improved, smaller minimum feature sizes have been made possible. This has led to the shrinking of silicon usage, while enabling higher transistor density, increased performance, lower power consumption, and better cost efficiency. This can be seen in AMD FPGAs and adaptive SoCs, with the older Spartan 6 FPGAs utilizing a 45nm process, reducing to 28nm in the Spartan 7, and falling to just 7nm in the recently released Versal AI Edge Series Gen 2 adaptive SoCs.

Parallel to these enhancements, demand for part functionality and connectivity has increased, leading to higher I/O pin count requirements. To keep component sizes reasonable, the sacrifice comes in the increased density of pin connections. While 1.0mm pin pitch BGA devices are still common, devices offering higher speed, lower power consumption and lower cost now utilize smaller BGA pitches like 0.65mm, 0.5mm, or even 0.25mm. These are pushing the limits of component fabrication and causing headaches for hardware engineers and PCB designers, because board design and manufacturing need to move on from the standard mechanical processes.

When asking questions about PCB design elements and the differences between FPGA BGA pin pitches, you may have heard the answer “It depends”. This is due to the number of variables involved and can result in an incorrect or even damaging response. To avoid this, it’s better to ground discussions by establishing a few guidelines like those followed in this article which presumes:

1. PCB thickness of 1.57mm

2. IPC Class 2 construction

3. Single lamination construction

4. Moderate high-speed material (Isola FR408HR or equivalent)

You can change these specifications to your own requirements: the key is to be consistent once the guidelines are established.

BGA pitch size and usable area

With a 1.0mm pin pitch BGA, we have ample room between the pads to place a standard 0.3mm mechanical drill along with a healthy 0.15mm annular ring. At this pitch, we aren’t yet fighting the mechanical limitations of our fabrication house.

The aspect ratio, which is the ratio of the board thickness to the drill diameter, remains well within standard limits, and drill wander is a non-issue because the clearances are so wide. With IPC Class 2 construction, even a slight breakout of the drill from the pad is permissible, though it is easily avoided given the significant space available.

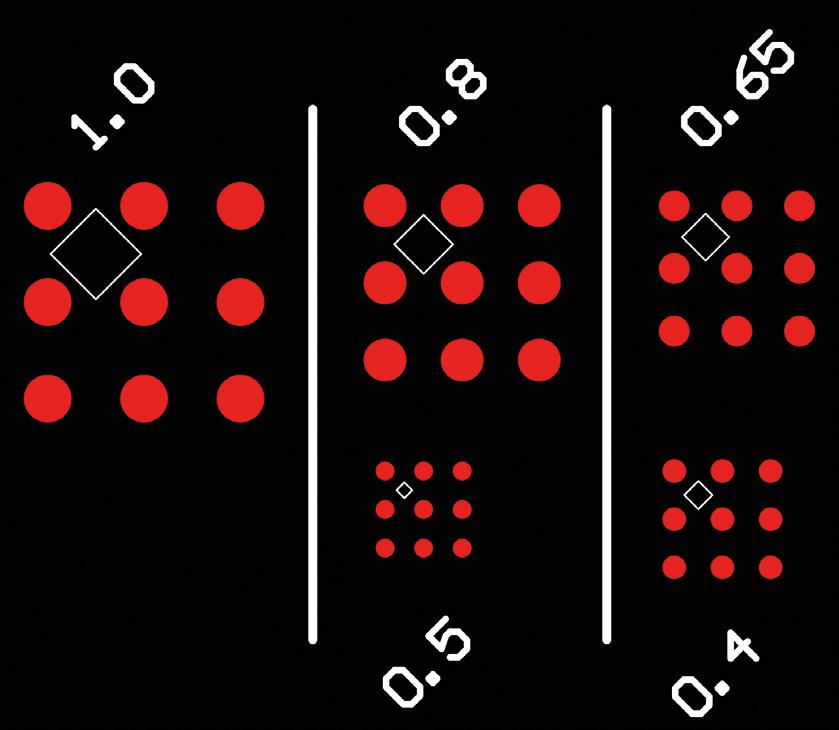

As we shift the BGA pin pitch down to 0.8mm, the design remains manageable with traditional mechanical fabrication processes. We are still able to utilize a 0.2mm or 0.25mm drill while allowing for a 0.1mm annular ring. If we visualize a diamond shape inscribed between the four pads, this represents the safe zone for via placement as shown in figure 1. In both the 1.0mm and 0.8mm BGA patterns, assuming a comfortable 0.125mm clearance to the surrounding copper, this usable area occupies approximately 53% of the grid. We are able to mechanically handle the design and have yet to be truly challenged by the limits of the fabrication process.

The difficulty begins when stepping down to a 0.65mm pitch. To maintain that same 53% usable area, our via pad and annular ring must shrink to roughly 0.345mm. This necessitates a 0.15mm drill bit, which has a ripple effect on the design’s solvability. On a standard 1.57mm board, a 0.15mm drill pushes us toward a 10.5-to-1 aspect ratio.
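The arithmetic behind these figures can be checked with a few lines of code. This sketch reads the 53% figure as the ratio of via pad diameter to BGA pitch, an interpretation that reproduces the 0.345mm value, and it assumes the 0.1mm annular ring from the 0.8mm case carries over. The function names are hypothetical.

```python
BOARD_THICKNESS_MM = 1.57   # guideline stated at the start of the article
USABLE_FRACTION = 0.53      # usable area figure, read here as pad diameter / pitch


def via_pad_diameter(pitch_mm, usable_fraction=USABLE_FRACTION):
    """Largest via pad (drill plus annular ring on both sides) for a pitch."""
    return pitch_mm * usable_fraction


def max_drill(pitch_mm, annular_ring_mm):
    """Drill diameter left once the annular ring is taken off both sides."""
    return via_pad_diameter(pitch_mm) - 2 * annular_ring_mm


def aspect_ratio(drill_mm, board_thickness_mm=BOARD_THICKNESS_MM):
    """Board thickness divided by drill diameter."""
    return board_thickness_mm / drill_mm


if __name__ == "__main__":
    print(f"0.65mm pitch via pad: {via_pad_diameter(0.65):.3f} mm")
    print(f"0.65mm pitch drill:   {max_drill(0.65, 0.10):.3f} mm")
    print(f"0.30mm drill aspect:  {aspect_ratio(0.30):.1f}:1")
    print(f"0.15mm drill aspect:  {aspect_ratio(0.15):.1f}:1")
```

With these assumptions, the 0.65mm pitch leaves just under 0.15mm for the drill, and the aspect ratio roughly doubles compared with the 0.3mm drill used at 1.0mm pitch.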

This not only uses a smaller, more fragile drill bit, but also forces us to worry about potential drill wander. A drill bit that wanders out of its pad target could easily move too close to routing areas on other layers or approach a dangerous proximity to the BGA pads themselves, while in all cases it reduces the reliability of the circuit board.

To avoid this, we need to start playing with the numbers within our design elements. For example, we can reduce our copper clearance to save the mechanical drill, although doing so will drive up manufacturing costs and can lower yield. We can reduce our annular ring to leave our clearance alone, but this will cause more failures when attempting to adhere to the IPC Class 2 breakout requirements. This crossroads is typically where we see the transition from standard mechanical drill fabrication into the world of HDI.

Shrinking down our size once again to a 0.5mm pitch causes non-HDI strategies to break. The pads themselves become so close together that we no longer have the space required to place a mechanically drilled via and its annular ring within the four-pad grid. At this stage, the transition is no longer a luxury, it is a necessity. The design must embrace laser drilling or other HDI techniques such as via-in-pad or multiple laminations with blind / buried via structures or microvias.
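The pitch-by-pitch progression described in this section can be condensed into a simple decision helper. This is a sketch only: the article discusses the 1.0mm, 0.8mm, 0.65mm and 0.5mm cases, so the treatment of pitches that fall between those values is an assumption, and the function name `breakout_strategy` is hypothetical. Your fabricator's capabilities should always override it.

```python
def breakout_strategy(pitch_mm: float) -> str:
    """Map a BGA pitch to the breakout approach discussed in the article.

    Boundaries between the named pitches (1.0, 0.8, 0.65, 0.5 mm) are
    assumptions made for this sketch.
    """
    if pitch_mm >= 0.8:
        return "standard mechanical through-hole vias"
    if pitch_mm > 0.5:
        return "crossroads: tightened mechanical rules or HDI"
    return "HDI required (microvias, via-in-pad, blind/buried vias)"


if __name__ == "__main__":
    for pitch in (1.0, 0.8, 0.65, 0.5):
        print(f"{pitch:.2f} mm -> {breakout_strategy(pitch)}")
```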

The via terminology within design

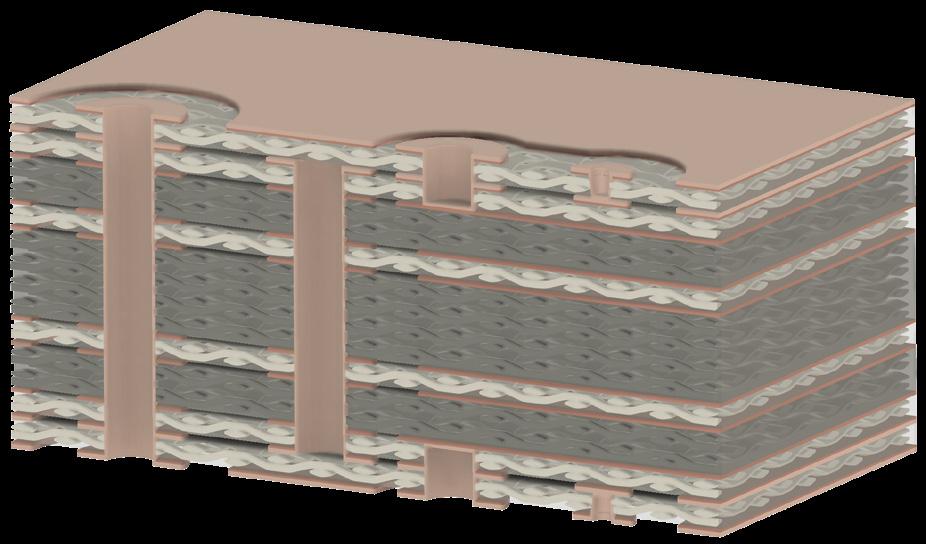

It’s worth detailing at this point the specific via architectures available to a hardware engineer or designer. Figure 2 shows the via structures mentioned so far, including the standard through-hole via, blind and buried vias, and the microvia.

A through-hole via, or through-via, is a structure that spans the entire thickness of the PCB, running from the primary/top layer (Layer 1) to the secondary/bottom layer (Layer N). Because this via traverses every layer in the PCB stackup, it is the primary architecture of concern regarding our mechanical drill aspect ratio which is the key limitation.

In contrast, blind and buried vias do not span the entire thickness of the board. A blind via is accessible or visible from Layer 1 or Layer N, but terminates on an internal layer. Conversely, a buried via is entirely contained within the internal stackup of the board and is not visible or known from simply looking at the outside of the circuit board. Utilizing these structures necessitates a more complex manufacturing flow involving multiple lamination cycles to build the connections. While both offer significant routing freedom between Layer 1 and Layer N, they represent a notable increase in fabrication cost.

The microvia serves as a cornerstone of high-density design, typically defined by a drill aspect ratio of roughly 0.8:1. These vias generally traverse a single dielectric layer, though in some configurations they may span two layers with a middle copper landing pad.

While microvias can be created in several ways, the most common method is laser drilling, which utilizes specific wavelengths to precisely cut through copper or dielectric materials.

A good way to understand all of this is to think of your car. Just as it can be equipped with advanced safety features or a high-performance engine, these optional departures from the standard command a premium price. HDI manufacturing capabilities incur a significant jump in fabrication cost due to the specialized equipment and sophisticated techniques required. Therefore, a transition into HDI should be carefully evaluated not only for its budgetary impact but also for its implications on long-term reliability, from the sequential lamination required to the generation of the complex via structures.

There is, however, a cost-effective bridge that stands between standard through-vias and full HDI integration, and this is the use of Via-in-Pad Plated Over (VIPPO), which allows the via to be placed directly within the BGA landing pad. To prevent solder from being wicked into the via, it is plugged with epoxy and plated over with copper to create a flat, solderable surface. While this again uses additional equipment and process steps to create, it can avoid the need for multiple lamination cycles and helps reduce the reliability risks in a design.

Via breakout patterns

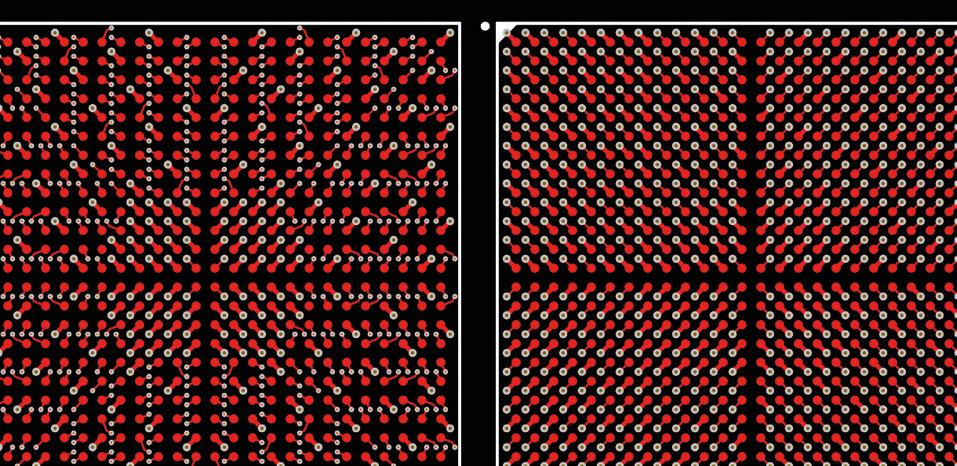

While there is a natural transition point around the 0.65mm to 0.5mm mark where HDI becomes standard, these techniques can also be applied to larger 1.0mm pitch grids to create unique routing patterns. For example, figure 3 compares two distinct breakout options in a 676-pin BGA.

The first utilizes standard through-vias in a quadrant breakout, where the BGA field is divided into four sections, each routed toward the nearest outside corner. This leaves a plus-shaped structure of open channels, facilitating power delivery to the core.

The second option leverages a mix of standard through-hole vias and HDI microvias to create more complex patterns, such as the L-shaped or chevron sections shown, which grow from the outer corners toward the central core. This allows for a reduction in the board’s layer count, because the wider routing channels allow more traces to be placed per layer.

While a standardized breakout pattern is generally advised because it provides a predictable baseline for pin swapping or handling unused pads, modifying the breakout geometry is sometimes necessary in congested designs. However, designers should proceed with caution. As soon as a single via deviates from the established pattern, it often triggers a ripple effect requiring multiple adjacent elements to be relocated.

In a standard mechanical via drill breakout, each BGA ball typically has one of four possible positions for its associated via as shown on the left of figure 4. By introducing HDI, this limit is greatly expanded as shown on the right, providing significantly more degrees of freedom depending on the package size and the specific HDI toolset utilized.

Breakout methodologies

With every design, the BGA package breakout problem can morph with density, complexity, and its own unique design requirements. To this end, I implement a hierarchy of operations to help my problem-solving and move towards a functional solution.

The first is handling every power and ground connection. To ensure robust power delivery to the FPGA, I typically use traces connected to power pads which are slightly larger than standard connectivity traces. Beyond the electrical benefits of increased current capacity, these wider traces allow for a quick visual audit of the breakout, identifying power rails at a glance without needing to toggle net colors or utilize highlight tools in the CAD software.

Breaking out the power first also sets the stage, establishing a rigid skeleton of vias and traces that should not be moved for the sake of power stability. Great care must be taken here to ensure that the trace width does not exceed the pad size on Non-Solder Mask Defined (NSMD) pads, as oversized traces can alter the pad geometry and lead to assembly defects.

Once the power network is secured, the next stage is to break out high-speed differential pairs. These connections have a high sensitivity to discontinuities, and I highly recommend consulting experts like Dan Binnun of E3 Designers for deeper insights into field theory and signal integrity. These connections may require specific geometries and as they are a differential connection, will also require additional layout space to keep the pair of traces together.

Following the high-speed signals, any remaining sensitive or critical lines such as analog interfaces or critical system clocks are routed. Finally, all remaining general-purpose I/O pads are broken out. This utilizes any remaining open spaces, allowing for the strategic rotation or positioning of vias to optimize the routing paths as the signals exit the BGA perimeter.
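The hierarchy of operations above amounts to a priority ordering of nets. As a minimal sketch, assuming a hypothetical `Net` record and made-up category labels rather than any CAD tool's API, the ordering can be expressed as a stable sort:

```python
from dataclasses import dataclass

# Lower number = broken out earlier, following the order in the article.
# The category labels are hypothetical names for this sketch.
BREAKOUT_PRIORITY = {
    "power": 0,
    "ground": 0,
    "high_speed_diff": 1,
    "sensitive": 2,   # analog interfaces, critical system clocks
    "gpio": 3,
}


@dataclass(frozen=True)
class Net:
    name: str
    kind: str


def breakout_order(nets):
    """Return nets in breakout order; sorted() keeps ties in input order."""
    return sorted(nets, key=lambda net: BREAKOUT_PRIORITY[net.kind])


if __name__ == "__main__":
    nets = [
        Net("GPIO_12", "gpio"),
        Net("SERDES_TX0", "high_speed_diff"),
        Net("VCCINT", "power"),
        Net("SYS_CLK", "sensitive"),
        Net("GND", "ground"),
    ]
    print([net.name for net in breakout_order(nets)])
```

Because the sort is stable, nets of equal priority keep the order in which they were listed, which makes it easy to apply a secondary ordering by hand.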

These strategies are highly effective on large packages where the via grid provides ample space for copper to flow. However, as we move to the denser 0.5mm packages, the challenge shifts toward preventing connectivity blockages. In these dense environments, microvias are mandatory, but the breakout must still be designed to allow power to reach the center of the device.

As seen in the 0.5mm example in figure 5, the via grid has been limited to two rows deep before an escape channel is implemented. This ensures that large copper planes, or pours, remain continuous and that no individual via becomes electrically isolated by a perimeter of surrounding vias.

One additional consideration is the use of voided BGA packages. In some packages with 0.65mm to 0.5mm pitch, manufacturers deliberately remove pads in key areas. Removing entire rows or regions of pads creates internal gaps within the package, which in turn allow larger standard mechanical drills to be used in the extra space.

Summary

This analysis serves as a reference for engineers navigating the transition from sparse to dense BGA geometries. At the 1.0mm and 0.8mm pitch levels, standard through-hole methodologies remain viable, though HDI can be integrated for advanced signal integrity requirements. At 0.65mm, the designer reaches a crossroads requiring either advanced mechanical drilling or a shift into HDI. Finally, at 0.5mm and below, HDI is the only path forward. In all cases, early consultation with your fabricator regarding their specific registration tolerances and aspect ratio limits is essential.

The trend toward smaller photolithography and the relentless drive for performance will only increase the prevalence of these dense BGA packages. While we have focused on the physics of the via and the breakout, the next step in the process is to examine the routing of HDI traces themselves and the unique options that exist once signals have successfully escaped the BGA package.

For further reading, I’d recommend ‘Avoiding costly respins: The case for prelayout signal integration’ by Dan Binnun of E3 Designers. Published in issue 2 of the FPGA Horizons Journal, the article talks about why shifting signal integrity analysis and optimization into the pre-layout phase can help teams make informed choices before costly mistakes are baked into the design.

Verification escapes bugging you? Meet certiqo, a prototype AI debugging and verification tool for FPGA designs that bridges the gap between natural-language requirements and HDL implementations.

certiqo is currently in development. Learn more about our progress at certiqo.ai

Developing a radiation-tolerant AI platform for space using AMD Versal adaptive SoCs

Pierre Maillard, Ph.D., Radiation Effects and RAS Solution Team Lead, AMD

In the January 2026 issue of FPGA Horizons Journal, we examined the broad landscape of radiation effects on modern FPGAs and SoCs, spanning environments from ground level to geostationary orbit. That article focused on why radiation still matters, introduced the taxonomy of single-event effects, and outlined the layered mitigation strategies now used across silicon, device, and system levels.

This article continues that discussion but shifts the focus from overview to implementation

Rather than revisiting the fundamentals of radiation physics or fault classification, the goal here is to look inside a modern FPGA-based AI platform, understand how radiation effects manifest in practice in a convolutional neural network, and see how AMD developed a radiation-tolerant AI platform for space. What happens when a charged particle strikes a deeply pipelined neural network datapath? How do configuration upsets differ from functional interruptions in today’s heterogeneous devices? And why can an AI system continue running, produce the correct answer, and still fail at the application level?

These questions are increasingly relevant as FPGAs and adaptive SoCs are deployed for onboard AI processing in aerospace, defense, and high-altitude platforms. In such systems, radiation events do not always cause obvious crashes. Instead, they can subtly degrade outputs, confidence metrics, or downstream decision-making, failure modes that traditional reliability models were never designed to capture.

Drawing on validated proton-beam testing of a 7 nm AMD Versal™ adaptive SoC platform running a production-scale deep learning workload, this article examines the AMD three-tier mitigation strategy.

This strategy spans silicon-level design, device-level IP (XilSEM), and system-level integration. We then show how fault-aware training, an algorithm-aware technique that injects simulated faults during neural network training, makes AI datapaths inherently robust to radiation without additional hardware overhead. The result is approximately a 4X reduction in datapath errors.1

Radiation effects in AI-centric FPGA systems

When high-energy particles interact with AI-centric SRAM-based FPGA and adaptive SoC designs, the resulting single-event effects response can be grouped into three signatures that directly impact AI workloads at the silicon/device level and at the datapath level.

Single-Event Functional Interrupts (SEFIs)

SEFIs are system-level events that disrupt normal operation and typically require a reset or reprogramming to recover. Examples include platform hangs, loss of configuration access, or processing subsystems becoming unresponsive. From a mission perspective, SEFIs define availability and recovery requirements.

During accelerated beam testing at energies representative of space and high-altitude environments, SEFI cross-sections on a 7 nm adaptive SoC platform were measured at approximately 1.3–1.4 × 10⁻¹⁰ cm² per design. Translated to a representative 500 km low-Earth orbit with moderate inclination, this corresponds to one to two SEFI events per year for the complete AI subsystem – events that must be handled through system-level recovery strategies.
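To see how a cross-section translates into an event rate, a first-order estimate multiplies the cross-section by the particle fluence the device sees. The sketch below uses that single-number simplification; real orbital rate predictions fold an energy-dependent cross-section with the particle spectrum of the orbit, and the fluence value in the usage example is a hypothetical input, not a figure from the test campaign.

```python
def events_per_year(cross_section_cm2, annual_fluence_per_cm2):
    """First-order estimate: rate = cross-section x fluence."""
    return cross_section_cm2 * annual_fluence_per_cm2


def implied_annual_fluence(target_events_per_year, cross_section_cm2):
    """Fluence that would produce the given rate under the same model."""
    return target_events_per_year / cross_section_cm2


if __name__ == "__main__":
    # Hypothetical fluence of 1e10 particles/cm^2/year, for illustration only.
    print(f"{events_per_year(1.35e-10, 1e10):.2f} events/year")
    # Back-calculated range implied by the article's one to two events per year.
    print(f"{implied_annual_fluence(1, 1.3e-10):.2e} particles/cm^2/year")
    print(f"{implied_annual_fluence(2, 1.4e-10):.2e} particles/cm^2/year")
```

Under this simple model, one to two events per year at the measured cross-sections corresponds to an effective annual fluence on the order of 10¹⁰ particles per cm².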

Misclassification errors

Misclassification occurs when the neural network produces an incorrect result. In computer-vision applications such as object detection, terrain analysis, or cloud classification, this type of error is often the most visible, and its root cause is usually the easiest to determine. However, misclassification is not the most common radiation-induced failure mode observed in modern AI pipelines.

Probability degradation events

The most subtle, and often most disruptive, errors are probability degradation events. In these cases, the neural network still produces the correct classification, but the associated confidence score drops significantly. During proton-beam testing [1], classification confidence was observed to fall from nominal values near 95% down to ~70% in some cases.

In many deployed systems, confidence thresholds are used to gate decisions or discard low-confidence results. As a result, probability degradation can lead to effective data loss even when the classification itself is correct. These events do not crash the system, trigger alarms, or necessarily correlate with detected configuration upsets, making them particularly difficult to manage using conventional fault-tolerance techniques alone.
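The two datapath outcomes described above, and the effect of confidence gating, can be sketched in a few lines of post-processing. This is a minimal illustration, not the harness used in the beam test: the names `Inference`, `classify_outcome`, and `passes_gate`, the 0.80 gate threshold, and the choice to measure degradation as an absolute drop in probability are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Inference:
    label: str         # class predicted by the network
    confidence: float  # top-1 probability, 0.0 to 1.0


def classify_outcome(result, expected_label, nominal_confidence, max_drop=0.05):
    """Label one inference against a known-good ("golden") reference.

    max_drop is the largest acceptable confidence drop, expressed as an
    absolute probability (an assumed definition for this sketch).
    """
    if result.label != expected_label:
        return "misclassification"
    if nominal_confidence - result.confidence > max_drop:
        return "probability_degradation"
    return "nominal"


def passes_gate(result, gate_threshold=0.80):
    """Downstream gating: keep a result only if it is confident enough."""
    return result.confidence >= gate_threshold


# Correct label, but confidence fell from about 0.95 to about 0.70.
hit = Inference(label="cloud", confidence=0.70)
outcome = classify_outcome(hit, expected_label="cloud", nominal_confidence=0.95)
print(outcome, passes_gate(hit))
```

In this example the label is right, yet the result is flagged as a probability degradation and fails the hypothetical gate, which is the "effective data loss" case: nothing crashed, but the output is discarded.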

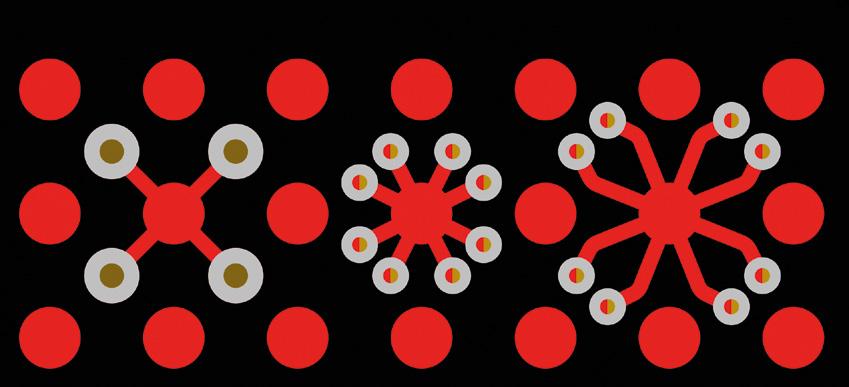

AMD three-layer mitigation strategy

Addressing radiation effects in AI-centric FPGA systems requires a layered approach, with each layer targeting a different class of failure.

Layer 1: Configuration memory protection with scrubbing

At the foundation of the platform is continuous configuration memory scrubbing using soft-error mitigation implemented outside the programmable logic fabric. This scans configuration memory, detects single-event upsets (SEUs) using CRC mechanisms, and corrects the SEUs before they can accumulate or propagate into functional failures.

In the tested platform [1], the scrubber, operating at 320 MHz, completed a full configuration memory scan in approximately 13.6 milliseconds. During proton irradiation, more than 2,000 configuration upsets were detected and corrected at a measured cross-section of roughly 3 × 10⁻¹⁷ cm² per bit, without disrupting system operation.

Because this mitigation runs in hardened IP logic rather than consuming programmable resources, it provides continuous protection without reducing available compute capacity. Fast scan rates are critical, because they minimize the window during which an uncorrected upset can influence downstream logic.
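To put the scan rate in perspective, the exposure window can be expressed in inference frames. The sketch below is a back-of-the-envelope calculation under stated assumptions: an upset lands at a uniformly random point in the scan cycle and is repaired when the scrubber next reaches it, and the frame rate is the "over 1,300 frames per second" figure quoted for the test workload. The function names are illustrative only.

```python
SCAN_PERIOD_S = 13.6e-3   # full configuration-memory scan time from the text
FRAME_RATE_FPS = 1300.0   # lower bound on the quoted inference throughput


def exposure_window(scan_period_s):
    """Return (mean, worst-case) time an upset waits before correction.

    Assumes the upset arrives at a uniformly random point in the scan
    cycle and is repaired when the scrubber next reaches that location.
    """
    return scan_period_s / 2.0, scan_period_s


def frames_in_window(window_s, frame_rate_fps):
    """Number of inference frames processed during a given window."""
    return window_s * frame_rate_fps


mean_s, worst_s = exposure_window(SCAN_PERIOD_S)
print(f"mean exposure:  {mean_s * 1e3:.1f} ms, "
      f"{frames_in_window(mean_s, FRAME_RATE_FPS):.1f} frames")
print(f"worst exposure: {worst_s * 1e3:.1f} ms, "
      f"{frames_in_window(worst_s, FRAME_RATE_FPS):.1f} frames")
```

Under these assumptions an uncorrected upset overlaps roughly 9 frames on average and about 18 at worst, which is why a slower scan would widen the set of outputs a single upset can influence.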

Layer 2: Architectural advantages of the AI Engine array

The AI Engine architecture used in the Versal platform introduces several characteristics that improve radiation behavior at the datapath level. Each of the hundreds of AI Engine tiles includes dedicated program and data memory, eliminating cache-related timing variability. This deterministic execution model reduces the likelihood that a transient fault is amplified by timing uncertainty.

The deep learning workload evaluated during testing [1] was a ResNet-50 convolutional neural network running on a Deep Learning Processing Unit (DPUCVDX8H) [2] configured for an 8-bit implementation. It processed 224 × 224 pixel images at over 1,300 frames per second, with the full AI Engine array operating at 1.25 GHz.

The combination of VLIW and SIMD execution provides significant instruction-level and data-level parallelism. While this does not prevent radiation-induced faults, it helps contain their impact by limiting how errors propagate across the datapath in time and space.

[Figure: platform block diagram. Recoverable labels: Shared Weights Buffer; Conv. PEs; Batch Engines; Peripherals; ML Pre- and Post-Processing; I/O; Boot, Networking, UART, GPIO, Debug, etc.]

Layer 3: Fault-Aware Training

While hardware-level mitigation addresses configuration and control-path vulnerabilities, it does not fully protect the numerical datapath of a neural network. To address this, the platform incorporated a technique known as fault-aware training (FAT).

FAT modifies the neural network training process to explicitly account for hardware faults. During training, error-injection layers are inserted after activation functions, randomly forcing entire channels of the output feature map to zero with a low probability. In this case, a 1% injection probability was used to emulate the effects of transient hardware corruption during inference.

By exposing the network to these perturbations during training, the resulting model learns representations that are inherently more tolerant to faults during deployment. Importantly, this approach does not require changes to the inference hardware or additional redundancy in the datapath.
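The error-injection step can be illustrated with a small, framework-free sketch. It is not the authors' implementation: the function name `inject_channel_faults` and the nested-list feature-map layout are assumptions made so the example runs with the standard library alone. In a real training flow the same idea would be a layer operating on tensors inside a deep learning framework.

```python
import random


def inject_channel_faults(feature_map, p_fault=0.01, rng=random):
    """Zero whole channels of one activation map with probability p_fault.

    feature_map is a list of channels; each channel is a list of rows
    (height x width floats). The input is not modified; a new map is
    returned. Intended for training only, so inference is unchanged.
    """
    faulty = []
    for channel in feature_map:
        if rng.random() < p_fault:
            # Emulate a transient hardware fault: the entire channel reads 0.
            faulty.append([[0.0] * len(row) for row in channel])
        else:
            faulty.append([list(row) for row in channel])
    return faulty


# Hypothetical 64-channel, 4x4 post-activation map filled with ones.
rng = random.Random(0)
fmap = [[[1.0] * 4 for _ in range(4)] for _ in range(64)]
out = inject_channel_faults(fmap, p_fault=0.01, rng=rng)
zeroed = sum(1 for ch in out if all(v == 0.0 for row in ch for v in row))
print(f"{zeroed} of {len(out)} channels zeroed this pass")
```

With a 1% injection probability most passes leave the map untouched, so the network sees occasional whole-channel dropouts rather than constant noise. Because the function is only applied during training, the deployed datapath needs no extra logic.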

Accelerated beam test validation

The effectiveness of this three-layer strategy was validated through extensive proton-beam testing at energies of 64 MeV, with fluences reaching 1 × 10¹¹ protons/cm². Both a baseline neural-network implementation and a fault-aware trained implementation were evaluated on identical hardware, using the same configuration scrubbing setup.

[Figure labels: Fault Tolerance and Error Management: PS-based (FPD+LPD PMC); Fault-Aware Training (FAT); XilSEM (CRAM Error Correction and Detection); Off-chip DDR for Model Weights, Activations, Host OS, etc.]

The two designs consumed the same FPGA resources, approximately three-quarters of the available logic and over 90% of block RAM, demonstrating that the added resilience came without hardware overhead or performance penalties.

AMD radiation-tolerant AI platform behavior under the beam

The difference between the two implementations was most apparent in datapath behavior. The baseline network exhibited probability degradation events of up to 30%, with many results degrading by more than the predefined 5% threshold for acceptable operation. Such events would be rejected by downstream systems despite being nominally correct classifications.

Fig. 5. Summary of SEE cross-section for degradation in probability (datapath errors) and SEFI events. Series shown: probability degradation <=5% (datapath errors), probability degradation >=5% (datapath errors), and SEFI (silicon errors), for the Baseline DPU/ML and the Radiation Tolerant DPU/ML.

In contrast, the fault-aware trained network showed no probability degradation events above 5%, with observed degradations limited to approximately 2% or less. Overall datapath error rates were reduced by roughly a factor of four, and severe degradation events were eliminated.

SEFI rates and system availability

As expected, SEFI rates were statistically equivalent between the two implementations, since both relied on the same hardware-level mitigation. Measured SEFI cross-sections translated to approximately 0.01–0.02 events per day across the full AI Engine array in a representative low-Earth orbit scenario. These events define system-level recovery requirements rather than datapath correctness.
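A rate in events per day translates directly into recovery requirements. The sketch below converts the quoted range into a mean interval between SEFIs and an availability figure. The 60-second recovery time is purely hypothetical (the article does not give one), and the model assumes a constant event rate with a fixed, non-overlapping downtime per event.

```python
SECONDS_PER_DAY = 86_400.0


def mean_days_between_events(events_per_day):
    """Mean time between SEFIs, in days, for a constant event rate."""
    return 1.0 / events_per_day


def availability(events_per_day, recovery_s):
    """Fraction of time the system is up, given downtime per SEFI.

    Assumes each SEFI costs a fixed recovery_s of downtime and that
    events are rare enough not to overlap.
    """
    downtime_per_day_s = events_per_day * recovery_s
    return 1.0 - downtime_per_day_s / SECONDS_PER_DAY


RECOVERY_S = 60.0  # hypothetical reset-and-reconfigure time, not from the article

for rate in (0.01, 0.02):  # events per day, the range quoted in the text
    print(f"{rate:.2f}/day -> one SEFI every "
          f"{mean_days_between_events(rate):.0f} days, "
          f"availability {availability(rate, RECOVERY_S):.6%}")
```

At these rates the mean interval between SEFIs is 50 to 100 days, so the recovery time mostly determines how long a single outage lasts rather than how much total uptime is lost.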

Practical design guidelines

Several practical lessons emerge from this work that are broadly applicable to FPGA engineers designing radiation-tolerant AI systems:

Treat training as part of the reliability strategy. Radiation tolerance should not be added after deployment. Incorporating FAT early provides significant resilience gains without hardware cost.

Maximize scrubbing effectiveness. Configuration scrubbing should run at the highest feasible frequency to minimize exposure time for uncorrected upsets.

Favor deterministic architectures. Dedicated memories and predictable execution reduce the ways in which faults can be amplified by timing variability.

Define acceptance criteria early. Establish what level of output degradation is acceptable for the target application before testing begins, so mitigation effectiveness can be evaluated objectively.

Validate after quantization. When deploying quantized networks, ensure that FAT benefits persist after conversion from floating-point models.

Looking ahead

As AI workloads move from the ground into high-altitude and space environments, the definition of reliability must expand beyond system uptime to include output integrity. Radiation-induced faults that degrade confidence or subtly alter results can be just as damaging as outright failures.

The results presented here show that platforms evaluated under these test conditions can support sophisticated deep learning workloads in radiation environments without sacrificing performance. By combining continuous configuration protection, architectural predictability, and algorithm-aware training techniques, designers can build systems that behave predictably even in the presence of radiation.

For FPGA engineers, the key takeaway is that radiation tolerance is no longer solely a hardware problem. It is a cross-layer design challenge, one that spans silicon, architecture, and algorithms, and one that can be addressed effectively with the right combination of techniques.

References:

1. Maillard, Pierre; Dhavlle, Abhijitt; Chen, Yanran Paula; Fraser, Nicholas; Chen, Yushan; and Vacirca, Nicholas. “Protons Evaluation of 7nm Versal™ AI Engine (AIE) Based Radiation Tolerant Platform for Deep Learning Applications.” (https://ieeexplore.ieee.org/document/10759203)

2. Advanced Micro Devices, Inc., AMD Vitis™ AI User Guide, UG1414, Rev. 3.0. 2023. (https://docs.amd.com/r/3.0-English/ug1414-vitis-ai/Versal-AI-Core-Series-DPUCVDX8H)


OSVVM: A better way to verify your VHDL designs

Jim Lewis, VHDL Trainer & OSVVM Chief Architect, SynthWorks Design Inc

Today’s FPGA designs continue to grow in size while schedules either stay the same or shrink. According to the 2024 Wilson Research Group FPGA functional verification trend report, verification takes a significant portion of a project’s schedule, yet numerous functional bugs escape into production, and schedule milestones are missed more often than achieved. As a result, powerful verification capabilities are necessary to improve both productivity and quality.

EDA vendors would like us to think that SystemVerilog is dominant and VHDL verification methodologies are a fringe thing. The Wilson Research Group report shows this is far from the truth. VHDL is used by 66% of the global market for FPGA design and 48% for FPGA verification, making VHDL #1 for FPGA design and verification.

For FPGA verification methodologies worldwide, UVM (based on SystemVerilog) is used by 48%, Open Source VHDL Verification Methodology (OSVVM) by 35%, and Universal VHDL Verification Methodology (UVVM) by 27%. In Europe, OSVVM is the market-leading FPGA verification methodology.

For the VHDL community, OSVVM answers the call for powerful verification capabilities. There is no new language to learn. All you need is a regular VHDL license and a simulator that supports VHDL-2008, or an open-source simulator such as NVC or GHDL.

What is OSVVM?

OSVVM is a suite of libraries designed to streamline your entire VHDL verification process, boosting productivity and reducing development time. Each library provides independent capabilities, allowing selective adoption and a learn-as-you-go approach. OSVVM facilitates writing concise and readable tests for either simple, directed, RTL-level tests or complex, randomized, chip-level tests.

Collectively, these libraries provide VHDL with verification capabilities that rival SystemVerilog + UVM. Capabilities include transaction-based testbenches, verification components (VCs), self-checking, error tracking, functional coverage, randomization, requirements tracking, cosimulation with software, comprehensive reporting, and a universal scripting API.

With OSVVM and a good team lead, any VHDL engineer can do verification – and have fun doing it.

Transactions: The easy way to write tests

Writing tests involves creating waveforms at an interface to the device under test (DUT). In a basic testbench, signals are driven directly to create interface waveforms. This is tedious and error prone. To make it simpler, OSVVM replaces signal wiggling with transactions.

A transaction is an abstract representation of either an interface waveform (such as Send for a UART transmitter or Read for an AXI4 bus), or a directive (such as WaitForClock). In OSVVM, a transaction is initiated using a procedure call. For example:

    Send(UartTxRec, X"4A");
    Send(UartTxRec, X"4B");

These two calls generate the UART waveforms shown in figure 1 above.

Writing tests using transactions makes tests easy to read by anyone who has basic programming experience, including hardware, software, and system engineers.

Testbench framework: Make the DUT feel like it’s on the board

The objective of any verification framework is to make the DUT feel like it has been plugged into the board. Hence, the framework must be able to produce the same sequence of waveforms that the DUT will see on the board, including the simultaneous behavior of independent interfaces.

The OSVVM testbench framework (TestHarness), shown in figure 2, looks identical to other frameworks, including SystemVerilog. It includes the test sequencer (TestCtrl), VCs (AxiStreamTransmitter, AxiStreamReceiver, etc.), and the DUT.


These all connect together as VHDL components – just like RTL design. As a result, unlike SystemVerilog, OSVVM does not need the complexity of object orientation (OO) and execution phases.

A test is an architecture of the test sequencer and consists of a sequence of transactions. Each procedure call passes transaction information to the VC via the transaction interface (a record). The VC uses this information to create interface waveforms on the DUT interface.

Standardized transactions simplify VCs and tests

Ordinarily, each VC must define a transaction interface (a record) and an API (procedures). Instead, OSVVM’s Model Independent Transaction (MIT) library defines these for two classes of interfaces: Address Buses (such as AXI4 and Wishbone), and Streams (such as UART and AXI Stream).