Can AI teach kids to read? UF researchers create interactive literacy app

DoctorGPT: AI’s rising role in Gainesville healthcare

THE APP AIMS TO ENGAGE YOUNG READERS WITH VOCABULARY AND COMPREHENSION

By Grace Larson Alligator Staff Writer

Every month, Kayla Sharp’s son excitedly awaits a book delivered to him by mail. He’s not just excited for the words on the page, but for the adventures he’ll follow along with on his tablet.

Sharp’s son is enrolled in New Worlds Reading Initiative, a program designed to improve literacy skills in young readers by providing them with free monthly books.

Motivated by her son’s excitement, Sharp took on a roughly two-and-a-half-year project to design an artificial intelligence-integrated app that helps young readers develop literacy skills.

The project provides greater insight into how AI fits into the literary and educational spaces, Sharp said. As education funding — particularly for K-12 teacher salaries — remains low in Florida and Alachua County, experts and community members say they’re increasingly looking to resources like the app to fill in learning gaps.

“As we move into a space of AI becoming more readily accessible, more built into things that we use every day, it’s important to stop and consider not just how can we use AI, but how can we use AI in a strategic way that improves the impact of what we’re doing?” Sharp said.

UF College of Education’s E-Learning, Technology and Communications Team designed the app in partnership with the UF Lastinger Center for Education’s New Worlds Reading Initiative. Sharp is the assistant director of operations for the technology team.

The app, AR Expeditions, features four augmented reality experiences based on books provided by the initiative. Students can engage with an array of topics, ranging from marine life to superheroes, while improving their reading comprehension skills.

The app combines real-world surroundings with 3D animated characters like sea creatures. Students can view the world around them through the camera feature, with various characters and games appearing on the screen.

Also crucial to the design process was the use of AI, which was integrated in all steps of development, Sharp said. From brainstorming to coding and proofreading, AI helped facilitate the process.

The app is meant to excite and motivate young readers like her son, Sharp said.

“The ultimate goal of this app was to improve motivation for children who may not necessarily feel excited about reading, because reading is hard,” Sharp said. “It’s a new skill that they’re learning, and like any new skill, there’s a learning curve, and sometimes it takes a little bit of motivation and fun to make that learning curve seem less daunting.”

With students at the core of the project, the developers relied on feedback from “mini-researchers,” or third grade students who tested the app.

Their input was crucial to the design process, Sharp said.

“Even though they are children, they still have different needs to be able to access that type of technology,” she said. “Meeting them at their level was very important.”

The app’s development comes amid an ongoing conversation about the role of AI in childhood development.

Anand Rao, the director of the Center for AI and the Liberal Arts at University of Mary Washington, currently works with middle and high schools to improve AI literacy education. The goal is to teach students how AI works, discuss its limitations and show them how to use it responsibly, Rao said.

“If students are taught how to use it [AI] responsibly, they can think about using it for brainstorming,” he said. “They can use it in a Socratic dialogue, where it will ask questions and guide students.”

AI-based outside resources can provide more personalized learning experiences for students falling behind, Rao said, but these resources cannot replace a teacher.

Reagan Bresnahan // Alligator Staff

To promote a healthy relationship between AI and education, parents should learn more about AI to facilitate open discussions with their children about how to responsibly use it, Rao said.

According to a 2024 study from Pew Research Center, just over a third of adults said AI is more likely to have a negative impact on K-12 education. About 24% of adults said the impact would be positive, and 23% said it would be both positive and negative.

AI can be used to improve literacy rates, researcher Ying Xu found in a 2024 article published by the Harvard Graduate School of Education. AI companions can ask questions during reading that improve comprehension and vocabulary.

However, AI fails to simulate personalized instruction offered by teachers and tutors. Xu’s study found that while children were quite talkative with AI, they were even more engaged when speaking with a human — steering the conversation, asking follow-ups and sharing thoughts.

Interactivity boosts enthusiasm for literacy in the AR Expeditions app, according to its leaders. Shaunté Duggins, the New Worlds Reading Initiative’s associate director, said the program aims to offer interactive resources to excite hesitant readers.

“We wanted to think about how we embed something that’s more interactive, that helps children to be engaged and also to increase their literacy skill,” Duggins said.

Within the marine life portion of the app, students can play a bubble game, where they answer questions about fish by popping a bubble with the right answer. They can then read facts about the animals. Other games include vocabulary fishing and coloring fish to build an aquarium.

The hope is the app will excite users to engage with topics present in the program’s books, Duggins said.

Through the program, eligible pre-K through fifth grade students receive a free monthly book from October to July. The books are selected in partnership with the Florida Department of Education and Scholastic, a children’s book distributor.

In addition to books, New Worlds Reading Initiative provides caregivers with family reading guides and teachers with professional development programs to help them become better facilitators of learning.

“We are part of the solution,” Duggins said. “We’re contributing to meeting the needs of students who need some additional support, their teachers that need support, the families that need support.”

The program has served over 500,000 students, Duggins said, delivering more than 13 million books since its inception in 2021.

Read the rest online at alligator.org.

@graceellarson glarson@alligator.org

ALACHUA COUNTY FIRE RESCUE, SHANDS HOSPITAL AND UF HEALTH REFLECT ON USE

By Nevaeh Baker Harris Alligator Staff Writer

Artificial intelligence has entered health care. Medical notes can now be transcribed with AI, diagnoses can be made on Google with the click of a button and ChatGPT is taking over the role of a traditional therapist for younger generations.

Still, some in the medical field across Alachua County say AI needs more research, largely due to privacy concerns.

Alachua County Deputy Fire Chief Jeff Taylor said the department uses AI for administrative tasks but remains wary of bringing the technology into patient care.

“Currently, we use tools that measure our effectiveness and response and help us to understand the types of calls that we run,” Taylor said. The county is taking a “conservative approach” to AI, using it only for note-taking, he said.

Taylor also noted that while there are concerns surrounding patient privacy and HIPAA laws with AI, Alachua County doesn’t put any patient info into a large language model, or LLM, that could potentially use that sensitive information.

Access to patient records has been debated since AI first crept into health care, a trend that was the subject of a roundtable with health officials held by Guidehouse. Since AI deals with sensitive information, it must be HIPAA compliant.

According to The HIPAA Journal, AI doesn’t have its own safety rules. Instead, it must follow the same rules that govern protected health information for humans or more traditional documentation systems.

Other local emergency responders echoed Taylor’s concerns. Derek Hunt, a clinical educator at UF Health Shands Hospital and flight paramedic, said he hasn’t seen AI being used much in the field due to its inaccuracy. However, some medical equipment, such as ultrasound machines, is beginning to use AI, Hunt said.

Hunt also said patients use chatbots for their symptoms “all the time.”

“Sometimes the information is good, and sometimes it isn’t, and it’s really a case-by-case basis,” Hunt said. “But everyone’s going to Google everything before or while you’re talking to them.”

Research from Duke University published in February found chatbots tend to give advice that is medically correct but lacks context. LLMs can’t take patient histories or read between the lines with symptoms, researchers concluded.

Like Taylor and Alachua County Fire Rescue, Hunt said UF Health has been proactive in protecting patient privacy and complying with HIPAA laws even with the use of AI programs, saying that Shands uses rigorous procedures to make sure it’s not violating any laws.

At UF, AI is also slowly being integrated into coursework and patient care. The College of Public Health and Health Professions offers a certification in AI public health and health care. UF Health has hired more than 30 faculty members who specialize in AI across six UF Health colleges.

As part of AI research, UF Health has also begun to use AI in operating rooms to analyze patient care and complications in the ICU.

Dr. James Wesley, a primary care provider for the Student Health Care Center, said in an email to The Alligator he has patients come to him with AI health advice at least once a day.

Wesley also echoed earlier sentiments that AI is not a useful source of medical advice for patients, saying chatbots have no safeguards and are unable to identify true crises.

Research published in the journal Nature Medicine found LLMs were able to pass medical exams but couldn’t correctly identify diseases in real-world settings. In an interview with the BBC, a co-author of the study, Dr. Rebecca Payne, explained people are using LLMs to act as a doctor even though the technology isn’t advanced enough yet.

Liyana Ahmed, an 18-year-old UF biology freshman, said she has done research on how AI is affecting health care, and she believes there are positive and negative implications of AI use within health professions.

“I think that it can be very, very beneficial and help doctors with diagnoses of patients in a much faster and easier way,” Ahmed said. “But I also think that it should be used with caution and only if its validity can be proved.”

Angie Joseph, an 18-year-old UF biology freshman, also believes AI can be used for good in the medical field if humans check the results. However, she believes AI shouldn’t be used to get results for patient care.

“It’s not a human at the end of the day,” Joseph said. “They are not going to have the same ethics that a human might have.”

@nbakerharris nbakerharris@alligator.org

Assistant Director of Operations for E-Learning, Technology and Communications at UF’s College of Education Kayla Sharp poses at her desk, Thursday, April 2, 2026, in Gainesville, Fla.

AI misconduct cases are climbing at UF — and so are the stakes for students

UF PROMOTES RESPONSIBLE AI USE AS STUDENTS ACCUSED OF MISUSE CRITICIZE LONG PROCESS

By Swasthi Maharaj Alligator Staff Writer

Reports of academic misconduct at UF that reference artificial intelligence violations have surged in recent semesters, according to university records obtained through a public records request.

From Fall 2021 to Fall 2023, UF reported no Honor Code violations that included terms like “artificial intelligence,” “AI” or “ChatGPT.”

In Spring 2024, 20 cases were identified; that figure rose to 42 in Fall 2024 and 66 in Spring 2025. The records only count misconduct reports explicitly mentioning AI in their descriptions.

The increase in AI Honor Code violations comes as the university expands its AI training and academic programming.

University officials say they are attempting to teach students and faculty how to use AI responsibly, rather than banning the technology from the classroom.

Hans van Oostrom, the director of UF’s Artificial Intelligence Academic Initiative Center, said AI is everywhere, across every discipline.

“We recommend that we don’t ban AI for everything but to carefully figure out where can we use AI with the students and where should they not,” he said.

To achieve this, the center created the AI Across the Curriculum program, which aims to integrate AI concepts into academic programs across all colleges. The effort utilizes faculty training workshops and the AI Education Committee to evaluate how much and what type of AI content should be included in different courses.

“To do that, we need to train the faculty who aren’t necessarily experts in AI,” van Oostrom said.

UF, he said, now has over 200 courses that include AI content. This Spring alone, 177 courses were designated to include AI in their curriculum.

The center also offers student certificates designed to build a foundational understanding of AI. Almost 800 students were enrolled in introductory AI certificate courses this Spring, which can be taken in addition to any major. This, van Oostrom said, is the highest enrollment since the program launched in 2022.

Nearly half of all Honor Code cases in Fall 2025 involved AI-related misconduct, prompting questions about enforcement, student understanding and whether institutional responses are keeping pace with growing usage.

Local agencies, researchers keep up with quickly developing AI deepfakes

AI ADVANCEMENTS FUEL GROWING CONCERNS OVER CHILD EXPLOITATION

By Vanessa Norris Alligator Staff Writer

A North Florida taskforce on crimes against children received 27 reports from the artificial intelligence chatbot Grok and 11 reports from OpenAI in the past year.

The reports indicate someone prompting the platforms to create child exploitation material, said Sgt. Christopher King, a Gainesville Police Department detective and commander of the North Florida Internet Crimes Against Children Task Force.

GPD is a host agency for ICAC, which covers 38 counties in northern Florida. The data indicates deepfakes, which have garnered nationwide alarm, are seeping into local communities.

Deepfakes are photo, video or audio content generated with AI models, particularly generative AI, that depict people performing actions or saying phrases they never actually did.

As Florida lawmakers attempt to grapple with restrictions on the ever-evolving technology, local experts say deepfakes pose a threat to public safety.

King supervises an investigative squad that follows up on CyberTips sent by the National Center for Missing & Exploited Children. The tips are submitted when online platforms, such as Meta, TikTok and Snapchat, believe child exploitation is happening on their platform, King said.

In Florida, it is a third-degree felony to generate child pornography, which is the possession or control of images modified to portray a minor engaged in sexual activity.

North Florida ICAC has an in-house programmer monitoring the dark web who has found AI-generated images and videos of child exploitation material, King added.

“It’s definitely a growing issue that parents and children need to be aware of,” he said. “This, in return, should caution anyone when they’re sharing any photographs online.”

On March 31, the NCMEC released child exploitation data gathered from 2025. According to the report, their CyberTipline received more than 1.5 million tips linked to AI-generated child sexual exploitation — an over 2,000% increase from 2024.

Advanced AI models can create “photorealistic, high-resolution content of any images,” said Kevin Butler, a UF professor in computer and information science and engineering.

Computer science students grapple with unemployment. Read more on pg. 13.

The Avenue: Film

UF film community decries AI-generated content, pg. 8

Metro

Alachua County hires first AI analyst, pg. 5

Eva Lu // Alligator Staff

When The Alligator sends a reporter to cover an event, we tell them to use their “five senses.” How many people were there? Can they describe the atmosphere? What did it smell like? Sound like? How high was the sun in the sky? Our stories come from scribbles on notebooks, hastily labeled transcripts and a methodical Google Docs organization system. Yet more and more, the content we consume online comes from a simple prompt in sans-serif font: “Ask anything.”

Many media outlets now lean on artificial intelligence to report and write stories. Papers like Cleveland's The Plain Dealer are using generative AI tools to draft articles. Major publications have entered corporate partnerships with OpenAI.

In the middle of it all, student journalists are wondering how AI will affect their careers. It’s not just journalists wondering. AI touches every aspect of our lives, from arts to finances to health care. Algorithms plan our vacations, and large language models write our wedding toasts.

Most large-scale AI systems live in data centers that can take a heavy toll on the planet. Electricity consumption at U.S. data centers is expected to more than double by 2030, and in 2024, these centers consumed about the same amount of electricity as the entire nation of Pakistan.

There are advantages, too. A 2023 Swedish study found AI helped health care workers detect 20% more cases of breast cancer, avoiding an increase in false positives and reducing doctor workloads.

Here in Gainesville, we’ve watched UF rush to position itself at the forefront of the AI Revolution. All 16 colleges at UF offer AI courses, with more added every semester. Fourteen thousand students enroll in these classes annually. Three hundred AI-focused faculty work throughout the university. The HiPerGator, located on UF’s East Campus, is the fastest university-owned supercomputer in the country.

Alachua County recently hired its first AI analyst. Local businesses are using tools like ChatGPT to market margarita specials and manage payrolls. Children as young as 8 years old are navigating AI-driven apps as educational tools.

For this special edition, containing 29 AI-centered stories, our reporters used their five senses to cover the effects of this evolving technology on our daily lives.

Our food reporter minced, grated and chopped the ingredients for two versions of a Honey Garlic Korean BBQ Shrimp. Our university reporter camped outside the computer science engineering building to ask students how they felt about the field’s shrinking job opportunities.

We spoke with professors, politicians, health care professionals and athletes to ask the impossible question: What does our future look like?

At The Alligator, we hope the answer continues to be: “human-generated.”

- The Alligator Editorial Board (Zoey Thomas, Megan Howard, Sara-James Ranta and Pristine Thai)

GAPS IN U.S. COPYRIGHT LAW LEAVE AUTHORSHIP UNRESOLVED

By Sara Dhorasoo Alligator Staff Writer

It’s a scenario playing out more and more frequently. Someone directs a chatbot to make artwork. The bot responds with a piece that draws from existing art online. Who now owns the resulting work: the prompter, the bot or the original artist?

That’s the question artists and lawyers are struggling to answer.

Under current U.S. intellectual property law, only humans and legal entities, not AI systems, can hold copyright. But the law didn’t anticipate machine-generated creative work. Today, courts are sorting out how to treat AI outputs.

For now, the prompter is often considered the author, according to Nouvelle Gonzalo, a managing attorney at Gonzalo Law in Gainesville.

“But then the question arises,” Gonzalo said. “Who's the author when you're putting in the work of someone else?”

The possibilities, she said, are tangled: the user who wrote the prompt, the platform that produced the output or the original creator whose work was used as a springboard.

“Yes, there are gaps. No, there's not enough legal coverage,” Gonzalo said. “The law doesn't move as fast as AI development and technology, so it's just catching up.”

Jacquelyne Collett, a 73-year-old Gainesville artist, feels concerned her two-dimensional work could appear in AI output. She’s less concerned about her glass work, which she said requires crafting, moving, touching and designing of the actual piece.

Throughout her career, Collett has always felt concerned about people replicating her ideas. She now believes AI will intensify that concern on a “wider scale” for the next generation of artists. At her age, though, she doesn’t think she’ll have to participate in that new world of art and copyright complications, which she compared to the “wild west.”

“It's all hypothetical right now,” Collett said. “We're imagining what to be afraid about.”

Current copyright law protects any “fixed, tangible creative work,” such as writing, music, artwork, film and recordings. When AI produces one of those works, the ownership question emerges, because federal law doesn’t recognize a machine as an author.

Derek Bambauer, a UF law professor with expertise in intellectual property, said generative AI poses an intellectual property problem. But he has another, more urgent worry: “front-end copying,” when machines create new material identical or similar to existing works.

The real risk comes from AI that mimics or replaces art without licensing, Bambauer said. In doing so, the technology may cut into the original artists’ revenue. Distinguishing human-made elements from machine-generated ones is often difficult, which worsens the issue, he said.

The Trump administration is adopting a limited approach to AI regulation. As a result, states are driving most of its governance. Florida appears eager to position itself among the early regulators.

States should focus on real gaps in existing law rather than hypothetical risks, Bambauer said, because predicting which problems will actually emerge is difficult.

“From my perspective, the worry, as always, when you start enacting technology specific regulation, is that it gets out of date very quickly,” Bambauer said. “I think that there's a virtue in waiting until we have a clear sense of the problem.”

Looking ahead, Bambauer said, copyright law must evolve as AI becomes a routine part of creative work, forcing clearer standards for what counts as human authorship.

For now, the safest path remains using material with clear authorization, either by purchasing a license or relying on works released under a Creative Commons license.

Read the rest online at alligator.org.

sdhorasoo@alligator.org

Editor-In-Chief

Engagement Managing Editor

Digital Managing Editor

Senior News Director

Enterprise Editor

Metro Editor

Zoey Thomas, zthomas@alligator.org

Megan Howard, mhoward@alligator.org

Sara-James Ranta, sranta@alligator.org

Pristine Thai, pthai@alligator.org

Vera Lucia Pappaterra, vpappaterra@alligator.org

Bailey Diem, bdiem@alligator.org

Sofia Meyers, smeyers@alligator.org

Opinions Editor

University Editor El Caimán Editor

Sofia Bravo, sbravo@alligator.org

Corey Fiske, cfiske@alligator.org

Avery Parker, aparker@alligator.org

Ava DiCecca, adicecca@alligator.org

Assistant Sports Editor

Assistant Multimedia Editor the Avenue Editor

Max Bernstein, mbernstein@alligator.org

Multimedia Editor

Noah Lantor, nlantor@alligator.org

Sports Editor Editorial Board

DISPLAY ADVERTISING

Advertising Office Manager

Bayden Armstrong, barmstrong@alligator.org

Copy Desk Chief Pristine Thai, pthai@alligator.org

Zoey Thomas, Sara-James Ranta, Megan Howard, Pristine Thai

352-376-4482

Sales Representatives Cheryl del Rosario, cdelrosario@alligator.org

Sales Interns

CLASSIFIED ADVERTISING

Paige Montero, Simone Simpson, Makenna Paul, Sofia Korostyshevsky, Daniel Sanchez

Sofia Morales-Guzman, Alessandra Puccini, Olivia Fussner

352-373-3463

Classified Advertising Manager Ellen Light, elight@alligator.org

BUSINESS

352-376-4446

Comptroller Delia Kradolfer, dkradolfer@alligator.org

Bookkeeper Cheryl del Rosario, cdelrosario@alligator.org

Administrative Assistant Ellen Light, elight@alligator.org

ADMINISTRATION

352-376-4446

General Manager Shaun O'Connor, soconnor@alligator.org

President Emeritus C.E. Barber, cebarber@alligator.org

SYSTEMS

IT System Engineer Kevin Hart

PRODUCTION

Production Manager

Namari Lock, nlock@alligator.org

Publication Manager Deion McLeod, dmcleod@alligator.org

The Alligator strives to be accurate and clear in its news reports and editorials. If you find an error, please call our newsroom at 352-376-4458 or email editor@alligator.org.

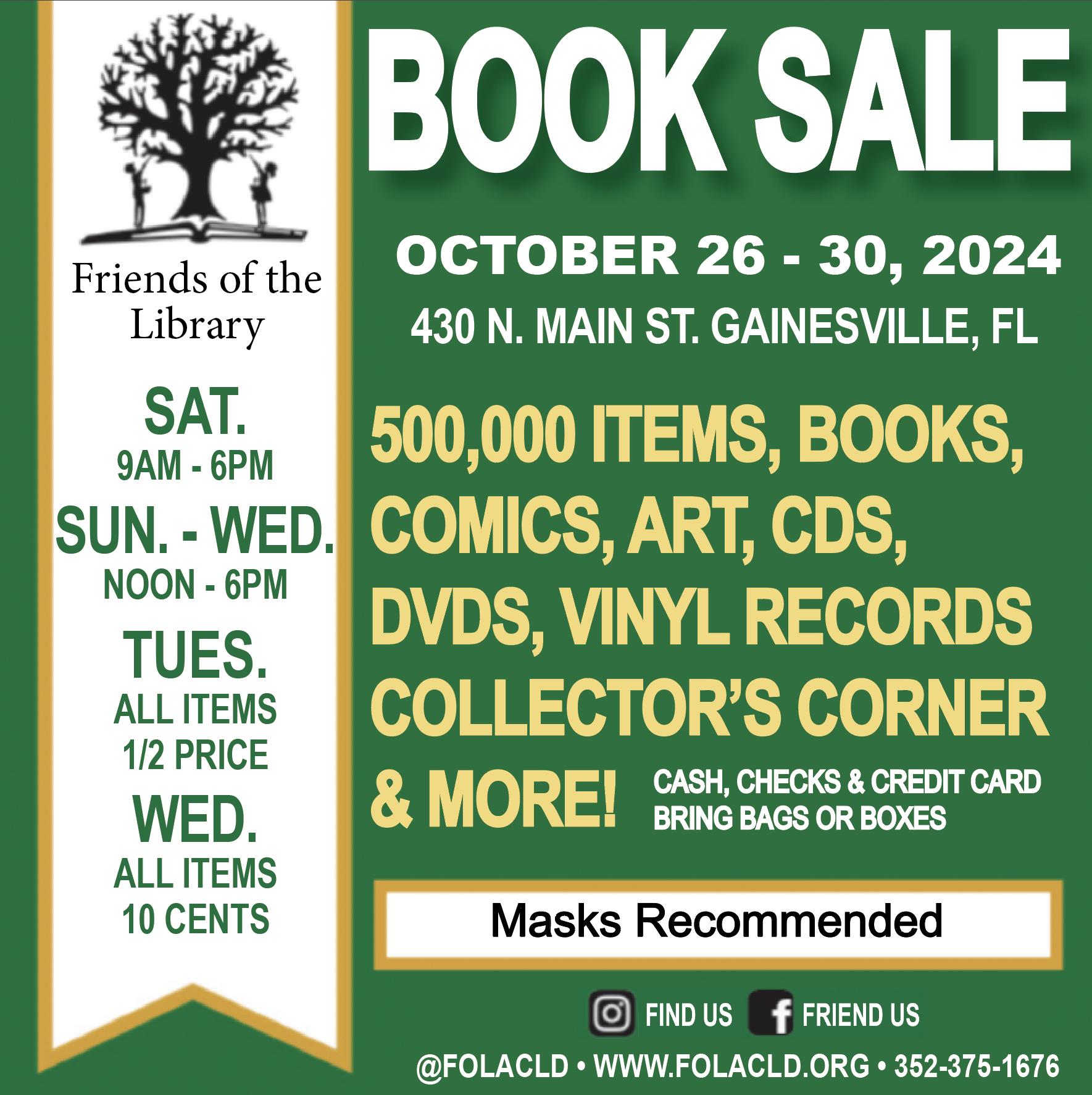

Alachua County is all in on the AI Revolution

COUNTY OFFICIALS, INCLUDING A NEW AI ANALYST, REVEAL DEVELOPING PLANS FOR WIDESPREAD USE

By Kaitlyn McCormack Alligator Staff Writer

Last year, Alachua County announced a new job position: artificial intelligence analyst.

Sameer Shaik, an AI researcher and expert from Hyderabad, India, filled the role. As part of the job, Shaik advises county leadership on how to implement AI in everyday operations.

Shaik’s role fits into the county’s larger mission to use AI to increase productivity, streamline internal operations and create faster, more efficient responses to community inquiries. It’s a goal shared by local governments across the country, with experts describing the most common uses as marketing tactics and internal organization.

The county is working with different vendors to integrate AI systems for internal and external uses. Right now, it’s only in the beginning stages, finding systems that best fit the county’s needs. Still, Shaik doesn’t predict much delay.

“As long as our leadership works out the details with all these vendors, I think it’s gonna move really, really fast,” he said.

The county’s first large AI venture so far: Copilot. Microsoft Copilot is an AI assistant integrated into all Microsoft 365 apps.

The county eventually wants to use Copilot to aid staff in their everyday work. Copilot will help staff save time navigating, summarizing and analyzing long, complex government documents and staff emails.

The county is working to obtain a Microsoft Copilot license, which costs about $30 per month and grants all county staff access to Copilot features. Right now, the program is in its pilot phase of testing.

“There are a lot of areas where we can integrate AI, but right now, it’s mostly about researching our internal efficiency tools for that and also on the side of resident facing tools as well,” Shaik said.

His biggest concern with integrating AI systems for a government client like Alachua County, he said, is privacy. Privacy is a large factor because AI systems will often interact with sensitive information related to residents, like personal, financial or service related data, Shaik said.

“Any day, I would pick the privacy and the security over accuracy,” Shaik said. “We cannot afford to give out data or have any leakages in terms of data, so we have been very, very cautious.”

The county meets with AI vendors at least once a month, he said, and privacy and accuracy are the biggest things he looks for in any company.

“There will always be room for improvement, and we’re always exploring products,” he said.

Shaik is now building security and privacy guardrails for the Copilot project. The pilot phase is setting a good precedent for work with future vendors, he said, which makes him optimistic.

“We’re in a very strong position in terms of data governance and security,” Shaik said. “So that will enable us, going forward, to have more smooth adaptations with the rest of the AI.”

AI introduction in Alachua County

Gee Chow, Alachua County’s information technology specialist director, said the county is making strides toward artificial intelligence integration.

“If we become more efficient, we can serve our customers better,” Chow said.

Last month, more than 100 county staff members participated in a four-week AI introduction course provided by Miami Dade College, Chow said. The course taught the basic concepts and uses of AI.

Chow said he thinks the training was well-received by staff.

“People appreciate the fact that the county has some kind of training offer[ed] in this topic, which is very relevant for us right now,” he said.

Chow noted the county is also considering internal AI systems like ChatGPT and Google’s Gemini. However, developing external, customer-facing AI systems is also a county priority down the road.

In the future, Chow hopes to integrate a CRM, or customer relationship management system, into the county’s website. CRMs have many functions, he said, including creating a centralized database for county information to help streamline and track progress of specific projects.

A chatbot would be an important aspect of the county’s CRM, Chow said. The tool could give community members immediate answers.

Because the county’s only in the beginning stages of AI implementation, Chow said they’ve run into hurdles and anticipate more.

“Challenges will be … understanding what the business needs are and making sure we interpret that correctly and provide the proper technology solution,” he said.

Funds aren’t yet allocated to AI operations in the county’s budget, Chow said — but this might change as operations grow.

“We will have to ask for proper allocations, but we’re not at that stage yet,” he said.

Expert opinion

Anthony Coman, a UF instructional associate professor, teaches professional writing in the Management Communication Center. Coman researches the impressions people have of AI communication.

He said using AI for external communication has pros and cons.

“If you’ve ever tried using the county website or looking up information, it can often be either intimidating or hard to navigate the system and hard to find a clear answer,” Coman said.

He said an advantage of using AI chatbots on government websites is that they can sift through information quickly and deliver unique answers to narrow questions.

However, Coman said issues can arise when businesses rely on AI systems to communicate with constituents.

“It’s important to remember that communication is a fundamentally human process, and people prefer to be communicated with by other humans,” Coman said.

Especially when communicating with concerned or upset constituents, Coman said businesses and governments should remember human responses go a long way.

“My research suggests that AI-powered chatbots will not be good at responding to complaints, and the impact may in fact be negative,” Coman said. “The county would be wise to have a strategy for pivoting to human responses when the chatbot receives complaints or angry messages.”

Coman also said governments using AI systems should be particularly careful due to the potential sensitivity of information shared.

“I think that the most important things to remember are that AI is not infallible,” Coman said. “Accidentally having wrong information get out there or misinforming a constituent would be problematic.”

Integration around north central Florida

AI integration isn’t unique to the county. Ryan Morales, owner of the Delpuma AI consulting firm, has worked with 150 clients in north central Florida to integrate AI into their business models over the past two years.

Morales advises companies on how AI can fill potential gaps in their workflows. He holds seminars to teach businesses how to use AI productively without feeling overwhelmed.

Marketing tactics and internal organization systems, such as CRMs, are the most common uses implemented, he said. Leaders in AI integration are often law and medical offices, Morales said, because they have the money to stay at the forefront of the development.

However, he said he’s seen a sharp increase in small businesses and solo entrepreneurs seeking AI consulting and services in the past couple of years — primarily for making advertisements and promotional content with generative photo and video technology.

“Now it’s basically everybody and anybody, because the cost has come down so much,” he said. “What used to cost thousands of dollars just for five seconds of video is now being done for maybe $20 to $40 on a tool online.”

Seven out of 10 of Morales’ clients are small businesses looking to elevate their practices, he said. He expects AI to be an integral part of many companies in the near future, helping them deliver better products and services.

“I always tell business owners, ‘It’s best you embrace it now, learn about it now, because if you don’t, your competitors are the ones that are going to outpace you,’” he said.

@kaitmccormack20 kmccormack@alligator.org

How 3 small Gainesville businesses are using — or rejecting — AI

By Lily Hartzema Alligator Staff Writer

When it comes to smelling fish, there’s only so much artificial intelligence can do.

As the integration of AI becomes more prominent, local businesses are deciding how far they are willing to incorporate it into their systems. For some, AI serves as a tool for brainstorming ideas, managing inventory or scheduling shifts. For others, it’s an unnecessary tool that can’t replace human-driven operations — and one that experts say leaves customers skeptical.

Lee Dedrick, the 60-year-old co-owner of Northwest Seafood in the Millhopper area of north Gainesville, doesn’t find it necessary.

Because Northwest Seafood’s products rely heavily on freshness and quality, the company prioritizes hands-on inspection and trusted relationships with suppliers — something AI can’t replace, he said.

“We focus on inspecting the quality of seafood firsthand,” he said. “Taking a look at it, feeling it, smelling it, touching it, talk[ing] to the people who catch it, produce it, import it.”

While Dedrick said he knows friends and associates who use AI for marketing, Northwest Seafood’s service model remains centered around human operations. Even when it comes to tracking inventory, Dedrick said, visual inspection remains more practical.

“We’re a small business,” Dedrick said. “It takes less than 10 seconds to walk in and put your eyes on what’s stacked up in boxes with labels on them.”

But other businesses have found uses for AI. Small business use of AI increased 18% nationwide from 2024 to 2025, according to an annual report from the U.S. Chamber of Commerce. Over half of retail and restaurant owners reported using the technology.

Eros Puentes, the 38-year-old general manager of La Maracucha Restaurant & Creperie, said while AI helps with internal operations, it will not replace human interactions with customers.

The restaurant operated as a food truck from 2017 to 2023, when the business moved to a brick-and-mortar location on West University Avenue.

When it comes to marketing and promotions, he said, he doesn’t use AI to create posts. However, he used AI to help him visualize a redesign of the restaurant’s logo when he moved to the brick-and-mortar location.

Now, Puentes uses AI for internal operations, keeping track of inventory and making alterations to online menu items, but not for front-end services.

“Our customers do not interact with AI when they’re trying to get our services,” Puentes said.

In separating customer relations from AI, Puentes breaks from the norm among small business owners. Among owners who reported embracing the technology in the 2025 Chamber report, 87% agreed AI helped them communicate more with customers, with a similar percentage saying AI helped them find new customers and build stronger relationships.

La Maracucha uses Toast as its management software to optimize operations and sales. Puentes said he uses the point-of-sale system that Toast provides, which includes its own version of ChatGPT where restaurant owners can ask restaurant-related questions.

“It is great for restaurant questions,” he said. “If you want to solve something quick, I ask the question; it gets me the answer.”

The AI service allows the restaurant to more efficiently manage its menu for online orders, he said. It makes real-time adjustments to online menu items when an ingredient used in several dishes runs out. This saves the staff from manually updating each dish, he said.

“Otherwise, they [employees] have to go to each category and put ‘out of stock’ — that takes time,” Puentes said.

Puentes said he hopes to see an AI tool that would allow him to create a website entirely based on his artistic vision and preference. An AI chat assistant could be implemented alongside it to address simple customer questions.

Read the rest online at alligator.org.

@lilyhartzema lhartzema@alligator.org

Caroline Walsh // Alligator Staff

Sameer Shaik, an artificial intelligence analyst, looks at his computer in his cubicle, Friday, April 3, 2026, in Gainesville, Fla.

Butler serves as the director of the Florida Institute for Cybersecurity Research. He’s researched AI and sexually explicit deepfakes with graduate students and researchers at UF and Georgetown University. The team analyzes the development of deepfakes, their harm and how humans can detect them.

“This is an area that has seen rapidly increasing levels of quality compared to when they started,” Butler said.

Most commercial AI models, such as ChatGPT, have internal guardrails to prevent explicit content creation, Butler said. But perpetrators can train some open-source models, such as DeepFaceLab, to create harmful content.

In a Summer 2025 characterization study, he said, his team found many of the chatbots didn’t verify age or consent. They’ve also found evidence of online discussion boards where users exchange tutorials on how to generate the images.

People in the community who have been victimized by deepfakes have reached out to his team for help, Butler added.

Legislative restrictions

A law approved by Gov. Ron DeSantis in May 2025 directly prohibits possessing, requesting and creating nonconsensual sexually explicit material.

Violating the law is a third-degree felony, punishable by up to five years in prison and registration as a sex offender.

It passed entirely unopposed on both the House and Senate floors.

Despite the action’s legislative success, questions remain for researchers like Butler. It’s unclear whether the legislation refers to the AI companies themselves, he said, and it doesn’t clarify their responsibility to monitor what’s happening on their platforms.

The question arises, he said, of whether an AI platform is partially responsible for the creation of nonconsensual imagery, even if the platforms themselves are not distributing the images.

Preventing deepfakes relies on collaboration between legislators and technical communities, Butler said.

“The ability to create these images is clearly very easy,” he said. “The solutions are really that we need to be addressing how to mitigate and stop these.”

Ryan Kennedy, the chief operating officer of Florida Citizens Alliance, lobbied for the law passed in 2025, but he said more legislation is necessary to protect Floridians.

This legislative session, the Florida Citizens Alliance supported a new Florida bill, the “Artificial Intelligence Bill of Rights,” which would place stricter regulations on AI companies. The proposal would ban chatbots from communicating with minors without parental consent, and chatbots would be required to frequently remind users they are not speaking to humans.

The bill passed through the Florida Senate but got shot down in the Florida House of Representatives.

Kennedy believes there’s still hope — and need — for the bill to resurface.

“Congress is very slow to act on federal legislation,” Kennedy said. “We believe that Florida needs to be a leader in this and protect the citizens here.”

It will always be a challenge for legislation to keep up with ever-evolving technology, said Zoey Scheinblum-Brewer, the policy and grassroots coordinator for the Rape, Abuse & Incest National Network.

RAINN has received an increase of calls related to sexually explicit deepfakes on its national hotline, she said.

The nonprofit anti-sexual violence organization provides support to survivors and advocates for policy change.

This growing form of sexual violence is just as negatively impactful as in-person offenses, Scheinblum-Brewer said.

In many of the cases RAINN has worked on, deepfakes are created by people directly connected to the victim, including peers and classmates, Scheinblum-Brewer said. Still, anyone pictured in images online is susceptible to being victimized.

“Nonconsensual intimate images take away a person’s control over their body and identity entirely,” she said. “Now, you don’t even have to be in the same room as your perpetrator in order for them to harm you.”

@vanessajnorris vnorris@alligator.org

Deepfakes are digital content manipulated or generated using artificial intelligence.

“We feel that our students need to be properly educated to use AI,” he said.

Accurate AI detection is not truly feasible, he said, and even the best AI detectors have a 4% false positive rate.

“This means that if you use them and rely on them, you’re going to send 4% of your students to the Dean of Students [Office for] Honor Code violation while they did not use AI,” he said. Preventing this, he said, is part of the center’s training.

‘They are not learning anything’

Shu-Jen Huang, a UF professor of mathematics, teaches a large mathematics class in which students complete assignments based on the computing platform MATLAB. At the start of the course, the AI class policy is made clear to students: They may not use AI to complete homework.

However, she said of 600 students, at least 80 were found using AI to complete their very first assignment.

“Some students just do the minimum work. They just put everything to AI, and they get the results,” she said. “They are not learning anything.”

Huang described multiple strategies she has tried to detect AI misuse. One method involved embedding words and prompts in white text that would not appear on a screen but could trigger incorrect responses if a student copied and pasted the assignment into a chatbot.

However, some students quickly found ways to work around these methods, she said.

Instead, she said, she started embedding prompts into the assignment template designed to produce specific wrong answers if students pasted the assignment into a chatbot.

Huang said detecting AI use can be difficult when students make edits after generating initial responses with AI. Some of her students simply copy prompts into assignment submissions, while others refine AI output, making it harder to identify without deeper comparison.

AI creates an unfair advantage, she said, because some students spend hours trying to complete an assignment, while others finish in a matter of minutes.

“If you don’t want to spend that time, then I don’t think you deserve to get an A,” she said.

Inside the conduct process

At UF, alleged academic misconduct, including AI-related cases, is handled through the Dean of Students Office and Honor Council framework.

When a faculty member suspects an Honor Code violation, the case typically begins with a referral to conduct officers. Students may receive an email outlining the alleged violation, followed by a meeting to review the charge. From there, a student may choose to accept responsibility for the alleged misconduct or proceed to a formal hearing.

Read the rest online at alligator.org. @s_maharaj1611 smaharaj@alligator.org

Daniela Penafiel // Alligator Staff

www.alligator.org/section/opinions

Adrien Brody should not have won his Oscar

There are some awards that represent the true pinnacle of one’s craft.

They are so synonymous with success that their attainment solidifies the awardee as one of the best in their field. For a musician, it’s the Grammy. For a college football player, it’s the Heisman.

And for an actor, that award is an Oscar.

Chief among all the Oscars that can be won is the Academy Award for Best Actor.

The list of men nominated for this award represents some of the greatest actors of all time; the list of men who have won it is even greater. It includes legends such as Tom Hanks, Marlon Brando and Daniel Day-Lewis.

On March 2, 2025, the Academy gave the award to Adrien Brody for his performance in “The Brutalist.” Brody, who had already won the award in 2003 for his performance in “The Pianist,” played the Hungarian-Jewish architect László Tóth. The three-and-a-half-hour film follows Tóth’s immigration to and subsequent struggle in 1940s America. Brody fought against a field of memorable performances to get the award. Against him were Timothée Chalamet — who had spent six years learning guitar to play Bob Dylan in “A Complete Unknown” — and Sebastian Stan, who masterfully embodied the mannerisms of Donald Trump while not veering into caricature for his role in “The Apprentice.”

But, the sad truth: Adrien Brody shouldn’t have won the award.

The film brought in Ukrainian company Respeecher to use artificial intelligence for “voice conversion technology.” Although the company explained it was only used to “polish a few tricky Hungarian vowels in Brody’s existing performance,” Brody’s performance nonetheless utilized AI.

The use of AI in Brody’s performance, and the fact that a performance benefiting from AI earned the premier award in the acting world, sets a dangerous precedent for the future of acting.

The defense for AI in Brody’s performance was that he was incapable of fully adopting the Hungarian accent. While that is understandable — the Hungarian accent is one of the hardest to learn — it does not excuse the use of AI.

If the authenticity of the character’s accent was so important, then the company should have used a Hungarian actor. Through moments like these, when films require precise accents, actors who would otherwise be unknown should be brought to the spotlight.

Actors who currently thrive in the films of their home country may never have the chance to make it to the international stage simply because film companies opt to cast well-known actors and use AI to create the accent.

Timothy Dillehay

opinions@alligator.org

The use of AI in “The Brutalist” insulted the art of acting, of being able to assimilate into a role so well the audience cannot tell. In a year when another actor spent years learning a new instrument and another studied footage from the 1970s to learn the mannerisms of one of the most caricatured men of our time, the award was given to the actor who fell back on AI to fix his performance.

The award created a dangerous precedent.

Today, AI is used to slightly improve the small shortcomings of Brody’s accents. Tomorrow, it may be used to completely create the accent.

@timothydilleh

tdillehay@alligator.org

‘The Match Point’: Bring back the bad line calls

No disputes with umpires over bad line calls. No resounding boos reverberating across a packed stadium after a controversial call. No one standing up to shout, “Hey Ref, you forgot your glasses!” (Because we’ve all done that, right?) No iconic eruptions of emotion from players that glue you to your seat like you’re watching a James Bond action scene.

It sounds clean. It sounds responsible. It sounds … boring.

Electronic line-calling and automated umpire systems are quietly stripping away a part of sports we love: the messy stuff. Who’s the overzealous fan supposed to yell at now?

Recently, Major League Baseball introduced its Automated Ball-Strike Challenge System for the 2026 season, allowing players to appeal the home plate umpire’s ball and strike calls.

Tennis already took a headfirst plunge into automated line-calling when the U.S. Open removed line judges entirely in 2022. Today, nearly all top-level matches are played without human line judges.

The technology doesn’t argue back. It doesn’t have a bias you can blame or eyesight you can poke fun at. It’s unquestionable.

But it isn’t perfect, even though it’s supposed to be.

Last year at Wimbledon, the automated system glitched and stopped working mid-match. When it’s wrong, it’s a quieter, less debatable version of error — one you can’t argue with, one you just have to accept.

And where’s the fun in that?

Watching a tennis match without line judges feels like something is missing. It’s like an ice cream cone without sprinkles or Rafa Nadal without his headband.

Everything is technically still there. But it doesn’t feel complete.

“There’s a little bit of life missing from the court,” said Scarlett Nicholson, junior Gators women’s tennis player. “If you just make it [the game] too perfect, it’s not as enjoyable anymore.”

One of tennis’s greatest legends, John McEnroe, built part of his identity on his fiery reactions to line calls. People didn’t just come to watch great tennis; they came to hear him shout, “You cannot be serious!” whenever a line call inevitably upset him.

People come for the drama. The characters.

In college tennis, electronic line-calling systems are slowly being phased in. I’ve played a few matches with it, which have generally consisted of fewer disputes and less unpredictability. But also, fewer moments you remember.

As a player, I’m all for reducing errors. You never want to have to worry about being cheated out of a match because of a bad call. Nobody wants a championship decided by something obviously wrong.

But there’s a difference between correcting the worst mistakes and eliminating imperfection entirely. Imperfection is where emotion lives. A nebulous call can flip momentum, wake up a crowd and turn a forgettable match into something people talk about for days.

Stripping sports of human umpires in the name of optimization is a fragile decision. It might be “better,” but it also might be flatter. Linespeople are a historic part of the fabric of the game.

Maybe that’s the trade-off we’re making: fewer errors, fewer arguments, fewer spikes in blood pressure.

India Houghton opinions@alligator.org

But also, fewer reasons to care just a little bit more.

Sports don’t need to be perfectly efficient. They’re not airport security lines. They need friction. They need unpredictability. They need, occasionally, something to go a little wrong.

Because once the arguments disappear, once the tension fades, once there’s no one left to question or blame or challenge, you don’t just lose the bad calls. You lose a piece of the game.

@indiahoughton16 ihoughton@alligator.org

MONDAY, APRIL 13, 2026

www.alligator.org/section/the-avenue

No more notecards: UF uses AI-aided system for name pronunciation at graduation

TASSEL PLATFORM ALLOWS STUDENTS TO VERIFY PRONUNCIATIONS AHEAD OF CEREMONIES

By Aaliyah Evertz Avenue Staff Writer

For many graduates, the most anticipated moment of commencement is also the most uncertain: hearing their name said out loud in front of thousands of people.

As UF prepares for Spring ceremonies, the university is continuing to expand its use of Tassel, an artificial intelligence-assisted system designed to address a recurring issue at graduation — name pronunciation.

UF first introduced Tassel, formerly known as MarchingOrder, in Spring 2024. By Fall 2024, the platform incorporated an AI component that generates pronunciations for student names, which are then verified or corrected before voice actors produce final recordings. The system now appears in multiple ceremonies as thousands of students prepare to graduate.

UF commencement director Jason Degen said the change was driven in part by the scale of UF’s ceremonies and the limitations of previous methods.

With about 9,000 students participating in Spring commencement, faculty readers often encountered unfamiliar naming conventions across a diverse student body.

“I want our students to feel like they’ve been as best represented as possible,” Degen said.

Only three of the 16 UF colleges are not participating in the Tassel AI program: the College of Liberal Arts and Sciences, the Warrington College of Business and the College of Agricultural and Life Sciences.

Before adopting Tassel, UF used tools such as phonetic spellings and audio platforms to assist with pronunciation. Those systems depended on student participation and still required readers to interpret names in real time.

The Tassel system shifts that process earlier. Students who plan to attend commencement receive a prompt to confirm how their name should be displayed and pronounced.

Bayden Armstrong // Alligator Staff Graduation tassels on sale at the UF Bookstore in the Reitz Union, Friday, April 10, 2026, in Gainesville, Fla.

They can listen to AI-generated versions, make corrections or submit their own recordings.

In an email, a Tassel representative described how the system integrates human voice work with AI-generated audio:

“We partnered with our voice professionals, compensating them to use their voices for AI-generated announcements. These aren’t basic AI voices like Siri or Alexa — they’re broadcast-quality and virtually indistinguishable from manual recordings. Our voice actors are fully supportive of the AI naming announcement process, as it significantly reduces their recording workload during an already demanding period.”

The system also allows students to confirm the name that will be read during the ceremony.

Paola Grijalva, a 22-year-old UF advertising senior, said she initially felt unsure about the system because of past experiences with her name being mispronounced.

“When people try to say my name, it’s always difficult,” she said. “So my thought process was like, ‘If a person can’t say my name right, how is a robot going to say my name right?’”

Read the rest online at alligator.org/section/the-avenue.

@aaliyahevertz1 aevertz@alligator.org

Is the future of film AI-generated?

humans first,” he said.

Because AI scrapes prior work from the internet, its output comprises an amalgamation of “everything and everybody’s thoughts,” Vollmer said. The result, he said, is

El Caimán

LATINO COMMUNITY DEBATES THE PROS AND CONS OF CHATBOT TRANSLATION

By Angelique Rodriguez

El Caimán Staff Writer

Claudio Ferrer is a native Spanish speaker. That doesn’t stop the 18-year-old UF electrical engineering freshman from getting a little extra translation help from ChatGPT.

Ferrer came to the U.S. from Havana when he was 11. He speaks Spanish every day with his closest friends, his parents and his girlfriend.

Sometimes, though, he uses artificial intelligence for help with language translation. He doesn’t use it to write full paragraphs or essays, he said. But when he writes academic papers or applications for scholarships or internships, he asks ChatGPT how to express certain professional terms or phrases in English. His goal is to find more elevated alternatives to sound more professional, he said.

“When I’m thinking of a phrase that sounds good or professional in Spanish and I can’t figure out how to make it sound the same in English, I usually ask ChatGPT,” he said.

Occasionally, ChatGPT offers him a translation that isn’t exactly what he’s looking for. But in most cases, Ferrer said, it helps him find a suitable equivalent for what he has in mind in Spanish. He said he sees no harmful effect of AI on language translation.

“I don’t see anything wrong with it,” Ferrer said. “I think it’s good.”

Some linguistics experts and translators agree AI is useful for language translation if used in moderation. Other students and faculty members criticize the technology for its inability to retain cultural knowledge and say human translation can never be replaced.

Russell Scott Valentino, a professor and chair of the Department of Slavic and East European Languages and Cultures at Indiana University and a former president of the American Literary Translators Association, said he has used computer-assisted translation in his work.

Valentino teaches translation workshops and a class called “How to Translate Anything.” He uses computer-assisted translation and teaches it as part of his classes.

Many modern professional translators use AI- and computer-assisted translation, he said. When translating texts with specialized vocabulary, such as legal texts, AI- and computer-assisted translation tools build up a memory for a large number of terms, which speeds up and simplifies the translation process, he said.

Sometimes, Valentino said, people use artificial intelligence and machine translation to translate a text and then hand it to a professional translator, who gives it a second pass and corrects the content to refine nuance, stylistic choices and accuracy.

“When you get to the level where you’re doing something very specific with the translation and thinking about the audience, AI can only do so much,” Valentino said.

AI is prone to errors, Valentino said, so it’s necessary to check whether it is misinterpreting a text. This happens often, especially with specialized texts, he said. If, for example, a medical record needs to be translated, it must be done with great care because of the potentially serious consequences of a bad translation.

How are UF professors using AI — and how do students feel about it? Read more on pg. 13.

The hardest texts for AI to translate, he said, are those with a strong cultural component.

Valentino said many words exist within a cultural context. When translated into another language, their meaning could change and even become offensive.

“So, someone tells a joke. Can AI make it funny? Is the joke worth translating? Is it in poor taste? Those are all, in a way, human questions,” he said.

AI can also struggle with poetry or idioms more than with simple texts like emails, said Brent Henderson, a professor and chair of UF’s linguistics department.

People whose native language is Spanish or Haitian Creole can benefit from AI when translating local government websites and simple texts, Henderson said. In the Gainesville and UF community, the most common countries of origin among foreign-born residents are Cuba, Haiti and Colombia.

“I don’t think AI is going to completely replace the need for human translators … but being able to make more knowledge available in more languages for people seems like a very positive thing,” he said.

However, Adam Bishop, a UF linguistics doctoral student, is more skeptical of AI translation. Humans can translate the meaning of texts much better, he said.

“I think there’s something very good, very beautiful about human translation,” Bishop said. “A human translator will always be able to think of alternative ways to express

Follow us for updates: For updates from El Caimán, www.alligator.org/section/spanish.

Can chatbots fill the Latino therapy gap?

FLORIDA EXPERTS WEIGH IN ON STIGMA, TRANSLATION AND RISKS

By Ariana Badra

El Caimán Staff Writer

Translated by Simon Wieselberg

El Caimán Staff Writer

Latino people receive mental health treatment at a lower rate than U.S. adults overall. Today, experts say chatbots could fill that gap, though the technology brings its own complications.

Daniel Enrique Jimenez, a clinical psychologist and associate professor in the department of psychiatry at the University of Miami Miller School of Medicine, researches mental illness stigma in older Latino communities.

“If we, as mental health providers, just wait and be like, ‘I speak Spanish, come,’ the reality is that not a lot will,” Jimenez said. For older Hispanic individuals, mental illness can be seen as a character weakness. If someone does not get out of bed and go to work, the reaction is often to question, “What is wrong with you?” he said. The perception of mental illness as a personality flaw to be fixed prevents Latino people from understanding mental illness, like any physical sickness, needs to be treated by a doctor, he said.

Some people also mistakenly think leaving their home country is the root of their psychological distress, Jimenez added. Migration can act as “the match that lit the fire” of someone’s mental illness, but that perspective alone fails to account for someone’s genetic predispositions.

Due to the stigma, language and cultural barriers that discourage Hispanic individuals from seeking mental health care, and the scarcity of resources and interpreters, the rise of AI has the potential to shift mental health care services, he said.

Lantor // Alligator Staff

A sign for the Department of Spanish and Portuguese stands outside Dauer Hall on the UF campus, Wednesday, April 8, 2026.

“La IA nos da una herramienta que nos permite decir ‘Ok, ahora podemos poner los servicios de la salud mental en las manos de gente que típicamente no tienen acceso”, dijo Jimenez.

Esta herramienta podría reducir los costos, especialmente para las personas sin seguro médico, y ampliar el acceso a aquellas personas que atraviesan dificultades o que sienten cierto estigma sobre ir a terapia, dijo.

A pesar de estos beneficios, la integración de la IA suscita inquietudes en la comunidad de salud.

“Le estás confiando a este chatbot cosas que no le contarías a ninguna otra alma”, dijo Jiménez.

También, comentó que la IA es incapaz de empatía, limitándolo al brindar consuelo durante momentos de vulnerabilidad. Al mismo tiempo, estos datos personales quedan a disposición del chatbot, que puede recopilarlos y utilizarlos de diversas formas, fuera del control del usuario.

Jiménez sostuvo que la integración de la IA en el sector sanitario es, probablemente, algo inevitable, entonces los profesionales

de la salud, los investigadores y los responsables políticos deberían priorizar el desarrollo de marcos regulatorios eficaces para su uso.

“No pretendo adoptar una postura de ‘estoy en contra de la IA’ o ‘estoy a favor de la IA’”, aclaró. “La gente ya la está utilizando, y lo hace precisamente de esta manera. Por tanto, ¿cómo podemos asegurarnos de que todas estas acciones se están haciendo y de que estas herramientas se emplean de forma segura y eficaz?”

María Laura Mecías, profesora del Departamento de Estudios Hispánicos y Portugueses de la UF y intérprete certificada en la Red de Clínicas de Acceso Equitativo de la UF, dijo que la persistente escasez de recursos de salud mental resulta más acentuada para los pacientes que no hablan inglés.

En su labor como intérprete, Mecías observa con frecuencia que los médicos intentan referir a los pacientes latinos a servicios de salud mental en español, pero las opciones disponibles son escasas. El uso de herramientas de traducción o de IA para ayudar a validar los síntomas podría contribuir a cerrar esta brecha con el paciente, dijo.

También, hizo referencia a estudios recientes realizados en España que sugieren que los sistemas de IA pueden reforzar, o amplificar, ciertos estereotipos cuando dichos sesgos se hallan integrados en sus datos de entrenamiento.

“Si se alimenta un modelo con sesgos, este puede volverse excesivamente generalista e incapaz de identificar adecuadamente aspectos como, ‘bueno, esta persona es diferente, este contexto es diferente’”, dijo Mecías.

Las diferencias culturales pueden pasar desapercibidas cuando la IA no logra percibir la interseccionalidad que define al paciente, dijo.

Algunas enfermedades mentales se comprenden y diagnostican en los pacientes estadounidenses mediante criterios sintomáticos específicos y listas de verificación; sin embargo, no siempre se manifiestan de la misma manera en los pacientes latinos, dijo. En el caso de la depresión, por ejemplo, indicó que muchos individuos hispanos no pueden simplemente dejar de trabajar o quedarse en casa, a pesar de estar lidiando con dicha afección.

“No están llorando ... pero sigue siendo depresión”, dijo Mecías.

Otros usos de la IA en la atención médica para los latinos

Mecías notó que los recursos en la UF son relativamente accesibles para los estudiantes, pero afirmó que la situación es diferente en la comunidad de Gainesville en general.

Por ejemplo, según Mecías, el Hospital Shands solo tiene un intérprete de español a tiempo completo y uno a tiempo parcial para atender a su población de pacientes. Además, los materiales traducidos, como los formularios de registro de pacientes, a veces se ofrecen en un número limitado de idiomas, e incluso esas traducciones llegan a un punto límite en el que el paciente no puede continuar en ningún otro idioma que no sea el inglés, comentó.

Mecías considera que las traducciones mediante la IA pueden ser útiles, pero carecen de una voz humana y de comprensión cultural. Si se utiliza la IA, agregó, debería ayudar a los pacientes a encontrar los recursos profesionales existentes que satisfacen sus necesidades específicas, en lugar de sustituir los servicios de terapia y traducción.

Dylan Siegel, un estudiante de segundo año de la UF de 20 años que se especializa en Lenguas y Literaturas Extranjeras de China y que habla inglés, portugués, español, mandarín, criollo y vietnamita básico, afirmó que las barreras idiomáticas pueden impedir que las personas se expresen completamente utilizando las palabras exactas que las herramientas de traducción o la IA no logran capturar.

Lea el resto en línea en alligator.org/section/spanish.

@arianavbm abadra@alligator.org

Can chatbots fill the Latino therapy gap?

FLORIDA EXPERTS WEIGH IN ON STIGMA, TRANSLATION AND RISKS

By Ariana Badra Alligator Staff Writer

Latino people receive mental health treatment at a lower rate than U.S. adults overall. Today, experts say chatbots could fill that gap — though the technology brings its own complications.

Daniel Enrique Jimenez, a clinical psychologist and associate professor in the department of psychiatry at the University of Miami Miller School of Medicine, researches mental illness stigma in older Latino communities.

“If we, as mental health providers, just wait and be like, ‘I speak Spanish, come,’ the reality is that not a lot will,” he said.

For older Hispanic individuals, mental illness can be seen as a character weakness. If someone does not get out of bed and go to work, the reaction is often to question, “What is wrong with you?” he said.

The perception of mental illness as a personality flaw to be fixed prevents Latino people from understanding that mental illness, like any physical sickness, needs to be treated by a doctor, he said.

Some people also mistakenly think leaving their home country is the root of their psychological distress, he added. Migration can act as “the match that lit the fire” of someone’s mental illness, but that perspective alone fails to account for someone’s genetic predispositions.

Due to the stigma, language and cultural barriers that discourage Hispanic individuals from seeking mental health care, and the scarcity of resources and interpreters, the rise of AI has the potential to shift mental health care services, he said.

“AI provides this tool of ‘OK, now we can put mental health services in the hands of people that ordinarily wouldn’t or couldn’t,’” Jimenez said.

The tool could reduce costs, especially for those without insurance, and expand access for those who struggle with or feel stigma around attending therapy, he said.

Despite these benefits, the integration of AI raises concerns in the health care community.

“You’re telling things to this chatbot that you wouldn’t tell a soul,” Jimenez said.

He said the system is incapable of empathy, which prevents it from providing comfort during times of vulnerability. At the same time, this personal data becomes available for the chatbot to collect and use in various ways beyond the user’s control.

Jimenez said AI integration in health care is probably unavoidable, and health care professionals, researchers and policymakers should prioritize developing effective regulatory frameworks for its use.

“I’m not trying to be like, ‘I am anti-AI, or I am pro-AI.’ People are using it, and they are using it in this manner,” he said. “So, how can we make sure that all these things are being done and that these tools are being used safely and effectively?”

María Laura Mecías, a professor at the UF department of Spanish and Portuguese studies and certified interpreter at the UF Equal Access Clinic Network, said the ongoing shortage of mental health resources is more pronounced for patients who do not speak English.

In her work as an interpreter, Mecías often sees doctors try to refer Latino patients to Spanish-speaking counseling services, but available options are scarce. The use of translation tools or AI to help validate symptoms can help bridge this gap with the patient, she said.

However, she pointed to recent studies conducted in Spain that suggest AI systems can reinforce, or even amplify, stereotypes when those biases are embedded in their training data.

“If one feeds a model with biases, it can become very generalistic and cannot properly identify ‘well, this person is different, this context is different,’” Mecías said.

Cultural differences can be overlooked when AI fails to see the intersectionality that shapes a patient, she said.

Some mental illnesses are understood and diagnosed in American patients through specific symptom criteria and checklists, but they do not always present the same for Latino patients, she said. In the case of depression, for example, she said many Hispanic individuals cannot simply stop working or stay home despite struggling with the condition.

“They are not crying … but it’s still depression,” Mecías said.

Other uses for AI in Latino healthcare

Mecías noted resources at UF are relatively accessible for students, but she said the situation is different in the broader Gainesville community.

For example, according to Mecías, Shands Hospital has only one full-time and one part-time Spanish interpreter serving its patient population. Additionally, translated materials, such as patient registration forms, are sometimes offered in a limited number of languages, and even those translations reach a limit where the patient cannot proceed in any language other than English, she said.

Mecías believes AI translations can be helpful but can lack a human voice and cultural understanding. If AI is used, she added, it should help patients find existing professional resources that meet their specific needs, rather than replace therapy and translation services.

Read the rest online at alligator.org.


How to Place a Classified Ad:

Furnished Rooms Rooms with internet, TV, fridge, desk, and utilities. Shared bath, kitchen, LR, DR and W/D. Drive by 2220 SE 44th Terr, call/text 386-623-9228 for info. 4-20-26-3-1

You need the money to do what you will Rich at Best Jewelry and Loan has the cash for those bills 523 NW 3rd Ave 352-371-4367 12-1-15-2

2/1 Short drive to UF w/ laundry, parking & backyard. Move-in early Aug. $1750/mo + utilities. 1009 NW 11th Ave. Call/text 904-290-3061 4-20-26-2-2

3 Bedroom 2 Bath close to campus w/ laundry, parking & backyard. Move-in early Aug. $1840/mo + utilities. 1402 NW 6th Pl. Spacious, comfortable layout! Call/text 904-290-3061 4-20-26-2-2

We Buy Houses for Cash AS-IS! No repairs. No fuss. Any condition. Easy process: Call, get cash offer and get paid. Call today for your fair cash offer: 1-321-603-3026 4-13-21-5

When the heat is on and it's bucks that you need, Best Jewelry and Loan your requests we will heed. 523 NW 3rd Ave 352-371-4367 4-20-14-5

The surf's up at "Pawn Beach". We're all making the scene. If you're in need go see Rich, Best Jewelry and Loan's got the "green" 523 NW 3rd Ave 352-371-4367 4-20-14-5

● UF Surplus On-Line Auctions ● are underway...bikes, computers, furniture, vehicles & more. All individuals interested in bidding go to: SURPLUS.UFL.EDU 392-0370 4-20-26-14-10

Donate your vehicle to help find missing kids and keep kids safe. Fast free pickup, running or not, 24 hr. response. No emission test required, maximum tax deduction. Support Find the Children. Call 1-833-546-7050 4-13-47-12

1920-1980 Gibson, Martin, Fender, Gretsch, Epiphone, Guild, Mosrite, Rickenbacker, Prairie State, D'Angelico, Stromberg. And Gibson Mandolins / Banjos. These brands only! Call for a quote: 1-833-641-6789 4-13-42-13

Shands Teaching Hospital and Clinics, Inc. in Gainesville, Florida is seeking a Medical Technologist Lead-Core Lab. Duties and responsibilities include but are not limited to overseeing technical operations in the relevant clinical lab section, including technical support of laboratory operations, problem resolution, technical development and procedure implementation, purchasing, etc. The position will also supervise preparation of specimens for analysis, perform analytical procedures when required, and be responsible for Quality Assurance functions, including data collection, analysis and reporting, as well as assume operational responsibility during the Manager's absence.

Position requires a Bachelor Degree in Clinical/Medical Laboratory Science, Medical Technology, Biology, or closely related field + 3 years of technical operations experience in Hematology and Coagulation. Must possess either a temporary or current license as a State of Florida Clinical Laboratory Technologist in Hematology and Coagulation.

For more information about the position, including instructions on how to apply, please visit us on-line at https://jobs.ufhealth.org/careers-home and reference job opening ID: 61471. 4-16-1-14

Trivia Test

1. GEOGRAPHY: Which country is also known to residents as Hellas?

2. U.S. STATES: Which state is the least populated?

3. ENTERTAINERS: Which show launched the career of comedian/actor Jim Carrey?

4. MOVIES: What museum is featured in the movie "Night at the Museum"?

5. HISTORY: When was Earth Day first celebrated?

6. MUSIC: Which song begins with the lines, "Is this the real life? Is this just fantasy"?

7. TELEVISION: Who starred in the title role of the TV drama "Designated Survivor"?

8. GENERAL KNOWLEDGE: What is the only sport that has been played on the moon?

9. LITERATURE: What is the name of the language used in the novel "1984"?

10. ANIMAL KINGDOM: What is a group of giraffes called?

1. Which Arizona Cardinals quarterback set a new NFL record for passes completed in a regular-season game with 47 versus the San Francisco 49ers in November 2025?

2. King Power Stadium, formerly known as Walkers Stadium, is the home field of which English Football League Championship team?

3. In November 1895, J. Frank Duryea won the first auto race in the United States, a 54-mile race from Chicago to Evanston, Illinois, and back in heavy snow, with what average speed in miles per hour?

4. After being drafted into the U.S. Army, New York Giants outfielder Willie Mays played for what Virginia military baseball team from 1952 to 1953?

5. Soviets Leonid Spirin, Antanas Mikenas and Bruno Junk swept the medals in what athletics event at the 1956 Melbourne Summer Olympics?

6. What multisport college coach and sports innovator was inducted into the College Football Hall of Fame in 1951 and the Naismith Basketball Hall of Fame in 1959?

7. In 1991, gambler Howard Spira was convicted of trying to extort $110,000 from what Major League Baseball team owner?

1. Jacoby Brissett.

2. Leicester City Football Club.

3. Approximately 5.25 mph.

4. The Fort Eustis Wheels.

5. The men's 20-kilometer race walk.

6. Amos Alonzo Stagg.

7. George Steinbrenner, owner of the New York Yankees.

Greece.

York City.

"Bohemian Rhapsody" by Queen.

Kiefer Sutherland.

2025 King Features Synd., Inc.

Cognitive computing curriculum: Should UF professors be able to use AI?

AI IS DEVELOPING RAPIDLY FOR INSTRUCTORS, AN EXPERT SAYS

By Leona Masangkay Alligator Staff Writer

Artificial intelligence usage isn’t just rising among students — it’s rising among professors, too.