1 • 2026

64-BIT MPU FOR MASS MARKET IoT

You should expect no less.

Precision, speed, and reliability are not optional. They are the baseline.

STM32C5 delivers all three, without pushing your bill of materials. Built on two decades of STM32 heritage, it is a family affair, and it shows.

STM32C5 gives you performance, dependable behavior, and the headroom to design without workarounds.

Generative AI has reshaped software development at remarkable speed. In many teams, a significant share of new code is now drafted with AI assistance. Boilerplate, unit tests, peripheral drivers, documentation, even architectural sketches can be generated in seconds. Productivity gains are real, and for general-purpose software the benefits are already measurable.

But embedded systems are different.

Unlike cloud backends or mobile apps, embedded software operates under strict physical constraints. Memory is limited. Timing must be deterministic. Power budgets are finite. In safety-critical domains, standards such as MISRA-C and ISO 26262 define what is acceptable.

A function that “works” is not enough. It must work predictably, within tight resource envelopes, and often under certification requirements.

Most generative models are trained on vast repositories of general-purpose code. They are statistically excellent at reproducing common programming patterns. They are not inherently aware of interrupt latency, stack depth constraints, DMA side effects, or worst-case execution time analysis. An AI-generated driver may compile and even pass superficial tests, yet still violate real-time guarantees or safety assumptions.

That does not make GenAI irrelevant to embedded development. On the contrary, it can accelerate scaffolding, documentation, test generation, and refactoring. It can serve as a powerful co-pilot.

However, in embedded engineering, responsibility cannot be delegated to probability. Determinism, traceability, and verification remain human obligations. Generative AI may write code, but only engineers can certify that it truly belongs in a device that must work every time, under every condition.

ETNdigi

Editor-in-chief

Veijo Ojanperä

vo@etn.fi

+358-407072530

Sales manager Rainer Raitasuo

+46-734171099

rr@etn.se

Advertising prices: etn.fi/advertise

ETNdigi is a digital magazine specialising in IoT and embedded technology. It is published twice a year.

ETN (www.etn.fi) is a 24/7 news service focusing on electronics, telecommunications, nanotechnology and emerging applications. We publish in-depth articles regularly, written by our cooperation companies and partners.

Veijo Ojanperä ETN, Editor-in-chief

ETN organises the only independent embedded conference in Finland every year. More info on ECF can be found on the event website at www.embeddedconference.fi.

The easiest way to access our daily news service is to subscribe to our daily newsletter at etn.fi/tilaa.

In Finland, the electronics sector had a turnover of 20 billion EUR in 2024 and employs 44,000 people. The computer and IT industry had a turnover of 19 billion EUR and employs 81,300 people.

In total, these high-tech industries account for more than 50 per cent of Finnish exports. The technology sector also represents more than 65 per cent of all R&D investments in Finland.

ETN is a Finnish technology media outlet for people working, studying or simply interested in technology. Through its website with daily news and technical articles, as well as newsletters and columns, ETN covers every aspect of high technology.

Join us in 2026. See the media kit here.

• Espoo-based IQM is building superconducting quantum computers to compete with giants like IBM and Google.

• When Finnish startup Donut Lab unveiled its new battery at CES 2026, it quickly became one of the most discussed technologies at the show.

RETURN TO GROWTH IN 2026

Growth in 2026 will be driven not by volume rebounds but by stronger design activity, AI deployment and more resilient architectures, says Rebeca Obregon, President of Farnell Global.

The release of Aliro 1.0 signals that the new industry standard for secure and interoperable access control is ready to conquer the market.

In the Nordics, EV charging is becoming everyday infrastructure, but the user experience still depends on the data connection behind the charger.

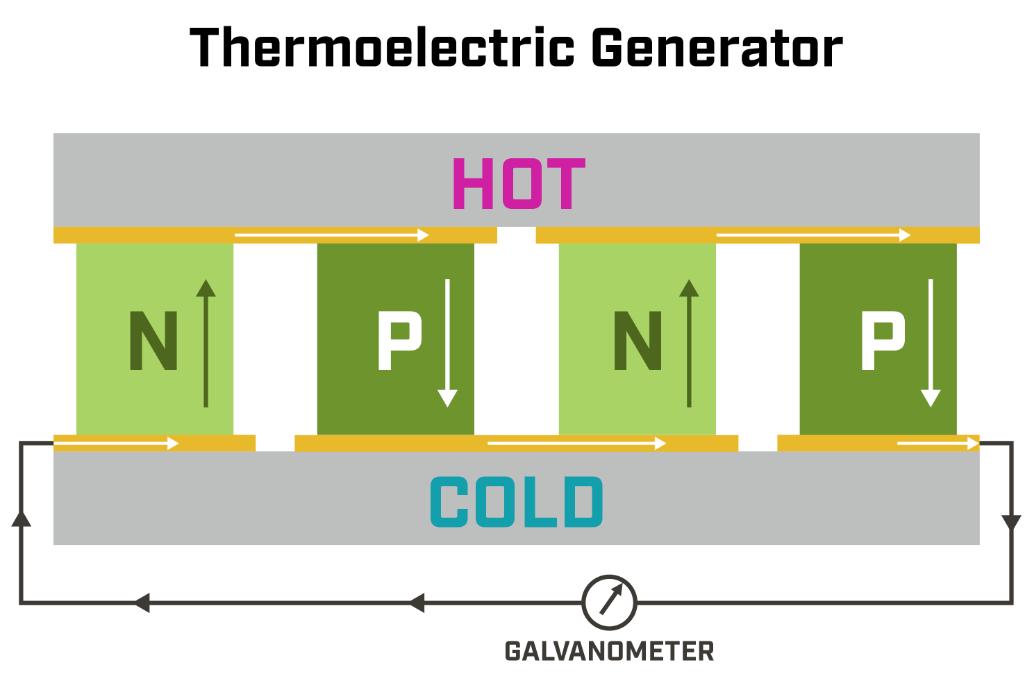

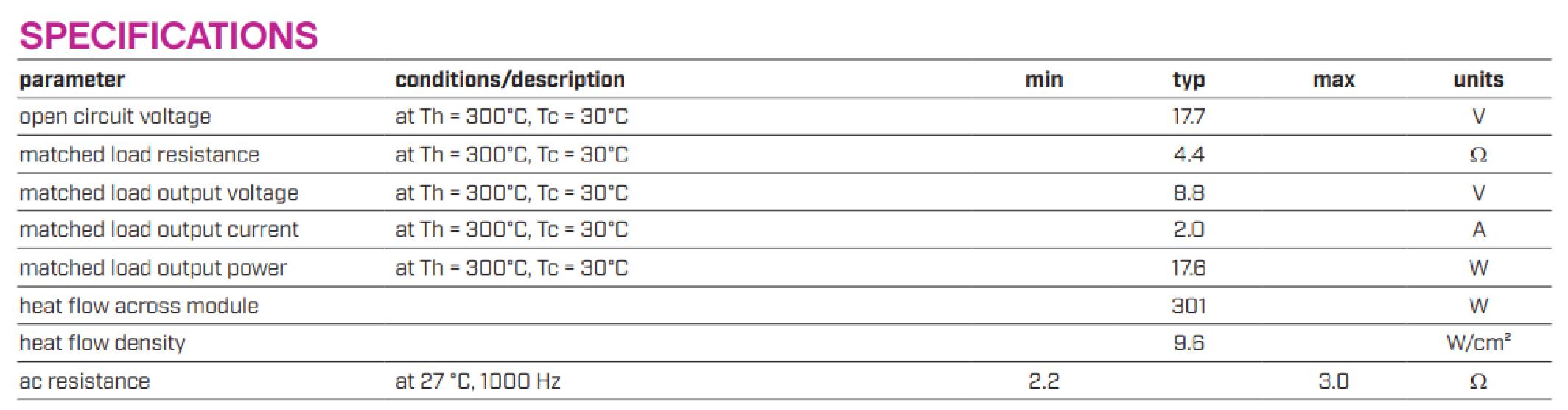

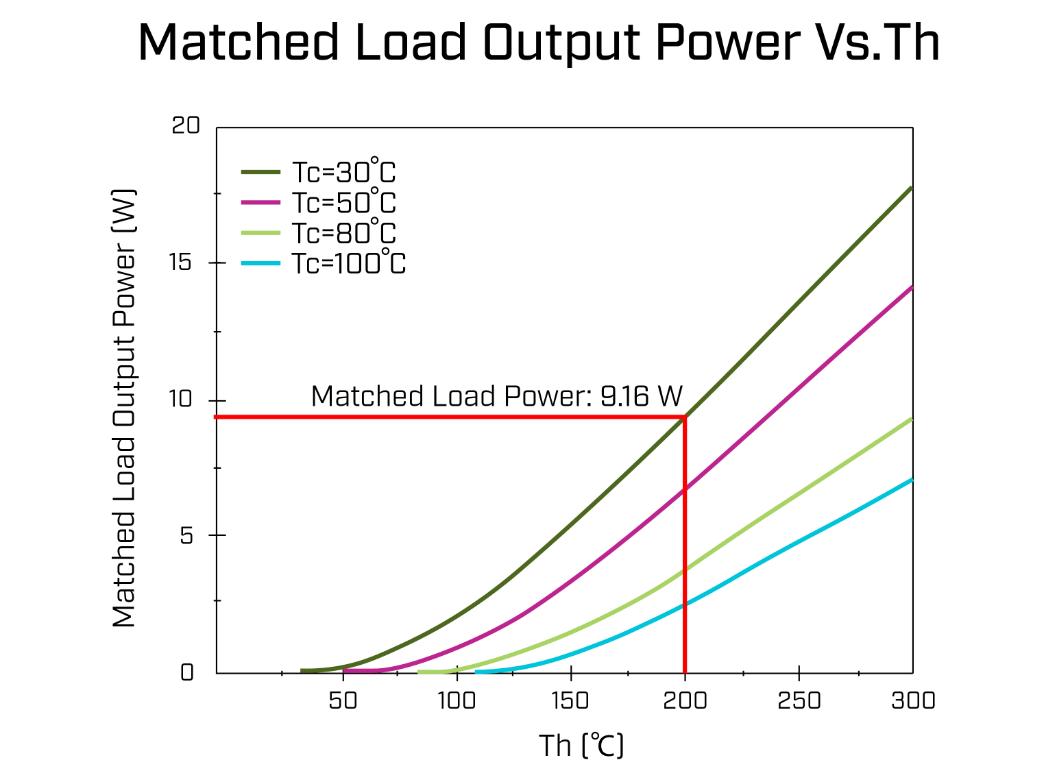

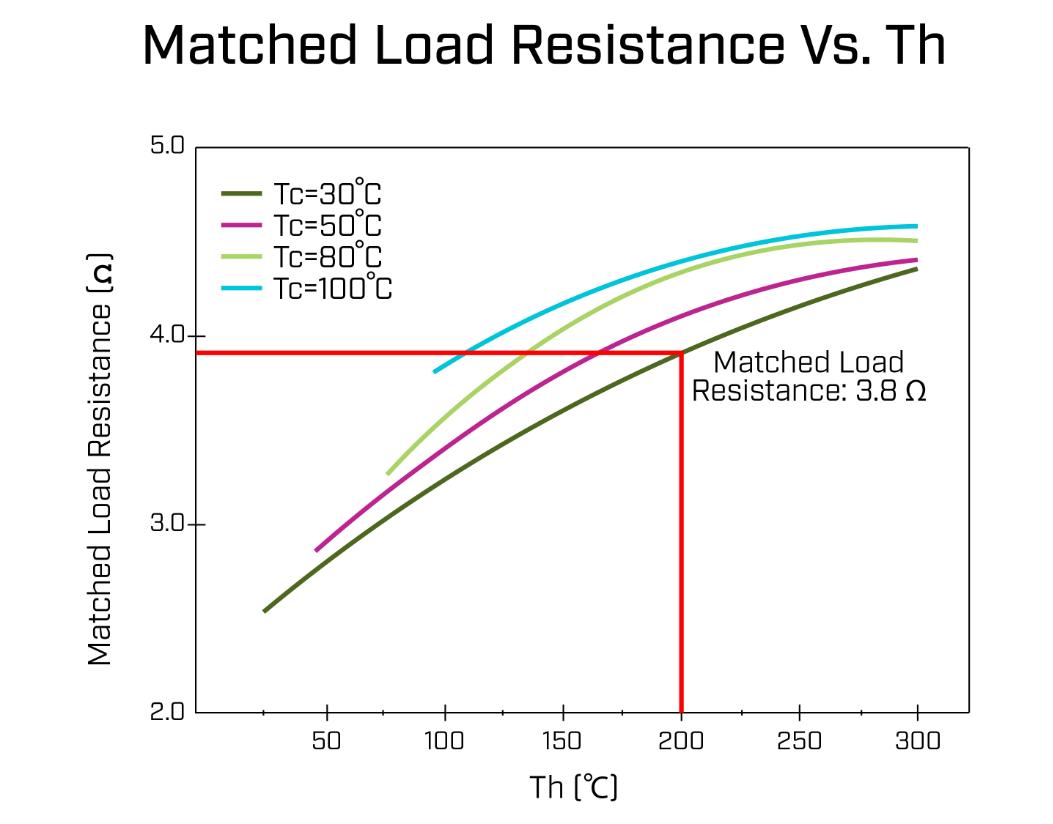

TURN HEAT INTO POWER

Since the formulation of the First Law of Thermodynamics, engineers have searched for ways to convert energy into forms that are easier to use.

TECHNOLOGY HELPS PREVENT HEAT STROKES

Biodata Bank’s CANARIA watch predicts dangerous rises in core body temperature before symptoms escalate, using Renesas’ RA0 microcontroller.

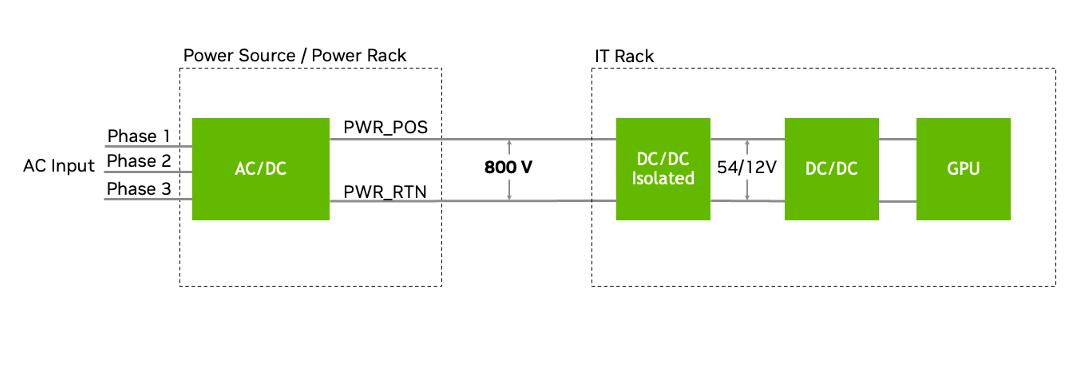

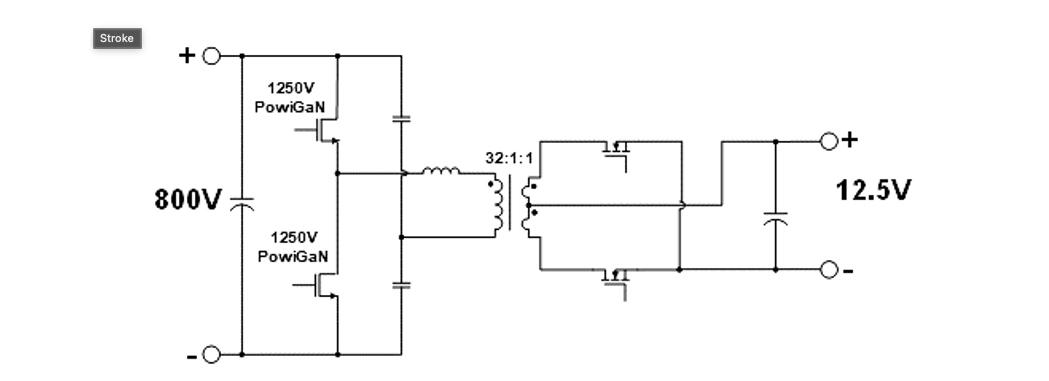

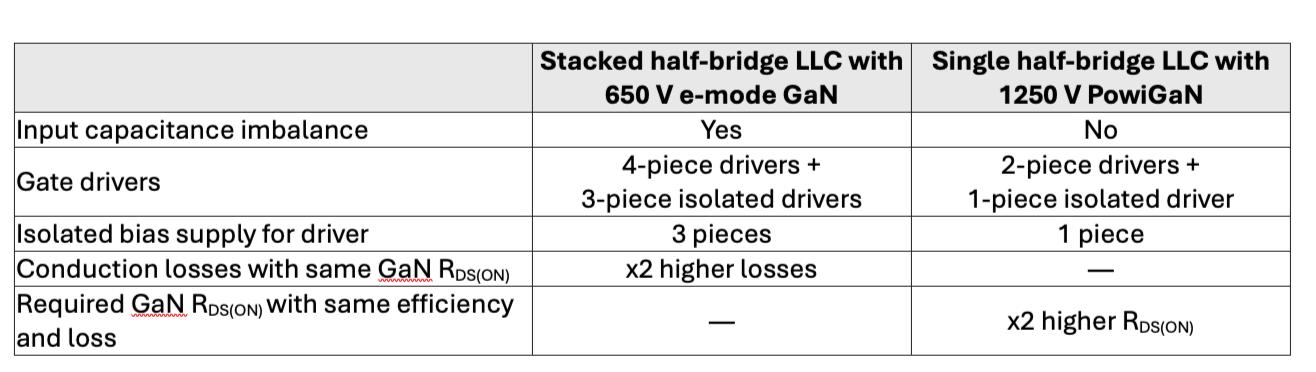

GaN BRINGS 800 V TO DATA CENTERS

Generative AI and power-hungry GPUs are pushing rack power demand from tens of kilowatts to well over 100 kilowatts, with megawatt-per-rack designs expected soon.

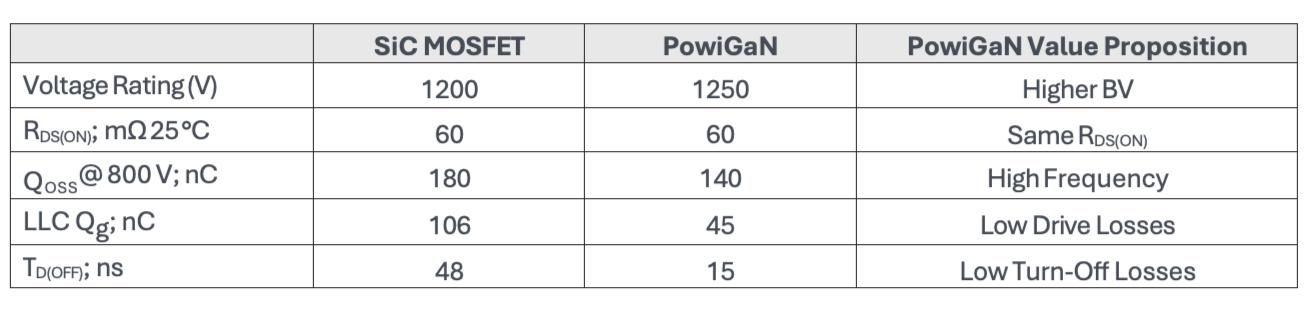

TRUE MESH TO LoRa

Combining NeoMesh’s distributed routing protocol with LoRa’s physical layer aims to eliminate gateway density constraints in large IoT networks.

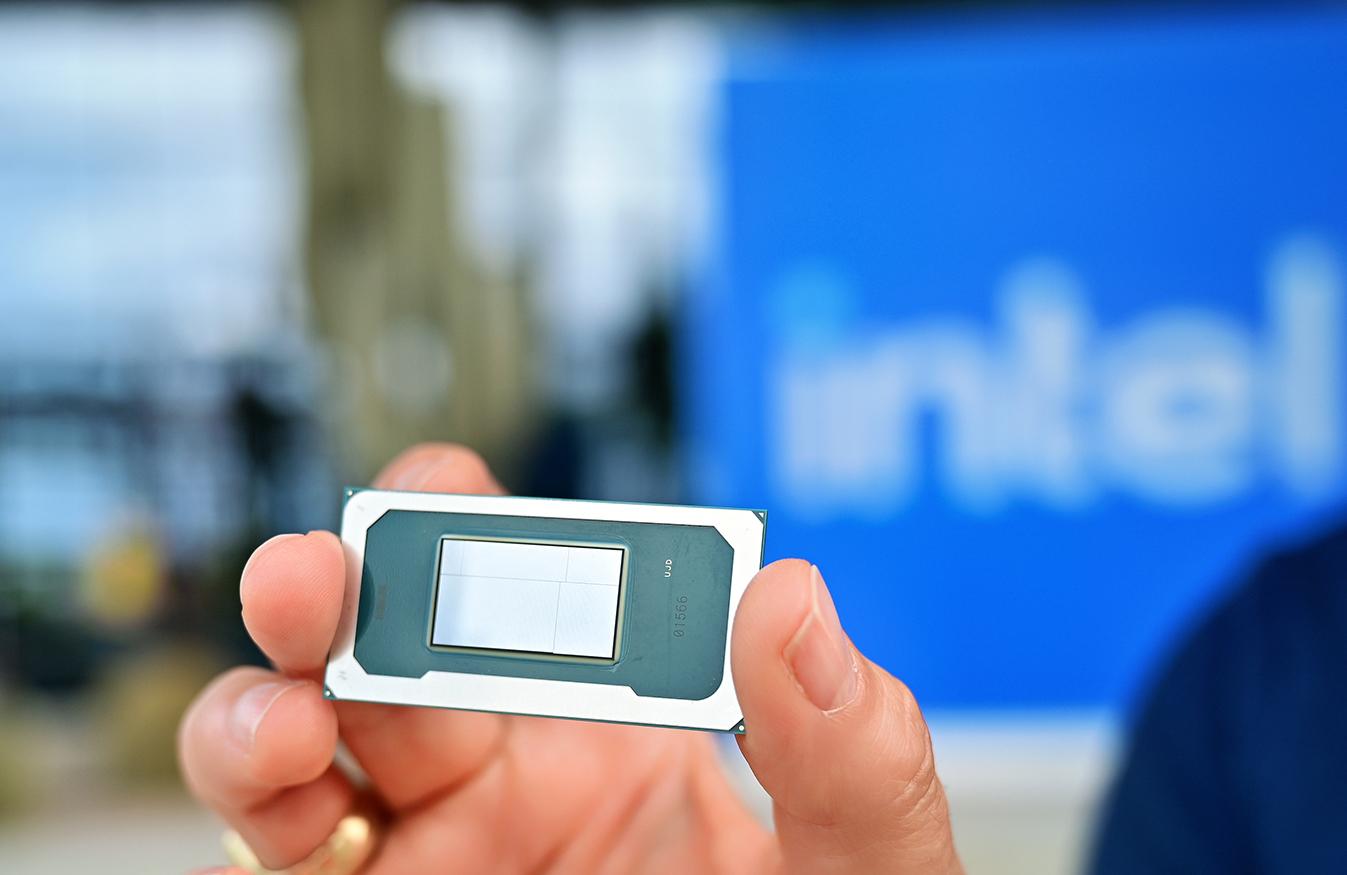

PANTHER LAKE: AI PC PERFORMANCE TO THE EDGE

Intel’s Core Ultra Series 3 combines CPU, GPU, and NPU acceleration for AI PCs and industrial edge systems.

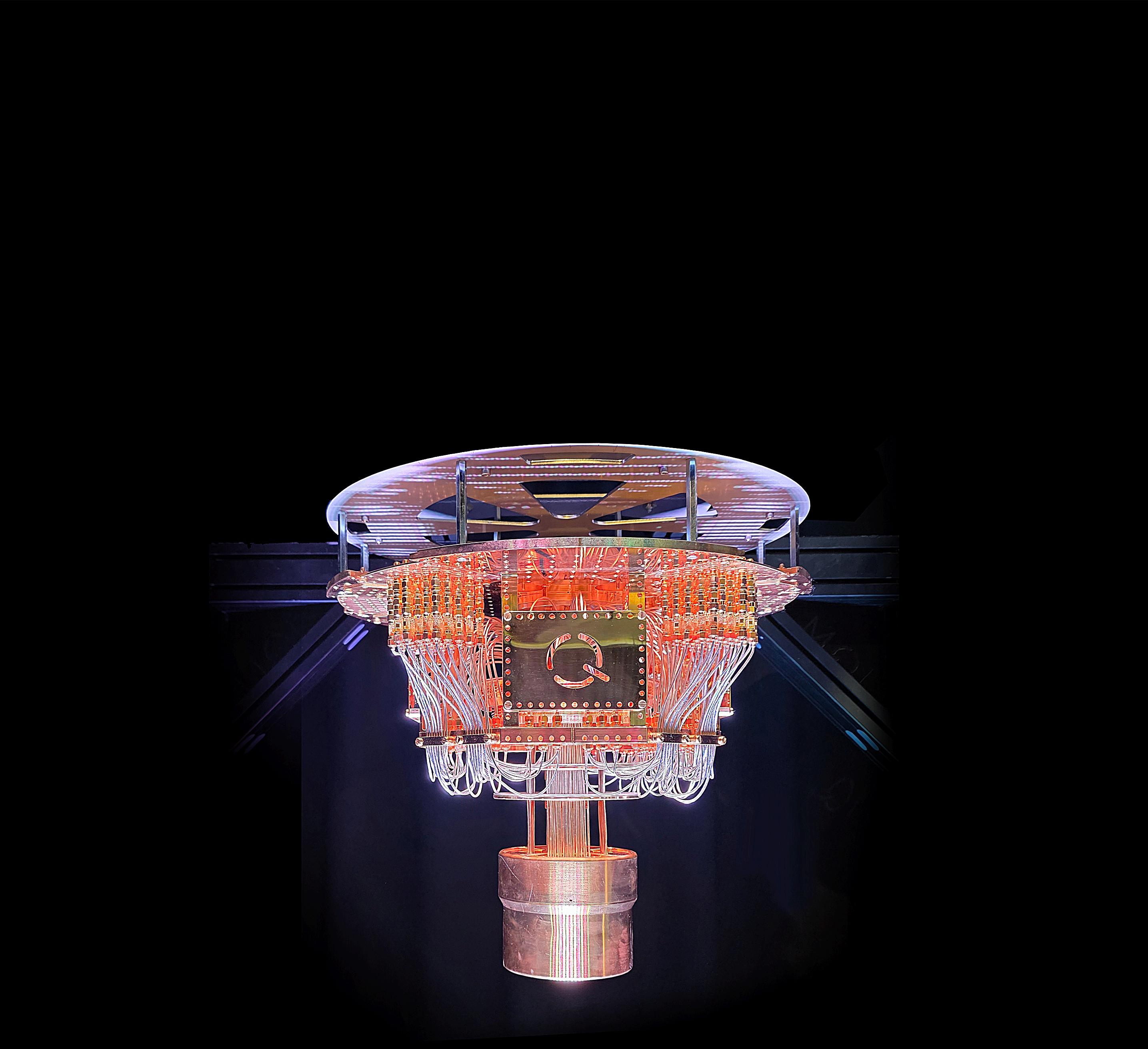

IQM of Espoo, Finland is trying something that sounds close to impossible: building one of the world’s most demanding machines, a superconducting quantum computer, to compete with giants like IBM and Google.

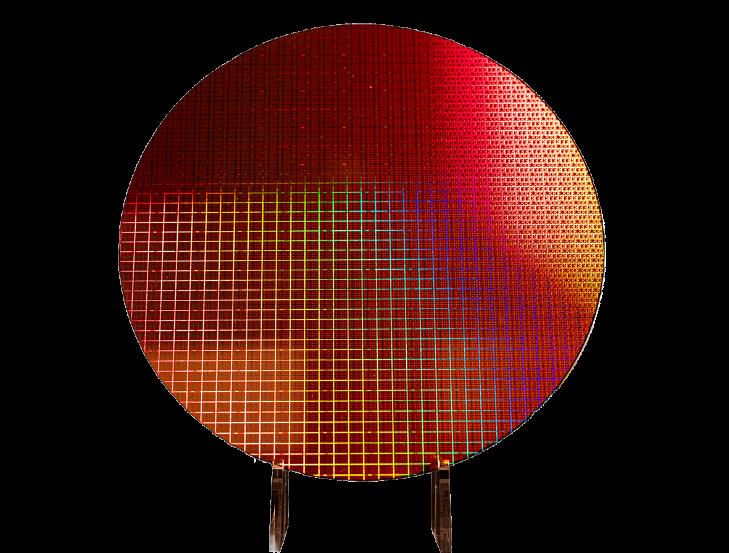

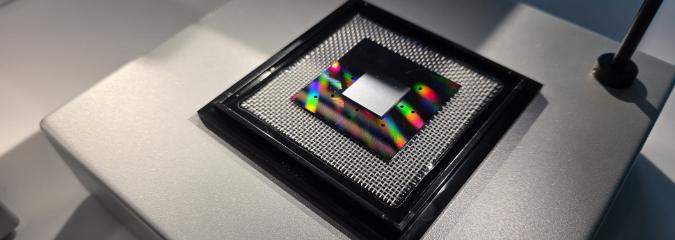

Quantum computing is arguably the hardest technology stack being industrialized today, spanning cryogenics, microwave engineering, quantum chip fabrication, control electronics, software, and an entirely new discipline of error correction. Yet IQM increasingly looks like a company that can challenge for the market lead.

When I meet one of the founders, Chief Global Affairs Officer Juha Vartiainen at IQM’s Espoo headquarters, he does not sell quantum computing as magic. If anything, he pushes the opposite. Quantum machines are not “just faster computers.” They are different computers that can be transformative for a narrow set of problems, while being slow and awkward for most everyday workloads.

- The popular idea that quantum computers are simply faster than classical computers is misleading. A quantum computer is, as a computer, often slow and poor at what digital computers do well. The value is that for certain classes of problems the complexity changes, Vartiainen says.

That realism is a recurring theme. Vartiainen is clearly allergic to hype, even though hype would be the easier route in an industry that still lives on promises. IQM’s pitch is more pragmatic: engineering discipline, transparent benchmarking, and a manufacturing ramp that turns research breakthroughs into deliverable systems.

IQM describes itself as the global leader in on-premises quantum systems. By early 2026, the company says it has sold 21 systems to 13 customers and delivered 15 systems, a higher publicly disclosed delivery count than most peers are willing to state openly.

In Vartiainen’s version of the story, the point is not the number itself. It is what the number implies: IQM has moved beyond “one heroic machine” and into a pipeline where systems are assembled, tested, shipped, and supported as products.

The pipeline is also visible in the company’s order metrics. IQM has talked publicly about “bookings” and “revenue visibility”: at least USD 35 million in 2025 revenue (unaudited), and over USD 100 million in bookings/visibility as of year-end 2025.

Revenue is still not the headline, at least not yet. IQM has been building a capital-intensive deep-tech business and has required significant external funding. Vartiainen is candid about the logic of venture-backed quantum. Cash burn is expected, because the product is not a mobile app. It is industrial hardware at the edge of physics.

Scaling in Espoo and Oulu

IQM’s footprint in Finland is now spread across multiple sites. Espoo hosts offices and customer-facing facilities, but also the manufacturing and test functions that turn fragile quantum hardware into something customers can run. A production expansion in the Mankkaa area is central to the next stage.

In late 2025, IQM announced an investment of more than €40 million to expand its production facility in Finland. The stated goal is to scale annual manufacturing capacity to 30 quantum computers, up from the earlier capacity of around 20 systems per year.

If quantum computing has a “hidden bottleneck,” it is not only qubit fidelity. It is operations. Assembly, calibration, testing, verification, maintenance. A superconducting quantum system is not a laptop you unbox and boot. It is a cryogenic stack built around a dilution refrigerator, with sensitive microwave paths, RF electronics, and a control layer that must remain stable in an environment where tiny disturbances can destroy performance.

Vartiainen also highlights a second Finnish node: Oulu. IQM opened an R&D office there in December 2025, explicitly to tap local expertise and strengthen core development.

- Oulu has exactly the kind of competence we need. We have a core development team there. It goes right into improving the performance of the chip technology, he says.

He hints that Oulu’s RF heritage could become even more relevant. Quantum processors do not run on digital logic alone. They are driven and measured through microwave control. In that sense, advanced RF engineering remains part of the quantum “engine room.”

The 150-qubit step

The most concrete near-term milestone is a high-qubit-count system for Finland’s VTT. In May 2025, VTT and IQM announced a joint development project that aims to deliver a 150-qubit superconducting quantum computer in mid-2026, followed by a 300-qubit system in late 2027.

This is not merely a “bigger number” announcement. The VTT systems are framed as testbeds for quantum error correction, the key technology required to move from noisy intermediate-scale quantum computing to fault-tolerant machines.

That distinction matters, because qubit count by itself is a misleading scoreboard.

Vartiainen is quick to point out that the industry has suffered from “number marketing,” where companies advertise large qubit counts even if the effective performance is constrained by error rates, connectivity, calibration drift, or limited programmability.

IQM’s approach, he argues, is to optimize not only the best qubits under ideal conditions, but the worst qubits on a real system on a real day.

- In products we have many qubits, and performance has to be good even in the worst case. The ‘bad qubits,’ when everything is not perfect, still need to be usable. Scaling is where the hard work is, he says.

Million qubits?

At the heart of the machine is a simple question with a non-simple answer: can you preserve a quantum state long enough, and manipulate it accurately enough, to do useful computation?

Vartiainen talks about coherence times and gate fidelities with the caution of someone who knows the numbers are contextual. In the interview, he references demonstrations where qubit lifetimes reach around a millisecond, and mentions that longer times have been shown in laboratory conditions. The point is not to win a single “world record” but to ensure a stable distribution of good qubits across a product.

On the gate side, he uses 99.9% as a best-case level for one- and two-qubit gates in optimal calibration, then immediately explains why this is not the end of the story. If conditions change, performance drops. So the system must detect drift, recalibrate, and recover quickly.

Importantly, he draws a bright line between calibration and error correction.

- Error correction is a different thing. Here we are talking about keeping the system calibrated. Errors still happen all the time.

He gives a blunt example: at 99.9% fidelity, one operation in a thousand is wrong. For meaningful algorithms, that is still far too many errors. So the path forward is twofold: push the physical layer to higher fidelity, and then apply error correction techniques that consume additional qubits to suppress remaining errors.
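A rough back-of-the-envelope sketch shows why one error in a thousand is still too many. It assumes that gate errors are independent, so a circuit of n gates runs cleanly with probability equal to the fidelity raised to the power n. The circuit sizes are illustrative and do not come from IQM.

```python
def circuit_success_probability(gate_fidelity: float, num_gates: int) -> float:
    """Probability that every gate succeeds, assuming independent gate errors."""
    return gate_fidelity ** num_gates


# Illustrative circuit sizes (assumed, not taken from the interview).
for n in (100, 1_000, 10_000):
    p = circuit_success_probability(0.999, n)
    print(f"{n:>6} gates: {p:.3%} chance of an error-free run")
```

Under this simplified model, a 1,000-gate circuit at 99.9% fidelity runs without any error only a little more than one time in three, and the odds collapse quickly beyond that.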

This is where the “million qubits” vision must be decoded. On paper, a million qubits sounds like science fiction. In engineering terms, it is a statement about logical computing capacity rather than raw device count.

Vartiainen explains the key idea: in a fault-tolerant quantum computer, you do not use every physical qubit as a “useful” qubit. You spend a large fraction of them on error correction overhead. A simplified rule of thumb is that you may need on the order of 100 physical qubits to create one high-quality, error-corrected logical qubit, depending on the code, the architecture, and the underlying physical error rates.

So a “million-qubit” machine could translate into something like 10,000 truly computation-ready logical qubits, if the physical layer has reached a threshold where error correction becomes efficient.
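The conversion from physical to logical qubits can be written as a one-line calculation. The sketch below uses the 100-to-1 rule of thumb quoted above as its default; the function name and the alternative overhead value are assumptions for illustration, since the real ratio depends on the code, the architecture and the physical error rates.

```python
def logical_qubits(physical_qubits: int, physical_per_logical: int = 100) -> int:
    """Number of error-corrected logical qubits for a given physical qubit count."""
    if physical_per_logical <= 0:
        raise ValueError("physical_per_logical must be positive")
    return physical_qubits // physical_per_logical


# Rule of thumb from the article: about 100 physical qubits per logical qubit.
print(logical_qubits(1_000_000))
# A heavier, purely hypothetical overhead shrinks the usable machine tenfold.
print(logical_qubits(1_000_000, physical_per_logical=1_000))
```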

In other words, scaling is not only about making more qubits. It is about making qubits good enough that adding more begins to compound value rather than compound noise.

The “quantum threat”

No interview about quantum computing avoids security. The idea that a quantum computer will break today’s public-key cryptography is now common enough to influence policy and procurement.

Vartiainen’s view is balanced. The threat is closer than ever in the sense that the physics is progressing, but it remains far from a commodity capability.

He frames it as something that, if achieved soon, would require a large, capable state-level actor. Even then, it would not mean “everything breaks at once.” It would mean targeted attacks on high-value secrets where the attacker is willing to invest extraordinary resources. For most people, it is not a reason to panic.

He does, however, treat post-quantum cryptography as a rational response, especially for data that must remain confidential for a long time. The right move is preparation, not fear.

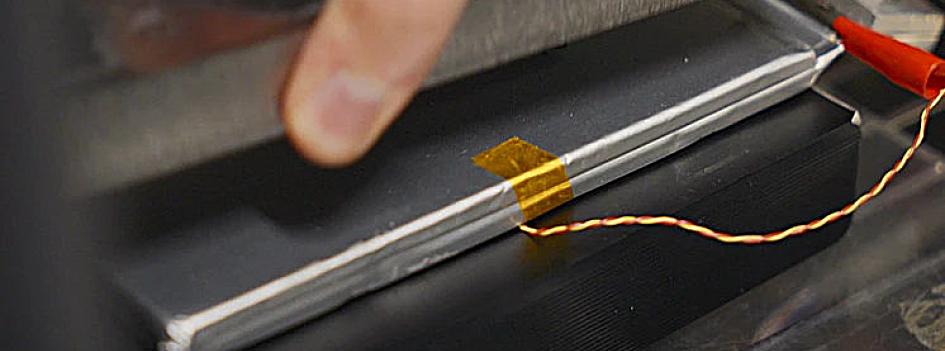

When Finnish startup Donut Lab unveiled its new battery at CES 2026, it quickly became one of the most discussed technologies at the show. The reason was simple: the performance claims sounded almost unbelievable.

According to the company, its fully solid-state battery combines properties that normally conflict with each other. Donut Lab speaks of an energy density of around 400 Wh/kg, the ability to charge in about five minutes, and a lifetime of up to 100,000 charge cycles.

If such a battery were real and scalable, the consequences would be enormous. The global lithium-ion industry has invested hundreds of billions of euros in factories and supply chains. A battery that charges in minutes and lasts decades would disrupt that entire ecosystem.

At CES, Donut Lab presented the technology not as a laboratory concept but as a product already entering real vehicles. The company says its solid-state battery is used in the latest Verge Motorcycles models being delivered to customers.

Those claims immediately triggered both excitement and skepticism. The combination of extremely high energy density, ultra-fast charging and unprecedented cycle life challenges several well-known trade-offs in battery chemistry.

First independent tests

In the weeks after CES, Donut Lab began presenting independent validation. The first report came from Finland’s VTT Technical Research Centre, which tested the charging performance of the company’s Solid-State Battery V1 cell.

The results showed that the cell could reach 80% charge in roughly five minutes when charged at an 11C rate. Charging from empty to full took around 7-8 minutes.
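The C-rate figure can be sanity-checked with simple arithmetic: a rate of 1C nominally delivers the full capacity in one hour, so 11C corresponds to one eleventh of an hour. The sketch below assumes an idealised constant-current charge with no taper and no losses, which is a simplification and not a description of VTT’s test procedure.

```python
def ideal_charge_minutes(c_rate: float, soc_fraction: float = 1.0) -> float:
    """Minutes needed to add soc_fraction of capacity at a constant C-rate."""
    if c_rate <= 0:
        raise ValueError("c_rate must be positive")
    return 60.0 / c_rate * soc_fraction


print(f"0-80% at 11C:  {ideal_charge_minutes(11, 0.8):.1f} min")
print(f"0-100% at 11C: {ideal_charge_minutes(11):.1f} min")
```

The ideal figures are about 4.4 and 5.5 minutes. The reported times are somewhat longer, which would be consistent with the charging current being reduced towards the end of the charge, although the article does not state this.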

After fast charging, the cell delivered almost all of the stored energy during discharge. However, temperatures rose rapidly. With limited cooling the cell temperature approached 90 °C, highlighting the importance of effective thermal management for extreme charging speeds.

The report verified fast charging behaviour but did not address other claims such as energy density or cycle life.

A second VTT report examined how the battery behaves in high temperatures.

The cell was discharged at +80 °C without visible changes and delivered more than its reference capacity measured at room temperature. For comparison, 80 °C already exceeds the recommended operating range of many conventional lithium-ion cells.

The test was then extended to +100 °C. The battery still functioned electrically and could be recharged after the experiment. However, the pouch casing was no longer under vacuum after the test, indicating that the cell structure had changed under extreme heat.

Many questions remain

The two VTT reports provide the first independent evidence supporting parts of Donut Lab’s claims. They suggest that the battery can tolerate very fast charging and operate at unusually high temperatures during short tests.

But the evidence remains limited. The tests involved individual cells (a different cell in each of the three tests), not large sample sets, and long-term cycling or pack-level performance has not yet been demonstrated. The most ambitious claims, 400 Wh/kg energy density and a 100,000-cycle lifetime, still lack independent verification.

The global battery sector has invested enormous sums into lithium-ion manufacturing. Gigafactories are being built across Europe, North America and Asia to supply electric vehicles and energy storage systems.

If a fundamentally better battery architecture were ready for mass production, the implications would be profound. Existing investments could lose value, supply chains might shift and the competitive balance between manufacturers could change rapidly.

A third VTT report, published in early March, examined the cell’s self-discharge behaviour. In the test the battery was charged to roughly 50 % state of charge and left idle for 240 hours at room temperature. After the ten-day period, 97.7 % of the stored energy could still be discharged from the cell.

The result indicates that the device stores energy chemically like a battery rather than electrically like a supercapacitor, a suspicion raised by critics because of the extremely fast charging claims. However, the test again involved a single, different cell and does not yet address the most controversial claims such as energy density or long-term cycle life.

Finnish company Senop has secured a significant order from the French Armed Forces. France’s defence procurement agency, DGA, has selected the company’s AFCD TI smart sighting system for use by the Army.

The procurement follows a development and evaluation programme that has spanned several years. According to Senop, the order will be delivered over multiple years and will provide long-term workload for the company. The monetary value of the contract and the number of systems to be delivered have not been disclosed.

France is one of NATO’s most operationally experienced member states. Senop notes that the selection serves as an important reference within the Alliance and strengthens the company’s position in the European defence market.

- The DGA’s decision demonstrates confidence in our sighting system and in the ability of Finnish product development to meet strict European operational and technical requirements, says Aki Korhonen, CEO of Senop Oy.

AFCD TI is a thermal imaging-based smart sight designed to operate as part of a fire control system. Unlike traditional sights, the system measures distance, tracks moving targets and automatically calculates lead. The objective is to improve first-round hit probability and accelerate engagement.

The system is designed to minimise the soldier’s cognitive load. Operation is based on two controls: a laser button and a four-way selector. The weapon and the fire-control system sensors automatically feed data to the sight.

AFCD TI identifies the type of ammunition and its temperature directly from the weapon. The system also supports ammunition programming, including AirBurst functionality, without separate manual steps.

- The user can focus on the mission rather than operating the system. The sight is simple and quick to learn, says Product Manager Lasse Elomaa.

The smart sight can be integrated with Saab’s simulation systems. It supports video recording and real-time video streaming from both daylight and thermal cameras. Recorded data can be used for training and performance analysis.

AFCD TI is designed with a modular architecture. The structure enables upgrades of components, subsystems and software. The same sight supports multiple Saab shoulder-fired weapon systems, facilitating deployment in NATO countries and reducing lifecycle costs.

The order also supports Senop’s growth in Finland. The company is investing in new production facilities in Lievestuore.

The approximately 3,000-square-metre facility will increase capacity and improve manufacturing efficiency. The total investment is valued at around €10 million.

According to Senop, the French programme strengthens the position of Finnish optronics expertise in Europe and opens new opportunities for NATO cooperation.

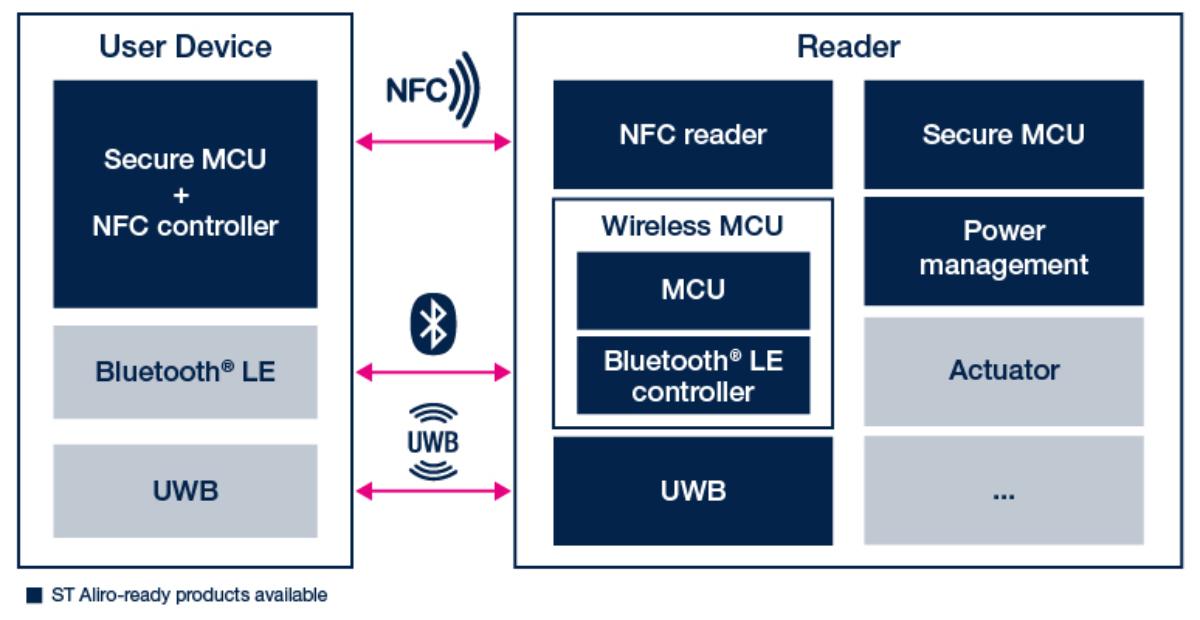

The Connectivity Standards Alliance (CSA) has released the Aliro 1.0 specification, defining for the first time a common protocol for a digital key stored on a smartphone. The goal of the standard is to enable the same mobile credential to work across readers from different manufacturers via NFC, Bluetooth Low Energy and Ultra-Wideband (UWB). Aliro is supported by Apple, Google and Samsung, among others.

Aliro 1.0 defines the NFC and Bluetooth LE interfaces between the user device and the reader. UWB is used alongside BLE to verify proximity and enable hands-free access. The specification does not cover backend systems or the interface between the reader and an access control server. Instead, it focuses specifically on the transaction between the mobile device and the reader.

Aliro defines a two-phase access protocol. First, a fast so-called expedited phase is performed, during which the device proves possession of the correct key. If this is sufficient, the door can be opened immediately. If needed, the process moves to a step-up phase, where a signed Access Document is presented to the reader. The document contains more detailed access rights, time constraints and possible restrictions.
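The two-phase flow can be pictured as a small decision function on the reader side. The sketch below is only an illustration of the logic described above: the class, the field names and the function are hypothetical and are not taken from the Aliro specification, and the cryptographic checks are reduced to boolean placeholders.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AccessDocument:
    """Hypothetical stand-in for a signed Access Document."""
    signature_ok: bool       # placeholder for a real signature verification
    valid_from: datetime     # start of the permitted time window
    valid_until: datetime    # end of the permitted time window


def reader_decision(key_proven: bool,
                    expedited_sufficient: bool,
                    document: Optional[AccessDocument],
                    now: datetime) -> str:
    """Return 'open' or 'deny' for the two-phase flow sketched here."""
    # Phase 1 (expedited): the device must prove possession of the correct key.
    if not key_proven:
        return "deny"
    if expedited_sufficient:
        return "open"
    # Phase 2 (step-up): a signed document with time constraints is required.
    if document is None or not document.signature_ok:
        return "deny"
    if not (document.valid_from <= now <= document.valid_until):
        return "deny"
    return "open"


now = datetime(2026, 3, 1, 12, 0, tzinfo=timezone.utc)
doc = AccessDocument(
    signature_ok=True,
    valid_from=datetime(2026, 1, 1, tzinfo=timezone.utc),
    valid_until=datetime(2026, 12, 31, tzinfo=timezone.utc),
)
print(reader_decision(True, True, None, now))    # expedited phase is enough
print(reader_decision(True, False, doc, now))    # step-up with a valid document
print(reader_decision(True, False, None, now))   # step-up required, no document
```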

Aliro is not just a technical specification but an ecosystem initiative. According to the CSA, more than 220 member companies have participated in developing the standard, including Apple, Google, Samsung, ASSA ABLOY, HID, NXP, STMicroelectronics, Infineon and Nordic Semiconductor. A central objective is to integrate digital access credentials directly into mobile

STMicroelectronics was one of the first companies to launch a reference design for Aliro 1.0 development.

Technically, the solution is based on ECC P-256 keys and ES256 signatures. The access credential is a document-based structure that can be transmitted and validated even in offline environments.

Aliro is architecturally radio-agnostic, but in version 1.0 the supported transport layers are limited to three options: NFC, Bluetooth Low Energy, and a combination of BLE and UWB. NFC operates in a tap-to-access model, effectively replacing physical cards. BLE enables longer-range connections initiated by the user. UWB adds distance-based protection, helping to mitigate relay attacks.

This marks a significant shift from previous approaches. Digital keys have so far largely been manufacturer-specific and application-dependent solutions. Aliro aims to introduce a unified credential model and authentication protocol on which different vendors can build interoperable products.

If widely adopted, access control could move from a card-based model to the operating system level. The smartphone could become a universal key for homes, offices, hotels, campuses and parking

Aliro 1.0 is only the first version, but its architecture has been designed to be extensible. According to the CSA, the standard will continue to evolve to address new use cases and market requirements while maintaining backward compatibility.

For access control, this could represent a transformation comparable to what EMV brought to payment cards: a shift from closed, proprietary solutions to a shared, globally supported standard.

Emerson is expanding the National Instruments PXI test platform with new, more cost-effective hardware. The goal is to make modular, scalable automated test systems accessible to smaller development teams and emerging industries without compromising measurement accuracy or synchronization.

For 25 years, PXI has been one of the core architectures in modular test and measurement, particularly in automated test equipment, validation and production test environments. Emerson is now lowering the cost barrier by introducing high-performance hardware at a more accessible price point.

The new portfolio covers the essential PXI building blocks. The NI PXIe-5108 oscilloscope is available in four- and eight-channel versions, offering 100 MHz bandwidth, a 250 MS/s sampling rate and 14-bit resolution. The high channel density within a single module supports mixed-signal measurements and parallel signal analysis.

On the data-acquisition side, Emerson introduces 18-bit multifunction DAQ modules, the NI PXIe-6381 and 6383. They deliver absolute accuracy down to 980 microvolts and provide 16 or 32 channels for low-noise voltage measurements. This level of resolution and channel count is typically critical in the validation of analog front ends, sensor interfaces and power electronics.

The chassis architecture is reinforced with the 18-slot NI PXIe-1081 hybrid chassis, offering 2 GB/s system bandwidth. The all-hybrid design enables flexible combinations of different module types within a single system and simplifies scaling as test requirements grow.

The embedded controllers NI PXIe-8842 and PXIe-8862 support Windows 11 and NI Linux Real-Time. The solution is aimed at teams that do not require a separate GPIB interface and want to maintain a compact, deterministic system architecture.

According to Emerson, the new hardware retains the traditional strengths of the PXI platform: tightly synchronized measurements, high channel density and seamless integration with the NI software ecosystem, including LabVIEW, InstrumentStudio and TestStand. In practice, this allows hardware, test sequences and analytics to be combined into a unified, software-driven test system.

The company also highlights the role of artificial intelligence in future test environments. High data throughput and a modular architecture create the foundation for real-time analytics and intelligent decision-making during the test process. In this context, a PXI system becomes not only a measurement platform but also a data acquisition and analytics infrastructure. As the entry cost decreases, modular and software-defined testing becomes a realistic option for even smaller development organizations.

Iridium Communications has introduced a new IoT module that integrates satellite connectivity, 4G LTE-M cellular, and GNSS positioning into a single 16 × 26 millimeter package. According to the company, the solution reduces PCB space requirements by up to 60 percent compared to designs built from multiple discrete components.

The Iridium 9604 module measures 16 × 26 × 2.4 millimeters, corresponding to an area of approximately 416 square millimeters, clearly smaller than a typical postage stamp. It is mounted directly onto the IoT device PCB as an LGA-type component.

The module combines Iridium Short Burst Data (SBD) satellite connectivity, LTE-M cellular connectivity and GNSS positioning in one package. The satellite link operates in the L-band via Iridium’s global constellation. LTE-M and GNSS functions are based on the u-blox SARA-R5 platform.

In practice, a device can use LTE-M whenever terrestrial coverage is available and automatically switch to satellite connectivity when cellular service is absent. The integrated GNSS receiver enables location-aware use cases such as network selection, tracking applications, and asset management.

Iridium positions the solution for cost-sensitive, high-volume IoT applications where combining satellite and cellular connectivity has previously required multiple radio modules, increased board space, and more complex power architectures.

Commercial availability begins in June 2026, when a development kit will be available for testing satellite and LTE-M connectivity.

Huawei has introduced several technical improvements in its new Watch GT Runner 2 sports watch. The most significant innovation is a 3D Floating antenna integrated into the watch bezel, designed to optimize the radiation pattern of the satellite positioning antenna within the watch body. Traditionally, GNSS antennas in smartwatches have been placed inside the device’s housing, where the user’s hand and the metal structure of the watch can weaken the signal. Huawei addresses this by relocating the antenna into the watch bezel. This design gives the antenna more space and allows it to radiate the signal more evenly in different directions.

Nokia invests exceptionally heavily in research. According to the company’s Form 20-F 2025 annual report filed with the U.S. Securities and Exchange Commission (SEC), its research and development spending reached €4.9 billion last year, representing 24.4 percent of revenue. In practice, this means Nokia spends roughly one out of every four euros it earns on research and product development. In total, Nokia says it has invested around €160 billion in research since 2000.

Pickering Interfaces has introduced a new Test System Architect tool that allows the entire test system architecture to be designed graphically before the actual hardware is built. The tool is intended for designing automated test systems that combine instruments, switch matrices, cabling, and the device under test (DUT). In TSA, these elements can be modeled within a single view and connected at the signal-path level. According to Pickering, the hardware itself often accounts for less than half of the total cost of a test system. The new tool aims to make these phases visible already at the early stages of the design process.

The Battery Data Alliance, operating under LF Energy, has released a new open Battery Data Format (BDF) aimed at standardizing the structure and metadata of battery testing data. The objective is to make battery research and development data portable, reproducible, and directly usable in modeling workflows.

Until now, data generated in battery labs has largely been vendor- and software-specific. Different cycler manufacturers, such as Arbin and MACCOR, use proprietary file structures and naming conventions. Even software updates can alter formats. This fragmentation slows down modeling, benchmarking, and cross-laboratory data exchange.

BDF defines a fixed table schema for time-series cycling data, complemented by a machine-readable ontology aligned with BattINFO. This means the format goes beyond column headers and CSV compatibility: it encodes semantic meaning in a structured way. That capability is particularly important for physics-based modeling and machine learning-driven battery development.
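What a fixed table schema buys in practice can be shown with a small sketch that maps two differently labelled cycler exports onto one common set of columns. All column names and aliases below are hypothetical and chosen for illustration only; the actual BDF column names and the BattINFO-aligned ontology are defined by the specification and are not reproduced here.

```python
import csv
import io

# Hypothetical target schema; the real BDF column names are set by the specification.
TARGET_COLUMNS = ["test_time_s", "voltage_v", "current_a"]

# Hypothetical vendor-specific headers mapped onto the target schema.
VENDOR_ALIASES = {
    "Test_Time(s)": "test_time_s",
    "Time [s]": "test_time_s",
    "Voltage(V)": "voltage_v",
    "U [V]": "voltage_v",
    "Current(A)": "current_a",
    "I [A]": "current_a",
}


def normalize(raw_csv: str) -> list[dict[str, float]]:
    """Convert a vendor CSV export into rows that use the common column names."""
    rows = []
    for raw in csv.DictReader(io.StringIO(raw_csv)):
        row = {
            VENDOR_ALIASES[name]: float(value)
            for name, value in raw.items()
            if name in VENDOR_ALIASES
        }
        missing = [column for column in TARGET_COLUMNS if column not in row]
        if missing:
            raise ValueError(f"missing columns: {missing}")
        rows.append(row)
    return rows


# Two made-up exports holding the same measurements under different headers.
vendor_a = "Test_Time(s),Voltage(V),Current(A)\n0,3.70,1.5\n1,3.71,1.5\n"
vendor_b = "Time [s],U [V],I [A]\n0,3.70,1.5\n1,3.71,1.5\n"
print(normalize(vendor_a) == normalize(vendor_b))
```

Once both exports share one schema, the same modeling or benchmarking code can read either file without vendor-specific handling. The sketch covers only column naming and does not attempt the semantic layer that the ontology adds.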

The standard has been validated in real research workflows. The tooling from Faraday Institution, including the PyProBE software stack and its optimized BDX binary format, has been aligned with BDF. The format is also compatible with widely used modeling frameworks such as PyBaMM and BattMo.

The surrounding ecosystem is already taking shape. Microsoft has announced plans to release a reference battery dataset in BDF format. Startup Ohm is contributing a web-based converter that enables users to transform raw commercial cycler data into BDF-compliant files. In addition, a collaboration between Empa, ETH Zurich, EPFL, and SINTEF has published a dataset of nearly 200 coin cells tested over 1,000 cycles in BDF format.

In its initial scope, BDF focuses on time-series cycler data. Next steps include defining a dedicated metadata storage format and extending coverage to other laboratory data types, such as impedance measurements.

The implications could be significant. A unified data format simplifies model comparison, accelerates AI-driven optimization, and improves lifecycle traceability. It may also support the economics of second-life battery applications, where reliable and comparable test data is essential.

STMicroelectronics has unveiled its Stellar P3E microcontroller, bringing AI acceleration directly into an automotive-grade control unit. The launch marks a significant step toward distributed, real-time intelligence in vehicles, where AI computing is no longer confined to centralized SoCs or domain microcontrollers.

At the heart of the Stellar P3E is ST’s Neural-ART Accelerator, a dedicated NPU integrated directly on the microcontroller. The concept is to move machine learning-based decision-making closer to sensors and actuators. This enables microsecond-level response times and always-on AI without high power consumption or the need for a separate processor.

According to ST, the controller delivers up to 30 times greater energy efficiency in AI inference compared to traditional MCU core-based implementations. In practice, this opens the door to virtual sensors, predictive maintenance, and real-time fine-tuning of engine or battery performance. It can also reduce the number of physical sensors, wiring, and standalone control units.

Stellar P3E is targeted particularly at so-called X-in-1 ECU solutions, where

AMD’s new Kintex UltraScale+ Gen 2 FPGA family does not attempt to win the performance race with logic cells alone. Instead, it addresses a problem that is already visible but rarely solved. How can devices be protected against quantum-era threats before those threats fully materialize?

Quantum computers are not yet breaking encryption in production environments. Nevertheless, the risk model has already changed. Data collected today can be stored and decrypted later when sufficient computational power becomes available. This is known as the “harvest now, decrypt later” threat. AMD brings a response directly into the FPGA fabric.

Kintex UltraScale+ Gen 2 devices integrate security mechanisms that support post-quantum cryptography as well as functionality aligned with CNSA 2.0 requirements. These include strong root of trust, device-level authentication, key management, and secure boot. In many systems, the FPGA is the only component that can be updated in the field. That makes it a natural anchor point for quantum-resistant protection today.

Security, however, is not an isolated add-on. It is embedded in the overall architecture of the device. AMD’s core message is that performance is driven by data movement, not by individual compute units.

The second-generation Kintex UltraScale+ architecture is built for data flows. It combines high-speed serial transceivers, wide parallel I/O, hardened memory controllers, and high DSP density. The goal is to move data in and out of memory, through computation, and out again without bottlenecks.

This is particularly relevant for test and measurement equipment, machine vision, medical imaging, and AV-over-IP systems.

The new generation delivers up to five times the external memory bandwidth of its predecessor. Multiple hardened LPDDR4X, LPDDR5, and LPDDR5X controllers are integrated. On-chip memory reaches up to 51 megabits.

multiple functions are consolidated into a single controller. This architecture supports the development of software-defined vehicles and helps reduce vehicle weight and overall system cost.

The controller is built around 500 MHz Arm Cortex-R52+ cores and a split-lock architecture that balances performance with functional safety. For memory, ST uses its PCM-based xMemory, which offers higher density than traditional embedded flash and enables software growth without hardware modifications.

ST supports the new controller with its Edge AI Suite tools and the Stellar Studio development environment, allowing AI models to be deployed directly to in-vehicle edge computing platforms. Production of the Stellar P3E is scheduled to begin in the fourth quarter of 2026.

Renesas has expanded its RH850 automotive microcontroller family with the new RH850/U2C device. The new MCU brings the performance benefits of a 28-nanometer manufacturing process to a lower price segment.

The RH850/U2C extends Renesas’ U2 generation at the lower end of the lineup. The higher-end RH850/U2B and U2A devices are aimed at more demanding systems, while the U2C offers the same

Finnish quantum-computer developer IQM plans to go public through a merger with the Nasdaq-listed special purpose acquisition company Real Asset Acquisition Corp. (RAAQ). The agreement would result in IQM’s American Depositary Shares being listed on a major U.S. stock exchange. The company is also considering a dual listing in Helsinki after the transaction is completed. IQM designs its own quantum chips and operates an integrated production chain that includes chip design tools, fabrication, assembly and datacentre infrastructure.

The production of the most advanced semiconductor chips could be accelerated with a surprisingly simple process change. Belgian research institute Imec has demonstrated that increasing oxygen concentration during the post-exposure bake step of EUV lithography can reduce the required exposure dose of metal-oxide resists by up to 20 percent. In EUV lithography, the exposure dose largely determines how quickly wafers can be processed in the scanner. Lower dose requirements mean that the same EUV system can expose more wafers per hour, effectively increasing tool throughput.

architectural foundation for smaller ECU applications. This also enables an easier migration from older RH850/P1x and F1x microcontrollers to the latest automotive electrical and electronic architectures.

The new controller integrates four RH850 processor cores running at up to 320 MHz. For safety-critical applications, two lockstep cores are used to meet the ASIL D requirements of the ISO 26262 functional safety standard. The device integrates up to 8 MB of on-chip flash memory.

A key feature is its broad interface support, covering both current and next-generation in-vehicle networks. The MCU supports automotive Ethernet 10BASE-T1S, deterministic Ethernet TSN (100 Mbps and 1 Gbps), CAN-XL, and I3C. At the same time, it maintains compatibility with widely used interfaces such as CAN-FD, LIN, UART, CXPI, I²C, I²S, and PSI5.

Renesas has expanded its automotive RH850 microcontroller family with the new RH850/U2C device. The chip brings the performance of a 28-nanometre process node to a lower price segment and is aimed at applications such as chassis and safety systems, battery management and vehicle body electronics. The RH850/U2C complements the company’s U2-generation portfolio at the entry level. Higher-end RH850/U2B and RH850/U2A devices target more demanding systems, while U2C offers the same architecture for smaller ECU designs. This also makes it easier for carmakers to migrate from older RH850/P1x and F1x MCUs.

Nordic Semiconductor and Sequans Communications both showcased 5G eRedCap technologies at MWC Barcelona, signaling that the next phase of low-power 5G for IoT is moving from specification to commercial reality.

The new eRedCap, short for enhanced Reduced Capability, is a streamlined 5G device profile defined in 3GPP Release 18. It builds on the original RedCap concept introduced in Release 17 but pushes optimization further, particularly in terms of power consumption, RF complexity and baseband processing requirements.

Unlike full-scale 5G NR, which can utilize up to 100 MHz of bandwidth in FR1, eRedCap targets significantly narrower bandwidths, typically in the range of a few megahertz. This reduction directly lowers RF front-end demands, ADC/DAC requirements, and digital signal processing load. The result

Taara has unveiled a new wireless connectivity technology based on optical photonics that aims to deliver fiber-like speeds without trenching or spectrum licenses. According to the company, its device, called Taara Beam, can reach speeds of up to 25 gigabits per second and operate over distances of up to 10 kilometers.

The system is a line-of-sight free-space optics (FSO) link in which data is transmitted via a narrow beam of light between two points. The key difference compared to traditional FSO systems lies in the architecture. Beam steering is not handled by mechanical mirrors but by an optical phased array implemented on an integrated silicon chip. The chip contains more than a thousand miniature emitters that allow the beam to be electronically steered and shaped.

According to the company, most of the

is a simpler modem architecture with lower silicon area, reduced memory footprint and improved energy efficiency.

The aim is not peak data rates. eRedCap is designed for cost- and power-efficient 5G connectivity. It bridges the gap between LTE-M and full 5G NR, offering a migration path for IoT devices as networks transition toward 5G core architectures.

This is particularly relevant for smart meters and other massive IoT deployments. Many of these devices currently rely on LTE-M, NB-IoT or even legacy 2G networks that are being phased out. eRedCap allows such devices to remain compatible with evolving 5G infrastructure without incurring the cost and power penalties of a full NR implementation.

From a system perspective, eRedCap enables operators to consolidate IoT traffic within 5G networks while maintaining the long battery life and low bill-of-materials (BOM) cost structure required in high-volume IoT applications.

eRedCap brings smart meters and similar low-throughput devices into the 5G era. Not because they require higher throughput, but because long-term network evolution makes compatibility strategically necessary.

Researchers at the Hong Kong University of Science and Technology say they have developed a novel calcium-ion battery that could offer an alternative to lithium-ion technology. The study, published in Advanced Science, is based on a quasi-solid-state electrolyte and redox-active covalent organic frameworks (COFs).

Calcium is far more abundant in the Earth’s crust than lithium, making it an attractive raw material for large-scale energy storage. In terms of the electrochemical stability window, calcium-ion batteries can theoretically approach lithium-ion systems.

In practice, however, development has been hindered by two key challenges: sluggish Ca²⁺ ion transport in the electrolyte and poor cycling stability.

The HKUST research team addressed these issues by developing a COF-based

Taara Beam is targeted at operators, enterprise campuses, data-center interconnects, and backhaul links for 5G and future network base stations. The unit can be installed on rooftops or poles within hours. It operates in the optical spectrum, which does not require spectrum licenses, meaning spectrum fees do not constrain deployment.

The main technical question relates to weather resilience. Fog, heavy rain, and atmospheric turbulence can degrade optical links. Real competitiveness against fiber and millimeter-wave RF will depend on practical availability figures and link budget performance, not just peak data rates.

Taara originated from X, the Moonshot unit of Alphabet Inc. X develops high-risk, radical technologies, some of which are spun out into independent companies. Taara is one of these spin-offs.

quasi-solid-state electrolyte containing carbonyl groups. Within the porous framework, Ca²⁺ ions migrate along the aligned carbonyl groups. At room temperature, the material demonstrated an ionic conductivity of 0.46 mS/cm.

The researchers also built a full-cell prototype. It delivered a reversible specific capacity of 155.9 mAh/g at 0.15 A/g and retained 74.6 percent of its capacity after 1,000 cycles at 1 A/g. The results suggest that key bottlenecks in calcium-ion battery development can be mitigated through materials engineering.

The question is whether this is enough to challenge lithium. For comparison, LFP cathode materials in lithium-ion batteries typically offer around 150–170 mAh/g specific capacity, while NMC/NCA cathodes range from roughly 160–220 mAh/g. The reported 155.9 mAh/g is therefore in the same order of magnitude as LFP.

Qualcomm has unveiled its new Snapdragon Wear Elite platform, designed to bring true edge AI capabilities to smartwatches and other wearable devices. The company describes the concept as “Personal AI”, where wearables act as independent, context-aware devices rather than simple extensions of a smartphone. Wear Elite is Qualcomm’s first wearable platform to integrate a dedicated NPU for AI processing. The Hexagon NPU can run models with up to one billion parameters directly on the device, allowing part of the AI workload to operate without a cloud connection.

Rohde & Schwarz and Qualcomm have demonstrated carrier aggregation between the traditional FR1 band and the emerging FR3 spectrum at MWC Barcelona. The FR3 range is not yet part of commercial 5G networks but is being studied as a potential component of future 6G spectrum architecture. In the demo, a roughly 2.5 GHz FR1 channel was combined with a roughly 7 GHz FR3 channel into a single logical radio link. Both channels used 4×4 MIMO and high-order modulation. Instead of extending a single channel, the setup aggregates carriers operating in two different frequency ranges into one data connection.

AMD TARGETS VRAN WITH NEW EPYC 8005 PROCESSORS

The era of the entry-level laptop may be coming to an end. According to new forecasts from Gartner, rapidly rising memory prices are set to make sub-$500 notebooks economically unsustainable, potentially pushing the basic PC segment out of the market entirely by 2028.

The main driver is a sharp increase in DRAM and SSD prices. Gartner estimates that combined memory prices could rise by as much as 130 percent by the end of 2026. As a result, the average price of PCs is expected to increase by around 17 percent compared with 2025.

Memory components will account for a significantly larger share of a PC’s bill of materials. Gartner estimates that the share will rise from about 16 percent today to roughly 23 percent. This disproportionately affects low-cost devices, where manufacturers have little margin to absorb cost increases.

As component costs rise, most price-sensitive buyers are unlikely to accept higher prices for entry-level laptops. This is why Gartner expects the market for sub-$500 laptops to gradually disappear over the next two years.

AMD has introduced its fifth-generation EPYC 8005 server processors and is positioning them as a platform for telecom networks. The company argues that baseband processing can be handled by general-purpose x86 CPUs rather than relying on dedicated baseband ASICs or FPGA accelerators. The new EPYC 8005 series, code-named Sorano, is aimed specifically at virtualized RAN (vRAN) deployments. The goal is to run the demanding L1–L2 baseband processing of a base station directly on an x86 server.

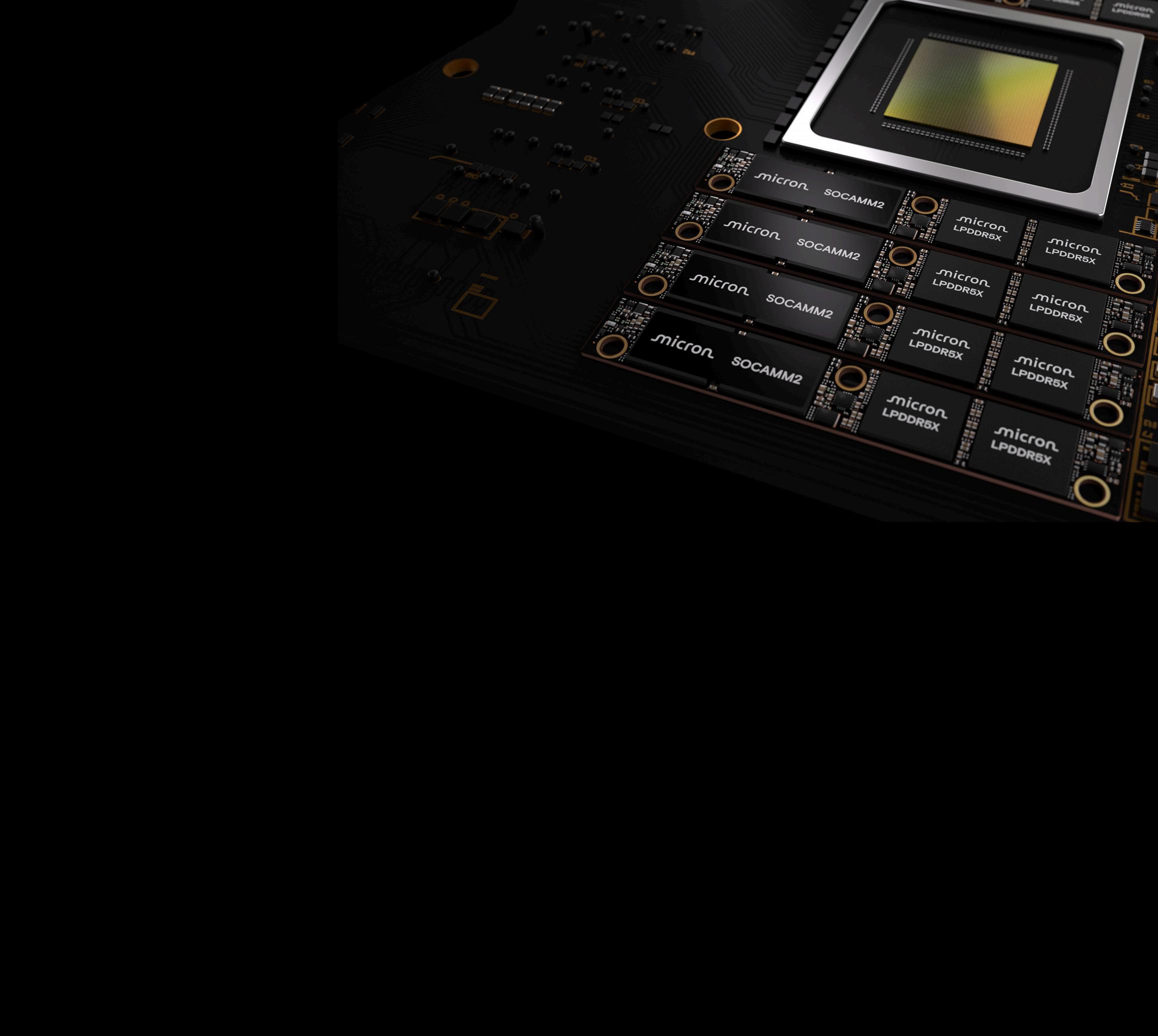

Artificial intelligence is pushing a surprising technology shift in data center memory. The low-power LPDDR memory familiar from smartphones is now moving into servers, driven by the need to improve energy efficiency and memory capacity for AI workloads. Micron has now introduced a new 256GB SOCAMM2 module, which the company says is the highest-capacity LPDDR server memory available today.

LPDDR (Low Power DDR) was originally developed for mobile devices where power consumption is critical. Traditional servers, in contrast, have relied on DDR-based RDIMM modules that provide large capacity but consume significantly more energy. As AI servers scale up, memory has become one of the largest contributors to power consumption and heat generation in data centers.

Micron’s new module is based on what the company describes as the industry’s first monolithic 32Gb LPDDR5X memory die. The technology enables a capacity increase to 256GB per SOCAMM2 module, up from the previous 192GB generation.

Higher capacity at the module level allows servers to scale memory capacity much further. According to Micron, an eight-channel server CPU can now support up to 2TB of LPDRAM. This is particularly relevant for AI inference workloads, where large context windows and key-value caches consume significant amounts of memory.
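The capacity figures above can be cross-checked with simple arithmetic. The sketch below assumes one SOCAMM2 module per memory channel, binary units (1 TB = 1024 GB), and no extra dies for ECC or sparing. None of these assumptions is stated in the text, so treat the die count in particular as a rough estimate.

```python
MODULE_GB = 256      # SOCAMM2 module capacity, from the text
PREVIOUS_GB = 192    # previous-generation module capacity, from the text
DIE_GBIT = 32        # monolithic LPDDR5X die density, from the text
CHANNELS = 8         # eight-channel server CPU, from the text

# Assumption: data dies only, no additional dies for ECC or sparing.
dies_per_module = MODULE_GB * 8 // DIE_GBIT

# Assumption: exactly one module per channel, 1 TB = 1024 GB.
total_tb = CHANNELS * MODULE_GB / 1024

# Generation-over-generation capacity gain per module.
capacity_gain = MODULE_GB / PREVIOUS_GB

if __name__ == "__main__":
    print(f"dies per module: {dies_per_module}")
    print(f"total capacity:  {total_tb} TB")
    print(f"capacity gain:   {capacity_gain:.2f}x")
```

Under these assumptions, eight 256GB modules add up to the 2TB quoted by Micron.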

Power efficiency is the main driver behind the shift. Micron says SOCAMM2 modules can consume roughly one-third of the power of comparable RDIMM memory while occupying only about one-third of the physical footprint in the server. At the same time, AI performance can improve. In long-context LLM inference workloads, Micron reports that time-to-first-token can improve by more than 2.3 times.

The SOCAMM architecture also solves one of the traditional challenges of LPDDR memory in servers. In mobile devices, LPDDR is typically soldered directly next to the processor. SOCAMM packages LPDDR chips into a small removable module, enabling serviceability and scalability similar to conventional DIMMs.

The technology originated in NVIDIA’s Grace CPU platform, which uses LPDDR5X to improve energy efficiency in AI servers. The newer SOCAMM2 version is currently moving through JEDEC standardization, which could enable broader adoption across the data center industry. Memory vendors including Samsung, SK hynix and Micron are all developing SOCAMM2 solutions as demand for AI infrastructure continues to grow.

Wi-Fi 8 can now be tested at the protocol level

Rohde & Schwarz and Broadcom are demonstrating Wi-Fi 8 RF signaling tests in a live device environment for the first time. The tests are implemented on the CMX500 signaling tester, now extended with support for the upcoming IEEE 802.11bn standard.

This is not just RF measurement in non-signaling mode, but full protocol-level signaling testing. The tester and Broadcom’s Wi-Fi 8 prototype communicate with each other as they would in a real network. This enables validation of PHY-layer and partially MAC-layer features in realistic operating conditions.

Wi-Fi 8, or 802.11bn, shifts the development focus from peak data rates toward ultra-high reliability (UHR). It retains the key physical-layer parameters of Wi-Fi 7, including operation in the 6 GHz band up to 7.125 GHz, 320 MHz channel bandwidths, 4096-QAM modulation, and Multi-Link Operation. New features include a distributed resource unit (dRU) approach to work around uplink PSD limits, as well as mechanisms allowing different MIMO

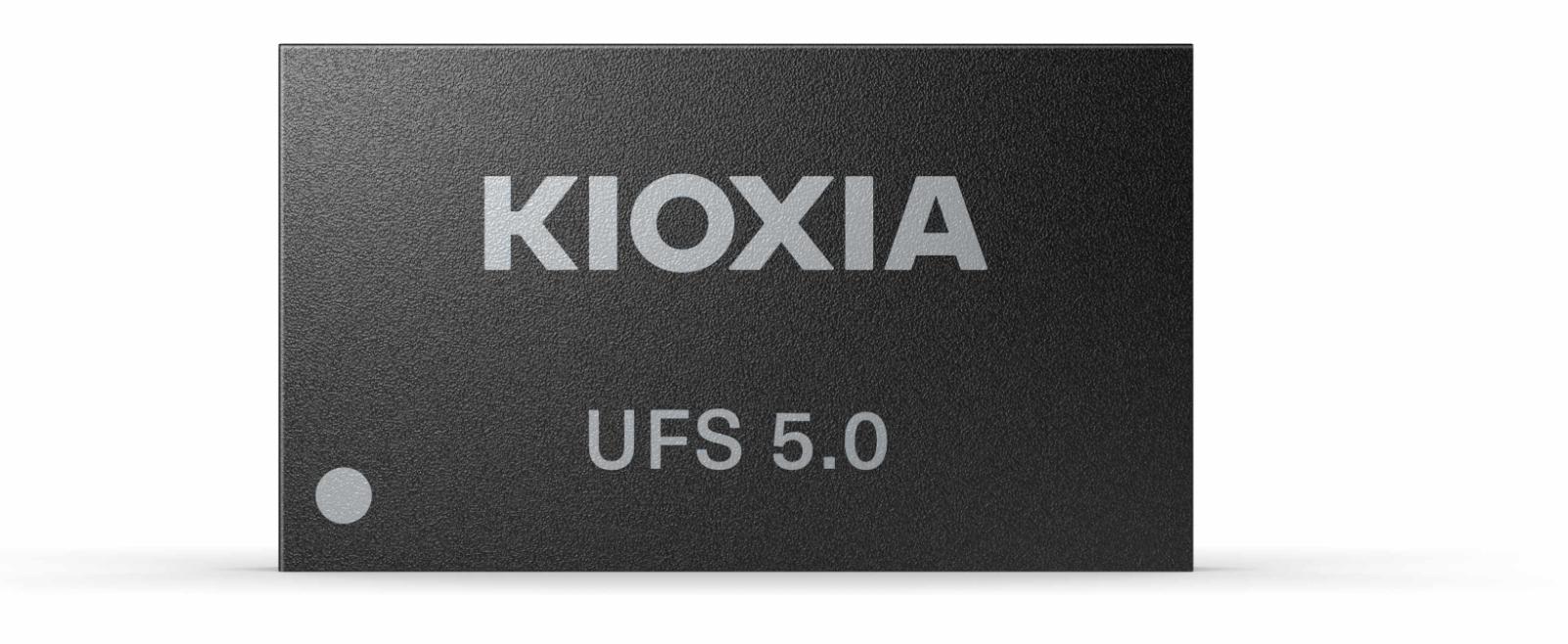

Kioxia has begun shipping evaluation samples of its UFS 5.0-compatible embedded flash devices, marking a significant step toward a new performance class for mobile storage. The driving force behind the shift is clear: large language models and generative AI workloads running directly on devices are redefining storage bandwidth requirements.

The UFS 5.0 standard is currently being developed by JEDEC. It builds on MIPI M-PHY 6.0 for the physical layer and UniPro 3.0 for the protocol layer. The new HS-GEAR6 mode supports a theoretical interface speed of 46.6 Gbps per lane. With two lanes, total throughput can reach approximately 10.8 GB/s.
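The two figures can be related with a short calculation. Two lanes at 46.6 Gbps give a raw line rate of about 11.65 GB/s, so the quoted 10.8 GB/s implies that roughly 93 percent of the raw rate is usable. The sketch below only derives that ratio. It makes no assumption about which coding or protocol overheads account for the difference, and the 4 GB model size in the usage example is a hypothetical value chosen for illustration.

```python
LANE_GBPS = 46.6             # HS-GEAR6 interface speed per lane, from the text
LANES = 2                    # lane count, from the text
QUOTED_GBYTES_PER_S = 10.8   # approximate throughput, from the text


def raw_gbytes_per_s(lane_gbps, lanes):
    """Raw line rate in GB/s (8 bits per byte, no overhead removed)."""
    return lane_gbps * lanes / 8


def stream_seconds(size_gbytes, rate_gbytes_per_s=QUOTED_GBYTES_PER_S):
    """Idealized time to stream a payload at a sustained rate."""
    return size_gbytes / rate_gbytes_per_s


raw = raw_gbytes_per_s(LANE_GBPS, LANES)
implied_efficiency = QUOTED_GBYTES_PER_S / raw

if __name__ == "__main__":
    print(f"raw line rate:      {raw:.2f} GB/s")
    print(f"implied efficiency: {implied_efficiency:.1%}")
    # Hypothetical example: streaming 4 GB of model weights.
    print(f"4 GB payload:       {stream_seconds(4.0):.2f} s")
```

Real devices will sustain less than this in practice, since NAND read performance and controller behavior also limit throughput.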

That figure is significant. It approaches the practical bandwidth levels of LPDDR5X system memory in mobile devices. While NAND flash latency remains fundamentally higher than DRAM, raw data throughput is increasing to a level where storage is far less likely to become a bottleneck in AI-heavy workloads.

In practical terms, this shifts the role of flash in the memory hierarchy. Large model weights can be streamed more efficiently from storage to CPU and NPU accelerators. Swap-related performance penalties are reduced. Faster data movement also enables processors to return more quickly to low-power idle states, a critical factor in battery-powered devices.

Kioxia’s evaluation samples integrate a newly developed in-house UFS 5.0 controller together with the company’s 8th-generation BiCS FLASH. Capacities are 512 GB and 1 TB, and the package measures 7.5 × 13 mm, supporting compact board layouts in high-end smartphones.

Although UFS 5.0 has not yet been fully ratified, the availability of evaluation silicon indicates that the ecosystem is entering the validation phase. Commercial implementations are likely to appear in next-generation flagship mobile platforms designed for on-device artificial intelligence.

Rohde & Schwarz and Realtek have validated the first test solution for the Bluetooth LE High Data Throughput (HDT) feature. The new PHY extension increases the maximum data rate of BLE connections from 2 megabits per second to 7.5 megabits per second.

links to use different modulation schemes under challenging reception conditions.

Testing these features requires signaling mode. dRU measurements assess a device’s ability to increase transmit power within spectral density constraints, while UEQM analysis verifies whether the device adapts modulation correctly according to defined MCS combinations.

The joint demo will be shown next week at MWC 2026 in Barcelona. It is worth noting that the 802.11bn standard has not yet been finally ratified. In practice, this means Wi-Fi 8 device development and validation are moving from laboratory and simulation stages toward full system-level testing even before the standard is officially completed.

In practice, the peak data rate of low-power Bluetooth increases by a factor of 3.75. This is not merely an optimization, but a new physical layer implementation combining new modulation schemes with multiple levels of forward error correction. HDT supports five different data rates between 2 and 7.5 Mb/s.

The higher data rate changes the role of BLE. Traditionally, Bluetooth LE has been optimized for small data payloads and ultra-low power consumption. HDT now enables low-latency audio, faster OTA software updates, and higher data density in consumer and PC devices. At the same time, the boundary between classic Bluetooth and LE is becoming less distinct.

The technology was validated using Rohde & Schwarz’s CMP180 radio communication tester together with Realtek’s next-generation chips, the RTL8922D and RTL8773J. The companies showcased the solution at Mobile World Congress in Barcelona, and it is also being presented at Embedded World in Nuremberg.

It is important to note that 7.5 Mb/s is the maximum PHY-layer data rate. Application-level throughput is still affected by the BLE protocol stack overhead and the selected forward error correction level. However, the key advantage of HDT is not just peak speed, but improved spectrum efficiency, energy efficiency, and link reliability.
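To put the PHY rates in perspective, the following sketch estimates idealized transfer times for an OTA image. The 1 MB image size and the `efficiency` parameter are hypothetical: the text gives no figure for protocol or error-correction overhead, so the default of 1.0 yields a lower bound on transfer time, not a prediction of real application throughput.

```python
LE_2M_MBPS = 2.0    # previous maximum BLE data rate, from the text
HDT_MAX_MBPS = 7.5  # maximum HDT PHY data rate, from the text


def transfer_seconds(size_bytes, phy_mbps, efficiency=1.0):
    """Idealized transfer time at a given PHY rate.

    `efficiency` is a hypothetical fraction of the PHY rate available to
    the application (1.0 means no protocol or FEC overhead at all).
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return size_bytes * 8 / (phy_mbps * 1_000_000 * efficiency)


IMAGE_BYTES = 1_000_000  # hypothetical 1 MB firmware image

slow = transfer_seconds(IMAGE_BYTES, LE_2M_MBPS)
fast = transfer_seconds(IMAGE_BYTES, HDT_MAX_MBPS)

if __name__ == "__main__":
    print(f"2 Mb/s:   {slow:.2f} s")
    print(f"7.5 Mb/s: {fast:.2f} s")
    print(f"speed-up: {slow / fast:.2f}x")
```

The speed-up at the PHY level matches the factor of 3.75. Any overhead that applies equally to both rates leaves the ratio unchanged but lengthens both absolute times.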

In addition to Bluetooth LE testing, the CMP180 supports multiple other radio technologies, including Wi-Fi 8 and 5G NR FR1 frequencies up to 8 GHz. According to Rohde & Schwarz, the instrument is also ready for the upcoming universal test protocol.

Bluetooth LE High Data Throughput is not yet a widely commercialized feature, but the first validation solution indicates that the ecosystem is already preparing for the next performance class.

War and the deteriorating security environment are increasingly visible in the financial results of technology companies. Rising defense budgets, a stronger emphasis on European strategic autonomy, and the modernization of command-and-control systems are accelerating investments. Finland’s Bittium is one of the clear beneficiaries, even if the company does not phrase it quite so directly.

CEO Petri Toljamo speaks of a “deterioration of the global security situation” and growing tensions between major powers. In practice, this has significantly increased demand for tactical radios and communication networks.

In January, Bittium took a strategically important step. The company signed an agreement with NATO’s information systems agency, the NATO Communications and Information Agency (NCIA). The agreement is a so-called Basic Ordering Agreement (BOA) procurement framework.

The agreement is not a single purchase order. It makes Bittium a recommended supplier for NATO and its member states. In practice, this gives the company a fast track into NATO procurements, as the products can be acquired without lengthy separate tendering processes.

The framework covers commercially available solutions. NATO can procure secure smartphones, encrypted communication software, and related accessories for operational use. The solutions are designed to address growing cyber threats.

The agreement includes Bittium Tough Mobile smartphones as well as SafeMove and Secure Call software. These enable encrypted connections, secure voice communication, and mobile device management. The software supports Android, iOS, and Windows environments.

Interoperability is a key requirement for NATO. SafeMove Mobile VPN securely connects tactical networks with commercial 4G and 5G networks. This addresses NATO’s need to integrate military and commercial networks in a controlled manner.

Restricted level use. The newer Tough Mobile 3 is based on a dual-persona system that fully separates personal and professional use. The agreement does not guarantee an immediate sales spike. However, it gives Bittium a strategically significant position within NATO’s procurement system.

Bittium’s revenue for October–December grew by 62.5 percent to EUR 53.9 million. EBITDA increased to EUR 23.0 million, or 42.7 percent of revenue. Operating profit amounted to EUR 15.4 million.

Growth was driven particularly by the Defense & Security business segment. The share of product-based revenue rose to 84.7 percent.

New orders totaled EUR 97.9 million during the quarter. In December, the company received EUR 15.9 million in orders from the Finnish Defence Forces for Tough SDR radios and related software development. Austria’s Cancom Austria AG ordered tactical data transfer systems worth EUR 18.5 million. In addition, Bittium signed a EUR 50 million licensing agreement with Spain’s Indra Group for its Tough SDR technology.

Revenue for January–December increased by 40.1 percent to EUR 119.3 million. Operating profit rose to EUR 19.4 million. EBITDA amounted to EUR 32.4 million. New orders grew to EUR 153.3 million and the order backlog increased to EUR 77.9 million.

Bittium estimates that revenue in 2026 will be EUR 140–155 million and operating profit EUR 26–32 million. Revenue and profit are expected to be weighted toward the second half of the year.

The shift in the security environment is now clearly reflected in the figures. Bittium’s position is strengthening in both national and NATO-related procurements.

After a prolonged inventory correction cycle, the electronics industry is entering a more disciplined phase. Growth in 2026 will be driven not by volume rebounds, but by stronger design activity, AI deployment and more resilient architectures, says Rebeca Obregon, President of Farnell Global.

The electronics market through late 2025 has been characterized by gradual stabilisation rather than a sharp rebound. Across regions, we've seen customers working through excess inventory, reassessing demand forecasts and refocusing on resilience after a prolonged period of uncertainty. While recovery has not been uniform by geography or sector, the second half of the year has delivered clearer signals that demand is returning in a more sustainable way.

Farnell Global's recent earnings performance reflects this shift. Growth across all regions indicates that customers are beginning to invest again, particularly in areas that support long-term competitiveness rather than short-term volume. Design activity is increasing, and that is a critical leading indicator. When engineers return to innovation (prototyping, evaluation kits and new architectures), it suggests confidence is rebuilding beyond simple replenishment buying.

Looking ahead to 2026, we expect market conditions to remain dynamic. Inventory levels are normalising, but customers are also becoming more disciplined in planning and forecasting. Visibility, flexibility and speed to market are becoming decisive advantages, particularly as supply chains remain exposed to geopolitical pressures, tariffs and shifting trade dynamics. While lead times have stabilised, they are unlikely to remain static as demand accelerates in specific technologies, particularly in memory and AI-related applications.

AI is moving from experimentation toward deployment. We're seeing real projects across industrial automation, data infrastructure, defence and energy, where processing power, memory and connectivity are central to performance.

This shift is reflected in Avnet's latest Insights Survey, which shows AI adoption accelerating beyond proof-of-concept, with engineers increasingly focused on deployment timelines, performance optimisation and long-term scalability rather than experimentation alone.

This is influencing both component availability and pricing, and it underscores the importance of early engagement among engineers, manufacturers, and distribution partners.

Another important shift we see heading into 2026 is a renewed focus on design robustness. The experience of recent years has highlighted the risks of single-source dependencies and rigid architectures. Customers are now prioritising designs that allow for component substitution, multi-vendor strategies and longer product lifecycles. This is driving demand for technical support, evaluation platforms and deeper collaboration, all areas where Farnell continues to invest.

From a regional perspective, growth drivers will vary. Industrial automation, energy, defence and medical applications remain constructive, while automotive continues to face structural challenges in some markets. What is consistent across regions is the need for partners who can combine global scale with local expertise, ensuring customers have access to both supply continuity and application-level support.

As we move into 2026, our focus at Farnell is on enabling customers to navigate uncertainty with confidence. Through our 'Power of One' approach — combining Avnet's global capabilities with Farnell's digital platform and regional presence — we are committed to supporting customers throughout the whole design and sourcing lifecycle. Innovation, resilience and speed will define the next phase of growth, and we see strong momentum as customers prepare for what comes next.

We'll be continuing these conversations with customers and partners. The focus will be on supporting engineers as they translate renewed design activity into resilient, production-ready solutions.

The full release of Aliro 1.0 by the Connectivity Standards Alliance signals that the new industry standard for secure and interoperable access control is ready to provide a convenient alternative to proprietary solutions.

Patrick Sohn STMicroelectronics

The industry has long envisioned a truly connected world where our mobile devices serve as a universal digital key to allow us freedom of movement between our homes, workplaces, and public spaces. A universal mobile wallet with valid credentials would give users the freedom to make payments, access open-loop transit systems, and unlock doors in residential, commercial or hospitality settings.

Turning this vision of a universal mobile wallet into reality requires a unified standard for access control to guarantee interoperability and meet security requirements considered critical for use cases with high turnover and complex ecosystem integrations. The release of Aliro 1.0 in February 2026 represents a key milestone for device makers who promised their customers interoperable access control experiences and are ready to deliver a fully standardized solution.

A new industry standard: from the experts behind Matter and Zigbee

The Connectivity Standards Alliance (Alliance), the industry-backed group behind well-known IoT standards Matter and Zigbee, recently released Aliro 1.0: a fully standardized credential format and secure communication protocol that defines how smartphones, wearables, and smartcards securely exchange digital wallet credentials with access control readers to reliably grant or deny access.

The release of Aliro 1.0 follows a proven model: the same experts from leading mobile platforms, silicon providers, and IoT device makers behind the standards that delivered a unified IoT ecosystem have now released a standard for access control to ensure unified behavior across devices and deployment models. As a member of the Aliro Working Group, STMicroelectronics contributed expertise and application examples to ensure the specification leverages wireless connectivity technologies supported in smartphones already in users’ hands. ST has also made available engineering resources to ease implementation of the Aliro protocol and compliance testing for market-ready products.

In addition to the full Aliro 1.0 specification, the Alliance released comprehensive test suites and implemented a robust certification program to give device makers and integrators a clear path to validate interoperability and security claims prior to the commercialization of Aliro-compliant designs.

Recent announcements about Aliro from mobile platform leaders, silicon vendors, and access control device makers, ranging from planned digital wallet integration to Aliro-compliant reference designs and commercialization plans, indicate broad industry support for a unified access control standard and cooperation between ecosystem partners to prepare for the first real-world deployments.

A unified access control standard that supports multiple connectivity technologies

To accommodate the widest possible range of user devices and installation scenarios, such as short-term rentals, commercial venues, or co-working spaces, Aliro 1.0 defines a transport layer that can run over multiple wireless connectivity technologies:

• Near Field Communication (NFC) for secure access with a simple tap from a smartphone, wearable device, or smartcard. This optimized configuration is ideal for close-range “tap-to-unlock” use cases.

• Bluetooth Low Energy for longer-range communication, enabling doors to unlock as users approach within a range of up to 100 meters, with NFC technology present as a reliable fallback option when the user device is offline or in areas with poor signal coverage such as parking structures, elevators, or shielded installations.

• NFC and Bluetooth LE combined with Ultra-Wideband (UWB) for precise localization and hands-free authentication.

The multiple configurations supported by Aliro 1.0 give device makers the flexibility necessary to design solutions for real-world access control scenarios and installation constraints while ensuring ecosystem interoperability.
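As a rough illustration of how a reader design might reason about these configurations, the sketch below picks a transport from the capabilities that a reader and a user device have in common. The names `Transport` and `choose_transport`, and the preference order itself, are assumptions made for this example; they are not taken from the Aliro specification.

```python
from enum import Enum


class Transport(Enum):
    NFC = "nfc"
    BLE = "ble"
    BLE_UWB = "ble+uwb"


# Hypothetical preference order: most hands-free option first, NFC tap last.
PREFERENCE = [Transport.BLE_UWB, Transport.BLE, Transport.NFC]


def choose_transport(reader_caps, device_caps):
    """Return the most preferred transport both sides support, or None."""
    shared = set(reader_caps) & set(device_caps)
    for transport in PREFERENCE:
        if transport in shared:
            return transport
    return None


reader = {Transport.NFC, Transport.BLE, Transport.BLE_UWB}
smartcard = {Transport.NFC}
phone = {Transport.NFC, Transport.BLE}

print(choose_transport(reader, smartcard))  # Transport.NFC
print(choose_transport(reader, phone))      # Transport.BLE
```

A reader that ships with all three radios can serve a smartcard and a UWB-capable phone with the same logic, which is the practical benefit of a shared transport layer.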

All Aliro-compliant designs require secure data exchange based on asymmetric key cryptography. This requirement ensures that every transaction between a user device and an access control reader is genuine, confidential, and resistant to cloning or replay attacks. To ensure a high level of security for large-scale IoT deployments, a certified secure microcontroller is strongly recommended to protect stored certificates and credentials for cases where vulnerable installations may be exposed to physical tampering or remote logical attacks.

The flexibility to combine standard connectivity and security building blocks positions Aliro 1.0 as a practical blueprint for engineers who need to design access control readers that are suitable for real-world use cases while ensuring the unified ecosystem remains interoperable.

Accelerate deployment of new access control devices with Aliro 1.0

Aliro-compliant reader designs will be interoperable with user devices that support the access control standard, eliminating the need for engineers to maintain multiple protocol stacks and complex integrations for different customers or regional ecosystems that can delay the rollout of new access control designs.

By standardizing how access control readers and user devices with access credentials communicate, Aliro offers device makers a common technical foundation that is not based on proprietary technology. Instead of locking solution providers into a proprietary ecosystem based on a closed protocol, Aliro is an industry-backed communication protocol with clear technical requirements that can be implemented by any compliant device.

By mitigating protocol fragmentation and redundant integrations, we can expect Aliro to eliminate some barriers to market entry and help device makers to accelerate their design cycles: design for standard compliance once and scale up fast for deployment across millions of residential, commercial or hospitality installations around the world without compromising security or interoperability.

Device makers who are ready to implement Aliro 1.0 can work with their preferred semiconductor technology partner, provided that partner can supply everything needed to build Aliro-compliant access control readers: a competitive portfolio of security and connectivity products with long-term availability commitments, a complete ecosystem with hardware development tools and a ready-to-use software development kit (SDK), and expert resources to accelerate the validation and certification process. As Aliro moves into the commercial rollout phase, the relationship between access control device makers and semiconductor vendors will play a key role in turning the specification into robust, scalable and interoperable products. ST already has products supporting Aliro 1.0 for both user devices and reader devices.

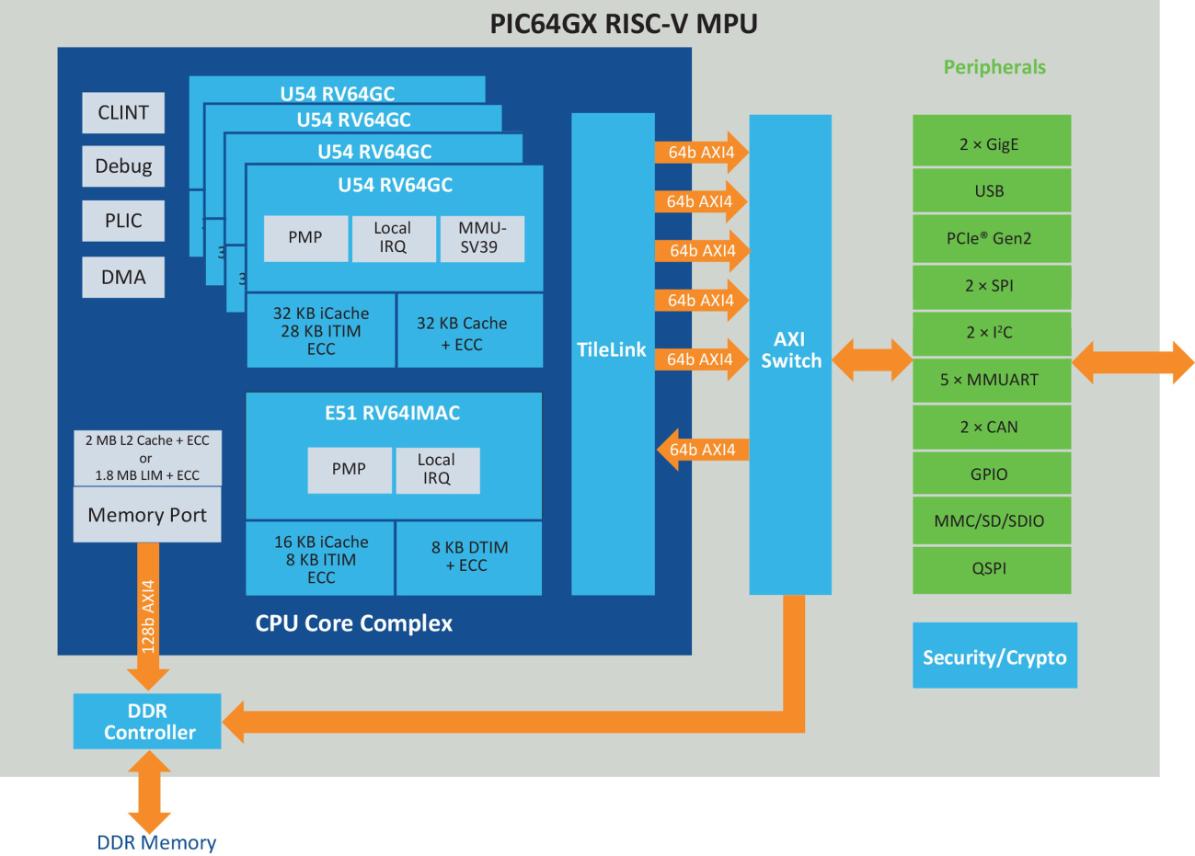

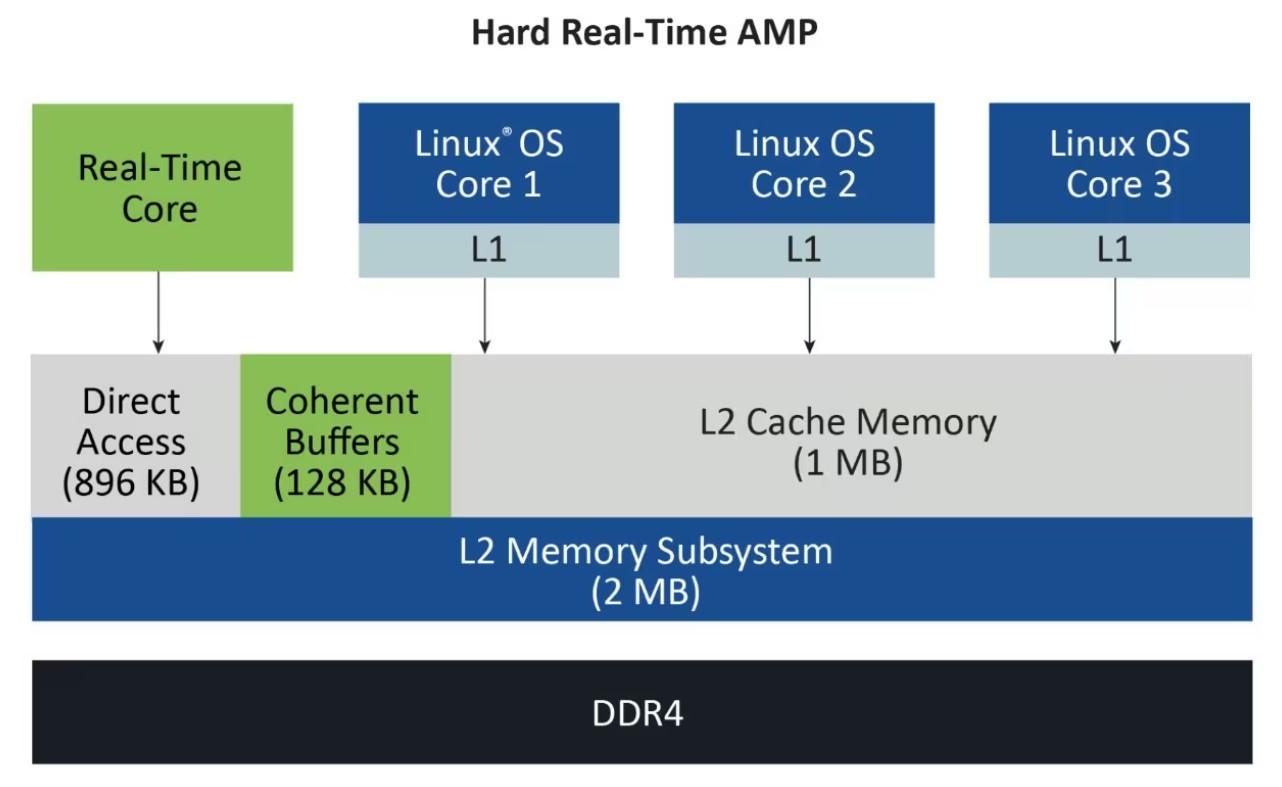

64-bit RISC-V® Quad-Processor With Asymmetric Multi-Processing (AMP)

Introducing Microchip’s PIC64GX microprocessor (MPU), designed to meet the demands of modern intelligent edge applications across industries like industrial, automotive, communications, IoT, aerospace and defense. The PIC64GX family offers unparalleled compute power and scalable performance, enabling engineers to innovate with confidence.

Supported by our robust MPLAB® development ecosystem and an extensive suite of libraries, you’ll accelerate your design, debug and verification processes, significantly reducing time to market. Discover the power of the PIC64GX series for creating high-performance, real-time AMP systems that execute flawlessly every time.

Key Features

• 64-bit RISC-V quad-core processor with Asymmetric Multi-Processing (AMP) and deterministic latencies

• Advanced security features including integrated Nonvolatile Memory (NVM) to implement secure boot and manage security keys

• A wide variety of connectivity interfaces including Ethernet Time Sensitive Networking (TSN), USB, PCIe®, SPI and I2C

• MIPI CSI-2®, HDMI® 2.0 and a video pipeline for imaging applications

• Support for Linux®, RTOS and commercial operating systems

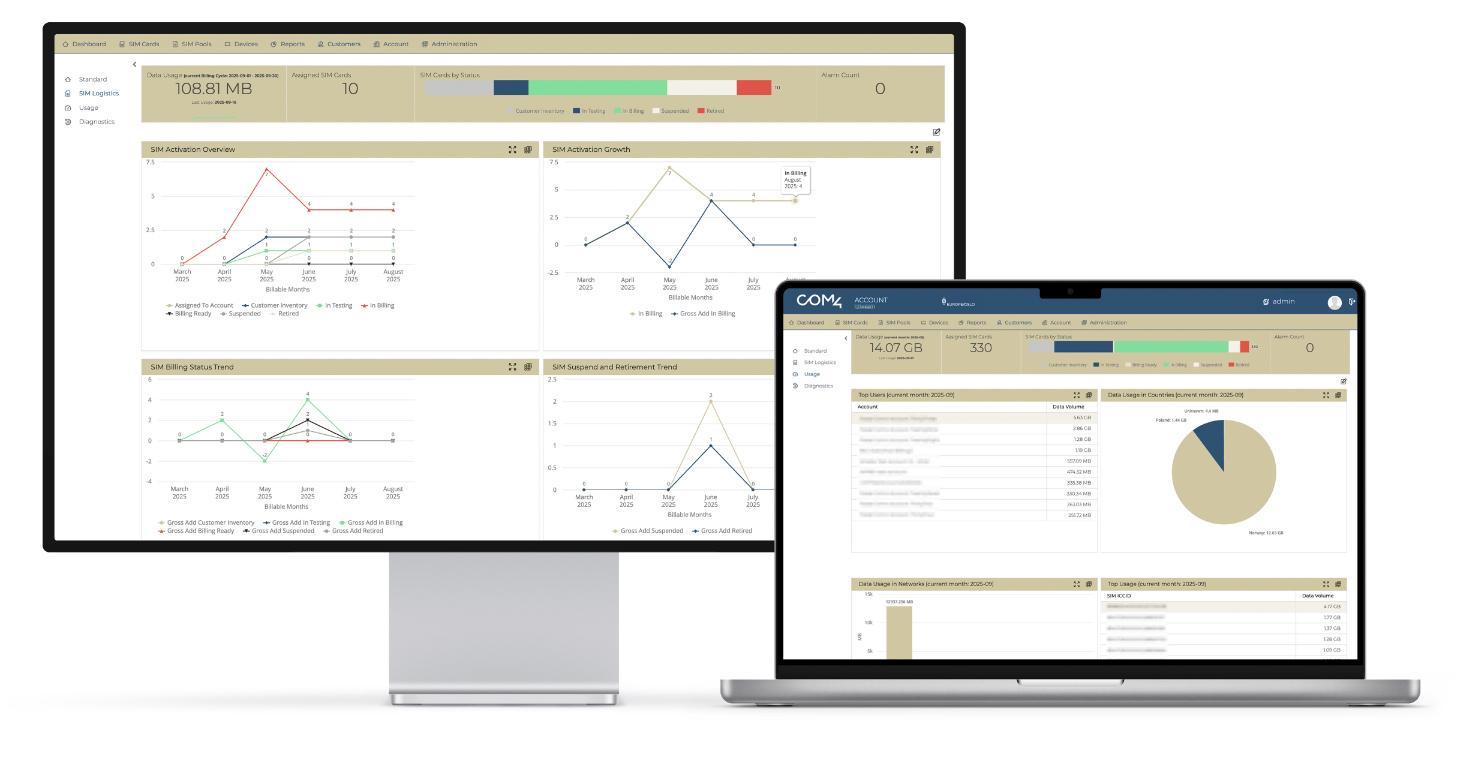

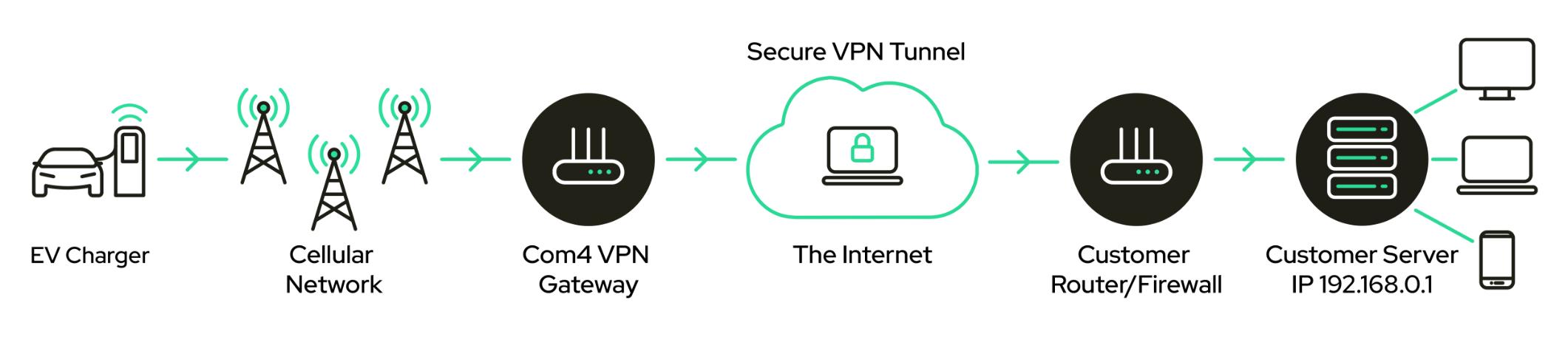

Across the Nordics, EV charging is turning into everyday infrastructure. For charge point operators, charger manufacturers, and service partners, the user experience still depends on something many people never see: the data connection behind the charge point.

Authentication, billing, monitoring, remote control, diagnostics, load management and software updates all rely on an uninterrupted link to the backend. When that link is unstable, everything around it becomes harder to operate, harder to scale, and harder to secure.

At Com4, we treat connectivity as a first-class design parameter. When the connection is engineered properly from the start, operators reduce recurring issues like failed transactions, remote actions that don’t reach the charger, updates that never complete, and support cases that end in expensive site visits. The result is higher uptime, smoother commissioning, fewer truck rolls, and more predictable operations as the network grows.

The Nordic reality

The Nordics combine high expectations with challenging deployment conditions. Chargers are often installed in indoor parking facilities, underground areas, and dense structures where concrete and steel can turn a strong outdoor signal into an unreliable indoor link. At the same time, charging networks are expanding along highways, in rural areas and across long distances where fixed-line access is not always practical.

The Com4 CMP interface on laptop, tablet, and mobile, designed for speed, clarity, and large-scale operations.

Weather adds another layer. Cold seasons increase the cost of downtime and the cost of sending technicians on-site, which is why remote diagnostics and over-the-air maintenance are essential for predictable operations. For operators, the goal is simple: keep the network online and keep the payment flow stable, regardless of location, season or traffic peaks.

The most effective way to improve availability is to remove single points of failure. In charging, that starts with multi-network, non-steered roaming. Instead of being locked to one operator, the charger connects to the strongest available mobile network automatically. That reduces dropouts in coverage shadows, accelerates deployments, and supports expansion without connectivity becoming the bottleneck.
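The idea of non-steered selection can be sketched in a few lines: rank every network the modem is allowed to use by signal strength alone, with no preferred operator. The `NetworkScan` fields, the operator labels, and the -110 dBm floor below are assumptions chosen for illustration; real modems perform this selection in firmware according to the SIM profile.

```python
from dataclasses import dataclass


@dataclass
class NetworkScan:
    operator: str
    rsrp_dbm: float      # received signal power; values closer to 0 are stronger
    attach_allowed: bool  # whether the SIM profile permits this network


def pick_network(scans, min_rsrp_dbm=-110.0):
    """Pick the strongest usable network, with no operator preference."""
    usable = [s for s in scans if s.attach_allowed and s.rsrp_dbm >= min_rsrp_dbm]
    if not usable:
        return None
    return max(usable, key=lambda s: s.rsrp_dbm)


# Hypothetical scan results from a charger in an underground garage.
garage_scan = [
    NetworkScan("Operator A", -118.0, True),   # too weak to be usable
    NetworkScan("Operator B", -97.0, True),
    NetworkScan("Operator C", -90.0, False),   # strong, but not permitted
]

best = pick_network(garage_scan)
print(best.operator if best else "no usable network")  # Operator B
```

With a single-operator SIM, the same garage would be limited to whichever network the SIM is tied to, even when it is the weakest one on site.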

Uptime also requires visibility and control at fleet scale. Connectivity must be monitored just as closely as the chargers themselves. Through Com4’s Connectivity Management Platform (CMP), operators get a real-time view of SIM status, data usage and device performance. This shortens troubleshooting cycles and helps prevent small connectivity issues from turning into operational downtime.

2G/3G sunsets are already underway

Mobile operators across the Nordics are reallocating spectrum from legacy 2G and 3G services to strengthen 4G and 5G. For EV charging, this matters because older charge points, routers, or fallback profiles that still rely on 2G/3G risk losing connectivity when local shutdown milestones are reached. The result is not just “a slower connection”: it can mean lost remote control, failed monitoring, interrupted service workflows, and chargers that appear powered but can no longer be reliably operated.

The practical takeaway is to treat migration as part of your uptime strategy. Charge points should be deployed with 4G/5G-capable communications from the start, and existing fleets should be mapped early so upgrades can happen ahead of regional switch-offs. A good audit looks beyond the charge point itself and checks modem capability, router and antenna setup, any dependency on 2G/3G fallback, and the firmware or backend constraints that can complicate upgrades.

Com4’s Connectivity Management Platform (CMP) unifies automation, diagnostics, billing, and reporting so you can monitor millions of devices, receive smart notifications, and make data-driven decisions in real time.

With eSIM/eUICC, multi-network connectivity and centralized SIM management, the transition becomes significantly easier because service continuity can be maintained while networks change underneath you.
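A first pass at the audit described above can be automated from an inventory export. The sketch below flags charge points whose modem lacks 4G/5G or whose fallback profile still depends on 2G/3G. The `ChargePoint` record, its field names, and the site identifiers are hypothetical; a real inventory would also need to cover antennas, routers, and firmware constraints.

```python
from dataclasses import dataclass, field

LEGACY = {"2G", "3G"}
MODERN = {"4G", "5G"}


@dataclass
class ChargePoint:
    site_id: str
    modem_rats: set                                   # radio technologies the modem supports
    fallback_rats: set = field(default_factory=set)   # technologies in the fallback profile


def audit(cp):
    """Return a list of migration findings for one charge point."""
    findings = []
    if not (cp.modem_rats & MODERN):
        findings.append("modem has no 4G/5G capability")
    if cp.fallback_rats & LEGACY:
        findings.append("fallback profile depends on 2G/3G")
    return findings


# Hypothetical fleet inventory.
fleet = [
    ChargePoint("garage-01", {"2G", "3G"}),
    ChargePoint("highway-07", {"2G", "4G"}, fallback_rats={"2G"}),
    ChargePoint("depot-12", {"4G", "5G"}),
]

for cp in fleet:
    print(cp.site_id, audit(cp) or "ok")
```

Sorting the flagged sites by the local switch-off date would then give a simple upgrade schedule.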

Charging infrastructure is connected infrastructure. It carries operational commands and frequently supports payment-related traffic, which makes security a baseline requirement. We use Private APN connectivity and VPN encryption to create isolated, controlled communication paths and secure end-to-end data transport between charging stations and backend systems. This reduces exposure to public internet threats and supports clearer segmentation and governance across deployments, including controlled access, consistent routing, and a cleaner operational security model at fleet scale.

Data from the EV charger travels through the cellular network and a Com4 VPN gateway to the internet, where it is securely routed via a VPN tunnel to the operator’s backend server.

Interoperability matters just as much. We support deployments aligned with OCPP 1.6 and 2.0, so charge points can communicate reliably with the central system while operators plan for long lifecycles and evolving requirements.
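To show why a dropped connection matters at the protocol level, the sketch below builds and matches the JSON frames that a charge point and central system exchange in the JSON flavor of OCPP 1.6: a request is an array of message type 2, a unique ID, an action name, and a payload, and the reply is an array of type 3, the same ID, and a payload. The helper names `build_call` and `parse_result` are made up for this example, and the framing should be checked against the OCPP specification before use.

```python
import json
import uuid

CALL, CALLRESULT = 2, 3  # message type IDs used in OCPP JSON framing


def build_call(action, payload):
    """Return (unique_id, frame) for a request from the charge point."""
    unique_id = str(uuid.uuid4())
    return unique_id, json.dumps([CALL, unique_id, action, payload])


def parse_result(raw, expected_id):
    """Return the payload of the reply that matches expected_id."""
    msg = json.loads(raw)
    if (not isinstance(msg, list) or len(msg) != 3
            or msg[0] != CALLRESULT or msg[1] != expected_id):
        raise ValueError("not the CALLRESULT for this request")
    return msg[2]


# A Heartbeat request carries an empty payload.
uid, frame = build_call("Heartbeat", {})

# Simulated reply from the central system (timestamp is illustrative).
reply = json.dumps([CALLRESULT, uid, {"currentTime": "2026-01-01T00:00:00Z"}])
print(parse_result(reply, uid)["currentTime"])
```

Because each reply is matched to its request by ID, a reply lost to a coverage dropout leaves the request unanswered, which is how unstable links surface as failed transactions and remote actions that never complete.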

Global coverage and 24/7 support

Nordic charging networks increasingly expand beyond one country, whether through cross-border traffic, shared platforms or international operators. Com4 provides seamless global cellular IoT connectivity with extensive coverage, supporting consistent rollouts and stable performance across regions.

Reliability is also about architecture. Com4 operates dual core sites so that if one core site experiences an outage, the other can take over to prevent disruptions in IoT connectivity. And because EV charging is a 24/7 service, our expert support team is available 24/7 to help keep deployments running smoothly at all times.

Make connectivity a first-class design parameter

Large-scale charging is not only about installing hardware. It is about operating a distributed, always-on connected system where uptime, security, and manageability determine the real-world outcome. The strongest networks are built for operational reality from day one: connectivity that holds up indoors and across long distances, secure communications end-to-end, and centralized control that keeps small issues from becoming downtime.

Com4 has more than 13 years of experience delivering managed IoT connectivity, and we build tailored solutions because no two charging rollouts are identical. Today we manage over 500,000 SIM cards in charging stations worldwide, and that scale informs how we design reliability, security and fleet control.

If you want to improve uptime and future-proof your Nordic rollout, we can start with a practical connectivity check: mapping challenging site types such as garages and remote locations, reviewing 2G/3G dependency in the installed base, and designing a secure architecture with Private APN, VPN, and multi-network connectivity. Contact Com4 to plan the next steps and tailor a setup that keeps charging stations online, secure and easier to scale across the Nordics.

For more information, see

application-level functions running on Linux on another core.