Authors:

DDI: Merima Dzanic, Henrik Hansen

DCAI: Ali Syed, Liat Tamam, Peter Godtfredsen, Piotr Chmura

BoldQubit: Nadia Carlsten - Founder & Principal, BoldQubit - Internationally renowned Quantum & AI SME

About Danish Data Center Industry Association

DDI is the national data center association, a not-for-profit organisation representing the Danish data center ecosystem.

For more information, please visit our website: www.datacenterindustrien.dk.

About Danish Centre for AI Innovation

DCAI is a company established to operate Gefion, Denmark’s first AI supercomputer, working to lower barriers to advanced computing and accelerate AI research and innovation across academia, startups, and enterprise.

For more information, please visit our website: www.dcai.dk.

Disclaimer

This publication is produced by the Danish Data Center Industry (DDI) and Danish Centre for AI Innovation (DCAI). Any reference to, reuse of, or copying of the whitepaper data should be approved by DDI and DCAI in writing beforehand.

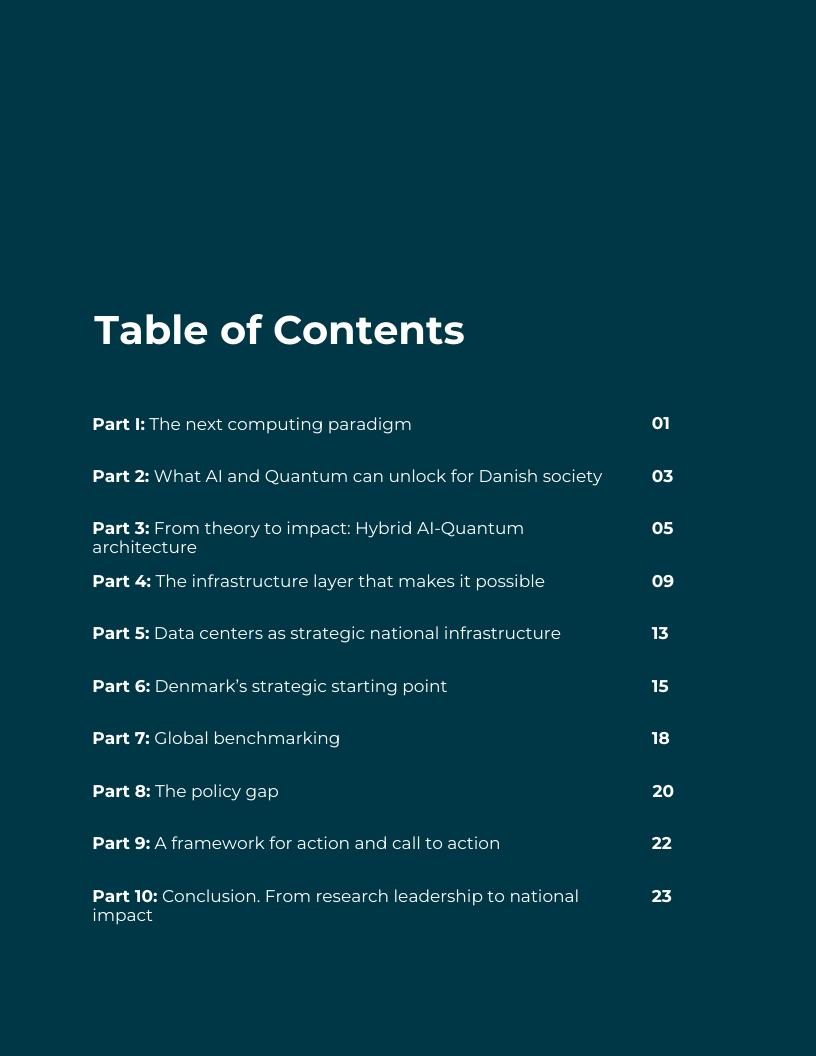

Artificial intelligence and quantum computing are converging into hybrid computing models, where quantum processors accelerate specific, hard-to-solve problems, and AI combined with classical high-performance computing provides scale, control, and usability. Together, they underpin the next generation of digital and industrial capability.

Hybrid AI–quantum computing is not primarily an algorithmic shift; it is an infrastructure shift. Quantum systems do not operate in isolation. They depend on tightly integrated classical computing, AI workloads, orchestration software, stable power, high-performance connectivity, and controlled physical environments. The decisive factor in capturing value from hybrid computing is therefore not research alone, but the infrastructure that enables it.

Data centers are becoming the execution layer of this new computing paradigm. Performance, reliability, security, and scalability are determined by how well classical and quantum systems are integrated within resilient, high-density facilities. Energy-intensive AI clusters and specialized quantum environments must operate side by side, requiring coordinated planning across digital policy, energy systems, and infrastructure design.

Denmark enters this era from a position of strength. The country combines world-class research in AI and quantum technologies, a mature and energy-efficient data center ecosystem, abundant renewable energy, robust fiber connectivity, and strong governance frameworks. Platforms such as the GPU-based supercomputer Gefion and emerging quantum initiatives demonstrate Denmark’s early capability to host advanced hybrid workloads within a sovereign infrastructure environment.

Yet a structural policy gap exists. Denmark has national strategies for AI and quantum computing, but data centers are not yet consistently treated as strategic national infrastructure aligned with these ambitions. Without coordinated planning, advanced hybrid workloads risk being hosted abroad, exporting economic value, intellectual property, tacit knowledge, and strategic capability.

This whitepaper examines how hybrid AI–quantum computing reshapes the role of digital infrastructure. It outlines hybrid system requirements, Denmark’s strategic advantages, international developments, and the emerging infrastructure gap. It argues that data centers must be recognised not merely as industrial facilities, but as strategic execution platforms enabling research, industrial competitiveness, energy optimisation, and national resilience. The coming decade will be decisive. Countries that align research, infrastructure, energy, and policy will convert technological leadership into economic and societal impact. By linking its AI and quantum ambitions with a coordinated national data center strategy, Denmark can secure technological sovereignty, strengthen key industries, and position itself as a trusted European hub for hybrid AI–quantum execution.

For decades, progress in computing has followed a familiar trajectory: faster processors, larger data centers, and more capable software. Artificial intelligence has accelerated this development, driven by large-scale GPU clusters and advanced data architectures, enabling breakthroughs in automation, modelling, and scientific research.

At the same time, many of the most complex challenges facing society and industry are becoming increasingly difficult to address using classical computing alone. Drug discovery, climate modelling, energy system optimisation, financial risk modelling, logistics planning, and advanced materials design involve vast solution spaces that are costly or impractical to explore fully, even with state-of-the-art AI.

Quantum computing represents a new class of computational capability. It is not a replacement for classical systems, but a specialised accelerator designed to address specific types of problems, particularly complex simulations and high-dimensional optimisation tasks where classical approaches struggle.

Today, quantum computing is still at an early stage of maturity. Systems are advancing rapidly, yet remain constrained by stability, error rates, and scale. Practical deployment therefore relies on hybrid models in which quantum processors operate alongside classical CPUs and GPUs.

In one possible workflow:

Classical systems prepare and structure data

AI models guide optimisation and reduce noise

Control systems manage quantum operations

Quantum processors accelerate specific computational steps

Classical infrastructure validates and scales results

The future of computing is therefore hybrid. AI, high-performance computing, and quantum processors operate as parts of a single, integrated workflow requiring rapid interaction, secure environments, and stable, predictable infrastructure.

Data centers & their role in the next computing evolution

Data centers provide the foundation for this model. They have evolved into advanced digital infrastructure capable of supporting diverse compute architectures, continuous operation, and high levels of security. Increasingly, quantum systems are being designed to integrate into data center environments rather than operate in isolation. In this new computing paradigm, infrastructure plays an active, enabling role.

As AI and hybrid workloads become larger, more energy-intensive, and more tightly integrated across classical and quantum systems, performance, reliability, and scalability depend directly on facility design, power availability, connectivity, and orchestration capabilities.

Data centers are therefore moving beyond real estate assets where technology is housed, becoming strategic execution layers that enable advanced technologies to deliver real industrial and societal impact.

Countries and regions that recognise and prepare for this shift will be best positioned to translate AI and quantum research leadership into economic competitiveness and public value.

The convergence of AI and quantum computing has the potential to address some of Denmark’s most important societal and industrial challenges. While many applications are still emerging, hybrid AI–quantum computing is particularly well suited to problems that are too complex, data-intensive, and costly for classical computing alone.

Healthcare & life sciences: Denmark’s strong life sciences ecosystem stands to benefit significantly. AI systems can analyse large biological datasets to identify promising drug candidates, while quantum simulations can model molecular interactions with higher precision in specific contexts. Hybrid workflows may accelerate compound screening, optimise molecular structures, reduce development timelines, and strengthen Denmark’s competitiveness in pharmaceutical research and advanced therapeutics.

Energy & Climate: In energy and climate systems, hybrid computing can improve optimisation of electricity grids with high shares of renewables, enhance energy storage modelling, and support advanced materials development for batteries and power systems. Hybrid models may also refine weather and climate simulations by improving parameter optimisation within large-scale GPU-based models. Even incremental efficiency gains can produce substantial economic and environmental benefits when applied at national scale.

Logistics & Transport: Supply chains, freight networks, and traffic systems involve large sets of dynamic variables and constraints. AI already plays an important role in route planning and demand forecasting, but hybrid AI–quantum approaches can refine complex scheduling, fleet optimisation, and congestion modelling challenges. This can improve efficiency, resilience, and emissions reduction across critical infrastructure systems.

Industry & Manufacturing: Advanced manufacturing relies increasingly on predictive maintenance, process optimisation, and materials innovation. Hybrid AI–quantum computing can enhance simulation accuracy in complex production environments, optimise multi-variable industrial processes, and support advanced materials design, improving productivity and competitive positioning for Danish industry in global markets.

Financial Services & Advanced Analytics: In financial services, AI models running on GPU clusters analyse large time-series datasets for portfolio optimisation, anomaly detection, and stress testing. Quantum-enhanced optimisation routines can refine high-dimensional allocation, correlation modelling, and risk constraint problems under complex market scenarios. Integrated hybrid workflows can allow advanced modelling while maintaining secure, auditable, and sovereign governance standards.

These domains closely align with Denmark’s existing strengths in life sciences, green energy, advanced manufacturing, financial services and digital innovation. However, the value of hybrid AI–quantum computing does not arise from algorithms alone.

Across all sectors, these applications depend on access to advanced computing environments that combine quantum processors with large-scale classical compute, secure data access, and reliable orchestration.

Hybrid AI–quantum computing builds on a strong classical computing foundation. Large-scale GPU and high-performance computing clusters provide the capacity to train models, process data pipelines, and execute industrial workloads at scale.

In Denmark, this foundation is exemplified by Gefion, a national AI supercomputing platform operating over 1,500 NVIDIA H100 and B300 GPUs within a DGX SuperPOD architecture interconnected by NVIDIA Quantum-2 InfiniBand (3.2 Terabit/s per node). This high-density accelerated computing backbone enables large-scale AI training, simulation, and advanced analytics within a sovereign national infrastructure platform.

Hybrid AI–quantum architectures can extend this classical backbone by introducing quantum processors as specialised accelerators within tightly integrated workflows. Not all problems can be solved with quantum algorithms, but many benefit from introducing quantum components in their execution. In this model, most computation remains classical: CPUs and GPUs prepare inputs, execute control logic, mitigate errors, and post-process results, while AI models increasingly assist in tuning parameters and stabilising execution.

The quantum processors contribute targeted advantages in specific domains such as molecular simulation, sampling, and high-dimensional optimisation, often providing drop-in replacements for functions and libraries, which eases adoption for end users.

In practice, hybrid applications operate in iterative loops:

Classical systems encode the problem and generate candidate solutions.

AI models select candidates, tune parameters, and guide noise-aware optimisation.

Control systems compile circuits, schedule runs, and manage quantum operations.

Quantum routines execute targeted subroutines to refine precision or explore complex solution spaces.

Classical infrastructure post-processes results, mitigates errors, validates outputs, and scales workflows.
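The iterative loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any production system: the `quantum_subroutine` function is a purely classical stand-in that returns noisy samples, the toy cost landscape and all function names are hypothetical, and a simple finite-difference optimiser takes the place of the AI-guided parameter tuning.

```python
import math
import random


def encode_problem():
    """Classical step: define the problem (here a toy 1-D cost landscape)."""
    return {"target": 1.3}


def quantum_subroutine(theta, problem, shots, rng):
    """Stand-in for a QPU call (assumption: simulated classically).

    A real system would compile a circuit and submit it to hardware.
    Here each 'shot' is the true cost plus Gaussian noise.
    """
    true_cost = 1.0 - math.cos(theta - problem["target"])
    return [true_cost + rng.gauss(0.0, 0.05) for _ in range(shots)]


def post_process(samples):
    """Classical step: average the shots to suppress sampling noise."""
    return sum(samples) / len(samples)


def hybrid_loop(iterations=60, shots=200, step=0.4, eps=0.1, seed=0):
    """Run the encode -> tune -> execute -> post-process loop."""
    rng = random.Random(seed)
    problem = encode_problem()
    theta = 0.0  # initial candidate parameter
    for _ in range(iterations):
        # Two noisy 'quantum' evaluations give a finite-difference gradient.
        c_plus = post_process(quantum_subroutine(theta + eps, problem, shots, rng))
        c_minus = post_process(quantum_subroutine(theta - eps, problem, shots, rng))
        grad = (c_plus - c_minus) / (2 * eps)
        # Classical optimiser updates the candidate and the loop repeats.
        theta -= step * grad
    final_cost = post_process(quantum_subroutine(theta, problem, shots, rng))
    return theta, final_cost


if __name__ == "__main__":
    theta, cost = hybrid_loop()
    print(f"theta={theta:.3f} cost={cost:.4f}")
```

Even in this toy form, every iteration crosses the classical-quantum boundary twice, which is why the turnaround time of that boundary matters so much at scale.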

This model requires a coordinated orchestration layer capable of scheduling multi-stage workloads, allocating resources across CPUs, GPUs, and future QPUs, and ensuring monitoring, isolation, and auditability. Hybrid architecture must therefore be designed as an integrated execution environment rather than a collection of components.

The Gefion AI Supercomputer’s architecture has been designed with forward-thinking extensibility in mind, allowing for easy addition of quantum devices in customers’ pipelines with minimal changes.

Latency is a critical constraint for many hybrid algorithms, posing real challenges for many data centers and supercomputing facilities. Classical control systems often need to communicate with quantum hardware in tight feedback loops; low latency is essential not just for performance and stability, but for scaling up execution as well. Early-stage research may tolerate physical separation of the two systems, but production-scale deployments will increasingly require co-location within the same controlled facility environment.

Co-location means more than just placing systems in the same building. It demands shared high-speed interconnects, unified orchestration, and synchronized scheduling to minimize latency and timing jitter. The "plug-and-play" narrative pushed by many QPU vendors considerably understates the complexity involved in cutting-edge algorithms. The strict requirements these algorithms impose affect not only the classical CPU-GPU architecture the quantum hardware interfaces with, but also the physical environment that hardware needs to operate reliably.
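The latency argument for co-location can be made concrete with a rough budget. The sketch below assumes a one-way propagation delay of about 4.9 microseconds per kilometre of optical fibre, which follows from the speed of light in glass. The fixed overhead, per-iteration compute time, iteration count, and distances are illustrative assumptions only, not measurements from Gefion or any other system.

```python
# Approximate one-way propagation delay in optical fibre (microseconds per km).
FIBRE_US_PER_KM = 4.9


def round_trip_us(distance_km, fixed_overhead_us=2.0):
    """Round-trip link time: propagation both ways plus a fixed overhead.

    fixed_overhead_us is an assumed placeholder for switching and
    interface latency; real values depend on hardware and fabric.
    """
    return 2 * distance_km * FIBRE_US_PER_KM + fixed_overhead_us


def loop_time_ms(iterations, distance_km, compute_us_per_iter,
                 fixed_overhead_us=2.0):
    """Total wall time of an iterative hybrid loop, in milliseconds."""
    per_iter = compute_us_per_iter + round_trip_us(distance_km, fixed_overhead_us)
    return iterations * per_iter / 1000.0


if __name__ == "__main__":
    # Assumed scenario: 10,000 feedback iterations, 10 us of compute each.
    for label, km in [("same hall (50 m)", 0.05), ("remote site (300 km)", 300.0)]:
        print(f"{label}: {loop_time_ms(10_000, km, 10.0):.1f} ms")
```

Under these assumptions the same loop takes about 0.12 seconds when the systems share a hall and about 30 seconds across 300 km, a difference that comes entirely from distance and cannot be engineered away with faster processors.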

Bridging quantum processors and classical systems requires purpose-built interconnect technologies at both the hardware and software level. NVIDIA’s NVLink enables sub-microsecond to ~microsecond-class GPU memory access in published benchmarks, while RDMA over Converged Ethernet (RoCE) can deliver microsecond-class application-level latency (subject to hardware and fabric configuration). DCAI's Gefion is one of very few European systems currently supporting this capability based on the NVQLink reference architecture [1, 2].

Denmark’s planned quantum capability, Magne under QuNorth, is expected to complement Gefion as a quantum layer within a broader hybrid environment.

In materials science and enzyme development, AI systems generate candidate molecular structures from large datasets. Quantum simulations then refine specific molecular interactions, before classical systems validate and scale results for industrial use. In financial services, GPU-based AI models process time-series data for portfolio optimisation and risk modelling, with quantum-enhanced routines tackling complex allocation or correlation problems, after which classical systems evaluate the results for compliance and operational scaling. Statistical analysis offers another use case: many computationally expensive algorithms, such as sampling, stand to benefit directly from QPU acceleration.

Hybrid AI–quantum computing is therefore not defined by quantum hardware alone, but by how effectively classical and quantum systems are integrated within resilient, secure, and energy-aligned data center environments. The capacity to design and govern this integrated architecture will determine whether Denmark can translate research capability into sustained industrial and strategic value.

This architecture can be conceptualised as a layered execution stack, connecting application workflows, orchestration software, and physical infrastructure. In practice, however, the boundaries between these layers are blurry. Workflows utilize many components of the system simultaneously, and the result is a tightly coupled design where real operational complexities, such as sub-zero operating temperature and stable power requirements, are often overlooked in high-level diagrams and descriptions.

Finance forecasting + portfolio constraints

Market data/time-series → CPU/GPU step → QPU step → loop

Improved decision signal

ORCHESTRATION

A) Preprocess (CPU/GPU): prepare inputs

B) QPU window (QPU): reserve slot → run circuits → return results

C) Post-process (CPU/GPU): decode → update → iterate

D) Operate (all): quotas | isolation | audit | retries | monitoring

Gefion (GPU/HPC) → low-latency link → Magne (QPU)

Co-location: same site → short links → stable timing
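The orchestration steps A to D in the diagram above can be illustrated with a small Python sketch. It is a hedged illustration only: `Orchestrator`, `FakeQPU`, and `QPUBusy` are hypothetical names invented for this example, the "circuits" are plain lists of integers, and nothing here reflects the actual interfaces of Gefion, Magne, or any vendor. It shows one pass through the stages; a real workflow would iterate.

```python
from dataclasses import dataclass, field


class QPUBusy(Exception):
    """Raised when no QPU slot is available (hypothetical)."""


@dataclass
class FakeQPU:
    """Stand-in for a QPU endpoint; rejects the first `busy_for` requests."""
    busy_for: int = 1
    calls: int = 0

    def reserve_and_run(self, circuits):
        self.calls += 1
        if self.calls <= self.busy_for:
            raise QPUBusy("no slot available")
        # Pretend each circuit yields one numeric result.
        return [sum(c) % 7 for c in circuits]


@dataclass
class Orchestrator:
    qpu: FakeQPU
    quota: int = 10        # max QPU submissions, failed attempts included
    max_retries: int = 3
    audit_log: list = field(default_factory=list)
    used: int = 0

    def _audit(self, event, **details):
        # D) Operate: every stage leaves an audit record.
        self.audit_log.append((event, details))

    def preprocess(self, raw):
        # A) Preprocess (CPU/GPU): turn raw inputs into toy 'circuits'.
        circuits = [[x, x + 1] for x in raw]
        self._audit("preprocess", n=len(circuits))
        return circuits

    def qpu_window(self, circuits):
        # B) QPU window: reserve slot -> run circuits -> return results.
        for attempt in range(1, self.max_retries + 1):
            if self.used >= self.quota:
                self._audit("quota_exceeded")
                raise RuntimeError("QPU quota exhausted")
            self.used += 1
            try:
                results = self.qpu.reserve_and_run(circuits)
                self._audit("qpu_ok", attempt=attempt)
                return results
            except QPUBusy:
                self._audit("qpu_retry", attempt=attempt)
        raise RuntimeError("QPU unavailable after retries")

    def postprocess(self, results):
        # C) Post-process (CPU/GPU): decode results into one signal.
        value = sum(results) / len(results)
        self._audit("postprocess", value=value)
        return value

    def run(self, raw):
        return self.postprocess(self.qpu_window(self.preprocess(raw)))


if __name__ == "__main__":
    orch = Orchestrator(FakeQPU(busy_for=1))
    print(orch.run([1, 2, 3]))
    for event, details in orch.audit_log:
        print(event, details)
```

Keeping quotas, retries, and audit records in the orchestrator rather than in each application is one way to obtain the monitoring, isolation, and auditability the text calls for; other designs are equally plausible.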

Hybrid AI–quantum computing shifts the focus from isolated laboratory systems to the data center as the primary execution environment. Without purpose-built facilities and specialised operational expertise, hybrid workflows cannot make the leap from research prototypes to reliable production systems. Without that leap, the cost reductions that economies of scale and broader adoption promise will remain out of reach. The trajectory mirrors what happened with large language models: once experimental novelties, they are becoming ubiquitous components of data processing pipelines through the use of programmatic interfaces and machine-compatible protocols.

Performance, reliability, scalability, and security are all shaped by the infrastructure in which hybrid workloads run. Modern data centers engineered for high density, low latency, and energy efficiency therefore become the essential foundation for production-grade hybrid AI–quantum capability. The field is moving too fast to design a “perfect” environment for a system arriving in five to ten years. The right approach is to act now: extend existing systems, accumulate operational experience, build competence, and iterate toward the next generation.

In Denmark, platforms like Gefion show how sovereign accelerated computing environments can anchor national AI capability. But extending such environments toward quantum integration demands deliberate facility planning, cross-domain engineering, and long-term infrastructure coordination. Post-deployment realities must also be taken seriously, as operating heterogeneous infrastructures over time is its own challenge, and consistency of service levels matters just as much as (if not more than) their quality.

From AI-ready to quantum-ready

AI workloads have already reshaped data center design, with high-density GPU clusters demanding advanced cooling, resilient power infrastructure, and high-performance networking. Hybrid AI–quantum systems add further layers of complexity on top of this.

Depending on the architecture, quantum processors may require cryogenic environments operating at ultra-low temperatures, vibration isolation, electromagnetic stability, deterministic power quality, precision signal control, and continuous environmental monitoring. These requirements are fundamentally different from conventional rack-based deployments – quantum installations can look far more like a physics laboratory than a typical data center floor.

Integrating quantum systems into existing facilities demands deliberate architectural planning from the outset. Incremental retrofitting is unlikely to be sufficient, particularly where sub-ambient operating temperatures are involved. Nor is it practical to house QPUs in a dedicated building adjacent to the data center, as the added distance reintroduces the network latency and bandwidth constraints the co-location model is specifically designed to eliminate.

Quantum processors themselves are not significant energy consumers, but the broader hybrid workload, which involves GPUs, CPUs, storage, and interconnects, remains highly energy-intensive. Quantum hardware functions as a specialised accelerator within an AI-ready environment, which reinforces the case for facilities capable of supporting both high-density compute and specialised environmental zones within a single, unified operational framework.

Co-location as infrastructure strategy

As hybrid workflows mature, latency and integration requirements increasingly favour co-location within the same controlled facility or data center campus. Keeping classical and quantum systems in close physical proximity reduces turnaround time in iterative execution loops and enables stable, predictable performance.

In practice, co-location implies shared high-speed fibre infrastructure, reliable and redundant connectivity, unified orchestration and scheduling, and integrated monitoring and governance. For operators and investors, this represents a structural shift in how facilities must be conceived.

Future-ready data centers may need to combine modular high-density compute zones with specialised quantum environments. Crucially, these must be designed for hybrid integration from the ground up, not added on as afterthoughts.

There is a temptation to treat quantum hosting as simply an extension of existing data center design, but this would be a very costly mistake. AI systems broadly demand more of what data centers already provide (power, cooling, networking), but some quantum deployments introduce different requirements that cannot be accommodated through incremental expansion. They must be accounted for at the site selection, design, and construction stage. Some equipment might be sensitive enough to pick up power line instabilities and vibrations from nearby tram lines.

The first generation of hybrid infrastructures has unsurprisingly come out of national supercomputing environments, signalling that these systems have outgrown the standalone laboratory model and are ready for broader adoption.

In the United States, Oak Ridge and Argonne National Laboratories are integrating quantum testbeds alongside existing supercomputing infrastructure under Department of Energy programs. In Europe, initiatives such as HPCQS are connecting Pasqal quantum processors to Tier-0 supercomputers in Germany and France, demonstrating that sovereign hybrid integration within national HPC ecosystems is already underway. The same applies to the integration of QuEra's system with a supercomputer in Japan [3, 4].

These examples point to a broader pattern: the competitive advantage of first-generation hybrid systems is emerging from coordinated infrastructure integration, not isolated deployments. This dynamic is also reshaping the workforce, as operating these environments requires new categories of high-skill expertise. AI operations specialists, quantum engineers, orchestration architects, and infrastructure integration experts need to develop operating models and cross-train to stay effective. Workforce development will therefore matter as much as the physical infrastructure itself.

As with the first generation of AI systems, value creation follows the workloads, starting with where they are hosted, then flowing toward the users and industries that depend on them. A gap in domestic hybrid AI–quantum capability would mean losing the ability to retain compute revenue, intellectual property, and high-value technical roles within national jurisdiction. The presence of sovereign infrastructure of this kind strengthens downstream industries across life sciences, energy, financial services, and advanced manufacturing. If hybrid infrastructure capacity develops primarily abroad, Denmark risks exporting not just workloads but economic leverage and strategic control over what may become a critical and contested computational resource.

Infrastructure decisions therefore carry consequences well beyond cost and operational efficiency. They shape national capability, long-term competitiveness, and strategic resilience.

As AI and quantum computing advance, data centers are no longer just industrial facilities; they are core digital infrastructure. They enable advanced capabilities in healthcare, energy, industry, research, and public services. As these technologies mature, where and how computing is executed increasingly shapes national control, trust, and resilience.

Hybrid AI–quantum workloads require secure environments, stable energy, high-performance connectivity, and tight integration of classical and quantum systems. These requirements go beyond traditional technology policy, making data center decisions central to technological sovereignty and economic competitiveness. AI and quantum systems are becoming high-value assets and, in some cases, support applications of national strategic importance.

As quantum capabilities advance globally, cybersecurity considerations extend beyond traditional threat models. Future-proofing critical infrastructure will require attention to post-quantum encryption standards, secure key management, and hardened data center environments capable of protecting sensitive intellectual property, public-sector systems, and nationally significant installations. Denmark’s opportunity lies not in claiming immunity from cyber threats, but in building resilient, high-assurance facilities designed to anticipate evolving security risks.

Today, many data centers are treated primarily as private industrial developments, evaluated on local planning, energy use, or environmental impact. The hybrid AI–quantum era makes the strategic gap clear: decisions about location, operation, and access have direct implications for national capability.

Not all data centers need the same level of oversight. But facilities supporting advanced AI, quantum computing, or other critical digital functions should be considered strategic infrastructure, alongside energy networks, telecommunications, and transport systems. Denmark’s strong renewable energy supply, stable grids, and world-leading data center platforms make it uniquely positioned to host such facilities safely and sustainably.

Key policy questions therefore emerge:

How should Denmark plan and coordinate strategic data center capacity?

How can national goals around energy transition, digital sovereignty, and innovation align with private investment?

How can trust, security, and resilience be ensured as computing systems grow more powerful and complex?

Where is responsibility best placed for coordination across digital policy, energy policy, infrastructure planning, and national security?

Answering these questions requires national-level alignment across digital policy, energy policy, infrastructure planning, and national security. By treating key data centers as active enablers of national capability, Denmark can turn research leadership in AI-quantum computing into real economic and societal value.

Countries that fail to recognise this risk losing control over where and how advanced computing is deployed, even if they remain strong in research and innovation.

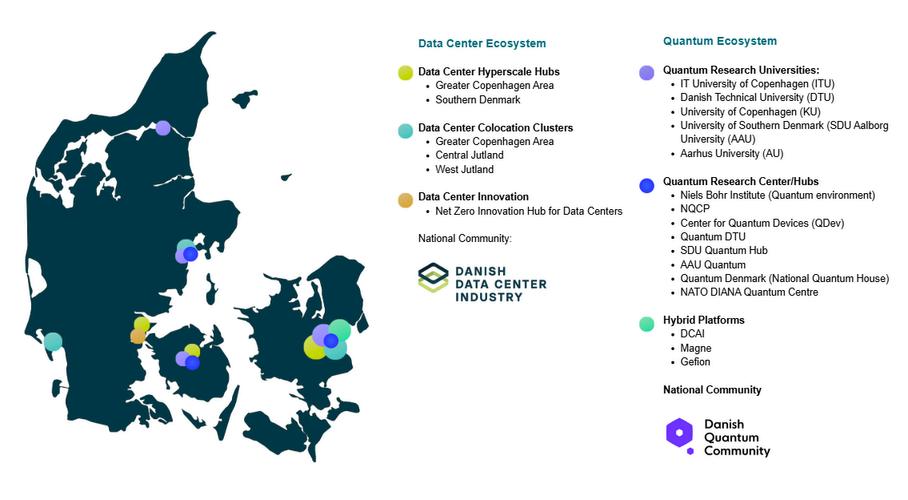

Denmark enters the quantum era from a position of unusual strength. As an active participant in the EU Quantum Flagship, the country is already embedded in Europe’s most ambitious quantum research and innovation programs.

Denmark’s universities, especially DTU and the University of Copenhagen, are recognised internationally for foundational work in quantum physics, photonics, and materials science, forming a research ecosystem that directly feeds talent and technology into industry.

At the infrastructure level, Denmark benefits from a rapidly growing Nordic data center landscape powered by abundant renewable energy, robust fiber connectivity, and some of Europe’s best conditions for efficient cooling. These same strengths make Denmark an attractive candidate for future quantum facilities, which demand stable power, controlled environments, and high-performance interconnects.

The country’s ongoing giga-factory Letter of Intent further highlights its readiness, with strategically located, infrastructure-prepared sites that could support quantum labs, hybrid data centers, and advanced manufacturing clusters.

Visualizing Denmark’s assets, its green energy corridors, cooling advantages, dense fiber routes, and existing data centers, helps illustrate a clear message: the country already has the foundational layer needed to support an AI and quantum-enabled digital economy.

Research & talent

World-class expertise in quantum computing, AI, and high-performance computing

Leading universities and research centers: DTU, Aalborg University, University of Copenhagen

Strong pipeline of talent and early-stage innovation

Advanced digital infrastructure

Mature, efficient, and growing data center ecosystem

Well-connected, energy-efficient, and integrated with national and international networks

Early hybrid AI–quantum deployments: Gefion (AI supercomputer platform) + Magne (quantum computer system), designed to scale

Renewable energy & stable grids

Global leader in wind energy and clean power

Reliable, stable electricity grid supporting energy-intensive AI and quantum workloads

Enables sustainable scaling of hybrid computing

Governance, trust, & security culture

Robust regulatory frameworks and secure data handling

Trusted environment for sensitive workloads: healthcare, industrial R&D, and national applications

Enhances credibility as a European hub for hybrid computing

Industrial & societal alignment

Strong sectors: life sciences, energy, logistics, advanced manufacturing

Readiness to translate research into real-world applications

Ecosystem supports rapid deployment from lab to market

Unlike many countries that are strong in research but lack industrial execution, or that have data center capacity but limited quantum expertise, Denmark has both. What is still emerging is a coherent framework that unites these strengths into a unified execution capability.

By building on this foundation, Denmark can become a trusted execution hub for European and global workloads, advancing societal goals, industrial competitiveness, and technological sovereignty. Coordinated investment in infrastructure, policy, and talent over the coming decade will be critical to realizing this potential.

3: Data Center & Quantum Ecosystems in Denmark

Hybrid AI–quantum computing is no longer theoretical. Countries and companies around the world are investing heavily in research, infrastructure, and execution platforms. These efforts show that leadership depends not just on scientific excellence, but on building the physical and digital foundations to host hybrid systems at scale.

United States: Federal initiatives such as the CHIPS Act and the QIST National Strategy are accelerating quantum research, manufacturing, and hybrid computing deployment. Private sector leaders like Amazon (AWS Braket) and Google are co-locating quantum hardware with classical compute in data centers, building integrated campuses with cryogenic labs and high-density connectivity.

Canada: Focused on quantum research and early hybrid integration, often in partnership with universities and cloud providers.

Finland: VTT has created one of Europe’s leading quantum labs, integrating cryogenic facilities with national high-performance computing to form a fully operational quantum testbed.

Germany: Regional quantum hubs link universities, industry, and sovereign cloud infrastructure, aligned with the national data strategy.

Denmark: National initiatives such as Gefion, supported by the Novo Nordisk Foundation, and QuNorth demonstrate Denmark’s growing capacity to host advanced AI and emerging hybrid AI–quantum workloads. Together, they link research, national compute infrastructure, and international collaboration, positioning Denmark as an active participant in Europe’s hybrid computing landscape.

European Union: Funding programs support research centers, national quantum hubs, and testbeds, emphasising secure, energy-efficient infrastructure for sensitive workloads.

ASIA-PACIFIC

China: Invests in large-scale quantum networks and research centers, making quantum computing a core national capability.

Japan and Singapore: Developing hybrid computing platforms that integrate AI, classical HPC, and quantum processors. These efforts combine infrastructure, talent development, and industry engagement in a coordinated approach.

Implications for Denmark: Scientific research is necessary, but not sufficient for competitive advantage. As seen in the United States and across Europe, leadership depends on translating research into integrated infrastructure and coordinated deployment. Denmark has strong research capabilities and a growing, highly efficient data center ecosystem, but lacks a coordinated national framework that treats data centers as strategic execution platforms

Without this alignment, Denmark risks lagging in practical deployment, even with world-class research. Acting early to align infrastructure, policy, and industry will determine whether Denmark fully capitalizes on hybrid AI–quantum computing.

Denmark has strong ambitions in quantum computing and AI, with leading research, a mature data center ecosystem, and reliable renewable energy. Yet current policies do not fully recognise the strategic role of data centers and digital infrastructure in enabling hybrid AI–quantum computing. This creates both risks and missed opportunities.

Infrastructure planning is not fully aligned: Existing data center policies focus on energy efficiency, local planning, and environmental compliance, but do not address low-latency quantum–classical integration, cryogenic cooling, or secure hybrid computing platforms. Without coordinated planning, advanced workloads may be hosted abroad, slowing adoption and industrial impact.

Ecosystems are fragmented: Strong research centers, an active quantum community, and an active data center industry exist, but investments in hardware, software, and operations are not fully coordinated. This limits the translation of research leadership into commercial and societal value.

Trust, sovereignty, and security gaps: Hybrid AI–quantum systems will handle sensitive data and critical workloads, but current policies do not yet address physical security, cybersecurity, or governance for quantum-enabled data centers, creating potential vulnerabilities.

Workforce readiness is underdeveloped: Running AI- and quantum-enabled data centers demands specialized, full-stack skills, from managing high-density, high-voltage hardware and cryogenic systems to orchestrating hybrid AI–quantum workloads. Current training and policies only partly address these needs, creating a bottleneck even where infrastructure exists. Building this integrated workforce will be key to scaling Denmark’s hybrid computing capabilities safely and efficiently.

Energy and sustainability considerations: AI workloads are highly energy-intensive, while quantum workloads require specialized infrastructure such as cryogenic cooling. Denying or delaying data center development due to energy concerns risks slowing both AI and quantum adoption. Coordinated policy is urgently needed to ensure that Denmark’s data centers remain sustainable, efficient, and capable of supporting next-generation workloads.

Opportunity cost of inaction: Other nations are actively coordinating research, infrastructure, and industry to scale hybrid AI–quantum capabilities. Without a national framework that links data centers to research, industrial applications, and workforce development, Denmark risks losing control over where advanced computing happens.

In particular, as quantum workloads scale, digital sovereignty and security will increasingly depend on keeping sensitive AI–quantum systems within the country. Failure to act could turn Denmark into a consumer rather than a provider of strategic computing capabilities.

With a coordinated national framework, Denmark can leverage its research, energy, and data center strengths to become a European hub for hybrid AI–quantum computing. Early alignment is critical. The decisions made today on infrastructure, energy policy, and

As hybrid AI–quantum capabilities scale, cybersecurity and infrastructure resilience become foundational national priorities alongside energy and industrial competitiveness.

To realise the potential of robust and secure hybrid AI–quantum computing, Denmark needs a coordinated, cross-sector approach connecting research, infrastructure, industry, and policy.

Early action is critical: other nations are rapidly scaling hybrid AI–quantum capabilities, and even a brief delay risks Denmark becoming a service consumer rather than a global leader.

To realise this vision, six strategic pillars of action are proposed to guide Denmark’s development of secure and competitive hybrid AI–quantum capabilities.

Figure 5: 6 Pillars of action

1) Recognise data centers as strategic infrastructure

Designate hybrid AI–quantum facilities as critical national infrastructure, comparable to energy, transport, and telecom networks.

Integrate requirements for low-latency quantum–classical integration, cryogenics, security, and resilience into regulation and incentives.

Foster public–private collaboration and establish a Danish Quantum–AI Data Center Taskforce aligned with the EU Apply AI framework.

2) Align research, industry, and infrastructure

Connect universities, research centres, and industry with the data center ecosystem through testbeds and pilot environments. Scale Danish platforms such as Magne and Gefion as examples of hybrid AI–quantum workflows.

Promote knowledge sharing to accelerate translation from research to real-world applications.

3) Build workforce and operational capability

Develop skills in hybrid workload orchestration, AI- and quantum-aware facility management, and cryogenics.

Expand talent pipelines through targeted training for quantum engineers and infrastructure specialists.

4) Ensure energy and sustainability alignment

Align hybrid data center development with Denmark’s renewable energy strategy and grid stability goals.

Promote energy-efficient facility design while maintaining compute capacity and flexibility.

Leverage Denmark’s renewable leadership to provide green, secure, scalable compute platforms.

5) Strengthen trust, security, and governance

Establish national standards for physical security, cybersecurity, data sovereignty, and operational oversight.

Design infrastructure for resilience, transparency, and rapid incident response.

Position Denmark as a trusted and sovereign hub for hybrid AI–quantum research and innovation.

6) Mobilise coordinated public and private investment

Create a national investment framework combining EU, Danish public, and private funding.

Align investments across the hybrid stack: energy, data centers, compute, interconnects, and orchestration.

Use co-investment and risk-sharing models to accelerate deployment and ensure long-term infrastructure investment certainty.

Hybrid AI–quantum computing is rapidly moving from research into real-world deployment. Early pilots, co-located infrastructure, and strategic coordination will give Denmark a decisive advantage. By leveraging its research excellence, mature data center ecosystem, renewable energy, and industrial readiness, Denmark can become Europe’s trusted execution layer for hybrid AI–quantum workloads, attracting investment, strengthening competitiveness, and advancing societal goals, while safeguarding technological and national sovereignty.

The window to act is now. Denmark faces both an energy and infrastructure challenge posed by AI and advanced computing, and an equally significant opportunity to unlock industrial, societal, and technological value. Seizing this opportunity requires a coordinated national approach that links digital policy, energy strategy, climate goals, industrial priorities, workforce development, and national security. Central to this approach is the creation of a national data center strategy that treats strategic facilities as core national infrastructure. Such a strategy would align public and private stakeholders across ministries and industries, ensuring that AI and quantum computing grow sustainably, securely, and in line with Denmark’s societal and industrial objectives.

As hybrid AI–quantum workloads evolve, this also has direct implications for data center design, location, and operations. Investors and operators should anticipate high-density AI and quantum systems, low-latency interconnects, and resilient, sustainable power requirements. Aligning facility design with national priorities will not only support technological leadership but also make Denmark a globally attractive destination for strategic computing infrastructure.

By acting decisively today, Denmark can transform its research leadership into practical outcomes, turning advanced computing capabilities into economic growth, climate-positive innovation, public benefit, and strategic influence on the global stage. Treating data centers as strategic national assets will ensure that the country’s AI and quantum ambitions translate into sustainable, sovereign, and long-term national impact.

https://network.nvidia.com/pdf/whitepapers/WP_Mellanox_Exegy.pdf

https://developer.nvidia.com/blog/nvidia-nvqlink-architecture-integrates-accelerated-computing-with-quantum-processors/

https://www.olcf.ornl.gov/2025/09/02/quantum-brilliance-ornl-pioneer-quantum-classical-hybrid-computing/

https://www.anl.gov/cels/article/argonnes-aurora-supercomputer-drives-groundbreaking-simulations-to-explore-how-light-shapes-quantum

https://www.fz-juelich.de/en/news/archive/press-release/2025/jade

https://www.eurohpc-ju.europa.eu/new-eurohpc-quantum-computer-be-hosted-luxembourg-2024-10-21_en

Contact DDI

Danish Data Center Industry (DDI)

Vendersgade 74B, 7000, Fredericia

Phone: +45 20 15 50 21

Website: www.datacenterindustrien.dk

Contact DCAI

Danish Centre for AI Innovation (DCAI)

Lyngby Hovedgade 4, 2800, Kongens Lyngby

Website: www.dcai.dk