Newsrooms within major media organisations are undergoing a process of transformation, aligned with the profound changes currently reshaping the broadcast industry. This evolution operates on multiple levels: from the strictly technological to a broader shift in culture and mindset, affecting both technical professionals and journalists themselves.

As we have been analysing in TM BROADCAST, the shared objective —with all the nuances introduced by each corporation’s specific circumstances— appears to be moving towards a digital-first model. This implies a gradual convergence of radio, television and digital teams, as well as the development of tools capable of unifying workflows, optimising resources and facilitating content exchange across departments.

The logic behind this shift is clear: to leverage the synergies created when any piece of content can be made available to the entire newsroom and adapted for multiple formats and platforms, from streaming and broadcast to social media.

However, beyond strategic considerations, the practical implementation of this model raises complex challenges. Not all formats perform equally well in linear and digital environments, and audience fragmentation requires different narrative approaches, which also has an impact on technological tools. In this context, technologies such as HTML graphics, new cloud-based editing systems and artificial intelligence applications are beginning to play a key role.

That said, the process is far from complete. In recent months, we have analysed how numerous European public broadcasters are addressing this challenge through their technical leadership, from Portugal’s RTP to Denmark’s TV 2. This month, we focus on a particularly emblematic case due to its weight and influence within the media ecosystem: the BBC.

But we will not stop in Europe. In the next issue, we will cross the Atlantic to analyse the case of a North American broadcaster. Stay tuned.

Editor in chief

Javier de Martín editor@tmbroadcast.com

Director Daniel Esparza press@tmbroadcast.com

Editorial Staff

Bárbara Ausín

Carlos Serrano

Key account manager

Patricia Pérez ppt@tmbroadcast.com

Graphic Design and Layout

Sorex Media

Ana Guijarro

Administration

Laura de Diego administration@tmbroadcast.com

Published in Spain ISSN: 2659-5966

TM Broadcast International #151 March 2026

TM Broadcast International is a magazine published by Daró Media Group SL Centro Empresarial Tartessos Calle Pollensa 2, oficina 14 28290 Las Rozas (Madrid), Spain Phone +34 91 640 46 43

The dilemmas of Artificial Intelligence from a copyright perspective

By Miranda Rivero Domínguez, lawyer in the Entertainment and Sports Law Department at Montero Aramburu & Gómez-Villares Atencia

The BBC’s roadmap for a digital-first newsroom

From unified newsroom workflows to HTML graphics and AI-driven tools, Morwen Williams, Director of Media Operations at the BBC, explains to TM BROADCAST how the broadcaster is adapting for a multi-platform future, the strategic and operational challenges it faces, and some key insights for navigating this process

RAI Milan: the technical heart of Italy’s Olympic coverage

We spoke with Gianluca Latini, Head of the TV Production Centre in Milan, to understand how RAI approached the production of the Games from its Milan headquarters and what lessons this experience offers for the future of large-scale event production

Karen Chupka, VP of Global Connections and Events at NAB:

“This year the Show will feature roughly twice as many AI-focused exhibitors as last year”

Jason Kornweiss, Senior Vice President, Advisory Services, Diversified:

“Every space is now a point of origination for live content”

We explore some of the key takeaways on broadcast-AV convergence, one of the major talking points at the latest edition of ISE, with insights from one of the world’s leading system integrators

AWS has announced AWS Elemental Inference, a fully managed AI service designed to automatically transform live and on-demand video broadcasts. At launch, AWS Elemental Inference will enable video customers to adapt video content into vertical formats optimized for mobile and social platforms in real time, the company said in a statement.

Additionally, AWS has selected Big Blue Marble as its launch partner. Integrated into Big Blue Marble’s cloud-native video processing and delivery platform, Cloud Video Kit, AWS Elemental Inference applies AI-driven capabilities to live video streams in real time to enable broadcasters, media organizations, and sports rights holders to adapt content for platforms such as TikTok, Instagram and YouTube.

The service uses an agentic AI application that analyzes video in real time and automatically applies the right optimizations at the right moments. Detection for vertical video cropping and for clip generation happens independently.

AWS Elemental Inference applies AI capabilities in parallel with live video, achieving 6-10 second latency. This “process once, optimize everywhere” method runs multiple AI features simultaneously on the same video stream.

Additionally, the service integrates with AWS Elemental MediaLive, using fully managed foundation models (FMs) that are automatically updated and optimized.

Cloud Video Kit combines scalable tools for live and on-demand video encoding, packaging and distribution with content security features, including DRM and forensic watermarking.

Operating in parallel with the encoding process rather than during post-processing, AWS Elemental Inference aims to allow broadcasters to generate vertical feeds with minimal latency (typically within 6-10 seconds) while eliminating the need for manual reframing or AI expertise.
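As a rough illustration of what reframing a horizontal feed into a vertical one involves, the sketch below computes a full-height 9:16 crop window around a point of interest. It is not AWS's method: the function name `vertical_crop_window`, the `subject_x` input (assumed to come from some upstream detector) and the 1920×1080 frame in the usage lines are all assumptions made for the example.

```python
def vertical_crop_window(frame_w: int, frame_h: int, subject_x: int) -> tuple[int, int, int, int]:
    """Return (left, top, width, height) of a full-height 9:16 crop.

    Hypothetical helper for illustration only; subject_x is the horizontal
    pixel position the crop should try to keep centred.
    """
    if frame_w <= 0 or frame_h <= 0:
        raise ValueError("frame dimensions must be positive")

    # Width of a 9:16 window that keeps the full frame height.
    crop_w = frame_h * 9 // 16
    if crop_w > frame_w:
        raise ValueError("frame is too narrow for a full-height 9:16 crop")

    # Centre the window on the subject, then clamp it inside the frame.
    left = subject_x - crop_w // 2
    left = max(0, min(left, frame_w - crop_w))
    return left, 0, crop_w, frame_h


if __name__ == "__main__":
    # Subject in the middle of a 1920x1080 frame, then near each edge.
    for x in (960, 100, 1900):
        print(x, vertical_crop_window(1920, 1080, x))
```

A real service would also have to decide where the subject is and smooth the window over time so the picture does not jitter; the sketch only covers the geometry.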

“Integrating AWS Elemental Inference with our Cloud Video Kit will allow us to help traditional broadcasters and streaming platforms compete more effectively with social media platforms”, explains Krzysztof Bartkowski, CEO of Big Blue Marble Streaming and Cloud Media. “Our platform has always focused on comprehensive video workflows and robust content protection. The addition of advanced AI capabilities allows our customers to adapt quickly to changing market demands and convert live content into vertical formats within seconds, without compromising performance or security”.

AWS Elemental Inference is available today in 4 AWS Regions: US East (N. Virginia), US West (Oregon), Europe (Ireland), and Asia Pacific (Mumbai). Users can enable it through the AWS Elemental MediaLive console or integrate it into workflows using the APIs. With consumption-based pricing, buyers pay only for the features they use and the video they process, with no upfront costs or commitments.

Canal+ and Google Cloud have announced a new multi-year partnership focused on artificial intelligence. Starting in June 2026, Canal+ will deploy Google Cloud’s AI technologies across European and African markets where its app is available, the company said in a statement.

Using Google Cloud’s technologies, Canal+ will focus on accelerating the video indexing of its content library. The new content classification aims to provide the global media and entertainment group with an in-depth multimodal database combining sound, video, and text data.

This granularity in content classification is intended to enable more personalized content recommendations on the homepage of the Canal+ App.

Canal+ will also leverage Veo3, Google’s new genAI video technology, to provide its production partners and creative teams with tools for tasks such as previsualizing a scene before shooting it or recreating historical moments from a single archival photo.

Stéphane Baumier, Chief Technology Officer of Canal+, affirms: “We are pleased to leverage Google Cloud’s most advanced AI technologies to drive Canal+’s technical innovation. Building on a long-standing collaboration with Google, this strategic partnership paves the way for limitless possibilities. Content video indexing for Canal+ at scale gives the group a significant edge, notably by enabling us to deliver sharper discovery and truly enhanced personalized journeys on the Canal+ App across all our markets. Creativity is the cornerstone of Canal+’s content production. We are excited to push creative boundaries by providing creators with tools that enable AI-generated video scenes, impossible to produce using traditional methods”.

Matt Renner, President, Chief Revenue Officer – Google Cloud, adds: “The entertainment industry is at a pivotal inflection point where the intersection of creativity and compute power defines market leadership. Our deepened collaboration with Canal+ is a testament to a shared culture of relentless innovation. By leveraging Google Cloud’s generative AI technologies, Canal+ is not just adopting tools; they are architecting the future of media and fundamentally transforming the entertainment landscape on a global scale”.

FIFA has selected YouTube to be a Preferred Platform for the FIFA World Cup 2026. The partnership between the two organisations aims to provide audiences with more ways to enjoy the tournament, YouTube said in a statement.

“FIFA is delighted to welcome YouTube as a Preferred Platform for the FIFA World Cup 2026. By spotlighting FIFA’s premium content and unlocking new opportunities for Media Partners and creators, this agreement will engage global fans in ways never seen before”, shares FIFA Secretary General Mattias Grafström. “As the world’s attention turns to the action in Canada, Mexico and the United States, this collaboration with YouTube reinforces our ambition to maximise the tournament’s impact across the ever-evolving media landscape, offering fans everywhere easy access to an immersive view of the biggest single-sport event in history”.

This partnership will focus on providing an immersive FIFA World Cup experience, where premium content from the tournament’s media partners and creators live side-by-side, everywhere YouTube is available.

NEP Europe has redesigned an outside broadcast (OB) unit to help broadcasters and rightsholders across Europe scale live coverage more efficiently and reliably.

The new unit, EU-03, manages broadcast tools using a software platform, includes a full SMPTE ST 2110 IP transition and supports the demand for 1080p HDR productions, the company said in a statement.

The launch of EU-03 is designed to introduce a more software-enabled production environment to the European market, supporting the use of license-based broadcast tools. Additionally, its software-defined approach, using a common […], is intended to allow new production capabilities to be added through software updates instead of major rebuilds.

“Our clients want the freedom to produce more content in smarter ways, without taking risks on quality or reliability”, explains Lise Heidal, President of NEP Europe. “EU-03 gives customers more flexibility in how productions are delivered, backed by the operational discipline and resilience NEP is known for”.

Based in Oslo, EU-03 will primarily serve customers in the Nordics, launching in April with coverage of the Norwegian Football League, among other projects.

The unit is also intended to support remote production workflows, connecting directly to NEP’s production hub in Oslo.

This enables certain production roles to be handled from the hub when appropriate.

“What matters most to customers is that live production works every time, and that it can adapt quickly when needs change”, affirms Eirik Nakken, Director of Technology for NEP Norway.

“EU-03 is built to scale from smaller shows to major events, while helping customers optimize footprint and associated costs. Across Europe and around the world, we’re investing in this software platform-based approach, combining the flexibility of modern software workflows with the proven resilience and redundancy required for mission-critical live events. We’re very proud of the innovative solutions we’re providing to our customers”.

XGN Global and X1 Mobile have announced the release of the world’s first commercial rugged smartphone capable of receiving 5G Broadcast television signals. The device has been specifically designed for first responder solutions and aims to deliver enhanced broadcast content in demanding environments where traditional cellular networks may be congested or unavailable, the companies said in a statement.

5G Broadcast is conceived as a transformative free-to-air technology that aims to enable direct delivery of live television, audio, and emergency information to compatible devices without requiring a cellular subscription or consuming mobile data. It focuses on leveraging existing broadcast infrastructure for efficient, wide-area coverage.

The rugged smartphone is designed to enable first responders to receive emergency alerts in under half a second, along with solutions such as resilient, subscription-free access to critical alerts, encrypted communications, and real-time information delivery even when cellular networks are down or overloaded.

The first model of this 5G Broadcast-enabled rugged smartphone is a European variant, supporting the majority of European television frequencies, and will be available starting in May 2026.

A companion Customer Premises Equipment (CPE) device is scheduled to follow in Q3 2026.

X1 Mobile will subsequently release a US-specific model capable of receiving American broadcast frequencies in Q3 2026.

The technology has already been tested at major global events, including the Paris 2024 Olympics and ongoing trials at the Milano Cortina 2026 Winter Olympics.

Additionally, it integrates the Enensys 5G Broadcast client, Cube Agent Mobile, which serves as the interface between the 5G Broadcast chipset and the application player.

The Cube Agent is interfaced with proprietary code developed by X1 Mobile, working to ensure seamless reception and robust performance tailored for mission-critical applications.

Attendees at Mobile World Congress (MWC) in Barcelona, Spain, from March 2–5, 2026, can visit the Enensys booth, 5B-61-34, to discuss 5G Broadcast and this device firsthand.

European Broadcasting Union (EBU) Member broadcasters have delivered their most successful Winter Olympic Games ever, with Milano Cortina 2026 achieving record-breaking reach and exceptional engagement across Europe. All figures are based on data provided by EBU Members and their respective audience measurement systems, the EBU said in a statement.

In Austria, ORF’s coverage of Milano Cortina 2026 became the most watched Games in the broadcaster’s history, reaching 6.138 million viewers, or 81% of the TV population aged 12 and over, across more than 500 hours of coverage on ORF 1 and ORF SPORT +.

Česká televize reported that total coverage of the Winter Olympics on ČT Sport and ČT Sport Plus reached approximately 7.39 million viewers. This marks a substantial increase compared with the 5.46 million reach for Beijing 2022, benefiting from the favorable European time zone.

In Finland, Yle reached 4.5 million viewers on linear broadcast, up from 4.1 million for both Beijing 2022 and Paris 2024. Daily TV reach averaged 2.5 million viewers, with an average market share of 47.9%, while the ice hockey semi-final between Canada and Finland peaked at 2 million.

In France, more than 50 million viewers followed coverage on France Télévisions, delivering a higher reach than Torino 2006. France 2 peaked at 6.5 million viewers, capturing a 52% audience share, during coverage of the French biathletes’ gold and silver medal triumph.

In Germany, ARD and ZDF delivered similarly strong performances. ARD reached around 40 million viewers on Das Erste, equivalent to almost half of the German population. Its Olympic broadcasts averaged 3.14 million viewers with a 23.2% market share, rising to 34.5% among 14–29-year-olds, while peak audiences hit 6.79 million for the doubles luge bronze medal race. Digital engagement was particularly strong, generating more than 85 million video views across the ARD Mediathek and Sportschau.de, including over 65 million livestream views, with the first week marking the highest usage in ARD Mediathek history.

In Italy, engagement reached exceptional levels in the host nation, with two out of three Italians watching RAI’s coverage of the Games, a higher proportion than for Paris 2024. The Opening Ceremony attracted more than 9.2 million viewers and a 46% share, while the Closing Ceremony drew over 6.2 million viewers and a 31% share, demonstrating sustained nationwide interest from start to finish.

In the Netherlands, NOS coverage reached approximately 12.3 million viewers, representing around 73.8% of the population, across the Games period.

NRK delivered record engagement for its coverage across Norway, reaching 4.1 million people across linear TV and online, equivalent to around 87% of the population aged 10–79. The most-watched event was the men’s 50 km mass start in cross-country skiing, which averaged 839,000 viewers, achieved a 94% market share, and peaked at 1.178 million viewers.

In Poland, TVP recorded significant growth compared with Beijing 2022, with TVP 1 averaging 621,697 viewers for live Olympic coverage, while TVP Sport more than doubled its Beijing performance, averaging 270,286 viewers.

In Sweden, SVT reached 7.4 million viewers with its coverage, meaning that around seven out of ten Swedes watched the Games on SVT. This represents the broadcaster’s highest Olympic reach since it last held the rights in 2012, despite having live rights to only around half of the events.

RTV SLO recorded its strongest Olympic broadcast performance in a decade, reaching 75% of the population in Slovenia, or around 1.43 million people, across TV, radio and digital platforms. More than 200 hours of original programming drove strong engagement, with ski jumping on 15 February peaking at a 66% audience share and averaging 583,300 viewers per minute.

In Slovakia, STVR delivered historic results during its Milano Cortina 2026 coverage, led by the Olympic ice hockey quarterfinal between Slovakia and Germany. The match averaged 835,000 viewers aged 12+ on the :Šport channel with a 66.8% market share, peaking at 956,000 viewers, and became the most-watched programme across the entire Slovak TV market, outperforming major news broadcasts.

In Switzerland, SRG broadcasters RTS, RSI and SRF reached 4.8 million TV viewers with their coverage of the Games. Alpine skiing proved particularly popular, with several men’s and women’s races averaging over 1 million viewers nationwide and delivering market shares of around 80%. The men’s downhill drew 1.2 million viewers, the women’s downhill 1.1 million, and the men’s giant slalom 1.1 million. Ice hockey and curling also generated strong audiences, reaching 2.4 million and 2.3 million viewers respectively across the event.

In Ukraine, Suspilne adapted its Milano Cortina 2026 coverage strategy in response to nationwide electricity shortages, placing increased emphasis on digital distribution and social media highlights while maintaining a strong linear presence on Suspilne Sport. The linear channel achieved a reach of approximately 5 million Ukrainians, while the Suspilne Sport YouTube channel also reached 5 million users, marking a platform record.

In the UK, BBC Sport’s coverage of the Winter Olympic Games 2026 delivered its largest overall audience consumption ever, driven by a record 83 million streams and more than 44 million streamed hours across BBC iPlayer, the BBC Sport website and app.

A total of 26.3 million viewers tuned in on TV, with a peak audience of 5.5 million for Team GB’s men’s curling final on BBC One. Social coverage generated 235 million views, confirming the Games as the most widely consumed Winter Olympics ever on the BBC.

Andreas Aristodemou, Director of Olympics, EBU, remarks: “Milano Cortina 2026 stands as the strongest Winter Olympic Games on record for EBU Members, with audiences across Europe engaging at historic levels from Opening Ceremony through to the final medal events”.

SRF, the Swiss public broadcaster, has taken a step forward in its live production workflow by introducing JPEG XS compression and Media Pro App functionality on its existing Nimbra event-based infrastructure. This marks the first time the organization is using an IP compression format across its current deployment, spanning both Nimbra 600 and Nimbra 1000 platforms, the company said in a statement.

Rather than introducing new hardware or redesigning workflows, SRF has enabled Net Insight’s JPEG XS solution directly within its Nimbra environment. The move is intended to support greater flexibility and efficiency, and lower latency.

JPEG XS enables visually lossless compression with ultra-low latency, making it well suited for live contribution and production workflows. Combined with the Media Pro App, SRF can now manage and scale IP-based media flows more dynamically.

This deployment aims to enable broadcasters and production teams to evolve toward IP and software-defined workflows at their own pace, and without compromising predictability.

“By introducing JPEG XS on our existing Nimbra setup, and using it in live production during the recent Winter Games, we’ve been able to take a clear step toward more flexible IP-based workflows without changing how we operate day to day. It’s a controlled, future-oriented evolution that gives us new production options while maintaining the reliability we require”, explains Daniel Graf, Head of CSM at SRF.

Yospace stitched 5.4 billion one-to-one addressable OTT advertisements across the 17 days of Milano Cortina 2026. This marks an increase of 35.7% from Paris 2024, the company said in a statement.

Yospace stitched 0.73 more ads per stream start compared to Paris 2024. In total, the company stitched 3,675 years’ worth of ad content during the Olympic Winter Games. To illustrate this, the company explains that if someone were to stream all the ads in order and finish watching today, the moment they pressed play would pre-date the first ever Games in Ancient Greece by almost a thousand years.
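For readers who want to sanity-check these figures, the short calculation below derives what they imply. It is a back-of-the-envelope sketch, not data from Yospace: the average ad length and the Paris 2024 total are inferred values, and the calendar assumptions (a 365.25-day year, the traditional 776 BC date for the first ancient Games, 2026 as "today") are ours.

```python
# Figures quoted in the article.
ADS_STITCHED = 5.4e9        # ads stitched during Milano Cortina 2026
AD_CONTENT_YEARS = 3675     # total duration of stitched ad content, in years
GROWTH_VS_PARIS = 0.357     # 35.7% increase over Paris 2024

# Assumptions made for this sketch (not stated in the article).
SECONDS_PER_YEAR = 365.25 * 24 * 3600
CURRENT_YEAR = 2026             # the issue date
FIRST_ANCIENT_GAMES = -775      # 776 BC in astronomical year numbering

# Implied average length of a single stitched ad, in seconds.
avg_ad_seconds = AD_CONTENT_YEARS * SECONDS_PER_YEAR / ADS_STITCHED

# Implied number of ads stitched for Paris 2024.
implied_paris_ads = ADS_STITCHED / (1 + GROWTH_VS_PARIS)

# How long before the first ancient Games the viewer would have pressed play.
start_year = CURRENT_YEAR - AD_CONTENT_YEARS
years_before_first_games = FIRST_ANCIENT_GAMES - start_year

if __name__ == "__main__":
    print(f"Implied average ad length: {avg_ad_seconds:.1f} s")
    print(f"Implied Paris 2024 ads: {implied_paris_ads / 1e9:.2f} billion")
    print(f"Years before the first ancient Games: {years_before_first_games}")
```

Under these assumptions the average ad works out to a little over 21 seconds and the Paris 2024 total to just under 4 billion ads, both plausible orders of magnitude for the quoted numbers.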

Unlike Paris 2024, which hosted several tentpole track-and-field events, Yospace monetised the Winter Games for nine customers worldwide, several of whom ran multiple live event (pop-up) channels.

Yospace’s Orchestrator system was applied across many of its live channels to maximise ad opportunities across multiple live event channels. In addition, Yospace’s prefetch system was applied to help the advertising scale at times of peak traffic. Prefetch assesses the capacity of ad servers and paces out ad requests accordingly to ensure that the adtech ecosystem has the time it needs to realise the highest possible value for each ad spot.
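The general idea of pacing requests to a downstream capacity can be sketched in a few lines. This is a generic illustration of rate-based pacing, not Yospace's prefetch implementation: the function name `pace_ad_requests`, its parameters and the fixed capacity figure are hypothetical, and a real system would measure capacity dynamically.

```python
def pace_ad_requests(n_requests: int, capacity_per_second: float, start: float = 0.0) -> list[float]:
    """Spread n_requests evenly so the ad server never sees more than
    capacity_per_second requests in any one second.

    Returns the send time (in seconds, relative to `start`) for each request.
    Hypothetical helper for illustration only.
    """
    if n_requests < 0:
        raise ValueError("n_requests must not be negative")
    if capacity_per_second <= 0:
        raise ValueError("capacity_per_second must be positive")

    # One request every `interval` seconds keeps the rate at the stated capacity.
    interval = 1.0 / capacity_per_second
    return [start + i * interval for i in range(n_requests)]


if __name__ == "__main__":
    # Five ad requests ahead of a break, against a server assumed to
    # handle two requests per second.
    print(pace_ad_requests(5, 2.0))
```

The trade-off is lead time: the lower the assumed capacity, the earlier requests must start before the ad break for every slot to be filled in time.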

“This was the 7th edition of the Games that Yospace has helped monetise. Each edition has brought greater audiences and a greater imperative to get the advertising right”, shares Tim Sewell, CEO of Yospace. “The huge streaming audiences we’re seeing mean that scalability of DAI technology, while delivering maximum value and a premium viewer experience, is more important than ever”.

Yospace is showcasing its Dynamic Ad Insertion technology, featuring Server-Side Ad Insertion (SSAI) and Server-Guided Ad Insertion (SGAI), at the NAB Show in Las Vegas, April 19–22, 2026.

Since 1986, Auditel has been Italy’s reference point for television audience measurement, providing viewing data for broadcasters and the wider advertising market. The company recently turned to creative agency Coo’ee Italia to create a campaign that would convey that role in an original way, Blackmagic said in a statement.

A simple metaphor drives the campaign created by Alessandro Tosatto: a typical Italian family portrayed in a stylized living room, watching programming from RAI, Mediaset and Sky played on a huge screen. “The idea was to let the screen content light the space and play across the actors”, Tosatto explains. “That was our way of showing Auditel in everyday Italian homes.”

DP Alessandro Zonin (AIC, IMAGO) shot the campaign on the Blackmagic PYXIS 12K digital film camera, with post production in DaVinci Resolve Studio. “The commercial was shot on an LED volume stage at STS Communication in Milan”, reveals Zonin.

Channel 9 Sydney Headquarters has presented its newly upgraded Studio A, an xR broadcast environment for sports programming and special broadcasts. Delivered by Creative Technology Australia, the installation is powered by ROE Visual’s Meru, featuring a 14 m × 3 m video wall, ROE Visual said in a statement.

The project was designed to create a broadcast space capable of supporting a wide range of programming for major sports and special broadcast presentations. With a large central display and extended visual environment powered by Meru, the studio aims to enable the in-house production team to build tailored visuals for each story.

The upgraded Studio A made its on-air debut during coverage of the 2026 Winter Olympics.

“The studio features one large hero screen and a world of possibilities around it. Each show can have its own unique studio environment, with storytelling enhanced by graphics for promotional material, player data, and sports highlights in a fully immersive experience for viewers”, explains Alex Rolls, Head of Creative and Innovation at Channel 9.

For this installation, Creative Technology selected Meru, an LED solution available in multiple pixel pitches and form factors, for integration into a permanent broadcast studio.

“This high-end product suits these kinds of installations perfectly. I hope to see it further integrated into broadcast studios around the world”, adds Jeremy Moore, Creative Technology Project and Account Manager.

“ROE Visual consistently delivers high-quality products and takes a genuinely collaborative approach to development. They listen to real-world feedback and use it to refine and improve their technology, which makes a real difference for teams like ours. Their technical support and after-sales service are excellent, and that’s why they remain a trusted partner for Creative Technology”, Moore continues.

Luc Neyt, Technical Director at ROE Visual, highlights the significance of the installation for the broadcast market: “ROE Visual and Creative Technology share a strong commitment to innovation and delivering high-quality solutions. Channel 9 Studio A perfectly demonstrates how Meru supports xR technology to enhance viewer engagement and content creation in the broadcast industry. We’re excited to see how our new fixed installation solution continues to provide outstanding experiences and long-term value for our customers”.

Gravity Media has deployed a remote production gallery to support ITV Studios’ MultiStory Media programming at its new Covent Garden facility in London. The solution is intended to enable the production and broadcast of 900 hours of live daily programming each year across three shows: Lorraine, This Morning and Loose Women, the company said in a statement.

The new studio, which launched in January 2026, features a 360-degree set with LED walls designed for rapid turnarounds between shows. Production has been built with sustainability at its core: a single remote gallery produces and broadcasts all three live daily programmes, as well as additional productions outside of MultiStory Media transmission.

The remote production infrastructure includes approximately 45,000 metres of video, audio, SMPTE, data and fibre cabling, supporting 13 technical equipment racks and nine wall boxes across 34 camera positions.

Gravity Media’s Remote Production service uses high-bandwidth, low-latency fibre, satellite, broadband and mobile network technologies to transmit footage in real time to Gravity Media’s Production Centre in White City, where engineering, editorial and creative teams focus on enhancing content for final delivery.

Phase 2 of the project, launching later in 2026, will build on this foundation by adding advanced graphics and finishing capabilities.

Kate Rendle, Director – Global Sales Director at Gravity Media comments: “Remote production allows us to deliver the broadcast quality ITV’s audiences expect, while reducing environmental impact and operational costs. By centralising production, we can manage multiple live feeds, maintain high technical standards and build greater resilience into daily live production”.

Lawo has announced the appointment of Jamie Dunn as Chief Executive Officer (CEO) of Lawo AG. Dunn joined Lawo in 2011 and has served as ‘Vorstand’ and Deputy CEO since 2024, the company said in a statement.

As CEO, Jamie Dunn will play a key role in shaping the continued development of the Lawo Group. He brings long tenure with the company, deep market understanding and global experience. Reflecting on his role, Jamie comments: “Lawo’s strength has always been rooted in its people, its partnerships, its values and its commitment to innovation. I am honored to lead the next chapter of success of this remarkable company. These are exciting times – and there are huge opportunities ahead for our company”.

As part of the transition, Philipp Lawo steps down from the ‘Vorstand’ of Lawo AG and joins the company’s Supervisory Board.

Lawo’s Management Board now consists of Jamie Dunn (CEO and ‘Vorstand’), Claus Gärtner (CFO and ‘Vorstand’), Andreas Hilmer (CMO), Christian Lukic (CSCO), Phil Myers (CTO) and Ulrich Schnabl (COO).

Michael Sonnabend, Chairman of the Supervisory Board, emphasizes the continuity in leadership: “These changes reaffirm our confidence in Lawo’s proven management structure and the strong collaboration within the Management Team. This transition ensures the company’s continued stability as we look to the future”.

Under its Management Team, the company has navigated global market challenges whilst accelerating its transformation into a market leader in IP- and IT-based audio and video media infrastructure solutions. “This Management Team has consistently demonstrated its ability to define, execute and deliver on the company’s long-term strategy”, Sonnabend notes.

With his transition to the Supervisory Board, Philipp Lawo will continue supporting Lawo’s development and ensuring that the values of the Lawo family remain at the heart of the company’s culture. As the sole ‘Vorstand’ of Lawo Holding AG, he will be responsible for the strategic direction of the entire Lawo Group, reinforcing the focus on long-term stability and sustainable growth.

Sonnabend further adds: “Our goal is to ensure that Lawo continues to build on its strong foundations while pursuing a sustainable and robust growth model. The Supervisory Board has full trust in Jamie Dunn and the entire Management Team. With their collective expertise and dedication, Lawo is ideally positioned for the next chapter of its journey”.

Jan Schaffner, Riedel’s new Vice President, Managed Technology Americas, and Lutz Rathmann, CEO Managed Technology

Riedel Communications has announced the expansion of its Managed Technology Division in the Americas and the appointment of Jan Schaffner as Vice President, Managed Technology Americas. The move aims to formalize the company’s market development strategy and reinforce its service solutions across sports and live production environments in the region, the company said in a statement.

Over the past years, Riedel’s Managed Technology Division has established a structured go-to-market approach in the Americas. As part of this strategy, Riedel has strengthened its local presence with personnel, project management, and infrastructure in North America, including an operational base in Los Angeles and an additional hub in Concord, N.C.

“We are here to stay, and we are investing in people, infrastructure, and long-term relationships across the Americas”, affirms Lutz Rathmann, CEO Managed Technology at Riedel Communications. “With Jan leading our efforts in the Americas, we have someone who understands both worlds (our technology ecosystem and the realities of the U.S. market) and is well-positioned to bring them together. Having already helped establish our Managed Technology activities in the region, he is the right person to continue building the business locally together with our customers and partners”.

Rather than competing with traditional rental providers, the Managed Technology Division focuses on service solutions and complex workflows that extend beyond classic rental models.

Schaffner joined Riedel in 2018 and has played a key role in developing the company’s Managed Technology activities in the Americas. Having most recently served as Program Manager responsible for the division’s regional expansion, he has contributed to multiple innovation initiatives across global sports leagues and live event environments. In his new role, Schaffner will lead the continued growth of the Managed Technology Division in the Americas, focusing on sustainable expansion, local market alignment, and operational excellence.

“We are building Managed Technology in the Americas in a way that reflects the realities of the market”, notes Schaffner. “Rather than copying models from other regions, we are working closely with customers and partners to develop solutions that match how the market operates and where it is heading”.

Riedel will be at the 2026 NAB Show in Las Vegas, April 19-22, booth #C4908.

By Miranda Rivero Domínguez, lawyer in the Entertainment and Sports Law Department at Montero Aramburu & Gómez-Villares Atencia

Artificial Intelligence (AI) has burst into our lives, redefining and transforming every aspect of them. No one can deny the significant impact that AI is having at all levels, being one of the fundamental pillars of what is known as the Fourth Industrial Revolution. In this context, despite the many advantages that the appropriate use of this technology offers, it is crucial not to lose sight of the major challenges we also face, particularly in the field of copyright, due to the legal issues that arise around the authorship of works generated by AI.

In response to these challenges, Regulation (European Union) 2024/1689 on Artificial Intelligence addresses the risks associated with the specific use of AI, but it does not resolve all the conflicts between AI and copyright.

In light of these circumstances, this article aims to present some brief reflections on the impact that AI is having on copyright.

1. A brief overview: copyright and AI-generated works

Traditionally, both the continental legal system and the Anglo-Saxon legal system establish that, in matters of intellectual property, copyright protection is granted to original works created by human beings. In this way, a work must meet two basic requirements: human authorship and originality, the latter understood as the personal effort or contribution of its author.

AI challenges this traditional conception. In practice, the main controversies concern the authorship of AI-generated works and the use of protected works to train AI systems.

If we take into account that the non-human nature of AI is unquestionable, the issue might seem resolved. Both doctrinally and institutionally, it is clearly recognised that, for the time being, authorship of a creation cannot be attributed to AI.

But what happens with works generated with the assistance of AI in whose creative process a human being has actively participated?


This question completely redefines the boundaries of copyright and is far from simple. However, it can be argued that the answer lies in the degree of human participation in the creative process.

The debate surrounding the authorship of works created by technology is not new. If we go back to the 1960s, the legal form of protection for software generated numerous doctrinal conflicts which, today, have been overcome through its protection under copyright.

Now it is the turn of AI, although there is still a long way to go before answers can be provided to the many questions arising in this field.

As noted above, what seems clear is that the key to protecting AI-generated works lies in the extent of human intervention in the creative process. This raises the question: what level of human intervention is required for an AI-generated work to be protected by copyright?

So far, the response from various offices, such as the Spanish Patent and Trademark Office (OEPM), the European Union Intellectual Property Office (EUIPO), and even the U.S. Copyright Office (USCO), has been negative. In general terms, protection is not granted to AI-generated works unless a significant human contribution can be demonstrated — one that is sufficiently relevant to rule out the possibility that the AI system created the work randomly and unpredictably.

A clear example of this is the application submitted to the USCO for the comic titled Zarya of the Dawn, whose images were created by Midjourney, one of the best-known AI systems. The USCO excluded the images generated by Midjourney from copyright protection because it considered that they were not the result of traditional human authorship, since the AI “independently determined the artistic expression.”


A different outcome was reached in the case of Invoke, also processed before the USCO, in which the image generated by an AI system was successfully registered. The key factor in this case was the extent and significance of human intervention within the creative process, which was not limited to simply giving an instruction. Instead, it was demonstrated that decisions were made using an interface that allowed a high level of artistic control.

In the field of the music industry, the controversy is also evident, as it is one of the main sectors in which artists most frequently rely on innovative and technological resources as part of their creative process. In the context of music, the response has also generally been negative. However, last October the associations American Society of Composers, Authors and Publishers (ASCAP), Broadcast Music, Inc. (BMI) and the Society of Composers, Authors and Music Publishers of Canada (SOCAN) announced that they will accept the registration of musical works partially generated by AI (only those parts of the composition that are the result of human authorship), while continuing to reject the registration of compositions generated entirely by AI.

3. The use of protected works to train AI systems

Another dilemma arises in relation to AI training data and its subsequent reproduction. This is particularly the case with Generative Artificial Intelligence (GAI), capable of creating content based on a set of pre-existing information and data.

It is well known that GAI is trained on such information, placing us in a scenario of real uncertainty — not only because of the clear violation of copyright that may arise from the use of protected works without authorisation or financial compensation, but also because of the significant risks to data privacy.

In this regard, the case Kadrey v. Meta in the United States is particularly relevant. In this case, thirteen authors filed a lawsuit against Meta alleging copyright infringement due to the unauthorised use of their works in training AI models. Although the issue seemed straightforward, the plaintiffs saw their claims dismissed under the doctrine known as “fair use”, according to which limited use of information protected by copyright is permitted without the authorisation of its holder in certain justified situations (research, academic purposes, etc.).

Clearly, it will be necessary to wait for the rulings of the competent courts in different countries in order to clarify the issue.

4. Recommendations for the protection of AI-generated works

Although there is still a long way to go, in the meantime it is necessary to establish certain guidelines and recommendations to mitigate the associated risks.

Based on this premise, one recommendation for companies is to use AI within the framework of a previously configured process, which should include human elements and actions that directly influence the final result through the use of visual and interaction elements capable of demonstrating artistic control over the work. In this way, the more original, detailed and precise the instructions provided to the AI, the greater the likelihood that the result will be considered an original creation of the user.


Likewise, it will be essential to minimise risks through the implementation of legal audits that assess both the use of AI and compliance with copyright regulations.

In this context, specialised legal advice will be essential, as it is recommended to formalise agreements that include specific clauses on the authorship of this type of work, ensuring a precise attribution of copyright in contracts with creators, developers and users of AI systems.

These issues are expected to be resolved in the coming years through the adoption of a specific regulatory framework, as well as through the responses provided by the various bodies, courts and legal operators in the sector. For now, however, the debate remains the subject of ongoing analysis and discussion.

From unified newsroom workflows to HTML graphics and AI-driven tools, Morwen Williams, Director of Media Operations at the BBC, explains to TM BROADCAST how the broadcaster is adapting for a multi-platform future, the strategic and operational challenges it faces, and some key insights for navigating this process

By Daniel Esparza

Large media organisations’ news departments are undergoing a profound process of transformation. As we have been analysing in TM BROADCAST, the shared objective —with all the nuances introduced by the circumstances of each corporation— appears to be moving towards a digital-first model.

This implies a gradual convergence of radio, television and digital newsroom teams, as well as the development of tools capable of unifying workflows, optimising resources and facilitating the exchange of content between teams. The logic behind this shift is clear: to take advantage of the synergies created when any piece of content can be made available to the entire newsroom and adapted for multiple formats and platforms, from streaming and broadcast to social media.

However, beyond strategic considerations, implementing this model in practice raises complex challenges. Not all formats work equally well in broadcast and online environments, and the fragmentation of audiences requires both narrative approaches and technological tools to evolve. In this context, technologies such as HTML graphics, new cloud-based editing systems and artificial intelligence applications are beginning to play a key role.

If we look for a major news organisation where these challenges can be observed in practice, the BBC is undoubtedly one of the first that comes to mind, given its scale and its influence on the global media ecosystem. To explore the issues and trends outlined above, we had the opportunity to gather some key insights from Morwen Williams, Director of Media Operations at the BBC.

“Standardising and simplifying”

In the case of the BBC, the ongoing transformation of newsroom workflows is being driven above all by a commitment to simplification, integration and cross-platform collaboration. From the perspective of Media Operations, this evolution is closely tied to the need to connect processes that have traditionally operated in parallel. “Standardising and simplifying our toolset is the big driver here,” explains Morwen Williams. “Working closely with colleagues in Technology and importantly listening to our journalists and their needs, we are starting to connect our workflows into one overarching space.”

“This will take us from plan and capture through to edit (on a single platform for various outlets digital and linear) and keeping the metadata through to archive,” she notes. The approach reduces friction within production processes and opens new possibilities for collaboration.

Alongside these structural changes, Williams highlights other pillars shaping the evolution of BBC News operations. As she explains, “it’s been in simpler workflows in our control room and a simplified presentation space for streaming, enhanced podcast and radio visualisation and more compelling studio looks for our audiences.”


Managing such an ecosystem is particularly complex given the BBC’s distributed operational structure, which spans multiple production hubs across the United Kingdom, including London, Salford, Birmingham and Bristol. Williams emphasises that while the organisation operates under a unified strategic framework, flexibility at a local level remains essential.

“There will always be local autonomy because of the immediate nature of lots of the work we do,” she explains. “And local decision making with our production partners needs to be dynamic.”

At the same time, the organisation maintains a shared strategic vision across its leadership teams.

“We have an overall strategy that we share among our leadership team – from our team leaders who are often operational to our more senior Head of Department. We try to meet up face to face several times a year, and leaders visit sites. We share regular updates to all this team in a 2-way nature to support them in their roles whatever and wherever that is based. But the overall model is a centralised strategy.”

Convergence between online and broadcast operations

Convergence between broadcast, radio and digital platforms has become a central theme across the media industry, and the BBC is actively progressing towards more unified newsroom structures. “This is certainly the overall aim, and one we are well on the road with”, she explains.

“It’s a much more effective and efficient way of working for the speed of output, and helps with career progression as teams could work across several platforms but using similar or the same tools to do so. We are not there yet, but it’s firmly in the action plan.”

In practice, the relationship between online and broadcast operations

is already much closer than in previous years. According to Morwen Williams, the integration begins at the editorial level. “The journalism and work underpinning both broadcast and online is closer than ever,” she says.


“The journalism and planning all works together. They sit alongside each other in our main London Newsroom.”

However, the final stages of content distribution still involve different tools and formats depending on the target platform. “Right now, we go to our audiences/ platforms via different tools in the final stage. That is something that might change to simplify even more,” she explains.

Even so, she stresses that platform diversity remains essential. “We need to recognise that some formats work better for broadcast and some better for digital audiences, including of course our social offerings (e.g. vertical video, short explainers). One size cannot fit all as audiences are extremely mature at knowing what they want to consume, and how and where to consume it.”

Technological development is playing a crucial role in enabling this convergence. One of the most significant initiatives currently underway is the integration of browser-based editing capabilities directly into the BBC’s media asset management environment. “We are in the final stages of integrating browser-based edit (Cutting Room) into our own MAM system, Jupiter Cloud for Jupiter Edit,” Williams explains. “This is currently in BETA and will go live around April. A single (or closely integrated) editing solution that supports digital publishing endpoints. The ability to share edits of content in the same space is crucial to the closer working between broadcast and digital, and turns us from a broadcast first news workflow, to a digital first one.”

Looking ahead, the BBC’s roadmap for media operations includes a broad set of technological priorities aimed at improving accessibility, traceability and efficiency across the entire media lifecycle.

“There is a list,” Morwen Williams notes when asked about the BBC’s priorities for the coming years. She outlines several key areas: “The ability to passively share any content anywhere; Comprehensive search and rich metadata; a restoreless video system with ‘use it, don’t move it’; a media stack with no silos, full visibility across teams, one click reuse, preserving editorial decisions (no flattened files); a tech stack that decreases TTA (time to air) and increases shelf life of each media asset; a video system that makes BBC video assets traceable, and a singular consolidated video storage that can act as bible and source of truth with lighter format and proper metadata design and that supports archiving in-place.”

Scaling graphics production

Graphics production is another area undergoing continuous evolution.


The BBC currently combines templated graphic workflows with bespoke design processes depending on the editorial needs of each production. “Workflows are designed for editorial and production staff to perform the most basic graphic design tasks i.e. creating a ‘tower’ or quote graphic for quick turnaround,” she explains.

Alongside these capabilities, the BBC has developed several internal tools to support graphics production at scale. “We have internal tools such as BBC Graphics which have been focussed originally on live graphics for streams, or rendering files for social. These new tools can be used on-premise or in the cloud, and at scale.”

One of the most significant technological trends in this area is the growing adoption of HTML-based graphics. “We're excited about HTML graphics and we're using them more and more in our workflows; we have them on-air every day and you'll see them on screen for the Elections,” Williams says. As part of this transition, the BBC is collaborating with the European Broadcasting Union’s OGraf initiative to develop open standards for exchanging graphics data.

The BBC’s move towards HTML graphics is closely linked to the broader transformation of the media landscape. As digital platforms continue to expand, the corporation sees HTML-based workflows as a more adaptable solution for modern content production. “Across the BBC we have been rolling out the use of HTML graphics, new workflows on BBC Sport use this and in BBC News we used HTML on most streams, the General and U.S. Elections,” Williams explains. “We are moving to a fully HTML workflow for lower third graphics in the coming months/year (Project Overlay)”.


At the same time, the BBC is playing an active role in shaping the technological standards that will underpin these workflows across the industry. “We’re also heavily involved in the EBU’s OGraf initiative, shaping the standards and specification of HTML media graphics with other public broadcasters and suppliers.” The rationale behind this strategy is clear. “The BBC is behind HTML graphics as the media landscape is changing with the growth of digital content. We needed a more flexible, scalable, cost-effective way of telling the audience the story.”

Immersive visual storytelling technologies are also playing an increasing role in the BBC’s editorial output. Augmented and virtual reality tools are already integrated across multiple platforms and formats.

“We use AR and VR across all our platforms and output,” Williams confirms. “We’ll be using this in a newly relaunched ‘Trending’ on BBC Arabic but we often use these tools for major event production including the General Election, the World Cup and when we’re required to tell complex stories that require immersive graphics like explaining what happened with the Titan Submersible in 2024.”

And we couldn’t miss the opportunity to ask Morwen Williams about artificial intelligence. AI is certainly another area where the BBC is experimenting with practical applications within production and editorial workflows. “We are currently piloting three AI production workflows,” Williams explains.

One of them, Style Assist, “allows us to publish more stories from the Local Democracy Reporters Network by adapting them into BBC style draft articles, which are then edited, checked and published by our journalists.”

Another initiative focuses on live sports coverage. “It helps audiences keep up with their football teams' matches on the go by using our world-class EFL commentary to add expertise and texture to our live pages on the BBC Sport app, all overseen by journalists to ensure the highest quality and accuracy.”

“We use AR and VR across all our platforms and output,” Williams says. The images show some examples.


The third pilot project, My Club Daily, explores the creation of audio bulletins for football teams using synthetic voice technologies, “making a one-stop-shop audio bulletin for EFL teams by using synthetic voice to read scripts sourced from articles on the Sport app, delivering content to come back for every day, all overseen by journalists and audio producers,” Williams concludes.

We spoke with Gianluca Latini, Head of the TV Production Centre in Milan, to understand how RAI approached the production of the Games from its Milan headquarters and what lessons this experience offers for the future of large-scale event production. Remote production was the central pillar of the project

The Olympic Games are always one of the major milestones in the global television calendar. The scale of the production effort is reflected in audience response: this year’s Winter Games recorded record levels of engagement among broadcasters within the European Broadcasting Union (EBU). In the host country, Italy, the impact was particularly notable. Two out of three viewers followed the Games’ coverage through RAI, a proportion even higher than that recorded during Paris 2024.

At the centre of the Olympic production ecosystem stands Olympic Broadcasting Services (OBS). Since replacing the traditional host broadcaster model after the Sydney 2000 Games, OBS has profoundly transformed the way Olympic television coverage is conceived and produced. Rather than reinventing the entire production ecosystem at every edition, the Olympic movement now relies on a permanent host broadcaster, equipped with a long-term technological roadmap capable of evolving from one Games to the next.

The innovations deployed this year were already analysed in a previous issue of TM BROADCAST, based on insights from OBS CEO Yiannis Exarchos. On this occasion, we aim to offer a complementary perspective by focusing on the public broadcaster of the host country. Through an in-depth conversation with Gianluca Latini, we explore how RAI approached the production of the Games from its Milan headquarters, and what lessons this experience may hold for the future of major event production.

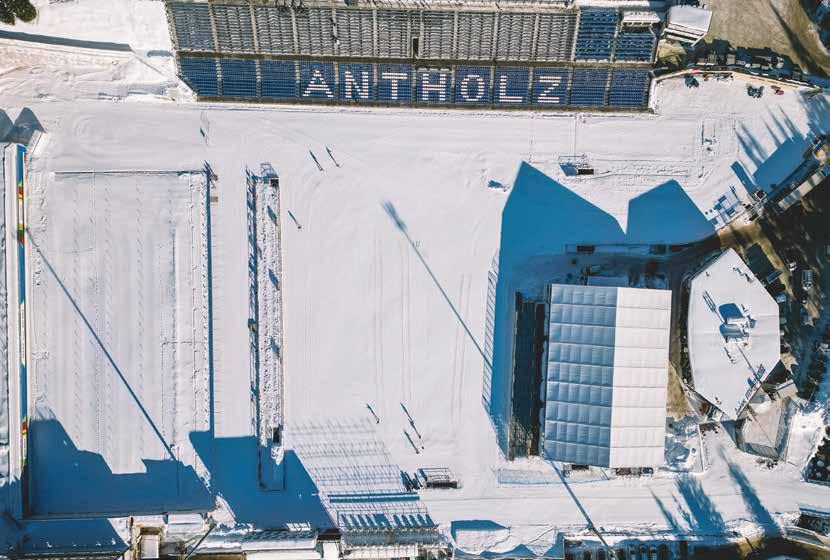

RAI’s Milan headquarters played a central role in the broadcaster’s coverage of the Winter Olympic Games. With around 650 people working in the building, it is the second most important RAI facility in Italy — and during the Games it effectively became the technical hub of the public broadcaster’s Olympic production.

For Gianluca Latini, this position was the result of several converging factors. The first was the role of the city itself within the organisation of the Games. “In Milan, as you know, we host all the ice sports, so it was a fundamental site for the Olympic Games”.


The second reason is related to the broader context of the Milano-Cortina Games, which reinforced the city’s importance. While Cortina is a small mountain town, Milan is a major metropolitan centre that became deeply involved in the overall Olympic operation. The third key factor was geography. “We are very close (around two kilometres) to the IBC, where the ‘palace of television’ for the Games is located”, Gianluca Latini explains.

When these elements are combined, the result becomes clear: Milan became the focal point for many operational activities surrounding the Games, even those not directly related to the competitions themselves. “Everything that happened before, and of course during, the Olympic Games made it clear that the Milan headquarters of RAI had to become the centre of our technical project for the Olympics”, he concludes.

The production of the Games presented significant operational challenges for the Italian public broadcaster. “The first challenge was the responsibility of being the national public broadcaster in Italy, which meant providing very strong coverage of the Winter Olympic Games,” Latini says.

The second challenge was financial. “We didn’t have the same budget we had in the past, for example during Torino 2006. That was probably the most difficult aspect of the project: we had to deliver essentially the same level of coverage, but with lower costs”.

This constraint forced RAI to rethink its production model, with remote production becoming the central pillar of the strategy. “In practice, this meant having fewer people on site, both because of the logistical challenges and because of the cost of hotels”, he explains, noting that many of the competition venues were located in small mountain towns where accommodation capacity was limited.

As a result, the production team decided early on to minimise the number of staff deployed to the venues. “Our first decision was to try to have as few people as possible on site in the mountains and to rely mainly on remote production.”

A further challenge was ensuring that cost reductions did not translate into a perception of reduced coverage for viewers at home. “We didn’t want to reduce our presence too much,” Latini says. “We still wanted viewers at home to feel that this was a major broadcast operation.”

In this context, he highlights the role played by Olympic Broadcasting Services (OBS), the host broadcaster responsible for the international feed. “They did a really special job with drones, special images and many other elements. I know Yiannis [Exarchos, CEO of OBS] very well, because over the past year we have had the chance to work together, and he was very helpful in giving me the possibilities I needed.”

One example of this collaboration was the selection of the main studio location in Milan. “I wanted to have a very nice studio in Milan, in a particular location. I didn’t want it to be in Piazza Duomo, because that is where all the television networks usually build their studios. I wanted a different point of view of Milan”.


The solution was found at the Arco della Pace, near the Olympic Court. “With the help of OBS, we found the right place at the Arco della Pace, where the Olympic Court was located. So I placed my studio right in front of it, in a very distinctive part of the city”, he explains.

The decision also brought financial advantages. “A studio in Piazza Duomo would have been quite expensive, while our studio at Arco della Pace cost significantly less”.

From a technological perspective, the project relied heavily on remote production infrastructure connecting the IBC with RAI’s Milan headquarters. “From the IBC to the RAI building in Milan, I asked to have dark fibre connections,” Latini explains. This approach provided major operational advantages. “It gave us many possibilities and meant that we did not need to take up much space inside the IBC, which, as you know, is quite expensive.”

At the IBC, RAI installed only a small operational room acting as an interface between its infrastructure and the OBS production systems. “That way we could clearly understand where a possible signal problem might occur,” Latini says. “For example, if we didn’t receive one of the feeds, we could check whether the issue was on the OBS side or on our side. Having that interface allowed us to identify the problem immediately. In practice, it was just a small room: a signal control room receiving the feeds and sending them back to us.”

The use of dark fibre also ensured a highly reliable connection. “It was not a standard connection, which can be more difficult to stabilise. Dark fibre is much more reliable. In fact, we never had any problems, and it was also cheaper than building everything inside the IBC”, Latini highlights.

The real production centre was located inside RAI’s headquarters in Milan. “We dedicated an entire floor of the building, the fourth floor, which we called the ‘Olympic floor’,” Latini explains. The space hosted two identical production galleries. “One was dedicated to Rai 2, which was the Olympic channel for RAI, broadcasting the competitions throughout the day. Rai 2 is one of RAI’s main channels. The second gallery was entirely dedicated to the Rai Sport channel.”

All production resources were connected through a single technical infrastructure. “All these systems were based on a single matrix. All the resources were shared for everything we had on that floor. That included editing, the two galleries and all the other operational areas”.

This configuration allowed RAI to manage simultaneous competitions effectively. “When two races were happening at the same time, and both were important (for example, one could be a major race in Cortina and the other important because an Italian athlete was competing), we could broadcast both: one on Rai 2 and one on Rai Sport.”

The facility was designed not only for the Olympics but also as a long-term production hub for future events. “Of course, in the future we will not have that dark fibre connection, so we will rely on IP connections, but the concept will remain the same”, Latini notes. “The idea is that we will not go where the signals are; the signals will come to where we are.”

RAI intends to apply the same approach to upcoming international productions. Latini specifically mentions the future FIFA World Cup in the United States, Canada and Mexico. “In that case we might have two channels, one on Rai 2 and another on Rai Sport (not necessarily all day long, because there are not enough football matches for that, but for the main match and the second most relevant one)”.

In this model, Milan becomes the permanent production centre. “The idea is to use Milan as a kind of standard production hub: today for football matches, tomorrow for the European Athletics Championships, then for the Swimming World Championships or European Championships. Everything will come from remote locations into this floor, which will become the central production structure.”

The layout of the production space also plays an important role. “Everything is on the same floor: offices, production areas, editing. This is important because all the people work together in the same place. The main concept is to have an operational area that is always ready to work at any time.”

The infrastructure is also designed for flexibility. “All the equipment is mounted on wheels. So if for six months we don’t need the full setup, we can move the equipment and use it elsewhere — for example, in Sanremo or other major events in Italy produced by RAI. Nothing remains unused. The cabling is permanent, so when we need to reactivate the system we simply reconnect the equipment and everything is ready again.”

“This saves time, saves money at the IBC and reduces the number of people needed on site. This is the main concept that is changing the production of large events,” Latini concludes.

The remote production model also influenced RAI’s on-site presence at the competition venues. “The same approach applied to external connections. At each venue we only had one camera in the mixed zone, because we decided not to build studios on site, mainly for cost reasons and also because of the logistical difficulties of bringing people there,” Latini explains. Most editing work was carried out on the Olympic floor in Milan.

Journalists working at the venues transmitted their material via mobile networks using SIM-based transmitters. “With a new system, the journalist could open their own computer and see exactly what the editing technician was doing on the editing station. They could say, for example, ‘Please use this shot’ or suggest changes in real time,” Latini explains.

In practice, this meant that almost all editing operations were handled remotely. “In practice we did not need editing technicians on site — only one as a backup, who was also a cameraman in the mixed zone. If necessary, he could edit from the cabin, but in reality we did all the editing in Milan”.

“The camera signal from the mixed zone was also sent here remotely,” Latini continues. “We asked OBS to send us the feed directly, and we used it here exactly like a studio camera, as if it were physically connected to the studio. The same applied to all the venues — Cortina, Livigno and the others.”

The Arco della Pace studio itself operated almost entirely remotely. “We only had a cameraman and one technician there to provide microphones and earpieces for journalists and guests. Everything else was done remotely. The camera was connected directly to the studio in Milan, so the production team could communicate with the cameraman, manage tally signals and operate everything exactly as if the camera were physically connected.”

RAI applied the same approach to other locations in the city. “We did the same at Casa Italia in Milan. That was also a remote position, with only two or three technicians: a cameraman and one or two technicians to manage the logistics of guests and interviews”.

“In this way we had two studios showing Milan live: one at Arco della Pace and another at Casa Italia, which was located at the Triennale, another iconic location in the city.”

Additional studio facilities were also prepared inside the RAI headquarters itself. “Inside the RAI building we prepared three additional studios,” Latini says. “One small studio for news; another slightly larger one that could be used for different situations — for example if the connection with the IBC failed or if weather conditions stopped a race for some time”. These spaces functioned primarily as backup environments.

A third studio was designed for larger productions.

“The third studio was much larger and used for post-race coverage. Many guests came there, and it allowed for full production with replay systems and editing after the competitions.”

In events such as the Olympic Games, the international feed produced by OBS sets an extremely high production standard. As a result, the room for differentiation by national broadcasters is often limited.

“The Winter Games are not like a football match,” Latini says. “In football you can place many cameras. You want to see your coach, your number 10, the penalty area, because anything can happen. You have many possibilities to add cameras and create different angles”.

Key personal takeaways on the remote production model

“Sometimes only when you are forced into a certain situation do you discover the best solutions”

“In the end it worked very well. We had no signal failures, nothing happened, and the costs were reduced by a significant percentage”

“The idea is that we will not go where the signals are — the signals will come to where we are”

“This is the main concept that is changing the production of large events”

“In winter sports it is not exactly the same,” Latini continues. “Take hockey, for example. In Italy we don’t have such a strong national involvement that would justify placing additional cameras just to follow one particular player. It’s not like football for us”.

The limitations are even clearer in disciplines such as alpine skiing. “If you want to add a camera on a ski course, you have to run cables across the mountain,” he explains. “That is very expensive — and the athlete passes in front of the camera for just a few seconds.”

Given the extremely high quality of the OBS production, additional investments do not always make sense. “For me it is not really necessary to add something very expensive that would not give you a significantly different point of view. That is why I did not feel the need to deploy our own cameras”.

“That is also why we decided to place cameras only in the mixed zone, because that is a real editorial position where you can offer interviews and interact with the athletes. But beyond that, it didn’t make much sense”.

This philosophy also extends to the way RAI approaches studio locations during major events. While many broadcasters aim to build visually striking studios directly at the venue, Latini believes the balance between editorial value and cost must be carefully considered. “Of course, I would have loved to have a studio in Cortina. That was actually my dream. But the cost was simply too high, so in the end we decided not to do it”.

He argues that this dilemma is not unique to the Winter Games and will likely become even more relevant for future global events — for example, the Football World Cup in the USA. For Latini, the solution lies in combining remote production with more flexible visual environments. “We prefer to have the studio here, with a very nice LED wall behind it, where you can display beautiful images from New York or wherever you want. For viewers at home it is almost impossible to tell that you are not physically there but actually in Milan”.

At the same time, a minimal presence at the venue can still preserve the sense of immediacy.

“Then you can have a very small position inside the stadium — two people, a very small studio — just to give the feeling of being present at the venue. This is the direction we want to follow in the future for our productions.”

The key lesson

Looking back at the project, Latini identifies several important lessons. From a production perspective, he is unequivocal about the quality delivered by the host broadcaster. “If I were a broadcaster anywhere in the world, I would like to have the same level of quality that OBS delivered for this event. I think they produced a really special broadcast for these Winter Games”.

For RAI itself, however, the most important takeaway concerns remote production. “We were forced to adopt remote production because we simply didn’t have the possibility to do everything on site, mainly because of the costs and the particular geography of the mountain venues.”

So there are two lessons he took from this experience. “The first lesson is that we will try to use remote production much more in the future.”

The second lesson is more philosophical. “Sometimes only when you are forced into a certain situation do you discover the best solutions.” Without the budget constraints, RAI might never have adopted such an ambitious remote production model. “We might have said: yes, we could try remote production, but maybe it is not possible, maybe it is strange, maybe people would not be happy because the journalists are not on site, or because there could be signal issues”.

In practice, however, the experience proved highly successful. “In this case we had to do it, and in the end it worked very well. We had no signal failures, nothing happened, and the costs were reduced by a significant percentage.”

“For me,” he adds, “this is my main personal takeaway from these Winter Games”.

“If I were a broadcaster anywhere in the world, I would like to have the same level of quality that OBS delivered for this event”

Karen Chupka, VP of Global Connections and Events at NAB:

“This year the Show will feature roughly twice as many AI-focused exhibitors as last year”

The executive behind the iconic global trade show held each year in Las Vegas (April 18–22) joins Industry Voices to break down the key developments for this year’s edition, the elements that make NAB Show distinctive, and the trends shaping the evolution of the industry: “The challenge is integrating new tools without disrupting existing operations”

It is not only about hearing about isolated trends, but about testing them in real-world environments and within an interconnected ecosystem. This is one of the ideas Karen Chupka, VP of Global Connections and Events at NAB Show, repeatedly emphasizes — and one of the aspects that, in her view, makes an event like NAB Show truly distinctive.

With the upcoming edition of the event approaching (April 18–22, 2026, at the Las Vegas Convention Center, USA), we have the opportunity to welcome her as this month’s guest in our Industry Voices section.

In line with what other professionals who have appeared in this space have noted, the debate among industry players is no longer whether technologies such as IP, cloud or artificial intelligence can be integrated into companies’ workflows, but how to do so. In this context, Chupka highlights the value of the event: “The Show is one of the few places where the entire ecosystem can examine how those pieces fit together.”

“AI, cloud and IP workflows are increasingly part of the same production architecture, and the Show is one of the few places where the entire ecosystem can examine how those pieces fit together”

Among these technologies, artificial intelligence stands out — as expected. Chupka illustrates this with a telling figure: “This year the Show will feature roughly twice as many AI-focused exhibitors as last year, which reflects how quickly AI tools are moving from experimentation into everyday production workflows.”

Another key element she emphasizes is NAB Show’s ability to bring together the entire industry. “The Show has deep roots in broadcast engineering, but today it spans the full lifecycle of media, from content creation and production to distribution and monetization.” In this regard, she adds: “Streaming platforms, technology providers, sports organizations, creators, postproduction teams and enterprise media groups are all part of the same conversation. That range creates a setting where discussions about technology, storytelling and business models happen side by side.”

In a conversation we held around this time last year, Chupka pointed to sports and the emergence of new professional profiles entering the sector as areas of growing interest for NAB Show. Those themes remain fully relevant this year, although — as she explains — they are part of a broader shift: “Those themes absolutely continue, but they’re part of a broader picture where the media ecosystem is becoming more interconnected than ever.”

Attendance projections for this year’s edition, the economic impact of the event and the main highlights of the program are also among the topics addressed in this conversation. Below is the interview.

First, what are your expectations for the upcoming edition of NAB Show?

The overall expectation for the 2026 NAB Show is that it will reflect just how quickly the media and broadcast technology landscape is evolving. Across the industry we’re seeing rapid changes in production workflows, distribution models and the tools used to create content.

“Instead of reading about technologies in isolation, professionals can see how systems interact, compare approaches and talk directly with the companies building them”

That momentum is already showing up in the numbers. We’re projecting roughly 60,000 attendees from around 160 countries and about 1,100 exhibitors, including roughly 125 companies exhibiting for the first time. Registrations are pacing well ahead of the last few years, which suggests people are eager to come together and see how these technologies are actually being applied.