CXO TRAIL INSIGHT

This issue of CxOTrail Insights magazine explores the pulse of innovation, resilience against growing threats, and visionary leadership shaping a secure digital future for a continent on the rise.

Cast aside any preconceived notions. The digital landscape across Africa is not merely catching up; in many ways, it's defining new pathways. With this accelerated digital transformation, however, comes an equally rapid evolution of cyber threats. Our in-depth reports reveal that the notion of Africa as a less-targeted region is a dangerous misconception. The data presented in these pages, drawn from the latest INTERPOL assessments and regional analyses, paints a clear picture: Africa is firmly in the crosshairs of sophisticated cyber adversaries.

In this issue, we dive deep into the specific threats plaguing the continent. You'll find startling statistics on the prevalence of phishing, ransomware, and Business Email Compromise (BEC) that are draining billions from African economies annually. We explore how sectors like telecommunications, government, and finance are grappling with these challenges, often with limited resources but boundless ingenuity.

But this isn't a story of vulnerability alone. Far from it. This issue celebrates the architects of Africa's digital defense. We bring you exclusive interviews with Chief Information Security Officers (CISOs) from leading organizations across the continent. These are the unsung heroes navigating complex threat landscapes, building resilient infrastructures, and fostering a culture of cybersecurity awareness from the ground up. Their insights offer a rare glimpse into the practical realities, innovative strategies, and sheer determination required to protect digital assets in a rapidly expanding and diverse ecosystem. You'll hear firsthand about their triumphs, their biggest hurdles, and their vision for a continent where digital trust is paramount.

What makes Africa's cybersecurity journey unique is its opportunity to overcome traditional security models. With a young, tech-savvy population and a spirit of entrepreneurial innovation, there's immense potential to embed security by design, harness emerging technologies like AI for defense, and cultivate a robust, localized talent pool. However, this potential is currently challenged by significant gaps in capacity, legal frameworks, and international cooperation, as our statistics section rigorously details.

This issue is a call to action, an ode to innovation, and a testament to resilience. It’s an essential read for anyone invested in the future of cybersecurity, understanding that the lessons learned on the digital savannah of Africa hold relevance for the entire connected world. Join us as we explore the threats, celebrate the defenders, and envision a secure, prosperous digital future for Africa. Enjoy the read,

Anabel Emekene, Editor

Published by:

The forum, co-hosted by the United Nations Secretary-General, brought together heads of state, CEOs, and global investors to cement Africa’s position at the heart of global innovation, powered by massive new commitments in AI and finance

The most striking announcement came from Zimbabwean billionaire Strive Masiyiwa, Founder and Executive Chairman of Econet Global and Cassava Technologies. He revealed that work is underway to establish Africa’s first network of AI factories.

These facilities, powered by NVIDIA GPUs, are slated for completion by the end of 2026. This landmark project is more than an infrastructure upgrade; it’s a foundation for homegrown innovation, providing the crucial computing power necessary for African stakeholders to develop local AI solutions tailored to the continent’s most pressing challenges in health, agriculture, and finance.

Further bolstering the digital ecosystem, Meta, represented by Vice President Kojo Boakye, signaled upcoming investment opportunities in Africa’s tech sector, a powerful vote of confidence in the continent’s rapidly accelerating digital and AI potential. These initiatives signal a decisive shift toward locally led, long-term solutions designed to make Africa a creator of advanced technologies.

The dialogue at Unstoppable Africa 2025 wasn’t solely focused on tech; it also highlighted a monumental push to mobilize internal African capital for long-term growth.

In the financial services sector, the Africa Finance Corporation (AFC), in partnership with African Pension and Social Security Institutions, launched the Africa Savings for Growth initiative. This continental drive aims to channel Africa’s vast institutional savings into productive, inclusive, and long-term infrastructure and development projects. The initiative is built on a key finding from the AFC: African institutional assets total at least $1.17 trillion, a significant portion of which is currently allocated to short-term, low-yield instruments. By shifting this capital, Africa can fuel its own economic transformation and global competitiveness.

Action Pathways on Health and Digital Resilience

The forum cemented its focus on tangible outcomes by launching two new GABI Action Pathways centered on Digital Transformation and Healthcare. These pathways are designed to connect businesses, governments, and innovators to accelerate work in sectors critical to Africa’s resilience:


Exhibitors cite strong investor leads and international visibility as the inaugural event brings in participants from 78 countries

The debut of GITEX NIGERIA brought a global spotlight to Nigeria's digital economy, with international exhibitors and investors confirming strong engagement and immediate business opportunities.

Held under the patronage of H.E. Bola Ahmed Tinubu GCFR, President of the Federal Republic of Nigeria, GITEX NIGERIA ran from 1-4 September across Abuja and Lagos. The event was supported by the Federal Ministry of Communications, Innovation and Digital Economy in collaboration with the National Information Technology Development Agency (NITDA), endorsed by the Lagos State Government, and organised by KAOUN International, global producer of GITEX events.

The Hon. Olatunbosun Alake, Commissioner, Innovation, Science & Technology, Lagos State, said: “In three short days, GITEX NIGERIA has already had a meaningful impact on our nation, from startups seeking funds and exposure with global investors to international organisations discovering the vast growth opportunities within our digital economy. This annual event will continue to grow, have a long-term contribution to Nigerian digitalisation, and show the world the power of international collaboration.”

International participants highlighted the quality of engagement with decision makers and the value of Nigeria as a market. Abdelaziz Saidu, Country Leader at Cisco Nigeria & Ghana, said: “GITEX NIGERIA has been amazing - the crowd has been overwhelming, not just in size but in the quality of people coming to our stand, including the Lagos State Governor and the Minister, who were impressed with our AI and cyber security showcases. From day one we've generated strong leads, some already converting into opportunities, and engaged with organisations like the African Union. The brand reputation of GITEX has pulled in the right crowd locally, regionally and internationally, making this inaugural edition truly impactful.”

The event hosted dual platforms in Lagos - the GITEX NIGERIA Tech Expo & Future Economy Conference at the Eko Hotel Convention Centre and the GITEX NIGERIA Startup Festival at the Landmark Centre. Together, these platforms provided an international stage for Nigerian startups, investors, and corporates to connect, build partnerships, and explore the country's digital growth potential.

As West Africa's largest tech and startup show, GITEX NIGERIA also featured the country's most internationally diverse investor programme, facilitating meetings between startups, corporates, investors, and government stakeholders to advance cross-border collaboration.

The event was supported by international tech companies and organisations, including AWS, the International Finance Corporation (IFC), IBM, Meta, United Nations Development Programme (UNDP), Kaspersky, Space42, Microsoft, and NVIDIA.

GITEX NIGERIA puts a global spotlight on West Africa as government and global tech leaders back Nigeria's digital future

The interest in AI extends beyond academic assistance, with a growing curiosity about the technology’s fundamentals.

New data from Google Search trends shows a dramatic shift in how Nigerian students are approaching their education, with a significant increase in the use of Artificial Intelligence for academic support. As students return to school, search interest for “AI + studying” has surged by over 200% compared to last year, highlighting a growing reliance on AI-powered tools as a primary learning resource.

This trend is part of a broader rise in AI curiosity across Nigeria, where overall Google Search interest in AI has grown by 60% in the last 12 months. Nigerians aren’t just curious about the technology itself, but are actively looking for ways to integrate it into their daily lives. Searches for “AI + lessons” have also increased by 60% over the past year.

AI’s Impact on Academic Disciplines

Students are applying AI to a wide range of subjects, seeking practical help with specific challenges. This is reflected in the remarkable growth of searches combining AI with various academic fields:

The nature of these queries shows a demand for hands-on solutions. For example, top math-related searches include “what is the best AI in the world for solving mathematical problems” and “how to use AI to solve math problems.” Similarly, students are looking for “AI tutor for students,” “free AI tools for studying,” and “useful AI prompts for studying.”

The interest in AI extends beyond academic assistance, with a growing curiosity about the technology’s fundamentals. Trending questions like “how to use ai” (+80%), “what is the full meaning of AI” (+80%), and “who is the father of ai” (+70%) have all seen a significant rise.

As students use AI for their work, they are also becoming more aware of the associated ethical considerations. Searches for “AI detection” have seen a massive 290% increase in the past 12 months, indicating a growing conversation around academic integrity.

Students are looking ahead to future careers. “Generative AI” emerged as a breakout search term, often appearing alongside searches for “professional certification.” This suggests that young people are not only using AI to excel in their current studies but are also actively building skills to prepare for the future workforce.

Google’s Commitment to Empowering Learners

According to Olumide Balogun, West Africa Director at Google, “It’s inspiring to see Nigerian students so eagerly embrace AI to support their learning journeys. This back-to-school season, the data shows that students are not just using AI for answers, but as a tool to deepen their understanding of complex subjects. This curiosity is key to fostering a new generation of innovators, and we are committed to providing tools that empower them to learn, grow, and succeed.”


GEORGE NJUGUNA

Cybersecurity and AI Governance Leader

“As Kenya’s Global Ambassador for Responsible AI, I’m working to ensure AI is built and secured with transparency, accountability, and people’s rights at the center.”

From Nairobi to the continent, Njuguna is shaping Africa’s cyber future through responsible AI, resilience, and talent development

George Njuguna is a dynamic cybersecurity and AI governance leader driving digital trust across Africa’s financial, telecom, and regulatory sectors. He translates global standards like PCI DSS, SWIFT, ISO, NIST, SOC2, GDPR, FedRAMP, COBIT and ITIL into enterprise resilience strategies that support innovation and compliance.

As Kenya’s Global Ambassador for Responsible AI, he advocates for secure and ethical AI adoption aligned with local context and global accountability. George advises banks, governments, telcos, regulators, and businesses on digital transformation, information and cybersecurity, risk, data privacy, and emerging threats and technologies. Through Africa Cyber Defenders, he has mentored hundreds of emerging professionals, while contributing to high-level policy and boardroom conversations. As a respected speaker and panelist on global cybersecurity and AI forums, he continues to shape resilient, inclusive, and future-ready digital ecosystems across the continent.

In this exclusive interview, George Njuguna unpacks how African enterprises can align cybersecurity with business goals, harness AI responsibly, and build digital trust in an era of rising risk.

Q&A with George Njuguna

Q: From intern to influential leader, what inspired your cybersecurity journey, and how did Kenya shape your early perspective on digital trust?


A: Growing up, I was driven by a strong desire to create solutions that could meaningfully address societal challenges. I didn’t yet know what form that would take, but I had a natural curiosity for how things worked, especially electronics. This inquisitive nature, paired with a love for learning, became the bedrock of a journey that would eventually lead me into cybersecurity.

My formal path began at Jomo Kenyatta University of Agriculture and Technology (JKUAT), where I pursued a Bachelor’s degree in Information Technology (BSc IT). At JKUAT, beyond the theory, I became increasingly fascinated by how technology could be applied to solve real-world problems, especially those affecting everyday people and institutions. During my university years, I had the opportunity to intern at the Communications Authority of Kenya (CA), which gave me a foundational understanding of the tech regulatory landscape. The Serianu Cybersecurity Immersion Program (SCIP) exposed me to real-world cybersecurity challenges, particularly in the financial sector, where digital trust isn’t just important, it’s existential. I learned how breaches, fraud, and weak infrastructure don’t just compromise data; they compromise confidence. Cybersecurity wasn’t just a technical field; it was a mission-driven profession. It was about enabling societies, communities, and the continent to move forward confidently in the digital age.

Through SCIP, I also attended the inaugural ISACA Kenya InterVarsity Bootcamp at the United States International University (USIU-Africa), thanks to the sponsorship of Serianu and the support of Mr. William Makatiani. That spirit of contribution inspired me to volunteer with ISACA, particularly within the Kenya Chapter, where I’ve continued to serve in various capacities for nearly a decade. I also led the leadership segment, conducting interviews with notable figures such as Dr. Nancy Onyango (then Director of Internal Audit at IMF), George Njuguna (then CIO, Safaricom), Joan Mburu (CISO, Airtel), and Dr. Vincent Ngundi (Director of Cybersecurity & Head of the National Cybersecurity Centre at CA). These conversations were more than inspiring; they were formative.

I’ve been privileged to work with organizations like Craft Silicon, Silensec, and now CYBER RANGES, where I engage with clients across multiple sectors, from banking and telecoms to national cybersecurity agencies, supporting capacity building, resilience, and digital trust across Africa. Reflecting on my journey, Kenya has been both the training ground and the launchpad. It is a country where digital innovation runs deep, and so do the risks. As we continue to accelerate digital transformation across Africa, we must remember that cybersecurity is not just about defense; it is about enabling progress. And that’s the responsibility that continues to inspire me: to protect not just data, but the future we are building together.

Q: You’ve led cybersecurity programs across 5+ African countries and advised banks, telcos, and regulators. What are the biggest governance gaps you’ve seen and how do we close them?

A: From my experience across multiple African countries, the biggest governance gap isn’t just about policies or technology; it’s about how disconnected and uncoordinated our cybersecurity efforts remain. Many organizations, regulators, and industries operate in silos, which creates blind spots and leaves critical digital infrastructure vulnerable. Another challenge is the lack of investment in building local expertise and sustainable capacity. Without skilled people on the ground, even the best frameworks stay on paper. A crucial missing piece is effective cyber threat intelligence sharing: when organizations don’t collaborate or share insights, it’s harder to anticipate and respond to emerging threats collectively. Closing these gaps means building stronger partnerships across governments, regulators, and industry, establishing clear roles and accountability, and investing deeply in local talent development.

Q: What does “cyber resilience” mean to you at a boardroom level and how do you translate technical risk into executive language that drives decisions?

A: Cyber resilience, at a boardroom level, means the organization’s ability to prepare for, withstand, and recover from cyber disruptions without losing business continuity or stakeholder trust. It’s not just about preventing attacks; it’s about how quickly and effectively we respond, adapt, and keep the business running when incidents happen. When engaging executives, I focus on translating technical risks into business impact, for example: “This vulnerability puts customer data at risk, which could lead to regulatory fines, reputational damage, or service downtime.” My goal is to make cyber a business conversation, not just a technical one, aligning security priorities with strategic objectives and helping leadership see it as a value enabler, not just a cost center.

Q: With CIMSA, CDPO, CSCSO, CAIP, CC, ISO 27001, ISO 27034, ISO 27035, SOC2 Analyst, ISO 42001, PCI DSS and GDPR under your belt and now finalizing your C|CISO and CISSP, how can African enterprises move from compliance-driven to culture-driven security?

A: Compliance is a good start, but it’s not enough. I’ve seen many organizations achieve ISO 27001 or PCI DSS certification, yet still struggle with day-to-day security behavior. To move from compliance-driven to culture-driven security, leaders need to own the message. That means making cybersecurity a shared responsibility, not something left to IT. It also means embedding security into daily operations through awareness, user-friendly controls, and recognition of secure behavior, just as we do with safety in other industries. Regulations like Kenya’s Data Protection Act and GDPR help build the foundation, but what sustains it is empowered people who understand the “why” behind security.


Q: You’ve mentored 200+ professionals through Africa Cyber Defenders. What’s your message to young cyber talent trying to break into the field today?

A: To young people trying to break into the field, I always say: start where you are, use what you have, and stay curious. You don’t need to know everything; just be willing to learn, build, break, and grow.

The truth is, the field needs your perspective, your creativity, and your hunger. Whether you’re writing code, analyzing logs, or helping people understand risk, you are part of something bigger: protecting trust in a digital world. I also remind them that no one walks this journey alone. Communities like Africa Cyber Defenders, ISACA, AfricaHackon, GCRAI, eDigital Community, and KCSFA, among others, exist for a reason: to learn together, support one another, and lift as we climb. So, stay grounded, stay hungry, and never underestimate what you bring to the table, because the continent needs you.

Q: As Kenya’s Global Ambassador for Responsible AI, where do you see the greatest risks and opportunities in merging cybersecurity with artificial intelligence?

A: The intersection of AI and cybersecurity is both one of our biggest opportunities and our greatest risks. AI can help us detect threats faster, respond smarter, and automate defenses at scale, especially in environments where resources are stretched. That’s powerful for Africa. But the same AI can be weaponized, to launch faster attacks, generate deepfakes, or bypass traditional controls. The risk grows when we adopt AI without understanding the data governance, bias, or security implications behind it.

Q: You juggle roles as a speaker, mentor, consultant, and trainer. What keeps you grounded and how do you stay sharp across such a broad knowledge base?

A: What keeps me grounded is purpose. I’ve always been driven by a desire to solve real problems and contribute meaningfully, whether that’s through mentoring, training, consulting, or speaking.

Staying sharp comes from constant learning and staying close to the community. I learn from the people I mentor, the clients I work with, and the teams I train. I also make time to read, explore new technologies, and engage in forums that challenge my thinking. Most importantly, I stay humble. In this field, the moment you think you know it all, you’re already behind. So, I approach every opportunity as a learner first; that’s what keeps me both sharp and centered.

Q: In your view, what’s holding back cybersecurity maturity in Africa and what role should collaboration across nations play in solving it?

A: One of the biggest things holding back cybersecurity maturity in Africa is fragmentation, both in capability and coordination. Some countries or sectors are advancing, but others are still laying the foundation. That unevenness creates weak links, especially as systems become more connected across borders.

We also face challenges around skills gaps, underinvestment, and limited threat intelligence sharing, which leaves organizations reactive instead of proactive. Collaboration is key. We need stronger regional partnerships, not just at the government level, but across industries and civil society, to harmonize standards, pool expertise, and respond to threats as a collective. Cybersecurity is a shared challenge, and Africa’s strength will lie in how well we work together to build trust, capacity, and resilience across the continent.

Q: If you could give just one piece of advice to African CISOs building their teams in 2025, what would it be?

A: Build for mindset, not just skillset. In 2025, tools continue to evolve and threats continue to grow more complex, but what will set strong teams apart is how they think, adapt, and collaborate. Hire people who are curious, ethical, and resilient; you can train the rest. And don’t build in isolation. Invest in mentorship, cross-functional learning, and community engagement. The strength of your team will reflect not just what they know, but how well they can learn, unlearn, and lead through change.

As Kenya’s Global Ambassador for Responsible AI, I see a real opportunity to shape how AI is developed, deployed, and secured in a way that aligns with our values, especially around transparency, accountability, and protecting people’s rights

At Meta Connect, Mark Zuckerberg unveiled a strategic shift with a bold declaration: glasses would become the vessel for personal superintelligence. This marked the company’s most coherent vision to date for a future beyond the smartphone, a vision backed by three significant hardware announcements that signal Meta’s unwavering ambition to own the next computing paradigm. Unlike previous conferences focused heavily on virtual worlds and metaverse platitudes, this year’s event felt grounded in tangible technology and practical solutions for real-world problems.


The centerpiece of the conference was not another VR headset. Instead, Meta unveiled the Ray-Ban Meta Display glasses, its first consumer device with an actual screen, along with the Neural Band, a breakthrough wrist-worn controller that reads electrical signals from your muscles to enable silent gesture control. This isn’t merely an incremental hardware upgrade. Meta is betting that this combination of always-on AI, augmented displays, and neural interfaces will fundamentally alter how we interact with technology.

The Neural Band Gambit

The Neural Band represents what many consider Meta’s most significant hardware breakthrough since the Quest headset. Using surface electromyography (sEMG) technology that the company has been developing since 2021, the waterproof wristband can detect subtle finger movements and hand gestures, translating them into commands for the connected glasses. This allows users to navigate interfaces, control media, and interact with AI without speaking aloud or touching anything.

While industry experts have long predicted that neural interfaces would eventually replace touchscreens and voice commands, most assumed a consumer-ready implementation was still years away. Meta’s decision to launch this technology now, rather than waiting for a perfect implementation, suggests the company sees a narrow window to establish dominance in ambient computing before competitors like Apple or Google make their moves.

The Display glasses themselves boast impressive specifications for a first-generation product. The monocular display delivers 42 pixels per degree, which is a higher resolution than Meta’s VR headsets, and its brightness reaches up to 5,000 nits, making it readable even in direct sunlight. The display is designed to disappear when not in use, a feature that addresses a key usability concern that contributed to the failure of Google Glass a decade ago. At a price of $799 for the glasses and Neural Band combo, Meta is clearly positioning this as a premium offering for early adopters, though its track record suggests prices will likely drop as manufacturing scales up.

The introduction of the Oakley Meta Vanguard glasses signals that Meta understands mainstream adoption requires devices tailored for different lifestyles. These glasses, designed for sports and outdoor activities, feature a nine-hour battery life, a waterproof design, and integration with fitness platforms like Garmin and Strava, targeting a specific audience willing to pay $499 for specialized functionality.

the Frontline:

In an era where data is the heart of the financial industry, the role of a Data Protection Officer has never been more critical. The delicate balance between leveraging customer data for innovation and upholding the highest standards of privacy and security is a central challenge for banks worldwide. We sat down with Daphne Njeri, the Data Protection Officer at the National Bank of Kenya, to discuss her unique career journey, the evolving regulatory landscape, and the strategic imperatives for corporate leaders in a data-driven world. In this candid interview, Daphne shares her expertise on everything from conducting Data Protection Impact Assessments for AI-driven products to fostering a company-wide culture of data privacy. Her insights offer a roadmap for financial institutions seeking to build a foundation of digital trust in a rapidly changing technological and regulatory environment.

Q: Can you tell us about the career path that led you to specialize in data protection? What motivated you to choose this field?

A: The lecturer who taught me ICT Law in law school challenged us to contextualize how technology works so we could imagine how laws should be drafted. This sparked a little interest in technology regulation, but it was not until my human rights class that I became really interested in data privacy, first as a human right and much later in the context of how businesses should incorporate privacy as they do business. After a few years in traditional legal practice (law school, bar exams, pupillage, and law firm), I decided to resign from my position and pursue a data protection specialization. I was motivated to choose data protection because I had an interest in the intersection of law and technology, and at the time Kenya had passed the Data Protection Act, 2019 and had just established the Office of the Data Protection Commissioner (ODPC). There were only a small number of people working in data protection, and institutions were trying to understand the law and how to comply with it. Once I got immersed in the ecosystem, I never looked back. It has been an interesting field with different challenges every day.

It is really important to align privacy recommendations with the company’s goals, using risk-based language that resonates with executives, such as quantified exposure, potential fines, and reputational damage.

Q: The banking sector relies heavily on data. From a strategic perspective, how do leaders balance business objectives such as customer acquisition and product development with the need to ensure strict data privacy and protection?

A: As one of the most regulated industries, the banking sector is required by law to collect certain information intended to properly identify its customers, i.e., the Know Your Customer (KYC) requirement. The data privacy laws only dictate how to safeguard personal data while a bank or any other business tries to achieve its commercial objectives. Leaders should understand the boundaries of the data protection law, which are very clear when it comes to direct marketing, onboarding customers, and developing new products. Consulting with the Data Protection Officer before launching a new product or a new business process allows data protection by design and by default to be incorporated at the very beginning of the project, thus ensuring strict compliance with the data protection laws.

Q: In your view, what is the value of collaboration among banks to standardize data protection practices? What are some of the key issues that such industry-wide working groups typically address?

A: Collaboration is quite important, especially for stakeholders in the same industry. The banking sector is highly sensitive and highly regulated, and with data protection being a new-ish law that adds to the other laws in the banking sector, it is very important to discuss with peers the issues that affect the industry and how to address them. Collaboration also helps in raising collective issues faced when implementing the law with the data protection authority, who will assist in addressing the issue or interpreting the law, and thus raise compliance levels for the industry. The issues addressed by working groups include cross-border transfer of data, especially where banking institutions traverse different countries or continents, standardization and best practice for privacy compliance, sector-specific challenges, and advocacy and policy influence for regulations affecting them.

Q: If a financial institution were to introduce a new digital product that uses AI to analyse customer spending habits, what would be the key steps and best practices for conducting a Data Protection Impact Assessment (DPIA) for that project?

A: The first and most important step is to identify the need for a DPIA; under the Kenyan Data Protection Act, 2019, the requirements are set out in section 31. In this case the DPIA is needed because customers (data subjects) are subjected to automated decision-making. Once the need for a DPIA is established, one needs to describe the processing activities. This answers questions such as what personal data is collected, how the personal data will be processed (the analysis of spending habits), why the processing is necessary, who the data subjects are, what the data flows are, and what the relationship with vendors is, if any.

The next step is to assess the necessity and proportionality of the processing activity (the use of AI to analyse customer spending activities). In this part, one justifies why AI is needed to analyse the spending habits of customers by asking questions such as: is AI the most effective and least intrusive method? Are there alternative approaches to achieve the same objective? Is the processing proportionate to the intended benefits?

Once the above questions are answered and the processing activity is justified, one needs to identify and assess the privacy risks that will arise from the processing activity. In assessing the risks, you assign each risk a likelihood of occurring and a severity score. Once the risks are identified, one needs to identify measures to mitigate them by implementing relevant safeguards such as data minimization, bias detection and correction, transparent privacy notices and human oversight, among others. The DPO then needs to consult internal stakeholders such as legal, IT, compliance and the business unit, as well as external stakeholders including the data protection authorities and consultants, to determine levels of compliance and to provide advice or oversight. The complete DPIA is then filed with the data protection authority (the ODPC in Kenya) sixty (60) days prior to the start of the processing activity. The ODPC will give advice or ask for further information before giving the go-ahead for the processing activity.

The DPIA is then kept as a live document where new risks identified are mitigated against.
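The risk-scoring step described above can be illustrated with a small sketch. This is not a format prescribed by the ODPC or the Data Protection Act; the 1 to 5 scales, the rating thresholds, the example risks and all names (`Risk`, `rating`, `latest_filing_date`) are assumptions made for illustration. Only the likelihood-and-severity scoring, the example safeguards and the sixty-day lead time come from the interview.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Risk:
    """One entry in a DPIA risk register (illustrative structure only)."""
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    severity: int    # assumed scale: 1 (minimal) to 5 (severe)
    mitigations: list[str] = field(default_factory=list)

    @property
    def score(self) -> int:
        # Simple likelihood x severity product; other scoring schemes exist.
        return self.likelihood * self.severity


def rating(score: int) -> str:
    """Map a score to a band. The thresholds are hypothetical."""
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"


def latest_filing_date(processing_start: date, notice_days: int = 60) -> date:
    """Latest date to file the DPIA, given the sixty-day lead time in the text."""
    return processing_start - timedelta(days=notice_days)


# Hypothetical risks for the AI spending-analysis product.
register = [
    Risk("Biased spending profiles", 3, 4,
         ["bias detection and correction", "human oversight"]),
    Risk("Excess data collected", 2, 3, ["data minimization"]),
    Risk("Customers unaware of profiling", 4, 4,
         ["transparent privacy notices"]),
]

# Review the highest-scoring risks first.
for risk in sorted(register, key=lambda r: r.score, reverse=True):
    print(f"{risk.score:>2} {rating(risk.score):<6} {risk.description}")

print(latest_filing_date(date(2026, 3, 2)))
```

Because the DPIA is a live document, a register like this would be re-scored whenever a new risk is identified or a mitigation is put in place.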

Q: When business priorities and data privacy principles clash, how do you approach that conversation at the executive level and what strategies have you found most effective in finding middle ground?

A: The most effective approach is to frame privacy as a strategic enabler rather than a constraint by highlighting how it builds customer trust, mitigates regulatory and reputational risks and supports long-term growth. It is really important to align privacy recommendations with the company’s goals, using risk-based language that resonates with executives, such as quantified exposure, potential fines and reputational damage. One needs to offer practical, privacy-enhancing alternatives such as data minimization, phased rollouts, consent-based models and transparency through proper privacy notices, among others, so that you shift the conversation from “no” to “how”.

Q: Privacy and AI regulation is evolving rapidly across Africa and globally. Which upcoming shifts should corporate leaders in the financial services sector be preparing for over the next two or three years?

A: When it comes to data privacy, the most vulnerable point is when financial institutions work with vendors or third parties who process personal data on their behalf. Under the majority of privacy laws across Africa, the data controller (the financial institution) is responsible for privacy compliance even when it works with data processors (vendors). This means that a financial institution may be 100% compliant with privacy laws in-house but will still be held liable when its vendors are found non-compliant by the data protection authorities. It is really important that leaders evaluate the third parties they work with, especially those that deal with personal data collected by the financial institution. Third-party compliance and vendor management therefore become critical areas when it comes to privacy. On AI, while most countries are still preparing AI regulations, financial institutions are already bound by other laws when it comes to the use of AI.

The Kenyan Data Protection Act, 2019, for example, sets conditions to be met before the deployment of AI, including carrying out a Data Protection Impact Assessment and providing proper privacy notices, among other requirements. In future, leaders should follow best practice in the use of AI in the financial services sector and be involved in policy advocacy as different countries draft AI regulations.


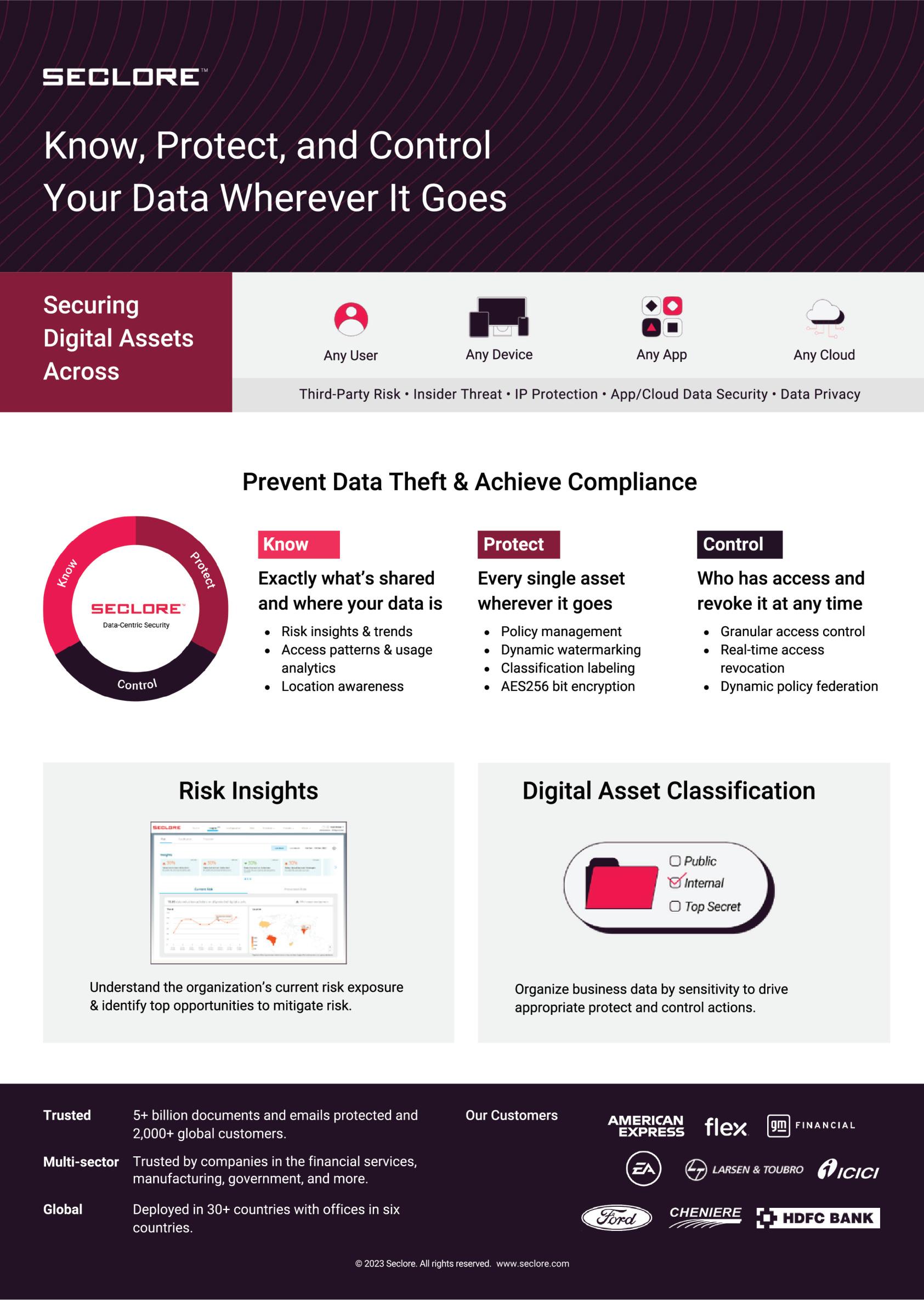

The SANS Institute today announced the official release of its AI security blueprint “Own AI Securely”, offering a structured, innovative model to help organizations adopt artificial intelligence securely and responsibly across their operations. This blueprint meets a clear demand from global enterprises facing complex questions around AI safety, compliance, and operational control. Security teams in both the public and private sectors are being asked to defend systems that evolve faster than existing playbooks can support and need the emerging skills to do so. Across industries, there is a growing need for reliable, outcome-focused guidance that reflects the operational, technical, and governance realities of enterprise-scale AI, and the workforce it demands.

The UAE has long positioned AI as a transformative force for the nation’s governance, growth, societal wellbeing, and economic diversification. The UAE National Strategy for AI 2031 focuses on integrating AI as a key driver of future prosperity, creating new pathways for growth, boosting efficiency, and supporting long-term sustainability. According to PwC, by 2026 global AI spending is projected to surpass USD 300 billion, with AI contributing up to 13.6% of the UAE’s GDP by 2030.

“As roles related to AI rapidly shift and new risks constantly emerge, organizations need to keep up with this pace of change and respond decisively,” said Rob T. Lee, Chief of Research and Chief AI Officer at SANS Institute. “This blueprint gives structure to an environment that has, until now, lacked clear direction. It’s built on field-tested insights and designed to help security leaders act with clarity and purpose.”

The Secure AI Blueprint introduces a three-track model, mapped to 14 industry-leading training courses and a series of GIAC certifications that align directly with organizational functions, enabling organizations to develop a capable, skilled AI cybersecurity workforce.

“The blueprint is based on what’s already changing inside real organizations. Leaders are not looking for more generic headlines about how the threat landscape is evolving fast, they’re looking for a way to act,” said Lee. “The SANS model provides structure where there has been confusion, and alignment where there has been fragmentation.”

The AI security blueprint “Own AI Securely with SANS”, the AI Career Framework, and the course catalog are all available at sans.org/ai.

Protect AI – Security and engineering teams must defend AI systems from poisoning, prompt attacks, and data leaks. Strong controls across access, data, deployment, and monitoring make sure AI is secure and runs safely and resiliently.

Utilize AI – Cyber defenders need to fight fire with fire by harnessing AI inside the SOC and other cybersecurity functions, such as digital forensics or red teams. AI-powered tools accelerate detection, triage, and response so security teams can keep pace with machine-speed adversaries.

Govern AI – Executives, managers, and boards must lead with accountability. Clear oversight, compliance frameworks, and AI fluency at the top ensure organizations stay aligned with regulations, design strong policies and implement effective strategies, all while building trust.


There are over 600 policies, standards, frameworks, and legislative documents published on Artificial Intelligence, but less than 10% of these documents originate from Africa.

South Africa, Nigeria, Kenya, and Egypt are leading the drive for Artificial Intelligence in Africa. Nigeria, Kenya, and Egypt have clear directions and strategic documents supporting the potential growth of Artificial Intelligence within their territories. South Africa is the only country in Africa with a clear and direct policy framework on Artificial Intelligence, called the South Africa National Artificial Intelligence Policy Framework, which was published in August 2024. The other countries have their own national AI strategy documents. In addition, Nigeria has the National Centre for AI and Robotics, while Kenya has the Kenyan AI and Blockchain Taskforce.

Meanwhile, globally, frameworks are being established, policies are being developed, standards are being structured, and legislation is being enacted to support, guide, direct, and manage the development, deployment, and adoption of Artificial Intelligence as part of business processes, government procedures, and social security indicators in the digital economy. For example, in 2024, the European Union passed the EU AI Act, which establishes what is permitted within its region regarding AI development and adoption. The United States has the Blueprint for an AI Bill of Rights, released by the White House Office of Science and Technology Policy. This document provides ethical clarity on what is acceptable within the public sector in the deployment of automated technology.

There is also the AI Risk Management Framework, published by the U.S. National Institute of Standards and Technology (NIST), which classifies AI risks and their possible impact on people, organizations, and ecosystems. Additionally, the U.S. has the proposed Algorithmic Accountability Act, originally introduced in Congress in 2019 and reintroduced in 2022.

The Asian continent is not left behind. China has developed several policies and frameworks toward AI development and adoption. Some of these include the Ethical Guidelines for the New Generation of AI, published in 2021; the Interim Measures for the Administration of Generative Artificial Intelligence Services, released in 2023; and the Provisions on the Management of Deep Synthesis of Internet Information Services, also published in 2023.

China is not the only country pushing AI development in Asia. India and Singapore are also advancing. Singapore became the first country globally to develop an AI governance framework in 2019, known as the Singapore Model AI Governance Framework. In India, the sub-committee released its AI Governance Guidelines in January 2025.

Government agencies are not the only ones developing AI governance. Organizations such as the Future of Life Institute developed the Asilomar AI Principles. A group of experts came together in Montreal to sign the Montreal Declaration for Responsible AI in 2017, and the United Nations had previously released its recommendation guidelines for responsible AI, which were later superseded by the Hamburg Declaration on Responsible Artificial Intelligence (AI).

The International Organization for Standardization (ISO) has also developed a standard for AI management known as the AI Management System, with the code ISO 42001.

Because Africa’s contribution to AI governance is low in comparison to other regions, a group of researchers in Africa came together to develop the first-ever comprehensive, multi-industrial AI Governance Framework, known as REST-AI. The core of the REST-AI Governance Framework is built on three pillars: Ethics & Responsibility, Safety & Security, and Trust & Acceptability.

The REST AI Governance Framework is designed to guide and direct the development, deployment, and adoption of AI models. Its structure relies on a three-tier model: General, Core, and Elective (or Selective), which encompass five independent pillars: Responsible, Ethical, Security, Trust, and Industry. The framework includes 27 principles, 72 key considerations, and 142 action points.

It is specifically designed for organizations, both in the private and public sectors, that have a direct relationship with AI stakeholders, including AI actors, AI users, AI solution providers, and AI developers. The REST AI Governance Framework is not just about compliance; it is about ensuring the security and sustainability of businesses.

Globally, organizations are adopting AI and integrating it into their operations, from core business processes to marketing strategies and decision-making. However, it is an uncalculated risk to embed AI into operations without understanding the core of the model, its fairness metrics, bias measurement, accuracy, and, more importantly, its security.

This is where REST AI comes into play. How? As an organization, complying with the REST AI framework can serve as a business strategy to grow your client base by demonstrating accurate knowledge of a model’s operationalization, even when developed by a third-party organization.

An organization that complies with the REST AI framework will have at its disposal all the information needed to assure AI solution providers and users of the model’s responsible functioning, the ethical restrictions embedded in its design, its operational safety and security, and the level of trust it can earn from stakeholders.

They won’t just have information; they will have carried out a thorough assessment. Their Data Governance Board would have been established to enforce best practices for data privacy and protection management. Their AI Audit and Accountability Board would have conducted a comprehensive audit on the AI model, the training dataset, and the algorithms used in its development. Their AI Security Teams would have performed AI penetration testing on the model, its applications, and public resources.

In addition, there would be comprehensive documentation of the model development process, detailing how the training dataset was sourced and defining roles and responsibilities throughout development. These are examples of the kinds of requirements the REST AI Governance Framework mandates for organizations developing AI models to ensure compliance.

As an organization looking to adopt an AI model developed by another organization and integrate it into your operations, you will need this information in the model card before making an implementation decision.
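As a rough illustration of that pre-adoption check, the sketch below tests whether a vendor's model card covers the kinds of information mentioned in this article. The field names and the `missing_fields` helper are hypothetical. They are derived from the items listed above (data sourcing, roles, fairness, bias, accuracy, audit, penetration testing) and are not taken from the REST AI Governance Framework itself, whose actual requirements should be consulted directly.

```python
# Hypothetical model-card fields, loosely based on the items named in the article.
REQUIRED_FIELDS = (
    "developer",
    "training_data_source",
    "roles_and_responsibilities",
    "fairness_metrics",
    "bias_measurement",
    "accuracy",
    "audit_report",
    "penetration_test_report",
)


def missing_fields(model_card: dict) -> list[str]:
    """Return the required fields that are absent or empty in a model card."""
    empty_values = (None, "", [], {})
    return [
        name for name in REQUIRED_FIELDS
        if model_card.get(name) in empty_values
    ]


# Example: a vendor's card that omits the audit and penetration-test evidence.
vendor_card = {
    "developer": "Example Vendor Ltd",  # placeholder name
    "training_data_source": "Licensed transaction data, 2020 to 2023",
    "roles_and_responsibilities": "See vendor governance charter",
    "fairness_metrics": {"demographic_parity_gap": 0.03},
    "bias_measurement": "Quarterly review",
    "accuracy": 0.91,
    "audit_report": "",
}

gaps = missing_fields(vendor_card)
if gaps:
    print("Request from the vendor before adoption:", ", ".join(gaps))
else:
    print("Model card covers all checked fields.")
```

A check like this only confirms that the information is present. Judging whether the audit or the penetration test was adequate remains a human decision.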

On the other hand, as an organization that has already adopted an AI model into its operations, you may need to share some of this information with your users, clients, or business partners to gain the trust and backing of your business stakeholders.

As AI users, we often interact with AI applications without knowing the underlying AI model, how the data sources were developed, who created the model, or who should be held accountable if the model fails. This type of information is crucial in determining whether to start or continue using an AI application. When an organization complies with the REST AI Governance Framework, it provides this level of transparency to all its stakeholders.

For AI users, REST AI Governance serves as a source of accurate information. For AI solution providers adopting AI models, REST AI acts as a validator. And for AI developers building AI models, compliance with REST AI becomes a business strategy for growth and trust.

A long-running cybercrime group, RevengeHotels, has significantly upgraded its methods by leveraging Artificial Intelligence (AI) to launch sophisticated attacks on hotels worldwide, putting guests’ payment information at higher risk.


Kaspersky’s Global Research and Analysis Team (GReAT) discovered a new wave of these attacks between June and August 2025. While hotels in Brazil have been the primary targets, the threat is global, with cases reported in other countries and growing potential to affect popular destinations across Africa, including South Africa, Kenya, and Nigeria.

The RevengeHotels group has been active since 2015, but their new campaign marks a dangerous evolution. Analysis of the recent malicious programs shows they contain code likely generated by AI models, which makes them:

More Sophisticated: The malware is cleaner, more structured, and often includes detailed comments, helping the attackers manage and scale their tools more effectively.

Harder to Detect: The AI-assisted code allows the threat actors to bypass traditional security measures more easily, enhancing the malware’s evasion capabilities.

As Lisandro Ubiedo, an expert at Kaspersky’s GReAT, notes: “Cybercriminals are increasingly using AI to create new tools and make their attacks more effective. This means that even familiar schemes, like phishing emails, are becoming harder to spot for a common user. For hotel guests, this translates into higher risks of card and personal data theft, even when you trust well-known hotels.”

The operation targets the hospitality sector’s weakest link: front-desk staff.

Attackers send convincing phishing emails directly to hotel employees. These emails are typically disguised as seemingly legitimate reservation requests or even job applications, complete with documents the staff would be expected to open. Once a hotel employee interacts with the email, by clicking a malicious link or opening an attachment, the system is infected with a Remote Access Trojan (RAT) called VenomRAT. VenomRAT gives the attackers remote access to the hotel’s systems, allowing them to steal sensitive guest information, including payment card data and other personal details stored in the reservation software.

The goal remains the same: to compromise hotel systems and harvest sensitive data from travelers worldwide.

For both individuals and hotels, heightened vigilance is necessary to defend against these AI-enhanced threats:

• Keep a close eye on your credit card statements for any unauthorized charges following a hotel stay, especially one in a high-risk region.

• When booking, consider using a virtual credit card number or a payment service that doesn’t expose your primary card details directly to the hotel’s system.

• Be mindful of the personal data you provide, particularly when communicating via email or unsecured channels.

• Conduct mandatory and regular training to help staff recognize sophisticated phishing emails, especially those disguised as reservation queries or CVs. Stress the importance of verifying unexpected requests.

• Never open attachments or click links from unexpected emails, even if they look friendly. Fine-tune your antispam settings to filter out suspicious communications.

• Ensure your company uses modern security solutions that provide real-time protection and the advanced detection and response capabilities (EDR/XDR) needed to spot AI-generated malware.

• Treat all unexpected files, even those from official-looking emails, as potential threats like ransomware or spyware.


The most frequently reported cyberthreats in Africa, as highlighted by INTERPOL

In Western and Eastern Africa, cybercrime accounts for more than 30% of all reported crime.

Africa is estimated to be losing $3 billion annually to cybercrime.

The most frequently reported cyberthreats in Africa, as highlighted by INTERPOL, include:

Online Scams (especially Phishing):

These are the most frequently reported cybercrimes. In some African countries, suspected scam notifications reportedly rose by up to 3,000% in the past year.

Ransomware: This continues to be widespread.

In 2024, South Africa and Egypt reported the highest numbers of ransomware detections, followed by Nigeria and Kenya.

Business Email Compromise (BEC): Incidents have risen significantly, with 11 African nations identified as primary sources for this type of fraud originating on the continent. Organized transnational syndicates, like Black Axe in West Africa, are often involved.

Digital Sextortion:

Sixty percent of African member countries reported an increase in cases where threat actors use real or AI-generated explicit content for blackmail.

Based on reports from Check Point Research and INTERPOL:

Highly Targeted Industries across Africa:

• Telecommunications

• Government Institutions (Public Sector)

• Consumer Goods and Services

Critical Infrastructure/High-Profile Incidents:

Attacks have hit critical infrastructure and government databases in highly digitized economies, such as a breach at Kenya's Urban Roads Authority (KURA) and hacks of Nigeria's National Bureau of Statistics (NBS). The Finance, Healthcare, Energy, and Government sectors are among the hardest hit by financially damaging cybercrimes.

Cybersecurity Capacity and Law Enforcement Challenges

African law enforcement agencies face significant structural challenges in combating the surge in cybercrime:

• Enforcement Capacity: 90% of African countries report needing 'significant improvement' in law enforcement or prosecution capacity.

• Legal Frameworks: 75% of countries cited outdated legal frameworks and prosecution capacity needing improvement.

• Resource Constraints: 95% of respondents cited inadequate training, resource constraints, and a lack of access to specialized tools.

• Only 30% of countries reported having an incident reporting system.

• Only 29% have a digital evidence repository.

• Only 19% maintain a cyberthreat intelligence database.

International Cooperation: 86% of African member countries surveyed stated their international cooperation capacity needs improvement due to slow processes.

Private-Sector Collaboration: 89% of countries struggle with effective collaboration with the private sector due to unclear engagement channels.

“As Kenya’s Global Ambassador for Responsible AI, I’m working to ensure AI is built and secured with transparency, accountability, and people’s rights at the center.”

How would you react if you saw a video of your CEO seemingly ordering a transfer of company funds to an unknown account? Or if you heard a recording of a political leader making inflammatory statements they never actually uttered? These scenarios, once relegated to the realm of science fiction, are now increasingly possible and increasingly dangerous thanks to the rise of deepfakes.

Deepfakes, short for deep learning fakes, are AI-generated synthetic media that can realistically mimic a person’s appearance, voice, and actions. Using sophisticated algorithms, deepfakes can convincingly swap faces in videos, generate entirely new audio recordings, and create fabricated scenarios that are nearly indistinguishable from reality. What was once the domain of skilled technicians and well-funded organizations is now becoming increasingly accessible to anyone with a computer and the right software. As the technology matures, so does the potential for misuse.

Deepfakes represent a significant and evolving threat to both cybersecurity and public trust, demanding proactive defense strategies that combine technological innovation, policy reform, and heightened public awareness. The ability to convincingly manipulate audio and video has the potential to disrupt cybersecurity practices and spread misinformation at a scale never seen before.

The potential for misuse of deepfake technology extends across various sectors, posing significant risks to cybersecurity, national security, and even individual reputations.

Impact of Deepfake on Cybersecurity and National Security:

Deepfakes amplify the effectiveness of social engineering attacks. A convincingly fabricated video of a trusted colleague or executive directing an employee to take a specific action, such as transferring funds or revealing sensitive data, can be incredibly persuasive. The visual element adds a layer of authenticity that traditional phishing emails lack, making it far more likely to succeed. Imagine a deepfake video call where an IT support person asks for login details under false pretenses.

Deepfake audio recordings, simulating the voice of a CEO or CFO, can be used to authorize fraudulent wire transfers. These attacks exploit the trust-based relationships within organizations and can result in significant financial losses. The subtlety of mimicking speech patterns and vocal nuances makes it incredibly challenging to detect in real-time.

Deepfakes can be employed to create scandalous or damaging content that harms the reputation of individuals and organizations. This could involve creating fake videos showing a public figure engaging in unethical or illegal behavior, leading to reputational damage and potential legal repercussions. The speed at which these fabricated narratives can spread online further exacerbates the problem.

Political Disinformation

The use of deepfakes to spread false information and manipulate public opinion during elections or political crises poses a grave threat to democratic processes. Fabricated videos of candidates making controversial statements or engaging in illicit activities can sway voters and undermine the legitimacy of electoral outcomes. This can lead to political instability and societal unrest.

Creating fake videos or audio recordings of political leaders making inflammatory statements can trigger international conflict and damage diplomatic relations between nations. Such deepfakes could be strategically released to incite mistrust and hostility, leading to potentially devastating consequences.

Erosion of Trust

The proliferation of deepfakes erodes public trust in legitimate news sources and institutions. When people become unsure of what to believe, it creates a climate of uncertainty and cynicism, making it more difficult to discern truth from falsehood. This can weaken social cohesion and undermine the foundations of democracy.

Deepfakes can be used to impersonate individuals for espionage purposes. By creating a convincing deepfake identity, intelligence agencies or malicious actors could gain access to sensitive information or infiltrate secure networks.

In 2019, a video of Nancy Pelosi was slowed down to make her appear drunk and incoherent, illustrating how even rudimentary manipulation, well short of a true deepfake, can spread misinformation. As deepfake technology matures, we can anticipate more sophisticated attacks targeting high-value individuals and critical infrastructure. Imagine deepfake-generated instructions to control critical systems, or fake biometrics used to bypass security.

Eye Blinking

Unnatural Lighting or Shadows

Lip Syncing Issues

Source Verification

Combating the deepfake threat demands a multi-faceted approach. Technological advancements in detection, coupled with responsible policy frameworks, enhanced media literacy, and global collaboration, are essential. As consumers of information, we must cultivate a critical eye, verifying sources and questioning the authenticity of what we see and hear. By embracing vigilance and supporting efforts to combat deepfakes, we can safeguard ourselves and our society from the insidious effects of AI-generated disinformation.


For too long, enterprises have managed data resilience (backup and recovery) and data security (governance and threat management) as two siloed, often conflicting, disciplines. This separation creates blind spots. An attacker compromises production data, but that compromise might also lurk undetected in backup environments, ready to relaunch an attack after recovery. Veeam Software just signaled the end of this separation.

Veeam announced a definitive agreement on October 21, 2025, to acquire data security powerhouse Securiti AI for $1.725 billion. This isn't just a portfolio expansion; it's a strategic move to create an end-to-end platform for Data Resilience and Security—a convergence the market has been demanding.

Future-Proofing for AI: The new platform explicitly addresses AI trust and governance. As AI models consume vast amounts of enterprise data, the ability to manage, secure, and ensure the privacy of that data from one console becomes non-negotiable.

The strategic intent is underscored by the appointment of Securiti AI’s founder and CEO, Rehan Jalil, who will join Veeam as the new President of Security and AI. This leadership injection confirms that Veeam is not merely acquiring a product but integrating a vision focused on data intelligence and security at the highest level.

The integration is designed to merge Veeam's proven ability to recover data with Securiti AI's expertise in securing and governing data, particularly in modern, distributed environments.

The combined offering will revolve around Securiti AI’s Data Command Center, which will be folded into Veeam’s product suite. This gives customers a single pane of glass to address critical modern challenges:

Data Security Posture Management (DSPM): Security teams can now instantly identify sensitive data across hybrid, multi-cloud, and SaaS environments, and spot security misconfigurations before they're exploited.

Backup Integrity: The integration allows organizations to ensure that the data they are recovering is clean and compliant, closing the critical loop where compromised production data poisons the recovery process.

Expected to close in Q4 2025, this $1.725 billion transaction (financed by JPMorgan Chase Bank) is the clearest signal yet that the cybersecurity industry is moving past point solutions. Data protection can no longer be reactive; it must be an intelligent, unified layer of defense that spans both production and backup systems. For the enterprise, this promises reduced downtime, simplified compliance, and a much stronger defense against increasingly sophisticated ransomware and privacy risks.

Tunde Dada on Engineering a Mutually Reinforcing Strategy for IT, Security, and Business Continuity Across inq. digital's Multinational Edge

A leader is no longer defined by a single title. They must be a polymath: a strategist, a protector, and an architect of resilience. Tunde Dada, Group CIO, Business Continuity Management (BCM) leader, and Data Protection Officer (DPO) at inq. digital Group, is precisely that leader. Holding a unique trifecta of responsibilities, he sits at the crucial intersection where business strategy meets digital defense.

In this exclusive interview, Dada takes us behind the curtain of inq. digital’s success, revealing the philosophy that transforms his IT strategy, cybersecurity program, and business continuity plans from mere checklists into a mutually reinforcing ecosystem. From his five-fold approach to security, anchored by the critical "People and Processes", to his proactive preparation for the looming threat of AI-powered attacks, Dada offers a masterclass in leadership, team development, and securing the distributed, edge-centric environments that define the next frontier of connectivity. Read on to discover how he is building the future of digital resilience, one strategic layer at a time.

Interview Questions for Tunde Dada, Group CIO/BCM at inq. digital Group

Q: As a Group CIO, Business Continuity Management (BCM) leader, and Data Protection Officer (DPO), you hold a unique position. How do you ensure that the IT strategy, cybersecurity program, and business continuity plans are not only aligned but are also mutually reinforcing across a diverse, multinational group like inq. digital?

A: Our business is a multidisciplinary one with a digital focus. We have a robust IT strategy that seeks to give the business a competitive advantage by leveraging technology to its full potential, allowing our businesses to improve efficiency and stay ahead of the competition. This is aligned with our cybersecurity strategy, which ensures that the business has a well-defined plan to protect sensitive data and infrastructure, reducing the risk of failures and disruptions. In the same vein, we developed a BCM strategy which, at a very high level, provides a proactive plan outlining our organization's approach to maintaining critical operations during and after a disruptive event, such as a natural disaster, cyberattack, or supply chain failure. Our BCM strategy serves as a roadmap for identifying threats, assessing their potential impact, and implementing preventive and recovery measures to ensure business resilience, protect reputation, and minimize costs. We have developed a high-level KPI structure that measures the effectiveness of each of the strategic arms and ensures that they align with the business goals. We have seen over the years that an effective KPI scoreline approach helps us to track the components of the various strategies and shows us the areas that need improvement. This has significantly helped the board to measure and track our strategic alignment with the business goals.

Q: What are the key components of a successful high-level security program, and how do you measure its effectiveness beyond simple metrics? How do you manage the potential conflicts of interest or competing priorities among your CIO, BCM, and DPO responsibilities?

A: The inq. digital approach to security programs is five-fold: Governance, Risk and asset management, Defensive capabilities, Offensive reflections, and People and processes. This five-fold approach is all-encompassing and allows us to have a robust security program in place.

In terms of governance, we have a strategic approach that starts with Board and Executive buy-in from the onset to ensure that there is full oversight and an adequate governance structure in place. This is coupled with the adoption of the ISO 27000 security framework, which we have deployed across all our OPCOs and for which we have obtained certification. The security framework has also ensured the development of adequate policies and procedures in line with the ISO framework, which are audited both internally and externally for full compliance. Our governance approach has also helped us to set a risk appetite that is appropriate for the business to function effectively.

The risk and asset management component of the security program allows us to put in place a robust risk assessment program that helps us continually identify and prioritize risks within the business. As expected, this would not be possible without an appropriate process for continuously identifying the inventory of the business, including the virtual and physical assets that are covered by the risk management process. To complement this, we have a vulnerability management process in place in line with the requirements of the ISO framework.

Our defensive capabilities cover the security architecture and the implementation of network security mechanisms that help to identify and filter network traffic with deep inspection algorithms. This also includes a comprehensive logging and monitoring process, using various automated tools and backed up by an adequate data protection strategy.

In terms of our offensive component, we have put in place adequate "offensive plans", which include regular penetration tests across all our public interfaces. These ensure that we have the threat actors' blueprint in hand and can design the appropriate defensive schemes needed for all our operating environments.

The last component, the people and the process, is the one we consider the most crucial. Over the years we have learnt that no matter the level of technical expertise or devices in place, the people eventually determine the success of the program. In view of this, we have put in place an adequate user awareness program that is continually reviewed and audited for effectiveness. We also have a "live" incident management program in place under the auspices of the BCM program, and we ensure a system of continuous improvement in line with the ISO framework.

How do I combine the CIO, DPO, and BCM roles? This is made possible by our agile business framework, which allows business agility across the entire ecosystem. My CIO role is the main anchor and allows me to set the strategic plan that covers how we manage and protect data across the business. With this in place, I have an agile team whose members function in various capacities covering security, data governance, business continuity, and system architecture, which together cover all the relevant areas.

Q: You have a history of successful leadership and organizational development. What is your philosophy for building and mentoring a high-performing IT and security team, and how do you foster a culture of continuous learning and proactive threat awareness?

A: I have been in the IT and cyber industry for close to three decades and have seen technology transitions at a massive scale. The rate of transition has also brought massive changes in workforce capacities, and this was severely tested by the COVID pandemic. Over the years, I have developed my own form of transformational leadership to cope with rapid technology transitions, focusing on inspiring and motivating my team members to achieve a shared, compelling vision for the business. I have seen that IT teams often lack the managerial edge and clearly need development programs to help them cultivate the qualities that support their growth in the workforce. I have adopted various ways to do this, including inspirational motivation to communicate a promising future to the teams, intellectual stimulation to challenge assumptions and encourage creative problem-solving, and individualized consideration, which entails mentorship and coaching. I have seen that these approaches, when combined, help the team individually and collectively, produce an environment of continuous learning, and sharpen the team's alertness to threats.

Q: Given the distributed nature of an edge solutions provider like inq. digital, what are the most significant emerging threats you are preparing for? What are the key differences in securing an edge-centric environment versus a traditional centralized one?

A: The security landscape of the future is both scary and exciting, and we believe it will offer a lot of opportunities to organizations that prepare for it adequately. This has shaped our strategy to date and will continue to do so. As a start, AI-powered attacks are our main focus and a key strategy driver. We now see threat actors using artificial intelligence and machine learning to automate, accelerate, and amplify attacks, and there is ample evidence of this in automated and polymorphic malware. We have also seen an increase in AI-driven phishing and social engineering; an executive's cloned voice, for instance, could trick an employee into wiring funds to a fraudulent account. We also foresee more adversarial AI attacks, where threat actors poison or manipulate the training data of AI models to corrupt their integrity. Our main focus in the past years has been to prepare the business for the future of AI, for both offensive and defensive purposes.

Q: How do you leverage external influence to benefit your work within inq. digital, and what responsibility do you feel senior leaders have in contributing to the broader cybersecurity community?

A: The global business ecosystem works better in an atmosphere of collaboration, especially amongst industry peers, which can be seen as a form of external influence. I leverage this well, and the influence has been very beneficial to the business. One area where this is easily seen is the supply chain, where external collaboration is essential to ensure timely delivery of services. This collaboration also aims to ensure that the broader cybersecurity community benefits from the sharing of ideas and threat intelligence within the industry. I strongly advocate that senior leaders create an enabling environment that fosters collaboration within the industry and be ready to contribute to the larger cybersecurity community as an industry obligation.
