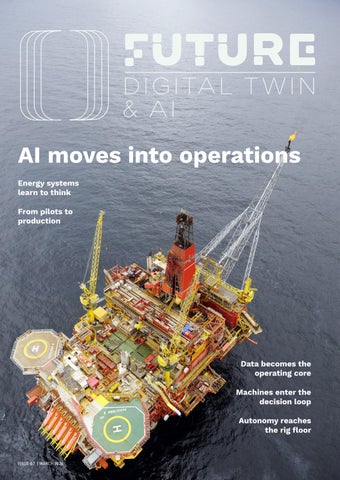

Artificial intelligence has moved beyond pilots. In this edition, we explore how AI, digital twins and agentic systems are being embedded into live operations across oil, gas and energy infrastructure.

Issue 67 examines what happens when data becomes operational infrastructure rather than an IT initiative. From AI at scale in upstream production to the rewiring of the digital nervous system of energy, the focus shifts from experimentation to execution.

Inside:

- The oil and gas operating model of the future
- AI at scale in upstream operations
- The digital nervous system of energy
- The oilfield is done with pilots
- Data as the operating model
- Drilling autonomy as a discipline
- An exclusive interview with Sheikh Nawaf S Al-Sabah
- Downstream transition strategy with MOL Group

This edition moves beyond digital theatre, highlighting how organisations are redesigning workflows, governance and accountability to operate with intelligence at scale.

Future Digital Twin & AI connects engineering rigour with digital