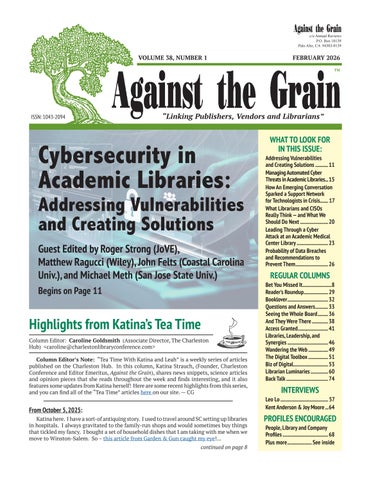

c/o Annual Reviews, P.O. Box 10139, Palo Alto, CA 94303-0139

VOLUME 38, NUMBER 1

FEBRUARY 2026

ISSN: 1043-2094

“Linking Publishers, Vendors and Librarians”

Cybersecurity in Academic Libraries: Addressing Vulnerabilities and Creating Solutions

Guest Edited by Roger Strong (JoVE), Matthew Ragucci (Wiley), John Felts (Coastal Carolina Univ.), and Michael Meth (San Jose State Univ.)

Begins on Page 11

Highlights from Katina’s Tea Time

Column Editor: Caroline Goldsmith (Associate Director, The Charleston Hub) <caroline@charlestonlibraryconference.com>

Column Editor’s Note: “Tea Time With Katina and Leah” is a weekly series of articles published on the Charleston Hub. In this column, Katina Strauch (Founder, Charleston Conference and Editor Emeritus, Against the Grain) shares news snippets, science articles, and opinion pieces that she reads throughout the week and finds interesting, along with some updates from Katina herself! Here are some recent highlights from this series, and you can find all of the “Tea Time” articles here on our site. — CG

From October 5, 2025: Katina here. I have a sort-of antiquing story. I used to travel around SC setting up libraries in hospitals. I always gravitated to the family-run shops and would sometimes buy things that tickled my fancy. I bought a set of household dishes that I am taking with me when we move to Winston-Salem. So – this article from Garden & Gun caught my eye!... continued on page 8

WHAT TO LOOK FOR IN THIS ISSUE:

Addressing Vulnerabilities and Creating Solutions ... 11
Managing Automated Cyber Threats in Academic Libraries ... 15
How An Emerging Conversation Sparked a Support Network for Technologists in Crisis ... 17
What Librarians and CISOs Really Think — and What We Should Do Next ... 20
Leading Through a Cyber Attack at an Academic Medical Center Library ... 23
Probability of Data Breaches and Recommendations to Prevent Them ... 26

REGULAR COLUMNS

Bet You Missed It ... 8
Reader’s Roundup ... 29
Booklover ... 32
Questions and Answers ... 33
Seeing the Whole Board ... 36
And They Were There ... 38
Access Granted ... 41
Libraries, Leadership, and Synergies ... 46
Wandering the Web ... 49
The Digital Toolbox ... 51
Biz of Digital ... 53
Librarian Luminaries ... 60
Back Talk ... 74

INTERVIEWS

Leo Lo ... 57
Kent Anderson & Joy Moore ... 64

PROFILES ENCOURAGED

People, Library and Company Profiles ... 68
Plus more ... See inside